PyTorch Deep Learning: Classifying the MNIST Dataset with a Fully Connected Neural Network

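Contents

1 Prepare the dataset

2 Build the model

3 Build the loss function and optimizer

4 Training and testing

5 Complete code and run results

6 Problems encountered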

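In the earlier examples, the input x was always a vector. In the MNIST dataset the input is an image, so how can an image be fed into the model for training? One approach is to map the image to a matrix, flatten that matrix into a vector, and feed the vector into the model.

How do we map an image to a vector?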

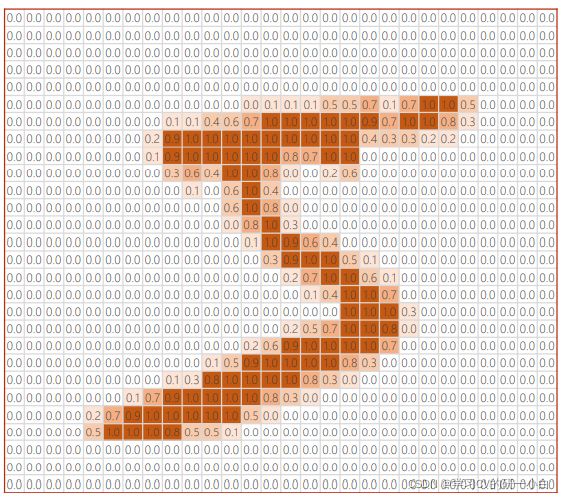

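The figure shows one image from the MNIST dataset, drawn as a grid. It consists of 28x28 = 784 pixels; the darker a pixel, the closer its value is to 0, and the brighter a pixel, the closer its value is to 1.

The image can therefore be mapped, pixel by pixel, to a 28x28 matrix of values, as shown in the figure below.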
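The mapping from a 28x28 matrix to a 784-element vector can be sketched in plain Python. This is only an illustration: the image below is a made-up one with a single bright pixel, and `flatten` is a hypothetical helper, not part of PyTorch.

```python
def flatten(image):
    """Concatenate the rows of a 2-D image into one flat list (row by row)."""
    return [pixel for row in image for pixel in row]


# Hypothetical 28x28 image: every pixel dark (0.0) except one bright pixel (1.0).
height, width = 28, 28
image = [[0.0] * width for _ in range(height)]
image[2][5] = 1.0  # row 2, column 5

vector = flatten(image)
print(len(vector))        # 784
print(vector.index(1.0))  # 61, i.e. 2 * 28 + 5
```

The pixel at row r and column c ends up at position r * 28 + c of the vector, so no information is lost; only the 2-D arrangement is dropped.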

1 Prepare the dataset

The code is as follows:

    from torchvision import datasets, transforms
    from torch.utils.data import DataLoader

    # Prepare the dataset
    batch_size = 64
    transform = transforms.Compose([
        transforms.ToTensor(),
        # Mean and standard deviation (one value per channel)
        transforms.Normalize((0.1307,), (0.3081,))
    ])

    train_dataset = datasets.MNIST(root='../dataset/mnist/',
                                   train=True,
                                   download=True,
                                   transform=transform)
    train_loader = DataLoader(train_dataset,
                              shuffle=True,
                              batch_size=batch_size)

    test_dataset = datasets.MNIST(root='../dataset/mnist/',
                                  train=False,
                                  download=True,
                                  transform=transform)
    test_loader = DataLoader(test_dataset,
                             shuffle=False,
                             batch_size=batch_size)

Notes:

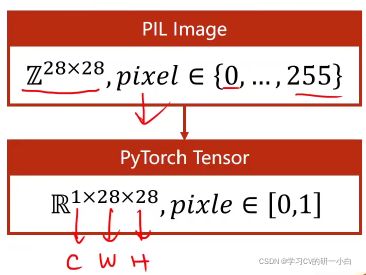

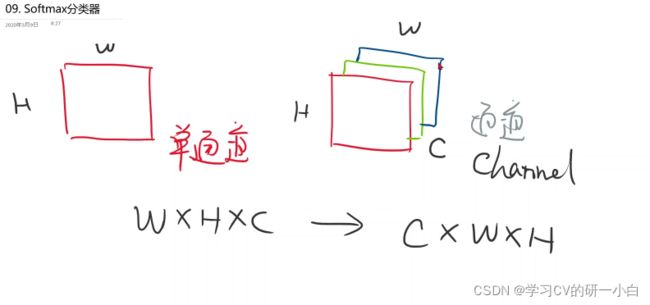

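- Image tensors: a grayscale (black-and-white) image has a single channel, while a color image has multiple channels (R, G and B). Each channel has a height H and a width W, and the number of channels is denoted C.

- What transform does: PyTorch reads images with Python's PIL. An image read by PIL is generally laid out as H x W x C, whereas PyTorch expects C x H x W for more efficient image processing. `transforms.ToTensor()` therefore converts the PIL image to a C x H x W tensor and rescales the pixel values from 0~255 to 0~1; `transforms.Normalize` then subtracts the mean (0.1307) and divides by the standard deviation (0.3081).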
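The two steps described in the notes can be imitated with plain Python lists to see what happens to the numbers. This is a rough sketch, not torchvision's implementation: `hwc_to_chw`, `to_unit_range` and `normalize` are hypothetical helper names, and only the constants 0.1307 and 0.3081 come from the code above.

```python
MEAN, STD = 0.1307, 0.3081  # the values passed to transforms.Normalize


def hwc_to_chw(image):
    """Reorder a nested list indexed [h][w][c] into one indexed [c][h][w]."""
    height, width, channels = len(image), len(image[0]), len(image[0][0])
    return [[[image[h][w][c] for w in range(width)]
             for h in range(height)]
            for c in range(channels)]


def to_unit_range(pixel):
    """Rescale an 8-bit pixel value (0~255) to the range 0~1."""
    return pixel / 255.0


def normalize(value, mean=MEAN, std=STD):
    """Subtract the mean and divide by the standard deviation."""
    return (value - mean) / std


# A made-up 2x3 grayscale image (H=2, W=3, C=1) with 8-bit pixel values.
hwc = [[[0], [128], [255]],
       [[255], [128], [0]]]
chw = hwc_to_chw(hwc)
print(len(chw), len(chw[0]), len(chw[0][0]))  # 1 2 3

for raw in (0, 128, 255):
    scaled = to_unit_range(raw)
    print(raw, round(scaled, 4), round(normalize(scaled), 4))
```

After normalization the darkest pixel becomes negative and the brightest becomes roughly 2.8, so the inputs are centered around zero rather than confined to 0~1.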

2 Build the model

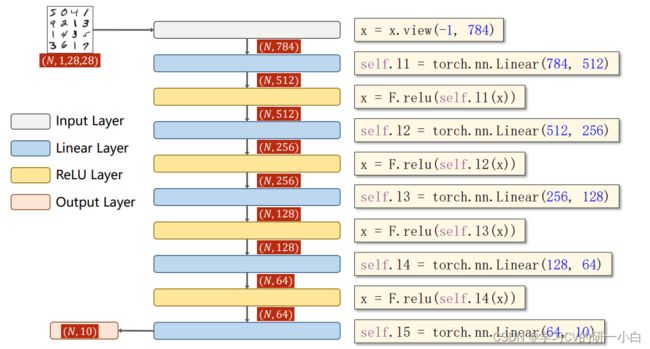

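A fully connected network requires its input to be a matrix, so the 1x28x28 third-order tensor of each image has to be turned into a first-order vector. To do this, the rows of the image are concatenated end to end into a single sequence, giving a vector of dimension 1x784. With N handwritten digit images in a batch, the input matrix has shape (N, 784). The model can then be designed as shown in the figure.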
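To get a feel for the size of such a model, the number of trainable parameters can be counted by hand. The sketch below assumes the layer widths 784, 512, 256, 128, 64, 10 used in this article's model code, and that each linear layer has a weight matrix of shape (out, in) plus a bias vector of length out, which is the default for `torch.nn.Linear`. The helper name `linear_param_count` is made up for this illustration.

```python
layer_sizes = [784, 512, 256, 128, 64, 10]


def linear_param_count(in_features, out_features):
    """Weights (in * out) plus one bias per output unit."""
    return in_features * out_features + out_features


total = 0
for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
    count = linear_param_count(n_in, n_out)
    total += count
    print(f"Linear({n_in}, {n_out}): {count} parameters")

print("total:", total)  # total: 575050
```

Most of the parameters sit in the first layer, because it is the one connected to all 784 input pixels.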

The code:

    import torch
    import torch.nn.functional as F

    # Design the model
    class Net(torch.nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.l1 = torch.nn.Linear(784, 512)
            self.l2 = torch.nn.Linear(512, 256)
            self.l3 = torch.nn.Linear(256, 128)
            self.l4 = torch.nn.Linear(128, 64)
            self.l5 = torch.nn.Linear(64, 10)

        def forward(self, x):
            # Flatten each 1x28x28 image into a 784-element vector: (N, 784)
            x = x.view(-1, 784)
            x = F.relu(self.l1(x))
            x = F.relu(self.l2(x))
            x = F.relu(self.l3(x))
            x = F.relu(self.l4(x))
            # No activation on the last layer: it outputs the raw scores
            return self.l5(x)

    model = Net()

3 Build the loss function and optimizer

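- Here we use the CrossEntropyLoss loss function. It expects the raw outputs (logits) of the last layer, which is why `forward` applies no activation to `l5`;

- Because the training set is fairly large, the optimizer is given a momentum parameter.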
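What these two choices compute can be sketched in plain Python for a single sample and a single parameter. This is an illustration under assumptions, not PyTorch's code: `cross_entropy` and `sgd_momentum_step` are hypothetical helper names, the logits and gradients are made up, and the update follows the momentum form documented for `torch.optim.SGD` with its default settings (velocity = momentum * velocity + grad, then param = param - lr * velocity).

```python
import math


def cross_entropy(logits, target):
    """-log(softmax(logits)[target]), computed via log-sum-exp for stability."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


def sgd_momentum_step(param, grad, velocity, lr=0.01, momentum=0.5):
    """One SGD-with-momentum update of a single scalar parameter."""
    velocity = momentum * velocity + grad
    param = param - lr * velocity
    return param, velocity


# Equal scores for all 10 classes give a loss of ln(10), about 2.303.
print(round(cross_entropy([0.0] * 10, 3), 3))

# A made-up example where class 0 has the highest score and is the target.
print(round(cross_entropy([2.0, 1.0, 0.1], 0), 3))

# Two updates with a constant (made-up) gradient of 2.0.
p, v = 1.0, 0.0
for _ in range(2):
    p, v = sgd_momentum_step(p, grad=2.0, velocity=v)
print(p, v)
```

With a constant gradient the velocity grows from step to step, so the second update moves the parameter further than the first; that is the effect the momentum parameter adds on top of plain SGD.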

The code:

    import torch.optim as optim

    # Build the loss function and optimizer
    criterion = torch.nn.CrossEntropyLoss()
    optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

4 Training and testing

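- For convenience, one epoch of training is wrapped in a function;

- The test function needs no backpropagation; only the forward pass is computed;

- torch.max called with a `dim` argument returns two values: the first is the maximum of each row, the second is the index of that maximum in each row;

- torch.no_grad(): gradients are not computed inside this block;

- The `_` in the test code is a placeholder for the maximum values, which are not needed. For the various underscore forms in Python (_, _xx, xx_, __xx, __xx__, _classname_), see the Zhihu article on what they all mean.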
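The way the test function turns network outputs into an accuracy figure can be sketched without PyTorch. The helper `row_max` below is a hypothetical stand-in that mimics the two return values described for torch.max with `dim=1`; the scores and labels are made up.

```python
def row_max(rows):
    """Return (max value of each row, index of that max in each row)."""
    values, indices = [], []
    for row in rows:
        best = max(range(len(row)), key=row.__getitem__)
        values.append(row[best])
        indices.append(best)
    return values, indices


# Made-up scores for 3 samples and 3 classes, with made-up true labels.
outputs = [[0.1, 2.5, -1.0],
           [3.0, 0.2, 0.4],
           [0.0, 0.1, 0.9]]
labels = [1, 0, 1]

# The maximum values themselves are not needed, so they are bound to "_".
_, predicted = row_max(outputs)
correct = sum(int(p == t) for p, t in zip(predicted, labels))
accuracy = 100 * correct / len(labels)

print(predicted)            # [1, 0, 2]
print(round(accuracy, 2))   # 66.67
```

The predicted class is simply the position of the largest score in each row, so no softmax is needed at test time.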

The code:

    # Define the training function (one epoch)
    def train(epoch):
        running_loss = 0.0
        for batch_idx, data in enumerate(train_loader, 0):
            inputs, target = data
            optimizer.zero_grad()

            # Forward pass + backward pass + parameter update
            outputs = model(inputs)
            loss = criterion(outputs, target)
            loss.backward()
            optimizer.step()

            running_loss += loss.item()
            # Print the average loss once every 300 iterations
            if batch_idx % 300 == 299:
                print('[%d,%5d] loss:%.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))
                running_loss = 0.0

    # Define the test function
    def test():
        correct = 0
        total = 0
        with torch.no_grad():
            for data in test_loader:
                images, labels = data
                outputs = model(images)
                # Index of the largest value along dimension 1 (the class dimension)
                _, predicted = torch.max(outputs, dim=1)
                total += labels.size(0)
                correct += (predicted == labels).sum().item()
        print('Accuracy on test set:%d %%' % (100 * correct / total))

    # Run training and testing
    if __name__ == '__main__':
        # Train for 10 epochs, testing after each one
        for epoch in range(10):
            train(epoch)
            test()

5 Complete code and run results

完整代码:

import torch

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim

# 准备数据集

batch_size = 64

transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307, ), (0.3081, ))

])

train_dataset = datasets.MNIST(root='../dataset/mnist/',

train=True,

download=True,

transform=transform)

train_loader = DataLoader(train_dataset,

shuffle=True,

batch_size=batch_size)

test_dataset = datasets.MNIST(root='../dataset/mnist',

train=False,

download=True,

transform=transform)

test_loader = DataLoader(test_dataset,

shuffle=False,

batch_size=batch_size)

# 设计模型

class Net(torch.nn.Module):

def __init__(self):

super(Net, self).__init__()

self.l1 = torch.nn.Linear(784, 512)

self.l2 = torch.nn.Linear(512, 256)

self.l3 = torch.nn.Linear(256, 128)

self.l4 = torch.nn.Linear(128, 64)

self.l5 = torch.nn.Linear(64, 10)

def forward(self, x):

x = x.view(-1, 784)

x = F.relu(self.l1(x))

x = F.relu(self.l2(x))

x = F.relu(self.l3(x))

x = F.relu(self.l4(x))

return self.l5(x)

model = Net()

# 构建损失函数和优化器

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

# 定义训练函数

def train(epoch):

running_loss = 0.0

for batch_idx, data in enumerate(train_loader, 0):

inputs, target = data

optimizer.zero_grad()

# 前馈+反馈+更新

outputs = model(inputs)

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

if batch_idx % 300 == 299:

print('[%d,%5d] loss:%.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))

running_loss = 0.0

# 定义测试函数

def test():

correct = 0

total = 0

with torch.no_grad():

for data in test_loader:

images, labels = data

outputs = model(images)

_, predicted = torch.max(outputs.data, dim=1)

total += labels.size(0)

correct += (predicted == labels).sum().item()

print('Accuracy on test set:%d %%' % (100 * correct / total))

# 实例化训练和测试

if __name__ == '__main__':

for epoch in range(10):

train(epoch)

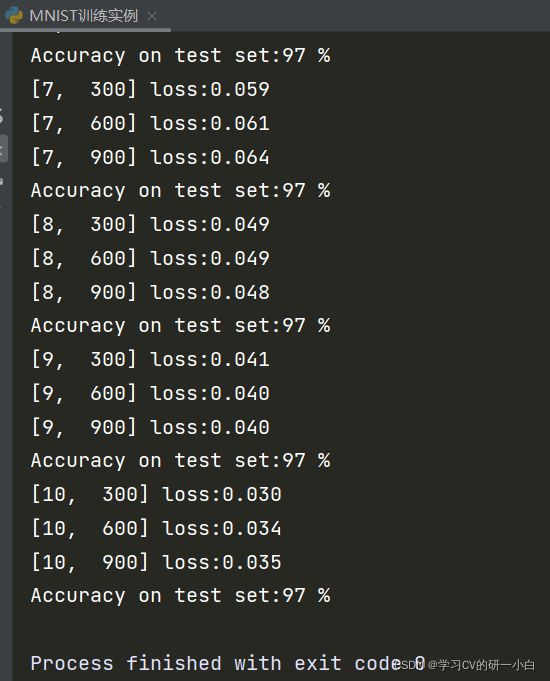

test()运行截图如下:

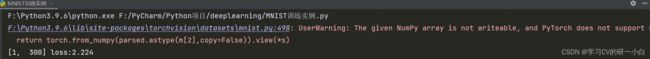
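As a rough sanity check on the shape of the log, the number of loss lines printed per epoch can be worked out from the batch size and the logging interval. The sketch assumes the standard MNIST split sizes of 60,000 training and 10,000 test images (the article does not state them) and the DataLoader default of keeping the last, smaller batch.

```python
import math

train_size, test_size = 60000, 10000  # assumed standard MNIST split sizes
batch_size, log_every = 64, 300       # values used in the code above

train_batches = math.ceil(train_size / batch_size)
test_batches = math.ceil(test_size / batch_size)
log_lines_per_epoch = train_batches // log_every
unlogged_batches = train_batches - log_lines_per_epoch * log_every

print(train_batches)        # 938
print(test_batches)         # 157
print(log_lines_per_epoch)  # 3
print(unlogged_batches)     # 38
```

Under these assumptions each epoch prints three loss lines (after batches 300, 600 and 900), and the loss of the remaining 38 batches is accumulated but never printed.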

6 Problems encountered

At run time the following warning appeared: "The given NumPy array is not writeable, and PyTorch does not support non-writeable tensors", as shown in the figure:

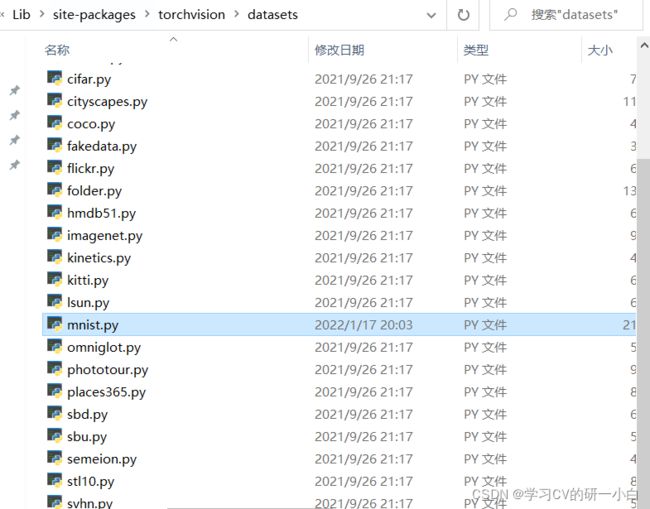

Follow the path shown in the warning to locate the mnist.py file:

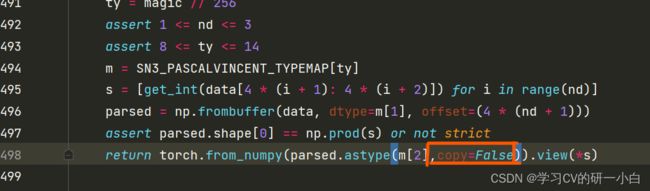

Open the file and delete the `copy=False` argument. After that the warning no longer appears and the program runs normally.