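1. Preface

Although I registered on cnblogs (博客园) more than five years ago, I only recently started blogging here in earnest. (Only after joining did I find out that everyone here is talented and pleasant to talk to, and I really like it here...) But because everything I write is about software testing, my posts have never drawn much traffic. Seeing the recommended-blog ranking on the home page made me curious about what these top bloggers write that is popular enough to get them recommended. So I used Python to crawl these 100 recommended blogs, did a simple analysis of each blog's post categories, most-read ranking, most-commented ranking, and most-recommended ranking, and finally aggregated the results and generated word clouds. Conveniently, this also works as an introductory Python crawler tutorial.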

2. Environment setup

2.1 Operating system and browser version

Windows 10

Chrome 62

2.2 Python version

Python 2.7

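2.3 Libraries used

1. requests: HTTP library

2. re: regular expressions

3. json: JSON data handling

4. BeautifulSoup: extracting data from HTML pages

5. jieba: Chinese word segmentation

6. wordcloud: word cloud generation

7. concurrent.futures: asynchronous concurrency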

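The third-party modules can all be installed with pip, as shown below. re and json ship with Python and need no installation; on Python 2.7, concurrent.futures is provided by the futures backport package.

    pip install requests
    pip install beautifulsoup4
    pip install jieba
    pip install wordcloud
    pip install futures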
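A quick way to confirm the installation is to try importing each module. The following is only a minimal sketch and not part of the original setup: the helper name `missing_modules` and the `REQUIRED_MODULES` list are hypothetical. It assumes the usual import names, where beautifulsoup4 is imported as `bs4` and the futures backport is imported as `concurrent.futures`.

```python
import importlib

# Import names for the libraries listed in section 2.3 (assumed mapping:
# beautifulsoup4 -> bs4, futures backport -> concurrent.futures).
REQUIRED_MODULES = ["requests", "re", "json", "bs4", "jieba",
                    "wordcloud", "concurrent.futures"]


def missing_modules(names):
    """Return the subset of `names` that cannot be imported."""
    missing = []
    for name in names:
        try:
            importlib.import_module(name)
        except ImportError:
            # Module is not installed (or not importable under this name).
            missing.append(name)
    return missing
```

Calling `missing_modules(REQUIRED_MODULES)` should return an empty list once everything is installed; any names it returns are the ones that still need a `pip install`.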

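3. Writing the crawler

With the environment ready, we can start writing the crawler. Before writing any code, though, we first need to analyze the pages we want to crawl.

3.1 Page analysis

3.1.1 Recommended-blog ranking on the cnblogs home page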

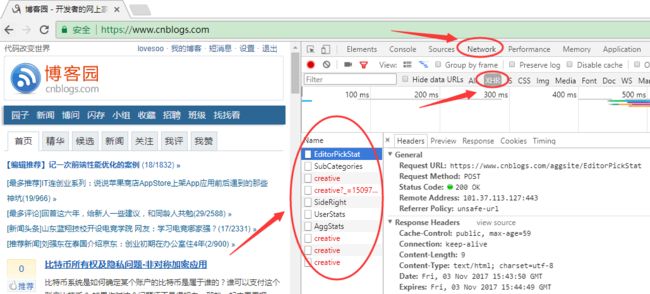

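1. Launch Chrome, press F12 to open the developer tools, and open the cnblogs home page: https://www.cnblogs.com/

2. Click Network on the right and select the XHR filter; clicking any of the requests listed below shows its detailed HTTP request information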

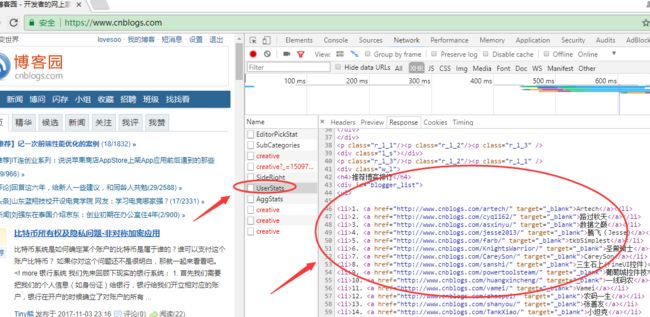

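3. Select Response on the right for each request in turn and inspect what it returns to find the endpoint we need. Here we find the UserStats endpoint, which returns the "recommended blog ranking" (推荐博客排行) information we are after

4. Click Headers on the right to see the request details. This is a plain HTTP GET endpoint that takes no parameters: https://www.cnblogs.com/aggsite/UserStats

5. So a simple request written with requests is enough to fetch the home page's "recommended blog ranking" information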

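    #coding:utf-8
    import requests

    # Plain GET request; the endpoint takes no parameters
    r = requests.get('https://www.cnblogs.com/aggsite/UserStats')
    # Python 2.7 print statement: dump the returned HTML fragment
    print r.text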
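The request above can be made a little more defensive, and the numbered ranking entries can be pulled out of the returned fragment with `re`. This is only a minimal sketch, not necessarily how the rest of this article does it. The names `fetch_user_stats`, `parse_ranking`, and `RANK_ENTRY` are hypothetical, and the pattern assumes the entries keep the `<li>N. <a href="...">name</a></li>` shape seen in the response shown below. Only the ranking entries carry a leading number, so the other two lists in the response are skipped.

```python
# -*- coding: utf-8 -*-
import re

USER_STATS_URL = "https://www.cnblogs.com/aggsite/UserStats"

# Assumed entry shape:
#   <li>1. <a href="http://www.cnblogs.com/artech/" target="_blank">Artech</a></li>
RANK_ENTRY = re.compile(
    r'<li>\s*(\d+)\.\s*<a href="([^"]+)"[^>]*>(.*?)</a>', re.S)


def fetch_user_stats(session=None, timeout=10):
    """Download the UserStats fragment and return it as text."""
    if session is None:
        # Imported lazily so parse_ranking() can be used without requests.
        import requests
        session = requests.Session()
    response = session.get(USER_STATS_URL, timeout=timeout)
    response.raise_for_status()  # raise on 4xx/5xx instead of parsing an error page
    return response.text


def parse_ranking(html):
    """Return (rank, url, name) tuples for the numbered ranking entries."""
    return [(int(rank), url, name.strip())
            for rank, url, name in RANK_ENTRY.findall(html)]
```

A typical call would be `parse_ranking(fetch_user_stats())`, which yields one tuple per ranked blog. The timeout and the status check are additions for robustness; the regular expression will need adjusting if the site changes its markup.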

The response looks like this:

    <p class="r_l_3"/><p class="r_l_2"/><p class="r_l_1" />
    <div class="w_l">
    <h4>博问专家排行</h4>
    <div>
    <ul>
    <li><a href="http://q.cnblogs.com/u/Galactica/" target="_blank">Launcher</a></li>
    <li><a href="http://q.cnblogs.com/u/astar/" target="_blank">Astar</a></li>
    <li><a href="http://q.cnblogs.com/u/humin/" target="_blank">幻天芒</a></li>
    <li><a href="http://q.cnblogs.com/u/dudu/" target="_blank">dudu</a></li>
    <li><a href="http://q.cnblogs.com/u/puda/" target="_blank">爱编程的大叔</a></li>
    <li><a href="http://q.cnblogs.com/u/downmoon/" target="_blank">邀月</a></li>
    <li><a href="http://q.cnblogs.com/u/wrx362114/" target="_blank">吴瑞祥</a></li>
    <li><a href="http://q.cnblogs.com/u/dingxue/" target="_blank">丁学</a></li>
    <li><a href="http://q.cnblogs.com/u/GrayZhang/" target="_blank">Gray Zhang</a></li>
    <li><a href="http://q.cnblogs.com/u/eaglet/" target="_blank">eaglet</a></li>
    <li class="blogger_more"><a href="http://q.cnblogs.com/q/rank" target="_blank">» 更多博问专家</a></li>
    </ul>
    </div>
    </div>
    <p class="r_l_1"/><p class="r_l_2"/><p class="r_l_3" />
    <div class="l_s"></div>
    <p class="r_l_3"/><p class="r_l_2"/><p class="r_l_1" />
    <div class="w_l">
    <h4>最新推荐博客</h4>
    <div>
    <ul>
    <li><a href="http://www.cnblogs.com/RainingNight/" target="_blank">雨夜朦胧</a></li>
    <li><a href="http://www.cnblogs.com/zhenbianshu/" target="_blank">枕边书</a></li>
    <li><a href="http://www.cnblogs.com/sparkdev/" target="_blank">sparkdev</a></li>
    <li><a href="http://www.cnblogs.com/ljhdo/" target="_blank">悦光阴</a></li>
    <li><a href="http://www.cnblogs.com/emrys5/" target="_blank">Emrys5</a></li>
    <li class="blogger_more"><a href="http://www.cnblogs.com/expert/" target="_blank">» 更多推荐博客</a></li>
    </ul>
    </div>
    </div>
    <p class="r_l_1"/><p class="r_l_2"/><p class="r_l_3" />
    <div class="l_s"></div>
    <p class="r_l_3"/><p class="r_l_2"/><p class="r_l_1" />
    <div class="w_l">
    <h4>推荐博客排行</h4>
    <div id="blogger_list">
    <ul>
    <li>1. <a href="http://www.cnblogs.com/artech/" target="_blank">Artech</a></li>
    <li>2. <a href="http://www.cnblogs.com/cyq1162/" target="_blank">路过秋天</a></li>
    <li>3. <a href="http://www.cnblogs.com/asxinyu/" target="_blank">数据之巅</a></li>
    <li>4. <a href="http://www.cnblogs.com/jesse2013/" target="_blank">腾飞(Jesse)</a></li>
    <li>5. <a href="http://www.cnblogs.com/farb/" target="_blank">tkbSimplest</a></li>
    <li>6. <a href="http://www.cnblogs.com/KnightsWarrior/" target="_blank">圣殿骑士</a></li>
    <li>7. <a href="http://www.cnblogs.com/CareySon/" target="_blank">CareySon</a></li>
    <li>8. <a href="http://www.cnblogs.com/sanshi/" target="_blank">三生石上(FineUI控件)</a></li>
    <li>9. <a href="http://www.cnblogs.com/powertoolsteam/" target="_blank">葡萄城控件技术团队</a></li>
    <li>10. <a href="http://www.cnblogs.com/huangxincheng/" target="_blank">一线码农</a></li>
    <li>11. <a href="http://www.cnblogs.com/vamei/" target="_blank">Vamei</a></li>
    <li>12. <a href="http://www.cnblogs.com/zhaopei/" target="_blank">农码一生</a></li>
    <li>13. <a href="http://www.cnblogs.com/shanyou/" target="_blank">张善友</a></li>
    <li>14. <a href="http://www.cnblogs.com/TankXiao/" target="_blank">小坦克</a></li>
    <li>15. <a href="http://www.cnblogs.com/coco1s/" target="_blank">ChokCoco</a></li>
    <li>16. <a href="http://www.cnblogs.com/JimmyZhang/" target="_blank">Jimmy Zhang</a></li>
    <li>17. <a href="http://www.cnblogs.com/edisonchou/" target="_blank">Edison Chou</a></li>
    <li>18. <a href="http://www.cnblogs.com/kenshincui/" target="_blank">KenshinCui</a></li>
    <li>19. <a href="http://www.cnblogs.com/heyuquan/" target="_blank">滴答的雨</a></li>
    <li>20. <a href="http://www.cnblogs.com/insus/" target="_blank">Insus.NET</a></li>
    <li>21. <a href="http://www.cnblogs.com/rubylouvre/" target="_blank">司徒正美</a></li>
    <li>22. <a href="http://www.cnblogs.com/aaronjs/" target="_blank">【艾伦】</a></li>
    <li>23. <a href="http://www.cnblogs.com/toutou/" target="_blank">请叫我头头哥</a></li>
    <li>24. <a href="http://www.cnblogs.com/savorboard/" target="_blank">Savorboard</a></li>
    <li>25. <a href="http://www.cnblogs.com/lyhabc/" target="_blank">桦仔</a></li>
    <li>26. <a href="http://www.cnblogs.com/Wayou/" target="_blank">刘哇勇</a></li>
    <li>27. <a href="http://www.cnblogs.com/gaochundong/" target="_blank">匠心十年</a></li>
    <li>28. <a href="http://www.cnblogs.com/keepfool/" target="_blank">keepfool</a></li>
    <li>29. <a href="http://www.cnblogs.com/zuoxiaolong/" target="_blank">左潇龙</a></li>
    <li>30. <a href="http://www.cnblogs.com/stoneniqiu/" target="_blank">stoneniqiu</a></li>
    <li>31. <a href="http://www.cnblogs.com/alamiye010/" target="_blank">深蓝色右手</a></li>
    <li>32. <a href="http://www.cnblogs.com/mindwind/" target="_blank">mindwind</a></li>
    <li>33. <a href="http://www.cnblogs.com/yanweidie/" target="_blank">焰尾迭</a></li>
    <li>34. <a href="http://www.cnblogs.com/baihmpgy/" target="_blank">道法自然</a></li>
    <li>35. <a href="http://www.cnblogs.com/netfocus/" target="_blank">netfocus</a></li>
    <li>36. <a href="http://www.cnblogs.com/ityouknow/" target="_blank">纯洁的微笑</a></li>
    <li>37. <a href="http://www.cnblogs.com/snandy/" target="_blank">snandy</a></li>
    <li>38. <a href="http://www.cnblogs.com/CreateMyself/" target="_blank">Jeffcky</a></li>
    <li>39. <a href="http://www.cnblogs.com/JustRun1983/" target="_blank">JustRun</a></li>
    <li>40. <a href="http://www.cnblogs.com/daxnet/" target="_blank">dax.net</a></li>
    <li>41. <a href="http://www.cnblogs.com/wolf-sun/" target="_blank">wolfy</a></li>
    <li>42. <a href="http://www.cnblogs.com/index-html/" target="_blank">EtherDream</a></li>
    <li>43. <a href="http://www.cnblogs.com/wangiqngpei557/" target="_blank">王清培</a></li>
    <li>44. <a href="http://www.cnblogs.com/kerrycode/" target="_blank">潇湘隐者</a></li>
    <li>45. <a href="http://www.cnblogs.com/chenxizhang/" target="_blank">陈希章</a></li>
    <li>46. <a href="http://www.cnblogs.com/freeflying/" target="_blank">自由飞</a></li>
    <li>47. <a href="http://www.cnblogs.com/lyj/" target="_blank">李永京</a></li>
    <li>48. <a href="http://www.cnblogs.com/xiaozhi_5638/" target="_blank">周见智</a></li>
    <li>49. <a href="http://www.cnblogs.com/OceanEyes/" target="_blank">木宛城主</a></li>
    <li>50. <a href="http://www.cnblogs.com/haogj/" target="_blank">冠军</a></li>
    <li>51. <a href="http://www.cnblogs.com/highend/" target="_blank">dotNetDR_</a></li>
    <li>52. <a href="http://www.cnblogs.com/downmoon/" target="_blank">邀月</a></li>
    <li>53. <a href="http://www.cnblogs.com/hustskyking/" target="_blank">Barret Lee</a></li>
    <li>54. <a href="http://www.cnblogs.com/chengxingliang/" target="_blank">程兴亮</a></li>
    <li>55. <a href="http://www.cnblogs.com/sparkdev/" target="_blank">sparkdev</a></li>
    <li>56. <a href="http://www.cnblogs.com/subconscious/" target="_blank">计算机的潜意识</a></li>
    <li>57. <a href="http://www.cnblogs.com/murongxiaopifu/" target="_blank">慕容小匹夫</a></li>
    <li>58. <a href="http://www.cnblogs.com/iamzhanglei/" target="_blank">【当耐特】</a></li>
    <li>59. <a href="http://www.cnblogs.com/vajoy/" target="_blank">vajoy</a></li>
    <li>60. <a href="http://www.cnblogs.com/yjmyzz/" target="_blank">菩提树下的杨过</a></li>
    <li>61. <a href="http://www.cnblogs.com/weidagang2046/" target="_blank">Todd Wei</a></li>
    <li>62. <a href="http://www.cnblogs.com/huang0925/" target="_blank">黄博文</a></li>
    <li>63. <a href="http://www.cnblogs.com/LoveJenny/" target="_blank">LoveJenny</a></li>
    <li>64. <a href="http://www.cnblogs.com/webabcd/" target="_blank">webabcd</a></li>
    <li>65. <a href="http://www.cnblogs.com/ljhdo/" target="_blank">悦光阴</a></li>
    <li>66. <a href="http://www.cnblogs.com/leslies2/" target="_blank">风尘浪子</a></li>
    <li>67. <a href="http://www.cnblogs.com/liuhaorain/" target="_blank">木小楠</a></li>
    <li>68. <a href="http://www.cnblogs.com/yukaizhao/" target="_blank">玉开</a></li>
    <li>69. <a href="http://www.cnblogs.com/over140/" target="_blank">农民伯伯</a></li>
    <li>70. <a href="http://www.cnblogs.com/TerryBlog/" target="_blank">Terry_龙</a></li>
    <li>71. <a href="http://www.cnblogs.com/bitzhuwei/" target="_blank">BIT祝威</a></li>
    <li>72. <a href="http://www.cnblogs.com/zjutlitao/" target="_blank">beautifulzzzz</a></li>
    <li>73. <a href="http://www.cnblogs.com/GoodHelper/" target="_blank">刘冬.NET</a></li>
    <li>74. <a href="http://www.cnblogs.com/legendxian/" target="_blank">传说中的弦哥</a></li>
    <li>75. <a href="http://www.cnblogs.com/luminji/" target="_blank">最课程陆敏技</a></li>
    <li>76. <a href="http://www.cnblogs.com/zichi/" target="_blank">韩子迟</a></li>
    <li>77. <a href="http://www.cnblogs.com/daizhj/" target="_blank">代震军</a></li>
    <li>78. <a href="http://www.cnblogs.com/lsxqw2004/" target="_blank">hystar</a></li>
    <li>79. <a href="http://www.cnblogs.com/dowinning/" target="_blank">随它去吧</a></li>
    <li>80. <a href="http://www.cnblogs.com/hongru/" target="_blank">岑安</a></li>
    <li>81. <a href="http://www.cnblogs.com/skyme/" target="_blank">skyme</a></li>
    <li>82. <a href="http://www.cnblogs.com/DebugLZQ/" target="_blank">DebugLZQ</a></li>
    <li>83. <a href="http://www.cnblogs.com/unruledboy/" target="_blank">灵感之源</a></li>
    <li>84. <a href="http://www.cnblogs.com/jyk/" target="_blank">金色海洋(jyk)阳光男孩</a></li>
    <li>85. <a href="http://www.cnblogs.com/skyivben/" target="_blank">银河</a></li>
    <li>86. <a href="http://www.cnblogs.com/lovecindywang/" target="_blank">lovecindywang</a></li>
    <li>87. <a href="http://www.cnblogs.com/graphics/" target="_blank">zdd</a></li>
    <li>88. <a href="http://www.cnblogs.com/foreach-break/" target="_blank">foreach_break</a></li>
    <li>89. <a href="http://www.cnblogs.com/zgynhqf/" target="_blank">BloodyAngel</a></li>
    <li>90. <a href="http://www.cnblogs.com/jeffwongishandsome/" target="_blank">JeffWong</a></li>
    <li>91. <a href="http://www.cnblogs.com/zhongweiv/" target="_blank">porschev</a></li>
    <li>92. <a href="http://www.cnblogs.com/me-sa/" target="_blank">坚强2002</a></li>
    <li>93. <a href="http://www.cnblogs.com/leefreeman/" target="_blank">飘扬的红领巾</a></li>
    <li>94. <a href="http://www.cnblogs.com/hlxs/" target="_blank">啊汉</a></li>
    <li>95. <a href="http://www.cnblogs.com/del/" target="_blank">万一</a></li>
    <li>96. <a href="http://www.cnblogs.com/dinglang/" target="_blank">丁浪</a></li>
    <li>97. <a href="http://www.cnblogs.com/oppoic/" target="_blank">心态要好</a></li>
    <li>98. <a href="http://www.cnblogs.com/1-2-3/" target="_blank">1-2-3</a>