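TensorFlow Study Notes: CIFAR10 and VGG13 in Practice

Two posts ago, in "TensorFlow Study Notes: Simple Classification on the Fashion MNIST Dataset", we covered simple classification on Fashion MNIST. That dataset stores only grayscale information, so it is not suitable for network models that take RGB three-channel input. In this post we work through CIFAR10 with VGG13.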

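Contents

- 1 The CIFAR10 Dataset
  - 1.1 Introduction to CIFAR10
  - 1.2 Downloading the CIFAR10 Dataset
    - 1.2.1 Official download
    - 1.2.2 Loading directly through the keras module
- 2 VGG13
  - 2.1 Why VGG13
  - 2.2 Modifications to the VGG network structure
  - 2.3 VGG13 model structure
- 3 Code
  - 3.1 Importing the required libraries
  - 3.2 Data preprocessing
  - 3.3 Main function
  - 3.4 VGG network structure
  - 3.5 Output
- 4 A line to end each post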

1 The CIFAR10 Dataset

1.1 Introduction to CIFAR10

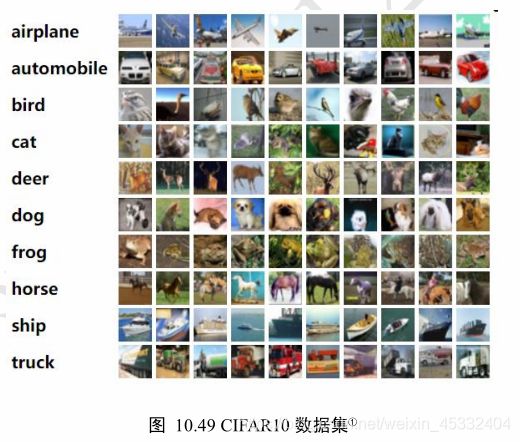

CIFAR-10 is a small dataset for recognizing everyday objects, collected by Alex Krizhevsky, Vinod Nair, and Geoffrey Hinton. It contains RGB color images in 10 classes: airplane, automobile, bird, cat, deer, dog, frog, horse, ship, and truck. Each image is 32×32 pixels, and the dataset has 50000 training images and 10000 test images. The figure above lists the 10 classes and shows 10 random images from each.

1.2 Downloading the CIFAR10 Dataset

1.2.1 Official download

http://www.cs.toronto.edu/~kriz/cifar-10-python.tar.gz
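For readers who do use the official archive, here is a minimal sketch of how the rows of one pickled batch can be turned into image tensors. It assumes the layout described on the CIFAR-10 page: each batch holds a uint8 `data` array of shape (10000, 3072), where every row stores the 1024 red values, then the 1024 green values, then the 1024 blue values of one image in row-major order. The function name `rows_to_images` and the synthetic input are illustrative only.

```python
import numpy as np

def rows_to_images(data):
    """Convert CIFAR-style flat rows [N, 3072] into images [N, 32, 32, 3]."""
    data = np.asarray(data, dtype=np.uint8)
    # Each row is stored channel-first: 3 planes of 32x32 values.
    planes = data.reshape(-1, 3, 32, 32)
    # Move the channel axis last to get the [N, H, W, C] layout used by Keras.
    return planes.transpose(0, 2, 3, 1)

# Synthetic stand-in for a batch: 2 images with red = 10, green = 20, blue = 30.
fake_rows = np.concatenate(
    [np.full((2, 1024), v, dtype=np.uint8) for v in (10, 20, 30)], axis=1
)
images = rows_to_images(fake_rows)
print(images.shape)     # (2, 32, 32, 3)
print(images[0, 0, 0])  # [10 20 30]
```

With a real batch, the same function would be applied to the `data` entry obtained by unpickling one of the batch files.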

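1.2.2 Loading directly through the keras module

In TensorFlow there is no need to download, parse, and load CIFAR10 by hand. The datasets.cifar10.load_data() function returns the training set and test set already split.

from tensorflow.keras import datasets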

(x,y), (x_test, y_test) = datasets.cifar10.load_data()

TensorFlow automatically downloads the dataset to C:\Users\<username>\.keras\datasets, where you can inspect it or manually delete cached datasets you no longer need.
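To check what is currently cached, a small sketch like the following can help. It assumes the default Keras cache location under the user's home directory (that is, the `KERAS_HOME` environment variable is not set). The helper name `keras_dataset_cache` is made up for this example.

```python
import os

def keras_dataset_cache(home=None):
    """Return the default directory where Keras caches downloaded datasets."""
    # Assumption: KERAS_HOME is not set, so the cache lives under the home directory.
    home = home or os.path.expanduser("~")
    return os.path.join(home, ".keras", "datasets")

cache_dir = keras_dataset_cache()
print(cache_dir)
# List cached files only if the directory already exists.
if os.path.isdir(cache_dir):
    for name in sorted(os.listdir(cache_dir)):
        print(" ", name)
```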

2 VGG13

2.1 Why VGG13

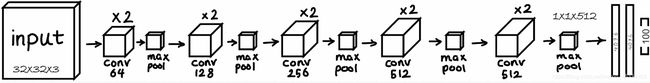

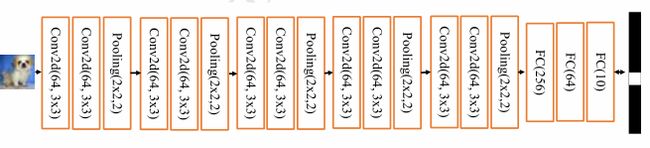

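Image recognition on CIFAR10 is not an easy task. The main reason is that the images are stored at only 32x32 resolution, so part of the subject detail is blurry and sometimes even the human eye struggles to tell the classes apart. Shallow neural networks have limited expressive power and are hard to train to good performance, so we use the VGG13 network and modify part of its structure to suit this dataset.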

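2.2 Modifications to the VGG network structure

- The original VGG network takes 224x224 input; here the input size is changed to 32x32. Keeping the original input size would make the input feature dimension of the fully connected layers, and with it the parameter count, too large.

- The three fully connected layers are resized to [256, 128, 10], matching the code in section 3.3.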
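The effect of these two changes on the parameter count can be checked with a little arithmetic. The sketch below compares the fully connected head of the original VGG (assuming its standard 4096, 4096, 1000 layer sizes on 7x7x512 features) with the head used in this post. The sizes for this post follow the code in section 3.3 (256, 128, 10), which reproduces the 165,514 parameters reported in section 3.5. `dense_params` is a helper written for this example.

```python
def dense_params(dims):
    """Count weights and biases of stacked Dense layers, dims = [in, h1, ..., out]."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(dims[:-1], dims[1:]))

# Original VGG head: 224x224 input, five 2x2 poolings -> 7x7x512 = 25088 features.
original_head = dense_params([7 * 7 * 512, 4096, 4096, 1000])
# Head used here: 32x32 input, five 2x2 poolings -> 1x1x512 = 512 features.
cifar_head = dense_params([1 * 1 * 512, 256, 128, 10])

print(original_head)  # 123642856
print(cifar_head)     # 165514
```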

2.3 VGG13 model structure
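As a rough guide to the structure, the sketch below traces the feature-map shape through the convolutional part. It assumes five Conv-Conv-Pooling units with 64, 128, 256, 512, and 512 channels, which is what the `[b, 1, 1, 512]` shape comment in section 3.3 and the parameter count in section 3.5 imply. The function name `trace_vgg13_shapes` is illustrative.

```python
import math

def trace_vgg13_shapes(size=32, channels=(64, 128, 256, 512, 512)):
    """Return the (H, W, C) shape after each Conv-Conv-Pooling unit."""
    shapes = []
    for c in channels:
        # 3x3 'same' convolutions keep H and W unchanged;
        # 2x2 stride-2 'same' pooling halves them, rounding up.
        size = math.ceil(size / 2)
        shapes.append((size, size, c))
    return shapes

for i, shape in enumerate(trace_vgg13_shapes(), start=1):
    print(f"unit {i}: {shape}")
# unit 1: (16, 16, 64)
# unit 2: (8, 8, 128)
# unit 3: (4, 4, 256)
# unit 4: (2, 2, 512)
# unit 5: (1, 1, 512)
```

The final 1x1x512 map is flattened to 512 features and passed to the three fully connected layers, giving 10 convolutional layers plus 3 fully connected layers.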

3 Code

3.1 Importing the required libraries

import os

import tensorflow as tf
from tensorflow.keras import layers, optimizers, datasets, Sequential

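3.2 Data preprocessing

def preprocess(x, y):
    # Scale pixel values to [-1, 1] and cast labels to int32
    x = 2 * tf.cast(x, dtype=tf.float32) / 255. - 1
    y = tf.cast(y, dtype=tf.int32)
    return x, y

# Load the dataset
(x, y), (x_test, y_test) = datasets.cifar10.load_data()
# Drop the trailing label dimension: [b, 1] => [b]
y = tf.squeeze(y, axis=1)
y_test = tf.squeeze(y_test, axis=1)
print(x.shape, y.shape, x_test.shape, y_test.shape)

# Build the training pipeline (the shuffle buffer of 1000 and batch size of 128 are assumed values)
train_db = tf.data.Dataset.from_tensor_slices((x, y))
train_db = train_db.shuffle(1000).map(preprocess).batch(128)
# Build the test pipeline
test_db = tf.data.Dataset.from_tensor_slices((x_test, y_test))
test_db = test_db.map(preprocess).batch(64)

# Inspect one training batch: shapes and value range
sample = next(iter(train_db))
print('sample:', sample[0].shape, sample[1].shape,
      tf.reduce_min(sample[0]), tf.reduce_max(sample[0]))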
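The scaling in `preprocess` maps pixel values to the range [-1, 1], not [0, 1]. The NumPy sketch below mirrors the two transformations on toy data so the effect is easy to see without loading the dataset. The function name `scale_to_unit_range` and the sample values are illustrative.

```python
import numpy as np

def scale_to_unit_range(x):
    """Map uint8 pixel values from [0, 255] to [-1, 1]."""
    return 2.0 * x.astype(np.float32) / 255.0 - 1.0

pixels = np.array([0, 127, 255], dtype=np.uint8)
scaled = scale_to_unit_range(pixels)
print(scaled.min(), scaled.max())  # -1.0 1.0

# Labels arrive with shape [N, 1]; squeezing axis 1 gives shape [N].
labels = np.array([[3], [8]])
print(np.squeeze(labels, axis=1).shape)  # (2,)
```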

3.3 Main function

def main():
    # Wrap the conv_layers list (section 3.4) in a network container
    conv_net = Sequential(conv_layers)

    # The fully connected sub-network has 3 Dense layers;
    # every layer except the last uses a ReLU activation
    fc_net = Sequential([
        layers.Dense(256, activation=tf.nn.relu),
        layers.Dense(128, activation=tf.nn.relu),
        layers.Dense(10, activation=None),
    ])

    # Build the 2 sub-networks and print their parameter summaries
    conv_net.build(input_shape=[None, 32, 32, 3])
    fc_net.build(input_shape=[None, 512])
    conv_net.summary()
    fc_net.summary()

    optimizer = optimizers.Adam(learning_rate=1e-4)

    # Concatenate the trainable variables of the 2 sub-networks
    variables = conv_net.trainable_variables + fc_net.trainable_variables

    for epoch in range(50):  # train for 50 epochs
        for step, (x, y) in enumerate(train_db):
            with tf.GradientTape() as tape:
                # [b, 32, 32, 3] => [b, 1, 1, 512]
                out = conv_net(x)
                # flatten => [b, 512]
                out = tf.reshape(out, [-1, 512])
                # [b, 512] => [b, 10]
                logits = fc_net(out)
                # [b] => [b, 10]
                y_onehot = tf.one_hot(y, depth=10)
                # compute the loss
                loss = tf.losses.categorical_crossentropy(y_onehot, logits, from_logits=True)
                loss = tf.reduce_mean(loss)

            # Gradients with respect to all parameters
            grads = tape.gradient(loss, variables)
            # Apply the update
            optimizer.apply_gradients(zip(grads, variables))

            if step % 100 == 0:
                print(epoch, step, 'loss:', float(loss))

        # Evaluate on the test set after each epoch
        total_num = 0
        total_correct = 0
        for x, y in test_db:
            out = conv_net(x)
            out = tf.reshape(out, [-1, 512])
            logits = fc_net(out)
            prob = tf.nn.softmax(logits, axis=1)
            pred = tf.argmax(prob, axis=1)
            pred = tf.cast(pred, dtype=tf.int32)

            correct = tf.cast(tf.equal(pred, y), dtype=tf.int32)
            correct = tf.reduce_sum(correct)

            total_num += x.shape[0]
            total_correct += int(correct)

        acc = total_correct / total_num
        print(epoch, 'acc:', acc)
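The evaluation loop accumulates the number of correct predictions batch by batch and divides by the total at the end, which stays correct even when the last batch is smaller than the others. The NumPy sketch below shows the same bookkeeping on two hypothetical batches of 3-class logits. The data and the helper `count_correct` are invented for illustration.

```python
import numpy as np

def count_correct(logits, labels):
    """Return how many rows of logits have their argmax equal to the label."""
    # softmax is monotonic, so argmax over logits equals argmax over probabilities.
    pred = np.argmax(logits, axis=1)
    return int(np.sum(pred == labels))

# Two hypothetical batches of different sizes: (logits, integer labels).
batches = [
    (np.array([[2.0, 0.1, 0.3], [0.2, 0.1, 3.0]]), np.array([0, 1])),
    (np.array([[0.0, 5.0, 1.0]]), np.array([1])),
]
total_correct = sum(count_correct(lg, y) for lg, y in batches)
total_num = sum(len(y) for _, y in batches)
print(total_correct / total_num)  # 0.6666666666666666
```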

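3.4 VGG network structure

# First create the list of network layers
conv_layers = [
    # Conv-Conv-Pooling unit 1
    # 64 3x3 kernels, output the same size as the input
    layers.Conv2D(64, kernel_size=[3, 3], padding="same", activation=tf.nn.relu),
    layers.Conv2D(64, kernel_size=[3, 3], padding="same", activation=tf.nn.relu),
    # Height and width halved
    layers.MaxPool2D(pool_size=[2, 2], strides=2, padding='same'),

    # Conv-Conv-Pooling unit 2: 128 output channels, height and width halved
    layers.Conv2D(128, kernel_size=[3, 3], padding="same", activation=tf.nn.relu),
    layers.Conv2D(128, kernel_size=[3, 3], padding="same", activation=tf.nn.relu),
    layers.MaxPool2D(pool_size=[2, 2], strides=2, padding='same'),

    # Conv-Conv-Pooling unit 3: 256 output channels, height and width halved
    layers.Conv2D(256, kernel_size=[3, 3], padding="same", activation=tf.nn.relu),
    layers.Conv2D(256, kernel_size=[3, 3], padding="same", activation=tf.nn.relu),
    layers.MaxPool2D(pool_size=[2, 2], strides=2, padding='same'),

    # Conv-Conv-Pooling unit 4: 512 output channels, height and width halved
    layers.Conv2D(512, kernel_size=[3, 3], padding="same", activation=tf.nn.relu),
    layers.Conv2D(512, kernel_size=[3, 3], padding="same", activation=tf.nn.relu),
    layers.MaxPool2D(pool_size=[2, 2], strides=2, padding='same'),

    # Conv-Conv-Pooling unit 5: 512 output channels, height and width halved to 1x1
    # (needed for the [b, 1, 1, 512] output and the 9,404,992 parameters in section 3.5)
    layers.Conv2D(512, kernel_size=[3, 3], padding="same", activation=tf.nn.relu),
    layers.Conv2D(512, kernel_size=[3, 3], padding="same", activation=tf.nn.relu),
    layers.MaxPool2D(pool_size=[2, 2], strides=2, padding='same')
]

# conv_layers must be defined before main() is called
if __name__ == '__main__':
    main()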
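The size of the convolutional stack can be cross-checked against the summary in section 3.5 by counting parameters by hand. The sketch below does that for 3x3 kernels with biases and two convolutions per unit. The helper names are made up for this example. Under these assumptions, four units give 4,685,376 parameters and five units give 9,404,992, so the reported total corresponds to a stack with five Conv-Conv-Pooling units.

```python
def conv_params(in_channels, out_channels, kernel=3):
    """Weights plus biases of one Conv2D layer with a square kernel."""
    return kernel * kernel * in_channels * out_channels + out_channels

def conv_stack_params(unit_channels, in_channels=3, convs_per_unit=2):
    """Total parameters of stacked Conv-Conv-Pooling units (pooling has none)."""
    total = 0
    for out_channels in unit_channels:
        for _ in range(convs_per_unit):
            total += conv_params(in_channels, out_channels)
            in_channels = out_channels
    return total

print(conv_stack_params([64, 128, 256, 512]))       # 4685376
print(conv_stack_params([64, 128, 256, 512, 512]))  # 9404992
```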

3.5 Output

Convolutional layer parameter count

Total params: 9,404,992

Trainable params: 9,404,992

Non-trainable params: 0

Fully connected layer parameter count

Total params: 165,514

Trainable params: 165,514

Non-trainable params: 0

loss

loss: 2.302992582321167
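The logged loss of about 2.303 is close to ln 10 ≈ 2.3026, the cross-entropy of a classifier that assigns equal probability to all ten classes. One plausible reading is that this value was printed near the start of training, before the network had learned much, though the post does not say at which step it was logged. The NumPy sketch below reproduces the ln 10 value; `softmax_cross_entropy` is a helper written for this example, not the TensorFlow function used above.

```python
import numpy as np

def softmax_cross_entropy(logits, label):
    """Cross-entropy of one example computed directly from its logits."""
    shifted = logits - np.max(logits)  # subtract the max for numerical stability
    log_probs = shifted - np.log(np.sum(np.exp(shifted)))
    return float(-log_probs[label])

# Equal logits mean equal probability (1/10) for every class.
uniform_logits = np.zeros(10)
loss = softmax_cross_entropy(uniform_logits, label=3)
print(loss, np.log(10))
```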

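4 A line to end each post

The light the stars gave me tonight will last me the rest of my life. If all goes well, I will not use it up even in the next one.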