Statistical Learning Methods 03: The Naive Bayes Algorithm

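Contents

1. Basic principles of naive Bayes

2. Implementing the naive Bayes algorithm

2.1 Preparing and processing the dataset

2.2 GaussianNB: Gaussian naive Bayes

2.2.1 The @staticmethod decorator

2.2.2 Coding the probability statistics

2.3 Gaussian naive Bayes with scikit-learn

2.4 The Bernoulli and multinomial naive Bayes models

3. Further reading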

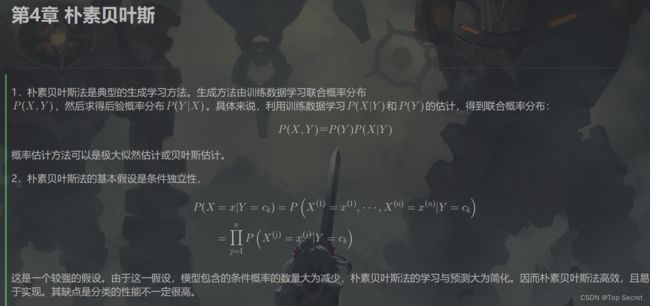

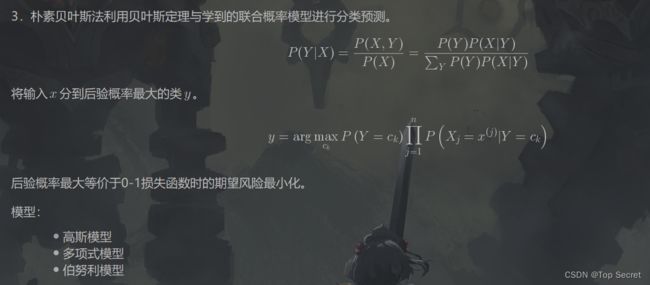

1. Basic principles of naive Bayes
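As a brief reminder, the standard formulation of naive Bayes can be written as follows. The notation is an assumption chosen for this note: $c_k$ denotes a class, $x^{(j)}$ the $j$-th feature of a sample $x$, and $n$ the number of features. Bayes' theorem combined with the conditional-independence assumption gives the posterior

$$
P(Y = c_k \mid X = x) = \frac{P(Y = c_k)\prod_{j=1}^{n} P(X^{(j)} = x^{(j)} \mid Y = c_k)}{\sum_{k'} P(Y = c_{k'})\prod_{j=1}^{n} P(X^{(j)} = x^{(j)} \mid Y = c_{k'})}
$$

Since the denominator is the same for every class, the predicted class is the one that maximizes the numerator:

$$
\hat{y} = \arg\max_{c_k} \; P(Y = c_k)\prod_{j=1}^{n} P(X^{(j)} = x^{(j)} \mid Y = c_k)
$$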

2. Implementing the naive Bayes algorithm

2.1 Preparing and processing the dataset

import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
from sklearn.datasets import load_iris  # provides the dataset
from sklearn.model_selection import train_test_split  # splits the dataset
from collections import Counter
import math

# Load the data and preprocess it
def create_data():
    iris = load_iris()  # load the iris dataset
    df = pd.DataFrame(iris.data, columns=iris.feature_names)
    df['label'] = iris.target
    df.columns = ['sepal length', 'sepal width', 'petal length', 'petal width', 'label']
    data = np.array(df.iloc[:100, :])  # keep the first 100 rows (classes 0 and 1 only)
    print("Contents of data:", data)
    return data[:, :-1], data[:, -1]

# create_data() returns data[:, :-1] and data[:, -1], which are assigned to X and y
# Tips: data[:, :-1] selects every column except the last (the features)
#       data[:, -1] selects the last column (usually the labels)
X, y = create_data()

# Split the features X and the labels y into training and test sets with test_size=0.3
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3)
print("First test sample and its label:", X_test[0], y_test[0])

C:\Users\ZARD\anaconda3\envs\PyTorch\python.exe C:/Users/ZARD/PycharmProjects/pythonProject/统计学习算法实现/Naive_Bayes.py

Contents of data: [[5.1 3.5 1.4 0.2 0. ]

[4.9 3. 1.4 0.2 0. ]

[4.7 3.2 1.3 0.2 0. ]

[4.6 3.1 1.5 0.2 0. ]

[5. 3.6 1.4 0.2 0. ]

[5.4 3.9 1.7 0.4 0. ]

[4.6 3.4 1.4 0.3 0. ]

[5. 3.4 1.5 0.2 0. ]

[4.4 2.9 1.4 0.2 0. ]

[4.9 3.1 1.5 0.1 0. ]

[5.4 3.7 1.5 0.2 0. ]

[4.8 3.4 1.6 0.2 0. ]

[4.8 3. 1.4 0.1 0. ]

[4.3 3. 1.1 0.1 0. ]

[5.8 4. 1.2 0.2 0. ]

[5.7 4.4 1.5 0.4 0. ]

[5.4 3.9 1.3 0.4 0. ]

[5.1 3.5 1.4 0.3 0. ]

[5.7 3.8 1.7 0.3 0. ]

[5.1 3.8 1.5 0.3 0. ]

[5.4 3.4 1.7 0.2 0. ]

[5.1 3.7 1.5 0.4 0. ]

[4.6 3.6 1. 0.2 0. ]

[5.1 3.3 1.7 0.5 0. ]

[4.8 3.4 1.9 0.2 0. ]

[5. 3. 1.6 0.2 0. ]

[5. 3.4 1.6 0.4 0. ]

[5.2 3.5 1.5 0.2 0. ]

[5.2 3.4 1.4 0.2 0. ]

[4.7 3.2 1.6 0.2 0. ]

[4.8 3.1 1.6 0.2 0. ]

[5.4 3.4 1.5 0.4 0. ]

[5.2 4.1 1.5 0.1 0. ]

[5.5 4.2 1.4 0.2 0. ]

[4.9 3.1 1.5 0.2 0. ]

[5. 3.2 1.2 0.2 0. ]

[5.5 3.5 1.3 0.2 0. ]

[4.9 3.6 1.4 0.1 0. ]

[4.4 3. 1.3 0.2 0. ]

[5.1 3.4 1.5 0.2 0. ]

[5. 3.5 1.3 0.3 0. ]

[4.5 2.3 1.3 0.3 0. ]

[4.4 3.2 1.3 0.2 0. ]

[5. 3.5 1.6 0.6 0. ]

[5.1 3.8 1.9 0.4 0. ]

[4.8 3. 1.4 0.3 0. ]

[5.1 3.8 1.6 0.2 0. ]

[4.6 3.2 1.4 0.2 0. ]

[5.3 3.7 1.5 0.2 0. ]

[5. 3.3 1.4 0.2 0. ]

[7. 3.2 4.7 1.4 1. ]

[6.4 3.2 4.5 1.5 1. ]

[6.9 3.1 4.9 1.5 1. ]

[5.5 2.3 4. 1.3 1. ]

[6.5 2.8 4.6 1.5 1. ]

[5.7 2.8 4.5 1.3 1. ]

[6.3 3.3 4.7 1.6 1. ]

[4.9 2.4 3.3 1. 1. ]

[6.6 2.9 4.6 1.3 1. ]

[5.2 2.7 3.9 1.4 1. ]

[5. 2. 3.5 1. 1. ]

[5.9 3. 4.2 1.5 1. ]

[6. 2.2 4. 1. 1. ]

[6.1 2.9 4.7 1.4 1. ]

[5.6 2.9 3.6 1.3 1. ]

[6.7 3.1 4.4 1.4 1. ]

[5.6 3. 4.5 1.5 1. ]

[5.8 2.7 4.1 1. 1. ]

[6.2 2.2 4.5 1.5 1. ]

[5.6 2.5 3.9 1.1 1. ]

[5.9 3.2 4.8 1.8 1. ]

[6.1 2.8 4. 1.3 1. ]

[6.3 2.5 4.9 1.5 1. ]

[6.1 2.8 4.7 1.2 1. ]

[6.4 2.9 4.3 1.3 1. ]

[6.6 3. 4.4 1.4 1. ]

[6.8 2.8 4.8 1.4 1. ]

[6.7 3. 5. 1.7 1. ]

[6. 2.9 4.5 1.5 1. ]

[5.7 2.6 3.5 1. 1. ]

[5.5 2.4 3.8 1.1 1. ]

[5.5 2.4 3.7 1. 1. ]

[5.8 2.7 3.9 1.2 1. ]

[6. 2.7 5.1 1.6 1. ]

[5.4 3. 4.5 1.5 1. ]

[6. 3.4 4.5 1.6 1. ]

[6.7 3.1 4.7 1.5 1. ]

[6.3 2.3 4.4 1.3 1. ]

[5.6 3. 4.1 1.3 1. ]

[5.5 2.5 4. 1.3 1. ]

[5.5 2.6 4.4 1.2 1. ]

[6.1 3. 4.6 1.4 1. ]

[5.8 2.6 4. 1.2 1. ]

[5. 2.3 3.3 1. 1. ]

[5.6 2.7 4.2 1.3 1. ]

[5.7 3. 4.2 1.2 1. ]

[5.7 2.9 4.2 1.3 1. ]

[6.2 2.9 4.3 1.3 1. ]

[5.1 2.5 3. 1.1 1. ]

[5.7 2.8 4.1 1.3 1. ]]

First test sample and its label: [4.8 3.1 1.6 0.2] 0.0

Process finished with exit code 0

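Tips: data[:, :-1] selects every column except the last (used as the features), and data[:, -1] selects only the last column (usually the labels).

Wrapping the data loading and preprocessing steps in a function, as create_data() does, is also a good habit.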
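The two slices can be tried on a small array. The following is a minimal sketch; the toy `data` array is hypothetical and only stands in for the iris table above.

```python
import numpy as np

# Hypothetical toy array: 3 samples, 2 feature columns, 1 label column
data = np.array([
    [5.1, 3.5, 0.0],
    [4.9, 3.0, 0.0],
    [7.0, 3.2, 1.0],
])

X = data[:, :-1]  # all rows, every column except the last -> features
y = data[:, -1]   # all rows, last column only -> labels

print(X.shape)  # (3, 2)
print(y.shape)  # (3,)
```

Note that `data[:, -1]` returns a one-dimensional array, which is the shape scikit-learn expects for labels.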

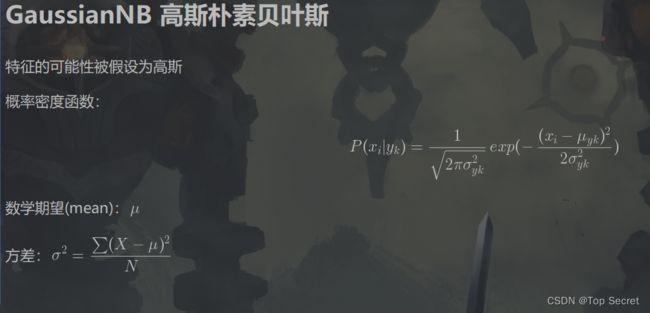

2.2 GaussianNB: Gaussian naive Bayes

Reference: https://machinelearningmastery.com/naive-bayes-classifier-scratch-python/
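For continuous features such as the iris measurements, Gaussian naive Bayes assumes that each feature follows a normal distribution within each class. In the notation assumed here, $\mu_{k,j}$ and $\sigma_{k,j}$ are the mean and standard deviation of feature $j$ estimated from the training samples of class $c_k$, and the class-conditional density is

$$
p(x^{(j)} \mid Y = c_k) = \frac{1}{\sqrt{2\pi}\,\sigma_{k,j}} \exp\left(-\frac{(x^{(j)} - \mu_{k,j})^2}{2\sigma_{k,j}^2}\right)
$$

Training therefore reduces to estimating one $(\mu_{k,j}, \sigma_{k,j})$ pair per class and feature, and prediction to multiplying these densities over the features of a sample.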

# Continues from the data-preparation code in section 2.1
# (same imports, and the same X_train, X_test, y_train, y_test)

class NaiveBayes:
    def __init__(self):
        self.model = None

    # Mean (expected value)
    @staticmethod  # static method: callable without instantiating the class
    def mean(X):
        return sum(X) / float(len(X))

    # Population standard deviation (square root of the variance)
    def stdev(self, X):
        avg = self.mean(X)
        return math.sqrt(sum([pow(x - avg, 2) for x in X]) / float(len(X)))

    # Gaussian probability density function
    def gaussian_probability(self, x, mean, stdev):
        exponent = math.exp(-(math.pow(x - mean, 2) /
                              (2 * math.pow(stdev, 2))))
        return (1 / (math.sqrt(2 * math.pi) * stdev)) * exponent

    # Summarize X_train: one (mean, stdev) pair per feature column
    def summarize(self, train_data):
        summaries = [(self.mean(i), self.stdev(i)) for i in zip(*train_data)]
        return summaries

    # Compute the mean and standard deviation of each feature, per class
    def fit(self, X, y):
        labels = list(set(y))
        data = {label: [] for label in labels}
        for f, label in zip(X, y):
            data[label].append(f)
        self.model = {
            label: self.summarize(value)
            for label, value in data.items()
        }
        return 'gaussianNB train done!'

    # Compute the class-conditional likelihood of input_data for each class
    # (the class prior is not included, which amounts to assuming equal priors)
    def calculate_probabilities(self, input_data):
        # summaries: {0.0: [(5.0, 0.37), (3.42, 0.40)], 1.0: [(5.8, 0.449), (2.7, 0.27)]}
        # input_data: [1.1, 2.2]
        probabilities = {}
        for label, value in self.model.items():
            probabilities[label] = 1
            for i in range(len(value)):
                mean, stdev = value[i]
                probabilities[label] *= self.gaussian_probability(
                    input_data[i], mean, stdev)
        return probabilities

    # Predict the class with the largest likelihood
    def predict(self, X_test):
        # e.g. {0.0: 2.9680340789325763e-27, 1.0: 3.5749783019849535e-26}
        label = sorted(
            self.calculate_probabilities(X_test).items(),
            key=lambda x: x[-1])[-1][0]
        return label

    # Accuracy on the test set
    def score(self, X_test, y_test):
        right = 0
        for X, y in zip(X_test, y_test):
            label = self.predict(X)
            if label == y:
                right += 1
        return right / float(len(X_test))

model = NaiveBayes()  # instantiate the naive Bayes classifier
model.fit(X_train, y_train)  # compute per-class means and standard deviations
print(model.predict([4.4, 3.2, 1.3, 0.2]))  # 0.0
print(model.score(X_test, y_test))  # 1.0 in the original run (varies with the random split)

2.2.1 The @staticmethod decorator

See: Understanding the Python @staticmethod static method - 季布's blog, CSDN
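To make the idea concrete, here is a minimal sketch of how a static method behaves. The `Stats` class and its `centered` method are hypothetical names used only for illustration; `mean` mirrors the method of the same name in the NaiveBayes class.

```python
class Stats:
    """Hypothetical helper class, used only to illustrate @staticmethod."""

    @staticmethod
    def mean(values):
        # No `self` parameter: the method does not touch instance state
        return sum(values) / float(len(values))

    def centered(self, values):
        # A static method can still be reached through `self`
        avg = self.mean(values)
        return [v - avg for v in values]


print(Stats.mean([1, 2, 3]))        # called on the class, no instance needed
print(Stats().mean([1, 2, 3]))      # called on an instance, same result
print(Stats().centered([1, 2, 3]))  # instance method that uses the static one
```

This is why `stdev` in the NaiveBayes class can call `self.mean(X)` even though `mean` takes no `self` argument.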

2.2.2 Coding the probability statistics
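Before reading the methods listed in this section, a quick sanity check may help. The sketch below re-implements the mean, standard deviation and Gaussian density as standalone functions and compares them with Python's standard `statistics` module (Python 3.8 or later is assumed). The sample `values` are the first five sepal lengths from the data printed in section 2.1.

```python
import math
from statistics import NormalDist, fmean, pstdev

values = [5.1, 4.9, 4.7, 4.6, 5.0]  # first five sepal lengths from section 2.1

# Hand-written versions, mirroring the formulas used in this section
avg = sum(values) / float(len(values))
std = math.sqrt(sum((v - avg) ** 2 for v in values) / float(len(values)))


def gaussian_probability(x, mean, stdev):
    exponent = math.exp(-((x - mean) ** 2) / (2 * stdev ** 2))
    return (1 / (math.sqrt(2 * math.pi) * stdev)) * exponent


# Standard-library equivalents
assert math.isclose(avg, fmean(values))
assert math.isclose(std, pstdev(values))  # population form: divides by N, not N - 1
assert math.isclose(gaussian_probability(5.0, avg, std),
                    NormalDist(avg, std).pdf(5.0))
print(avg, std)
```

The comparison with `pstdev` shows that the hand-written `stdev` is the population standard deviation, not the variance and not the sample (N - 1) estimate.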

    # Mean (expected value)
    @staticmethod  # static method: callable without instantiating the class
    def mean(X):
        return sum(X) / float(len(X))

    # Population standard deviation (square root of the variance)
    def stdev(self, X):
        avg = self.mean(X)
        return math.sqrt(sum([pow(x - avg, 2) for x in X]) / float(len(X)))

    # Gaussian probability density function
    def gaussian_probability(self, x, mean, stdev):
        exponent = math.exp(-(math.pow(x - mean, 2) /
                              (2 * math.pow(stdev, 2))))
        return (1 / (math.sqrt(2 * math.pi) * stdev)) * exponent

    # Summarize X_train: one (mean, stdev) pair per feature column
    def summarize(self, train_data):
        summaries = [(self.mean(i), self.stdev(i)) for i in zip(*train_data)]
        return summaries

    # Compute the mean and standard deviation of each feature, per class
    def fit(self, X, y):
        labels = list(set(y))
        data = {label: [] for label in labels}
        for f, label in zip(X, y):
            data[label].append(f)
        self.model = {
            label: self.summarize(value)
            for label, value in data.items()
        }
        return 'gaussianNB train done!'

    # Compute the class-conditional likelihood of input_data for each class
    # (the class prior is not included, which amounts to assuming equal priors)
    def calculate_probabilities(self, input_data):
        # summaries: {0.0: [(5.0, 0.37), (3.42, 0.40)], 1.0: [(5.8, 0.449), (2.7, 0.27)]}
        # input_data: [1.1, 2.2]
        probabilities = {}
        for label, value in self.model.items():
            probabilities[label] = 1
            for i in range(len(value)):
                mean, stdev = value[i]
                probabilities[label] *= self.gaussian_probability(
                    input_data[i], mean, stdev)
        return probabilities

2.3 Gaussian naive Bayes with scikit-learn

scikit-learn provides this classifier as GaussianNB in sklearn.naive_bayes. The main code is as follows:

from sklearn.naive_bayes import GaussianNB

clf = GaussianNB()  # instantiate the Gaussian naive Bayes model
clf.fit(X_train, y_train)  # estimate per-class means and variances
print(clf.score(X_test, y_test))  # mean accuracy on the test set
print(clf.predict([[4.4, 3.2, 1.3, 0.2]]))  # [0.]

The complete script is the data-preparation code from section 2.1 followed by the lines above.
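After fitting, the estimated parameters can be inspected directly. The following is a minimal, self-contained sketch; `random_state=0` is an assumption added here so that the split is repeatable, and it is not part of the original script.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import GaussianNB

iris = load_iris()
X, y = iris.data[:100], iris.target[:100]  # same two classes as above

# random_state is an assumption added here so the split is repeatable
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0)

clf = GaussianNB()
clf.fit(X_train, y_train)

print(clf.classes_)      # class labels seen during fit
print(clf.class_prior_)  # estimated P(y) for each class
print(clf.theta_)        # per-class feature means, shape (2, 4)

proba = clf.predict_proba([[4.4, 3.2, 1.3, 0.2]])
print(proba)             # posterior probability of each class for one sample
```

Unlike the hand-written class in section 2.2, GaussianNB multiplies in the class prior (`class_prior_`) and returns normalized posteriors from `predict_proba`.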

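2.4 The Bernoulli and multinomial naive Bayes models

from sklearn.naive_bayes import BernoulliNB, MultinomialNB  # Bernoulli and multinomial models

Reference code: https://github.com/wzyonggege/statistical-learning-method

The code for this article is maintained at: https://github.com/fengdu78/lihang-code

Chinese annotations by: 机器学习初学者 (WeChat official account, ID: ai-start-com)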
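Section 2.4 only imports the two classes, so here is a minimal sketch of how they might be used. The toy word-count matrix `X` and labels `y` are hypothetical and exist only for illustration.

```python
import numpy as np
from sklearn.naive_bayes import BernoulliNB, MultinomialNB

# Hypothetical toy data: rows are documents, columns are counts of three words
X = np.array([
    [3, 0, 1],
    [2, 0, 0],
    [0, 3, 1],
    [0, 2, 2],
])
y = np.array([0, 0, 1, 1])

# MultinomialNB models the counts themselves
mnb = MultinomialNB()
mnb.fit(X, y)
print(mnb.predict([[2, 0, 0], [0, 3, 0]]))

# BernoulliNB models presence/absence; binarize=0.0 maps counts > 0 to 1
bnb = BernoulliNB(binarize=0.0)
bnb.fit(X, y)
print(bnb.predict([[1, 0, 0], [0, 1, 1]]))
```

As a rule of thumb, MultinomialNB suits count features such as word frequencies, BernoulliNB suits binary features, and GaussianNB suits continuous features such as the iris measurements.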

3. Further reading

The following articles go into more depth:

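A summary of using the scikit-learn naive Bayes library - 刘建平Pinard - 博客园 (cnblogs.com)

The naive Bayes classification algorithm [sklearn.naive_bayes/GaussianNB/MultinomialNB/BernoulliNB] - Doris_H_n_q's blog - CSDN

Understanding the 5 Bayes algorithms in Python libraries in one article - 知乎 (zhihu.com)

A summary of hyperparameter tuning, with Bayesian optimization Python code examples - 知乎 (zhihu.com)

Bayesian hyperparameter optimization (with Python code, scikit-optimize) - 知乎 (zhihu.com)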