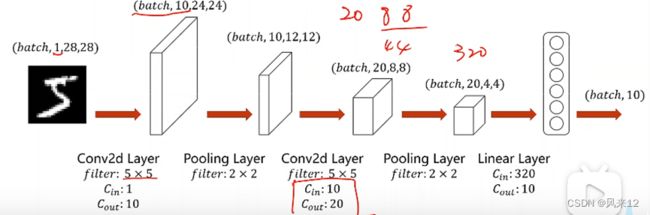

A Simple Convolutional Neural Network

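I. How an Image Is Formed

Light from a region of the scene passes through a lens and converges into a cone, and that light is sensed by a photoresistor. Placing many photoresistors side by side produces an image. Each photoresistor yields one pixel, so two of them give a 2-pixel image.

For color images the sensor has to be modified: green, red, and blue sensors are grouped together, so each final pixel value comes from three different photosensitive elements.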

II. Convolution

Convolution on a single channel

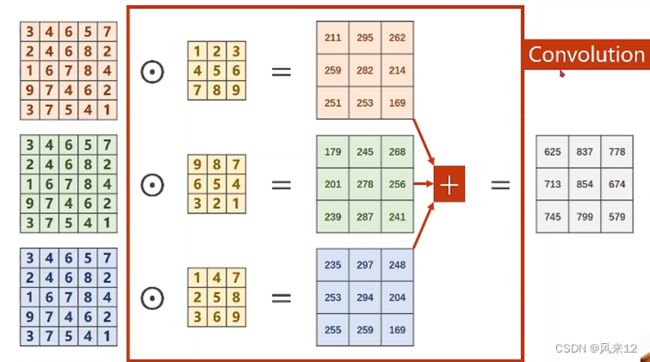

Convolution on multiple channels, using 3 channels as an example

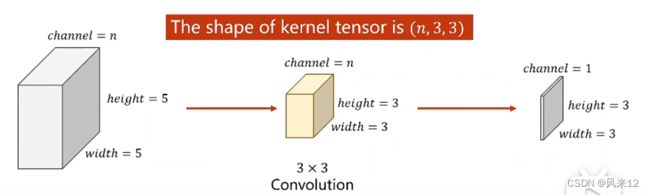

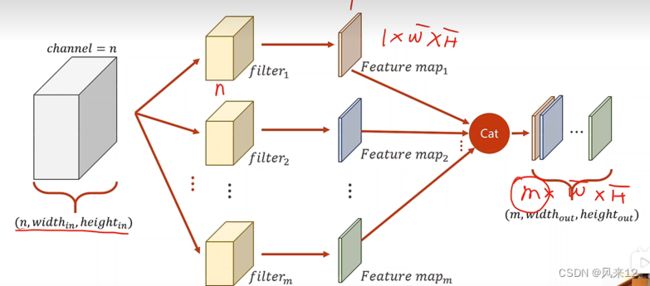

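A convolution layer's weights are determined by four numbers: the number of input channels, the number of output channels, the kernel width, and the kernel height.

Here the number of output channels equals the number of kernels (filters), and the number of input channels equals the number of channels in the previous layer.

Code example: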

import torch

in_channels, out_channels = 5, 10
width, height = 100, 100
kernel_size = 3
batch_size = 1

# One sample (batch size 1) with 5 input channels and a 100x100 image; layout is (B, C, H, W)
input = torch.randn(batch_size, in_channels, height, width)
conv_layer = torch.nn.Conv2d(in_channels, out_channels, kernel_size=kernel_size)
output = conv_layer(input)

print(input.shape)
print(output.shape)
print(conv_layer.weight.shape)

Output: torch.Size([1, 5, 100, 100])

torch.Size([1, 10, 98, 98])

torch.Size([10, 5, 3, 3])

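Here 1 is the number of samples (the batch size). The kernel weight has 10 output channels, 5 input channels, and a spatial size of 3x3.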
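The 98 in the output shape follows from the usual output-size formula for a convolution, floor((n + 2p - k) / s) + 1, and the weight shape gives the parameter count directly. The sketch below is plain Python rather than PyTorch, and `conv_output_size` and `conv_weight_count` are hypothetical helper names introduced here only for illustration. It assumes a square kernel and no dilation.

```python
def conv_output_size(n, kernel_size, padding=0, stride=1):
    """Spatial size after a convolution along one dimension (no dilation)."""
    return (n + 2 * padding - kernel_size) // stride + 1


def conv_weight_count(in_channels, out_channels, kernel_size):
    """Number of weights in a square-kernel conv layer, excluding biases."""
    return out_channels * in_channels * kernel_size * kernel_size


size = conv_output_size(100, 3)        # 100 - 3 + 1
weights = conv_weight_count(5, 10, 3)  # 10 * 5 * 3 * 3
print(size, weights)
```

With the values from the example above this gives a 98x98 output and 450 weights, which matches the printed shapes [1, 10, 98, 98] and [10, 5, 3, 3].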

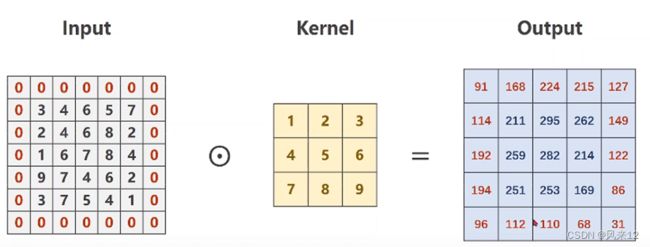

III. Padding

The border of the input is filled with zeros.

Code implementation:

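import torch

input = [3, 4, 6, 5, 7,
         2, 4, 6, 8, 2,
         1, 6, 7, 8, 4,
         9, 7, 4, 6, 2,
         3, 7, 5, 4, 1]

# Reshape to (B, C, H, W): batch, channels, height, width
input = torch.Tensor(input).view(1, 1, 5, 5)
# padding=1 adds a one-pixel border of zeros, so the output stays 5x5
conv_layer = torch.nn.Conv2d(1, 1, kernel_size=3, padding=1, bias=False)
# Set the 3x3 kernel by hand; the weight layout is (out_channels, in_channels, H, W)
kernel = torch.Tensor([1, 2, 3, 4, 5, 6, 7, 8, 9]).view(1, 1, 3, 3)
conv_layer.weight.data = kernel.data
output = conv_layer(input)
print(output)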
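To see what the padded convolution computes, the same arithmetic can be written out by hand. This is a minimal sketch in plain Python, not the PyTorch implementation; `conv2d_zero_pad` is a hypothetical helper name. Like `Conv2d`, it slides the kernel over the image without flipping it (a cross-correlation).

```python
def conv2d_zero_pad(image, kernel, padding=1):
    """Cross-correlate a 2D list with a square kernel after zero padding."""
    h, w = len(image), len(image[0])
    k = len(kernel)
    # Build the padded image: a border of zeros around the original values.
    padded = [[0] * (w + 2 * padding) for _ in range(h + 2 * padding)]
    for i in range(h):
        for j in range(w):
            padded[i + padding][j + padding] = image[i][j]
    out_h = h + 2 * padding - k + 1
    out_w = w + 2 * padding - k + 1
    out = [[0] * out_w for _ in range(out_h)]
    for i in range(out_h):
        for j in range(out_w):
            # Multiply the kernel element-wise with the window and sum.
            out[i][j] = sum(
                kernel[a][b] * padded[i + a][j + b]
                for a in range(k)
                for b in range(k)
            )
    return out


image = [
    [3, 4, 6, 5, 7],
    [2, 4, 6, 8, 2],
    [1, 6, 7, 8, 4],
    [9, 7, 4, 6, 2],
    [3, 7, 5, 4, 1],
]
kernel = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]

result = conv2d_zero_pad(image, kernel, padding=1)
print(len(result), len(result[0]))  # output height and width
print(result[0][0], result[4][4])   # top-left and bottom-right corners
```

The top-left output is 5*3 + 6*4 + 8*2 + 9*4 = 91, because the other five kernel positions fall on the zero border. The output stays 5x5, the same size as the input.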

IV. Max Pooling Layer
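Max pooling keeps only the largest value in each window. With a 2x2 window and a stride equal to the window size, the width and height are halved while the number of channels stays the same. The following is a small plain-Python sketch for a single channel; `max_pool2d` is a hypothetical helper name and the 4x4 values are made up for illustration.

```python
def max_pool2d(image, size=2):
    """Non-overlapping max pooling over a 2D list (stride equals window size)."""
    h, w = len(image), len(image[0])
    return [
        [
            max(image[i + a][j + b] for a in range(size) for b in range(size))
            for j in range(0, w - size + 1, size)
        ]
        for i in range(0, h - size + 1, size)
    ]


feature_map = [
    [3, 4, 6, 5],
    [2, 4, 6, 8],
    [1, 6, 7, 8],
    [9, 7, 4, 6],
]
pooled = max_pool2d(feature_map, size=2)
print(pooled)  # [[4, 8], [9, 8]]
```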

- A simple convolutional neural network

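The batch size, number of channels, width, and height define the shape of the tensor that flows through each convolutional layer.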

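For each sample, the (batch, 20, 4, 4) feature map is flattened into a single row of 320 values, and a linear transform then maps that row to 10 outputs.

Recognizing handwritten digits with a convolutional neural network: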
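The 4x4 feature map and the 320 flattened values can be checked by tracing the spatial size layer by layer. This sketch assumes 28x28 MNIST inputs, two unpadded 5x5 convolutions, and a 2x2 max pooling window after each one. The 2x2 window is an assumption, chosen because it is the value that produces the 4x4 map. `conv_size` and `pool_size` are hypothetical helper names.

```python
def conv_size(n, kernel_size):
    """Output size of an unpadded, stride-1 convolution."""
    return n - kernel_size + 1


def pool_size(n, window):
    """Output size of non-overlapping max pooling."""
    return n // window


side = 28                      # MNIST images are 28x28 with 1 channel
side = conv_size(side, 5)      # first convolution: 1 -> 10 channels
after_conv1 = side
side = pool_size(side, 2)
after_pool1 = side
side = conv_size(side, 5)      # second convolution: 10 -> 20 channels
after_conv2 = side
side = pool_size(side, 2)
after_pool2 = side

flat_features = 20 * side * side  # channels * height * width
print(after_conv1, after_pool1, after_conv2, after_pool2, flat_features)
```

Under these assumptions the sizes go 28, 24, 12, 8, 4, and 20 * 4 * 4 = 320 is the input width of the final linear layer.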

import torch

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim

batch_size=64

# Convert each image to a tensor with ToTensor, then normalize it with the MNIST mean and standard deviation
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,)),
])

train_dataset=datasets.MNIST(root='../data/mnist',train=True,download=True,transform=transform)

train_loader= DataLoader(train_dataset,shuffle=True,batch_size=batch_size)

test_dataset=datasets.MNIST(root='../data/mnist',train=False,download=True,transform=transform)

test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)
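As a rough illustration of the transform: `ToTensor` scales pixel values to the range [0, 1], and `Normalize` then computes (x - mean) / std for each pixel. The plain-Python sketch below applies that formula to a single value, using 0.1307 and 0.3081, the commonly quoted mean and standard deviation of the MNIST training pixels. `normalize` is a hypothetical helper, not the torchvision function.

```python
MNIST_MEAN, MNIST_STD = 0.1307, 0.3081


def normalize(pixel, mean=MNIST_MEAN, std=MNIST_STD):
    """Standardize one pixel value that is already scaled to [0, 1]."""
    return (pixel - mean) / std


black = normalize(0.0)  # darkest possible pixel
white = normalize(1.0)  # brightest possible pixel
print(round(black, 4), round(white, 4))
```

After normalization the background pixels sit slightly below zero and the bright strokes well above it, so the inputs are roughly centered, which generally helps gradient-based training.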

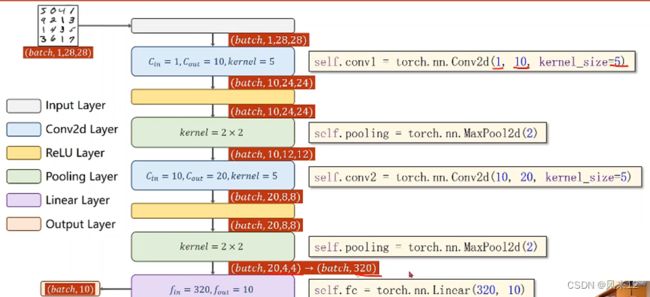

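class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = torch.nn.Conv2d(10, 20, kernel_size=5)
        # 2x2 max pooling halves the width and height
        self.pooling = torch.nn.MaxPool2d(2)
        # 20 channels * 4 * 4 = 320 features after the second pooling
        self.fc = torch.nn.Linear(320, 10)

    def forward(self, x):
        batch_size = x.size(0)
        x = F.relu(self.pooling(self.conv1(x)))  # (B, 1, 28, 28) -> (B, 10, 12, 12)
        x = F.relu(self.pooling(self.conv2(x)))  # (B, 10, 12, 12) -> (B, 20, 4, 4)
        x = x.view(batch_size, -1)  # flatten to (B, 320) for the fully connected layer
        x = self.fc(x)  # raw class scores; no softmax here
        return x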

model=Net()

criterion = torch.nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)


def train(epoch):
    running_loss = 0.0
    for batch_idx, data in enumerate(train_loader, 0):
        inputs, target = data
        optimizer.zero_grad()

        # forward + backward + update
        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        if batch_idx % 300 == 299:
            # Report the average loss over the last 300 batches, then reset the accumulator
            print('[%d,%5d] loss:%.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))
            running_loss = 0.0

def test():
    correct = 0
    total = 0
    with torch.no_grad():  # no gradients are needed for evaluation
        for data in test_loader:
            images, labels = data
            outputs = model(images)
            # The index of the largest score along dim=1 is the predicted class
            _, predicted = torch.max(outputs.data, dim=1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('Accuracy on test set:%d %%' % (100 * correct / total))

if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test()
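The network returns raw scores without a softmax layer because `torch.nn.CrossEntropyLoss` applies log-softmax internally, and the test loop only needs the index of the largest score. The plain-Python sketch below shows both computations for a single sample. `cross_entropy` and `predict` are hypothetical helpers, and the scores are made-up numbers.

```python
import math


def cross_entropy(logits, target):
    """Cross-entropy for one sample: -log(softmax(logits)[target])."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


def predict(logits):
    """Index of the largest score, i.e. the predicted class."""
    return max(range(len(logits)), key=lambda i: logits[i])


scores = [1.0, 3.0, 0.5]
print(predict(scores))  # class 1 has the largest score
print(cross_entropy(scores, target=1), cross_entropy(scores, target=2))
```

The loss is small when the target is the class with the largest score and larger otherwise. By default, `CrossEntropyLoss` averages this per-sample value over the batch.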