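PyTorch Deep Learning Starter Code (3): Implementing Logistic Regression

"""Logistic regression implemented in PyTorch."""

import matplotlib.pyplot as plt
import numpy as np
import torch
import torch.nn as nn
# Since PyTorch 0.4, Variable simply returns a Tensor; plain tensors work as well.
from torch.autograd import Variable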

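class LogisticRegression(nn.Module):
    """A linear layer followed by a sigmoid: 2 input features -> 1 probability."""

    def __init__(self):
        super(LogisticRegression, self).__init__()
        self.lr = nn.Linear(2, 1)  # maps the 2 features to a single logit
        self.sm = nn.Sigmoid()     # squashes the logit into (0, 1)

    def forward(self, x):
        x = self.lr(x)
        x = self.sm(x)
        return x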

if __name__ == '__main__':
    # Each non-empty line of data.txt is "feature_1,feature_2,label".
    with open('data.txt', 'r', encoding='utf8') as f:
        data_list = f.readlines()
    data_list = [i.strip() for i in data_list if i.strip()]  # skip blank lines
    data_list = [i.split(',') for i in data_list]
    data = [(float(i[0]), float(i[1]), float(i[2])) for i in data_list]
    data = torch.tensor(data, dtype=torch.float32)

    logistic_model = LogisticRegression()
    if torch.cuda.is_available():
        logistic_model.cuda()

    criterion = nn.BCELoss()
    optimizer = torch.optim.SGD(logistic_model.parameters(), lr=1e-3, momentum=0.9)

    for epoch in range(10000):
        # Columns 0-1 are the features; column 2 is the label, reshaped to (N, 1).
        if torch.cuda.is_available():
            x = Variable(data[:, 0:2]).cuda()
            y = Variable(data[:, 2]).cuda().unsqueeze(1)
        else:
            x = Variable(data[:, 0:2])
            y = Variable(data[:, 2]).unsqueeze(1)

        # forward
        out = logistic_model(x)
        loss = criterion(out, y)
        print_loss = loss.item()
        mask = out.ge(0.5).float()  # predict class 1 when the probability is >= 0.5
        correct = (mask == y).sum()
        acc = correct.item() / x.size(0)

        # backward
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        if (epoch + 1) % 1000 == 0:
            print('*' * 10)
            print('epoch {}'.format(epoch + 1))
            print('loss is {:.4f}'.format(print_loss))
            print('acc is {:.4f}'.format(acc))

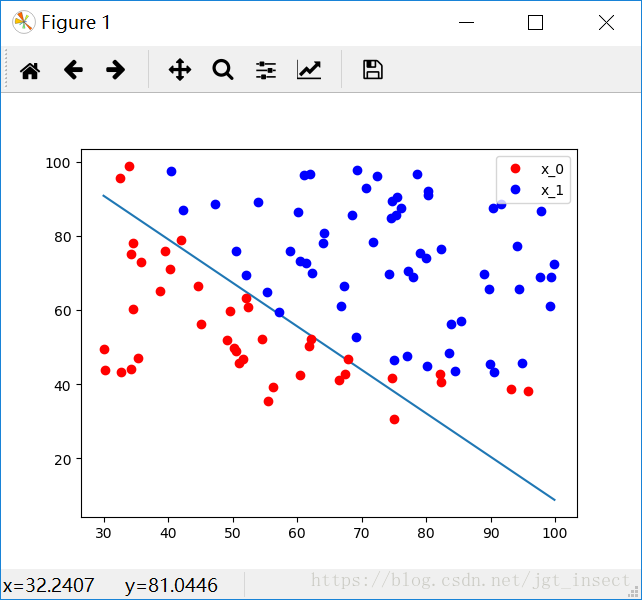
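The loss and accuracy printed by the training loop can be reproduced by hand. The snippet below is a minimal sketch, not part of the original script: the values of `out` and `y` are made up for illustration, and it only checks that `nn.BCELoss` matches the written-out binary cross-entropy formula and that thresholding at 0.5 gives the accuracy.

```python
import torch
import torch.nn as nn

# Hypothetical sigmoid outputs and 0/1 labels, shaped (N, 1) like `out` and `y` in the loop.
out = torch.tensor([[0.9], [0.2], [0.6], [0.4]])
y = torch.tensor([[1.0], [0.0], [0.0], [1.0]])

# Binary cross-entropy written out: -mean(y * log(p) + (1 - y) * log(1 - p)).
manual_loss = -(y * torch.log(out) + (1 - y) * torch.log(1 - out)).mean()
builtin_loss = nn.BCELoss()(out, y)

# Accuracy: threshold the probabilities at 0.5, then compare with the labels.
pred = out.ge(0.5).float()
acc = (pred == y).float().mean().item()

print(manual_loss.item(), builtin_loss.item(), acc)
```

The two loss values should agree up to floating-point error, which is a quick way to confirm what `criterion(out, y)` is computing.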

    # Plotting (this code continues inside the `if __name__ == '__main__':` block).
    w0, w1 = logistic_model.lr.weight[0]
    w0 = w0.item()
    w1 = w1.item()
    b = logistic_model.lr.bias.item()

    # Decision boundary: w0 * x + w1 * y + b = 0, solved for y.
    plot_x = np.arange(30, 100, 0.1)
    plot_y = (-w0 * plot_x - b) / w1
    plt.plot(plot_x, plot_y)

    # Split the samples by label (last column) and plot each class.
    x0 = list(filter(lambda x: x[-1] == 0.0, data))
    x1 = list(filter(lambda x: x[-1] == 1.0, data))
    plot_x0_0 = [i[0].item() for i in x0]
    plot_x0_1 = [i[1].item() for i in x0]
    plot_x1_0 = [i[0].item() for i in x1]
    plot_x1_1 = [i[1].item() for i in x1]
    plt.plot(plot_x0_0, plot_x0_1, 'ro', label='x_0')
    plt.plot(plot_x1_0, plot_x1_1, 'bo', label='x_1')
    plt.legend()
    plt.show()
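The plotted line comes from setting the logit to zero: the model outputs 0.5 exactly where w0 * x0 + w1 * x1 + b = 0, so x1 = (-w0 * x0 - b) / w1. The snippet below is a small sketch of that relationship. The parameter values are hypothetical stand-ins rather than trained weights, and `boundary_x1` is a helper name introduced here for illustration.

```python
import torch

# Hypothetical parameters standing in for the trained w0, w1 and b.
w0, w1, b = 0.05, 0.04, -6.0


def boundary_x1(x0):
    """Return the second feature on the decision boundary for a given first feature."""
    return (-w0 * x0 - b) / w1


x0 = torch.tensor([30.0, 60.0, 90.0])
x1 = boundary_x1(x0)

# On the boundary the logit is (numerically close to) zero, so the sigmoid is about 0.5.
logit = w0 * x0 + w1 * x1 + b
prob = torch.sigmoid(logit)
print(prob)
```

Points on one side of the line get a probability above 0.5 and are classified as 1; points on the other side are classified as 0.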

A small sample of the test data

34.62365962451697,78.0246928153624,0

30.2867107622687,43.89499752400101,0

35.84740876993872,72.90219802708364,0

60.18259938620976,86.3855209546826,1

79.0327360507101,75.3443764369103,1

45.08327747668339,56.3163717815305,0

61.10666453684766,96.51142588489624,1

75.02474556738889,46.55401354116538,1

76.09878670226257,87.42056971926803,1

84.43281996120035,43.53339331072109,1

95.86155507093572,38.22527805795094,0

75.01365838958247,30.60326323428011,0

82.30705337399482,76.48196330235604,1

69.36458875970939,97.71869196188608,1

39.53833914367223,76.03681085115882,0

53.9710521485623,89.20735013750265,1

69.07014406283025,52.74046973016765,1

67.9468554771161746,67.857410673128,0

Attached: the full training set of 100 samples

https://download.csdn.net/download/jgt_insect/10575505
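As an alternative to parsing the file line by line, `np.loadtxt` can read comma-separated rows directly. This is only a sketch under assumptions: it reads three rows copied from the sample above through an in-memory `io.StringIO` buffer so that it runs without `data.txt`; with the real file you would pass the path instead.

```python
import io

import numpy as np
import torch

# Three rows copied from the sample data; replace `sample` with 'data.txt' for the real file.
sample = io.StringIO(
    "34.62365962451697,78.0246928153624,0\n"
    "60.18259938620976,86.3855209546826,1\n"
    "45.08327747668339,56.3163717815305,0\n"
)

arr = np.loadtxt(sample, delimiter=',')  # float64 array of shape (3, 3)
data = torch.tensor(arr, dtype=torch.float32)

x = data[:, 0:2]             # features, shape (3, 2)
y = data[:, 2].unsqueeze(1)  # labels, shape (3, 1)
print(x.shape, y.shape)
```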