Python机器学习11——支持向量机

本系列所有的代码和数据都可以从陈强老师的个人主页上下载:Python数据程序

参考书目:陈强.机器学习及Python应用. 北京:高等教育出版社, 2021.

本系列基本不讲数学原理,只从代码角度去让读者们利用最简洁的Python代码实现机器学习方法。

前面的决策树,随机森林,梯度提升都是属于树模型,而支持向量机被称为核方法。其主要是依赖核函数将数据映射到高维空间进行分离。支持向量机适合用于变量越多越好的问题,因此在神经网络之前,它对于文本和图片领域都算效果还不错的方法。学术界偏爱支持向量机是因为它具有非常严格和漂亮的数学证明过程。支持向量机可以分类也可以回归,但一般用于分类问题更好。

支持向量机二分类Python案例

采用垃圾邮件的数据集,导入包读取数据:

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

from sklearn.preprocessing import StandardScaler

from sklearn.model_selection import train_test_split

from sklearn.model_selection import KFold, StratifiedKFold

from sklearn.model_selection import GridSearchCV

from sklearn.metrics import plot_confusion_matrix

from sklearn.svm import SVC

from sklearn.svm import SVR

#from sklearn.svm import LinearSVC

from sklearn.datasets import load_boston

from sklearn.datasets import load_digits

from sklearn.datasets import make_blobs

from mlxtend.plotting import plot_decision_regions

spam = pd.read_csv('spam.csv')

spam.shape

spam.head(3)数据长这样

取出X和y,划分训练测试集,将数据标准化

X = spam.iloc[:, :-1]

y = spam.iloc[:, -1]

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=1000, stratify=y, random_state=0)

scaler = StandardScaler()

scaler.fit(X_train)

X_train_s = scaler.transform(X_train)

X_test_s = scaler.transform(X_test)分别采用不同的核函数进行支持向量机的估计

#线性核函数

model = SVC(kernel="linear", random_state=123)

model.fit(X_train_s, y_train)

model.score(X_test_s, y_test)

#二次多项式核

model = SVC(kernel="poly", degree=2, random_state=123)

model.fit(X_train_s, y_train)

model.score(X_test_s, y_test)

#三次多项式

model = SVC(kernel="poly", degree=3, random_state=123)

model.fit(X_train_s, y_train)

model.score(X_test_s, y_test)

#径向核

model = SVC(kernel="rbf", random_state=123)

model.fit(X_train_s, y_train)

model.score(X_test_s, y_test)

#S核

model = SVC(kernel="sigmoid",random_state=123)

model.fit(X_train_s, y_train)

model.score(X_test_s, y_test)

一般来说,径向核效果比较好,网格化搜索最优超参数

param_grid = {'C': [0.1, 1, 10], 'gamma': [0.01, 0.1, 1]}

kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=1)

model = GridSearchCV(SVC(kernel="rbf", random_state=123), param_grid, cv=kfold)

model.fit(X_train_s, y_train)

model.best_params_

model.score(X_test_s, y_test)

pred = model.predict(X_test)

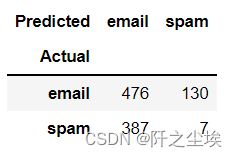

pd.crosstab(y_test, pred, rownames=['Actual'], colnames=['Predicted'])

预测得到混淆矩阵

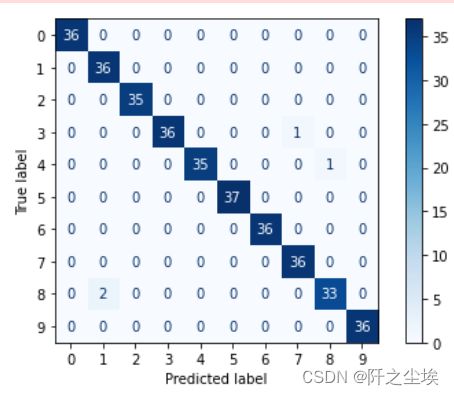

支持向量机多分类Python案例

使用sklearn库自带的手写数字的案例,采用支持向量机分类

digits = load_digits()

dir(digits)

#图片数据(三维)

digits.images.shape

#拉成二维

digits.data.shape

#y的形状

digits.target.shape

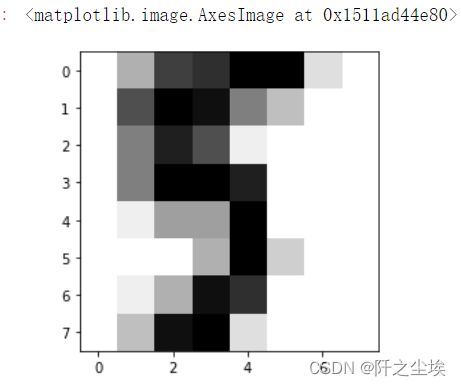

#查看第十五个图片

plt.imshow(digits.images[15], cmap=plt.cm.gray_r)

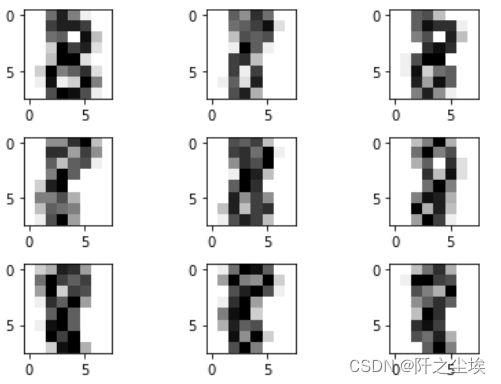

打印数字为8 的图片展示

images_8 = digits.images[digits.target==8]

for i in range(1, 10):

plt.subplot(3, 3, i)

plt.imshow(images_8[i-1], cmap=plt.cm.gray_r)

plt.tight_layout()取出X和y,进行支持向量机分类

X = digits.data

y = digits.target

X_train, X_test, y_train, y_test = train_test_split(X, y, stratify=y, test_size=0.2, random_state=0)

model = SVC(kernel="linear", random_state=123)

model.fit(X_train, y_train)

model.score(X_test, y_test)

model = SVC(kernel="poly", degree=2, random_state=123)

model.fit(X_train, y_train)

model.score(X_test, y_test)

model = SVC(kernel="poly", degree=3, random_state=123)

model.fit(X_train, y_train)

model.score(X_test, y_test)

model = SVC(kernel='rbf', random_state=123)

model.fit(X_train, y_train)

model.score(X_test, y_test)

model = SVC(kernel="sigmoid",random_state=123)

model.fit(X_train, y_train)

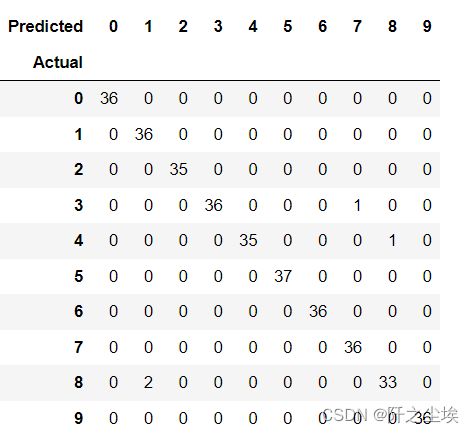

model.score(X_test, y_test)网格化搜索最优超参数,预测得到混淆矩阵

param_grid = {'C': [0.001, 0.01, 0.1, 1, 10], 'gamma': [0.001, 0.01, 0.1, 1, 10]}

kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=1)

model = GridSearchCV(SVC(kernel='rbf',random_state=123), param_grid, cv=kfold)

model.fit(X_train, y_train)

model.best_params_

model.score(X_test, y_test)

pred = model.predict(X_test)

pd.crosstab(y_test, pred, rownames=['Actual'], colnames=['Predicted'])

画热力图

plot_confusion_matrix(model, X_test, y_test,cmap='Blues')

plt.tight_layout()

支持向量机回归Python案例

依旧采用波士顿房价数据集进行回归

# Support Vector Regression with Boston Housing Data

X, y = load_boston(return_X_y=True)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=1)

scaler = StandardScaler()

scaler.fit(X_train)

X_train_s = scaler.transform(X_train)

X_test_s = scaler.transform(X_test)

# Radial Kernel

model = SVR(kernel='rbf')

model.fit(X_train_s, y_train)

model.score(X_test_s, y_test)

param_grid = {'C': [0.01, 0.1, 1, 10, 50, 100, 150], 'epsilon': [0.01, 0.1, 1, 10], 'gamma': [0.01, 0.1, 1, 10]}

kfold = KFold(n_splits=10, shuffle=True, random_state=1)

model = GridSearchCV(SVR(), param_grid, cv=kfold)

model.fit(X_train_s, y_train)

model.best_params_

model = model.best_estimator_

len(model.support_)

model.support_vectors_

model.score(X_test_s, y_test)

# Comparison with Linear Regression

from sklearn.linear_model import LinearRegression

model = LinearRegression()

model.fit(X_train, y_train)

model.score(X_test, y_test)