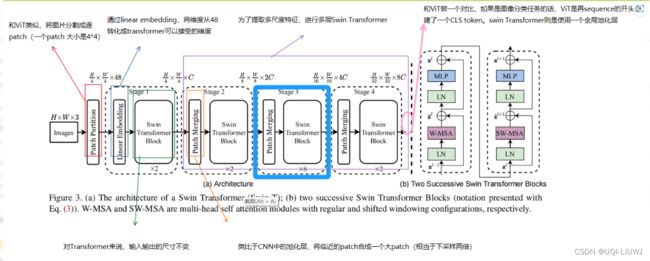

PyTorch notes: Swin Transformer code

Theory: Paper notes: Swin Transformer: Hierarchical Vision Transformer using Shifted Windows (UQI-LIUWJ's blog, CSDN)

Source code: Swin-Transformer/models at main · microsoft/Swin-Transformer (github.com)

- Input image size: Batch_size*C*H*W
- Feed into SwinTransformer
  - PatchEmbedding [Parameter]
    - Each patch is embedded separately
    - Batch_size*C*H*W -> Batch_size, Patch_H*Patch_W, emb_dim
    - (Only when ape=True) add an absolute position embedding of size 1, Patch_H*Patch_W, emb_dim to every image
  - Feed into BasicLayer
    - Several SwinTransformerBlocks
      - Odd-indexed blocks use shifted-window attention, even-indexed blocks (counting from 0) use window attention
      - Internal implementation
        - (If needed) shift the windows with torch.roll
        - (If needed) build the attention mask from a Patch_H*Patch_W label map (shifted-window case only)
        - window_partition
          - Switch from the patch view to the window view
          - Batch_size, Patch_H*Patch_W, emb_dim -> Batch_size*num_window, window_size, window_size, emb_dim
        - window_attention
          - Batch_size*num_window, window_size*window_size, emb_dim -> Batch_size*num_window, window_size*window_size, emb_dim
          - Relative position bias (Parameter) is added to QK^T
            - Bias table: ((2*window_size-1)*(2*window_size-1), head)
            - Relative position index matrix between every pair of points in the window: (window_size*window_size, window_size*window_size)
            - Looked-up bias: (head, window_size*window_size, window_size*window_size)
        - window_reverse
          - Switch from the window view back to the patch view
      - Batch_size, Patch_H*Patch_W, emb_dim -> Batch_size, Patch_H*Patch_W, emb_dim
    - (Every stage except the last) PatchMerging
      - Batch_size, Patch_H*Patch_W, emb_dim -> Batch_size, Patch_H/2*Patch_W/2, emb_dim*2
  - Result: Batch_size, L, dim
  - For every image, average-pool each dim over its L patches -> Batch_size, dim
  - Fully connected layer for classification -> Batch_size, num_class
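The shape changes in the outline can be traced numerically. The following is a minimal sketch in plain Python (no PyTorch needed), assuming the default configuration of the constructor discussed in this article (img_size=224, patch_size=4, embed_dim=96, four stages). `swin_stage_shapes` is a hypothetical helper written for illustration, not a function from the repository.

```python
def swin_stage_shapes(img_size=224, patch_size=4, embed_dim=96, depths=(2, 2, 6, 2)):
    """Return (name, num_tokens, channels) after patch embedding and after each stage."""
    res = img_size // patch_size          # patches per side after PatchEmbed
    dim = embed_dim
    shapes = [("patch_embed", res * res, dim)]
    for i, _ in enumerate(depths):
        if i < len(depths) - 1:           # PatchMerging runs in every stage but the last
            res //= 2                     # resolution halves
            dim *= 2                      # channels double
        shapes.append((f"stage{i + 1}", res * res, dim))
    return shapes


for name, tokens, channels in swin_stage_shapes():
    print(f"{name:12s} tokens={tokens:5d} channels={channels}")
```

The last entry has 768 channels, which matches `num_features = embed_dim * 2 ** (num_layers - 1)` in the constructor.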

1 class SwinTransformer

class SwinTransformer(nn.Module):

1.1 init inputs

1.1.1 Main input parameters

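| Parameter | Description |
| --- | --- |
| img_size | Input image size (int or tuple of ints); default 224 |
| patch_size | Patch size (int or tuple of ints); default 4 |
| in_chans | Number of input image channels; default 3 |
| num_classes | Number of classification classes; default 1000 |
| embed_dim | Patch embedding dimension; default 96 |
| depths | Depth (number of blocks) of each Swin Transformer stage (tuple of ints) |
| num_heads | Number of attention heads in each Swin Transformer stage (tuple of ints) |
| window_size | Window size (attention is computed among the points inside one window) |
| mlp_ratio | Ratio of the MLP hidden dimension to the embedding dimension |
| qkv_bias | Whether the Q, K, V projections have a bias |
| drop_rate | Dropout rate |
| attn_drop_rate | Dropout rate of the attention weights |
| drop_path_rate | Stochastic depth rate p |
| norm_layer | Which normalization layer to use |
| ape | Whether to add an absolute positional encoding |
| patch_norm | Whether to apply normalization after patch embedding |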
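How `depths` and `drop_path_rate` interact is easier to see with numbers. The sketch below reproduces the linear stochastic depth schedule and its per-stage slicing in plain Python. The `linspace` helper is a standard-library stand-in for `torch.linspace`, written here for illustration only; the values of `depths` and `drop_path_rate` are the constructor defaults.

```python
def linspace(start, stop, n):
    """Evenly spaced values from start to stop inclusive (stand-in for torch.linspace)."""
    if n == 1:
        return [start]
    step = (stop - start) / (n - 1)
    return [start + i * step for i in range(n)]


depths = [2, 2, 6, 2]
drop_path_rate = 0.1

# One drop-path probability per block, growing linearly with depth.
dpr = linspace(0.0, drop_path_rate, sum(depths))

# Each stage receives the slice that belongs to its own blocks.
per_stage = [dpr[sum(depths[:i]):sum(depths[:i + 1])] for i in range(len(depths))]

for i, rates in enumerate(per_stage):
    print(f"stage {i}: {[round(r, 4) for r in rates]}")
```

The first block is never dropped (rate 0) and the last block uses the full `drop_path_rate`.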

1.1.2 Code (init)

def __init__(self, img_size=224, patch_size=4, in_chans=3, num_classes=1000,
             embed_dim=96, depths=[2, 2, 6, 2], num_heads=[3, 6, 12, 24],
             window_size=7, mlp_ratio=4., qkv_bias=True, qk_scale=None,
             drop_rate=0., attn_drop_rate=0., drop_path_rate=0.1,
             norm_layer=nn.LayerNorm, ape=False, patch_norm=True,
             use_checkpoint=False, fused_window_process=False, **kwargs):
    super().__init__()

    self.num_classes = num_classes
    self.num_layers = len(depths)
    self.embed_dim = embed_dim
    self.ape = ape
    self.patch_norm = patch_norm
    self.num_features = int(embed_dim * 2 ** (self.num_layers - 1))
    self.mlp_ratio = mlp_ratio

    ########## module that turns the pixel-level image into a patch-level image ##########
    # split image into non-overlapping patches
    self.patch_embed = PatchEmbed(
        img_size=img_size,
        patch_size=patch_size,
        in_chans=in_chans,
        embed_dim=embed_dim,
        norm_layer=norm_layer if self.patch_norm else None)
    # converts the image into individual patches

    num_patches = self.patch_embed.num_patches
    # number of patches in one image

    patches_resolution = self.patch_embed.patches_resolution
    # resolution of one image measured in patches
    self.patches_resolution = patches_resolution
    #############################################################################

    ########################### absolute position encoding ######################
    # absolute position embedding
    if self.ape:
        self.absolute_pos_embed = nn.Parameter(torch.zeros(1, num_patches, embed_dim))
        trunc_normal_(self.absolute_pos_embed, std=.02)
        # shape 1*num_patches*embed_dim: each embed_dim-sized vector is the absolute position embedding of one patch
    #############################################################################

    self.pos_drop = nn.Dropout(p=drop_rate)

    # stochastic depth
    dpr = [x.item() for x in torch.linspace(0, drop_path_rate, sum(depths))]  # stochastic depth decay rule
    # the deeper the block, the larger the stochastic depth p (probability of skipping that block)

    ############################### build the Swin Transformer ##################
    # build layers
    self.layers = nn.ModuleList()
    for i_layer in range(self.num_layers):
        layer = BasicLayer(dim=int(embed_dim * 2 ** i_layer),
                           input_resolution=(patches_resolution[0] // (2 ** i_layer),
                                             patches_resolution[1] // (2 ** i_layer)),
                           # with every stage the channel dimension doubles and the resolution halves
                           depth=depths[i_layer],
                           # each stage has its own number of blocks
                           num_heads=num_heads[i_layer],
                           # each stage has its own number of attention heads
                           window_size=window_size,
                           mlp_ratio=self.mlp_ratio,
                           qkv_bias=qkv_bias,
                           qk_scale=qk_scale,
                           drop=drop_rate,
                           attn_drop=attn_drop_rate,
                           drop_path=dpr[sum(depths[:i_layer]):sum(depths[:i_layer + 1])],
                           norm_layer=norm_layer,
                           downsample=PatchMerging if (i_layer < self.num_layers - 1) else None,
                           # every stage except the last needs PatchMerging (similar to pooling in a CNN)
                           use_checkpoint=use_checkpoint,
                           fused_window_process=fused_window_process)
        self.layers.append(layer)

    self.norm = norm_layer(self.num_features)
    self.avgpool = nn.AdaptiveAvgPool1d(1)
    self.head = nn.Linear(self.num_features, num_classes) if num_classes > 0 else nn.Identity()
    #############################################################################

    self.apply(self._init_weights)
    # initialize every submodule

1.1.3 _init_weights

Initializes every submodule; self.apply calls this function recursively on each of them.

def _init_weights(self, m):
    if isinstance(m, nn.Linear):
        trunc_normal_(m.weight, std=.02)
        if isinstance(m, nn.Linear) and m.bias is not None:
            nn.init.constant_(m.bias, 0)
    elif isinstance(m, nn.LayerNorm):
        nn.init.constant_(m.bias, 0)
        nn.init.constant_(m.weight, 1.0)

1.1.4 forward_features

def forward_features(self, x):
    x = self.patch_embed(x)
    # convert the image to patch-level resolution; every patch has emb_dim channels
    # B, Patch_H*Patch_W, C

    if self.ape:
        x = x + self.absolute_pos_embed
        # absolute position encoding
        # B, Patch_H*Patch_W, C

    x = self.pos_drop(x)
    # Dropout

    for layer in self.layers:
        x = layer(x)
    # pass through each stage (BasicLayer) in turn

    x = self.norm(x)
    # LayerNorm
    # batch_size, length, dim

    x = self.avgpool(x.transpose(1, 2))
    # average pooling -> batch_size, dim, 1
    # for every image, each channel is averaged over all patches

    x = torch.flatten(x, 1)
    # [batch_size, dim]
    # every image has dim features; each feature is the mean of that channel over all patches of the image
    return x

1.1.5 forward

def forward(self, x):
    x = self.forward_features(x)
    # the Swin Transformer extracts features
    x = self.head(x)
    # fully connected layer for classification
    return x

2 PatchEmbed

Converts a pixel-level image into a patch-level image.
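A convolution whose kernel size equals its stride visits each non-overlapping tile exactly once, so it behaves like cutting the image into tiles and applying one shared linear projection to each. The sketch below shows only the tiling and the resulting token order on a tiny single-channel grid, using plain Python lists instead of tensors. The learned projection is deliberately left out, and `extract_patches` is a hypothetical helper for illustration.

```python
def extract_patches(image, patch):
    """Split an H x W grid (list of rows) into non-overlapping patch x patch tiles.

    Tiles are returned in row-major order, each flattened to a list,
    which is the token order produced by flatten(2).transpose(1, 2).
    """
    H, W = len(image), len(image[0])
    assert H % patch == 0 and W % patch == 0, "image size must be divisible by patch size"
    tiles = []
    for top in range(0, H, patch):
        for left in range(0, W, patch):
            tiles.append([image[top + dy][left + dx]
                          for dy in range(patch) for dx in range(patch)])
    return tiles


image = [[r * 4 + c for c in range(4)] for r in range(4)]   # 4x4 grid holding 0..15
tiles = extract_patches(image, 2)
print(tiles)

# With the default img_size=224 and patch_size=4 there are (224 // 4) ** 2 tokens.
num_tokens = (224 // 4) ** 2
print(num_tokens)
```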

class PatchEmbed(nn.Module):

2.1 init

def __init__(self, img_size=224, patch_size=4, in_chans=3, embed_dim=96, norm_layer=None):
    super().__init__()
    img_size = to_2tuple(img_size)
    # 224 -> (224, 224)
    patch_size = to_2tuple(patch_size)
    # 4 -> (4, 4)
    patches_resolution = [img_size[0] // patch_size[0], img_size[1] // patch_size[1]]
    # resolution of one image measured in patches

    self.img_size = img_size
    self.patch_size = patch_size
    self.patches_resolution = patches_resolution
    self.num_patches = patches_resolution[0] * patches_resolution[1]
    # number of patches in one image

    self.in_chans = in_chans
    self.embed_dim = embed_dim

    self.proj = nn.Conv2d(in_chans, embed_dim, kernel_size=patch_size, stride=patch_size)
    '''
    The kernel is patch_size*patch_size and the stride is patch_size
    -> every patch_size*patch_size*in_chans region is mapped by proj to 1*1*embed_dim
    '''
    if norm_layer is not None:
        self.norm = norm_layer(embed_dim)
    else:
        self.norm = None

2.2 forward

def forward(self, x):
    B, C, H, W = x.shape
    # batch_size, channel_num, height, width
    # FIXME look at relaxing size constraints
    assert H == self.img_size[0] and W == self.img_size[1], \
        f"Input image size ({H}*{W}) doesn't match model ({self.img_size[0]}*{self.img_size[1]})."
    x = self.proj(x).flatten(2).transpose(1, 2)
    '''
    The content of every patch is convolved:
    each patch_size*patch_size region becomes a 1*1 entry
    proj -> B, emb_dim, Patch_H, Patch_W
    flatten(2) -> B, emb_dim, Patch_H*Patch_W
    transpose(1, 2) -> B, Patch_H*Patch_W, emb_dim
    '''
    if self.norm is not None:
        x = self.norm(x)
    return x

3 BasicLayer

One stage of Swin Transformer blocks.

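class BasicLayer(nn.Module):
    """ A basic Swin Transformer layer for one stage."""

3.1 Main parameters

| Parameter | Description |
| --- | --- |
| dim | Number of input channels |
| input_resolution | Input resolution |
| depth | Number of blocks |
| num_heads | Number of attention heads |
| window_size | Window size; attention is computed over window_size*window_size tokens |
| mlp_ratio | Ratio of the MLP hidden dimension to the embedding dimension |
| qkv_bias | Whether the Q, K, V projections have a bias |
| drop | Dropout rate |
| attn_drop | Dropout rate of the attention weights |
| drop_path | Stochastic depth rate p |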
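Inside one stage, `shift_size` alternates between blocks. The sketch below lists the shift each block ends up with, combining the even/odd rule from BasicLayer with the clamp in SwinTransformerBlock that disables shifting when the feature map is no larger than the window. `block_shift_sizes` is a hypothetical helper written for illustration, not part of the repository.

```python
def block_shift_sizes(depth, window_size, input_resolution):
    """Return the effective shift_size of every block in one stage."""
    shifts = []
    for i in range(depth):
        # Even-indexed blocks use plain windows, odd-indexed blocks shift by half a window.
        shift = 0 if i % 2 == 0 else window_size // 2
        # When the feature map fits in a single window, shifting is switched off.
        if min(input_resolution) <= window_size:
            shift = 0
        shifts.append(shift)
    return shifts


print(block_shift_sizes(6, 7, (14, 14)))   # third stage of the default model
print(block_shift_sizes(2, 7, (7, 7)))     # last stage: 7x7 map, one window
```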

3.2 init

def __init__(self, dim, input_resolution, depth, num_heads, window_size,
             mlp_ratio=4., qkv_bias=True, qk_scale=None, drop=0., attn_drop=0.,
             drop_path=0., norm_layer=nn.LayerNorm, downsample=None, use_checkpoint=False,
             fused_window_process=False):
    super().__init__()
    self.dim = dim
    self.input_resolution = input_resolution
    self.depth = depth
    self.use_checkpoint = use_checkpoint

    # build blocks
    self.blocks = nn.ModuleList([
        SwinTransformerBlock(dim=dim,
                             input_resolution=input_resolution,
                             num_heads=num_heads,
                             window_size=window_size,
                             shift_size=0 if (i % 2 == 0) else window_size // 2,
                             # one window attention followed by one shifted-window attention forms a Swin Transformer pair,
                             # so the parity of i decides whether shift_size is 0 or half of window_size
                             mlp_ratio=mlp_ratio,
                             qkv_bias=qkv_bias,
                             qk_scale=qk_scale,
                             drop=drop,
                             attn_drop=attn_drop,
                             drop_path=drop_path[i] if isinstance(drop_path, list) else drop_path,
                             norm_layer=norm_layer,
                             fused_window_process=fused_window_process)
        for i in range(depth)])

    # patch merging layer
    if downsample is not None:
        self.downsample = downsample(input_resolution, dim=dim, norm_layer=norm_layer)
    else:
        self.downsample = None
    # every stage except the last needs PatchMerging (similar to pooling in a CNN)

3.3 forward

def forward(self, x):
    # x: B, Patch_H*Patch_W, C
    for blk in self.blocks:
        if self.use_checkpoint:
            x = checkpoint.checkpoint(blk, x)
        else:
            x = blk(x)
    # pass through every Swin Transformer block of this stage in turn
    if self.downsample is not None:
        x = self.downsample(x)
    # every stage except the last applies PatchMerging
    return x

4 SwinTransformerBlock

4.1 Main input parameters

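| Parameter | Description |
| --- | --- |
| dim | Number of input channels |
| input_resolution | Input resolution |
| num_heads | Number of attention heads |
| window_size | Window size; attention is computed over window_size*window_size tokens |
| shift_size | Shift of the window: 0 (no shift) in even-indexed blocks, window_size // 2 in odd-indexed blocks |
| mlp_ratio | Ratio of the MLP hidden dimension to the embedding dimension |
| qkv_bias | Whether the Q, K, V projections have a bias |
| drop | Dropout rate |
| attn_drop | Dropout rate of the attention weights |
| drop_path | Stochastic depth rate p |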

4.2 init

def __init__(self, dim, input_resolution, num_heads, window_size=7, shift_size=0,
             mlp_ratio=4., qkv_bias=True, qk_scale=None, drop=0., attn_drop=0., drop_path=0.,
             act_layer=nn.GELU, norm_layer=nn.LayerNorm,
             fused_window_process=False):
    super().__init__()
    self.dim = dim
    self.input_resolution = input_resolution
    self.num_heads = num_heads
    self.window_size = window_size
    self.shift_size = shift_size
    self.mlp_ratio = mlp_ratio
    if min(self.input_resolution) <= self.window_size:
        # if window size is larger than input resolution, we don't partition windows
        self.shift_size = 0
        self.window_size = min(self.input_resolution)
        # when the feature map is no larger than window_size, the window shrinks to the feature map and no shift is applied
    assert 0 <= self.shift_size < self.window_size, "shift_size must in 0-window_size"
    # shift_size must be smaller than window_size

    self.norm1 = norm_layer(dim)
    self.attn = WindowAttention(
        dim,
        window_size=to_2tuple(self.window_size),
        num_heads=num_heads,
        qkv_bias=qkv_bias,
        qk_scale=qk_scale,
        attn_drop=attn_drop,
        proj_drop=drop)

    self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()
    self.norm2 = norm_layer(dim)
    mlp_hidden_dim = int(dim * mlp_ratio)
    self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=act_layer, drop=drop)

    ########## attention mask for shifted-window attention (SW-MSA) ##########
    if self.shift_size > 0:
        # calculate attention mask for SW-MSA
        H, W = self.input_resolution
        img_mask = torch.zeros((1, H, W, 1))  # 1 H W 1
        h_slices = (slice(0, -self.window_size),
                    slice(-self.window_size, -self.shift_size),
                    slice(-self.shift_size, None))
        w_slices = (slice(0, -self.window_size),
                    slice(-self.window_size, -self.shift_size),
                    slice(-self.shift_size, None))
        cnt = 0
        for h in h_slices:
            for w in w_slices:
                img_mask[:, h, w, :] = cnt
                cnt += 1

        mask_windows = window_partition(img_mask, self.window_size)
        # B, H, W, C -> B*num_W, window_size, window_size, C (here B = C = 1)
        # num_W is the number of windows the feature map is split into
        mask_windows = mask_windows.view(-1, self.window_size * self.window_size)
        # num_W, window_size*window_size
        attn_mask = mask_windows.unsqueeze(1) - mask_windows.unsqueeze(2)
        # num_W, window_size*window_size, window_size*window_size
        attn_mask = attn_mask.masked_fill(attn_mask != 0, float(-100.0)).masked_fill(attn_mask == 0, float(0.0))
    else:
        attn_mask = None
    ##########################################################################

    self.register_buffer("attn_mask", attn_mask)
    self.fused_window_process = fused_window_process

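Each value of cnt labels one of nine regions of the feature map (three row bands times three column bands).

As an example of the shifted-window mask, take H=W=6, window_size=2, shift_size=1.

img_mask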

'''

tensor([[[[0.],[0.],[0.],[0.],[1.],[2.]],

[[0.],[0.],[0.],[0.],[1.],[2.]],

[[0.],[0.],[0.],[0.],[1.],[2.]],

[[0.],[0.],[0.],[0.],[1.],[2.]],

[[3.],[3.],[3.],[3.],[4.],[5.]],

[[6.],[6.],[6.],[6.],[7.],[8.]]]])

'''

mask_windows

'''

tensor([[0., 0., 0., 0.],

[0., 0., 0., 0.],

[1., 2., 1., 2.],

[0., 0., 0., 0.],

[0., 0., 0., 0.],

[1., 2., 1., 2.],

[3., 3., 6., 6.],

[3., 3., 6., 6.],

[4., 5., 7., 8.]])

'''

mask_windows.unsqueeze(1) - mask_windows.unsqueeze(2)

'''

Define:

A=[[ 0., 0., 0., 0.],

[ 0., 0., 0., 0.],

[ 0., 0., 0., 0.],

[ 0., 0., 0., 0.]]

B=[[ 0., 1., 0., 1.],

[-1., 0., -1., 0.],

[ 0., 1., 0., 1.],

[-1., 0., -1., 0.]]

C=[[ 0., 0., 3., 3.],

[ 0., 0., 3., 3.],

[-3., -3., 0., 0.],

[-3., -3., 0., 0.]]

D=[[ 0., 1., 3., 4.],

[-1., 0., 2., 3.],

[-3., -2., 0., 1.],

[-4., -3., -1., 0.]]

The result is [A,A,B,A,A,B,C,C,D]

'''

attn_mask

'''

Define:

A=[[ 0., 0., 0., 0.],

[ 0., 0., 0., 0.],

[ 0., 0., 0., 0.],

[ 0., 0., 0., 0.]]

B=[[ 0., -100., 0., -100.],

[-100., 0., -100., 0.],

[ 0., -100., 0., -100.],

[-100., 0., -100., 0.]]

C=[[ 0., 0., -100., -100.],

[ 0., 0., -100., -100.],

[-100., -100., 0., 0.],

[-100., -100., 0., 0.]]

D=[[ 0., -100., -100., -100.],

[-100., 0., -100., -100.],

[-100., -100., 0., -100.],

[-100., -100., -100., 0.]]

The result is [A,A,B,A,A,B,C,C,D]

'''

A, B, C and D correspond to Window 0, 1, 2 and 3 respectively.
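The example with H=W=6, window_size=2, shift_size=1 can be recomputed without PyTorch. The sketch below follows the same three steps (label the nine regions, split the label map into windows, compare labels pairwise) with plain Python lists. `shifted_window_mask` is a hypothetical helper for illustration, and it assumes shift_size > 0.

```python
def shifted_window_mask(H, W, window_size, shift_size, neg=-100.0):
    """Return one (N x N) additive mask per window, N = window_size ** 2."""
    def bands(n):
        # Same three bands as slice(0, -ws), slice(-ws, -shift), slice(-shift, None).
        return [range(0, n - window_size),
                range(n - window_size, n - shift_size),
                range(n - shift_size, n)]

    # Step 1: label every position with the id of its region (0..8).
    label = [[0] * W for _ in range(H)]
    cnt = 0
    for rows in bands(H):
        for cols in bands(W):
            for r in rows:
                for c in cols:
                    label[r][c] = cnt
            cnt += 1

    # Steps 2 and 3: per window, positions with different labels must not attend to each other.
    masks = []
    for top in range(0, H, window_size):
        for left in range(0, W, window_size):
            ids = [label[top + dy][left + dx]
                   for dy in range(window_size) for dx in range(window_size)]
            masks.append([[0.0 if a == b else neg for b in ids] for a in ids])
    return masks


masks = shifted_window_mask(6, 6, 2, 1)
for row in masks[6]:      # first window of the bottom row, labels [3, 3, 6, 6]
    print(row)
```

Windows whose positions all share one label get an all-zero mask, so they behave like ordinary window attention.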

4.3 forward

def forward(self, x):
    # x: B, Patch_H*Patch_W, C
    H, W = self.input_resolution
    B, L, C = x.shape
    assert L == H * W, "input feature has wrong size"

    shortcut = x
    x = self.norm1(x)
    x = x.view(B, H, W, C)
    # x: B, Patch_H, Patch_W, C

    ########## (if needed) cyclic shift, then window partition ##########
    # cyclic shift
    if self.shift_size > 0:
        if not self.fused_window_process:
            shifted_x = torch.roll(x, shifts=(-self.shift_size, -self.shift_size), dims=(1, 2))
            # negative shifts move the content up and to the left by shift_size
            # (the topmost rows and leftmost columns wrap around to the bottom and right)
            # partition windows
            x_windows = window_partition(shifted_x, self.window_size)
            # num_Window*B, window_size, window_size, C
        else:
            x_windows = WindowProcess.apply(x, B, H, W, C, -self.shift_size, self.window_size)
    else:
        shifted_x = x
        # partition windows
        x_windows = window_partition(shifted_x, self.window_size)
        # num_Window*B, window_size, window_size, C

    x_windows = x_windows.view(-1, self.window_size * self.window_size, C)
    # num_Window*B, window_size*window_size, C
    #####################################################################

    ########## (shifted) window attention ##########
    # W-MSA/SW-MSA
    attn_windows = self.attn(x_windows, mask=self.attn_mask)
    # attn_mask is None for window attention and a tensor for shifted-window attention
    # num_Window*B, window_size*window_size, C

    # merge windows
    attn_windows = attn_windows.view(-1, self.window_size, self.window_size, C)
    # num_Window*B, window_size, window_size, C

    # reverse cyclic shift
    if self.shift_size > 0:
        if not self.fused_window_process:
            shifted_x = window_reverse(attn_windows, self.window_size, H, W)
            # B H W C
            # switch from the window view back to the patch view
            x = torch.roll(shifted_x, shifts=(self.shift_size, self.shift_size), dims=(1, 2))
            # undo the cyclic shift by rolling down and to the right
        else:
            x = WindowProcessReverse.apply(attn_windows, B, H, W, C, self.shift_size, self.window_size)
    else:
        shifted_x = window_reverse(attn_windows, self.window_size, H, W)
        # without a shift, only the window view has to be converted back to the patch view
        x = shifted_x
    #####################################################################

    x = x.view(B, H * W, C)
    x = shortcut + self.drop_path(x)
    # stochastic depth on the attention branch (window or shifted-window attention) before the residual add

    # FFN
    x = x + self.drop_path(self.mlp(self.norm2(x)))
    # not shown in the paper's architecture figure, but the MLP branch also goes through stochastic depth
    return x
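The direction of the cyclic shift is easy to get wrong, so here is a small check in plain Python. `roll2d` is a hypothetical helper that follows the same convention as torch.roll (the element at index i moves to index i + shift, modulo the size); nested lists stand in for the H x W feature map.

```python
def roll2d(grid, shift_rows, shift_cols):
    """Cyclically shift a 2-D list: grid[r][c] moves to [(r + shift_rows) % H][(c + shift_cols) % W]."""
    H, W = len(grid), len(grid[0])
    out = [[None] * W for _ in range(H)]
    for r in range(H):
        for c in range(W):
            out[(r + shift_rows) % H][(c + shift_cols) % W] = grid[r][c]
    return out


grid = [[r * 4 + c for c in range(4)] for r in range(4)]   # 4x4 grid holding 0..15

shifted = roll2d(grid, -1, -1)      # same signs as the forward pass with shift_size = 1
for row in shifted:
    print(row)

restored = roll2d(shifted, 1, 1)    # the reverse shift after attention
print(restored == grid)
```

With negative shifts the content moves up and to the left, and the first row and first column reappear at the bottom and right edges. Rolling by the positive amounts restores the original layout.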

5 WindowAttention

Attention computed independently inside each window.
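For shifted windows, the attention logits receive an additive mask of 0 or -100 before the softmax. The sketch below shows, for a single query row with made-up logits, why -100 is enough to suppress a position. It uses only the standard library; `softmax` here is a small illustrative helper, not the nn.Softmax module.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


logits = [0.3, -0.2, 0.5, 0.1]             # illustrative scores of one query against 4 keys
mask_row = [0.0, -100.0, 0.0, -100.0]      # keys 1 and 3 lie in a different region

weights = softmax([l + m for l, m in zip(logits, mask_row)])
print(weights)
```

The masked keys end up with weights that are numerically negligible, so the remaining keys share essentially all of the attention.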

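5.1 Input parameters

| Parameter | Description |
| --- | --- |
| dim | Number of input channels |
| num_heads | Number of attention heads |
| window_size | Window size; attention is computed over window_size*window_size tokens |
| qkv_bias | Whether the Q, K, V projections have a bias |
| attn_drop | Dropout rate of the attention weights |
| proj_drop | Dropout rate of the output projection |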

5.2 init

def __init__(self, dim, window_size, num_heads, qkv_bias=True, qk_scale=None, attn_drop=0., proj_drop=0.):
    super().__init__()
    self.dim = dim
    self.window_size = window_size
    # Wh, Ww
    self.num_heads = num_heads
    head_dim = dim // num_heads
    # the channel count is preserved: input and output of window attention both have dim channels
    # with num_heads heads, each head works on dim // num_heads channels
    self.scale = qk_scale or head_dim ** -0.5

    ############################# relative position encoding ####################
    # define a parameter table of relative position bias
    self.relative_position_bias_table = nn.Parameter(
        torch.zeros((2 * window_size[0] - 1) * (2 * window_size[1] - 1), num_heads))
    # 2*Wh-1 * 2*Ww-1, n_heads

    # get pair-wise relative position index for each token inside the window
    coords_h = torch.arange(self.window_size[0])
    coords_w = torch.arange(self.window_size[1])
    coords = torch.stack(torch.meshgrid([coords_h, coords_w]))
    # 2, Wh, Ww
    coords_flatten = torch.flatten(coords, 1)
    # 2, Wh*Ww
    relative_coords = coords_flatten[:, :, None] - coords_flatten[:, None, :]
    # 2, Wh*Ww, Wh*Ww
    relative_coords = relative_coords.permute(1, 2, 0).contiguous()  # Wh*Ww, Wh*Ww, 2
    relative_coords[:, :, 0] += self.window_size[0] - 1  # shift to start from 0
    relative_coords[:, :, 1] += self.window_size[1] - 1
    relative_coords[:, :, 0] *= 2 * self.window_size[1] - 1
    relative_position_index = relative_coords.sum(-1)  # Wh*Ww, Wh*Ww
    self.register_buffer("relative_position_index", relative_position_index)
    #############################################################################

    self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
    # equivalent to three separate dim -> dim Linear layers for q, k and v

    self.attn_drop = nn.Dropout(attn_drop)
    self.proj = nn.Linear(dim, dim)
    self.proj_drop = nn.Dropout(proj_drop)

    trunc_normal_(self.relative_position_bias_table, std=.02)
    self.softmax = nn.Softmax(dim=-1)

5.2.1 Relative position encoding in detail

- relative_position_bias_table is a learnable Parameter
- Suppose the window size is 2*2, so the positions inside one window are

(0,0) (0,1)
(1,0) (1,1)

- In terms of relative position, (0,0)->(0,1) and (1,0)->(1,1) are identical
- In other words, we need a relative position index like the one below (equal values mean equal relative position; rows are the source position, columns the target position)

| from \ to | (0,0) | (0,1) | (1,0) | (1,1) |
| --- | --- | --- | --- | --- |
| (0,0) | 4 | 3 | 1 | 0 |
| (0,1) | 5 | 4 | 2 | 1 |
| (1,0) | 7 | 6 | 4 | 3 |
| (1,1) | 8 | 7 | 5 | 4 |

The index is built step by step:

import torch
window_size = (2, 2)

coords_h = torch.arange(window_size[0])
coords_w = torch.arange(window_size[1])
coords_h, coords_w
'''
(tensor([0, 1]), tensor([0, 1]))
'''

coords = torch.stack(torch.meshgrid([coords_h, coords_w]))
coords
'''
tensor([[[0, 0],
         [1, 1]],

        [[0, 1],
         [0, 1]]])
'''

coords_flatten = torch.flatten(coords, 1)
coords_flatten
'''
tensor([[0, 0, 1, 1],
        [0, 1, 0, 1]])
'''

relative_coords = coords_flatten[:, :, None] - coords_flatten[:, None, :]
relative_coords
'''
Relative position of every point in the window with respect to every other point: 4*4 pairs, hence 4*4 matrices.
The first 4*4 matrix holds the row (vertical) offsets; the second holds the column (horizontal) offsets.
tensor([[[ 0,  0, -1, -1],
         [ 0,  0, -1, -1],
         [ 1,  1,  0,  0],
         [ 1,  1,  0,  0]],

        [[ 0, -1,  0, -1],
         [ 1,  0,  1,  0],
         [ 0, -1,  0, -1],
         [ 1,  0,  1,  0]]])
'''

relative_coords = relative_coords.permute(1, 2, 0).contiguous()
relative_coords
'''
Each innermost row is one (row offset, column offset) pair.
tensor([[[ 0,  0], [ 0, -1], [-1,  0], [-1, -1]],
        [[ 0,  1], [ 0,  0], [-1,  1], [-1,  0]],
        [[ 1,  0], [ 1, -1], [ 0,  0], [ 0, -1]],
        [[ 1,  1], [ 1,  0], [ 0,  1], [ 0,  0]]])
'''

relative_coords[:, :, 0] += window_size[0] - 1
relative_coords[:, :, 1] += window_size[1] - 1
relative_coords[:, :, 0] *= 2 * window_size[1] - 1
'''
tensor([[[3, 1], [3, 0], [0, 1], [0, 0]],
        [[3, 2], [3, 1], [0, 2], [0, 1]],
        [[6, 1], [6, 0], [3, 1], [3, 0]],
        [[6, 2], [6, 1], [3, 2], [3, 1]]])
'''

relative_position_index = relative_coords.sum(-1)
relative_position_index
'''
tensor([[4, 3, 1, 0],
        [5, 4, 2, 1],
        [7, 6, 4, 3],
        [8, 7, 5, 4]])
'''
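The same index can be written as a closed-form expression, which makes it clear why the bias table needs (2*Wh-1)*(2*Ww-1) rows. The following plain-Python sketch is an illustrative re-derivation, not code from the repository; `relative_position_index` is a hypothetical function name.

```python
def relative_position_index(Wh, Ww):
    """Index into the bias table for every (query, key) pair inside a Wh x Ww window."""
    coords = [(h, w) for h in range(Wh) for w in range(Ww)]   # flattened token coordinates
    index = []
    for hi, wi in coords:
        row = []
        for hj, wj in coords:
            dh = hi - hj + Wh - 1          # row offset shifted into 0 .. 2*Wh-2
            dw = wi - wj + Ww - 1          # column offset shifted into 0 .. 2*Ww-2
            row.append(dh * (2 * Ww - 1) + dw)
        index.append(row)
    return index


idx2 = relative_position_index(2, 2)
for row in idx2:
    print(row)

idx7 = relative_position_index(7, 7)
distinct = {v for row in idx7 for v in row}
print(len(idx7), len(idx7[0]), len(distinct))
```

For the default 7*7 window there are 49*49 token pairs but only 13*13 distinct offsets, so the table stays small regardless of how many pairs share an offset.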

5.3 forward

def forward(self, x, mask=None):
    """
    Args:
        x: input features with shape of (num_windows*B, N, C)
        mask: (0/-inf) mask with shape of (num_windows, Wh*Ww, Wh*Ww) or None
    """
    B_, N, C = x.shape
    # num_Window*B, window_size*window_size, C
    qkv = self.qkv(x).reshape(B_, N, 3, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)
    # 3, B_, self.num_heads, N, C // self.num_heads
    # 3, num_Window*B, self.num_heads, window_size*window_size, C // self.num_heads
    q, k, v = qkv[0], qkv[1], qkv[2]
    # make torchscript happy (cannot use tensor as tuple)
    # num_Window*B, self.num_heads, window_size*window_size, C // self.num_heads

    q = q * self.scale
    attn = (q @ k.transpose(-2, -1))
    # dot product of Q and K
    # B_, self.num_heads, N, N
    # num_Window*B, self.num_heads, window_size*window_size, window_size*window_size

    relative_position_bias = self.relative_position_bias_table[self.relative_position_index.view(-1)].view(
        self.window_size[0] * self.window_size[1],
        self.window_size[0] * self.window_size[1],
        -1)
    # window_size*window_size, window_size*window_size, self.num_heads
    relative_position_bias = relative_position_bias.permute(2, 0, 1).contiguous()
    # self.num_heads, window_size*window_size, window_size*window_size
    attn = attn + relative_position_bias.unsqueeze(0)
    # the same relative position bias is added to every window of every image in the batch

    if mask is not None:
        nW = mask.shape[0]
        attn = attn.view(B_ // nW, nW, self.num_heads, N, N) + mask.unsqueeze(1).unsqueeze(0)
        # B_ // nW, num_Window, self.num_heads, window_size*window_size, window_size*window_size
        # mask: nW, window_size*window_size, window_size*window_size
        attn = attn.view(-1, self.num_heads, N, N)
        attn = self.softmax(attn)
    else:
        attn = self.softmax(attn)

    attn = self.attn_drop(attn)

    x = (attn @ v).transpose(1, 2).reshape(B_, N, C)
    x = self.proj(x)
    x = self.proj_drop(x)
    return x

6 window partition

Splits the patch-level feature map into windows.

def window_partition(x, window_size):
    """
    Args:
        x: (B, H, W, C)
        window_size (int): window size
    Returns:
        windows: (num_windows*B, window_size, window_size, C)

    For example, an input of shape (1,56,56,3) with window size 7 splits into 8*8 windows,
    so the returned shape is (64,7,7,3)
    """
    B, H, W, C = x.shape
    x = x.view(B, H // window_size, window_size, W // window_size, window_size, C)
    windows = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(-1, window_size, window_size, C)
    return windows

Example

a=torch.arange(16).reshape(1,4,4,1)

print(a)

'''

tensor([[[[ 0],[ 1],[ 2],[ 3]],

[[ 4],[ 5],[ 6],[ 7]],

[[ 8],[ 9],[10],[11]],

[[12],[13],[14],[15]]]])

'''

w=window_partition(a,2)

w

'''

tensor([[[[ 0],[ 1]],

[[ 4],[ 5]]],

[[[ 2], [ 3]],

[[ 6],[ 7]]],

[[[ 8],[ 9]],

[[12],[13]]],

[[[10],[11]],

[[14],[15]]]])

'''

7 window reverse

Restores the window-level tensor to the patch-level layout.

def window_reverse(windows, window_size, H, W):
    """
    Args:
        windows: (num_windows*B, window_size, window_size, C)
        window_size (int): Window size
        H (int): Height of image
        W (int): Width of image
    Returns:
        x: (B, H, W, C)
    """
    B = int(windows.shape[0] / (H * W / window_size / window_size))
    # (H * W / window_size / window_size) is num_windows
    x = windows.view(B, H // window_size, W // window_size, window_size, window_size, -1)
    # B, H // window_size, W // window_size, window_size, window_size, C
    x = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(B, H, W, -1)
    # (B, H, W, C)
    return x

Example

w

'''

tensor([[[[ 0],[ 1]],

[[ 4],[ 5]]],

[[[ 2], [ 3]],

[[ 6],[ 7]]],

[[[ 8],[ 9]],

[[12],[13]]],

[[[10],[11]],

[[14],[15]]]])

'''

window_reverse(w,2,4,4)

'''

tensor([[[[ 0],[ 1],[ 2],[ 3]],

[[ 4],[ 5],[ 6],[ 7]],

[[ 8],[ 9],[10],[11]],

[[12],[13],[14],[15]]]])

'''

8 PatchMerging

class PatchMerging(nn.Module):
    r""" Patch Merging Layer.

    Args:
        input_resolution (tuple[int]): Resolution of input feature.
        dim (int): Number of input channels.
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm

    B, H*W, C -> B, H/2*W/2, 2*C
    """

    def __init__(self, input_resolution, dim, norm_layer=nn.LayerNorm):
        super().__init__()
        self.input_resolution = input_resolution
        self.dim = dim
        self.reduction = nn.Linear(4 * dim, 2 * dim, bias=False)
        self.norm = norm_layer(4 * dim)

    def forward(self, x):
        """
        x: B, H*W, C
        """
        H, W = self.input_resolution
        B, L, C = x.shape
        assert L == H * W, "input feature has wrong size"
        assert H % 2 == 0 and W % 2 == 0, f"x size ({H}*{W}) are not even."

        x = x.view(B, H, W, C)

        x0 = x[:, 0::2, 0::2, :]  # B H/2 W/2 C
        x1 = x[:, 1::2, 0::2, :]  # B H/2 W/2 C
        x2 = x[:, 0::2, 1::2, :]  # B H/2 W/2 C
        x3 = x[:, 1::2, 1::2, :]  # B H/2 W/2 C
        x = torch.cat([x0, x1, x2, x3], -1)  # B H/2 W/2 4*C
        x = x.view(B, -1, 4 * C)  # B H/2*W/2 4*C

        x = self.norm(x)
        x = self.reduction(x)

        return x
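The strided slicing in PatchMerging groups every 2*2 neighbourhood into one token with four times the channels. The sketch below reproduces only that regrouping on a 4*4 grid with one channel, using plain Python lists. The LayerNorm and the 4*C -> 2*C linear reduction are left out, and `merge_patches` is a hypothetical helper for illustration.

```python
def merge_patches(grid):
    """grid: H x W list of per-token channel lists.

    Returns H/2 * W/2 tokens with 4*C channels each, concatenated in the order
    x0 (top-left), x1 (bottom-left), x2 (top-right), x3 (bottom-right).
    """
    H, W = len(grid), len(grid[0])
    assert H % 2 == 0 and W % 2 == 0, "height and width must be even"
    merged = []
    for r in range(0, H, 2):
        for c in range(0, W, 2):
            merged.append(grid[r][c] + grid[r + 1][c] + grid[r][c + 1] + grid[r + 1][c + 1])
    return merged


grid = [[[r * 4 + c] for c in range(4)] for r in range(4)]   # 4x4 tokens, 1 channel each
merged = merge_patches(grid)
for token in merged:
    print(token)
```

Sixteen single-channel tokens become four tokens with four channels, which is the B, H/2*W/2, 4*C tensor that the reduction layer then maps to 2*C channels.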