NNDL Experiment 6: Convolutional Neural Networks (5), CIFAR-10 Classification with a Pretrained ResNet18

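Contents

5.5 Practice: Image Classification with ResNet18

5.5.1 Data Processing

5.5.1.1 Dataset Overview

5.5.1.2 Reading the Data

5.5.1.3 Building the Dataset Class

5.5.2 Model Construction

5.5.3 Model Training

5.5.4 Model Evaluation

5.5.5 Model Prediction

Summary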

References

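5.5 Practice: Image Classification with ResNet18

Image classification is a fundamental task in computer vision: images are assigned to categories according to their semantic content. Many other tasks can be recast as image classification. Face detection, for example, decides whether a region contains a face, so it can be treated as a binary image classification task.

The experiment uses the following setup:

- Dataset: CIFAR-10.

- Network: ResNet18.

- Loss function: cross-entropy loss.

- Optimizer: Adam. For an introduction to the Adam optimizer, see Section 7.2.4.3 of NNDL.

- Evaluation metric: accuracy.

5.5.1 Data Processing

5.5.1.1 Dataset Overview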

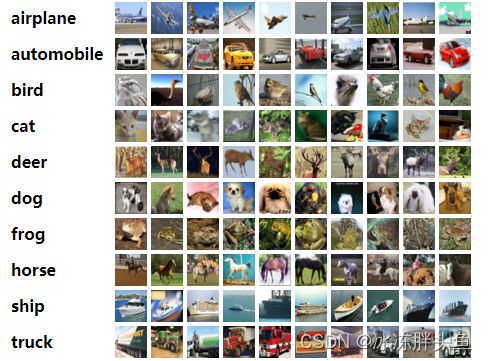

The CIFAR-10 dataset contains 60,000 images in 10 classes, with 6,000 images per class. Every image is 32×32 pixels. Examples from the dataset are shown in the figure above.

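5.5.1.2 Reading the Data

In this experiment the original training set is split into two parts, train_set and dev_set, with 40,000 and 10,000 samples respectively. data_batch_1 through data_batch_4 form the training set, data_batch_5 is the validation set, and test_batch is the test set.

The final dataset composition is:

- Training set: 40,000 samples.

- Validation set: 10,000 samples.

- Test set: 10,000 samples.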
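The split above can be written down as a small lookup table. This is only an illustrative sketch: `SPLIT_FILES`, `IMAGES_PER_FILE`, and `split_size` are hypothetical names that do not appear in the experiment code, and the sketch assumes every CIFAR-10 batch file holds 10,000 images.

```python
# Hypothetical lookup table: which CIFAR-10 batch files make up each split.
SPLIT_FILES = {
    "train": ["data_batch_1", "data_batch_2", "data_batch_3", "data_batch_4"],
    "dev": ["data_batch_5"],
    "test": ["test_batch"],
}

IMAGES_PER_FILE = 10_000  # assumption: every batch file stores 10,000 images


def split_size(split):
    """Return the number of samples in a split, given the table above."""
    return len(SPLIT_FILES[split]) * IMAGES_PER_FILE


for name in SPLIT_FILES:
    print(name, split_size(name))
```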

The code for reading one batch of data is shown below:

import os
import pickle

import numpy as np


def load_cifar10_batch(folder_path, batch_id=1, mode='train'):
    if mode == 'test':
        file_path = os.path.join(folder_path, 'test_batch')
    else:
        file_path = os.path.join(folder_path, 'data_batch_' + str(batch_id))
    # Load the dataset file
    with open(file_path, 'rb') as batch_file:
        batch = pickle.load(batch_file, encoding='latin1')
    # Reshape each flat row to (channels, height, width) and scale pixels to [0, 1]
    imgs = batch['data'].reshape((len(batch['data']), 3, 32, 32)) / 255.
    labels = batch['labels']
    return np.array(imgs, dtype='float32'), np.array(labels)


imgs_batch, labels_batch = load_cifar10_batch(
    folder_path='C:/Users/ASUS/shujuji/cifar-10-python/cifar-10-batches-py', batch_id=1, mode='train')

Check the dimensions of the data:

# Print the shapes of X and y for one batch
print("batch of imgs shape: ", imgs_batch.shape, "batch of labels shape: ", labels_batch.shape)

Output:

batch of imgs shape: (10000, 3, 32, 32) batch of labels shape: (10000,)
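Each row of `batch['data']` is a flat vector of 3 × 32 × 32 = 3072 values, which is why the reshape in `load_cifar10_batch` yields a shape of (10000, 3, 32, 32). The sketch below reproduces that reshape on synthetic data rather than the real files; the array `flat` and its random contents are made up for illustration.

```python
import numpy as np

# Synthetic stand-in for batch['data']: 4 "images", each a flat row of 3072 bytes.
rng = np.random.default_rng(0)
flat = rng.integers(0, 256, size=(4, 3072), dtype=np.uint8)

# Same reshape and scaling as load_cifar10_batch: channels first, values in [0, 1].
imgs = (flat.reshape(len(flat), 3, 32, 32) / 255.0).astype("float32")
print(imgs.shape)

# Matplotlib's imshow expects channels last, hence transpose(1, 2, 0).
hwc = imgs[1].transpose(1, 2, 0)
print(hwc.shape)
```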

Visualize one sample image and its label with the following code:

import matplotlib.pyplot as plt

image, label = imgs_batch[1], labels_batch[1]
print("The label in the picture is {}".format(label))
plt.figure(figsize=(2, 2))
# imshow expects height x width x channels, so move the channel axis last
plt.imshow(image.transpose(1, 2, 0))
plt.savefig('cnn-car.pdf')
plt.show()

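5.5.1.3 Building the Dataset Class

Construct a CIFAR10Dataset class that inherits from torch.utils.data.Dataset and processes the samples one at a time. The implementation is as follows: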

import torch
from torch.utils.data import Dataset, DataLoader
from torchvision.transforms import transforms


class CIFAR10Dataset(Dataset):
    def __init__(self,
                 folder_path='C:/Users/ASUS/shujuji/cifar-10-python/cifar-10-batches-py',
                 mode='train'):
        if mode == 'train':
            # Training set: data_batch_1 to data_batch_4
            self.imgs, self.labels = load_cifar10_batch(folder_path=folder_path, batch_id=1, mode='train')
            for i in range(2, 5):
                imgs_batch, labels_batch = load_cifar10_batch(folder_path=folder_path, batch_id=i, mode='train')
                self.imgs = np.concatenate([self.imgs, imgs_batch])
                self.labels = np.concatenate([self.labels, labels_batch])
        elif mode == 'dev':
            # Validation set: data_batch_5
            self.imgs, self.labels = load_cifar10_batch(folder_path=folder_path, batch_id=5, mode='dev')
        elif mode == 'test':
            # Test set: test_batch
            self.imgs, self.labels = load_cifar10_batch(folder_path=folder_path, mode='test')
        else:
            raise ValueError("mode must be 'train', 'dev' or 'test'")
        # The images are already float32 in [0, 1], so ToTensor only converts HWC to CHW;
        # Normalize then applies the ImageNet channel statistics
        self.transform = transforms.Compose(
            [transforms.ToTensor(), transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])])

    def __getitem__(self, idx):
        img, label = self.imgs[idx], self.labels[idx]
        # CHW -> HWC, the layout ToTensor expects for NumPy input
        img = img.transpose(1, 2, 0)
        img = self.transform(img)
        return img, label

    def __len__(self):
        return len(self.imgs)


torch.manual_seed(100)
train_dataset = CIFAR10Dataset(folder_path='C:/Users/ASUS/shujuji/cifar-10-python/cifar-10-batches-py',
                               mode='train')
dev_dataset = CIFAR10Dataset(folder_path='C:/Users/ASUS/shujuji/cifar-10-python/cifar-10-batches-py',
                             mode='dev')
test_dataset = CIFAR10Dataset(folder_path='C:/Users/ASUS/shujuji/cifar-10-python/cifar-10-batches-py',
                              mode='test')

5.5.2 Model Construction

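Use resnet18 from the torchvision.models API for the image classification experiment.

from torchvision.models import resnet18

# Called with no arguments, resnet18() builds a randomly initialized network;
# this call does not load any pretrained weights.
resnet18_model = resnet18()

What is a pretrained model? What is transfer learning?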

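A pretrained model is a deep learning architecture that has already been trained on a large amount of data to perform a specific task (for example, classifying images). Pretrained models moved natural language processing from a stage of manual tuning that depended on ML experts to one where methods can be applied at large scale in a reproducible, industrial fashion. They have also expanded from single-language tasks to multilingual and multimodal ones. The idea is simple: the model first learns general knowledge (for language models, knowledge of human language) and then carries it into a specific task, which makes that task easier to learn.

Transfer learning is a machine learning technique that, as the name suggests, transfers knowledge from one domain to another.

A neural network is trained on data: it extracts information from the data and encodes it as weights. Those weights can be extracted and transferred to another network. Because the learned features are "transferred", the new network does not have to be trained from scratch.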

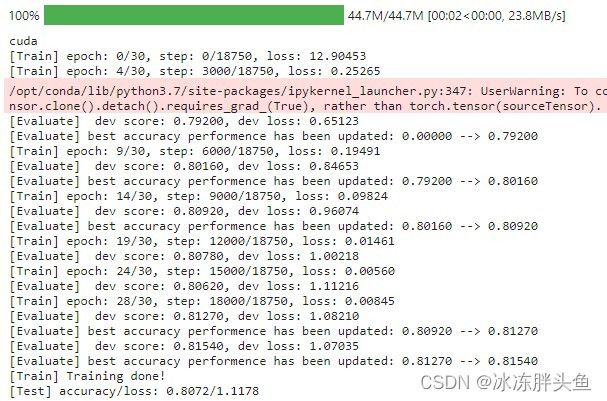

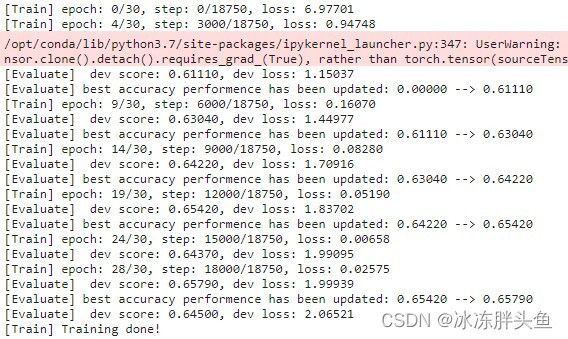

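Comparing results with and without a pretrained model

With a pretrained model

Without a pretrained model

5.5.3 Model Training

Reuse the RunnerV3 class: instantiate it and pass in the training configuration.

Train the model on the training set and validate it on the validation set, for 30 epochs in total.

During the experiment, the model with the highest accuracy is saved as the best model. The implementation is as follows: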

import torch


class Accuracy:
    def __init__(self, is_logist=True):
        """
        Args:
            is_logist: whether outputs are logits (True) or values after activation (False)
        """
        # Number of correctly predicted samples
        self.num_correct = 0
        # Total number of samples
        self.num_count = 0
        self.is_logist = is_logist

    def update(self, outputs, labels):
        """
        Args:
            outputs: predictions, shape=[N, class_num]
            labels: ground-truth labels, shape=[N] or [N, 1]
        """
        # shape[1] == 1 means binary classification, shape[1] > 1 means multi-class
        if outputs.shape[1] == 1:
            outputs = torch.squeeze(outputs, dim=-1)
            # Threshold logits at 0 and probabilities at 0.5
            threshold = 0 if self.is_logist else 0.5
            preds = (outputs >= threshold).to(torch.int64)
        else:
            # Multi-class: the index of the largest output is the predicted class
            preds = torch.argmax(outputs, dim=1)
        labels = labels.reshape(-1)
        # Number of correct predictions in this batch, as a Python int
        # (works for tensors on CPU or GPU)
        batch_correct = (preds == labels).sum().item()
        batch_count = len(labels)
        # Update the running totals
        self.num_correct += batch_correct
        self.num_count += batch_count

    def accumulate(self):
        # Compute the overall metric from the accumulated counts
        if self.num_count == 0:
            return 0
        return self.num_correct / self.num_count

    def reset(self):
        # Reset the correct count and the total count
        self.num_correct = 0
        self.num_count = 0

    def name(self):
        return "Accuracy"


def accuracy(preds, labels):
    # Stateless helper: accuracy of a single batch
    if preds.shape[1] == 1:
        preds = (preds >= 0.5).to(torch.int64).reshape(-1)
    else:
        preds = torch.argmax(preds, dim=1)
    return torch.mean((preds == labels.reshape(-1)).to(torch.float32))


class RunnerV3(object):
    def __init__(self, model, optimizer, loss_fn, metric, **kwargs):
        self.model = model
        self.optimizer = optimizer
        self.loss_fn = loss_fn
        self.metric = metric
        self.dev_scores = []
        self.train_epoch_losses = []
        self.train_step_losses = []
        self.dev_losses = []
        self.best_score = 0

    def train(self, train_loader, dev_loader=None, **kwargs):
        self.model.train()
        num_epochs = kwargs.get("num_epochs", 0)
        log_steps = kwargs.get("log_steps", 100)
        eval_steps = kwargs.get("eval_steps", 0)
        save_path = kwargs.get("save_path", "best_model.pdparams")
        custom_print_log = kwargs.get("custom_print_log", None)
        num_training_steps = num_epochs * len(train_loader)
        if eval_steps:
            if self.metric is None:
                raise RuntimeError('Error: Metric can not be None!')
            if dev_loader is None:
                raise RuntimeError('Error: dev_loader can not be None!')
        # Run on whatever device the model lives on (GPU or CPU)
        device = next(self.model.parameters()).device
        global_step = 0
        for epoch in range(num_epochs):
            total_loss = 0
            for step, data in enumerate(train_loader):
                X, y = data
                X = X.to(device)
                y = y.to(device=device, dtype=torch.int64)
                logits = self.model(X)
                loss = self.loss_fn(logits, y)
                # Accumulate a Python float so the autograd graph is not kept alive
                total_loss += loss.item()
                self.train_step_losses.append((global_step, loss.item()))
                if log_steps and global_step % log_steps == 0:
                    print(
                        f"[Train] epoch: {epoch}/{num_epochs}, step: {global_step}/{num_training_steps}, loss: {loss.item():.5f}")
                loss.backward()
                if custom_print_log:
                    custom_print_log(self)
                self.optimizer.step()
                self.optimizer.zero_grad()
                if eval_steps > 0 and global_step > 0 and \
                        (global_step % eval_steps == 0 or global_step == (num_training_steps - 1)):
                    dev_score, dev_loss = self.evaluate(dev_loader, global_step=global_step)
                    print(f"[Evaluate] dev score: {dev_score:.5f}, dev loss: {dev_loss:.5f}")
                    self.model.train()
                    # Keep the checkpoint with the best validation score
                    if dev_score > self.best_score:
                        self.save_model(save_path)
                        print(
                            f"[Evaluate] best accuracy performance has been updated: {self.best_score:.5f} --> {dev_score:.5f}")
                        self.best_score = dev_score
                global_step += 1
            trn_loss = total_loss / len(train_loader)
            self.train_epoch_losses.append(trn_loss)
        print("[Train] Training done!")

    @torch.no_grad()
    def evaluate(self, dev_loader, **kwargs):
        assert self.metric is not None
        self.model.eval()
        global_step = kwargs.get("global_step", -1)
        device = next(self.model.parameters()).device
        total_loss = 0
        self.metric.reset()
        for batch_id, data in enumerate(dev_loader):
            X, y = data
            X = X.to(device)
            y = y.to(device=device, dtype=torch.int64)
            logits = self.model(X)
            loss = self.loss_fn(logits, y).item()
            total_loss += loss
            self.metric.update(logits, y)
        dev_loss = total_loss / len(dev_loader)
        dev_score = self.metric.accumulate()
        if global_step != -1:
            self.dev_losses.append((global_step, dev_loss))
            self.dev_scores.append(dev_score)
        return dev_score, dev_loss

    @torch.no_grad()
    def predict(self, x, **kwargs):
        self.model.eval()
        device = next(self.model.parameters()).device
        logits = self.model(x.to(device))
        return logits

    def save_model(self, save_path):
        torch.save(self.model.state_dict(), save_path)

    def load_model(self, model_path):
        device = next(self.model.parameters()).device
        state_dict = torch.load(model_path, map_location=device)
        self.model.load_state_dict(state_dict)

import torch.nn.functional as F
import torch.optim as opt

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
print(device)
# Learning rate
lr = 0.001
# Batch size
batch_size = 64
# Data loaders
train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
dev_loader = DataLoader(dev_dataset, batch_size=batch_size)
test_loader = DataLoader(test_dataset, batch_size=batch_size)
# Model
model = resnet18_model
model.to(device)
# Optimizer. Note: the overview lists Adam, but this run uses SGD with momentum;
# opt.Adam(model.parameters(), lr=lr) would be the Adam counterpart.
optimizer = opt.SGD(model.parameters(), lr=lr, momentum=0.9)
# Loss function
loss_fn = F.cross_entropy
# Evaluation metric
metric = Accuracy()
# Instantiate the runner
runner = RunnerV3(model, optimizer, loss_fn, metric)
# Start training
log_steps = 3000
eval_steps = 3000
runner.train(train_loader, dev_loader, num_epochs=30, log_steps=log_steps,
             eval_steps=eval_steps, save_path="best_model.pdparams")
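The metric passed to the runner keeps running totals, so accuracy over a whole dataset can be computed batch by batch. A framework-free sketch of that bookkeeping follows, with NumPy arrays standing in for tensors and made-up logits; `RunningAccuracy` is a hypothetical name, not the class used in this experiment.

```python
import numpy as np


class RunningAccuracy:
    """Accumulates correct/total counts across batches."""

    def __init__(self):
        self.num_correct = 0
        self.num_count = 0

    def update(self, logits, labels):
        # Predicted class = index of the largest logit in each row.
        preds = np.argmax(logits, axis=1)
        self.num_correct += int((preds == labels).sum())
        self.num_count += len(labels)

    def accumulate(self):
        return self.num_correct / self.num_count if self.num_count else 0.0


metric = RunningAccuracy()
# Two made-up batches of logits for a 3-class problem.
metric.update(np.array([[2.0, 0.1, 0.3], [0.2, 0.1, 1.5]]), np.array([0, 1]))  # 1 of 2 correct
metric.update(np.array([[0.0, 3.0, 1.0]]), np.array([1]))                      # 1 of 1 correct
print(metric.accumulate())  # 2 correct out of 3 samples
```

Accumulating counts rather than averaging per-batch accuracies keeps a smaller final batch from being weighted the same as a full one.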

5.5.4 Model Evaluation

# Load the best model
runner.load_model('best_model.pdparams')
# Evaluate on the test set
score, loss = runner.evaluate(test_loader)
print("[Test] accuracy/loss: {:.4f}/{:.4f}".format(score, loss))


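5.5.5 Model Prediction

The saved model can likewise be used to predict on data from the test set and inspect how the model performs. The implementation is as follows: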

# Get one batch of data from the test set
X, label = next(iter(test_loader))
# Move the inputs to the same device as the model
X = X.to(device)
logits = runner.predict(X)
# Multi-class task: softmax over the class dimension gives the predicted probabilities
pred = F.softmax(logits, dim=1)
# Class with the highest probability for the third sample in the batch
pred_class = torch.argmax(pred[2]).cpu().numpy()
label = label[2].numpy()
# Print the true class and the predicted class
print("The true category is {} and the predicted category is {}".format(label, pred_class))
# Visualize the image
plt.figure(figsize=(2, 2))
imgs, labels = load_cifar10_batch(folder_path='C:/Users/ASUS/shujuji/cifar-10-python/cifar-10-batches-py', mode='test')
plt.imshow(imgs[2].transpose(1, 2, 0))
plt.savefig('cnn-test-vis.pdf')
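For reference, the softmax-then-argmax step can be reproduced on a single made-up logit vector with NumPy. The numbers are illustrative only and do not come from the trained model.

```python
import numpy as np


def softmax(logits):
    """Numerically stable softmax over the last axis."""
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=-1, keepdims=True)


logits = np.array([[1.0, 3.0, 0.5]])  # one sample, three made-up class scores
probs = softmax(logits)
pred_class = int(np.argmax(probs, axis=-1)[0])
print(probs.sum(), pred_class)
```

Softmax preserves the ordering of the scores, so taking the argmax of the probabilities gives the same class as taking the argmax of the raw logits; the probabilities are only needed when a confidence value is of interest.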


Summary

References

- NNDL 实验5(下), HBU_DAVID, 博客园. https://www.cnblogs.com/hbuwyg/p/16617678.html
- Qiu Xipeng (邱锡鹏), Neural Networks and Deep Learning (神经网络与深度学习), China Machine Press (机械工业出版社), 2020. https://nndl.github.io/
- NNDL practice code, Section 5.5. https://github.com/nndl/practice-in-paddle/
- 预训练模型, lucca的博客, CSDN. https://blog.csdn.net/w275840140/article/details/89532040
- 深度学习中预训练模型是指什么?如何得到?, ZhangJingHuaJYO的博客, CSDN. https://blog.csdn.net/ZhangJingHuaJYO/article/details/124617277
- 【深度学习】使用预训练模型, DrCrypto的博客, CSDN. https://blog.csdn.net/u011240016/article/details/85217630