Neural Networks and Deep Learning day08 - Convolutional Neural Networks 1: Convolution


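- 5.1 Convolution
  - 5.1.1 2D convolution operation
  - 5.1.2 2D convolution operator
  - 5.1.3 Parameter count and computation of 2D convolution
  - 5.1.4 Receptive field
  - 5.1.5 Variants of convolution
  - 5.1.6 2D convolution operator with stride and zero padding
  - 5.1.7 Image edge detection with convolution
- Optional exercises: implement 1 and 2; read 3, 4, and 5
  - Edge Detection Series 1: Traditional Edge Detection Operators - PaddlePaddle AI Studio
    - 1.1 Building a general edge detection operator
    - 1.2 Image edge detection test function
    - 1.3 Roberts operator
    - 1.4 Prewitt operator
    - 1.5 Sobel operator
    - 1.6 Scharr operator
    - 1.7 Kirsch operator
    - 1.8 Robinson operator
    - 1.9 Laplacian operator
  - Edge Detection Series 2: A Simple Canny Edge Detector - PaddlePaddle AI Studio
    - 2.1 Algorithm principle
    - 2.2 Fast Canny edge detection with OpenCV
    - 2.3 A simple Canny detector with NumPy
    - 2.4 A Canny edge detector in torch
  - Edge Detection Series 3: [HED] Holistically-Nested Edge Detection - PaddlePaddle AI Studio
  - Edge Detection Series 4: [RCF] Edge Detection with Richer Convolutional Features - PaddlePaddle AI Studio (baidu.com)
  - Edge Detection Series 5: [CED] An HED Model with an Added Backward Refinement Path - PaddlePaddle AI Studio (baidu.com)
- Summary
- References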

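Convolutional Neural Network (CNN)

- Inspired by the biological mechanism of receptive fields.

- Generally a feedforward neural network built by alternately stacking convolutional layers, pooling layers, and fully connected layers.

- Has three structural properties: local connectivity, weight sharing, and pooling.

- Has a degree of invariance to translation, scaling, and rotation.

- Has far fewer parameters than a fully connected feedforward network.

- Mainly applied to image and video analysis tasks, where its accuracy generally far exceeds that of other neural network models.

- In recent years, CNNs have also been widely applied to natural language processing, recommender systems, and other fields.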

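5.1 Convolution

5.1.1 2D Convolution Operation

In class, the teacher said that 2D convolution is actually supposed to flip the kernel. I had come across the concept of convolution in Prof. Zhou's book *Machine Learning*, but I never dug into why 2D convolution in deep learning does not flip the kernel and instead multiplies element-wise directly. So I found the article below; it made sense to me, and I hope you will read it too: "Why convolution flips the kernel / Why convolution in deep learning does not flip / Convolution and correlation".

Here is a brief summary of that article's explanation:

- Explanation 1: The kernel parameters are learned, so even if 2D convolution were required to flip the kernel, the learned kernel would simply be the already-flipped one.

- Explanation 2: Images (2D/3D) have no notion of time, so there is no signal-and-"system" relationship as in signal processing. **There is no effect of inputs at earlier time steps on the output at the current time step, so convolution is not actually needed. Deep learning borrowed the term spatial "convolution" from image processing, and "convolution" in image processing is essentially not flipped either (it is correlation), so convolution in deep learning does not flip the kernel.** Therefore, what deep learning uses should really be called "correlation". (Prof. Qiu also uses the term "cross-correlation" in his book.)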
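To make the difference concrete, here is a minimal NumPy sketch. The helper names `correlate2d_valid` and `convolve2d_valid` are hypothetical, written only for this illustration. Convolution in the signal-processing sense flips the kernel by 180° before sliding it, so it equals cross-correlation with the flipped kernel; since the kernel is learned, a network using cross-correlation can simply learn the flipped weights (explanation 1).

```python
import numpy as np


def correlate2d_valid(x, k):
    """Cross-correlation ("convolution" in deep learning): slide k over x without flipping."""
    kh, kw = k.shape
    out = np.zeros((x.shape[0] - kh + 1, x.shape[1] - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(x[i:i + kh, j:j + kw] * k)
    return out


def convolve2d_valid(x, k):
    """Convolution in the signal-processing sense: flip k along both axes, then correlate."""
    return correlate2d_valid(x, k[::-1, ::-1])


x = np.array([[1., 2., 3.], [4., 5., 6.], [7., 8., 9.]])
k = np.array([[0., 1.], [2., 3.]])
print(correlate2d_valid(x, k))  # values [[25, 31], [43, 49]]
print(convolve2d_valid(x, k))   # values [[11, 17], [29, 35]]
```

The two results differ only because of the flip; feeding `k[::-1, ::-1]` to the correlation reproduces the convolution exactly.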

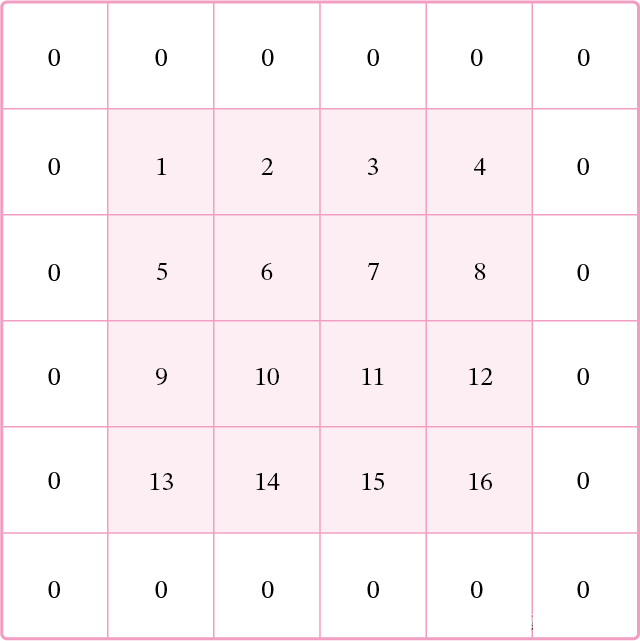

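5.1.2 2D Convolution Operator

In the book's later implementations, operators inherit from paddle.nn.Layer and are built from PaddlePaddle APIs that support backpropagation, so we do not need to write backward() by hand.

In this section we reproduce the code in torch: operators inherit from torch.nn.Module, and the weights are wrapped in torch.nn.Parameter so they are registered as trainable parameters. PyTorch's autograd then computes their gradients automatically, so again we do not need to write backward() by hand.

I didn't expect anyone to actually read my code, but someone asked me to add comments. I think that's a good idea, since it also helps in case there are parts I don't fully understand myself. I hope the new comments are clear.

The torch implementation of the 2D convolution operator is as follows: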

```python
import torch


class Conv2D(torch.nn.Module):
    """Custom 2D convolution (cross-correlation) operator: single channel, stride 1, no padding."""

    def __init__(self, kernel_size, weight_attr=None):
        super(Conv2D, self).__init__()  # initialize the torch.nn.Module base class
        if weight_attr is None:
            # default kernel used in this example
            weight_attr = torch.tensor([[0., 1.], [2., 3.]])
        # torch.nn.Parameter turns a plain Tensor into a trainable parameter and registers it
        # on the module. reshape returns a new tensor, so it must be applied before wrapping.
        self.weight = torch.nn.Parameter(weight_attr.reshape([kernel_size, kernel_size]))

    def forward(self, X):
        """
        Input:
            - X: input tensor, shape=[B, M, N], B is the number of samples
        Output:
            - output: output tensor, shape=[B, M-U+1, N-V+1]
        """
        u, v = self.weight.shape  # kernel height and width
        output = torch.zeros([X.shape[0], X.shape[1] - u + 1, X.shape[2] - v + 1])  # initialize the output
        for i in range(output.shape[1]):
            for j in range(output.shape[2]):
                # multiply the window element-wise with the kernel and sum over the window
                output[:, i, j] = torch.sum(X[:, i:i+u, j:j+v] * self.weight, dim=[1, 2])
        return output


# Build a simple 2D input
torch.manual_seed(100)  # fix the random seed
inputs = torch.tensor([[[1., 2., 3.], [4., 5., 6.], [7., 8., 9.]]])  # shape [1, 3, 3]
kernel = torch.tensor([[0., 1.], [2., 3.]])  # kernel, passed in as weight_attr
conv2d = Conv2D(kernel_size=2, weight_attr=kernel)
outputs = conv2d(inputs)  # run the 2D convolution operator
print("input:\n {}, \nuse kernel:\n {}\noutput:\n {}".format(inputs, kernel, outputs))
```

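Testing the 2D convolution operator:

Result: the input is convolved with the kernel to produce the output. Each output element is the sum of the element-wise products between the kernel and the 2×2 window of the input it covers; for example, the top-left element is 1×0 + 2×1 + 4×2 + 5×3 = 25.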
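As a cross-check, the same operation can be written without Python loops. This is a sketch of an alternative, not the book's implementation: it assumes NumPy 1.20+ for `sliding_window_view`, which extracts every U×V window, and uses `einsum` to multiply each window by the kernel and sum. The helper name `conv2d_batched` is hypothetical. It should reproduce the loop-based operator's result on the same input.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def conv2d_batched(X, W):
    """X: [B, M, N], W: [U, V] -> [B, M-U+1, N-V+1] (cross-correlation, stride 1, no padding)."""
    # windows has shape [B, M-U+1, N-V+1, U, V]
    windows = sliding_window_view(X, W.shape, axis=(1, 2))
    # multiply each window by the kernel and sum over the two window axes
    return np.einsum('bijuv,uv->bij', windows, W)


X = np.array([[[1., 2., 3.], [4., 5., 6.], [7., 8., 9.]]])
W = np.array([[0., 1.], [2., 3.]])
out = conv2d_batched(X, W)
print(out)  # same values as the loop-based Conv2D: [[[25, 31], [43, 49]]]
```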

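5.1.3 Parameter Count and Computation of 2D Convolution

When a fully connected feedforward network is used to process image data, the number of parameters grows sharply as the hidden layers get wider and the network gets deeper.

Using convolution for image processing requires far fewer parameters than a fully connected feedforward network, because each output depends only on a local window (local connectivity) and the same kernel is reused at every position (weight sharing).

Since there is no single standard for comparing the parameter count and computation of fully connected and convolutional networks, here we only give the parameter count and computation of a single 2D convolutional layer as an illustration:

Parameter count

With a kernel of size [KS, KS], the parameter count (the kernel weights plus one bias) is: $KS \times KS + 1$

If input and output channels are taken into account, the parameter count is: $(C_{in} \times KS \times KS + 1) \times C_{out}$

where $C_{in}$ is the number of input channels and $C_{out}$ is the number of output channels.

Computation

With a kernel of size [KS, KS], an input feature map of size $(H_{in}, W_{in})$, and an output feature map of size $(H_{out}, W_{out})$, each output element needs $KS \times KS$ multiplications, so a single-channel layer needs $KS \times KS \times H_{out} \times W_{out}$ multiplications.

Taking input and output channels into account, the computation is: $C_{in} \times KS \times KS \times H_{out} \times W_{out} \times C_{out}$ multiplications (bias additions are ignored).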
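The two formulas can be turned into a small calculator. This is a sketch under the same conventions as above: one bias per output channel, and computation counted as multiplications, ignoring bias additions. The function names and the example layer (a 3×32×32 input, 3×3 kernel, 64 output channels, stride 1, padding 1) are hypothetical, and the comparison with a fully connected layer that maps the same input to an output of the same size is an illustration, not a figure from the book.

```python
def conv2d_params(c_in, c_out, ks):
    """Parameters of one conv layer: (C_in * KS * KS + 1) * C_out (the +1 is the bias)."""
    return (c_in * ks * ks + 1) * c_out


def conv2d_macs(c_in, c_out, ks, h_out, w_out):
    """Multiplications of one conv layer: C_in * KS * KS * H_out * W_out * C_out."""
    return c_in * ks * ks * h_out * w_out * c_out


def fc_params(n_in, n_out):
    """Parameters of a fully connected layer with bias."""
    return (n_in + 1) * n_out


# 3x32x32 input, 3x3 kernel, 64 output channels, stride 1, padding 1 -> 64x32x32 output
print(conv2d_params(3, 64, 3))               # 1792
print(conv2d_macs(3, 64, 3, 32, 32))         # 1769472
print(fc_params(3 * 32 * 32, 64 * 32 * 32))  # 201392128
```

The convolutional layer needs about 1.8 thousand parameters where the equivalent fully connected layer needs about 200 million, which is the effect of local connectivity and weight sharing.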

Reference blog: Parameter count and computation in convolution

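5.1.4 Receptive Field

5.1.5 Variants of Convolution

5.1.5.1 Stride (Stride=2)

5.1.5.2 Zero Padding

5.1.6 2D Convolution Operator with Stride and Zero Padding

The output results show that with a 3×3 kernel and padding=1:

- when stride=1, the output feature map has the same size as the input feature map;

- when stride=2, the width and height of the output feature map are both halved.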
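Both cases follow the usual output-size formula for one spatial axis with input size $H$, kernel size $K$, padding $P$, and stride $S$: $H_{out} = \lfloor (H + 2P - K)/S \rfloor + 1$. This is the same expression the operator below uses when computing its output size from the padded input. A minimal sketch (the function name `conv_out_size` is hypothetical):

```python
def conv_out_size(size, kernel_size, stride=1, padding=0):
    """Output length along one spatial axis: floor((size + 2*padding - kernel_size) / stride) + 1."""
    return (size + 2 * padding - kernel_size) // stride + 1


print(conv_out_size(8, 3, stride=1, padding=1))  # 8: same as the input
print(conv_out_size(8, 3, stride=2, padding=1))  # 4: halved
```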

Below we implement a custom 2D convolution operator with stride and zero padding in PyTorch:

```python
import torch


class Conv2D(torch.nn.Module):
    """2D convolution (cross-correlation) operator with stride and zero padding."""

    def __init__(self, kernel_size, stride=1, padding=0, weight_attr=None):
        super(Conv2D, self).__init__()
        if weight_attr is None:
            weight_attr = torch.ones([kernel_size, kernel_size])  # default: all-ones kernel
        # reshape returns a new tensor, so apply it before wrapping in a Parameter
        self.weight = torch.nn.Parameter(weight_attr.reshape([kernel_size, kernel_size]))
        self.stride = stride    # stride
        self.padding = padding  # zero padding added to each side

    def forward(self, X):
        # zero padding: copy X into the center of a larger all-zero tensor
        new_X = torch.zeros([X.shape[0], X.shape[1] + 2*self.padding, X.shape[2] + 2*self.padding])
        new_X[:, self.padding:X.shape[1]+self.padding, self.padding:X.shape[2]+self.padding] = X
        u, v = self.weight.shape  # kernel height and width
        output_h = (new_X.shape[1] - u) // self.stride + 1  # output height
        output_w = (new_X.shape[2] - v) // self.stride + 1  # output width
        output = torch.zeros([X.shape[0], output_h, output_w])  # initialize the output
        for i in range(output_h):
            for j in range(output_w):
                # the window starts at (stride*i, stride*j) in the padded input
                output[:, i, j] = torch.sum(
                    new_X[:, self.stride*i:self.stride*i+u, self.stride*j:self.stride*j+v] * self.weight,
                    dim=[1, 2])
        return output


inputs = torch.randn([2, 8, 8])  # two random 8x8 inputs

conv2d_padding = Conv2D(kernel_size=3, stride=1, padding=1)
outputs = conv2d_padding(inputs)
print("When kernel_size=3, padding=1 stride=1, input's shape: {}, output's shape: {}".format(inputs.shape, outputs.shape))

conv2d_stride = Conv2D(kernel_size=3, stride=2, padding=1)
outputs = conv2d_stride(inputs)
print("When kernel_size=3, padding=1 stride=2, input's shape: {}, output's shape: {}".format(inputs.shape, outputs.shape))
```

Test results:


When kernel_size=3, padding=1 stride=1, input's shape: torch.Size([2, 8, 8]), output's shape: torch.Size([2, 8, 8])

When kernel_size=3, padding=1 stride=2, input's shape: torch.Size([2, 8, 8]), output's shape: torch.Size([2, 4, 4])

5.1.7 Image Edge Detection with Convolution

Reference code:

```python
# %matplotlib inline   (Jupyter-only magic; uncomment when running in a notebook)
import matplotlib.pyplot as plt
import numpy as np
import torch
from PIL import Image

# Read the image and convert it to grayscale
img = Image.open('deer.jpeg').convert('L')
img = img.resize((256, 256))  # resize returns a new image, so reassign it

# Set the kernel weights
w = np.array([[-1, -1, -1], [-1, 8, -1], [-1, -1, -1]], dtype='float32')
# Create the convolution operator (the Conv2D with stride/padding from 5.1.6):
# a 3x3 kernel initialized with the weights above
conv = Conv2D(kernel_size=3, stride=1, padding=0, weight_attr=torch.tensor(w))

# Convert the image to a float32 numpy.ndarray, then to a Tensor
inputs = np.array(img).astype('float32')
inputs = torch.tensor(inputs)
print("before unsqueeze, shape:", inputs.shape)
inputs = torch.unsqueeze(inputs, dim=0)  # add a batch dimension: [1, H, W]
print("after unsqueeze, shape:", inputs.shape)
outputs = conv(inputs)

# Visualize the results
plt.figure(figsize=(8, 4))
f = plt.subplot(121)
f.set_title('input image', fontsize=15)
plt.imshow(img, cmap='gray')
f = plt.subplot(122)
f.set_title('output feature map', fontsize=15)
plt.imshow(outputs.detach().numpy().squeeze(), cmap='gray')
plt.savefig('conv-vis.pdf')
plt.show()
```
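Why does this kernel find edges? Its weights sum to zero (8 - 8 × 1 = 0), so it outputs 0 on any region of constant brightness and responds only where the intensity changes. The NumPy sketch below checks this on a synthetic image (a hypothetical 6×6 array with a vertical step from 0 to 10, not the deer photo), using `sliding_window_view` (NumPy 1.20+) for a valid cross-correlation equivalent to the operator above with padding=0.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

w = np.array([[-1, -1, -1], [-1, 8, -1], [-1, -1, -1]], dtype='float32')
print(w.sum())  # 0.0

# Synthetic 6x6 image: left half 0, right half 10 (a vertical edge between columns 2 and 3)
img = np.zeros((6, 6), dtype='float32')
img[:, 3:] = 10

# Valid cross-correlation (stride 1, no padding) -> 4x4 output
out = np.einsum('ijuv,uv->ij', sliding_window_view(img, w.shape), w)
print(out)  # every row is [0, -30, 30, 0]: zero in flat regions, a -/+ pair at the edge
```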

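Optional exercises: implement 1 and 2; read 3, 4, and 5

Edge Detection Series 1: Traditional Edge Detection Operators - PaddlePaddle AI Studio

Implement some traditional edge detection operators, such as Roberts, Prewitt, Sobel, Scharr, Kirsch, Robinson, and Laplacian.

1.1 Building a General Edge Detection Operator

- All of these operators are essentially computed by convolution; they differ only in the kernel parameters.

- So we can build one general operator and get a different edge detector just by passing in the corresponding kernels.

- Post-processing also integrates four ways of combining the per-kernel responses into the final edge strength: the sum of absolute values, the square root of the sum of squares, the maximum absolute value, and a weighted sum of absolute values.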
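Given the responses of several directional kernels at the same pixels, the four combination rules differ only in how they reduce over the kernel (channel) axis. A NumPy sketch with made-up responses (the numbers are illustrative, not from a real image), mirroring modes 0-3 of `postprocess` in the code below:

```python
import numpy as np

# Hypothetical responses of 2 kernels (channels) at 3 pixels: shape [C, P]
responses = np.array([[3., -4., 0.],
                      [-4., 3., 5.]])
abs_r = np.abs(responses)

sum_abs = abs_r.sum(axis=0)                                     # mode 0: sum of |response|
l2 = np.sqrt((responses ** 2).sum(axis=0))                      # mode 1: sqrt of sum of squares
max_abs = abs_r.max(axis=0)                                     # mode 2: largest |response|
weights = np.full(responses.shape[0], 1 / responses.shape[0])  # mode 3 default: uniform weights
weighted = np.einsum('cp,c->p', abs_r, weights)                 # mode 3: weighted sum of |response|

print(sum_abs)   # [7. 7. 5.]
print(l2)        # [5. 5. 5.]
print(max_abs)   # [4. 4. 5.]
print(weighted)  # [3.5 3.5 2.5]
```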

```python
import numpy as np
import torch
import torch.nn as nn


class EdgeOP(nn.Module):
    def __init__(self, kernel):
        '''
        kernel: shape(out_channels, in_channels, h, w)
        '''
        super(EdgeOP, self).__init__()
        out_channels, in_channels, h, w = kernel.shape
        self.filter = nn.Conv2d(in_channels=in_channels, out_channels=out_channels,
                                kernel_size=(h, w), padding='same', bias=False)
        # replace the randomly initialized weights with the fixed edge-detection kernels
        self.filter.weight.data = torch.tensor(kernel, dtype=torch.float32)

    @staticmethod
    def postprocess(outputs, mode=0, weight=None):
        '''
        Input: NCHW
        Output: NHW (mode 0-3) or NCHW (mode 4)
        Params:
            mode: output mode (0-4)
                0: sum of absolute values over channels
                1: square root of the sum of squares over channels
                2: maximum absolute value over channels
                3: weighted sum of absolute values over channels
                4: absolute value of every channel, kept separately
            weight: channel weights used when mode == 3 (default: uniform)
        '''
        if mode == 0:
            results = torch.sum(torch.abs(outputs), dim=1)
        elif mode == 1:
            results = torch.sqrt(torch.sum(torch.pow(outputs, 2), dim=1))
        elif mode == 2:
            results = torch.max(torch.abs(outputs), dim=1).values
        elif mode == 3:
            if weight is None:
                C = outputs.shape[1]
                weight = torch.tensor([1 / C] * C, dtype=torch.float32)
            else:
                weight = torch.tensor(weight, dtype=torch.float32)
            results = torch.einsum('nchw, c -> nhw', torch.abs(outputs), weight)
        elif mode == 4:
            results = torch.abs(outputs)
        # clip to the valid pixel range and convert to 8-bit
        return torch.clip(results, 0, 255).to(torch.uint8)

    @torch.no_grad()
    def forward(self, images, mode=0, weight=None):
        outputs = self.filter(images)
        return self.postprocess(outputs, mode, weight)
```

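1.2 Image Edge Detection Test Function

To make testing convenient, we build the following test function, which compares the edge detection results of different operators and different edge-strength computation methods on the same image.

The code is as follows:

```python
import cv2
import numpy as np
import torch
from PIL import Image


def test_edge_det(kernel, img_path='deer.jpeg'):
    img = cv2.imread(img_path, 0)  # read as grayscale, shape [H, W]
    if img is None:
        raise FileNotFoundError(img_path)
    img_tensor = torch.tensor(img, dtype=torch.float32)[None, None, ...]  # shape [1, 1, H, W]
    op = EdgeOP(kernel)
    all_results = []
    # modes 0-3: one combined edge map per mode
    for mode in range(4):
        results = op(img_tensor, mode=mode)
        all_results.append(results.numpy()[0])
    # mode 4: one edge map per kernel (channel)
    results = op(img_tensor, mode=4)
    for result in results.numpy()[0]:
        all_results.append(result)
    # return the individual maps and all of them concatenated side by side
    return all_results, np.concatenate(all_results, 1)
```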

1.3 Roberts Operator

Passing in the Roberts kernels:

```python
# Roberts operator
roberts_kernel = np.array([
    [[[1,  0],
      [0, -1]]],
    [[[0, -1],
      [1,  0]]],
])

_, concat_res = test_edge_det(roberts_kernel)
Image.fromarray(concat_res)
```

1.4 Prewitt Operator

Passing in the Prewitt kernels:

```python
# Prewitt operator
prewitt_kernel = np.array([
    [[[-1, -1, -1],
      [ 0,  0,  0],
      [ 1,  1,  1]]],
    [[[-1,  0,  1],
      [-1,  0,  1],
      [-1,  0,  1]]],
    [[[ 0,  1,  1],
      [-1,  0,  1],
      [-1, -1,  0]]],
    [[[-1, -1,  0],
      [-1,  0,  1],
      [ 0,  1,  1]]],
])

_, concat_res = test_edge_det(prewitt_kernel)
Image.fromarray(concat_res)
```

1.5 Sobel Operator

Passing in the Sobel kernels:

```python
# Sobel operator
sobel_kernel = np.array([
    [[[-1, -2, -1],
      [ 0,  0,  0],
      [ 1,  2,  1]]],
    [[[-1,  0,  1],
      [-2,  0,  2],
      [-1,  0,  1]]],
    [[[ 0,  1,  2],
      [-1,  0,  1],
      [-2, -1,  0]]],
    [[[-2, -1,  0],
      [-1,  0,  1],
      [ 0,  1,  2]]],
])

_, concat_res = test_edge_det(sobel_kernel)
Image.fromarray(concat_res)
```
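The horizontal and vertical Sobel kernels combine smoothing and differencing: each is the outer product of a smoothing vector [1, 2, 1] and a central-difference vector [-1, 0, 1]. Replacing the smoothing vector with [1, 1, 1] gives the Prewitt kernel, and [3, 10, 3] gives the Scharr kernel. A quick NumPy check against the first kernels of each operator (reproduced as literals so the snippet is self-contained):

```python
import numpy as np

smoothing = np.array([1, 2, 1])  # smoothing along the edge
diff = np.array([-1, 0, 1])      # central difference across the edge

sobel_y = np.outer(diff, smoothing)    # differences along rows (responds to horizontal edges)
sobel_x = np.outer(smoothing, diff)    # differences along columns (responds to vertical edges)
prewitt_y = np.outer(diff, [1, 1, 1])  # Prewitt: uniform smoothing
scharr_y = np.outer(diff, [3, 10, 3])  # Scharr: different smoothing weights

print(sobel_y)
print(sobel_x)
```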

1.6 Scharr Operator

Passing in the Scharr kernels:

```python
# Scharr operator
scharr_kernel = np.array([
    [[[ -3, -10,  -3],
      [  0,   0,   0],
      [  3,  10,   3]]],
    [[[ -3,   0,   3],
      [-10,   0,  10],
      [ -3,   0,   3]]],
    [[[  0,   3,  10],
      [ -3,   0,   3],
      [-10,  -3,   0]]],
    [[[-10,  -3,   0],
      [ -3,   0,   3],
      [  0,   3,  10]]],
])

_, concat_res = test_edge_det(scharr_kernel)
Image.fromarray(concat_res)
```

1.7 Kirsch Operator

Passing in the Kirsch kernels:

```python
# Kirsch operator (8 compass directions)
kirsch_kernel = np.array([
    [[[ 5,  5,  5],
      [-3,  0, -3],
      [-3, -3, -3]]],
    [[[-3,  5,  5],
      [-3,  0,  5],
      [-3, -3, -3]]],
    [[[-3, -3,  5],
      [-3,  0,  5],
      [-3, -3,  5]]],
    [[[-3, -3, -3],
      [-3,  0,  5],
      [-3,  5,  5]]],
    [[[-3, -3, -3],
      [-3,  0, -3],
      [ 5,  5,  5]]],
    [[[-3, -3, -3],
      [ 5,  0, -3],
      [ 5,  5, -3]]],
    [[[ 5, -3, -3],
      [ 5,  0, -3],
      [ 5, -3, -3]]],
    [[[ 5,  5, -3],
      [ 5,  0, -3],
      [-3, -3, -3]]],
])

_, concat_res = test_edge_det(kirsch_kernel)
Image.fromarray(concat_res)
```
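The eight Kirsch kernels are one pattern rotated in 45° steps: the outer ring of eight weights (three 5s followed by five -3s) shifts by one position each time, with 0 in the center. The Robinson kernels in the next section follow the same scheme with the ring [1, 2, 1, 0, -1, -2, -1, 0]. The sketch below generates kernels this way; the helper name `compass_kernels` is hypothetical.

```python
import numpy as np


def compass_kernels(ring):
    """Build 8 compass kernels by rotating the 8 outer weights of a 3x3 kernel in 45° steps.

    ring: the 8 outer weights listed clockwise, starting from the top-left corner.
    """
    # (row, col) positions of the outer ring, clockwise from the top-left corner
    pos = [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2), (2, 1), (2, 0), (1, 0)]
    kernels = []
    for shift in range(8):
        k = np.zeros((3, 3), dtype=int)        # center weight stays 0
        rotated = np.roll(ring, shift)         # each shift rotates the pattern 45° clockwise
        for (r, c), value in zip(pos, rotated):
            k[r, c] = value
        kernels.append(k)
    return np.stack(kernels)


kirsch = compass_kernels([5, 5, 5, -3, -3, -3, -3, -3])
robinson = compass_kernels([1, 2, 1, 0, -1, -2, -1, 0])
print(kirsch[1])
print(robinson[1])
```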

1.8 Robinson Operator

Passing in the Robinson kernels:

```python
# Robinson operator (8 compass directions)
robinson_kernel = np.array([
    [[[ 1,  2,  1],
      [ 0,  0,  0],
      [-1, -2, -1]]],
    [[[ 0,  1,  2],
      [-1,  0,  1],
      [-2, -1,  0]]],
    [[[-1,  0,  1],
      [-2,  0,  2],
      [-1,  0,  1]]],
    [[[-2, -1,  0],
      [-1,  0,  1],
      [ 0,  1,  2]]],
    [[[-1, -2, -1],
      [ 0,  0,  0],
      [ 1,  2,  1]]],
    [[[ 0, -1, -2],
      [ 1,  0, -1],
      [ 2,  1,  0]]],
    [[[ 1,  0, -1],
      [ 2,  0, -2],
      [ 1,  0, -1]]],
    [[[ 2,  1,  0],
      [ 1,  0, -1],
      [ 0, -1, -2]]],
])

_, concat_res = test_edge_det(robinson_kernel)
Image.fromarray(concat_res)
```

1.9 Laplacian Operator

Passing in the Laplacian kernels:

```python
# Laplacian operator (8-neighbor and 4-neighbor versions)
laplacian_kernel = np.array([
    [[[1,  1, 1],
      [1, -8, 1],
      [1,  1, 1]]],
    [[[0,  1, 0],
      [1, -4, 1],
      [0,  1, 0]]],
])

_, concat_res = test_edge_det(laplacian_kernel)
Image.fromarray(concat_res)
```

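Edge Detection Series 2: A Simple Canny Edge Detector - PaddlePaddle AI Studio

Implementing a simple Canny edge detection algorithm:

2.1 Algorithm Principle

Canny is a classic image edge detection algorithm that generally consists of the following steps:

1. Blur the image with a Gaussian filter to reduce noise
2. Enhance edges using the image gradient magnitude
3. Thin the edges with non-maximum suppression
4. Binarize the image with two thresholds and link edges to obtain the final result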
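Step 4 is usually done with hysteresis: pixels above the high threshold are strong edges, pixels below the low threshold are discarded, and in-between (weak) pixels are kept only if they are connected, directly or through other weak pixels, to a strong edge. A single pass over the 8 immediate neighbors, as in the NumPy version in 2.3, only catches weak pixels directly adjacent to a strong one. The sketch below propagates connectivity with a breadth-first search instead; the function name `hysteresis` and the toy input are hypothetical.

```python
from collections import deque

import numpy as np


def hysteresis(mag, low, high):
    """Keep strong pixels (> high) plus weak pixels (>= low) 8-connected to a strong one."""
    strong = mag > high
    weak = mag >= low
    edges = strong.copy()
    queue = deque(zip(*np.nonzero(strong)))  # start the search from every strong pixel
    H, W = mag.shape
    while queue:
        i, j = queue.popleft()
        for di in (-1, 0, 1):
            for dj in (-1, 0, 1):
                ni, nj = i + di, j + dj
                if 0 <= ni < H and 0 <= nj < W and weak[ni, nj] and not edges[ni, nj]:
                    edges[ni, nj] = True  # weak pixel reached from a strong edge: keep it
                    queue.append((ni, nj))
    return edges


# A strong pixel (9) followed by a chain of weak pixels (4),
# plus an isolated weak pixel that is not connected to any strong pixel
mag = np.array([[9, 4, 4, 4, 0, 0, 4]], dtype=float)
print(hysteresis(mag, low=3, high=8).astype(int))  # [[1 1 1 1 0 0 0]]
```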

2.2 Fast Canny Edge Detection with OpenCV

In OpenCV, Canny edge detection only needs the cv2.Canny function:

```python
import cv2
import numpy as np
from PIL import Image

lower = 30  # low threshold
upper = 70  # high threshold
img_path = 'deer.jpeg'  # path of the test image
gray = cv2.imread(img_path, 0)  # read as a grayscale image
edge = cv2.Canny(gray, lower, upper)  # Canny edge detection
contrast = np.concatenate([edge, gray], 1)  # place the edge map and the input side by side
Image.fromarray(contrast)  # display the image
```

2.3 A Simple Canny Detector with NumPy

Code:

```python
import math

import numpy as np


def smooth(img_gray, kernel_size=5):
    """
    Gaussian smoothing.
    For a (2k+1)x(2k+1) Gaussian filter (kernel_size = 2k+1, 0-based indices i, j),
    each element is:
        H[i, j] = (1 / (2*pi*sigma**2)) * exp(-((i-k)**2 + (j-k)**2) / (2*sigma**2))
    """
    sigma1 = sigma2 = 1.4
    k = kernel_size // 2  # index of the kernel center
    gau_sum = 0
    gaussian = np.zeros([kernel_size, kernel_size])
    for i in range(kernel_size):
        for j in range(kernel_size):
            gaussian[i, j] = math.exp(
                (-1 / (2 * sigma1 * sigma2)) * (np.square(i - k) + np.square(j - k))
            ) / (2 * math.pi * sigma1 * sigma2)
            gau_sum = gau_sum + gaussian[i, j]
    # normalize so the weights sum to 1
    gaussian = gaussian / gau_sum

    # Gaussian filtering; zero-pad by k so the output has the same size as the input
    img_gray = np.pad(img_gray, ((k, k), (k, k)), mode='constant')
    W, H = img_gray.shape
    new_gray = np.zeros([W - kernel_size + 1, H - kernel_size + 1])
    for i in range(W - kernel_size + 1):
        for j in range(H - kernel_size + 1):
            new_gray[i, j] = np.sum(img_gray[i:i + kernel_size, j:j + kernel_size] * gaussian)
    return new_gray


def gradients(new_gray):
    """
    :type: image after smoothing
    :rtype:
        dx: gradient in the x direction (along columns)
        dy: gradient in the y direction (along rows)
        M: gradient magnitude
        theta: gradient direction
    """
    # replicate the last row/column so the forward differences keep the input size
    padded = np.pad(new_gray, ((0, 1), (0, 1)), mode='edge')
    dx = padded[:-1, 1:] - padded[:-1, :-1]  # I(i, j+1) - I(i, j)
    dy = padded[1:, :-1] - padded[:-1, :-1]  # I(i+1, j) - I(i, j)
    # use the gradient magnitude as the edge strength
    M = np.sqrt(np.square(dx) + np.square(dy))
    # gradient direction (not used by NMS below, kept for reference)
    theta = np.arctan2(dy, dx)
    return dx, dy, M, theta


def NMS(M, dx, dy):
    d = np.copy(M)
    W, H = M.shape
    NMS = np.copy(d)
    NMS[0, :] = NMS[W-1, :] = NMS[:, 0] = NMS[:, H-1] = 0
    for i in range(1, W-1):
        for j in range(1, H-1):
            # a point with zero gradient cannot be an edge point
            if M[i, j] == 0:
                NMS[i, j] = 0
            else:
                gradX = dx[i, j]     # x-direction derivative at the current point
                gradY = dy[i, j]     # y-direction derivative at the current point
                gradTemp = d[i, j]   # gradient magnitude at the current point

                # |gradY| is larger: the gradient points mostly along y (rows)
                if np.abs(gradY) > np.abs(gradX):
                    weight = np.abs(gradX) / np.abs(gradY)
                    grad2 = d[i-1, j]
                    grad4 = d[i+1, j]
                    # x and y derivatives have the same sign
                    # g1 g2
                    #    c
                    #    g4 g3
                    if gradX * gradY > 0:
                        grad1 = d[i-1, j-1]
                        grad3 = d[i+1, j+1]
                    # x and y derivatives have opposite signs
                    #    g2 g1
                    #    c
                    # g3 g4
                    else:
                        grad1 = d[i-1, j+1]
                        grad3 = d[i+1, j-1]
                # |gradX| is larger: the gradient points mostly along x (columns)
                else:
                    weight = np.abs(gradY) / np.abs(gradX)
                    grad2 = d[i, j-1]
                    grad4 = d[i, j+1]
                    # x and y derivatives have the same sign
                    # g1
                    # g2 c  g4
                    #       g3
                    if gradX * gradY > 0:
                        grad1 = d[i-1, j-1]
                        grad3 = d[i+1, j+1]
                    # x and y derivatives have opposite signs
                    #       g3
                    # g2 c  g4
                    # g1
                    else:
                        grad1 = d[i+1, j-1]
                        grad3 = d[i-1, j+1]

                # interpolate the gradient at the two points along the gradient direction
                gradTemp1 = weight * grad1 + (1 - weight) * grad2
                gradTemp2 = weight * grad3 + (1 - weight) * grad4
                # the current pixel is a local maximum: possibly an edge point
                if gradTemp >= gradTemp1 and gradTemp >= gradTemp2:
                    NMS[i, j] = gradTemp
                else:
                    # cannot be an edge point
                    NMS[i, j] = 0
    return NMS


def double_threshold(NMS, threshold1, threshold2):
    # pad by one pixel so every pixel has 8 neighbors
    NMS = np.pad(NMS, ((1, 1), (1, 1)), mode='constant')
    W, H = NMS.shape
    DT = np.zeros([W, H])
    # low and high thresholds, relative to the maximum response
    TL = threshold1 * np.max(NMS)
    TH = threshold2 * np.max(NMS)
    for i in range(1, W-1):
        for j in range(1, H-1):
            if NMS[i, j] < TL:
                # below the low threshold: not an edge
                DT[i, j] = 0
            elif NMS[i, j] > TH:
                # above the high threshold: strong edge
                DT[i, j] = 1
            elif (NMS[i-1:i+2, j-1:j+2] > TH).any():
                # between the thresholds: keep it only if a strong edge is in its 8-neighborhood
                DT[i, j] = 1
    # drop the padding so the output matches the input size
    return DT[1:-1, 1:-1]


def canny(gray, threshold1, threshold2, kernel_size=5):
    gray_smooth = smooth(gray, kernel_size)             # 1. Gaussian smoothing
    dx, dy, M, theta = gradients(gray_smooth)           # 2. gradient magnitude and direction
    nms = NMS(M, dx, dy)                                # 3. non-maximum suppression
    DT = double_threshold(nms, threshold1, threshold2)  # 4. double threshold + edge linking
    return DT
```

```python
import cv2
import numpy as np
from PIL import Image

lower = 0.1  # low threshold (fraction of the maximum NMS response)
upper = 0.3  # high threshold (fraction of the maximum NMS response)
img_path = 'deer.jpeg'  # path of the test image
gray = cv2.imread(img_path, 0)  # read as a grayscale image
edge = canny(gray, lower, upper)  # Canny edge detection
edge = (edge * 255).astype(np.uint8)  # map {0, 1} to {0, 255}
contrast = np.concatenate([edge, gray], 1)  # place the edge map and the input side by side
Image.fromarray(contrast)  # display the image
```

2.4 A Canny Edge Detector in torch

Code reference (based on the project DCurro/CannyEdgePytorch):

```python
import numpy as np
import torch
import torch.nn as nn
from scipy.signal.windows import gaussian  # older SciPy also exposed this as scipy.signal.gaussian


class Net(nn.Module):
    def __init__(self, threshold=10.0, use_cuda=False):
        super(Net, self).__init__()
        self.threshold = threshold
        self.use_cuda = use_cuda  # kept for compatibility; tensors follow the input's device

        # Separable Gaussian blur: a 1x5 horizontal pass followed by a 5x1 vertical pass
        filter_size = 5
        generated_filters = gaussian(filter_size, std=1.0).reshape([1, filter_size])
        self.gaussian_filter_horizontal = nn.Conv2d(in_channels=1, out_channels=1, kernel_size=(1, filter_size), padding=(0, filter_size // 2))
        self.gaussian_filter_horizontal.weight.data.copy_(torch.from_numpy(generated_filters))
        self.gaussian_filter_horizontal.bias.data.copy_(torch.from_numpy(np.array([0.0])))
        self.gaussian_filter_vertical = nn.Conv2d(in_channels=1, out_channels=1, kernel_size=(filter_size, 1), padding=(filter_size // 2, 0))
        self.gaussian_filter_vertical.weight.data.copy_(torch.from_numpy(generated_filters.T))
        self.gaussian_filter_vertical.bias.data.copy_(torch.from_numpy(np.array([0.0])))

        # Sobel filters for the horizontal and vertical gradients
        sobel_filter = np.array([[1, 0, -1],
                                 [2, 0, -2],
                                 [1, 0, -1]])
        self.sobel_filter_horizontal = nn.Conv2d(in_channels=1, out_channels=1, kernel_size=sobel_filter.shape, padding=sobel_filter.shape[0] // 2)
        self.sobel_filter_horizontal.weight.data.copy_(torch.from_numpy(sobel_filter))
        self.sobel_filter_horizontal.bias.data.copy_(torch.from_numpy(np.array([0.0])))
        self.sobel_filter_vertical = nn.Conv2d(in_channels=1, out_channels=1, kernel_size=sobel_filter.shape, padding=sobel_filter.shape[0] // 2)
        self.sobel_filter_vertical.weight.data.copy_(torch.from_numpy(sobel_filter.T))
        self.sobel_filter_vertical.bias.data.copy_(torch.from_numpy(np.array([0.0])))

        # Directional filters for NMS: each computes "center - neighbor" for one of 8 directions
        # (filters were flipped manually)
        filter_0 = np.array([[0, 0, 0], [0, 1, -1], [0, 0, 0]])
        filter_45 = np.array([[0, 0, 0], [0, 1, 0], [0, 0, -1]])
        filter_90 = np.array([[0, 0, 0], [0, 1, 0], [0, -1, 0]])
        filter_135 = np.array([[0, 0, 0], [0, 1, 0], [-1, 0, 0]])
        filter_180 = np.array([[0, 0, 0], [-1, 1, 0], [0, 0, 0]])
        filter_225 = np.array([[-1, 0, 0], [0, 1, 0], [0, 0, 0]])
        filter_270 = np.array([[0, -1, 0], [0, 1, 0], [0, 0, 0]])
        filter_315 = np.array([[0, 0, -1], [0, 1, 0], [0, 0, 0]])
        all_filters = np.stack([filter_0, filter_45, filter_90, filter_135, filter_180, filter_225, filter_270, filter_315])
        self.directional_filter = nn.Conv2d(in_channels=1, out_channels=8, kernel_size=filter_0.shape, padding=filter_0.shape[-1] // 2)
        self.directional_filter.weight.data.copy_(torch.from_numpy(all_filters[:, None, ...]))
        self.directional_filter.bias.data.copy_(torch.from_numpy(np.zeros(shape=(all_filters.shape[0],))))

    def forward(self, img):
        # split the RGB channels; each has shape [1, 1, H, W]
        img_r = img[:, 0:1]
        img_g = img[:, 1:2]
        img_b = img[:, 2:3]

        # Gaussian blur of each channel (horizontal pass, then vertical pass)
        blurred_img_r = self.gaussian_filter_vertical(self.gaussian_filter_horizontal(img_r))
        blurred_img_g = self.gaussian_filter_vertical(self.gaussian_filter_horizontal(img_g))
        blurred_img_b = self.gaussian_filter_vertical(self.gaussian_filter_horizontal(img_b))
        blurred_img = torch.stack([blurred_img_r, blurred_img_g, blurred_img_b], dim=1)
        blurred_img = torch.stack([torch.squeeze(blurred_img)])

        # Sobel gradients of each channel
        grad_x_r = self.sobel_filter_horizontal(blurred_img_r)
        grad_y_r = self.sobel_filter_vertical(blurred_img_r)
        grad_x_g = self.sobel_filter_horizontal(blurred_img_g)
        grad_y_g = self.sobel_filter_vertical(blurred_img_g)
        grad_x_b = self.sobel_filter_horizontal(blurred_img_b)
        grad_y_b = self.sobel_filter_vertical(blurred_img_b)

        # COMPUTE THICK EDGES: gradient magnitude summed over the three channels
        grad_mag = torch.sqrt(grad_x_r**2 + grad_y_r**2)
        grad_mag += torch.sqrt(grad_x_g**2 + grad_y_g**2)
        grad_mag += torch.sqrt(grad_x_b**2 + grad_y_b**2)
        # gradient orientation in degrees, shifted to [0, 360] and rounded to multiples of 45
        grad_orientation = torch.atan2(grad_y_r + grad_y_g + grad_y_b, grad_x_r + grad_x_g + grad_x_b) * (180.0 / np.pi)
        grad_orientation += 180.0
        grad_orientation = torch.round(grad_orientation / 45.0) * 45.0

        # THIN EDGES (NON-MAX SUPPRESSION)
        all_filtered = self.directional_filter(grad_mag)  # [1, 8, H, W]
        inidices_positive = (grad_orientation / 45) % 8
        inidices_negative = ((grad_orientation / 45) + 4) % 8

        height = inidices_positive.size()[2]
        width = inidices_positive.size()[3]
        pixel_count = height * width
        # flat pixel indices, created on the input's device (replaces torch.cuda.FloatTensor)
        pixel_range = torch.arange(pixel_count, dtype=torch.float32, device=grad_mag.device)

        # for each pixel, pick the directional response along and against its gradient
        indices = (inidices_positive.view(-1).data * pixel_count + pixel_range).squeeze()
        channel_select_filtered_positive = all_filtered.view(-1)[indices.long()].view(1, height, width)
        indices = (inidices_negative.view(-1).data * pixel_count + pixel_range).squeeze()
        channel_select_filtered_negative = all_filtered.view(-1)[indices.long()].view(1, height, width)
        channel_select_filtered = torch.stack([channel_select_filtered_positive, channel_select_filtered_negative])

        # keep a pixel only if it is larger than both neighbors along its gradient direction
        is_max = channel_select_filtered.min(dim=0)[0] > 0.0
        is_max = torch.unsqueeze(is_max, dim=0)
        thin_edges = grad_mag.clone()
        thin_edges[is_max == 0] = 0.0

        # THRESHOLD
        thresholded = thin_edges.clone()
        thresholded[thin_edges < self.threshold] = 0.0
        early_threshold = grad_mag.clone()
        early_threshold[grad_mag < self.threshold] = 0.0

        assert grad_mag.size() == grad_orientation.size() == thin_edges.size() == thresholded.size() == early_threshold.size()
        return blurred_img, grad_mag, grad_orientation, thin_edges, thresholded, early_threshold
```

```python
import imageio.v2 as imageio  # imageio's v2 API (imread/imwrite)
import numpy as np
import torch


def to_uint8(arr):
    """Scale an array to [0, 255] and convert it to uint8 so it can be saved as PNG."""
    arr = arr.astype(np.float64)
    arr = arr - arr.min()
    if arr.max() > 0:
        arr = arr / arr.max()
    return (arr * 255).astype(np.uint8)


def canny(raw_img, use_cuda=False):
    img = torch.from_numpy(raw_img.transpose((2, 0, 1)))  # HWC -> CHW
    batch = torch.stack([img]).float()                    # add a batch dimension: [1, 3, H, W]
    net = Net(threshold=3.0, use_cuda=use_cuda)
    if use_cuda:
        net.cuda()
        batch = batch.cuda()
    net.eval()
    with torch.no_grad():
        blurred_img, grad_mag, grad_orientation, thin_edges, thresholded, early_threshold = net(batch)
    imageio.imwrite('gradient_magnitude.png', to_uint8(grad_mag.cpu().numpy()[0, 0]))
    imageio.imwrite('thin_edges.png', to_uint8(thresholded.cpu().numpy()[0, 0]))
    imageio.imwrite('final.png', to_uint8((thresholded.cpu().numpy()[0, 0] > 0.0).astype(float)))
    imageio.imwrite('thresholded.png', to_uint8(early_threshold.cpu().numpy()[0, 0]))


if __name__ == '__main__':
    img = imageio.imread('deer.jpeg') / 255.0  # RGB image scaled to [0, 1]
    canny(img, use_cuda=False)
```
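The torch detector blurs with a 1×5 horizontal convolution followed by a 5×1 vertical one instead of a single 5×5 convolution. This works because the 2D Gaussian is separable: it is the outer product of two 1D Gaussians, so the two passes give the same result with 5 + 5 instead of 25 multiplications per pixel. A NumPy check, as a sketch: the 1D window is built by hand here (equivalent in shape to an unnormalized Gaussian window such as `scipy.signal.windows.gaussian` produces), the test image is random, and the helper name `correlate_valid` is hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def correlate_valid(img, k):
    """Valid 2D cross-correlation via sliding windows."""
    return np.einsum('ijuv,uv->ij', sliding_window_view(img, k.shape), k)


size, std = 5, 1.0
x = np.arange(size) - size // 2
g1d = np.exp(-x ** 2 / (2 * std ** 2))  # unnormalized 1D Gaussian window
g2d = np.outer(g1d, g1d)                # 5x5 2D Gaussian kernel

rng = np.random.default_rng(0)
img = rng.random((10, 10))

full = correlate_valid(img, g2d)                               # one 5x5 pass
two_pass = correlate_valid(correlate_valid(img, g1d[None, :]), # 1x5 horizontal pass
                           g1d[:, None])                       # then 5x1 vertical pass
print(np.allclose(full, two_pass))  # True
```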

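Edge Detection Series 3: [HED] Holistically-Nested Edge Detection - PaddlePaddle AI Studio

A reproduction of the paper Holistically-Nested Edge Detection, published at ICCV 2015

An end-to-end edge detection model based on deep learning

Reproduction results:

Edge Detection Series 4: [RCF] Edge Detection with Richer Convolutional Features - PaddlePaddle AI Studio (baidu.com)

A reproduction of the paper Richer Convolutional Features for Edge Detection, published at CVPR 2017

An edge detection model based on richer convolutional features (RCF).

Reproduction results:

Edge Detection Series 5: [CED] An HED Model with an Added Backward Refinement Path - PaddlePaddle AI Studio (baidu.com)

The Crisp Edge Detection (CED) model is another improvement on the HED model introduced earlier.

Reproduction results: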

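Summary

I feel I wrote quite a lot today, and reproducing the code was exhausting. A brief summary of this experiment: as the opening experiment on convolutional neural networks, it covered the most basic knowledge of 2D convolution. We reproduced and discussed in detail the 2D convolution operation, the implementation of the operator, and its parameter count and computation; reviewed receptive fields, convolution variants, stride, and zero padding; and used the custom 2D convolution operator defined earlier to perform a simple image edge detection. Then, by reproducing the Paddle code in torch, I learned the basic steps of edge detection and several edge detection operators, and I can at least read the code and fix its errors. In the optional exercises I implemented a Canny edge detector in three ways (OpenCV, NumPy, and torch) and learned about the network structures of RCF, HED, and CED, which I will implement when I have time. I feel I gained quite a lot. I occasionally slacked off before, but work that deserves to be done well should be done well.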

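References:

How to use the Markdown editor (with a thorough explanation of how to write math formulas)

NNDL Experiment 5 (Part 1) - HBU_DAVID - cnblogs (cnblogs.com)

NNDL Experiment 5 (Part 2) - HBU_DAVID - cnblogs (cnblogs.com)

Convolutional Neural Networks - Dive into Deep Learning 2.0.0-beta1 documentation (d2l.ai)

Modern Convolutional Neural Networks - Dive into Deep Learning 2.0.0-beta1 documentation (d2l.ai)

Edge Detection Series 1: Traditional Edge Detection Operators

Edge Detection Series 2: A Simple Canny Edge Detector

Edge Detection Series 3: [HED] Holistically-Nested Edge Detection

Edge Detection Series 4: [RCF] Edge Detection with Richer Convolutional Features

Edge Detection Series 5: [CED] An HED Model with an Added Backward Refinement Path

This is the instructor's blog:

NNDL Experiment 6: Convolutional Neural Networks (1) Convolution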