Recurrent Neural Networks (RNN) - PyTorch

Notes on the recurrent neural network chapters of Dive into Deep Learning (动手学深度学习)

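- I. Text preprocessing
  - 1. Reading the dataset
  - 2. Tokenization
  - 3. Building the vocabulary
- II. Reading long sequence data
  - 1. Random sampling
  - 2. Sequential partitioning
- III. RNN implementation from scratch
  - 1. Prediction
  - 2. Gradient clipping
  - 3. Training
- IV. Concise RNN implementation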

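I. Text preprocessing

Common preprocessing steps:

- Load the text into memory as a string.
- Split the string into tokens (such as words or characters).
- Build a vocabulary that maps each token to a numerical index.
- Convert the text into a sequence of numerical indices so that the model can operate on it.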
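The four steps can be sketched end to end on a tiny in-memory string. This is only an illustration in plain Python: the sample text and the variable names are made up here, and the real dataset and the `Vocab` class are introduced in the sections that follow.

```python
import re
from collections import Counter

# Step 1: the text is in memory as a string (a made-up sample, not the real dataset).
raw_text = "The Time Machine, by H. G. Wells\nThe Time Traveller was expounding"

# Step 2: clean each line and split it into word tokens.
lines = [re.sub('[^A-Za-z]+', ' ', line).strip().lower()
         for line in raw_text.split('\n')]
tokens = [line.split() for line in lines]

# Step 3: build a vocabulary; index 0 is reserved for unknown tokens,
# the remaining indices are assigned by descending frequency.
counter = Counter(token for line in tokens for token in line)
idx_to_token = ['<unk>'] + [tok for tok, _ in counter.most_common()]
token_to_idx = {tok: idx for idx, tok in enumerate(idx_to_token)}

# Step 4: convert every line into a list of integer indices.
indices = [[token_to_idx.get(tok, 0) for tok in line] for line in tokens]

print(tokens[0])
print(indices[0])
print(indices[1])
```

The two most frequent words ("the" and "time") receive the smallest indices after the reserved index 0, and both lines become plain integer lists that a model can consume.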

1. Reading the dataset

    import re
    from d2l import torch as d2l

    d2l.DATA_HUB['time_machine'] = (d2l.DATA_URL + 'timemachine.txt',
                                    '090b5e7e70c295757f55df93cb0a180b9691891a')

    def read_time_machine():
        """Load the Time Machine dataset into a list of text lines."""
        with open(d2l.download('time_machine'), 'r') as f:
            lines = f.readlines()
        # Replace every run of non-letters with a space, strip the
        # surrounding whitespace, and convert to lowercase
        return [re.sub('[^A-Za-z]+', ' ', line).strip().lower() for line in lines]

    lines = read_time_machine()
    print(f'# total number of text lines: {len(lines)}')
    print(lines[0])
    print(lines[10])

2. Tokenization

A token is the basic unit of text. The function below takes the list of text lines and returns a list of token lists, one per line, in which every token is a string.

    def tokenize(lines, token='word'):
        """Split text lines into word or character tokens."""
        if token == 'word':
            return [line.split() for line in lines]
        elif token == 'char':
            return [list(line) for line in lines]
        else:
            print('Error: unknown token type: ' + token)

    tokens = tokenize(lines)
    print(tokens[0])

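3. Building the vocabulary

Build a dictionary, usually called the vocabulary, that maps string tokens to numerical indices starting from 0.

First merge all documents in the training set and count the occurrences of each unique token; the resulting statistics are called the corpus.

Then assign a numerical index to each unique token according to its frequency. Rarely occurring tokens are often removed, which reduces complexity.

Any token that does not exist in the corpus or has been removed is mapped to a special unknown token, `<unk>`.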

    import collections

    # Vocabulary: maps string tokens to indices starting from 0
    class Vocab:
        def __init__(self, tokens=None, min_freq=0, reserved_tokens=None):
            if tokens is None:
                tokens = []
            if reserved_tokens is None:
                reserved_tokens = []
            # Sort the tokens by descending frequency
            counter = count_corpus(tokens)
            self.token_freqs = sorted(counter.items(),
                                      key=lambda x: x[1], reverse=True)
            # The unknown token has index 0
            self.unk, uniq_tokens = 0, ['<unk>'] + reserved_tokens
            uniq_tokens += [token for token, freq in self.token_freqs
                            if freq >= min_freq and token not in uniq_tokens]
            self.idx_to_token, self.token_to_idx = [], dict()
            for token in uniq_tokens:
                self.idx_to_token.append(token)
                self.token_to_idx[token] = len(self.idx_to_token) - 1

        def __len__(self):
            return len(self.idx_to_token)

        def __getitem__(self, tokens):
            # A single token returns one index; a list or tuple returns a list of indices
            if not isinstance(tokens, (list, tuple)):
                return self.token_to_idx.get(tokens, self.unk)
            return [self.__getitem__(token) for token in tokens]

        def to_tokens(self, indices):
            if not isinstance(indices, (list, tuple)):
                return self.idx_to_token[indices]
            return [self.idx_to_token[index] for index in indices]

    def count_corpus(tokens):
        """Count token frequencies."""
        # Flatten a list of token lists into a single list of tokens
        if len(tokens) == 0 or isinstance(tokens[0], list):
            tokens = [token for line in tokens for token in line]
        return collections.Counter(tokens)

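Build the vocabulary with the Time Machine dataset as the corpus, then print the first few high-frequency tokens and their indices:

    vocab = Vocab(tokens)
    print(list(vocab.token_to_idx.items())[:10])

Convert each text line into a list of numerical indices:

    for i in [0, 10]:
        print('text:', tokens[i])
        print('indices:', vocab[tokens[i]])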
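To make the roles of `min_freq` and the unknown token concrete, here is a minimal sketch on a flat toy token list. `build_vocab` is a hypothetical helper written for this illustration; it mirrors the frequency-ordering logic described above but is not the `Vocab` class itself.

```python
from collections import Counter

def build_vocab(tokens, min_freq=0):
    """Hypothetical helper: token -> index mapping with '<unk>' at index 0."""
    counter = Counter(tokens)
    # Sort by descending frequency, then keep tokens that are frequent enough.
    token_freqs = sorted(counter.items(), key=lambda x: x[1], reverse=True)
    idx_to_token = ['<unk>'] + [tok for tok, freq in token_freqs if freq >= min_freq]
    return {tok: idx for idx, tok in enumerate(idx_to_token)}

tokens = ['the', 'time', 'the', 'machine', 'the', 'time']
full = build_vocab(tokens)                # keeps every token
pruned = build_vocab(tokens, min_freq=2)  # drops 'machine', which occurs once

print(full)
print(pruned)
# A pruned token and a never-seen token both fall back to index 0.
print(pruned.get('machine', 0), pruned.get('traveller', 0))
```

Raising `min_freq` shrinks the vocabulary at the cost of mapping more words to the unknown index.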

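II. Reading long sequence data

1. Random sampling

    import random
    import torch

    def seq_data_iter_random(corpus, batch_size, num_steps):
        """
        Generate minibatches of subsequences using random sampling.
        corpus: the original long sequence
        batch_size: number of subsequence samples in each minibatch
        num_steps: predefined number of time steps per subsequence (the length of one sample)
        """
        # Start from a random offset so that different epochs see different partitions
        corpus = corpus[random.randint(0, num_steps - 1):]
        # Subtract 1 because the labels are shifted by one token
        num_subseqs = (len(corpus) - 1) // num_steps  # number of subsequences
        # Starting indices of the subsequences of length num_steps
        initial_indices = list(range(0, num_subseqs * num_steps, num_steps))
        random.shuffle(initial_indices)

        def data(pos):
            # Return the sequence of length num_steps starting at pos
            return corpus[pos:pos + num_steps]

        num_batches = num_subseqs // batch_size
        for i in range(0, batch_size * num_batches, batch_size):
            # initial_indices holds the shuffled starting indices of the subsequences
            initial_indices_per_batch = initial_indices[i: i + batch_size]
            X = [data(j) for j in initial_indices_per_batch]
            Y = [data(j + 1) for j in initial_indices_per_batch]
            yield torch.tensor(X), torch.tensor(Y)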

The following generates a sequence from 0 to 34. With 5 time steps, it yields ⌊(35 − 1)/5⌋ = 6 feature-label subsequence pairs; with a minibatch size of 2, these form 3 minibatches.

    my_seq = list(range(35))
    for X, Y in seq_data_iter_random(my_seq, batch_size=2, num_steps=5):
        print('X: ', X, '\nY:', Y)

    # output
    X:  tensor([[15, 16, 17, 18, 19],
            [20, 21, 22, 23, 24]])
    Y: tensor([[16, 17, 18, 19, 20],
            [21, 22, 23, 24, 25]])
    X:  tensor([[ 0,  1,  2,  3,  4],
            [25, 26, 27, 28, 29]])
    Y: tensor([[ 1,  2,  3,  4,  5],
            [26, 27, 28, 29, 30]])
    X:  tensor([[ 5,  6,  7,  8,  9],
            [10, 11, 12, 13, 14]])
    Y: tensor([[ 6,  7,  8,  9, 10],
            [11, 12, 13, 14, 15]])
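The counts in this example can be checked with a few lines of arithmetic. `count_batches` is a hypothetical helper that repeats only the integer divisions of the random sampler; the `offset` argument stands in for the random number of tokens discarded at the start.

```python
def count_batches(seq_len, batch_size, num_steps, offset=0):
    """Number of subsequences and minibatches produced by random sampling."""
    usable = seq_len - offset                  # tokens left after dropping the offset
    num_subseqs = (usable - 1) // num_steps    # minus 1: the last token is only a label
    num_batches = num_subseqs // batch_size
    return num_subseqs, num_batches

print(count_batches(35, batch_size=2, num_steps=5))            # no offset
print(count_batches(35, batch_size=2, num_steps=5, offset=4))  # largest possible offset
```

For this sequence length, every possible offset (0 to 4) still leaves 6 subsequences and 3 minibatches; with other lengths, a larger offset can cost one subsequence.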

2. Sequential partitioning

    def seq_data_iter_sequential(corpus, batch_size, num_steps):
        """Generate minibatches of subsequences using sequential partitioning."""
        # Start partitioning the sequence from a random offset
        offset = random.randint(0, num_steps)
        num_tokens = ((len(corpus) - offset - 1) // batch_size) * batch_size
        Xs = torch.tensor(corpus[offset: offset + num_tokens]).reshape(batch_size, -1)
        Ys = torch.tensor(corpus[offset + 1: offset + 1 + num_tokens]).reshape(batch_size, -1)
        num_batches = Xs.shape[1] // num_steps
        for i in range(0, num_steps * num_batches, num_steps):
            X = Xs[:, i: i + num_steps]
            Y = Ys[:, i: i + num_steps]
            yield X, Y

    for X, Y in seq_data_iter_sequential(my_seq, batch_size=2, num_steps=5):
        print('X: ', X, '\nY:', Y)

    # output
    X:  tensor([[ 4,  5,  6,  7,  8],
            [19, 20, 21, 22, 23]])
    Y: tensor([[ 5,  6,  7,  8,  9],
            [20, 21, 22, 23, 24]])
    X:  tensor([[ 9, 10, 11, 12, 13],
            [24, 25, 26, 27, 28]])
    Y: tensor([[10, 11, 12, 13, 14],
            [25, 26, 27, 28, 29]])
    X:  tensor([[14, 15, 16, 17, 18],
            [29, 30, 31, 32, 33]])
    Y: tensor([[15, 16, 17, 18, 19],
            [30, 31, 32, 33, 34]])
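What distinguishes sequential partitioning is that row r of each minibatch continues exactly where row r of the previous minibatch stopped. The sketch below re-implements the partitioning with plain lists and a fixed offset (an assumption made for reproducibility; the function above draws the offset at random) and checks that property.

```python
def sequential_batches(corpus, batch_size, num_steps, offset=0):
    """List-based sketch of sequential partitioning with a fixed offset."""
    num_tokens = ((len(corpus) - offset - 1) // batch_size) * batch_size
    row_len = num_tokens // batch_size
    xs = corpus[offset: offset + num_tokens]
    ys = corpus[offset + 1: offset + 1 + num_tokens]
    # Split the long sequence into batch_size contiguous rows.
    x_rows = [xs[r * row_len:(r + 1) * row_len] for r in range(batch_size)]
    y_rows = [ys[r * row_len:(r + 1) * row_len] for r in range(batch_size)]
    for i in range(0, (row_len // num_steps) * num_steps, num_steps):
        yield ([row[i:i + num_steps] for row in x_rows],
               [row[i:i + num_steps] for row in y_rows])

batches = list(sequential_batches(list(range(35)), batch_size=2, num_steps=5, offset=4))
for (X, _), (X_next, _) in zip(batches, batches[1:]):
    # Row r of the next minibatch starts right after row r of the current one.
    assert all(row[-1] + 1 == nxt[0] for row, nxt in zip(X, X_next))
print(batches[0][0])
```

With `offset=4` the first minibatch matches the printed output above. This adjacency is what allows the hidden state to be carried from one minibatch to the next during training.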

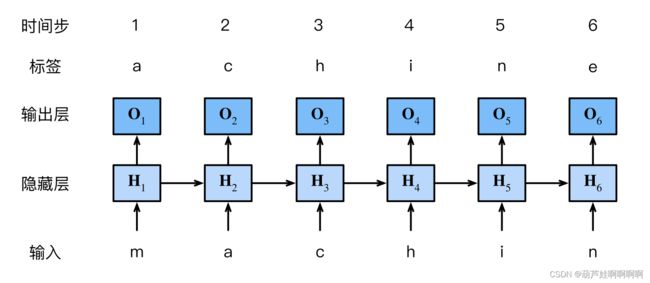

III. RNN implementation from scratch

Recall the multilayer perceptron (MLP) and compare it with the recurrent neural network.

MLP without hidden state: a single hidden layer whose output is $\pmb{H}$.

$$\pmb{H}=\phi(\pmb{X}\pmb{W}_{xh}+\pmb{b}_h) \\ \pmb{O}=\pmb{H}\pmb{W}_{hq}+\pmb{b}_q$$

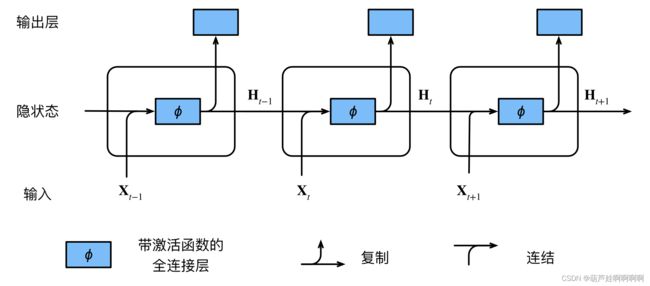

Recurrent neural network with hidden state

The hidden variable at time step $t$ is computed from the input at time step $t$ together with the hidden variable at time step $t-1$:

$$\pmb{H}_t=\phi(\pmb{X}_t\pmb{W}_{xh}+\pmb{H}_{t-1}\pmb{W}_{hh}+\pmb{b}_h) \\ \pmb{O}_t=\pmb{H}_t\pmb{W}_{hq}+\pmb{b}_q$$

The recurrent neural network uses the same model parameters at every time step. Its parameter cost therefore does not grow with the number of time steps.
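The parameter sharing can be seen in a small sketch of the hidden-state recurrence for a single sample, written in plain Python with $\phi = \tanh$. The weight values and the helper names `rnn_step` and `run` are arbitrary choices for this illustration.

```python
import math

def rnn_step(x, h, W_xh, W_hh, b_h):
    """One time step for a single sample: tanh(x W_xh + h W_hh + b_h)."""
    return [math.tanh(sum(x[i] * W_xh[i][j] for i in range(len(x)))
                      + sum(h[k] * W_hh[k][j] for k in range(len(h)))
                      + b_h[j])
            for j in range(len(b_h))]

W_xh = [[0.1, -0.2], [0.0, 0.3], [0.2, 0.1]]  # shape (num_inputs=3, num_hiddens=2)
W_hh = [[0.5, 0.0], [0.0, 0.5]]               # shape (num_hiddens, num_hiddens)
b_h = [0.0, 0.0]

def run(inputs):
    h = [0.0, 0.0]                 # initial hidden state H_0
    for x in inputs:               # the same W_xh, W_hh, b_h are reused at every step
        h = rnn_step(x, h, W_xh, W_hh, b_h)
    return h

num_params = sum(len(r) for r in W_xh) + sum(len(r) for r in W_hh) + len(b_h)
h_short = run([[1, 0, 0]] * 2)     # 2 time steps
h_long = run([[1, 0, 0]] * 50)     # 50 time steps, same parameters
print(num_params)
print(h_short, h_long)
```

Whether the sequence has 2 or 50 steps, the loop touches the same 12 hidden-layer parameters; only the amount of computation grows with the sequence length.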

Cross-entropy loss, averaged over the $n$ tokens of a sequence:

$$-\frac{1}{n}\sum_{t=1}^{n}\log P(x_t \mid x_{t-1},\ldots,x_1)$$

Measure of model quality: perplexity

$$\text{perplexity} = \exp\left(-\frac{1}{n}\sum_{t=1}^{n}\log P(x_t \mid x_{t-1},\ldots,x_1)\right)$$

- In the best case, the model always estimates the probability of the label token as 1. The perplexity is then 1.

- In the worst case, the model always predicts the probability of the label token as 0. The perplexity is then positive infinity.

- At the baseline, the model predicts a uniform distribution over all tokens in the vocabulary. The perplexity then equals the number of unique tokens in the vocabulary.
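These cases can be reproduced directly from the definition. The sketch below takes the probabilities a model assigned to the true tokens; the vocabulary size of 28 is only an illustrative value.

```python
import math

def perplexity(probs):
    """exp of the average negative log-probability assigned to the true tokens."""
    return math.exp(-sum(math.log(p) for p in probs) / len(probs))

vocab_size = 28  # illustrative value
print(perplexity([1.0] * 10))              # perfect model: perplexity 1
print(perplexity([1 / vocab_size] * 10))   # uniform baseline: about vocab_size
print(perplexity([0.5, 0.25, 0.125]))      # about 4, the geometric mean of 1/p
```

Perplexity is the geometric mean of the inverse probabilities, so it can be read as the effective number of equally likely choices the model faces at each step. As any probability approaches 0, its logarithm diverges and the perplexity grows without bound.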

1. Prediction

    import math
    import torch
    from torch import nn
    from torch.nn import functional as F
    from d2l import torch as d2l

    def get_params(vocab_size, num_hiddens, device):
        # Inputs and outputs are one-hot vectors over the vocabulary
        num_inputs = num_outputs = vocab_size

        def normal(shape):
            return torch.randn(size=shape, device=device) * 0.01

        # Hidden layer parameters
        W_xh = normal((num_inputs, num_hiddens))
        W_hh = normal((num_hiddens, num_hiddens))
        b_h = torch.zeros(num_hiddens, device=device)
        # Output layer parameters
        W_hq = normal((num_hiddens, num_outputs))
        b_q = torch.zeros(num_outputs, device=device)
        # Attach gradients
        params = [W_xh, W_hh, b_h, W_hq, b_q]
        for param in params:
            param.requires_grad_(True)
        return params

    # Initial hidden state h_0 (returned as a one-element tuple)
    def init_rnn_state(batch_size, num_hiddens, device):
        return (torch.zeros((batch_size, num_hiddens), device=device), )

    # Compute the hidden state and the output at each time step
    def rnn(inputs, state, params):
        W_xh, W_hh, b_h, W_hq, b_q = params
        H, = state
        outputs = []
        # Shape of inputs: (number of time steps, batch size, vocabulary size)
        for X in inputs:
            H = torch.tanh(torch.mm(X, W_xh) + torch.mm(H, W_hh) + b_h)
            Y = torch.mm(H, W_hq) + b_q
            outputs.append(Y)
        # Concatenated output shape: (number of time steps * batch size, vocabulary size)
        return torch.cat(outputs, dim=0), (H,)

    class RNNModelScratch:
        """A recurrent neural network model implemented from scratch."""
        def __init__(self, vocab_size, num_hiddens, device,
                     get_params, init_state, forward_fn):
            self.vocab_size, self.num_hiddens = vocab_size, num_hiddens
            self.params = get_params(vocab_size, num_hiddens, device)
            self.init_state, self.forward_fn = init_state, forward_fn

        def __call__(self, X, state):
            # Transpose to (time steps, batch size) so the loop in rnn runs over time
            X = F.one_hot(X.T, self.vocab_size).type(torch.float32)
            return self.forward_fn(X, state, self.params)

        def begin_state(self, batch_size, device):
            return self.init_state(batch_size, self.num_hiddens, device)
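The transpose before the one-hot encoding is easy to overlook: the minibatch arrives as (batch size, time steps), while the loop over `inputs` expects time steps as the leading dimension. The following plain-Python sketch uses nested lists instead of tensors, with a hypothetical helper `to_time_major_one_hot`, to show the resulting layout.

```python
def one_hot(index, vocab_size):
    return [1.0 if i == index else 0.0 for i in range(vocab_size)]

def to_time_major_one_hot(batch, vocab_size):
    """(batch_size, num_steps) indices -> (num_steps, batch_size, vocab_size) one-hot."""
    time_major = list(zip(*batch))     # transpose: one tuple of tokens per time step
    return [[one_hot(idx, vocab_size) for idx in step] for step in time_major]

X = [[0, 1, 2],      # sample 0: tokens at time steps 0, 1, 2
     [3, 0, 1]]      # sample 1
encoded = to_time_major_one_hot(X, vocab_size=4)
print(len(encoded), len(encoded[0]), len(encoded[0][0]))  # num_steps, batch_size, vocab_size
print(encoded[0])    # first time step of both samples
```

Iterating over `encoded` yields one (batch size, vocabulary size) slice per time step, which is the layout the `for X in inputs` loop relies on.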

    # Prediction
    def predict_ch8(prefix, num_preds, net, vocab, device):
        """
        Generate new characters following prefix.
        num_preds: number of new characters to generate after prefix
        """
        state = net.begin_state(batch_size=1, device=device)
        outputs = [vocab[prefix[0]]]
        # The most recent output is fed back as the next input
        get_input = lambda: torch.tensor([outputs[-1]], device=device).reshape((1, 1))
        for y in prefix[1:]:  # Warm-up: only the state is needed, the true characters are known
            _, state = net(get_input(), state)
            outputs.append(vocab[y])
        for _ in range(num_preds):  # Predict num_preds steps
            y, state = net(get_input(), state)
            outputs.append(int(y.argmax(dim=1).reshape(1)))
        return ''.join([vocab.idx_to_token[i] for i in outputs])

    batch_size = 32
    num_steps = 35
    train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

    num_hiddens = 512
    net = RNNModelScratch(len(vocab), num_hiddens, d2l.try_gpu(), get_params,
                          init_rnn_state, rnn)
    predict_ch8('time traveller ', 10, net, vocab, d2l.try_gpu())

    # Output (the network is untrained, so the continuation is meaningless)
    'time traveller ygamnygamn'
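The two phases of prediction, warm-up on the prefix and greedy generation afterwards, can be isolated with a toy stand-in for the network. `toy_step` below is entirely hypothetical: it always favours the next letter of a four-letter alphabet, and its "state" merely counts how many tokens have been processed.

```python
ALPHABET = 'abcd'

def toy_step(token_idx, state):
    """Hypothetical stand-in for net(X, state): favours the next letter cyclically."""
    scores = [0.0] * len(ALPHABET)
    scores[(token_idx + 1) % len(ALPHABET)] = 1.0
    return scores, state + 1          # the "state" just counts processed tokens

def predict(prefix, num_preds):
    state = 0
    outputs = [ALPHABET.index(prefix[0])]
    for ch in prefix[1:]:             # warm-up: update the state, ignore the scores
        _, state = toy_step(outputs[-1], state)
        outputs.append(ALPHABET.index(ch))
    for _ in range(num_preds):        # generation: feed the argmax back as the next input
        scores, state = toy_step(outputs[-1], state)
        outputs.append(max(range(len(scores)), key=scores.__getitem__))
    return ''.join(ALPHABET[i] for i in outputs), state

print(predict('ca', 5))
```

During warm-up the toy model would have predicted "d" after "c", but the prefix character "a" is used instead; only after the prefix is exhausted do the model's own predictions drive the sequence.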

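2. Gradient clipping

Backpropagation through a deep network (for an RNN, through many time steps) multiplies gradients together repeatedly, which can make them very large. Gradient clipping guards against this.

Gradient clipping effectively mitigates exploding gradients, but it cannot fix vanishing gradients.

    def grad_clipping(net, theta):
        """Clip the gradients."""
        # params contains every trainable parameter of the network
        if isinstance(net, nn.Module):
            params = [p for p in net.parameters() if p.requires_grad]
        else:
            params = net.params
        # L2 norm of all gradients taken together
        norm = torch.sqrt(sum(torch.sum((p.grad ** 2)) for p in params))
        if norm > theta:
            for param in params:
                # Rescale in place so that the overall norm equals theta
                param.grad[:] *= theta / norm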

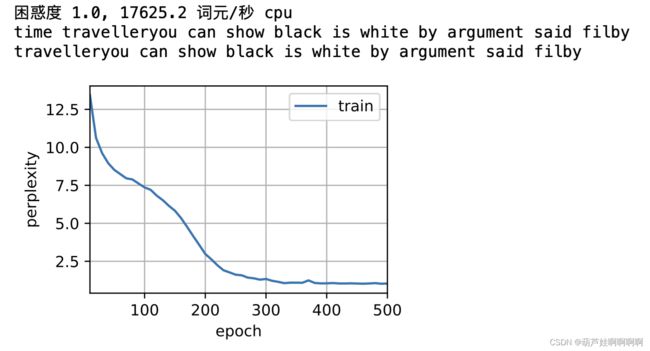

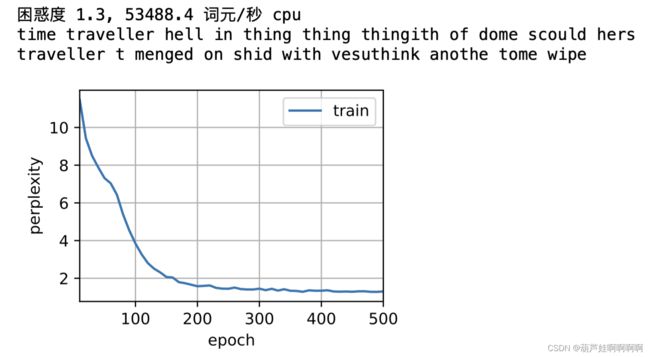
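The clipping rule rescales the whole gradient vector, $\pmb{g} \leftarrow \min(1, \theta / \|\pmb{g}\|)\,\pmb{g}$, so its direction is preserved and only its length is capped. A small numeric sketch in plain Python, using a hypothetical list-based `clip_gradients` instead of tensors:

```python
import math

def clip_gradients(grads, theta):
    """Rescale all gradients so that their joint L2 norm is at most theta."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm > theta:
        grads = [g * theta / norm for g in grads]
    return grads, norm

clipped, norm = clip_gradients([3.0, 4.0], theta=1.0)
print(norm, clipped)       # norm 5 is rescaled to norm 1, direction unchanged
small, _ = clip_gradients([0.3, 0.4], theta=1.0)
print(small)               # already within the bound, left unchanged
```

Because every component is multiplied by the same factor, the ratio between components (3:4 here) is the same before and after clipping.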

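3. Training

Define a function that trains the model for one epoch.

- The different sampling methods for sequence data (random sampling and sequential partitioning) lead to differences in how the hidden state is initialized.

- Gradients are clipped before the model parameters are updated. This ensures that the model does not diverge even if the gradients explode at some point during training.

- With sequential partitioning, the hidden state at any point depends on all the preceding minibatches in the same epoch, which makes the gradient computation expensive. To reduce the cost, the hidden state is detached from the computational graph before each minibatch is processed, so that gradients only flow through the time steps of a single minibatch.

- Perplexity is used to evaluate the model.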

    def train_epoch_ch8(net, train_iter, loss, updater, device, use_random_iter):
        """Train the network for one epoch."""
        state, timer = None, d2l.Timer()
        metric = d2l.Accumulator(2)  # sum of training loss, number of tokens
        for X, Y in train_iter:
            if state is None or use_random_iter:
                # Initialize state on the first iteration or when using random sampling
                state = net.begin_state(batch_size=X.shape[0], device=device)
            else:
                if isinstance(net, nn.Module) and not isinstance(state, tuple):
                    # state is a single tensor for nn.RNN and nn.GRU
                    state.detach_()
                else:
                    # state is a tuple for nn.LSTM and for the from-scratch model
                    for s in state:
                        s.detach_()
            y = Y.T.reshape(-1)
            X, y = X.to(device), y.to(device)
            y_hat, state = net(X, state)
            l = loss(y_hat, y.long()).mean()
            if isinstance(updater, torch.optim.Optimizer):
                updater.zero_grad()
                l.backward()
                grad_clipping(net, 1)
                updater.step()
            else:
                l.backward()
                grad_clipping(net, 1)
                # The loss is already averaged, so use batch_size=1
                updater(batch_size=1)
            metric.add(l * y.numel(), y.numel())  # numel(): total number of elements in the tensor
        return math.exp(metric[0] / metric[1]), metric[1] / timer.stop()
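The accumulator stores the loss weighted by the number of tokens, not the per-minibatch mean, so the final perplexity is the exponential of the per-token average over the whole epoch. A minimal sketch with made-up loss values and token counts:

```python
import math

# (mean loss per token, number of tokens) for three hypothetical minibatches
batches = [(2.0, 100), (1.5, 100), (1.0, 50)]

total_loss = sum(mean_loss * n for mean_loss, n in batches)   # plays the role of metric[0]
total_tokens = sum(n for _, n in batches)                      # plays the role of metric[1]
avg_loss = total_loss / total_tokens
ppl = math.exp(avg_loss)

print(avg_loss)   # per-token average, weighted by minibatch size
print(ppl)
```

A plain mean of the three minibatch losses would give 1.5 instead of 1.6, because it would overweight the smaller third minibatch.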

    def train_ch8(net, train_iter, vocab, lr, num_epochs, device,
                  use_random_iter=False):
        """Train the model."""
        loss = nn.CrossEntropyLoss()
        animator = d2l.Animator(xlabel='epoch', ylabel='perplexity',
                                legend=['train'], xlim=[10, num_epochs])
        # Initialize the updater
        if isinstance(net, nn.Module):
            updater = torch.optim.SGD(net.parameters(), lr)
        else:
            updater = lambda batch_size: d2l.sgd(net.params, lr, batch_size)
        predict = lambda prefix: predict_ch8(prefix, 50, net, vocab, device)
        # Train and predict
        for epoch in range(num_epochs):
            ppl, speed = train_epoch_ch8(
                net, train_iter, loss, updater, device, use_random_iter)
            if (epoch + 1) % 10 == 0:
                print(predict('time traveller'))
                animator.add(epoch + 1, [ppl])
        print(f'perplexity {ppl:.1f}, {speed:.1f} tokens/sec on {str(device)}')
        print(predict('time traveller'))
        print(predict('traveller'))

    batch_size, num_steps = 32, 35
    num_epochs, lr = 500, 1
    num_hiddens = 512
    train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)
    net = RNNModelScratch(len(vocab), num_hiddens, d2l.try_gpu(), get_params,
                          init_rnn_state, rnn)
    train_ch8(net, train_iter, vocab, lr, num_epochs, d2l.try_gpu())

    # Random sampling variant
    # train_ch8(net, train_iter, vocab, lr, num_epochs, d2l.try_gpu(), use_random_iter=True)

IV. Concise RNN implementation

    import torch
    from torch import nn
    from torch.nn import functional as F
    from d2l import torch as d2l

    batch_size, num_steps = 32, 35
    train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

    num_hiddens = 256
    rnn_layer = nn.RNN(len(vocab), num_hiddens)

    # Initialize the hidden state
    # Shape: (number of hidden layers, batch size, number of hidden units)
    state = torch.zeros((1, batch_size, num_hiddens))

    X = torch.rand(size=(num_steps, batch_size, len(vocab)))
    # The "output" (Y) of rnn_layer does not involve the output layer:
    # it is the hidden state at every time step, which can serve as the
    # input to a subsequent output layer.
    Y, state_new = rnn_layer(X, state)

    class RNNModel(nn.Module):
        def __init__(self, rnn_layer, vocab_size, **kwargs):
            super(RNNModel, self).__init__(**kwargs)
            self.rnn = rnn_layer
            self.vocab_size = vocab_size
            self.num_hiddens = self.rnn.hidden_size
            # num_directions is 2 for a bidirectional RNN, otherwise 1
            if not self.rnn.bidirectional:
                self.num_directions = 1
                self.linear = nn.Linear(self.num_hiddens, self.vocab_size)
            else:
                self.num_directions = 2
                self.linear = nn.Linear(self.num_hiddens * 2, self.vocab_size)

        def forward(self, inputs, state):
            X = F.one_hot(inputs.T.long(), self.vocab_size)
            X = X.to(torch.float32)
            Y, state = self.rnn(X, state)
            # The fully connected layer first reshapes Y to
            # (number of time steps * batch size, number of hidden units).
            # Its output has shape (number of time steps * batch size, vocabulary size).
            output = self.linear(Y.reshape((-1, Y.shape[-1])))
            return output, state

        def begin_state(self, device, batch_size=1):
            if not isinstance(self.rnn, nn.LSTM):
                # nn.RNN and nn.GRU take a tensor as the hidden state
                return torch.zeros((self.num_directions * self.rnn.num_layers, batch_size,
                                    self.num_hiddens), device=device)
            else:
                # nn.LSTM takes a tuple as the hidden state
                return (torch.zeros((self.num_directions * self.rnn.num_layers, batch_size,
                                     self.num_hiddens), device=device),
                        torch.zeros((self.num_directions * self.rnn.num_layers, batch_size,
                                     self.num_hiddens), device=device))

    device = d2l.try_gpu()  # device was used below without being defined
    net = RNNModel(rnn_layer, vocab_size=len(vocab))
    net = net.to(device)
    d2l.predict_ch8('time traveller', 10, net, vocab, device)

    num_epochs, lr = 500, 1
    d2l.train_ch8(net, train_iter, vocab, lr, num_epochs, device)