pytorch可视化

pytorch快速查看网络结构

直接查看

这里是一个小栗子

import torch

from torch import nn

from torch.utils.data import DataLoader,Dataset

import torchvision.models as models

from torchinfo import summary

##定义模型

model = nn.LSTM(input_size=10,hidden_size=20,batch_first=True)

print(model)

OUTPUT:

LSTM(10, 20, batch_first=True)

下面是resnet 的网络

resnet = models.resnet18()

resnet

#OUTPUT:

ResNet(

(conv1): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3), bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(maxpool): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)

(layer1): Sequential(

(0): BasicBlock(

(conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(1): BasicBlock(

(conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(layer2): Sequential(

(0): BasicBlock(

(conv1): Conv2d(64, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv2d(64, 128, kernel_size=(1, 1), stride=(2, 2), bias=False)

(1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(1): BasicBlock(

(conv1): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(layer3): Sequential(

(0): BasicBlock(

(conv1): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv2d(128, 256, kernel_size=(1, 1), stride=(2, 2), bias=False)

(1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(1): BasicBlock(

(conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(layer4): Sequential(

(0): BasicBlock(

(conv1): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv2d(256, 512, kernel_size=(1, 1), stride=(2, 2), bias=False)

(1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(1): BasicBlock(

(conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(avgpool): AdaptiveAvgPool2d(output_size=(1, 1))

(fc): Linear(in_features=512, out_features=1000, bias=True)

)

使用torch_info

from torchinfo import summary

summary(resnet,(1,3,224,224))

#OUTPUT:

==========================================================================================

Layer (type:depth-idx) Output Shape Param #

==========================================================================================

ResNet [1, 1000] --

├─Conv2d: 1-1 [1, 64, 112, 112] 9,408

├─BatchNorm2d: 1-2 [1, 64, 112, 112] 128

├─ReLU: 1-3 [1, 64, 112, 112] --

├─MaxPool2d: 1-4 [1, 64, 56, 56] --

├─Sequential: 1-5 [1, 64, 56, 56] --

│ └─BasicBlock: 2-1 [1, 64, 56, 56] --

│ │ └─Conv2d: 3-1 [1, 64, 56, 56] 36,864

│ │ └─BatchNorm2d: 3-2 [1, 64, 56, 56] 128

│ │ └─ReLU: 3-3 [1, 64, 56, 56] --

│ │ └─Conv2d: 3-4 [1, 64, 56, 56] 36,864

│ │ └─BatchNorm2d: 3-5 [1, 64, 56, 56] 128

│ │ └─ReLU: 3-6 [1, 64, 56, 56] --

│ └─BasicBlock: 2-2 [1, 64, 56, 56] --

│ │ └─Conv2d: 3-7 [1, 64, 56, 56] 36,864

│ │ └─BatchNorm2d: 3-8 [1, 64, 56, 56] 128

│ │ └─ReLU: 3-9 [1, 64, 56, 56] --

│ │ └─Conv2d: 3-10 [1, 64, 56, 56] 36,864

│ │ └─BatchNorm2d: 3-11 [1, 64, 56, 56] 128

│ │ └─ReLU: 3-12 [1, 64, 56, 56] --

├─Sequential: 1-6 [1, 128, 28, 28] --

│ └─BasicBlock: 2-3 [1, 128, 28, 28] --

│ │ └─Conv2d: 3-13 [1, 128, 28, 28] 73,728

│ │ └─BatchNorm2d: 3-14 [1, 128, 28, 28] 256

│ │ └─ReLU: 3-15 [1, 128, 28, 28] --

│ │ └─Conv2d: 3-16 [1, 128, 28, 28] 147,456

│ │ └─BatchNorm2d: 3-17 [1, 128, 28, 28] 256

│ │ └─Sequential: 3-18 [1, 128, 28, 28] 8,448

│ │ └─ReLU: 3-19 [1, 128, 28, 28] --

│ └─BasicBlock: 2-4 [1, 128, 28, 28] --

│ │ └─Conv2d: 3-20 [1, 128, 28, 28] 147,456

│ │ └─BatchNorm2d: 3-21 [1, 128, 28, 28] 256

│ │ └─ReLU: 3-22 [1, 128, 28, 28] --

│ │ └─Conv2d: 3-23 [1, 128, 28, 28] 147,456

│ │ └─BatchNorm2d: 3-24 [1, 128, 28, 28] 256

│ │ └─ReLU: 3-25 [1, 128, 28, 28] --

├─Sequential: 1-7 [1, 256, 14, 14] --

│ └─BasicBlock: 2-5 [1, 256, 14, 14] --

│ │ └─Conv2d: 3-26 [1, 256, 14, 14] 294,912

│ │ └─BatchNorm2d: 3-27 [1, 256, 14, 14] 512

│ │ └─ReLU: 3-28 [1, 256, 14, 14] --

│ │ └─Conv2d: 3-29 [1, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-30 [1, 256, 14, 14] 512

│ │ └─Sequential: 3-31 [1, 256, 14, 14] 33,280

│ │ └─ReLU: 3-32 [1, 256, 14, 14] --

│ └─BasicBlock: 2-6 [1, 256, 14, 14] --

│ │ └─Conv2d: 3-33 [1, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-34 [1, 256, 14, 14] 512

│ │ └─ReLU: 3-35 [1, 256, 14, 14] --

│ │ └─Conv2d: 3-36 [1, 256, 14, 14] 589,824

│ │ └─BatchNorm2d: 3-37 [1, 256, 14, 14] 512

│ │ └─ReLU: 3-38 [1, 256, 14, 14] --

├─Sequential: 1-8 [1, 512, 7, 7] --

│ └─BasicBlock: 2-7 [1, 512, 7, 7] --

│ │ └─Conv2d: 3-39 [1, 512, 7, 7] 1,179,648

│ │ └─BatchNorm2d: 3-40 [1, 512, 7, 7] 1,024

│ │ └─ReLU: 3-41 [1, 512, 7, 7] --

│ │ └─Conv2d: 3-42 [1, 512, 7, 7] 2,359,296

│ │ └─BatchNorm2d: 3-43 [1, 512, 7, 7] 1,024

│ │ └─Sequential: 3-44 [1, 512, 7, 7] 132,096

│ │ └─ReLU: 3-45 [1, 512, 7, 7] --

│ └─BasicBlock: 2-8 [1, 512, 7, 7] --

│ │ └─Conv2d: 3-46 [1, 512, 7, 7] 2,359,296

│ │ └─BatchNorm2d: 3-47 [1, 512, 7, 7] 1,024

│ │ └─ReLU: 3-48 [1, 512, 7, 7] --

│ │ └─Conv2d: 3-49 [1, 512, 7, 7] 2,359,296

│ │ └─BatchNorm2d: 3-50 [1, 512, 7, 7] 1,024

│ │ └─ReLU: 3-51 [1, 512, 7, 7] --

├─AdaptiveAvgPool2d: 1-9 [1, 512, 1, 1] --

├─Linear: 1-10 [1, 1000] 513,000

==========================================================================================

Total params: 11,689,512

Trainable params: 11,689,512

Non-trainable params: 0

Total mult-adds (G): 1.81

==========================================================================================

Input size (MB): 0.60

Forward/backward pass size (MB): 39.75

Params size (MB): 46.76

Estimated Total Size (MB): 87.11

==========================================================================================

LSTM_info

summary(model,(20,2,10))

==========================================================================================

Layer (type:depth-idx) Output Shape Param #

==========================================================================================

LSTM [20, 2, 20] 2,560

==========================================================================================

Total params: 2,560

Trainable params: 2,560

Non-trainable params: 0

Total mult-adds (M): 0.10

==========================================================================================

Input size (MB): 0.00

Forward/backward pass size (MB): 0.01

Params size (MB): 0.01

Estimated Total Size (MB): 0.02

==========================================================================================

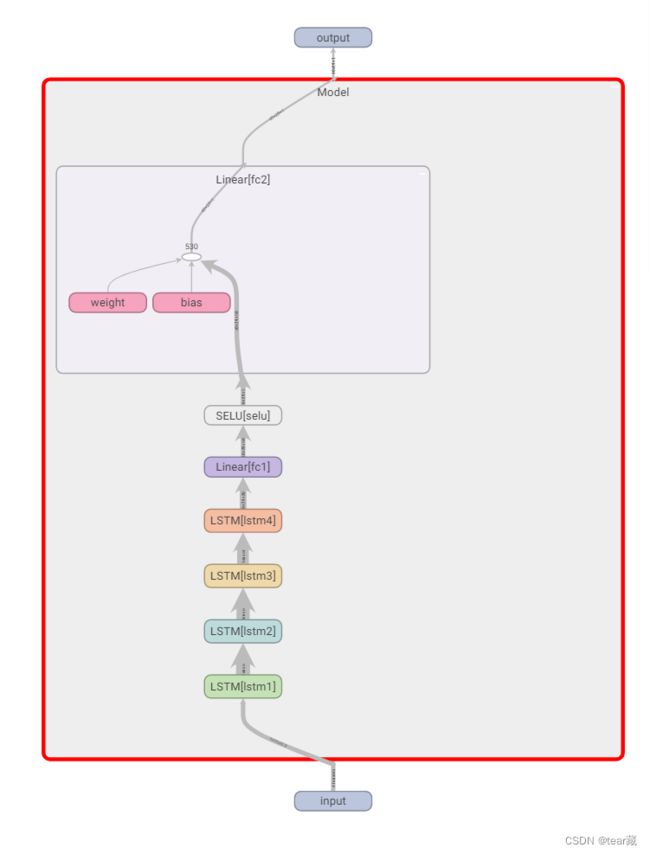

TensorBoard可视化网络结构/loss

from torch.utils.tensorboard import SummaryWriter

writer = SummaryWriter('./runs')

LSTM network for time series

class Model(nn.Module):

def __init__(self, input_size):

hidden = [400, 300, 200, 100]

super().__init__()

self.lstm1 = nn.LSTM(input_size, hidden[0],

batch_first=True, bidirectional=True)

self.lstm2 = nn.LSTM(2 * hidden[0], hidden[1],

batch_first=True, bidirectional=True)

self.lstm3 = nn.LSTM(2 * hidden[1], hidden[2],

batch_first=True, bidirectional=True)

self.lstm4 = nn.LSTM(2 * hidden[2], hidden[3],

batch_first=True, bidirectional=True)

self.fc1 = nn.Linear(2 * hidden[3], 50)

self.selu = nn.SELU()

self.fc2 = nn.Linear(50, 1)

self._reinitialize()

def _reinitialize(self):

"""

Tensorflow/Keras-like initialization

"""

for name, p in self.named_parameters():

if 'lstm' in name:

if 'weight_ih' in name:

nn.init.xavier_uniform_(p.data)

elif 'weight_hh' in name:

nn.init.orthogonal_(p.data)

elif 'bias_ih' in name:

p.data.fill_(0)

# Set forget-gate bias to 1

n = p.size(0)

p.data[(n // 4):(n // 2)].fill_(1)

elif 'bias_hh' in name:

p.data.fill_(0)

elif 'fc' in name:

if 'weight' in name:

nn.init.xavier_uniform_(p.data)

elif 'bias' in name:

p.data.fill_(0)

def forward(self, x):

x, _ = self.lstm1(x)

x, _ = self.lstm2(x)

x, _ = self.lstm3(x)

x, _ = self.lstm4(x)

x = self.fc1(x)

x = self.selu(x)

x = self.fc2(x)

return x

#结构参数

==========================================================================================

Layer (type:depth-idx) Output Shape Param #

==========================================================================================

Model [10, 80, 1] --

├─LSTM: 1-1 [10, 80, 800] 1,318,400

├─LSTM: 1-2 [10, 80, 600] 2,644,800

├─LSTM: 1-3 [10, 80, 400] 1,283,200

├─LSTM: 1-4 [10, 80, 200] 401,600

├─Linear: 1-5 [10, 80, 50] 10,050

├─SELU: 1-6 [10, 80, 50] --

├─Linear: 1-7 [10, 80, 1] 51

==========================================================================================

Total params: 5,658,101

Trainable params: 5,658,101

Non-trainable params: 0

Total mult-adds (G): 4.52

==========================================================================================

Input size (MB): 0.03

Forward/backward pass size (MB): 13.13

Params size (MB): 22.63

Estimated Total Size (MB): 35.79

==========================================================================================

再使用如下代码

writer.add_graph(model,input_to_model=torch.randn(40,29,10))

writer.close()

#最后在终端输入

tensorboard --logdir=runs

#得到

TensorBoard 1.15.0 at http://Tears:6006/ (Press CTRL+C to quit)

就能得到下图了