Face Swapping in Unity with the OpenCVForUnity Plugin

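I have been tinkering with face recognition lately. I'm slow on the uptake and had no idea where to start, so I searched Baidu over and over until I found an article by an expert that helped a lot. But experts being experts, the article only posted the code, which a beginner like me could hardly get working, so I had to chew through it slowly. Following the author's hints I downloaded three plugins. All three are collected here for anyone who needs them: click here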

Now on to the demo. I used Unity 2018.4.36, because the webcam API stutters in the 2020 releases (I don't know why and didn't look into it; 2018 works fine).

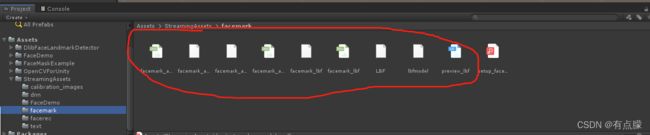

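1: Create a new Unity project and import the plugins. Then find download_dnn_models.py under StreamingAssets/dnn in the OpenCVForUnity plugin folder and run it; it downloads some of the dependency files automatically (the window closes by itself when the download finishes).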

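2: Some files may fail to download. In that case open setup_dnn_module.pdf and download the dependencies required by the example scene you want to run. Then copy the contents of the StreamingAssets folder from every plugin folder into the StreamingAssets folder under Assets, choosing to replace any duplicates. The face-related demos need their prerequisite files; you can find them on my blog, or download them by running the .py file mentioned in the document. If some files still refuse to download, the link may have expired; click the links in the document to download those files directly.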

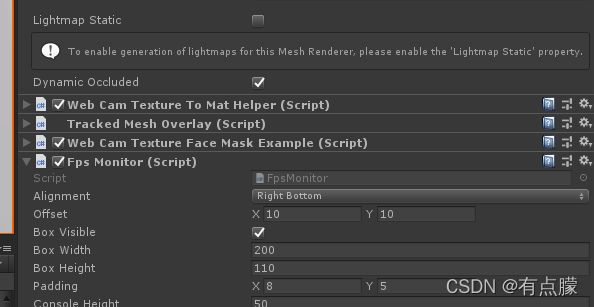
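The copy-and-replace part of step 2 can also be scripted. Below is a minimal sketch in plain C# (no Unity API), assuming the source StreamingAssets folder and the project's Assets/StreamingAssets folder are passed as command-line arguments. The names `StreamingAssetsMerger` and `CopyOverwrite` are made up for illustration and are not part of any plugin.

```csharp
using System;
using System.IO;

// Hypothetical helper: merges one StreamingAssets folder into another.
public static class StreamingAssetsMerger
{
    // Recursively copies every file from sourceDir into targetDir,
    // overwriting files that already exist. Returns the number of files copied.
    public static int CopyOverwrite(string sourceDir, string targetDir)
    {
        int copied = 0;
        Directory.CreateDirectory(targetDir); // no-op if it already exists

        foreach (string file in Directory.GetFiles(sourceDir))
        {
            string destination = Path.Combine(targetDir, Path.GetFileName(file));
            File.Copy(file, destination, true); // true = replace duplicates
            copied++;
        }

        foreach (string dir in Directory.GetDirectories(sourceDir))
        {
            string subTarget = Path.Combine(targetDir, Path.GetFileName(dir));
            copied += CopyOverwrite(dir, subTarget);
        }

        return copied;
    }

    public static void Main(string[] args)
    {
        if (args.Length != 2)
        {
            Console.WriteLine("usage: StreamingAssetsMerger <pluginStreamingAssets> <projectStreamingAssets>");
            return;
        }

        int count = CopyOverwrite(args[0], args[1]);
        Console.WriteLine("Copied " + count + " file(s).");
    }
}
```

Run it once per plugin folder. Doing the copy by hand in the file explorer works just as well; the script only saves clicks when there are several plugins.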

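3: The example scene we need is WebCamTextureFaceMaskExample. Only a handful of scripts really matter; see the screenshot for the result. If your coding skills are modest, focus on the WebCamTextureFaceMaskExample script, since everything else is already wrapped up for you; if you are more experienced, read the related code and wrap it again for your own use. The editor warned me that this post was too short, and I'm no writer, so I tweaked the code a little and pasted it straight in. That should be long enough now! (grin)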
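Before reading the full script, it may help to see the arithmetic at its core in isolation. The `MaskFace` method in the script converts landmark pixel coordinates into mesh vertices and texture UVs, and fades the mask while a face is temporarily lost. The following is a minimal standalone sketch of those formulas in plain C# without Unity types. The class and method names (`FaceMaskMapping`, `ToVertex`, `ToUv`, `FadeFor`) and the sample numbers are illustrative assumptions; only the formulas mirror the ones in `MaskFace`.

```csharp
using System;

// Hypothetical helper that isolates the math used by MaskFace.
public static class FaceMaskMapping
{
    // Pixel position in the camera image -> vertex on a unit quad centred at
    // the origin. Image y grows downwards, mesh y grows upwards, so y is flipped.
    public static void ToVertex(float px, float py, float imageWidth, float imageHeight,
                                out float x, out float y)
    {
        x = px / imageWidth - 0.5f;
        y = 0.5f - py / imageHeight;
    }

    // Pixel position in the mask image -> UV in [0, 1], with v flipped
    // because texture v = 0 is the bottom row.
    public static void ToUv(float px, float py, float maskWidth, float maskHeight,
                            out float u, out float v)
    {
        u = px / maskWidth;
        v = (maskHeight - py) / maskHeight;
    }

    // Value written to the shader's fade parameter: 0.3 while the face is
    // detected, ramping up over the first three missed frames, 1 after that.
    public static float FadeFor(int numFramesNotDetected)
    {
        if (numFramesNotDetected > 3) return 1f;
        if (numFramesNotDetected > 0) return 0.3f + (0.7f / 4f) * numFramesNotDetected;
        return 0.3f;
    }

    public static void Main()
    {
        float x, y, u, v;
        ToVertex(320f, 120f, 640f, 480f, out x, out y); // x = 0, y = 0.25
        ToUv(128f, 64f, 256f, 256f, out u, out v);      // u = 0.5, v = 0.75
        Console.WriteLine("vertex: " + x + ", " + y);
        Console.WriteLine("uv: " + u + ", " + v);
        Console.WriteLine("fade after 2 missed frames: " + FadeFor(2));
    }
}
```

The vertex mapping uses the camera frame size while the UV mapping uses the mask image size, which is why `MaskFace` takes both pairs of dimensions.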

Script: WebCamTextureFaceMaskExample

using System;
using System.Collections;
using System.Collections.Generic;
using System.IO;
using UnityEngine;
using UnityEngine.UI;
using UnityEngine.SceneManagement;
using DlibFaceLandmarkDetector;
using OpenCVForUnity.RectangleTrack;
using OpenCVForUnity.UnityUtils.Helper;
using OpenCVForUnity.CoreModule;
using OpenCVForUnity.ObjdetectModule;
using OpenCVForUnity.ImgprocModule;
using Rect = OpenCVForUnity.CoreModule.Rect;
using UnityEditor;
using UnityEditor.Experimental.SceneManagement;
using OpenCVForUnity.VideoModule;

namespace FaceMaskExample
{

/// ().material.mainTexture as Texture2D, "wakaka");

                // Save the captured image to disk. I need to save the prefab locally, so the image is essential.
                Config.SaveTexture(gameObject.GetComponent<Renderer>().material.mainTexture as Texture2D, Application.dataPath + "/TestForOpenCV/Resources", "wakaka");
                if (item == null)
                {
                    // Load the prefab and reassign its FaceMaskData fields.
                    GameObject obj = Instantiate(Resources.Load<GameObject>("TestMask"), GameObject.Find("FaceMaskData").transform);
                    obj.GetComponent<FaceMaskData>().image = gameObject.GetComponent<Renderer>().material.mainTexture as Texture2D;
                    obj.GetComponent<FaceMaskData>().faceRect = facemaskrect;
                    obj.GetComponent<FaceMaskData>().landmarkPoints = landmasks;
                    var prefabInstance = PrefabUtility.GetCorrespondingObjectFromSource(obj);
                    // Not in Prefab Mode.
                    if (prefabInstance)
                    {
                        var prefabPath = AssetDatabase.GetAssetPath(prefabInstance);
                        // To modify the prefab, the instance has to be unpacked first and then saved.
                        PrefabUtility.UnpackPrefabInstance(obj, PrefabUnpackMode.Completely, InteractionMode.UserAction);
                        PrefabUtility.SaveAsPrefabAssetAndConnect(obj, prefabPath, InteractionMode.AutomatedAction);
                        // When only adding (not modifying), it can be saved directly.
                        PrefabUtility.SaveAsPrefabAsset(obj, prefabPath);
                    }
                    else
                    {
                        // In Prefab Mode, get the prefab asset path and root object from the prefab stage, then save.
                        // PrefabStage prefabStage = PrefabStageUtility.GetCurrentPrefabStage();
                        // Original location of the prefab.
                        string path = Application.dataPath + "/TestForOpenCV/Resources/TestMask.prefab";//prefabStage.prefabAssetPath;
                        GameObject root = obj; //prefabStage.prefabContentsRoot;
                        PrefabUtility.SaveAsPrefabAsset(root, path);
                    }
                    OnChangeFaceMaskButtonClick(obj.GetComponent<FaceMaskData>());
                    /// gameObject.GetComponent<Renderer>().material.mainTexture = Config.GetTexture2d(@"D:\Program Files (x86)\DeskTop\Demo\tjd.png");
                }
                // webCamTextureToMatHelper.Play();
            }

            if (webCamTextureToMatHelper.IsPlaying () && webCamTextureToMatHelper.DidUpdateThisFrame ()) {
                Mat rgbaMat = webCamTextureToMatHelper.GetMat ();

                // detect faces.
                List<Rect> detectResult = new List<Rect> ();
                if (useDlibFaceDetecter) {
                    OpenCVForUnityUtils.SetImage (faceLandmarkDetector, rgbaMat);
                    List<UnityEngine.Rect> result = faceLandmarkDetector.Detect ();
                    foreach (var unityRect in result) {
                        detectResult.Add (new Rect ((int)unityRect.x, (int)unityRect.y, (int)unityRect.width, (int)unityRect.height));
                    }
                } else {
                    // convert image to greyscale.
                    Imgproc.cvtColor (rgbaMat, grayMat, Imgproc.COLOR_RGBA2GRAY);
                    using (Mat equalizeHistMat = new Mat ())
                    using (MatOfRect faces = new MatOfRect ()) {
                        Imgproc.equalizeHist (grayMat, equalizeHistMat);
                        cascade.detectMultiScale (equalizeHistMat, faces, 1.1f, 2, 0 | Objdetect.CASCADE_SCALE_IMAGE, new Size (equalizeHistMat.cols () * 0.15, equalizeHistMat.cols () * 0.15), new Size ());
                        detectResult = faces.toList ();
                    }
                    // corrects the deviation of a detection result between OpenCV and Dlib.
                    foreach (Rect r in detectResult) {
                        r.y += (int)(r.height * 0.1f);
                    }
                }

                // face tracking.
                rectangleTracker.UpdateTrackedObjects (detectResult);
                List<TrackedRect> trackedRects = new List<TrackedRect> ();
                rectangleTracker.GetObjects (trackedRects, true);

                // create noise filter.
                foreach (var openCVRect in trackedRects) {
                    if (openCVRect.state == TrackedState.NEW) {
                        if (!lowPassFilterDict.ContainsKey (openCVRect.id))
                            lowPassFilterDict.Add (openCVRect.id, new LowPassPointsFilter ((int)faceLandmarkDetector.GetShapePredictorNumParts ()));
                        if (!opticalFlowFilterDict.ContainsKey (openCVRect.id))
                            opticalFlowFilterDict.Add (openCVRect.id, new OFPointsFilter ((int)faceLandmarkDetector.GetShapePredictorNumParts ()));
                    } else if (openCVRect.state == TrackedState.DELETED) {
                        if (lowPassFilterDict.ContainsKey (openCVRect.id)) {
                            lowPassFilterDict [openCVRect.id].Dispose ();
                            lowPassFilterDict.Remove (openCVRect.id);
                        }
                        if (opticalFlowFilterDict.ContainsKey (openCVRect.id)) {
                            opticalFlowFilterDict [openCVRect.id].Dispose ();
                            opticalFlowFilterDict.Remove (openCVRect.id);
                        }
                    }
                }

                // create LUT texture.
                foreach (var openCVRect in trackedRects) {
                    if (openCVRect.state == TrackedState.NEW) {
                        faceMaskColorCorrector.CreateLUTTex (openCVRect.id);
                    } else if (openCVRect.state == TrackedState.DELETED) {
                        faceMaskColorCorrector.DeleteLUTTex (openCVRect.id);
                    }
                }

                // detect face landmark points.
                OpenCVForUnityUtils.SetImage (faceLandmarkDetector, rgbaMat);
                List<List<Vector2>> landmarkPoints = new List<List<Vector2>> ();
                for (int i = 0; i < trackedRects.Count; i++) {
                    TrackedRect tr = trackedRects [i];
                    UnityEngine.Rect rect = new UnityEngine.Rect (tr.x, tr.y, tr.width, tr.height);
                    List<Vector2> points = faceLandmarkDetector.DetectLandmark (rect);

                    // apply noise filter.
                    if (enableNoiseFilter) {
                        if (tr.state > TrackedState.NEW && tr.state < TrackedState.DELETED) {
                            opticalFlowFilterDict [tr.id].Process (rgbaMat, points, points);
                            lowPassFilterDict [tr.id].Process (rgbaMat, points, points);
                        }
                    }
                    landmarkPoints.Add (points);
                }

                // remember the first face's landmarks and rect so they can be stored in FaceMaskData.
                if (landmarkPoints.Count > 0)
                {
                    landmasks = landmarkPoints[0];
                    facemaskrect = new UnityEngine.Rect(trackedRects[0].x, trackedRects[0].y, trackedRects[0].width, trackedRects[0].height);
                    print(string.Format("Faces detected: {0}, landmark points: {1}, tracked faces: {2}", landmarkPoints.Count, landmarkPoints[0].Count, trackedRects.Count));
                }
                SetFaceMask(landmarkPoints, trackedRects, rgbaMat);

                // draw face rects.
                if (displayFaceRects) {
                    for (int i = 0; i < detectResult.Count; i++) {
                        UnityEngine.Rect rect = new UnityEngine.Rect (detectResult [i].x, detectResult [i].y, detectResult [i].width, detectResult [i].height);
                        OpenCVForUnityUtils.DrawFaceRect (rgbaMat, rect, new Scalar (255, 0, 0, 255), 2);
                    }
                    for (int i = 0; i < trackedRects.Count; i++) {
                        UnityEngine.Rect rect = new UnityEngine.Rect (trackedRects [i].x, trackedRects [i].y, trackedRects [i].width, trackedRects [i].height);
                        OpenCVForUnityUtils.DrawFaceRect (rgbaMat, rect, new Scalar (255, 255, 0, 255), 2);
                        // Imgproc.putText (rgbaMat, " " + frontalFaceChecker.GetFrontalFaceAngles (landmarkPoints [i]), new Point (rect.xMin, rect.yMin - 10), Imgproc.FONT_HERSHEY_SIMPLEX, 0.5, new Scalar (255, 255, 255, 255), 2, Imgproc.LINE_AA, false);
                        // Imgproc.putText (rgbaMat, " " + frontalFaceChecker.GetFrontalFaceRate (landmarkPoints [i]), new Point (rect.xMin, rect.yMin - 10), Imgproc.FONT_HERSHEY_SIMPLEX, 0.5, new Scalar (255, 255, 255, 255), 2, Imgproc.LINE_AA, false);
                    }
                }

                // draw face points.
                if (displayDebugFacePoints) {
                    for (int i = 0; i < landmarkPoints.Count; i++) {
                        OpenCVForUnityUtils.DrawFaceLandmark (rgbaMat, landmarkPoints [i], new Scalar (0, 255, 0, 255), 2);
                    }
                }

                // display face mask image.
                if (faceMaskTexture != null && faceMaskMat != null) {
                    if (displayFaceRects) {
                        OpenCVForUnityUtils.DrawFaceRect (faceMaskMat, faceRectInMask, new Scalar (255, 0, 0, 255), 2);
                    }
                    if (displayDebugFacePoints) {
                        OpenCVForUnityUtils.DrawFaceLandmark (faceMaskMat, faceLandmarkPointsInMask, new Scalar (0, 255, 0, 255), 2);
                    }
                    float scale = (rgbaMat.width () / 4f) / faceMaskMat.width ();
                    float tx = rgbaMat.width () - faceMaskMat.width () * scale;
                    float ty = 0.0f;
                    Mat trans = new Mat (2, 3, CvType.CV_32F);//1.0, 0.0, tx, 0.0, 1.0, ty);
                    trans.put (0, 0, scale);
                    trans.put (0, 1, 0.0f);
                    trans.put (0, 2, tx);
                    trans.put (1, 0, 0.0f);
                    trans.put (1, 1, scale);
                    trans.put (1, 2, ty);
                    Imgproc.warpAffine (faceMaskMat, rgbaMat, trans, rgbaMat.size (), Imgproc.INTER_LINEAR, Core.BORDER_TRANSPARENT, new Scalar (0));
                    if (displayFaceRects || displayDebugFacePointsToggle)
                        OpenCVForUnity.UnityUtils.Utils.texture2DToMat (faceMaskTexture, faceMaskMat);
                }
                // Imgproc.putText (rgbaMat, "W:" + rgbaMat.width () + " H:" + rgbaMat.height () + " SO:" + Screen.orientation, new Point (5, rgbaMat.rows () - 10), Imgproc.FONT_HERSHEY_SIMPLEX, 0.5, new Scalar (255, 255, 255, 255), 1, Imgproc.LINE_AA, false);
                OpenCVForUnity.UnityUtils.Utils.fastMatToTexture2D (rgbaMat, texture);
            }
        }

        private void SetFaceMask(List<List<Vector2>> landmarkPoints, List<TrackedRect> trackedRects, Mat rgbaMat)
        {
            #region face masking.
            // face masking.
            if (faceMaskTexture != null && landmarkPoints.Count >= 1)
            { // Apply face masking between detected faces and a face mask image.
                float maskImageWidth = faceMaskTexture.width;
                float maskImageHeight = faceMaskTexture.height;
                TrackedRect tr;
                for (int i = 0; i < trackedRects.Count; i++)
                {
                    tr = trackedRects[i];
                    if (tr.state == TrackedState.NEW)
                    {
                        meshOverlay.CreateObject(tr.id, faceMaskTexture);
                    }
                    if (tr.state < TrackedState.DELETED)
                    {
                        MaskFace(meshOverlay, tr, landmarkPoints[i], faceLandmarkPointsInMask, maskImageWidth, maskImageHeight);
                        if (enableColorCorrection)
                        {
                            CorrectFaceMaskColor(tr.id, faceMaskMat, rgbaMat, faceLandmarkPointsInMask, landmarkPoints[i]);
                        }
                    }
                    else if (tr.state == TrackedState.DELETED)
                    {
                        meshOverlay.DeleteObject(tr.id);
                    }
                }
            }
            else if (landmarkPoints.Count >= 1)
            { // Apply face masking between detected faces.
                float maskImageWidth = texture.width;
                float maskImageHeight = texture.height;
                TrackedRect tr;
                for (int i = 0; i < trackedRects.Count; i++)
                {
                    tr = trackedRects[i];
                    if (tr.state == TrackedState.NEW)
                    {
                        meshOverlay.CreateObject(tr.id, texture);
                    }
                    if (tr.state < TrackedState.DELETED)
                    {
                        MaskFace(meshOverlay, tr, landmarkPoints[i], landmarkPoints[0], maskImageWidth, maskImageHeight);
                        if (enableColorCorrection)
                        {
                            CorrectFaceMaskColor(tr.id, rgbaMat, rgbaMat, landmarkPoints[0], landmarkPoints[i]);
                        }
                    }
                    else if (tr.state == TrackedState.DELETED)
                    {
                        meshOverlay.DeleteObject(tr.id);
                    }
                }
            }
            #endregion
        }

        private void MaskFace (TrackedMeshOverlay meshOverlay, TrackedRect tr, List<Vector2> landmarkPoints, List<Vector2> landmarkPointsInMaskImage, float maskImageWidth = 0, float maskImageHeight = 0)
        {
            float imageWidth = meshOverlay.width;
            float imageHeight = meshOverlay.height;
            if (maskImageWidth == 0)
                maskImageWidth = imageWidth;
            if (maskImageHeight == 0)
                maskImageHeight = imageHeight;

            TrackedMesh tm = meshOverlay.GetObjectById (tr.id);
            Vector3[] vertices = tm.meshFilter.mesh.vertices;
            if (vertices.Length == landmarkPoints.Count) {
                for (int j = 0; j < vertices.Length; j++) {
                    vertices [j].x = landmarkPoints [j].x / imageWidth - 0.5f;
                    vertices [j].y = 0.5f - landmarkPoints [j].y / imageHeight;
                }
            }
            Vector2[] uv = tm.meshFilter.mesh.uv;
            if (uv.Length == landmarkPointsInMaskImage.Count) {
                for (int jj = 0; jj < uv.Length; jj++) {
                    uv [jj].x = landmarkPointsInMaskImage [jj].x / maskImageWidth;
                    uv [jj].y = (maskImageHeight - landmarkPointsInMaskImage [jj].y) / maskImageHeight;
                }
            }
            meshOverlay.UpdateObject (tr.id, vertices, null, uv);

            if (tr.numFramesNotDetected > 3) {
                tm.sharedMaterial.SetFloat (shader_FadeID, 1f);
            } else if (tr.numFramesNotDetected > 0 && tr.numFramesNotDetected <= 3) {
                tm.sharedMaterial.SetFloat (shader_FadeID, 0.3f + (0.7f / 4f) * tr.numFramesNotDetected);
            } else {
                tm.sharedMaterial.SetFloat (shader_FadeID, 0.3f);
            }

            if (enableColorCorrection) {
                tm.sharedMaterial.SetFloat (shader_ColorCorrectionID, 1f);
            } else {
                tm.sharedMaterial.SetFloat (shader_ColorCorrectionID, 0f);
            }

            // filter non frontal faces.
            if (filterNonFrontalFaces && frontalFaceChecker.GetFrontalFaceRate (landmarkPoints) < frontalFaceRateLowerLimit) {
                tm.sharedMaterial.SetFloat (shader_FadeID, 1f);
            }
        }

        private void CorrectFaceMaskColor (int id, Mat src, Mat dst, List<Vector2> src_landmarkPoints, List<Vector2> dst_landmarkPoints)
        {
            Texture2D LUTTex = faceMaskColorCorrector.UpdateLUTTex (id, src, dst, src_landmarkPoints, dst_landmarkPoints);
            TrackedMesh tm = meshOverlay.GetObjectById (id);
            tm.sharedMaterial.SetTexture (shader_LUTTexID, LUTTex);
        }

///