ECG Heartbeat Signal Multi-class Classification (Part 1)

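Contents

- 1. Understanding the task

- 1.1 Task overview

- 1.2 Data overview

- 1.3 Code examples

- 1.3.1 Reading the data

- 1.3.2 Classification metric examples

- 2. Baseline

- 2.1 Importing third-party packages

- 2.2 Reading the data

- 2.3 Data preprocessing

- 2.4 Preparing the training and test data

- 2.5 Model training

- 2.6 Prediction results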

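1. Understanding the task

Tip: this is the ECG heartbeat signal multi-class prediction challenge.

In June 2016, the General Office of the State Council issued the "Guiding Opinions of the General Office of the State Council on Promoting and Regulating the Application and Development of Big Data in Health and Medical Care". The document states that the application and development of health and medical big data will profoundly change the healthcare model and help improve the efficiency and quality of healthcare services.

The task is set against ECG data: participants predict the heartbeat signal class from ECG sensor readings. The classes correspond to normal cases and to cases affected by different arrhythmias and by myocardial infarction, so this is a multi-class classification problem. The task is meant to introduce participants to applications of medical big data and to give competition newcomers a chance to practice and improve.

Task link: https://tianchi.aliyun.com/competition/entrance/531883/introduction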

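1.1 Task overview

Participants build a model on the given dataset to predict the class of each heartbeat signal. The dataset becomes visible and downloadable after registration. It comes from the ECG records of an unnamed platform and contains more than 200,000 records in total. Its main content is a single column holding the heartbeat signal sequence; every sample's sequence is sampled at the same rate and has the same length. To keep the competition fair, 100,000 records are drawn as the training set, 20,000 as test set A and 20,000 as test set B, and the heartbeat signal class (label) is anonymized.

The task is intended to bring participants into the world of medical big data and is aimed mainly at competition newcomers who want to practice and improve.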

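1.2 Data overview

Competition pages usually come with a description of the data (anonymous features excepted) that explains what each column represents. Knowing what the columns mean helps in understanding the data and in the analysis that follows.

Tip: an anonymous feature is a column whose meaning is not disclosed.

train.csv

- id: unique identifier assigned to the heartbeat signal

- heartbeat_signals: heartbeat signal sequence (values separated by ",")

- label: heartbeat signal class (0, 1, 2, 3)

testA.csv

- id: unique identifier assigned to the heartbeat signal

- heartbeat_signals: heartbeat signal sequence (values separated by ",")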

1.3 Evaluation metric

Participants submit predicted probabilities for the 4 heartbeat signal classes. The submission is compared with the true classes by taking the absolute difference between each predicted probability and the true value.

The formula is as follows.

Suppose there are n cases in total. For one signal, if the true value is [y1,y2,y3,y4] and the model predicts the probabilities [a1,a2,a3,a4], the evaluation metric abs-sum of the model is

$$\text{abs-sum} = \sum_{j=1}^{n} \sum_{i=1}^{4} \left| y_i - a_i \right|$$

For example, a heartbeat signal of class 1 is encoded as [0,1,0,0]. If the predicted probabilities are [0.1,0.7,0.1,0.1], the abs-sum for this signal is

$$\text{abs-sum} = \left| 0.1 - 0 \right| + \left| 0.7 - 1 \right| + \left| 0.1 - 0 \right| + \left| 0.1 - 0 \right| = 0.6$$
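The worked example can be reproduced in a few lines of NumPy. This is only a minimal sketch: `one_hot` and `abs_sum_per_sample` are hypothetical helper names chosen for illustration, not part of the competition code.

```python
import numpy as np

def one_hot(labels, num_classes=4):
    """Turn integer class labels into one-hot rows (hypothetical helper)."""
    return np.eye(num_classes)[np.asarray(labels, dtype=int)]

def abs_sum_per_sample(y_true_onehot, y_prob):
    """Sum of |y_i - a_i| over the classes, one value per sample."""
    diff = np.asarray(y_true_onehot) - np.asarray(y_prob)
    return np.abs(diff).sum(axis=1)

y_true = one_hot([1])                      # class 1 -> [0, 1, 0, 0]
y_prob = np.array([[0.1, 0.7, 0.1, 0.1]])  # predicted probabilities
per_sample = abs_sum_per_sample(y_true, y_prob)
print(per_sample)        # approximately [0.6]
print(per_sample.sum())  # abs-sum over all samples
```

Summing the per-sample values over all n signals gives the competition score, so a perfect prediction scores 0.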

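Common evaluation metrics for multi-class algorithms are listed below.

Multi-class metrics are computed exactly as in the binary case, except that recall, precision, accuracy and F1 score are computed for each class in turn (treating that class as positive and all other classes as negative).

1. Confusion matrix

- (1) If an instance is positive and is predicted positive, it is a true positive, TP (True Positive)

- (2) If an instance is positive but is predicted negative, it is a false negative, FN (False Negative)

- (3) If an instance is negative but is predicted positive, it is a false positive, FP (False Positive)

- (4) If an instance is negative and is predicted negative, it is a true negative, TN (True Negative)

The first letter, T/F, says whether the prediction was correct; the second letter, P/N, says whether the predicted result was positive or negative. TP, for example, means the prediction was correct and the predicted result was positive, that is, a positive instance was predicted as positive.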
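To make the one-vs-rest reading concrete, the four counts can be derived for every class from a single multi-class confusion matrix. The sketch below assumes NumPy only; `per_class_counts` and the small label lists are hypothetical illustrations.

```python
import numpy as np

def per_class_counts(y_true, y_pred, num_classes):
    """Return TP, FP, FN, TN arrays with one entry per class (one-vs-rest)."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    # cm[t, p] counts samples whose true class is t and predicted class is p
    cm = np.zeros((num_classes, num_classes), dtype=int)
    np.add.at(cm, (y_true, y_pred), 1)
    tp = np.diag(cm)
    fp = cm.sum(axis=0) - tp  # predicted as the class, but actually another class
    fn = cm.sum(axis=1) - tp  # actually the class, but predicted as another class
    tn = cm.sum() - tp - fp - fn
    return tp, fp, fn, tn

y_true = [0, 0, 1, 1, 2, 2, 3, 3]
y_pred = [0, 1, 1, 1, 2, 0, 3, 3]
tp, fp, fn, tn = per_class_counts(y_true, y_pred, num_classes=4)
print("TP:", tp, "FP:", fp, "FN:", fn, "TN:", tn)
```

For every class the four counts add up to the total number of samples, which is a quick sanity check on the bookkeeping.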

2. Accuracy

Accuracy is a commonly used metric, but it is unsuitable when the classes are imbalanced, and most medical data is imbalanced.

$$Accuracy = \frac{Correct}{Total}$$

$$Accuracy = \frac{TP + TN}{TP + TN + FP + FN}$$
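A small, made-up example shows why accuracy can mislead on imbalanced data. The 95/5 split and the always-majority "model" below are assumptions chosen purely for illustration.

```python
import numpy as np

# Hypothetical imbalanced data: 95 normal beats (class 0), 5 abnormal beats (class 1)
y_true = np.array([0] * 95 + [1] * 5)
# A useless "model" that always predicts the majority class
y_pred = np.zeros_like(y_true)

accuracy = (y_true == y_pred).mean()
# Recall for the minority class: fraction of true class-1 samples that were found
recall_minority = (y_pred[y_true == 1] == 1).mean()

print("accuracy:", accuracy)                # 0.95
print("minority recall:", recall_minority)  # 0.0
```

The accuracy looks high even though not a single abnormal beat is detected, which is why the per-class metrics below are worth checking as well.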

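3. Precision (P)

Precision is defined with respect to the predictions: it is the probability that a sample predicted positive is actually positive. Precision and accuracy look similar but are two entirely different concepts. Precision measures how accurate the predictions are among the samples predicted positive, while accuracy measures the overall correctness of the predictions across both positive and negative samples.

$$Precision = \frac{TP}{TP + FP}$$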

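4. Recall (R)

Recall is defined with respect to the original samples: it is the probability that a sample that is actually positive is predicted positive.

$$Recall = \frac{TP}{TP + FN}$$

Here is a simple example of precision and recall. Suppose there are 10 articles, 4 of which are the ones you are looking for. Your model retrieves 5 articles, but only 3 of those 5 are really ones you want.

The precision is then 3/5 = 60%: of the 5 articles retrieved, 3 are correct. The recall is 3/4 = 75%: of the 4 articles you needed, you found 3. Whether to use precision or recall as the evaluation metric depends on the specific problem.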
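The article example can be checked numerically. In this sketch the two arrays are one possible arrangement of the 10 articles (an assumption; any arrangement with the same counts gives the same result).

```python
import numpy as np

# 10 articles; 1 means "relevant" in y_true and "retrieved" in y_pred
y_true = np.array([1, 1, 1, 1, 0, 0, 0, 0, 0, 0])  # 4 relevant articles
y_pred = np.array([1, 1, 1, 0, 1, 1, 0, 0, 0, 0])  # 5 retrieved, 3 of them relevant

tp = int(np.sum((y_true == 1) & (y_pred == 1)))
fp = int(np.sum((y_true == 0) & (y_pred == 1)))
fn = int(np.sum((y_true == 1) & (y_pred == 0)))

precision = tp / (tp + fp)
recall = tp / (tp + fn)
print(precision, recall)  # 0.6 0.75
```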

5. Macro-precision (macro-P)

Compute the precision of each class, then take the mean. Here n is the number of classes and P_i is the precision of class i.

$$macroP = \frac{1}{n} \sum_{i=1}^{n} P_i$$

6. Macro-recall (macro-R)

Compute the recall of each class, then take the mean, where R_i is the recall of class i.

$$macroR = \frac{1}{n} \sum_{i=1}^{n} R_i$$

7. Macro-F1

$$macroF1 = \frac{2 \times macroP \times macroR}{macroP + macroR}$$
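The macro formulas can be sketched directly with NumPy. One caveat worth knowing: the formula above builds macro-F1 from macro-P and macro-R, whereas scikit-learn's `f1_score(..., average='macro')` takes the mean of the per-class F1 scores, so the two numbers generally differ. The helper name and the toy labels below are hypothetical.

```python
import numpy as np

def per_class_precision_recall(y_true, y_pred, num_classes):
    """Precision and recall of every class, treating it as the positive class."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    precisions, recalls = [], []
    for c in range(num_classes):
        tp = np.sum((y_true == c) & (y_pred == c))
        fp = np.sum((y_true != c) & (y_pred == c))
        fn = np.sum((y_true == c) & (y_pred != c))
        # Treat an undefined ratio (nothing predicted / no true samples) as 0
        precisions.append(tp / (tp + fp) if tp + fp > 0 else 0.0)
        recalls.append(tp / (tp + fn) if tp + fn > 0 else 0.0)
    return np.array(precisions), np.array(recalls)

y_true = [0, 0, 0, 0, 1, 1, 2, 2]
y_pred = [0, 0, 0, 1, 1, 2, 2, 2]
p, r = per_class_precision_recall(y_true, y_pred, num_classes=3)

macro_p = p.mean()
macro_r = r.mean()
# Definition used in the text: harmonic mean of macro-P and macro-R
macro_f1_from_macros = 2 * macro_p * macro_r / (macro_p + macro_r)
# Alternative definition: mean of the per-class F1 scores
macro_f1_mean_of_f1 = (2 * p * r / (p + r)).mean()

print("macro-P:", macro_p, "macro-R:", macro_r)
print("macro-F1 (from macro-P and macro-R):", macro_f1_from_macros)
print("macro-F1 (mean of per-class F1):", macro_f1_mean_of_f1)
```

When comparing scores across tools it helps to check which of the two macro-F1 definitions each tool reports.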

In contrast to the macro versions above, the micro versions first average the TP, FP, TN and FN entries across the per-class confusion matrices, then apply the P and R formulas to obtain micro-P and micro-R, and finally compute micro-F1 from micro-P and micro-R.

8. Micro-precision (micro-P)

$$microP = \frac{\overline{TP}}{\overline{TP} + \overline{FP}}$$

9. Micro-recall (micro-R)

$$microR = \frac{\overline{TP}}{\overline{TP} + \overline{FN}}$$

10. Micro-F1

$$microF1 = \frac{2 \times microP \times microR}{microP + microR}$$
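A short NumPy sketch of the micro formulas, using made-up labels. For single-label multi-class data every wrong prediction is at once a false positive for one class and a false negative for another, so micro-P, micro-R, micro-F1 and accuracy all coincide; this is also why the micro values in the sample output of section 1.3.2 equal the accuracy.

```python
import numpy as np

y_true = np.array([0, 0, 0, 0, 1, 1, 2, 2])
y_pred = np.array([0, 0, 0, 1, 1, 2, 2, 2])
classes = np.unique(y_true)

tp = np.array([np.sum((y_true == c) & (y_pred == c)) for c in classes])
fp = np.array([np.sum((y_true != c) & (y_pred == c)) for c in classes])
fn = np.array([np.sum((y_true == c) & (y_pred != c)) for c in classes])

# Averaging the counts or summing them gives the same ratio,
# because the common factor 1/len(classes) cancels out.
micro_p = tp.mean() / (tp.mean() + fp.mean())
micro_r = tp.mean() / (tp.mean() + fn.mean())
micro_f1 = 2 * micro_p * micro_r / (micro_p + micro_r)
accuracy = np.mean(y_true == y_pred)

print(micro_p, micro_r, micro_f1, accuracy)
```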

1.3 Code examples

This part gives examples of reading the data and computing the evaluation metrics.

1.3.1 Reading the data

import pandas as pd

import numpy as np

path='./data/'

train_data=pd.read_csv(path+'train.csv')

test_data=pd.read_csv(path+'testA.csv')

print('Train data shape:',train_data.shape)

print('TestA data shape:',test_data.shape)

Train data shape: (100000, 3)

TestA data shape: (20000, 2)

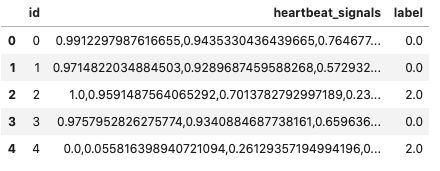

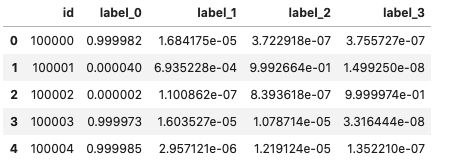

train_data.head()

1.3.2 Classification metric examples

Example of computing multi-class evaluation metrics:

from sklearn.metrics import accuracy_score, precision_score, recall_score
from sklearn.metrics import f1_score

y_test = [1, 1, 1, 1, 1, 2, 2, 2, 2, 3, 3, 3, 4, 4, 5, 5, 6, 6, 6, 0, 0, 0, 0]     # true labels
y_predict = [1, 1, 1, 3, 3, 2, 2, 3, 3, 3, 4, 3, 4, 3, 5, 1, 3, 6, 6, 1, 1, 0, 6]  # predicted labels

# Accuracy
print("accuracy:", accuracy_score(y_test, y_predict))

# Precision: macro-averaged, then micro-averaged
print("macro_precision:", precision_score(y_test, y_predict, average='macro'))
print("micro_precision:", precision_score(y_test, y_predict, average='micro'))

# Recall: macro-averaged, then micro-averaged
print("macro_recall:", recall_score(y_test, y_predict, average='macro'))
print("micro_recall:", recall_score(y_test, y_predict, average='micro'))

# F1: macro-averaged, then micro-averaged
print("macro_f1:", f1_score(y_test, y_predict, average='macro'))
print("micro_f1:", f1_score(y_test, y_predict, average='micro'))

accuracy: 0.5217391304347826

macro_precision: 0.7023809523809524

micro_precision: 0.5217391304347826

macro_recall: 0.5261904761904762

micro_recall: 0.5217391304347826

macro_f1: 0.5441558441558441

micro_f1: 0.5217391304347826

def abs_sum(y_pre, y_tru):
    # y_pre: matrix of predicted probabilities
    # y_tru: matrix of true classes (one-hot encoded)
    y_pre = np.array(y_pre)
    y_tru = np.array(y_tru)
    loss = sum(sum(abs(y_pre - y_tru)))
    return loss

y_pre = [[0.1, 0.1, 0.7, 0.1], [0.1, 0.1, 0.7, 0.1]]
y_tru = [[0, 0, 1, 0], [0, 0, 1, 0]]
print(abs_sum(y_pre, y_tru))


1.2

2. Baseline

2.1 Importing third-party packages

import os

import gc

import math

import pandas as pd

import numpy as np

import lightgbm as lgb

import xgboost as xgb

from catboost import CatBoostRegressor

from sklearn.linear_model import SGDRegressor, LinearRegression, Ridge

from sklearn.preprocessing import MinMaxScaler

from sklearn.model_selection import StratifiedKFold, KFold

from sklearn.metrics import log_loss

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import OneHotEncoder

from tqdm import tqdm

import matplotlib.pyplot as plt

import time

import warnings

warnings.filterwarnings('ignore')

2.2 Reading the data

train = pd.read_csv('./data/train.csv')

test=pd.read_csv('./data/testA.csv')

train.head()

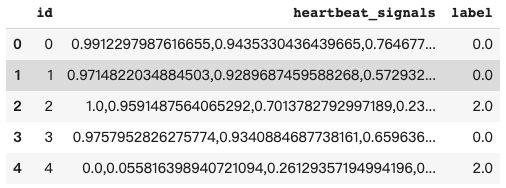

test.head()

2.3 Data preprocessing

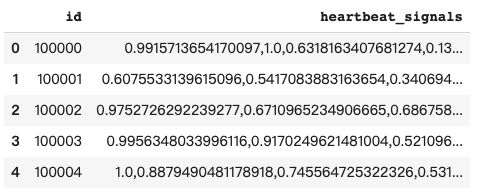

def reduce_mem_usage(df):
    # Downcast every numeric column to the smallest dtype that still covers its value range
    start_mem = df.memory_usage().sum() / 1024**2
    print('Memory usage of dataframe is {:.2f} MB'.format(start_mem))

    for col in df.columns:
        col_type = df[col].dtype

        if col_type != object:
            c_min = df[col].min()
            c_max = df[col].max()
            if str(col_type)[:3] == 'int':
                if c_min > np.iinfo(np.int8).min and c_max < np.iinfo(np.int8).max:
                    df[col] = df[col].astype(np.int8)
                elif c_min > np.iinfo(np.int16).min and c_max < np.iinfo(np.int16).max:
                    df[col] = df[col].astype(np.int16)
                elif c_min > np.iinfo(np.int32).min and c_max < np.iinfo(np.int32).max:
                    df[col] = df[col].astype(np.int32)
                elif c_min > np.iinfo(np.int64).min and c_max < np.iinfo(np.int64).max:
                    df[col] = df[col].astype(np.int64)
            else:
                if c_min > np.finfo(np.float16).min and c_max < np.finfo(np.float16).max:
                    df[col] = df[col].astype(np.float16)
                elif c_min > np.finfo(np.float32).min and c_max < np.finfo(np.float32).max:
                    df[col] = df[col].astype(np.float32)
                else:
                    df[col] = df[col].astype(np.float64)
        else:
            # Non-numeric columns are stored as categories
            df[col] = df[col].astype('category')

    end_mem = df.memory_usage().sum() / 1024**2
    print('Memory usage after optimization is: {:.2f} MB'.format(end_mem))
    print('Decreased by {:.1f}%'.format(100 * (start_mem - end_mem) / start_mem))

    return df
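The effect of the downcast is easy to see on a toy frame. The sketch below uses random values in [0, 1) as a hypothetical stand-in for the signal columns; it also shows the price of `float16`, which keeps only about three significant decimal digits.

```python
import numpy as np
import pandas as pd

# Hypothetical stand-in for the parsed signal columns: values in [0, 1)
rng = np.random.default_rng(0)
df = pd.DataFrame(rng.random((1000, 5)), columns=[f"s_{i}" for i in range(5)])

mem_before = df.memory_usage(index=False).sum()
df_small = df.astype(np.float16)
mem_after = df_small.memory_usage(index=False).sum()
print(mem_before, mem_after)  # 8 bytes vs 2 bytes per value

# float16 rounds the values, so the downcast frame differs slightly from the original
max_error = (df - df_small.astype(np.float64)).abs().to_numpy().max()
print("largest rounding error:", max_error)
```

Whether that rounding matters depends on the model; if in doubt, stopping at `float32` is a more conservative choice.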

# Simple preprocessing: split each signal string into one float column per sampling point
train_list = []
for items in train.values:
    train_list.append([items[0]] + [float(i) for i in items[1].split(',')] + [items[2]])

train = pd.DataFrame(np.array(train_list))
train.columns = ['id'] + ['s_'+str(i) for i in range(len(train_list[0])-2)] + ['label']
train = reduce_mem_usage(train)

test_list = []
for items in test.values:
    test_list.append([items[0]] + [float(i) for i in items[1].split(',')])

test = pd.DataFrame(np.array(test_list))
test.columns = ['id'] + ['s_'+str(i) for i in range(len(test_list[0])-1)]
test = reduce_mem_usage(test)
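The same reshaping can also be written with pandas string methods instead of an explicit Python loop. This is an alternative sketch, not the approach used in the baseline; the two-row CSV text is made up to mimic the layout of train.csv.

```python
import io
import pandas as pd

# Tiny hypothetical file in the same layout as train.csv
csv_text = (
    "id,heartbeat_signals,label\n"
    '0,"0.99,0.94,0.76",0.0\n'
    '1,"0.97,0.92,0.57",2.0\n'
)
raw = pd.read_csv(io.StringIO(csv_text))

# Split every signal string on "," into one column per sampling point
signals = raw["heartbeat_signals"].str.split(",", expand=True).astype("float32")
signals.columns = [f"s_{i}" for i in range(signals.shape[1])]

wide = pd.concat([raw[["id"]], signals, raw[["label"]]], axis=1)
print(wide)
```

Keeping `id` and `label` out of the float conversion also preserves their original dtypes, which the loop version loses when everything passes through one NumPy array.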

2.4 Preparing the training and test data

x_train = train.drop(['id','label'], axis=1)

y_train = train['label']

x_test=test.drop(['id'], axis=1)

2.5 Model training

def abs_sum(y_pre, y_tru):
    y_pre = np.array(y_pre)
    y_tru = np.array(y_tru)
    loss = sum(sum(abs(y_pre - y_tru)))
    return loss

def cv_model(clf, train_x, train_y, test_x, clf_name):
    folds = 5
    seed = 2021
    kf = KFold(n_splits=folds, shuffle=True, random_state=seed)
    test = np.zeros((test_x.shape[0], 4))

    cv_scores = []
    # Note: scikit-learn 1.2 renamed `sparse` to `sparse_output`; newer versions need the new name
    onehot_encoder = OneHotEncoder(sparse=False)
    for i, (train_index, valid_index) in enumerate(kf.split(train_x, train_y)):
        print('************************************ {} ************************************'.format(str(i+1)))
        trn_x, trn_y = train_x.iloc[train_index], train_y.iloc[train_index]
        val_x, val_y = train_x.iloc[valid_index], train_y.iloc[valid_index]

        if clf_name == "lgb":
            train_matrix = clf.Dataset(trn_x, label=trn_y)
            valid_matrix = clf.Dataset(val_x, label=val_y)

            params = {
                'boosting_type': 'gbdt',
                'objective': 'multiclass',
                'num_class': 4,
                'num_leaves': 2 ** 5,
                'feature_fraction': 0.8,
                'bagging_fraction': 0.8,
                'bagging_freq': 4,
                'learning_rate': 0.1,
                'seed': seed,
                # Note: nthread and n_jobs are both aliases of num_threads
                'nthread': 28,
                'n_jobs': 24,
                'verbose': -1,
            }

            # Note: LightGBM 4.0 removed verbose_eval and early_stopping_rounds from train();
            # newer versions use the log_evaluation and early_stopping callbacks instead
            model = clf.train(params,
                              train_set=train_matrix,
                              valid_sets=valid_matrix,
                              num_boost_round=2000,
                              verbose_eval=100,
                              early_stopping_rounds=200)
            val_pred = model.predict(val_x, num_iteration=model.best_iteration)
            test_pred = model.predict(test_x, num_iteration=model.best_iteration)

        # One-hot encode the validation labels so they can be compared with the probabilities
        val_y = np.array(val_y).reshape(-1, 1)
        val_y = onehot_encoder.fit_transform(val_y)
        print('Predicted probability matrix:')
        print(test_pred)
        test += test_pred
        score = abs_sum(val_y, val_pred)
        cv_scores.append(score)
        print(cv_scores)

    print("%s_score_list:" % clf_name, cv_scores)
    print("%s_score_mean:" % clf_name, np.mean(cv_scores))
    print("%s_score_std:" % clf_name, np.std(cv_scores))
    # Average the test predictions over the folds
    test = test / kf.n_splits

    return test

def lgb_model(x_train, y_train, x_test):
    lgb_test = cv_model(lgb, x_train, y_train, x_test, "lgb")
    return lgb_test

lgb_test = lgb_model(x_train, y_train, x_test)

************************************ 1 ************************************

Training until validation scores don't improve for 200 rounds.

[100] valid_0's multi_logloss: 0.064112

[200] valid_0's multi_logloss: 0.0459167

[300] valid_0's multi_logloss: 0.0408373

[400] valid_0's multi_logloss: 0.0392399

[500] valid_0's multi_logloss: 0.0392175

[600] valid_0's multi_logloss: 0.0405053

Early stopping, best iteration is:

[463] valid_0's multi_logloss: 0.0389485

Predicted probability matrix:

[[9.99961153e-01 3.79594757e-05 5.40269890e-07 3.47753326e-07]

[4.99636350e-05 5.92518438e-04 9.99357510e-01 8.40463147e-09]

[1.13534131e-06 6.17413877e-08 5.21828651e-07 9.99998281e-01]

...

[1.22083700e-01 2.13622465e-04 8.77693686e-01 8.99180470e-06]

[9.99980119e-01 1.98198246e-05 3.10446404e-08 2.99597008e-08]

[9.94006248e-01 1.02828877e-03 3.81770253e-03 1.14776091e-03]]

[579.1476207255505]

************************************ 2 ************************************

Training until validation scores don't improve for 200 rounds.

[100] valid_0's multi_logloss: 0.0687167

[200] valid_0's multi_logloss: 0.0502461

[300] valid_0's multi_logloss: 0.0448571

[400] valid_0's multi_logloss: 0.0434752

[500] valid_0's multi_logloss: 0.0437877

[600] valid_0's multi_logloss: 0.0451813

Early stopping, best iteration is:

[436] valid_0's multi_logloss: 0.0432897

Predicted probability matrix:

[[9.99991739e-01 7.88513434e-06 2.75344752e-07 1.00915251e-07]

[2.49685427e-05 2.43497381e-04 9.99731529e-01 4.61614730e-09]

[1.44652230e-06 5.27814431e-08 8.75834875e-07 9.99997625e-01]

...

[1.73518192e-02 6.25526922e-04 9.82020954e-01 1.69956047e-06]

[9.99964916e-01 3.49310996e-05 6.38724919e-08 8.85421858e-08]

[9.49334496e-01 2.90531517e-03 4.51978522e-02 2.56233644e-03]]

[579.1476207255505, 604.2307776963927]

************************************ 3 ************************************

Training until validation scores don't improve for 200 rounds.

[100] valid_0's multi_logloss: 0.0618889

[200] valid_0's multi_logloss: 0.0430953

[300] valid_0's multi_logloss: 0.0375819

[400] valid_0's multi_logloss: 0.0352853

[500] valid_0's multi_logloss: 0.0351532

[600] valid_0's multi_logloss: 0.0358523

Early stopping, best iteration is:

[430] valid_0's multi_logloss: 0.0349329

Predicted probability matrix:

[[9.99983568e-01 1.56724846e-05 1.94436783e-07 5.64651188e-07]

[2.99349484e-05 2.43726437e-04 9.99726329e-01 9.67287310e-09]

[2.31218700e-06 1.82129075e-07 6.88966798e-07 9.99996817e-01]

...

[2.96181314e-02 2.17104772e-04 9.70149950e-01 1.48136138e-05]

[9.99966536e-01 3.34076405e-05 4.80303648e-08 7.93476494e-09]

[9.73829094e-01 5.30951041e-03 1.50529670e-02 5.80842848e-03]]

[579.1476207255505, 604.2307776963927, 555.3013640683623]

************************************ 4 ************************************

Training until validation scores don't improve for 200 rounds.

[100] valid_0's multi_logloss: 0.0686223

[200] valid_0's multi_logloss: 0.0500697

[300] valid_0's multi_logloss: 0.045032

[400] valid_0's multi_logloss: 0.0440971

[500] valid_0's multi_logloss: 0.0446604

[600] valid_0's multi_logloss: 0.0464719

Early stopping, best iteration is:

[441] valid_0's multi_logloss: 0.0439851

Predicted probability matrix:

[[9.99993398e-01 5.63383991e-06 4.13276650e-07 5.54430625e-07]

[4.23117526e-05 1.07414935e-03 9.98883518e-01 2.07032580e-08]

[1.48865216e-06 1.48204734e-07 6.84974277e-07 9.99997678e-01]

...

[1.76412184e-02 1.88982715e-04 9.82166158e-01 3.64074385e-06]

[9.99938870e-01 6.10399865e-05 4.18069924e-08 4.79151711e-08]

[8.63192676e-01 1.44352297e-02 1.12629861e-01 9.74223271e-03]]

[579.1476207255505, 604.2307776963927, 555.3013640683623, 605.9808495854531]

************************************ 5 ************************************

Training until validation scores don't improve for 200 rounds.

[100] valid_0's multi_logloss: 0.0620219

[200] valid_0's multi_logloss: 0.0442736

[300] valid_0's multi_logloss: 0.038578

[400] valid_0's multi_logloss: 0.0372811

[500] valid_0's multi_logloss: 0.03731

[600] valid_0's multi_logloss: 0.0382158

Early stopping, best iteration is:

[439] valid_0's multi_logloss: 0.036957

Predicted probability matrix:

[[9.99982194e-01 1.70578170e-05 4.38130927e-07 3.10112892e-07]

[5.29666403e-05 1.31372254e-03 9.98633279e-01 3.15655986e-08]

[2.00659963e-06 1.05574382e-07 1.42520417e-06 9.99996463e-01]

...

[1.00123126e-02 4.74424633e-05 9.89939074e-01 1.17041518e-06]

[9.99920877e-01 7.90255835e-05 7.54952546e-08 2.23046247e-08]

[9.65319082e-01 3.09196193e-03 2.49116248e-02 6.67733096e-03]]

[579.1476207255505, 604.2307776963927, 555.3013640683623, 605.9808495854531, 570.2883889772514]

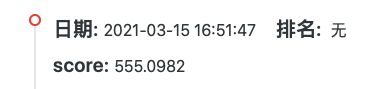

lgb_score_list: [579.1476207255505, 604.2307776963927, 555.3013640683623, 605.9808495854531, 570.2883889772514]

lgb_score_mean: 582.9898002106021

lgb_score_std: 19.60869787836831
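The baseline splits with `KFold`, although `StratifiedKFold` is imported as well. With imbalanced classes, a stratified split keeps the class ratio the same in every fold, which can make the fold scores easier to compare. The sketch below is an assumption-laden illustration on made-up labels (90 of class 0, 10 of class 1), not a claim about the competition data.

```python
import numpy as np
from sklearn.model_selection import KFold, StratifiedKFold

# Hypothetical imbalanced labels: 90 samples of class 0, 10 of class 1
y = np.array([0] * 90 + [1] * 10)
X = np.zeros((len(y), 1))  # the features do not influence the split itself

def minority_counts(splitter):
    """Number of class-1 samples in each validation fold."""
    return [int(y[valid_idx].sum()) for _, valid_idx in splitter.split(X, y)]

plain = minority_counts(KFold(n_splits=5, shuffle=True, random_state=2021))
stratified = minority_counts(StratifiedKFold(n_splits=5, shuffle=True, random_state=2021))

print("KFold:          ", plain)
print("StratifiedKFold:", stratified)  # every fold keeps the 9:1 class ratio
```

Swapping the splitter in `cv_model` would be a one-line change, since `kf.split(train_x, train_y)` already passes the labels.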

2.6 Prediction results

temp=pd.DataFrame(lgb_test)

result=pd.read_csv('sample_submit.csv')

result['label_0']=temp[0]

result['label_1']=temp[1]

result['label_2']=temp[2]

result['label_3']=temp[3]

result.to_csv('submit.csv',index=False)
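Before uploading, it can be worth checking that the file has the expected shape and that each row of probabilities sums to 1. The sketch below builds a submission frame from a made-up probability matrix; the `id` column name and the two ids are assumptions, while the `label_0` to `label_3` column names follow the code above.

```python
import numpy as np
import pandas as pd

# Hypothetical stand-in for lgb_test: averaged class probabilities, one row per test sample
probs = np.array([
    [0.97, 0.01, 0.01, 0.01],
    [0.05, 0.05, 0.85, 0.05],
])
ids = [100000, 100001]  # hypothetical test ids

submission = pd.DataFrame(probs, columns=[f"label_{k}" for k in range(probs.shape[1])])
submission.insert(0, "id", ids)

# Sanity checks before writing the file
assert submission.shape == (len(ids), 5)
assert np.allclose(submission.filter(like="label_").sum(axis=1), 1.0)
print(submission)
```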