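- ThinkSound V2: one-click dubbing for silent videos and matching sound effects for AI-generated videos; supports 50-series GPUs; one-click bundle download

昨日之日2006

AI speech, audio and video, artificial intelligence

ThinkSound is the first audio generation model open-sourced by Alibaba's Tongyi Lab. It lets AI work like a professional sound designer, generating highly realistic audio that closely matches a video's visual content. ThinkSound can be applied directly to film and TV post-production, automatically matching accurate ambient noise and explosion effects to AI-generated videos; in game development, it generates adaptive sound effects in real time for dynamic scenes such as changing rainfall; it can also support accessible video production, generating synchronized scene descriptions and ambient sound for visually impaired users. The ThinkSound V2 release shared today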

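- Mastering Docker | Deploying the gopeed download tool with Docker

心随_风动

Mastering Docker, docker, containers, operations

Mastering Docker | Deploying the gopeed download tool with Docker. Contents: Preface; 1. About gopeed (overview, key features); 2. System requirements (environment requirements, environment check, Docker version check, OS version check); 3. Deploying the gopeed service (pulling the image, creating the container, checking container status, checking the service port, security settings); 4. Accessing the gopeed application; 5. Testing and downloading; 6. Summary. Preface: In today's era of information explosion, acquiring and managing network resources efficiently has become especially important. Whether downloading large files or carrying out everyday data transfers, a stable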

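- A complete guide to representation extraction in deep learning models

ZhangJiQun&MXP

teaching, 2024 large models and compute, 2021 AI python, deep learning, artificial intelligence, python, embedding, language models

Methods for extracting representations inside a model. In natural language processing (NLP), a "representation" is the conversion of text (words, phrases, sentences, documents, and so on) into a numerical form a computer can work with (such as vectors or matrices). The core goal is to capture the semantics, syntax, and contextual dependencies of language. Representation techniques can be classified along dimensions such as static vs. dynamic, with vs. without context, and whether external knowledge is incorporated. 1. Traditional static representations (no context, mainly word-level). These methods assign each word a fixed vector and ignore its meaning in a specific context (they cannot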

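- Video analysis: teaching AI to understand moving images

随机森林404

computer vision, audio and video, artificial intelligence, microsoft

Introduction: a revolution in dynamic visual understanding. In the age of digital information explosion, video has become the dominant media format. According to statistics, more than 500 hours of video are uploaded to YouTube every minute, and 82% of global internet traffic comes from video data. Manual processing cannot keep up with this volume of content, which is exactly where AI video analysis comes in. Video analysis gives machines the ability to "understand" moving images, so that they can automatically interpret, explain, and even predict the content of a video. This breakthrough is fundamentally changing how we interact with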

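- Satellite analysis series: quantifying wildfire burned area with satellite imagery; determining forest-fire burned area from Sentinel-2 images using Python in Google Colab

知识大胖

NVIDIA GPU and large language model development tutorials, python, sentinel, programming languages

Introduction. A few years ago, when most climate models predicted that floods, heat waves, and wildfires would become more frequent unless we took the necessary measures, I did not expect these unusual disasters to become common events. Among them, wildfires destroy large areas of forest every year. If you look up tables of major wildfires in different places, you will find alarming statistics on how much forest the planet is losing to fire. In this tutorial, I combine my earlier stories on downloading and processing satellite imagery and visualizing wildfires to quantify the burned area of one of the major wildfires in California. Unlike the earlier posts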

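- [AI large models] An in-depth look at LLM architectures: BERT vs. GPT vs. T5

我爱一条柴ya

AI learning notes, ai, artificial intelligence, AI programming, python

Introduction. The arrival of the Transformer architecture (Vaswani et al., 2017) fundamentally changed natural language processing (NLP). Built on it, BERT, GPT, and T5 represent three different model paradigms that have driven the evolution of pretrained language models. Understanding their differences is a foundation for developing and studying LLMs. 1. Core architecture comparison. Feature | BERT (Bidirectional Encoder) | GPT (Generative Pre-trained Transformer) | T5 (Text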

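- Python crawling in practice: scraping Xinhuanet news data with current tooling

Python爬虫项目

2025 hands-on crawler projects, python, crawlers, programming languages, scrapy, audio and video

1. Preface. In today's era of information explosion, web crawling has become an important way to obtain internet data. As an authoritative national news outlet, Xinhuanet publishes a large volume of high-quality news every day, and this data is valuable for public-opinion analysis, market research, natural language processing, and other fields. This article describes in detail how to build an efficient, stable Xinhuanet news crawler with up-to-date Python tooling. 2. Choosing the crawler stack. 2.1 Technology stack. For the Xinhuanet crawler we chose the following stack: request library: httpx (supports HTTP/2, asynchronous requests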

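- NLP, knowledge graphs, large models: personal study notes

macken9999

natural language processing, knowledge graphs and large models, natural language processing, knowledge graphs, learning

1. Research and applications of three technologies: natural language processing, knowledge graphs, and dialogue systems: https://github.com/lihanghang/NLP-Knowledge-Graph Deep learning, natural language processing (NLP), knowledge graphs: the knowledge graph construction pipeline [ontology construction, knowledge extraction (entity extraction, relation extraction, attribute extraction), knowledge representation, knowledge fusion, knowledge storage], 元気森林, 博客园 (cnblogs): https://www.cnblogs.com/-402/p/16529422.htm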

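- The MCP protocol: how the "universal socket" of the AI era is reshaping the IT ecosystem and its future

The MCP protocol: how the "universal socket" of the AI era is reshaping the IT ecosystem and its future. Amid the explosive growth of artificial intelligence, a protocol called the Model Context Protocol (MCP) is rapidly reshaping the underlying logic of the IT industry. First released by Anthropic in November 2024, MCP won support from global technology giants including OpenAI, Google, Amazon, Alibaba, and Tencent within just half a year. The industry has called it the HTTP or the USB-C of the AI era, and it is becoming the bridge that connects large models with the real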

- From recurrent neural networks (RNNs) to Transformer attention: how neural network architectures were transformed

熊猫钓鱼>_>

neural networks, rnn, transformer

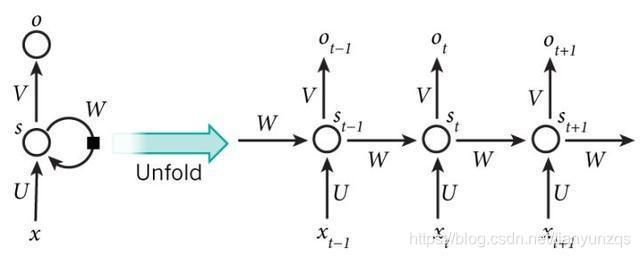

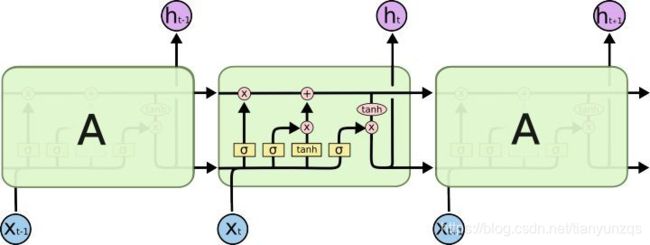

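1. Introduction. In natural language processing and sequence modeling, neural network architectures have evolved significantly. The move from early recurrent neural networks (RNNs) to the modern Transformer represents a major advance in how deep learning handles sequence data. This article compares the two architectures in depth, analyzes how they work and their strengths and weaknesses, and uses experimental results to show how their performance differs in practice. 2. Recurrent neural networks (RNNs). 2.1 Basic principle. A recurrent neural network is an architecture designed specifically for sequence data. The core idea of an RNN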

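- [Machine learning notes I] 9. Feature scaling

巴伦是只猫

machine learning, machine learning notes, artificial intelligence

Feature scaling explained. Feature scaling is a key preprocessing step in machine learning. It brings the numeric ranges of different features onto a similar scale, which speeds up training, improves performance, and keeps any single feature from dominating the model. 1. Why is feature scaling needed? (1) Background. Inconsistent units, for example: feature 1: age (range 0-100); feature 2: income (range 0-1,000,000). The problem for gradient descent: features with a large scale (such as income) pull the gradient update away from the optimal path, so convergence is slow. Features with a small scale (such as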
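To make the age and income example concrete, here is a minimal sketch of two common scaling schemes, min-max scaling and standardization (z-score), in plain Python. The function names and sample values are illustrative assumptions, not taken from the original article.

```python
from statistics import mean, pstdev


def min_max_scale(values):
    """Linearly rescale values into the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant feature carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def standardize(values):
    """Shift to zero mean and scale to unit (population) standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


# Hypothetical sample data on very different scales.
ages = [20, 40, 60, 100]
incomes = [10_000, 50_000, 200_000, 1_000_000]

print(min_max_scale(ages))
print(min_max_scale(incomes))
print(standardize(ages))
```

After either transform, age and income occupy comparable ranges, so neither dominates a gradient step purely because of its units.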

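- [osgEarth] Model effects implemented in osgEarth: radar waves, communication links, explosions, exhaust flames, trajectories, text labels, and more

bailang_zhizun

OSG, osgEarth, QT, qt, c++

I have been studying osgEarth for a while, so here is a record of what I have achieved recently. Mainly, I implemented a number of model effects in an osgEarth 3D scene. Some of them borrow from display effects by Xi'an Hengge (西安恒歌), although mine of course cannot compare with theirs (doge). Along the way I also learned a lot from the materials and articles of Mr. Yang (freesouths), and I thank him and his team for sharing so generously. 1. A simple simulation scene. A simple simulation scene, for anyone interested. A simple aircraft combat simulation scene built on osgEarth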

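- A detailed comparison of current mainstream image classification models

@comefly

chat, linux, operations, servers

Below is a detailed comparison of current mainstream image classification models, organized by performance, architectural characteristics, and application scenarios. 1. Mainstream architectures and quantitative comparison

| Model | Architecture | Key features | ImageNet Top-1 accuracy | Parameters (millions) | Compute efficiency | Typical use cases |
| --- | --- | --- | --- | --- | --- | --- |
| ResNet | CNN | Residual connections address vanishing gradients and support very deep networks (e.g. ResNet-152) | 76.1% | 25.6 | Medium | General classification, object detection |
| ViT | Transformer | Splits the image into patches and uses a standard Transforme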

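- [Deep learning Q&A] How do you detect and fix numerical instability in RNN training in practice?

云博士的AI课堂

large model development and practice, a Harvard postdoc's guide to machine learning and deep learning, deep learning, rnn, artificial intelligence, tensorflow, pytorch, neural networks, machine learning

Detecting and fixing numerical instability in RNN training in practice. Contents: introduction and background; explanation of the principles; code walkthrough and implementation; application scenarios and case studies; experiment design and analysis of results; performance analysis and technical comparison; common problems and solutions; novelty and differences; limitations and challenges; recommendations and further research; further reading and resources; diagrams and interactive content; language style and accessible explanation; discussion. 1. Introduction and background. Recurrent neural networks (RNNs) perform well on sequence data, but training often suffers from vanishing and exploding gradients, which cause numerical instability. When the network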

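- MySQL: database and table sharding

爱吃汉堡的Saul.

databases, mysql, databases

Introduction: with the rapid growth of internet businesses, data volume and concurrent requests have grown explosively. A traditional single-machine database architecture, even after vertical scaling (better hardware, SQL tuning, read/write splitting), will eventually hit a performance bottleneck. The main challenges are: Single-table performance limits: when a table reaches tens or hundreds of millions of rows, the B+ tree index gets deeper and query efficiency drops significantly. In addition, DDL (data definition language) operations such as adding an index or altering the table structure can take hours and lock the table for a long time, seriously affecting availability. Single-database resource bottlenecks: a single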

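- [Tested, free] CatBoost tutorials project user guide

CatBoost tutorials project user guide. tutorials project URL: https://gitcode.com/gh_mirrors/tutorials1/tutorials 1. Project introduction. CatBoost is an efficient, flexible, and easy-to-use gradient boosting library that is especially well suited to categorical features. It was developed by Yandex and is widely used in machine learning and data science. CatBoost offers a rich feature set, including automatic handling of categorical features, GPU training, built-in cross-validation, and mod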

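- Three key technologies of a data security audit platform: log analysis, behavior monitoring, and intelligent alerting

KKKlucifer

security, algorithms

In the wave of digitalization, data security auditing is the "watchtower" that guards an enterprise's core assets. Using three technologies (log analysis, behavior monitoring, and intelligent alerting), a data security audit platform builds a closed loop of end-to-end monitoring, anomaly detection, and rapid response to strengthen the defenses around data. The sections below cover technical principles, practical value, and industry applications. Log analysis: the "DNA map" of data security. 1. Multi-source log fusion. Implementation: agents collect logs from more than 200 sources such as operating systems, databases, and network devices, and regular expressions and NLP techniques are used to parse unstructured logs (such as "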

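- A practical guide to reinforcement learning algorithms in Python (part 2)

Original: annas-archive.org/md5/e3819a6747796b03b9288831f4e2b00c Translator: 飞龙 License: CC BY-NC-SA 4.0 Chapter 6: Learning stochastic optimization and PG optimization. So far we have explored and developed value-based reinforcement learning algorithms. These algorithms find a good policy by learning a value function. Although they perform well, their use is constrained by some inherent limitations. In this chapter we introduce a new class of algorithms, policy gradient methods, which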

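- Predicting CSI 300 rises and falls with LightGBM in Qlib

DeepReinforce

quantitative investing

Qlib is an open-source library designed for research in quantitative finance and algorithmic trading. This article configures a LightGBM-based gradient boosted decision tree (GBDT) model and trains and predicts on a financial dataset containing 158 technical-indicator features. 1. Import the required modules: from qlib.contrib.model.gbdt import LGBModel from qlib.contrib.data.handler import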

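- Data structures and algorithms from scratch, chapter 4: basic algorithms, sorting (overview)

qqxhb

data structures and algorithms from scratch, algorithms, programming for schoolchildren, algorithms, sorting algorithms, data structures, insertion, bucket, merge

Sorting part 1 (bubble / selection / insertion); sorting part 2 (merge / heap / quick); sorting part 3 (counting / radix / bucket). 4.1.10 Comparison of sorting algorithms. Performance comparison: the table below summarizes the performance characteristics of the sorting algorithms we have studied:

| Algorithm | Average time | Worst-case time | Best-case time | Space | Stable | Comparison-based |
| --- | --- | --- | --- | --- | --- | --- |
| Bubble sort | O(n²) | O(n²) | O(n) | O(1) | Yes | Yes |
| Selection sort | O(n²) | O(n²) | O(n²) | O(1) | No | Yes |
| Insertion sort | O(n²) | O(n²) | O(n) | O(1) | Yes | Yes |
| Merge sort | O(nlo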

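- Principles and application examples of Transformer, BERT, and related models

程序猿全栈の董(董翔)

artificial intelligence, popular technology areas, transformer, bert, deep learning

Principles and application examples of Transformer, BERT, and related models. How the Transformer works: the Transformer is a deep learning architecture based on the attention mechanism, proposed by Vaswani et al. in the 2017 paper "Attention Is All You Need". Unlike traditional recurrent neural networks (RNNs) and convolutional neural networks (CNNs), the Transformer relies entirely on self-attention to model global dependencies in the input sequence. Core components: multi-head self-attention (Mul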

- ER review paper reading: Emotion recognition in EEG signals using deep learning methods: A review

今天早睡了

emotion recognition, Emotion Recognition, paper reading, deep learning, artificial intelligence

Emotion recognition in EEG signals using deep learning methods: A review. Q1 journal, 2023. Paper link: https://d1wqtxts1xzle7.cloudfront.net/105887899/emotionreview-libre.pdf?1695460941=&response-content-disposition=inline%3B+f

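- [Paper reading notes] TimesURL: Self-supervised Contrastive Learning for Universal Time Series

少写代码少看论文多多睡觉

#paper reading notes, paper reading, notes

TimesURL: Self-supervised Contrastive Learning for Universal Time Series Representation Learning. Abstract: the paper aims to learn universal time series representations that work across many downstream tasks, and notes that this is challenging in practice but valuable. Recently, researchers have tried to borrow from the success of self-supervised contrastive learning (SSCL) in computer vision (CV) and natural language processing (NLP) to tackle time series representation.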

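- lstm input data dimensions_[mcj] LSTM inputs and outputs in pytorch explained || LSTM inputs and outputs in detail

萬重

lstm input data dimensions

I have recently been trying to learn about LSTMs, and while testing code one thing kept confusing me: the LSTM's inputs and outputs. I was never sure how to define them in pytorch. First, an example from the official pytorch docs: # first import the modules the LSTM needs: import torch; import torch.nn as nn  # neural network module; # input vector dimension 10, hidden dimension 20, 2 stacked LSTM layers (can be omitted if it is 1, which is the default) r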

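- lstm input data dimensions_Input shapes and the LSTM stateful option in keras

weixin_39856269

lstm input data dimensions

Addendum: return_sequences and return_state both concern the h and c states within one time slice (step), whereas stateful concerns state carried between different batches. A multi-layer LSTM needs return_sequences=True on the earlier layers, followed by return_sequences=False on the later one. I have recently been learning to build LSTMs with keras and ran into some things I did not understand. Some I have figured out and some I have not. I am writing them down now, because I will soon forget! -_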

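- [Machine learning & deep learning] Why should class ratios in a classification task be close to 1:1?

一叶千舟

deep learning [theory], machine learning, deep learning, artificial intelligence

Contents: Preface; 1. What is class imbalance?; 2. Why should the class ratio be close to 1:1? (2.1 ⚠ The model becomes "lopsided"; 2.2 Precision and recall are distorted; 2.3 Training is unbalanced and the gradient direction shifts); 3. "Catastrophic consequences" in real cases; 4. How to deal with class imbalance (4.1 Data-level handling; 4.2 Training-level optimization; 4.3 Alternative evaluation metrics); 5. Real-world examples; 6. Simulated scenario: bank credit card fraud detection (6.1 Scenario description; 6.2 The dataset; 6.3 Training results without handling the imbalance; 6.4 What did the model do?; 6.5 Actual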

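- Is assignment between golang's native types atomic?

无用程序员~

Linux application programming, golang, programming languages, backend

Original code. I wrote the following code in a project:

package id2name

import ("time")

type Id2Name struct { m map[int]string }

func New() (*Id2Name, error) {

m, err := getId2NameMap()

if err != nil { return nil, err }

ins := &Id2Name{ m: m, }

go ins.reload()

return ins, nil

}

f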

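- torch padding and alignment

AI算法网奇

python handbook, python

Contents: row padding; 1. Padding length (Padding); 2. Masking; 3. Sorting optimization (optional). Row padding: import torch; from torch.nn.utils.rnn import pad_sequence; # original sequences (each sequence is a 2-D tensor with a different number of rows); batch_data = [ torch.tensor([[1,2,3]])  # 1 row; # torch.tensor([[4,5,6],[7,8,9]]),  # 2 rows; # tor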
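The padding and masking steps listed above can be sketched without any tensor library. This is a plain-Python illustration of the idea only, not the `pad_sequence` API the snippet imports; the helper name `pad_rows` is hypothetical, and it assumes every sequence is non-empty and all rows have the same width.

```python
def pad_rows(batch, pad_value=0):
    """Pad each sequence with filler rows so all have the same row count.

    Returns (padded, mask); mask holds 1 for real rows and 0 for padding.
    """
    max_rows = max(len(seq) for seq in batch)
    width = len(batch[0][0])  # assumes a shared, non-zero row width
    padded, mask = [], []
    for seq in batch:
        missing = max_rows - len(seq)
        filler = [[pad_value] * width for _ in range(missing)]
        padded.append([list(row) for row in seq] + filler)
        mask.append([1] * len(seq) + [0] * missing)
    return padded, mask


batch = [
    [[1, 2, 3]],             # 1 row
    [[4, 5, 6], [7, 8, 9]],  # 2 rows
]
padded, mask = pad_rows(batch)
print(padded)
print(mask)
```

The mask lets later steps, such as a loss or an attention layer, ignore the filler rows instead of treating the zeros as data.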

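- lstm data input question

AI算法网奇

python basics, lstm, artificial intelligence

lstm. I have 20*6 records: 20 samples, each with 6 historical records, and each record has 5 values. When I feed this to the network, should the input be 20*6*5 or 6*20*5? Your data is: 20 samples (batch size = 20); each sample has 6 historical records (sequence length = 6); each record has 5 values (input size = 5). ✅ The correct input shape is: (20,6,5)  # i.e. batch_size=20, seq_len=6, input_size=5. This assumes that you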
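The two candidate layouts differ only in the order of the first two axes. As general PyTorch background rather than something stated in the snippet, `nn.LSTM` expects `(seq_len, batch, input_size)` by default and `(batch, seq_len, input_size)` when constructed with `batch_first=True`. The sketch below uses nested lists instead of tensors to show the two layouts and how to convert between them; `shape` and `swap_first_two_axes` are hypothetical helpers.

```python
def shape(nested):
    """Return the dimensions of a regular nested list, e.g. (20, 6, 5)."""
    dims = []
    while isinstance(nested, list):
        dims.append(len(nested))
        nested = nested[0]
    return tuple(dims)


def swap_first_two_axes(x):
    """Convert a layout of shape (a, b, c) into shape (b, a, c)."""
    return [list(step) for step in zip(*x)]


batch_size, seq_len, input_size = 20, 6, 5

# Batch-first layout: 20 samples, each with 6 time steps of 5 values.
batch_first = [
    [[0.0] * input_size for _ in range(seq_len)]
    for _ in range(batch_size)
]

# Sequence-first layout: 6 time steps, each holding all 20 samples.
seq_first = swap_first_two_axes(batch_first)

print(shape(batch_first))
print(shape(seq_first))
```

Both layouts hold the same 600 values; what matters is that the layout matches what the layer was configured to expect.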

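- pytorch automatic differentiation

this_show_time

pytorch, artificial intelligence, python, machine learning

Automatic differentiation. 1. Basic concepts (1.1 Tensors; 1.2 Computation graph; 1.3 Backpropagation; 1.4 Gradients); 2. Computing gradients (2.1 Scalar gradients; 2.2 Vector gradients; 2.3 Gradients of multiple scalars; 2.4 Gradients of multiple vectors); 3. Gradient context control (3.1 Controlling gradient computation (with torch.no_grad()); 3.2 Accumulating gradients; 3.3 Zeroing gradients (torch.zero_())). The automatic differentiation module torch.autograd is responsible for automatically computing the gradients of tensor operations,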

- Algorithm

香水浓

java, Algorithm

Bubble sort

public static void sort(Integer[] param) {

for (int i = param.length - 1; i > 0; i--) {

for (int j = 0; j < i; j++) {

int current = param[j];

int next = param[j + 1];
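The Java fragment above is cut off inside the inner loop. As a rough Python sketch of the same loop structure (outer index shrinking from the end, inner index comparing neighbors), the following sorts a copy of the input. The `swapped` early exit is an addition that does not appear in the visible part of the original; it lets the function stop after a single pass over already-sorted input.

```python
def bubble_sort(items):
    """Return a new list with the items in ascending order."""
    data = list(items)  # work on a copy so the caller's list is untouched
    for end in range(len(data) - 1, 0, -1):
        swapped = False
        for j in range(end):
            # Swap neighbors that are out of order; the largest remaining
            # value "bubbles" up to position `end`.
            if data[j] > data[j + 1]:
                data[j], data[j + 1] = data[j + 1], data[j]
                swapped = True
        if not swapped:
            break  # no swaps in this pass means the list is sorted
    return data


print(bubble_sort([5, 2, 9, 1, 5, 6]))
```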

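- mongoDB complex query expressions

开窍的石头

mongodb

1: count

E.g.: db.user.find().count();

Counts the number of documents.

2: not equal, $ne

E.g.: db.user.find({_id:{$ne:3}},{name:1,sex:1,_id:0});

Finds the documents whose id is not equal to 3.

3: greater than, $gt; $gte (greater than or equal)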

- Fixing the JBoss Java heap space exception; jboss OutOfMemoryError: PermGen space

0624chenhong

jvm, jboss

Reposted from

http://blog.csdn.net/zou274/article/details/5552630

Solution:

window->preferences->java->installed jres->edit jre

Set the default VM arguments to -Xms64m -Xmx512m

----------------

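- File upload, download, parsing, and relative paths

不懂事的小屁孩

file upload

This was a bit of a trap: something this simple took me more than a day, even with an expert beside me for guidance and plenty of searching on Baidu.

Here is a summary of the problems I ran into:

For file upload, when uploading from a page, do not try to work with absolute paths. The browser protects client-side information to keep user data from being attacked.

When uploading an image or a file, use a form.

The front end sends a stream to the back end through the form, rather than passing parameters to the back end via ajax. The code is as follows: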

<form action=&

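- How to batch-like posts in QQ空间 (Qzone)

换个号韩国红果果

qq

Purely for fun!!

The logic is simple.

1. Open the browser console and enter the following code.

First, the code that adds the likes:

var tools={};

// add all likes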

function init(){

document.body.scrollTop=10000;

setTimeout(function(){document.body.scrollTop=0;},2000);//加

- Checking whether text is Chinese

灵静志远

Chinese

Method 1:

public class Zhidao {

public static void main(String args[]) {

String s = "sdf灭礌 kjl d{';\fdsjlk是";

int n=0;

for(int i=0; i<s.length(); i++) {

n = (int)s.charAt(i);

if((
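The Java loop above is cut off before its range check. One common approach, shown here as a sketch rather than as the original author's code, is to test each character against the basic CJK Unified Ideographs block, U+4E00 to U+9FFF. The function names are illustrative, and this range does not cover the rarer extension blocks.

```python
def count_cjk(text):
    """Count characters in the basic CJK Unified Ideographs block."""
    return sum(1 for ch in text if "\u4e00" <= ch <= "\u9fff")


def contains_chinese(text):
    """Return True if at least one character falls in that block."""
    return count_cjk(text) > 0


print(count_cjk("abc中文123"))
print(contains_chinese("hello"))
```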

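- Notes after a phone interview

a-john

interviews

Today I had a phone interview. As someone who is still a beginner, I was nervous for quite a while.

The questions were layered: within each topic they went from easy to hard. Here is a summary of the parts I felt I answered poorly:

When collection classes came up and I was asked to name a few common ones, I said list and map without thinking.

Then I was asked to name a few types of list and map:

For list: ArrayList, LinkedList. When asked about the difference between them, I froze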

- Using ESCAPE in MSSQL

aijuans

MSSQL

IF OBJECT_ID('tempdb..#ABC') is not null

drop table tempdb..#ABC

create table #ABC

(

PATHNAME NVARCHAR(50)

)

insert into #ABC

SELECT N'/ABCDEFGHI'

UNION ALL SELECT N'/ABCDGAFGASASSDFA'

UNION ALL

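- A simple stored procedure

asialee

mysql, stored procedures, generating data, batch insert

Today I needed to generate a batch of test data in which part of each record varies. I first thought of writing a program to generate it, then realized a stored procedure could do the job, so I wrote one quickly and am recording it here: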

DELIMITER $$

DROP PROCEDURE IF EXISTS inse

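- Cannot convert from HomeFragment_1 to Fragment

百合不是茶

android, import error

I created several classes that extend Fragment and needed to store them in an ArrayList<Fragment>, but the newly created objects could not be added to the list. The reason is simple:

The import used when creating the classes was: import android.app.Fragment;

The import used when creating the list and the objects was: import android.support.v4.ap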

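- Two ways to change the port in Weblogic10

bijian1013

weblogic, port number, configuration management, config.xml

1. Change it in the console. 1. Open the console: http://127.0.0.1:7001/console 2. Expand the tree menu on the left: Domain Structure -> Environment -> Servers, then click AdminServer (admin)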

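- mysql commands

征客丶

mysql

1. Connecting to mysql

Go to the mysql installation directory;

$ bin/mysql -h [host IP; when logging in to a local mysql you can omit -h and go straight to -u] -u [userName] -p

Enter the password and press Enter to connect;

2. Privilege operations [once you know the mysql database well, you can edit the system tables directly and then run mysql> flush privileges; to make the privileges take effect]

1. Granting privileges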

mys

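- [Hive 1] Getting started with Hive

bit1129

hive

Installing and configuring Hive

Hive depends on Hadoop to run, so Hadoop 2.5.2 must be installed first, and Hadoop must be started before Hive is started.

Steps to install and configure Hive

1. Download Hive 0.14.0 from the following address

http://mirror.bit.edu.cn/apache/hive/

2. Unpack hive; in the system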

- Three ways to submit a request with ajax

BlueSkator

Ajax, jquery

1. Submitting a request with ajax

$.ajax({

type:"post",

url : "${ctx}/front/Hotel/getAllHotelByAjax.do",

dataType : "json",

success : function(result) {

try {

for(v

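- Getting started: setting up mongodb in a development environment

braveCS

operations

Installing mongodb on linux

1) Download mongodb-linux-x86_64-rhel62-3.0.4.gz from the official site

2) Unpack it on linux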

gzip -d mongodb-linux-x86_64-rhel62-3.0.4.gz;

mv mongodb-linux-x86_64-rhel62-3.0.4 mongodb-linux-x86_64-rhel62-

- The Beauty of Programming (编程之美): generating the shortest summary

bylijinnan

java, data structures, algorithms, The Beauty of Programming

import java.util.HashMap;

import java.util.Map;

import java.util.Map.Entry;

public class ShortestAbstract {

/**

* The Beauty of Programming: generating the shortest summary

* The scan always maintains a [pBegin,pEnd] range; initialization ensures that the [pBegin,pEnd] ran
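The comment above describes a scan that maintains a [pBegin, pEnd] range. One way to realize that idea is a sliding window that grows on the right until every keyword is covered, then shrinks from the left while coverage holds. The sketch below is an assumption about the intended algorithm, not the original Java; `shortest_summary` and its return convention (inclusive indices, or `None`) are hypothetical.

```python
from collections import Counter


def shortest_summary(words, keywords):
    """Return (begin, end), inclusive, of the shortest span of `words`
    containing every keyword at least once, or None if there is none."""
    need = set(keywords)
    if not need:
        return None
    counts = Counter()  # occurrences of each keyword inside the window
    covered = 0         # number of distinct keywords inside the window
    best = None
    begin = 0
    for end, word in enumerate(words):
        if word in need:
            counts[word] += 1
            if counts[word] == 1:
                covered += 1
        # Shrink from the left while the window still covers all keywords.
        while covered == len(need):
            if best is None or end - begin < best[1] - best[0]:
                best = (begin, end)
            left = words[begin]
            if left in need:
                counts[left] -= 1
                if counts[left] == 0:
                    covered -= 1
            begin += 1
    return best


words = ["x", "a", "y", "b", "a", "b"]
print(shortest_summary(words, ["a", "b"]))
```

Each index enters and leaves the window at most once, so the scan is linear in the number of words.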

- Parsing json data, and typeof

chengxuyuancsdn

js, typeof, json parsing

// json format

var people='{"authors": [{"firstName": "AAA","lastName": "BBB"},'

+' {"firstName": "CCC&

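- Layers and goals of workflow system design

comsci

design patterns, data structures, sql, frameworks, scripts

Layers and goals of workflow system design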

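- RMAN LIST and REPORT commands

daizj

oracle, list, report, rman

LIST command

Use the RMAN LIST command to display information about the backup sets, proxy copies, and image copies recorded in the repository. Use this command to list:

• Backups and copies in the RMAN repository whose status is not AVAILABLE

• Datafile backups and copies that are available and can be used in a restore operation

• Backup sets and copies that contain a backup of a specified list of datafiles or a specified tablespace

• Backup sets and copies that contain a backup of all archived logs with a specified name or range

• By tag, completion time,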

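- Binary trees: red-black trees

dieslrae

binary trees

A red-black tree is a self-balancing binary tree whose search, insert, and delete operations all run in O(logN) time, so it avoids the problem of an ordinary binary search tree degrading to O(N) in the worst case.

A red-black tree must follow the red-black rules, which are:

1. Every node is either red or black. 2. The root is always black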

- C homework 3: code for 7 small exercises

dcj3sjt126com

c

1. Print all odd numbers up to 100.

# include <stdio.h>

int main(void)

{

int i;

for (i=1; i<=100; i++)

{

if (i%2 != 0)

printf("%d ", i);

}

return 0;

}

2. Read 10 integers from the keyboard,

- Custom button with the image on top and the text below, centered

dcj3sjt126com

custom

#import <UIKit/UIKit.h>

@interface MyButton : UIButton

-(void)setFrame:(CGRect)frame ImageName:(NSString*)imageName Target:(id)target Action:(SEL)action Title:(NSString*)title Font:(CGFloa

- MySQL query exercises, enough for testing

flyvszhb

sql, mysql

http://blog.sina.com.cn/s/blog_767d65530101861c.html

1. Create the student and score tables

CREATE TABLE student (

id INT(10) NOT NULL UNIQUE PRIMARY KEY ,

name VARCHAR

- Repost: MyBatis Generator in detail

happyqing

mybatis

MyBatis Generator in detail

http://blog.csdn.net/isea533/article/details/42102297

MyBatis Generator in detail

http://git.oschina.net/free/Mybatis_Utils/blob/master/MybatisGeneator/MybatisGeneator.

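- 14 pieces of advice to spare programmers some detours

jingjing0907

work, planning, learning

Whoever you are, when you first enter a field, even the greatest ambition is no match for the confusion in front of you: not knowing how to do things, and not knowing what to do. Below are lessons learned by one software developer, in the hope that they help.

1. Don't be afraid to learn on the job.

As long as you have a computer, you can read newspapers and most books with an e-reader. If you only do your own job and the tasks assigned to you, you will not learn much. If you blindly ask for more work, you will not improve either.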

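- Differences between nginx and NetScaler

流浪鱼

nginx

1. NetScaler is a complete product that includes both an operating system and application delivery features. Nginx does not include an operating system and depends on the host OS to handle connections, so Nginx has no advantage in the number of concurrent connections or in defending against DoS attacks.

2. Ease of use also differs considerably. Nginx demands more skill from the administrator and has many parameters, and that uncertainty creates operational risk. Configuration that is routine on NetScaler, such as health checks and HA, is comparatively complex to implement on Nginx.

3. In terms of policy flexibility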

- Chapter 11: Animation effects (part 2)

onestopweb

animation

index.html

<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Transitional//EN" "http://www.w3.org/TR/xhtml1/DTD/xhtml1-transitional.dtd">

<html xmlns="http://www.w3.org/

- FAQ - SAP BW BO roadmap

blueoxygen

BO, BW

http://www.sdn.sap.com/irj/boc/business-objects-for-sap-faq

Besides, what I care about is how to integrate them tightly.

By the way, for BW consultants, please just focus on Query Designer which i

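- Several cases of Java heap memory overflow

tomcat_oracle

java, jvm, jdk, thread

[Case 1]:

java.lang.OutOfMemoryError: Java heap space: this means the Java heap is too small. One cause is that the heap really is too small; another is an infinite loop in the program. If the heap really is too small, it can be fixed by adjusting the JVM settings below: <jvm-arg>-Xms3062m</jvm-arg> <jvm-arg>-Xmx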

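- Manifest.permission_group permission groups

阿尔萨斯

Permission

Structure

Inheritance

public static final class Manifest.permission_group extends Object

java.lang.Object

android.Manifest.permission_group constants

ACCOUNTS: permissions for direct access to the accounts managed by the Account Manager

COST_MONEY: permissions that can be used to make the user spend money without their direct involvement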

D