Kaggle泰坦尼克号比赛项目详解

Kaggle泰坦尼克号比赛项目详解

-

-

- 项目背景

- 目标

- 数据字典

-

- 一、基础字段

- 二、衍生字段(部分,在后续代码中补充)

- 特征工程

- 特征分析

-

- 一、导入必要库

- 二、导入数据

- 三、查看数据

- 四、查看字段信息

- 五、查看字段统计数据

- 六、查看船舱等级与幸存量的关系

- 七、查看性别与幸存情况的关系

- 八、查看乘客年龄与幸存情况的关系

- 九、查看兄弟姐妹及配偶数量与幸存情况关系

- 十、查看父母与孩子数量与幸存情况的关系

- 十一、查看不同票价对应与幸存情况的关系

- 特征清洗

-

- 1.NameLength (名字长度)

- 2.HasCabin(是否有船舱)

- 3.Title 称号字段

- 4.sex 性别字段

- 5.Age 年龄字段

- 6. IsChildren (是否为儿童)

- 7.FamilySize (将兄弟姐妹或配偶总数以及父母或孩子总数合并成一个特征)

- 8.IsAlone (是否为单独一人)

- 9.Embarked (港口因素)

- 9.Fare (票价)

- 10.特征相关性可视化

- 建模和优化

-

- 1.逻辑回归

- 2.SVC(支持向量机)

- 3.KNN(K近邻分类算法)

- 4.GNB(贝叶斯分类算法)

- 5.Perceptron模型

- 6.Linear SVC

- 7.SGD模型

- 8.决策树模型

- 9.随机森林算法

- 10.kfold交叉验证模型

- 模型效果比较

- 保存结果

-

- 1.保存随机森林模型预测结果

- 2.保存决策树模型预测结果

- 3.保存KNN模型预测结果

- 4.保存SVC(支持向量机模型)预测结果

- 5.保存SGD模型预测结果

- 6.保存Linear SVC模型预测结果

- 7.保存逻辑回归模型预测结果

- 结果文件

-

数据链接: https://pan.baidu.com/s/1gE4JvsgK5XV-G9dGpylcew

提取码:y409

项目背景

1、泰坦尼克号:英国白星航运公司下辖的一艘奥林匹克级邮轮,于1909年3月31日在爱尔兰贝尔法斯特港的哈兰德与沃尔夫造船厂动工建造,1911年5月31日下水,1912年4月2日完工试航。

2、首航时间:1912年4月10日

3、航线:从英国南安普敦出发,途经法国瑟堡-奥克特维尔以及爱尔兰昆士敦,驶向美国纽约。

4、沉船:1912年4月15日(1912年4月14日23时40分左右撞击冰山)

船员+乘客人数:2224

5、遇难人数:1502(67.5%)

目标

根据训练集中各位乘客的特征及是否获救标志的对应关系训练模型,预测测试集中的乘客是否获救。(二元分类问题)

数据字典

一、基础字段

PassengerId 乘客id:

训练集891(1- 891),测试集418(892 - 1309)

Survived 是否获救:

1=是,0=不是

获救:38%

遇难:62%(实际遇难比例:67.5%)

Pclass 船票级别:

代表社会经济地位。 1=高级,2=中级,3=低级

1 : 2 : 3 = 0.24 : 0.21 : 0.55

Name 姓名:

示例:Futrelle, Mrs. Jacques Heath (Lily May Peel)

示例:Heikkinen, Miss. Laina

Sex 性别:

male 男 577,female 女 314

男 : 女 = 0.65 : 0.35

Age 年龄(缺少20%数据):

训练集:714/891 = 80%

测试集:332/418 = 79%

SibSp 同行的兄弟姐妹或配偶总数:

68%无,23%有1个 … 最多8个

Parch 同行的父母或孩子总数:

76%无,13%有1个,9%有2个 … 最多6个

Some children travelled only with a nanny, therefore parch=0 for them.

Ticket 票号(格式不统一):

示例:A/5 21171

示例:STON/O2. 3101282

Fare 票价:

测试集缺一个数据

Cabin 船舱号:

训练集只有204条数据,测试集有91条数据

示例:C85

Embarked 登船港口:

C = Cherbourg(瑟堡)19%, Q = Queenstown(皇后镇)9%, S = Southampton(南安普敦)72%

训练集少两个数据

二、衍生字段(部分,在后续代码中补充)

Title 称谓:

dataset.Name.str.extract( “ ([A-Za-z]+).”, expand = False)

从姓名中提取,与姓名和社会地位相关

FamilySize 家庭规模:

Parch + SibSp + 1

用于计算是否独自出行IsAlone特征的中间特征,暂且保留

IsAlone 独自一人:

FamilySize == 1

是否独自出行

HasCabin 有独立舱室:

不确定没CabinId的样本是没有舱室还是数据确实

特征工程

Classifying:

样本分类或分级

Correlating:

样本预测结果和特征的关联程度,特征之间的关联程度

Converting:

特征转换(向量化)

Completing:

特征缺失值预估完善、

Correcting:

对于明显离群或会造成预测结果明显倾斜的异常数据,进行修正或排除

Creating:

根据现有特征衍生新的特征,以满足关联性、向量化以及完整度等目标上的要求

Charting:

根据数据性质和问题目标选择正确的可视化图表

特征分析

一、导入必要库

# 导入库

# 数据分析和探索

import pandas as pd

import numpy as np

import random as rnd

# 可视化

import seaborn as sns

import matplotlib.pyplot as plt

%matplotlib inline

# 消除警告

import warnings

warnings.filterwarnings('ignore')

# 机器学习模型

# 逻辑回归模型

from sklearn.linear_model import LogisticRegression

# 线性分类支持向量机

from sklearn.svm import SVC, LinearSVC

# 随机森林分类模型

from sklearn.ensemble import RandomForestClassifier

# K近邻分类模型

from sklearn.neighbors import KNeighborsClassifier

# 贝叶斯分类模型

from sklearn.naive_bayes import GaussianNB

# 感知机模型

from sklearn.linear_model import Perceptron

# 梯度下降算法

from sklearn.linear_model import SGDClassifier

# 决策树模型

from sklearn.tree import DecisionTreeClassifier

二、导入数据

# 获取数据,训练集train_df,测试集test_df

train_df = pd.read_csv('E:/PythonData/titanic/train.csv')

test_df = pd.read_csv('E:/PythonData/titanic/test.csv')

combine = [train_df, test_df]

将train_df和test_df合并为combine(便于对特征进行处理时统一处理:for df in combine:)

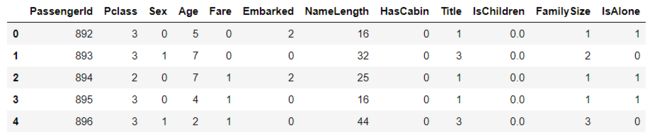

三、查看数据

# 探索数据

# 查看字段结构、类型及head示例

train_df.head()

四、查看字段信息

# 查看各特征非空样本量及字段类型

train_df.info()

print("*"*40)

test_df.info()

五、查看字段统计数据

# 查看数值类(int,float)特征的数据分布情况

train_df.describe()

# 查看非数值类(object类型)特征的数据分布情况

train_df.describe(include=["O"])

六、查看船舱等级与幸存量的关系

1. 创建船舱等级与生存量列联表

#生成Pclass_Survived的列联表

Pclass_Survived = pd.crosstab(train_df['Pclass'], train_df['Survived'])

# 绘制条形图

Pclass_Survived.plot(kind = 'bar')

plt.xticks(rotation=360)

plt.show()

train_df[["Pclass","Survived"]].groupby(["Pclass"],as_index=True).mean().sort_values(by="Pclass",ascending=True).plot()

plt.xticks(range(1,4)[::1])

plt.show()

分析: 1,2,3分别表示1等,2等,3等船舱等级。富人和中等阶层有更高的生还率,底层生还率低。

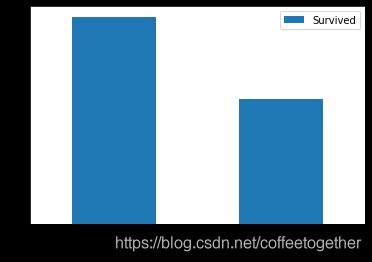

七、查看性别与幸存情况的关系

1.创建性别与生存量列联表

#生成性别与生存列联表

Sex_Survived = pd.crosstab(train_df['Sex'],train_df['Survived'])

Sex_Survived.plot(kind='bar')

# 横坐标0,1分别表示男性和女性

plt.xticks(rotation=360)

plt.show()

# 查看性别与生存率

train_df[["Sex","Survived"]].groupby(["Sex"],as_index=False).mean().sort_values(by="Survived",ascending=False)

八、查看乘客年龄与幸存情况的关系

1.处年年龄缺失情况(用中位数数代替缺失数据)

# 用年龄的中位数代替年龄缺失值

Agemedian = train_df['Age'].median()

#在当前表填充缺失值

train_df.Age.fillna(Agemedian, inplace = True)

#重置索引

train_df.reset_index(inplace = True)

2.对年龄进行分组,绘制年龄与幸存数量条形图

#对Age进行分组: 2**10>891分成10组, 组距为(最大值80-最小值0)/10 =8取9

bins = [0, 9, 18, 27, 36, 45, 54, 63, 72, 81, 90]

train_df['GroupAge'] = pd.cut(train_df.Age, bins)

GroupAge_Survived = pd.crosstab(train_df['GroupAge'], train_df['Survived'])

GroupAge_Survived.plot(kind = 'bar',figsize=(10,6))

plt.xticks(rotation=360)

plt.title('Survived status by GroupAge')

# 不同年龄段幸存数

GroupAge_Survived_1 = GroupAge_Survived[1]

# 不同年龄段幸存率

GroupAge_all = GroupAge_Survived.sum(axis=1)

GroupAge_Survived_rate = round(GroupAge_Survived_1/GroupAge_all,2)

GroupAge_Survived_rate.plot(figsize=(10,6))

plt.show()

分析:年龄段在0-9以及72-81岁时,对应的幸存率较高。说明在逃生时老人小孩优先。但63-72对应的幸存率是最低,猜测时对应年龄段的人数太少导致。

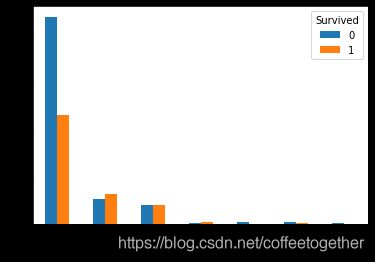

九、查看兄弟姐妹及配偶数量与幸存情况关系

1.创建兄弟姐妹及配偶数量与生存量列联表

# 生成列联表

SibSp_Survived = pd.crosstab(train_df['SibSp'], train_df['Survived'])

SibSp_Survived

SibSp_Survived.plot(kind='bar')

plt.xticks(rotation=360)

plt.show()

# 查看兄弟姐妹配偶数量与生存率的关系

train_df[["SibSp","Survived"]].groupby(["SibSp"],as_index=True).mean().sort_values(by="SibSp",ascending=True).plot()

plt.show()

分析:兄弟姐妹与配偶数量从在1-2时对应的生存率较高,其他相对较低。

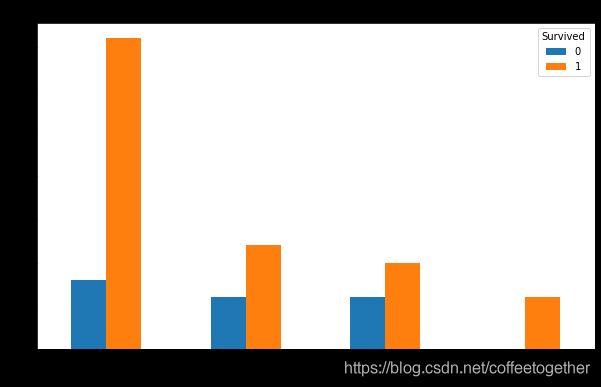

十、查看父母与孩子数量与幸存情况的关系

1.创建父母与孩子数量与生存量列联表

创建列联表

Parch_Survived = pd.crosstab(train_df['Parch'], train_df['Survived'])

Parch_Survived

Parch_Survived.plot(kind='bar')

plt.xticks(rotation=360)

plt.show()

# 查看父母与孩子数与生存率的关系

train_df[["Parch","Survived"]].groupby(["Parch"],as_index = True).mean().sort_values(by="Parch",ascending=True).plot()

plt.show()

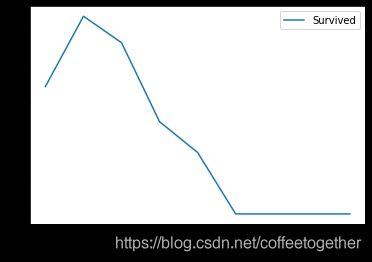

十一、查看不同票价对应与幸存情况的关系

1.划分船票价格,创建不同船票对应生存量列联表

#对Fare进行分组: 2**10>891分成10组, 组距为(最大值512.3292-最小值0)/10取值60

bins = [0, 60, 120, 180, 240, 300, 360, 420, 480, 540, 600]

train_df['GroupFare'] = pd.cut(train_df.Fare, bins, right = False)

GroupFare_Survived = pd.crosstab(train_df['GroupFare'], train_df['Survived'])

GroupFare_Survived

# 绘制簇状柱形图

GroupFare_Survived.plot(kind = 'bar',figsize=(10,6))

# 调整刻度

plt.xticks(rotation=360)

plt.title('Survived status by GroupFare')

GroupFare_Survived.iloc[2:].plot(kind = 'bar',figsize=(10,6))

# 调整刻度

plt.xticks(rotation=360)

plt.title('Survived status by GroupFare(Fare>=120)')

# 绘制不票价对应生存率折线图

# 不同票价对应存活数

GroupFare_Survived_1 = GroupFare_Survived[1]

# 不同票价对应存活率

GroupFare_all = GroupFare_Survived.sum(axis=1)

GroupFare_Survived_rate = round(GroupFare_Survived_1/GroupFare_all,2)

GroupFare_Survived_rate.plot()

plt.show()

分析:票价在120-180以及480-540时对应的生存率较高,且票价与生存率总体上呈正相关趋势。

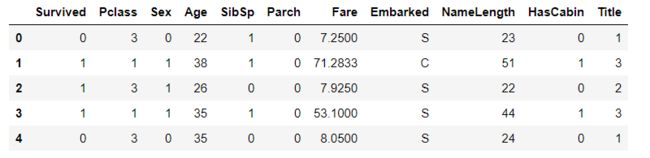

train_df.head()

train_df = train_df.drop(["index","GroupAge","GroupFare"],axis=1)

train_df.head()

特征清洗

1.NameLength (名字长度)

# 创建训练集和测试集姓名长度字段

train_df['NameLength'] = train_df['Name'].apply(len)

test_df['NameLength'] = test_df['Name'].apply(len)

注:这里解释一下Kaggle作者使用名称字段作为特征之一,原因在于姓名里带有该乘客的头衔,名字长度越长对应的头衔越多。即相对应的社会地位较高。

2.HasCabin(是否有船舱)

将乘客是否有船舱归为两类

# 使用匿名函数,NaN为浮点型,为浮点型的为0,否则为1(即没有船舱的为0,有船舱的为1)

train_df['HasCabin'] = train_df["Cabin"].apply(lambda x: 0 if type(x) == float else 1)

test_df['HasCabin'] = test_df["Cabin"].apply(lambda x: 0 if type(x) == float else 1)

train_df.head()

删除Ticket和Cabin字段,原因在于Ticket字段表示船票的名称。与乘客的生存率无关联。删除Cabin是因为通过HasCabin来代替Cabin字段。

# 剔除Ticket(人为判断无关联)和Cabin(有效数据太少)两个特征

train_df = train_df.drop(["Ticket","Cabin"],axis=1)

test_df = test_df.drop(["Ticket","Cabin"],axis=1)

combine = [train_df,test_df]

print(train_df.shape,test_df.shape,combine[0].shape,combine[1].shape)

3.Title 称号字段

# 根据姓名创建称号特征,会包含性别和阶层信息

# dataset.Name.str.extract(' ([A-Za-z]+)\.' -> 把空格开头.结尾的字符串抽取出来

# 和性别匹配,看各类称号分别属于男or女,方便后续归类

for dataset in combine:

dataset['Title'] = dataset.Name.str.extract(' ([A-Za-z]+)\.', expand=False)

pd.crosstab(train_df['Title'], train_df['Sex']).sort_values(by=["male","female"],ascending=False)

# 把称号归类为Mr,Miss,Mrs,Master,Rare_Male,Rare_Female(按男性和女性区分了Rare)

for dataset in combine:

dataset["Title"] = dataset["Title"].replace(['Lady', 'Countess', 'Dona'],"Rare_Female")

dataset["Title"] = dataset["Title"].replace(['Capt', 'Col','Don','Dr','Major',

'Rev','Sir','Jonkheer',],"Rare_Male")

dataset["Title"] = dataset["Title"].replace('Mlle', 'Miss')

dataset['Title'] = dataset['Title'].replace('Ms', 'Miss')

dataset['Title'] = dataset['Title'].replace('Mme', 'Miss')

绘制不同称号对应的存活率

# 按Title汇总计算Survived均值,查看相关性

T_S = train_df[["Title","Survived"]].groupby(["Title"],as_index=False).mean().sort_values(by='Survived',ascending=True)

plt.figure(figsize=(10,6))

plt.bar(T_S['Title'],T_S['Survived'])

![]()

分析:称号为Miss、Mrs、Rare_Female乘客的存活率居高,说明在逃生时,大家遵循女士优先的原则。

将Title特征映射成数值

title_mapping = {"Mr":1,"Miss":2,"Mrs":3,"Master":4,"Rare_Female":5,"Rare_Male":6}

for dataset in combine:

dataset["Title"] = dataset["Title"].map(title_mapping)

dataset["Title"] = dataset["Title"].fillna(0)

# 为了避免有空数据的常规操作

train_df.head()

# Name字段可以剔除了

# 训练集的PassengerId字段仅为自增字段,与预测无关,可剔除

train_df = train_df.drop(["Name","PassengerId"],axis=1)

test_df = test_df.drop(["Name"],axis=1)

train_df.head()

# 每次删除特征时都要重新combine

combine = [train_df,test_df]

combine[0].shape,combine[1].shape

4.sex 性别字段

将性别字段转换成数值,将女性设置为0,男性设置为1

# sex特征映射为数值

for dataset in combine:

dataset["Sex"] = dataset["Sex"].map({"female":1,"male":0}).astype(int)

# 后面加astype(int)是为了避免处理为布尔型?

train_df.head()

5.Age 年龄字段

guess_ages = np.zeros((6,3))

guess_ages

对年龄字段进行空值处理(使用相同Pclass和Title的Age中位数来填充)

# 给age年龄字段的空值填充估值

# 使用相同Pclass和Title的Age中位数来替代(对于中位数为空的组合,使用Title整体的中位数来替代)

for dataset in combine:

# 取6种组合的中位数

for i in range(0, 6):

for j in range(0, 3):

guess_title_df = dataset[dataset["Title"]==i+1]["Age"].dropna()

guess_df = dataset[(dataset['Title'] == i+1) & (dataset['Pclass'] == j+1)]['Age'].dropna()

# age_mean = guess_df.mean()

# age_std = guess_df.std()

# age_guess = rnd.uniform(age_mean - age_std, age_mean + age_std)

age_guess = guess_df.median() if ~np.isnan(guess_df.median()) else guess_title_df.median()

#print(i,j,guess_df.median(),guess_title_df.median(),age_guess)

# Convert random age float to nearest .5 age

guess_ages[i,j] = int( age_guess/0.5 + 0.5 ) * 0.5

# 给满足6中情况的Age字段赋值

for i in range(0, 6):

for j in range(0, 3):

dataset.loc[ (dataset.Age.isnull()) & (dataset.Title == i+1) & (dataset.Pclass == j+1),

'Age'] = guess_ages[i,j]

dataset['Age'] = dataset['Age'].astype(int)

train_df.head()

6. IsChildren (是否为儿童)

将年龄小于等于12的视为儿童,其余的为非儿童。分别用1,0来表示

#创建是否儿童特征

for dataset in combine:

dataset.loc[dataset["Age"] > 12,"IsChildren"] = 0

dataset.loc[dataset["Age"] <= 12,"IsChildren"] = 1

train_df.head()

划分年龄区间

# 创建年龄区间特征

# pd.cut是按值的大小均匀切分,每组值区间大小相同,但样本数可能不一致

# pd.qcut是按照样本在值上的分布频率切分,每组样本数相同

train_df["AgeBand"] = pd.qcut(train_df["Age"],8)

train_df.head()

train_df[["AgeBand","Survived"]].groupby(["AgeBand"],as_index = False).mean().sort_values(by="AgeBand",ascending=True)

将年龄区间转换成数值

# 把年龄按区间标准化为0到4

for dataset in combine:

dataset.loc[ dataset['Age'] <= 17, 'Age'] = 0

dataset.loc[(dataset['Age'] > 17) & (dataset['Age'] <= 21), 'Age'] = 1

dataset.loc[(dataset['Age'] > 21) & (dataset['Age'] <= 25), 'Age'] = 2

dataset.loc[(dataset['Age'] > 25) & (dataset['Age'] <= 26), 'Age'] = 3

dataset.loc[(dataset['Age'] > 26) & (dataset['Age'] <= 31), 'Age'] = 4

dataset.loc[(dataset['Age'] > 31) & (dataset['Age'] <= 36.5), 'Age'] = 5

dataset.loc[(dataset['Age'] > 36.5) & (dataset['Age'] <= 45), 'Age'] = 6

dataset.loc[ dataset['Age'] > 45, 'Age'] = 7

train_df.head()

# 移除AgeBand特征

train_df = train_df.drop('GroupAge',axis=1)

combine = [train_df,test_df]

train_df.head()

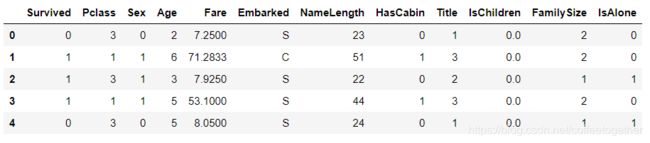

7.FamilySize (将兄弟姐妹或配偶总数以及父母或孩子总数合并成一个特征)

# 创建家庭规模FamilySize组合特征 +1是考虑本人

for dataset in combine:

dataset["FamilySize"] = dataset["Parch"] + dataset["SibSp"] + 1

train_df[["FamilySize","Survived"]].groupby(["FamilySize"],as_index = True).mean().sort_values(by="FamilySize",ascending=True).plot()

plt.xticks(range(12)[::1])

plt.show()

8.IsAlone (是否为单独一人)

# 创建是否独自一人IsAlone特征

for dataset in combine:

dataset["IsAlone"] = 0

dataset.loc[dataset["FamilySize"] == 1,"IsAlone"] = 1

train_df[["IsAlone","Survived"]].groupby(["IsAlone"],as_index=True).mean().sort_values(by="IsAlone",ascending=True).plot(kind='bar')

plt.xticks(rotation=360)

plt.show()

查看是否单独一人与生存率柱状图

移除Parch,Sibsp 字段

train_df = train_df.drop(["Parch","SibSp"],axis=1)

test_df = test_df.drop(["Parch","SibSp"],axis=1)

combine = [train_df,test_df]

train_df.head()

9.Embarked (港口因素)

# 给Embarked补充空值

# 获取上船最多的港口

freq_port = train_df["Embarked"].dropna().mode()[0]

freq_port

for dataset in combine:

dataset["Embarked"] = dataset["Embarked"].fillna(freq_port)

Embarked_Survived = pd.crosstab(train_df['Embarked'],train_df['Survived'])

Embarked_Survived

Embarked_Survived.plot(kind='bar')

plt.xticks(rotation=360)

plt.show()

# 查看不同港口与幸存量的关系

train_df[["Embarked","Survived"]].groupby(["Embarked"],as_index=True).mean().sort_values(by="Embarked",ascending=True).plot(kind='bar')

plt.xticks(rotation=360)

plt.show()

# 把Embarked数字化

for dataset in combine:

dataset["Embarked"] = dataset["Embarked"].map({"S":0,"C":1,"Q":2}).astype(int)

train_df.head()

9.Fare (票价)

# 给测试集中的Fare填充空值,使用中位数

test_df["Fare"].fillna(test_df["Fare"].dropna().median(),inplace=True)

test_df.info()

# 创建FareBand区间特征

train_df["FareBand"] = pd.qcut(train_df["Fare"],4)

train_df[["FareBand","Survived"]].groupby(["FareBand"],as_index=False).mean().sort_values(by="FareBand",ascending=True)

# 根据FareBand将Fare特征转换为序数值

for dataset in combine:

dataset.loc[ dataset['Fare'] <= 7.91, 'Fare'] = 0

dataset.loc[(dataset['Fare'] > 7.91) & (dataset['Fare'] <= 14.454), 'Fare'] = 1

dataset.loc[(dataset['Fare'] > 14.454) & (dataset['Fare'] <= 31), 'Fare'] = 2

dataset.loc[ dataset['Fare'] > 31, 'Fare'] = 3

dataset['Fare'] = dataset['Fare'].astype(int)

# 移除FareBand

train_df = train_df.drop(['FareBand'], axis=1)

combine = [train_df, test_df]

train_df.head(10)

test_df.head()

10.特征相关性可视化

# 用seaborn的heatmap对特征之间的相关性进行可视化

colormap = plt.cm.RdBu

plt.figure(figsize=(14,12))

plt.title('Pearson Correlation of Features', y=1.05, size=15)

sns.heatmap(train_df.astype(float).corr(),linewidths=0.1,vmax=1.0,

square=True, cmap=colormap, linecolor='white', annot=True)

plt.show()

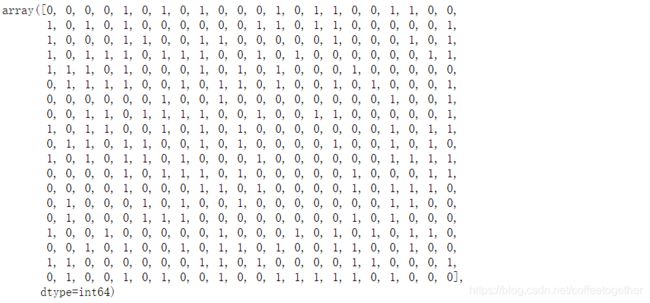

X_train = train_df.drop(['Survived','index'],axis=1)

Y_train = train_df["Survived"]

X_test = test_df.drop("PassengerId",axis=1).copy()

X_train

Y_train

X_test.head()

X_train.shape,Y_train.shape,X_test.shape

![]()

建模和优化

1.逻辑回归

# Logistic Regression 逻辑回归模型

logreg = LogisticRegression()

logreg.fit(X_train,Y_train)

Y_pred_logreg = logreg.predict(X_test)

acc_log = round(logreg.score(X_train,Y_train)*100,2)

# 预测结果

Y_pred_logreg

acc_log

![]()

计算相关性

# 计算相关性

coeff_df = pd.DataFrame(train_df.columns.delete(0))

coeff_df.columns = ['Feature']

coeff_df["Correlation"] = pd.Series(logreg.coef_[0])

coeff_df.sort_values(by='Correlation', ascending=False)

2.SVC(支持向量机)

# Support Vector Machines 支持向量机模型

svc = SVC()

svc.fit(X_train, Y_train)

Y_pred_svc = svc.predict(X_test)

acc_svc = round(svc.score(X_train, Y_train) * 100, 2)

Y_pred_svc

acc_svc

![]()

3.KNN(K近邻分类算法)

# KNN k近邻分类模型

knn = KNeighborsClassifier(n_neighbors = 3)

knn.fit(X_train, Y_train)

Y_pred_knn = knn.predict(X_test)

acc_knn = round(knn.score(X_train, Y_train) * 100, 2)

Y_pred_knn

acc_knn

4.GNB(贝叶斯分类算法)

# 贝叶斯分类

# Gaussian Naive Bayes

gaussian = GaussianNB()

gaussian.fit(X_train, Y_train)

Y_pred_gaussian = gaussian.predict(X_test)

acc_gaussian = round(gaussian.score(X_train, Y_train) * 100, 2)

Y_pred_gaussian

acc_gaussian

![]()

5.Perceptron模型

# Perceptron

perceptron = Perceptron()

perceptron.fit(X_train, Y_train)

Y_pred_perceptron = perceptron.predict(X_test)

acc_perceptron = round(perceptron.score(X_train, Y_train) * 100, 2)

acc_perceptron

acc_perceptron

![]()

6.Linear SVC

# Linear SVC

linear_svc = LinearSVC()

linear_svc.fit(X_train, Y_train)

Y_pred_linear_svc= linear_svc.predict(X_test)

acc_linear_svc = round(linear_svc.score(X_train, Y_train) * 100, 2)

Y_pred_linear_svc

acc_linear_svc

![]()

7.SGD模型

# Stochastic Gradient Descent

sgd = SGDClassifier()

sgd.fit(X_train, Y_train)

Y_pred_sgd = sgd.predict(X_test)

acc_sgd = round(sgd.score(X_train, Y_train) * 100, 2)

Y_pred_sgd

acc_sgd

![]()

8.决策树模型

# Decision Tree 决策树模型

decision_tree = DecisionTreeClassifier()

decision_tree.fit(X_train, Y_train)

Y_pred_decision_tree = decision_tree.predict(X_test)

acc_decision_tree = round(decision_tree.score(X_train, Y_train) * 100, 2)

Y_pred_decision_tree

acc_decision_tree

![]()

9.随机森林算法

from sklearn.model_selection import train_test_split

X_all = train_df.drop(['Survived'], axis=1)

y_all = train_df['Survived']

num_test = 0.20

X_train, X_test, y_train, y_test = train_test_split(X_all, y_all, test_size=num_test, random_state=23)

# Random Forest

from sklearn.metrics import make_scorer, accuracy_score

from sklearn.model_selection import GridSearchCV

random_forest = RandomForestClassifier()

parameters = {'n_estimators': [4, 6, 9],

'max_features': ['log2', 'sqrt','auto'],

'criterion': ['entropy', 'gini'],

'max_depth': [2, 3, 5, 10],

'min_samples_split': [2, 3, 5],

'min_samples_leaf': [1,5,8]

}

acc_scorer = make_scorer(accuracy_score)

grid_obj = GridSearchCV(random_forest, parameters, scoring=acc_scorer)

grid_obj = grid_obj.fit(X_train, y_train)

clf = grid_obj.best_estimator_

clf.fit(X_train, y_train)

pred = clf.predict(X_test)

acc_random_forest_split=accuracy_score(y_test, pred)

pred

![]()

acc_random_forest_split

![]()

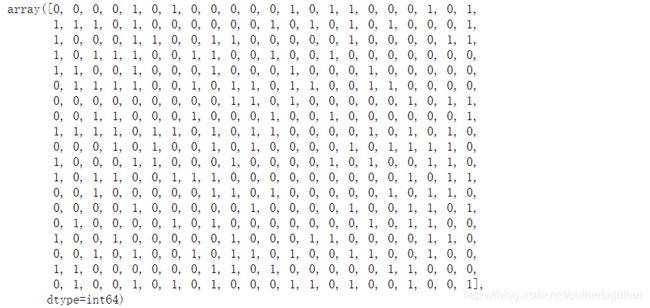

10.kfold交叉验证模型

from sklearn.model_selection import KFold

def run_kfold(clf):

kf = KFold(891,n_splits=10)

outcomes = []

fold = 0

for train_index, test_index in kf.split(train_df):

fold += 1

X_train, X_test = X_all.values[train_index], X_all.values[test_index]

y_train, y_test = y_all.values[train_index], y_all.values[test_index]

clf.fit(X_train, y_train)

predictions = clf.predict(X_test)

accuracy = accuracy_score(y_test, predictions)

outcomes.append(accuracy)

mean_outcome = np.mean(outcomes)

print("Mean Accuracy: {0}".format(mean_outcome))

run_kfold(clf)

![]()

test_df.head()

模型效果比较

Y_pred_random_forest_split = clf.predict(test_df.drop("PassengerId",axis=1))

models = pd.DataFrame({

'Model': ['SVM', 'KNN', 'Logistic Regression',

'Random Forest', 'Naive Bayes', 'Perceptron',

'SGD', 'Linear SVC',

'Decision Tree'],

'Score': [acc_svc, acc_knn,

acc_log,

acc_random_forest_split,

#acc_random_forest,

acc_gaussian,

acc_perceptron,

acc_sgd,

acc_linear_svc,

acc_decision_tree]})

M_s = models.sort_values(by='Score', ascending=False)

M_s

plt.figure(figsize=(20,8),dpi=80)

plt.bar(M_s['Model'],M_s['Score'])

plt.show()

保存结果

# 导入时间模块,利用时间戳作为文件名

import time

print(time.strftime('%Y%m%d%H%M',time.localtime(time.time())))

![]()

1.保存随机森林模型预测结果

# 取最后更新的随机森林模型的预测数据进行提交

submission = pd.DataFrame({

"PassengerId": test_df["PassengerId"],

"Survived": Y_pred_random_forest_split

#"Survived": Y_pred_random_forest

})

submission.to_csv('E:/PythonData/titanic/submission_random_forest_'

+time.strftime('%Y%m%d%H%M',time.localtime(time.time()))

+".csv",

index=False)

2.保存决策树模型预测结果

submission = pd.DataFrame({

"PassengerId": test_df["PassengerId"],

"Survived": Y_pred_decision_tree

})

submission.to_csv('E:/PythonData/titanic/submission_decision_tree'

+time.strftime('%Y%m%d%H%M',time.localtime(time.time()))

+".csv",

index=False)

3.保存KNN模型预测结果

submission = pd.DataFrame({

"PassengerId": test_df["PassengerId"],

"Survived": Y_pred_knn

})

submission.to_csv('E:/PythonData/titanic/submission_knn_'

+time.strftime('%Y%m%d%H%M',time.localtime(time.time()))

+".csv",

index=False)

4.保存SVC(支持向量机模型)预测结果

submission = pd.DataFrame({

"PassengerId": test_df["PassengerId"],

"Survived": Y_pred_svc

})

submission.to_csv('E:/PythonData/titanic/submission_svc_'

+time.strftime('%Y%m%d%H%M',time.localtime(time.time()))

+".csv",

index=False)

5.保存SGD模型预测结果

# SGD

submission = pd.DataFrame({

"PassengerId": test_df["PassengerId"],

"Survived": Y_pred_sgd

})

submission.to_csv('E:/PythonData/titanic/submission_sgd_'

+time.strftime('%Y%m%d%H%M',time.localtime(time.time()))

+".csv",

index=False)

6.保存Linear SVC模型预测结果

# Linear SVC

submission = pd.DataFrame({

"PassengerId": test_df["PassengerId"],

"Survived": Y_pred_linear_svc

})

submission.to_csv('E:/PythonData/titanic/submission_linear_svc_'

+time.strftime('%Y%m%d%H%M',time.localtime(time.time()))

+".csv",

index=False)

7.保存逻辑回归模型预测结果

# 逻辑回归

submission = pd.DataFrame({

"PassengerId": test_df["PassengerId"],

"Survived": Y_pred_logreg

})

submission.to_csv('E:/PythonData/titanic/submission_logreg_'

+time.strftime('%Y%m%d%H%M',time.localtime(time.time()))

+".csv",

index=False)