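NNDL Experiment 4: Linear Classification

Preface: Three deep learning experiments are already behind us. This one covers linear classification and related topics, using different linear models for binary and multi-class tasks. I took a machine learning course last semester, but working through each problem in these experiments still teaches me something new. Let's start; if you spot any mistakes, please point them out so we can learn from each other.

Linear classification means classifying samples with one or more linear functions.

The most common linear classification models are Logistic regression and Softmax regression. Logistic regression is a widely used linear model for binary classification; Softmax regression is its generalisation to multi-class problems.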

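This chapter's experiments cover:

- Model walkthrough: understand the principles of the two most common linear classifiers, Logistic regression and Softmax regression, together with their code implementations, combining theory and code to deepen the understanding of linear models;
- Case study: classify the Iris dataset with Softmax regression.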

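Binary classification with Logistic regression

My code keeps each function in its own module, separate from the main script, and calls them when needed. I find this cleaner, and bugs are easier to locate and fix.

Dataset construction

First, the imports used in this experiment: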

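import math
import os

import matplotlib.pyplot as plt
import torch

from Runner import Runner  # Runner class (listed later in this post as RunnerV2)
from BinaryCrossEntropyLoss import BinaryCrossEntropyLoss  # loss function
from model_LR import model_LR  # Logistic regression operator
from Optimizer import SimpleBatchGD  # gradient descent optimizer

# My machine has several environments installed; this setting is needed for the program to run
os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

We build a simple classification task with training, validation and test sets. The data are drawn from two noisy crescent-shaped (moon) curves, one per class.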

def make_moon(n_samples=1000, shuffle=True, noise=None):
    """
    Inputs
        n_samples: size of the dataset
        shuffle: whether to shuffle the data
        noise: standard deviation of the Gaussian noise to add (None for no noise)
    Outputs
        X: features, shape=[n_samples, 2]
        y: labels, shape=[n_samples]
    """
    n_samples_out = n_samples // 2
    n_samples_in = n_samples - n_samples_out

    # Class 0 (outer moon): take n_samples_out evenly spaced values in [0, pi] with torch.linspace;
    # their cosine is feature 1 and their sine is feature 2
    outer_circ_x = torch.cos(torch.linspace(0, math.pi, n_samples_out))
    outer_circ_y = torch.sin(torch.linspace(0, math.pi, n_samples_out))

    # Class 1 (inner moon): the same curve, flipped and shifted
    inner_circ_x = 1 - torch.cos(torch.linspace(0, math.pi, n_samples_in))
    inner_circ_y = 0.5 - torch.sin(torch.linspace(0, math.pi, n_samples_in))

    print('outer_circ_x.shape:', outer_circ_x.shape, 'outer_circ_y.shape:', outer_circ_y.shape)
    print('inner_circ_x.shape:', inner_circ_x.shape, 'inner_circ_y.shape:', inner_circ_y.shape)

    # Concatenate feature 1 and feature 2 of both classes along dim 0 with torch.cat,
    # then stack the two features along dim 1 with torch.stack
    X = torch.stack(
        [torch.cat([outer_circ_x, inner_circ_x]),
         torch.cat([outer_circ_y, inner_circ_y])],
        1
    )

    print('after concat shape:', torch.cat([outer_circ_x, inner_circ_x]).shape)
    print('X shape:', X.shape)

    # torch.zeros labels the first class as 0; torch.ones labels the second class as 1
    y = torch.cat(
        [torch.zeros([n_samples_out]), torch.ones([n_samples_in])]
    )

    print('y shape:', y.shape)

    # Shuffle all samples if requested
    if shuffle:
        # torch.randperm returns a random permutation of 0..X.shape[0]-1, used as an index
        idx = torch.randperm(X.shape[0])
        X = X[idx]
        y = y[idx]

    # Add noise to the features if noise is not None
    if noise is not None:
        # torch.normal draws normally distributed noise, which is added to the original features
        X += torch.normal(0.0, noise, X.shape)

    return X, y

Next, randomly sample 1000 points and visualise them:

n_samples = 1000
X, y = make_moon(n_samples=n_samples, shuffle=True, noise=0.5)

# Visualise the generated dataset; each colour is one class
plt.figure(figsize=(5, 5))
plt.scatter(x=X[:, 0].tolist(), y=X[:, 1].tolist(), marker='*', c=y.tolist())
plt.xlim(-3, 4)
plt.ylim(-3, 4)
plt.savefig('linear-dataset-vis.pdf')
plt.show()

Output:

outer_circ_x.shape: torch.Size([500]) outer_circ_y.shape: torch.Size([500])
inner_circ_x.shape: torch.Size([500]) inner_circ_y.shape: torch.Size([500])
after concat shape: torch.Size([1000])
X shape: torch.Size([1000, 2])
y shape: torch.Size([1000])

The 1000 samples are split into a training set (640), a validation set (160) and a test set (200):

# Split the data
num_train = 640
num_dev = 160
num_test = 200

X_train, y_train = X[:num_train], y[:num_train]
X_dev, y_dev = X[num_train:num_train + num_dev], y[num_train:num_train + num_dev]
X_test, y_test = X[num_train + num_dev:], y[num_train + num_dev:]

# Reshape the labels to [N, 1] to match the model output
y_train = y_train.reshape([-1, 1])
y_dev = y_dev.reshape([-1, 1])
y_test = y_test.reshape([-1, 1])

Model construction

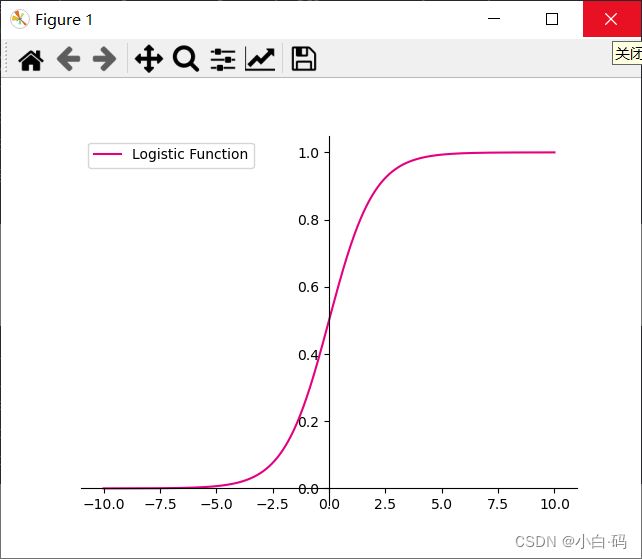

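The Logistic regression model is simply a linear layer followed by the Logistic function. We first define and plot the Logistic function:

# Define the Logistic function
def logistic(x):
    return 1 / (1 + torch.exp(-x))

# Generate input values in [-10, 10] for plotting the curve
x = torch.linspace(-10, 10, 10000)
plt.figure()
# tolist() converts a tensor to a Python list
plt.plot(x.tolist(), logistic(x).tolist(), color="#e4007f", label="Logistic Function")
# Get the current axes
ax = plt.gca()
# Hide the top and right spines
ax.spines['top'].set_color('none')
ax.spines['right'].set_color('none')
# Put the ticks on the bottom and left, and move those spines to the origin
ax.xaxis.set_ticks_position('bottom')
ax.yaxis.set_ticks_position('left')
ax.spines['left'].set_position(('data', 0))
ax.spines['bottom'].set_position(('data', 0))
# Add the legend
plt.legend()
plt.savefig('linear-logistic.pdf')
plt.show()

Output:

The plot shows that near 0 the Logistic function is approximately linear, while very large or very small inputs are squashed: the smaller the input, the closer the output is to 0; the larger the input, the closer it is to 1. Because the output always lies in (0, 1), it can be interpreted directly as a probability.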
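As a quick numerical check of these properties, here is a minimal sketch that uses only the standard library (a scalar version of the function, not the tensor version used in this post). It prints the function at a few inputs and compares it with the line 0.5 + x/4, the first-order Taylor approximation at 0.

```python
import math


def logistic(x: float) -> float:
    """Scalar logistic (sigmoid) function."""
    return 1.0 / (1.0 + math.exp(-x))


# Saturation: very negative inputs map close to 0, very positive inputs close to 1
for x in (-10.0, -1.0, 0.0, 1.0, 10.0):
    print(f"logistic({x:+.1f}) = {logistic(x):.5f}")

# Near 0 the curve stays close to the straight line 0.5 + x / 4
print(abs(logistic(0.1) - (0.5 + 0.1 / 4)) < 1e-4)  # True
```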

Logistic regression operator

from nndl import op
import torch


# Define the Logistic function
def logistic(x):
    return 1 / (1 + torch.exp(-x))


class model_LR(op.Op):
    def __init__(self, input_dim):
        super(model_LR, self).__init__()
        # Parameters of the linear layer
        self.params = {}
        # Initialise all weights of the linear layer to 0
        self.params['w'] = torch.zeros([input_dim, 1])
        # Alternative: self.params['w'] = torch.normal(mean=0.0, std=0.01, size=(input_dim, 1))
        # Initialise the bias of the linear layer to 0
        self.params['b'] = torch.zeros([1])
        # Gradients of the parameters
        self.grads = {}
        self.X = None
        self.outputs = None

    def __call__(self, inputs):
        return self.forward(inputs)

    def forward(self, inputs):
        # Cache the inputs; backward() needs them
        self.X = inputs
        # Linear layer
        score = torch.matmul(inputs, self.params['w']) + self.params['b']
        # Logistic function; cache the outputs for backward()
        self.outputs = logistic(score)
        return self.outputs

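Question 1: Logistic regression goes by several other names in different books. What are they, and which do you think is best?

Our textbook simply calls it "Logistic回归" (keeping the English word), perhaps to sidestep the naming debate. Others translate it as "逻辑回归" ("logic regression"), which I saw more often in earlier maths courses; my impression is that machine learning and other computer science courses tend to say "Logistic回归", while mathematics courses tend to say "逻辑回归". To me a name is only a label, so which one to use is a matter of personal preference. I can't pronounce "Logistic", for example, so I just say "逻辑回归".

Question 2: What is an activation function? Why use one? What are the common ones?

In a neuron, the inputs are weighted and summed, and then a function is applied to the result; that function is the activation function. Put simply, it introduces non-linearity into the network. Without activation functions every layer is just a matrix multiplication, so a stack of layers collapses into a single linear map.

Because activation functions make neurons non-linear, a neural network can approximate arbitrary non-linear functions, which lets it be applied to a wide range of non-linear models.

Common activation functions include Sigmoid, ReLU and Tanh.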

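Loss function

During training, a loss function quantifies the difference between the predictions and the true values. Here we use the cross-entropy loss.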
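For binary labels the cross-entropy averages -[y·log(p) + (1 - y)·log(1 - p)] over the samples, where p is the predicted probability of class 1. The sketch below is a dependency-free illustration of that formula (plain Python lists rather than the tensor-based class used in this post; the function name is only for illustration). With every prediction equal to 0.5, the loss is ln 2 ≈ 0.6931 whatever the labels are.

```python
import math


def binary_cross_entropy(predicts, labels):
    """Mean binary cross-entropy for probabilities in (0, 1) and 0/1 labels."""
    total = 0.0
    for p, y in zip(predicts, labels):
        total += y * math.log(p) + (1 - y) * math.log(1 - p)
    return -total / len(predicts)


# Uninformative predictions: the loss equals ln 2
print(round(binary_cross_entropy([0.5, 0.5, 0.5], [1, 1, 1]), 4))  # 0.6931

# Confident and correct predictions give a much smaller loss
print(binary_cross_entropy([0.9, 0.1], [1, 0]) < 0.2)  # True
```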

from nndl import op
import torch


# Cross-entropy loss for binary classification
class BinaryCrossEntropyLoss(op.Op):
    def __init__(self):
        self.predicts = None
        self.labels = None
        self.num = None

    def __call__(self, predicts, labels):
        return self.forward(predicts, labels)

    def forward(self, predicts, labels):
        """
        - predicts: predicted probabilities, shape=[N, 1], N is the number of samples
        - labels: true labels, shape=[N, 1]
        - returns the loss, shape=[1]
        """
        self.predicts = predicts
        self.labels = labels
        self.num = self.predicts.shape[0]
        # -1/N * (y^T log(p) + (1 - y)^T log(1 - p)), result has shape [1, 1]
        loss = -1. / self.num * (torch.matmul(self.labels.t(), torch.log(self.predicts))
                                 + torch.matmul((1 - self.labels.t()), torch.log(1 - self.predicts)))
        # Drop the second dimension so the loss has shape [1]
        loss = torch.squeeze(loss, 1)
        return loss

A quick test:

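# Create three labels, all equal to 1
labels = torch.ones([3, 1])
# Compute the risk; `outputs` holds the model's predicted probabilities for three samples
bce_loss = BinaryCrossEntropyLoss()
print(bce_loss(outputs, labels))

Output:

tensor([0.6931])

Model optimization

Unlike the linear regression of the previous chapter, whose parameters can be obtained directly by least squares, Logistic regression needs an optimization algorithm that improves the parameters over a finite number of iterations.

Gradient descent: to solve for the parameters w and b, repeatedly compute the gradient and update the parameters in the opposite direction.

Gradient computation

The partial derivatives are usually computed in the backward function of the Logistic regression operator: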
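For reference, with the mean cross-entropy risk $\mathcal{R}$, predictions $\hat{\boldsymbol{y}}$, and an $N \times D$ feature matrix $\boldsymbol{X}$, the partial derivatives that the backward function needs can be written as follows. The symbols $\mathcal{R}$, $\hat{\boldsymbol{y}}$ and the learning rate $\alpha$ are notation introduced here for illustration.

```latex
\frac{\partial \mathcal{R}}{\partial \boldsymbol{w}}
  = -\frac{1}{N}\, \boldsymbol{X}^{\top} \bigl(\boldsymbol{y} - \hat{\boldsymbol{y}}\bigr),
\qquad
\frac{\partial \mathcal{R}}{\partial b}
  = -\frac{1}{N} \sum_{n=1}^{N} \bigl(y^{(n)} - \hat{y}^{(n)}\bigr)

% One gradient descent step with learning rate \alpha
\boldsymbol{w} \leftarrow \boldsymbol{w} - \alpha\, \frac{\partial \mathcal{R}}{\partial \boldsymbol{w}},
\qquad
b \leftarrow b - \alpha\, \frac{\partial \mathcal{R}}{\partial b}
```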

# Method of model_LR
def backward(self, labels):
    """
    - labels: true labels, shape=[N, 1]
    """
    N = labels.shape[0]
    # Compute the partial derivatives
    self.grads['w'] = -1 / N * torch.matmul(self.X.t(), (labels - self.outputs))
    self.grads['b'] = -1 / N * torch.sum(labels - self.outputs)

Parameter update

from abc import ABC, abstractmethod


# Base class for optimizers
class Optimizer(ABC):
    def __init__(self, init_lr, model):
        # Learning rate used in the parameter update
        self.init_lr = init_lr
        # The model whose parameters are optimized
        self.model = model

    @abstractmethod
    def step(self):
        # Defines how the parameters are updated at each iteration
        pass


class SimpleBatchGD(Optimizer):
    def __init__(self, init_lr, model):
        super(SimpleBatchGD, self).__init__(init_lr=init_lr, model=model)

    def step(self):
        # Update every parameter according to equations (3.8) and (3.9)
        if isinstance(self.model.params, dict):
            for key in self.model.params.keys():
                self.model.params[key] = self.model.params[key] - self.init_lr * self.model.grads[key]

Evaluation metric

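This task uses accuracy as the evaluation metric: the number of correct predictions divided by the total number of predictions.

def accuracy(preds, labels):
    """
    - preds: predictions; shape=[N, 1] for binary classification, shape=[N, C] for multi-class,
      where N is the number of samples and C is the number of classes
    - labels: true labels; shape=[N, 1] for binary classification, shape=[N] for multi-class
    - returns the accuracy as a scalar tensor
    """
    # preds.shape[1] == 1 means binary classification, preds.shape[1] > 1 means multi-class
    if preds.shape[1] == 1:
        # Binary: a probability of at least 0.5 is class 1, otherwise class 0;
        # the result is converted to float32
        preds = (preds >= 0.5).to(torch.float32)
    else:
        # Multi-class: torch.argmax gives the index of the largest element as the class
        preds = torch.argmax(preds, 1)
        preds = preds.to(torch.int32)
    return torch.mean(torch.as_tensor((preds == labels), dtype=torch.float32))


# Suppose the predictions are [[0.],[1.],[1.],[0.]] and the true classes are [[1.],[1.],[0.],[0.]]
preds = torch.tensor([[0.], [1.], [1.], [0.]])
labels = torch.tensor([[1.], [1.], [0.], [0.]])
print("accuracy is:", accuracy(preds, labels))

Output:

accuracy is: tensor(0.5000)

Completing the Runner class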

Building on the Runner from the linear regression experiment, this chapter's Runner optimizes the model with gradient descent, computes and prints the loss and the metric on the training and validation sets during training, and saves the best model along the way.

import torch


class RunnerV2(object):
    def __init__(self, model, optimizer, metric, loss_fn):
        self.model = model
        self.optimizer = optimizer
        self.loss_fn = loss_fn
        self.metric = metric
        # Metric values recorded during training
        self.train_scores = []
        self.dev_scores = []
        # Loss values recorded during training
        self.train_loss = []
        self.dev_loss = []

    def train(self, train_set, dev_set, **kwargs):
        # Number of training epochs, 0 if not given
        num_epochs = kwargs.get("num_epochs", 0)
        # Logging interval in epochs, 100 if not given
        log_epochs = kwargs.get("log_epochs", 100)
        # Path for saving the model, "best_model.pdparams" if not given
        save_path = kwargs.get("save_path", "best_model.pdparams")
        # Optional function for printing gradients, None if not given
        print_grads = kwargs.get("print_grads", None)
        # Best validation score so far
        best_score = 0
        # Train for num_epochs epochs
        for epoch in range(num_epochs):
            X, y = train_set
            # Model predictions
            logits = self.model(X)
            # Cross-entropy loss
            trn_loss = self.loss_fn(logits, y).item()
            self.train_loss.append(trn_loss)
            # Evaluation metric
            trn_score = self.metric(logits, y).item()
            self.train_scores.append(trn_score)
            # Parameter gradients
            self.model.backward(y)
            if print_grads is not None:
                # Print the gradients of each layer
                print_grads(self.model)
            # Update the model parameters
            self.optimizer.step()
            dev_score, dev_loss = self.evaluate(dev_set)
            # Save the model whenever the validation score improves
            if dev_score > best_score:
                self.save_model(save_path)
                print(f"best accuracy performence has been updated: {best_score:.5f} --> {dev_score:.5f}")
                best_score = dev_score
            if epoch % log_epochs == 0:
                print(f"[Train] epoch: {epoch}, loss: {trn_loss}, score: {trn_score}")
                print(f"[Dev] epoch: {epoch}, loss: {dev_loss}, score: {dev_score}")

    def evaluate(self, data_set):
        X, y = data_set
        # Model output
        logits = self.model(X)
        # Loss
        loss = self.loss_fn(logits, y).item()
        self.dev_loss.append(loss)
        # Evaluation metric
        score = self.metric(logits, y).item()
        self.dev_scores.append(score)
        return score, loss

    def predict(self, X):
        return self.model(X)

    def save_model(self, save_path):
        torch.save(self.model.params, save_path)

    def load_model(self, model_path):
        self.model.params = torch.load(model_path)

Model training

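We optimize the Logistic regression model with the cross-entropy loss and gradient descent for 500 epochs, printing the training and validation metrics every 50 epochs.

# Fix the random seed so that every run gives the same result
torch.manual_seed(102)
# Feature dimension
input_dim = 2
# Learning rate
lr = 0.1
# Instantiate the model
model = model_LR(input_dim=input_dim)
# Choose the optimizer
optimizer = SimpleBatchGD(init_lr=lr, model=model)
# Choose the loss function
loss_fn = BinaryCrossEntropyLoss()
# Choose the evaluation metric
metric = accuracy
# Instantiate the Runner (the class listed above as RunnerV2) and pass in the training configuration
runner = Runner(model, optimizer, metric, loss_fn)
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=500, log_epochs=50, save_path="best_model.pdparams")

Output: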

best accuracy performence has been updated: 0.00000 --> 0.75000

[Train] epoch: 0, loss: 0.693146824836731, score: 0.5

[Dev] epoch: 0, loss: 0.6844645738601685, score: 0.75

[Train] epoch: 50, loss: 0.48319950699806213, score: 0.807812511920929

[Dev] epoch: 50, loss: 0.519908607006073, score: 0.75

[Train] epoch: 100, loss: 0.4398561418056488, score: 0.8140624761581421

[Dev] epoch: 100, loss: 0.4893949627876282, score: 0.75

best accuracy performence has been updated: 0.75000 --> 0.75625

[Train] epoch: 150, loss: 0.42317506670951843, score: 0.817187488079071

[Dev] epoch: 150, loss: 0.4799765646457672, score: 0.7562500238418579

best accuracy performence has been updated: 0.75625 --> 0.76250

[Train] epoch: 200, loss: 0.41500502824783325, score: 0.823437511920929

[Dev] epoch: 200, loss: 0.47652289271354675, score: 0.762499988079071

[Train] epoch: 250, loss: 0.4104517996311188, score: 0.8203125

[Dev] epoch: 250, loss: 0.47522956132888794, score: 0.7437499761581421

[Train] epoch: 300, loss: 0.407705694437027, score: 0.8218749761581421

[Dev] epoch: 300, loss: 0.4748341143131256, score: 0.75

[Train] epoch: 350, loss: 0.4059614837169647, score: 0.823437511920929

[Dev] epoch: 350, loss: 0.4748414158821106, score: 0.7562500238418579

[Train] epoch: 400, loss: 0.40481358766555786, score: 0.8265625238418579

[Dev] epoch: 400, loss: 0.47503310441970825, score: 0.75

[Train] epoch: 450, loss: 0.40403881669044495, score: 0.828125

[Dev] epoch: 450, loss: 0.4753051698207855, score: 0.75

Next, we plot how the accuracy and the loss change on the training and validation sets:

def plot(runner, fig_name):
    plt.figure(figsize=(10, 5))
    plt.subplot(1, 2, 1)
    epochs = [i for i in range(len(runner.train_scores))]
    # Training loss curve
    plt.plot(epochs, runner.train_loss, color='#e4007f', label="Train loss")
    # Validation loss curve
    plt.plot(epochs, runner.dev_loss, color='#f19ec2', linestyle='--', label="Dev loss")
    # Axis labels and legend
    plt.ylabel("loss", fontsize='large')
    plt.xlabel("epoch", fontsize='large')
    plt.legend(loc='upper right', fontsize='x-large')
    plt.subplot(1, 2, 2)
    # Training accuracy curve
    plt.plot(epochs, runner.train_scores, color='#e4007f', label="Train accuracy")
    # Validation accuracy curve
    plt.plot(epochs, runner.dev_scores, color='#f19ec2', linestyle='--', label="Dev accuracy")
    # Axis labels and legend
    plt.ylabel("score", fontsize='large')
    plt.xlabel("epoch", fontsize='large')
    plt.legend(loc='lower right', fontsize='x-large')
    plt.tight_layout()
    plt.savefig(fig_name)
    plt.show()


plot(runner, fig_name='linear-acc.pdf')

Output:

The curves show that the loss converges on both sets (about 0.40 on the training set and 0.475 on the validation set by epoch 450), with an accuracy of about 0.83 on the training set and 0.75 on the validation set, so the training behaves reasonably.

Model evaluation

Evaluate the trained model on the test set and look at its accuracy and loss:

score, loss = runner.evaluate([X_test, y_test])
print("[Test] score/loss: {:.4f}/{:.4f}".format(score, loss))

Output:

[Test] score/loss: 0.8100/0.4706

Visualise the fitted decision boundary:

def decision_boundary(w, b, x1):
    # Solve w1 * x1 + w2 * x2 + b = 0 for x2
    w1, w2 = w
    x2 = (- w1 * x1 - b) / w2
    return x2


plt.figure(figsize=(5, 5))
# Plot the original data
plt.scatter(X[:, 0].tolist(), X[:, 1].tolist(), marker='*', c=y.tolist())
w = model.params['w']
b = model.params['b']
x1 = torch.linspace(-2, 3, 1000)
x2 = decision_boundary(w, b, x1)
# Plot the decision boundary
plt.plot(x1.tolist(), x2.tolist(), color="red")
plt.show()

Output:

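Multi-class classification with Softmax regression

Logistic regression handles binary classification well, but linear classification also covers multi-class problems. Softmax regression is the generalisation of Logistic regression to multiple classes, so we now use it for a multi-class experiment.

As before, the imports come first:

import os

import matplotlib.pyplot as plt
import numpy as np
import torch

os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

Dataset construction

We first build a simple multi-class task. The data come from 3 different clusters, one per class, so C = 3.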

def make_multiclass_classification(n_samples=100, n_features=2, n_classes=3, shuffle=True, noise=0.1):
    """
    Generate noisy multi-class data:
    - n_samples: number of samples, int
    - n_features: number of features, int
    - n_classes: number of classes, int
    - shuffle: whether to shuffle the data, bool
    - noise: fraction of samples whose label is re-drawn at random, None or float;
      None means no noise
    - X: features, shape=[n_samples, n_features]
    - y: labels, shape=[n_samples]
    """
    # Number of samples per class
    n_samples_per_class = [int(n_samples / n_classes) for k in range(n_classes)]
    for i in range(n_samples - sum(n_samples_per_class)):
        n_samples_per_class[i % n_classes] += 1
    # Initialise features and labels to 0
    X = torch.zeros([n_samples, n_features])
    y = torch.zeros([n_samples])
    y = y.to(torch.int32)
    # Randomly pick n_classes cluster centres (distinct corners of a hypercube)
    centroids = torch.randperm(2 ** n_features)[:n_classes]
    centroids_bin = np.unpackbits(centroids.numpy().astype('uint8')).reshape((-1, 8))[:, -n_features:]
    centroids = torch.tensor(centroids_bin)
    centroids = centroids.to(torch.float32)
    # Control how far apart the cluster centres are
    centroids = 1.5 * centroids - 1
    # Draw random feature values
    X[:, :n_features] = torch.randn([n_samples, n_features])
    stop = 0
    # Move the features of each class close to its cluster centre
    for k, centroid in enumerate(centroids):
        start, stop = stop, stop + n_samples_per_class[k]
        # Assign the labels
        y[start:stop] = k % n_classes
        X_k = X[start:stop, :n_features]
        # Control the spread of each class with a random linear map
        A = 2 * torch.rand([n_features, n_features]) - 1
        X_k[...] = torch.matmul(X_k, A)
        X_k += centroid
        X[start:stop, :n_features] = X_k
    # Add label noise if noise is given and positive
    if noise is not None and noise > 0.0:
        # Noise mask that selects which samples receive a random label
        noise_mask = torch.rand([n_samples]) < noise
        for i in range(len(noise_mask)):
            if noise_mask[i]:
                # Give the selected sample a random label
                y[i] = torch.randint(n_classes, [1]).to(torch.int32)
    # Shuffle all samples if requested
    if shuffle:
        idx = torch.randperm(X.shape[0])
        X = X[idx]
        y = y[idx]
    return X, y

Randomly sample 1000 points and visualise them:

torch.manual_seed(102)

n_samples = 1000

X, y = make_multiclass_classification(n_samples=n_samples, n_features=2, n_classes=3, noise=0.2)

plt.figure(figsize=(5, 5))

plt.scatter(x=X[:, 0].tolist(), y=X[:, 1].tolist(), marker='*', c=y.tolist())

plt.savefig('linear-dataset-vis2.pdf')

plt.show()

Output:

Split the data into training, validation and test sets:

num_train = 640

num_dev = 160

num_test = 200

X_train, y_train = X[:num_train], y[:num_train]

X_dev, y_dev = X[num_train:num_train + num_dev], y[num_train:num_train + num_dev]

X_test, y_test = X[num_train + num_dev:], y[num_train + num_dev:]

Model construction

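In Softmax regression, a class is predicted by estimating the conditional probability that the input belongs to each class.

Softmax function

The Softmax formula itself is not repeated here. Note that a naive implementation can overflow or underflow (https://blog.csdn.net/lanchunhui/article/details/52083273). To avoid this, compute Softmax on x - max(x) instead of x; subtracting the same constant from every component leaves the result unchanged.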
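To make the overflow issue concrete, here is a minimal standard-library sketch (plain lists instead of tensors; the function names `softmax_naive` and `softmax_stable` are chosen here for illustration). For a vector x, Softmax divides exp(x_k) by the sum of exp(x_i) over all i, so large inputs overflow in the naive form, while the shifted form only ever exponentiates values that are at most 0.

```python
import math


def softmax_naive(xs):
    """Direct implementation: exp(x_k) / sum_i exp(x_i)."""
    exps = [math.exp(x) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def softmax_stable(xs):
    """Same function, computed on x - max(x) so the largest exponent is 0."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


small = [1.0, 2.0, 3.0, 4.0]
print([round(p, 4) for p in softmax_stable(small)])  # [0.0321, 0.0871, 0.2369, 0.6439]

large = [1000.0, 1001.0, 1002.0]
try:
    softmax_naive(large)
except OverflowError as err:
    print("naive version failed:", err)
# The shifted version still returns a valid probability vector
print(softmax_stable(large))
```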

def softmax(X):
    """
    - X: shape=[N, C], N is the number of vectors, C is their dimension
    """
    # torch.max along a dimension returns (values, indices); [0] selects the values
    x_max = torch.max(X, 1, keepdim=True)[0]  # N,1
    x_exp = torch.exp(X - x_max)
    partition = torch.sum(x_exp, 1, keepdim=True)  # N,1
    return x_exp / partition


# See how softmax behaves on two example rows
X = torch.tensor([[0.1, 0.2, 0.3, 0.4], [1, 2, 3, 4]])
predict = softmax(X)
print(predict)

Output:

tensor([[0.2138, 0.2363, 0.2612, 0.2887],
        [0.0321, 0.0871, 0.2369, 0.6439]])

Softmax regression operator

from nndl import op
import torch
from torch.nn import functional as F


def softmax(X):
    """
    - X: shape=[N, C], N is the number of vectors, C is their dimension
    """
    # torch.max along a dimension returns (values, indices); [0] selects the values
    x_max = torch.max(X, 1, keepdim=True)[0]  # N,1
    x_exp = torch.exp(X - x_max)
    partition = torch.sum(x_exp, 1, keepdim=True)  # N,1
    return x_exp / partition


class model_SR(op.Op):
    def __init__(self, input_dim, output_dim):
        super(model_SR, self).__init__()
        self.params = {}
        # Initialise all weights of the linear layer to 0
        self.params['W'] = torch.zeros([input_dim, output_dim])
        # Alternative: self.params['W'] = torch.normal(mean=0.0, std=0.01, size=(input_dim, output_dim))
        # Initialise the bias of the linear layer to 0
        self.params['b'] = torch.zeros([output_dim])
        # Gradients of the parameters
        self.grads = {}
        self.X = None
        self.outputs = None
        self.output_dim = output_dim

    def __call__(self, inputs):
        return self.forward(inputs)

    def forward(self, inputs):
        self.X = inputs
        # Linear layer
        score = torch.matmul(self.X, self.params['W']) + self.params['b']
        # Softmax function
        self.outputs = softmax(score)
        return self.outputs

    def backward(self, labels):
        """
        - labels: true labels, shape=[N], where N is the number of samples
        """
        # Compute the partial derivatives
        N = labels.shape[0]
        labels = F.one_hot(labels.to(torch.int64), self.output_dim)
        self.grads['W'] = -1 / N * torch.matmul(self.X.t(), (labels - self.outputs))
        self.grads['b'] = -1 / N * torch.matmul(torch.ones([N]), (labels - self.outputs))

* Note the line x_max = torch.max(X, 1, keepdim=True)[0]. When a dimension is given, torch.max returns both the maximum values and their indices, so the trailing [0] is needed to select the values. With keepdim=True they have shape [N, 1], which broadcasts correctly against the [N, C] tensor in the subtraction that follows.

from model_SR import model_SR
from MultiCrossEntropyLoss import MultiCrossEntropyLoss

# Randomly generate 1 sample of length 4
inputs = torch.randn([1, 4])
print('Input is:', inputs)
# Instantiate the model with input length 4 and 3 output classes
model = model_SR(input_dim=4, output_dim=3)
outputs = model(inputs)
print('Output is:', outputs)

Output:

Input is: tensor([[-0.6014, -1.0122, -0.3023, -1.2277]])
Output is: tensor([[0.3333, 0.3333, 0.3333]])

The output shows that with all-zero initialisation every class gets the same conditional probability, 1/C (here 1/3). This is because, whatever the input, the linear layer XW + b always outputs 0, so after the Softmax function the conditional probabilities of all classes are equal.

Loss function

Softmax regression also uses the cross-entropy loss, and its parameters are likewise optimized with gradient descent.
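In the multi-class case the cross-entropy reduces to the mean negative log-probability that the model assigns to the true class. The sketch below is a dependency-free illustration (plain lists, with a function name chosen here for illustration) showing that uniform predictions over three classes give a loss of ln 3 ≈ 1.0986.

```python
import math


def multi_cross_entropy(predicts, labels):
    """Mean of -log(probability of the true class) over all samples."""
    total = 0.0
    for probs, label in zip(predicts, labels):
        total -= math.log(probs[label])
    return total / len(labels)


# Uniform prediction over three classes, true class 0
uniform = [[1 / 3, 1 / 3, 1 / 3]]
print(round(multi_cross_entropy(uniform, [0]), 4))  # 1.0986

# A confident, correct prediction gives a smaller loss
print(multi_cross_entropy([[0.9, 0.05, 0.05]], [0]) < 0.2)  # True
```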

from nndl import op
import torch


class MultiCrossEntropyLoss(op.Op):
    def __init__(self):
        self.predicts = None
        self.labels = None
        self.num = None

    def __call__(self, predicts, labels):
        return self.forward(predicts, labels)

    def forward(self, predicts, labels):
        """
        - predicts: predicted probabilities, shape=[N, C], N is the number of samples, C the number of classes
        - labels: true class indices, shape=[N]
        - returns the loss as a scalar tensor
        """
        self.predicts = predicts
        self.labels = labels
        self.num = self.predicts.shape[0]
        loss = 0
        # Accumulate -log of the probability assigned to the true class of each sample
        for i in range(0, self.num):
            index = self.labels[i]
            loss -= torch.log(self.predicts[i][index])
        return loss / self.num

A quick test:

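# Suppose the true label is the first class (index 0)
labels = torch.tensor([0])
# Compute the risk
mce_loss = MultiCrossEntropyLoss()
print(mce_loss(outputs, labels))

Output:

tensor(1.0986)

Model optimization

The parameters W and b are updated with gradient descent.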
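For reference, writing $\boldsymbol{Y}$ for the $N \times C$ matrix of one-hot labels and $\hat{\boldsymbol{Y}}$ for the $N \times C$ matrix of Softmax outputs (notation introduced here for illustration), the gradients of the mean cross-entropy risk $\mathcal{R}$ that the backward function has to compute are:

```latex
\frac{\partial \mathcal{R}}{\partial \boldsymbol{W}}
  = -\frac{1}{N}\, \boldsymbol{X}^{\top} \bigl(\boldsymbol{Y} - \hat{\boldsymbol{Y}}\bigr),
\qquad
\frac{\partial \mathcal{R}}{\partial \boldsymbol{b}}
  = -\frac{1}{N}\, \bigl(\boldsymbol{Y} - \hat{\boldsymbol{Y}}\bigr)^{\top} \boldsymbol{1}_{N}
```

Here $\boldsymbol{1}_{N}$ is the all-ones vector of length $N$, so the bias gradient is the column-wise sum of $\boldsymbol{Y} - \hat{\boldsymbol{Y}}$ scaled by $-1/N$.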

Gradient computation

The gradients are computed in the backward method of the model_SR class listed above; the relevant method is repeated here:

# Method of model_SR
def backward(self, labels):
    """
    - labels: true labels, shape=[N], where N is the number of samples
    """
    N = labels.shape[0]
    # Convert the class indices to one-hot vectors
    labels = F.one_hot(labels.to(torch.int64), self.output_dim)
    self.grads['W'] = -1 / N * torch.matmul(self.X.t(), (labels - self.outputs))
    self.grads['b'] = -1 / N * torch.matmul(torch.ones([N]), (labels - self.outputs))

Parameter update

The parameters are updated in the same way as in Section 3.1.4.

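Model training

Instantiate the Runner class and pass in the training configuration.

from Optimizer import SimpleBatchGD
from Runner import Runner

torch.manual_seed(102)
# Feature dimension
input_dim = 2
# Number of classes
output_dim = 3
# Learning rate
lr = 0.1
# Instantiate the model
model = model_SR(input_dim=input_dim, output_dim=output_dim)
# Choose the optimizer
optimizer = SimpleBatchGD(init_lr=lr, model=model)
# Choose the loss function
loss_fn = MultiCrossEntropyLoss()
# Choose the evaluation metric
metric = accuracy
# Instantiate the Runner (the class listed above as RunnerV2)
runner = Runner(model, optimizer, metric, loss_fn)
# Train the model.
# Note: the original run misspelled this keyword as log_eopchs, so the log below
# was printed with the default interval of 100 epochs rather than 50.
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=500, log_epochs=50, eval_epochs=1, save_path="best_model.pdparams")
plot(runner, fig_name='linear-acc2.pdf')

Output: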

best accuracy performence has been updated: 0.00000 --> 0.70625

[Train] epoch: 0, loss: 1.0986149311065674, score: 0.3218750059604645

[Dev] epoch: 0, loss: 1.0805636644363403, score: 0.706250011920929

best accuracy performence has been updated: 0.70625 --> 0.71250

best accuracy performence has been updated: 0.71250 --> 0.71875

best accuracy performence has been updated: 0.71875 --> 0.72500

best accuracy performence has been updated: 0.72500 --> 0.73125

best accuracy performence has been updated: 0.73125 --> 0.73750

best accuracy performence has been updated: 0.73750 --> 0.74375

best accuracy performence has been updated: 0.74375 --> 0.75000

best accuracy performence has been updated: 0.75000 --> 0.75625

best accuracy performence has been updated: 0.75625 --> 0.76875

best accuracy performence has been updated: 0.76875 --> 0.77500

best accuracy performence has been updated: 0.77500 --> 0.78750

[Train] epoch: 100, loss: 0.7155234813690186, score: 0.768750011920929

[Dev] epoch: 100, loss: 0.7977758049964905, score: 0.7875000238418579

best accuracy performence has been updated: 0.78750 --> 0.79375

best accuracy performence has been updated: 0.79375 --> 0.80000

[Train] epoch: 200, loss: 0.6921818852424622, score: 0.784375011920929

[Dev] epoch: 200, loss: 0.8020225763320923, score: 0.793749988079071

best accuracy performence has been updated: 0.80000 --> 0.80625

[Train] epoch: 300, loss: 0.6840380430221558, score: 0.7906249761581421

[Dev] epoch: 300, loss: 0.81141597032547, score: 0.8062499761581421

best accuracy performence has been updated: 0.80625 --> 0.81250

[Train] epoch: 400, loss: 0.680213987827301, score: 0.807812511920929

[Dev] epoch: 400, loss: 0.8198073506355286, score: 0.8062499761581421

Model evaluation

score, loss = runner.evaluate([X_test, y_test])

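print("[Test] score/loss: {:.4f}/{:.4f}".format(score, loss))

Output:

[Test] score/loss: 0.8400/0.7014

Practice: Iris classification with Softmax regression

Having reviewed the basic linear classification experiments above, we now put them into practice on the Iris classification task, using the Iris dataset. The workflow covers data processing, model construction, loss function definition, optimizer construction, model training, model evaluation and model prediction.

Most readers will already be familiar with this dataset; it can be downloaded and looked up online if needed.

First, import the required packages: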

from sklearn.datasets import load_iris
import matplotlib.pyplot as plt  # visualisation
import numpy as np
import pandas
import torch

# Model training utilities
from nndl1 import op, metric, opitimizer
from nndl1.runner import RunnerV2

Here nndl1 is the code package shared in the course group, converted to PyTorch.

Before use, the dataset should be cleaned: check for and handle missing values and outliers.

iris_features = np.array(load_iris().data, dtype=np.float32)

iris_labels = np.array(load_iris().target, dtype=np.int32)

print(pandas.isna(iris_features).sum())

print(pandas.isna(iris_labels).sum())

Output:

0
0

So the dataset contains no missing values.

Data loading

The dataset is split into training, validation and test sets in the proportions 0.8, 0.1 and 0.1.
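As a small illustration of how these proportions translate into sample counts, here is a hedged sketch; the helper `split_sizes` is hypothetical and is not part of the code used in this post, which hard-codes the counts.

```python
def split_sizes(n_samples, train_ratio=0.8, dev_ratio=0.1):
    """Return (num_train, num_dev, num_test); the test set takes the remainder."""
    num_train = round(n_samples * train_ratio)
    num_dev = round(n_samples * dev_ratio)
    num_test = n_samples - num_train - num_dev
    return num_train, num_dev, num_test


# The Iris dataset has 150 samples
print(split_sizes(150))  # (120, 15, 15)
```

Giving the remainder to the test set guarantees that the three counts always add up to the dataset size, even when the ratios do not divide it evenly.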

def load_data(shuffle=True):  # load the Iris data
    """
    - shuffle: whether to shuffle the data, bool
    - X: features, shape=[150, 4]
    - y: labels, shape=[150]
    """
    # Load the raw data
    X = np.array(load_iris().data, dtype=np.float32)
    y = np.array(load_iris().target, dtype=np.int32)
    X = torch.tensor(X)
    y = torch.tensor(y)
    # Min-max normalisation of each feature to [0, 1]
    X_min = torch.min(X, 0)
    X_max = torch.max(X, 0)
    X = (X - X_min.values) / (X_max.values - X_min.values)
    # Randomly shuffle the data if requested
    if shuffle:
        idx = torch.randperm(X.shape[0])
        X = X[idx]
        y = y[idx]
    return X, y

torch.manual_seed(102)

num_train = 120

num_dev = 15

num_test = 15

X, y = load_data(shuffle=True)

print("X shape: ", X.shape, "y shape: ", y.shape)

X_train, y_train = X[:num_train], y[:num_train]

X_dev, y_dev = X[num_train:num_train + num_dev], y[num_train:num_train + num_dev]

X_test, y_test = X[num_train + num_dev:], y[num_train + num_dev:]

Model construction

# Input dimension
input_dim = 4
# Number of classes
output_dim = 3
# Instantiate the model
model = op.model_SR(input_dim=input_dim, output_dim=output_dim)

Model training

# Learning rate
lr = 0.2
# Gradient descent
optimizer = opitimizer.SimpleBatchGD(init_lr=lr, model=model)
# Cross-entropy loss
loss_fn = op.MultiCrossEntropyLoss()
# Accuracy
metric = metric.accuracy
# Instantiate RunnerV2
runner = RunnerV2(model, optimizer, metric, loss_fn)
# Start training
runner.train([X_train, y_train], [X_dev, y_dev], num_epochs=200, log_epochs=10, save_path="best_model.pdparams")

Output:

best accuracy performence has been updated: 0.00000 --> 0.40000

[Train] epoch: 0, loss: 1.09861159324646, score: 0.31666672229766846

[Dev] epoch: 0, loss: 1.0828731060028076, score: 0.40000003576278687

best accuracy performence has been updated: 0.40000 --> 0.46667

best accuracy performence has been updated: 0.46667 --> 0.66667

best accuracy performence has been updated: 0.66667 --> 0.73333

[Train] epoch: 10, loss: 0.9869819283485413, score: 0.6416667699813843

[Dev] epoch: 10, loss: 0.974640965461731, score: 0.73333340883255

[Train] epoch: 20, loss: 0.9098353385925293, score: 0.6500000953674316

[Dev] epoch: 20, loss: 0.8997330069541931, score: 0.73333340883255

[Train] epoch: 30, loss: 0.847343385219574, score: 0.6833333969116211

[Dev] epoch: 30, loss: 0.8396621346473694, score: 0.73333340883255

[Train] epoch: 40, loss: 0.7956345677375793, score: 0.7250000238418579

[Dev] epoch: 40, loss: 0.7903936505317688, score: 0.73333340883255

[Train] epoch: 50, loss: 0.7523643970489502, score: 0.7416666746139526

[Dev] epoch: 50, loss: 0.7495585083961487, score: 0.73333340883255

[Train] epoch: 60, loss: 0.7158005237579346, score: 0.783333420753479

[Dev] epoch: 60, loss: 0.7153650522232056, score: 0.73333340883255

best accuracy performence has been updated: 0.73333 --> 0.80000

[Train] epoch: 70, loss: 0.6845439672470093, score: 0.8083333969116211

[Dev] epoch: 70, loss: 0.6863659620285034, score: 0.8000000715255737

best accuracy performence has been updated: 0.80000 --> 0.86667

[Train] epoch: 80, loss: 0.657481849193573, score: 0.8166667819023132

[Dev] epoch: 80, loss: 0.6614803075790405, score: 0.8666667342185974

[Train] epoch: 90, loss: 0.6339080333709717, score: 0.8416667580604553

[Dev] epoch: 90, loss: 0.64008629322052, score: 0.8666667342185974

[Train] epoch: 100, loss: 0.613059937953949, score: 0.8416667580604553

[Dev] epoch: 100, loss: 0.6212700009346008, score: 0.8666667342185974

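Model evaluation

Evaluate the best model saved during training on the test data and look at its accuracy on the test set:

# Load the best model
runner.load_model('best_model.pdparams')
# Evaluate the model
score, loss = runner.evaluate([X_test, y_test])
print("[Test] score/loss: {:.4f}/{:.4f}".format(score, loss))

Model prediction

Use the trained model to predict the samples in the test set:

# Predict on the test set
logits = runner.predict(X_test)
# Predicted class of the first sample: the class with the largest probability
pred = torch.argmax(logits[0]).item()
# True class of that sample
label = y_test[0].item()
# Print the true class and the predicted class
print("The true category is {} and the predicted category is {}".format(label, pred))

Extensions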

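- Try adjusting hyperparameters such as the learning rate and the number of epochs, and see whether a higher accuracy can be reached;

- Another way to solve a multi-class problem is to split it into one binary task per class and decide the final result by checking whether a sample belongs to each class. Try both approaches and see which gives better results;

- Try other models from "Neural Networks and Deep Learning" (《神经网络与深度学习》) on the Iris task and see whether a higher accuracy can be reached.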

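1. In the first (binary classification) experiment, for example, raising the learning rate from lr = 0.1 to lr = 0.2 changes the test result from [Test] score/loss: 0.8100/0.4706 to [Test] score/loss: 0.8050/0.4717. After several tries I found that both raising and lowering the learning rate change the accuracy, and that some learning rate in between gives the highest accuracy (the plots are omitted because the post is already long). I then varied the number of training epochs, for example num_epochs = 150, and the accuracy changed as well: [Test] score/loss: 0.9333/0.3128 -> [Test] score/loss: 0.8677/0.4475. Over many runs, accuracy is generally low when the number of epochs is small and improves as it grows, until the gains level off after enough epochs.

2. When the multi-class problem is split into binary tasks using error-correcting output codes (ECOC), a longer code gives stronger error correction for the same learning task. However, it also requires more classifiers and therefore more computation. Moreover, with a finite number of classes the number of possible class combinations is finite, so beyond a certain length a longer code no longer helps.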
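To illustrate the error-correcting idea, here is a minimal standard-library sketch of ECOC decoding. The 5-bit codebook is hypothetical and chosen only for this example: each bit stands for the output of one binary classifier, and a sample is assigned to the class whose codeword is closest in Hamming distance to the predicted bits.

```python
def hamming(a, b):
    """Number of positions at which two equal-length bit tuples differ."""
    return sum(x != y for x, y in zip(a, b))


def ecoc_decode(codebook, predicted_bits):
    """Return the class whose codeword is nearest to the predicted bits."""
    return min(codebook, key=lambda cls: hamming(codebook[cls], predicted_bits))


# Hypothetical codebook for three classes; any two codewords differ in at least
# 3 bits, so a single wrong binary classifier can be corrected.
codebook = {
    0: (0, 0, 1, 1, 0),
    1: (0, 1, 0, 1, 1),
    2: (1, 0, 0, 0, 1),
}

# Codeword of class 1 with its fourth bit flipped by one faulty classifier
print(ecoc_decode(codebook, (0, 1, 0, 0, 1)))  # 1
```

With a shorter code, such as one bit per class, a single wrong classifier could already make two classes equally close, which is the trade-off between code length and cost described above.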

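Summary: Linear classification is something I had studied before, but knowledge fades with time. This experiment helped me recover some of what I had forgotten. Knowledge needs to be revisited regularly, and it sticks best when it is applied in practice (in experiments).

References: AI Studio; blog post on overflow and underflow (https://blog.csdn.net/lanchunhui/article/details/52083273)

Prof. Wei's deep learning CSDN homepage (https://blog.csdn.net/qq_38975453?type=blog)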