Handwritten Digit Recognition: Training a CNN on MNIST

Implementing the CNN

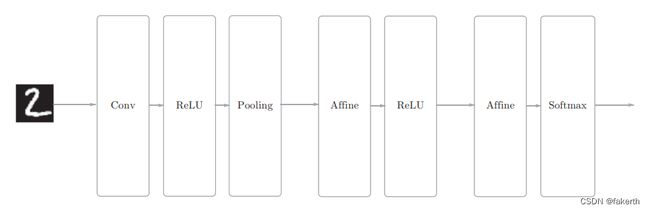

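We have already implemented the convolution layer and the pooling layer. Now we combine these layers to build a CNN that recognizes handwritten digits.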

# Initialize the weights
self.params = {'W1': weight_init_std * np.random.randn(filter_num, input_dim[0], filter_size, filter_size),
               'b1': np.zeros(filter_num),
               'W2': weight_init_std * np.random.randn(pool_output_size, hidden_size),
               'b2': np.zeros(hidden_size),
               'W3': weight_init_std * np.random.randn(hidden_size, output_size),
               'b3': np.zeros(output_size)}

# Create the layers
self.layers = OrderedDict()
self.layers['Conv1'] = Convolution(self.params['W1'], self.params['b1'], conv_param['stride'], conv_param['pad'])
self.layers['Relu1'] = Relu()
self.layers['Pool1'] = Pooling(pool_h=2, pool_w=2, stride=2)
self.layers['Affine1'] = Affine(self.params['W2'], self.params['b2'])
self.layers['Relu2'] = Relu()
self.layers['Affine2'] = Affine(self.params['W3'], self.params['b3'])
self.last_layer = SoftmaxWithLoss()
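The first dimension of W2 has to match the flattened output of the pooling layer. As a rough check of that arithmetic, the sketch below assumes the hyperparameters used throughout this post (28×28 input, 30 filters of size 5, pad 0, stride 1, 2×2 pooling with stride 2). The helper names `conv_out` and `pool_out` are mine and do not appear in the original code.

```python
def conv_out(input_size, filter_size, pad, stride):
    """Side length of a square feature map after convolution."""
    return (input_size + 2 * pad - filter_size) // stride + 1

def pool_out(input_size, pool_size, stride):
    """Side length of a square feature map after pooling (no padding)."""
    return (input_size - pool_size) // stride + 1

filter_num = 30
conv_size = conv_out(28, filter_size=5, pad=0, stride=1)   # 24
pool_size = pool_out(conv_size, pool_size=2, stride=2)     # 12
pool_output_size = filter_num * pool_size * pool_size      # 4320

print(conv_size, pool_size, pool_output_size)  # prints: 24 12 4320
```

So with these settings W2 has shape (4320, hidden_size).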

The SimpleConvNet class is implemented as follows:

# coding: utf-8
import sys, os
sys.path.append(os.pardir)  # so that modules in the parent directory can be imported
import pickle
import numpy as np
from collections import OrderedDict
from common.layers import *
from common.gradient import numerical_gradient


class SimpleConvNet:
    """A simple ConvNet

    conv - relu - pool - affine - relu - affine - softmax
    """

    def __init__(self, input_dim=(1, 28, 28),
                 conv_param={'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                 hidden_size=100, output_size=10, weight_init_std=0.01):
        """
        :param input_dim: dimensions of the input data: (channels, height, width)
        :param conv_param: hyperparameters of the convolution layer (dict) with the keys:
            filter_num  - number of filters
            filter_size - size of each filter
            stride      - stride
            pad         - padding
        :param hidden_size: number of neurons in the hidden (fully connected) layer
        :param output_size: number of neurons in the output (fully connected) layer
        :param weight_init_std: standard deviation of the weights at initialization
        """
        filter_num = conv_param['filter_num']
        filter_size = conv_param['filter_size']
        filter_pad = conv_param['pad']
        filter_stride = conv_param['stride']
        input_size = input_dim[1]
        conv_output_size = (input_size - filter_size + 2*filter_pad) / filter_stride + 1
        pool_output_size = int(filter_num * (conv_output_size/2) * (conv_output_size/2))

        # Initialize the weights
        self.params = {'W1': weight_init_std * np.random.randn(filter_num, input_dim[0], filter_size, filter_size),
                       'b1': np.zeros(filter_num),
                       'W2': weight_init_std * np.random.randn(pool_output_size, hidden_size),
                       'b2': np.zeros(hidden_size),
                       'W3': weight_init_std * np.random.randn(hidden_size, output_size),
                       'b3': np.zeros(output_size)}

        # Create the layers
        self.layers = OrderedDict()
        self.layers['Conv1'] = Convolution(self.params['W1'], self.params['b1'], conv_param['stride'], conv_param['pad'])
        self.layers['Relu1'] = Relu()
        self.layers['Pool1'] = Pooling(pool_h=2, pool_w=2, stride=2)
        self.layers['Affine1'] = Affine(self.params['W2'], self.params['b2'])
        self.layers['Relu2'] = Relu()
        self.layers['Affine2'] = Affine(self.params['W3'], self.params['b3'])
        self.last_layer = SoftmaxWithLoss()

    def predict(self, x):
        for layer in self.layers.values():
            x = layer.forward(x)
        return x

    def loss(self, x, t):
        """Compute the loss.

        x is the input data and t is the teacher (ground-truth) label.
        """
        y = self.predict(x)
        return self.last_layer.forward(y, t)

    def accuracy(self, x, t, batch_size=100):
        if t.ndim != 1:
            t = np.argmax(t, axis=1)  # convert one-hot labels to class indices
        acc = 0.0
        # Samples beyond the last full batch are not evaluated
        for i in range(int(x.shape[0] / batch_size)):
            tx = x[i*batch_size:(i+1)*batch_size]
            tt = t[i*batch_size:(i+1)*batch_size]
            y = self.predict(tx)
            y = np.argmax(y, axis=1)
            acc += np.sum(y == tt)
        return acc / x.shape[0]

    def numerical_gradient(self, x, t):
        """Compute the gradients by numerical differentiation.

        Parameters
        ----------
        x : input data
        t : teacher labels

        Returns
        -------
        Dictionary holding the gradients of each layer:
            grads['W1'], grads['W2'], ... are the weight gradients
            grads['b1'], grads['b2'], ... are the bias gradients
        """
        loss_w = lambda w: self.loss(x, t)
        grads = {}
        for idx in (1, 2, 3):
            grads['W' + str(idx)] = numerical_gradient(loss_w, self.params['W' + str(idx)])
            grads['b' + str(idx)] = numerical_gradient(loss_w, self.params['b' + str(idx)])
        return grads

    def gradient(self, x, t):
        """Compute the gradients by backpropagation.

        Parameters
        ----------
        x : input data
        t : teacher labels

        Returns
        -------
        Dictionary holding the gradients of each layer:
            grads['W1'], grads['W2'], ... are the weight gradients
            grads['b1'], grads['b2'], ... are the bias gradients
        """
        # forward
        self.loss(x, t)

        # backward
        dout = 1
        dout = self.last_layer.backward(dout)
        layers = list(self.layers.values())
        layers.reverse()
        for layer in layers:
            dout = layer.backward(dout)

        # Collect the gradients stored in each layer
        grads = {'W1': self.layers['Conv1'].dW,
                 'b1': self.layers['Conv1'].db,
                 'W2': self.layers['Affine1'].dW,
                 'b2': self.layers['Affine1'].db,
                 'W3': self.layers['Affine2'].dW,
                 'b3': self.layers['Affine2'].db}
        return grads

    def save_params(self, file_name="params.pkl"):
        params = {}
        for key, val in self.params.items():
            params[key] = val
        with open(file_name, 'wb') as f:
            pickle.dump(params, f)

    def load_params(self, file_name="params.pkl"):
        with open(file_name, 'rb') as f:
            params = pickle.load(f)
        for key, val in params.items():
            self.params[key] = val
        # Point each layer at the newly loaded weights and biases
        for i, key in enumerate(['Conv1', 'Affine1', 'Affine2']):
            self.layers[key].W = self.params['W' + str(i+1)]
            self.layers[key].b = self.params['b' + str(i+1)]
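The class offers two ways to compute gradients: numerical differentiation, which is simple but slow, and backpropagation, which is fast. A common use of the slow version is to sanity-check the fast one. The sketch below shows the idea on a toy function rather than on the network itself. `numerical_grad` is a central-difference helper written for this example; it is not the `common.gradient` implementation.

```python
import numpy as np

def numerical_grad(f, x, h=1e-4):
    """Central-difference gradient of a scalar function f at array x."""
    grad = np.zeros_like(x)
    it = np.nditer(x, flags=['multi_index'])
    while not it.finished:
        idx = it.multi_index
        orig = x[idx]
        x[idx] = orig + h
        f_plus = f(x)
        x[idx] = orig - h
        f_minus = f(x)
        grad[idx] = (f_plus - f_minus) / (2 * h)
        x[idx] = orig  # restore the original value
        it.iternext()
    return grad

# Toy "loss": sum of squares, whose exact gradient is 2 * w
f = lambda w: np.sum(w ** 2)
w = np.array([[1.0, -2.0], [0.5, 3.0]])

analytic = 2 * w
numeric = numerical_grad(f, w)
max_diff = np.max(np.abs(analytic - numeric))
print(max_diff)  # should be very close to zero
```

For the real network, the same comparison would be made between the dictionaries returned by `gradient` and `numerical_gradient`, ideally on a very small batch because the numerical version is slow.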

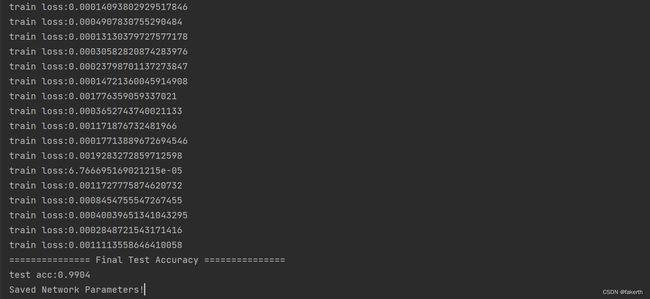

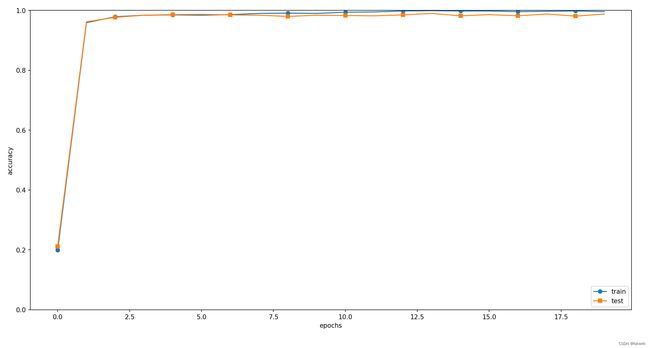

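Now let's train this SimpleConvNet on the MNIST dataset. After training, the recognition accuracy is 99.82% on the training data and 98.96% on the test data (the exact figures vary slightly from run to run). A test accuracy of about 99% is very high for such a small network.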

Training on the MNIST dataset:

# coding: utf-8
import sys, os
sys.path.append(os.pardir)  # so that modules in the parent directory can be imported
import numpy as np
import matplotlib.pyplot as plt
from dataset.mnist import load_mnist
from simple_convnet import SimpleConvNet
from common.trainer import Trainer

# Load the data
(x_train, t_train), (x_test, t_test) = load_mnist(flatten=False)

# Reduce the amount of data if training takes too long
# x_train, t_train = x_train[:5000], t_train[:5000]
# x_test, t_test = x_test[:1000], t_test[:1000]

max_epochs = 20

network = SimpleConvNet(input_dim=(1, 28, 28),
                        conv_param={'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                        hidden_size=100, output_size=10, weight_init_std=0.01)

trainer = Trainer(network, x_train, t_train, x_test, t_test,
                  epochs=max_epochs, mini_batch_size=100,
                  optimizer='Adam', optimizer_param={'lr': 0.001},
                  evaluate_sample_num_per_epoch=1000)
trainer.train()

# Save the parameters
network.save_params("params.pkl")
print("Saved Network Parameters!")

# Plot the accuracy curves
markers = {'train': 'o', 'test': 's'}
x = np.arange(max_epochs)
plt.plot(x, trainer.train_acc_list, marker='o', label='train', markevery=2)
plt.plot(x, trainer.test_acc_list, marker='s', label='test', markevery=2)
plt.xlabel("epochs")
plt.ylabel("accuracy")
plt.ylim(0, 1.0)
plt.legend(loc='lower right')
plt.show()

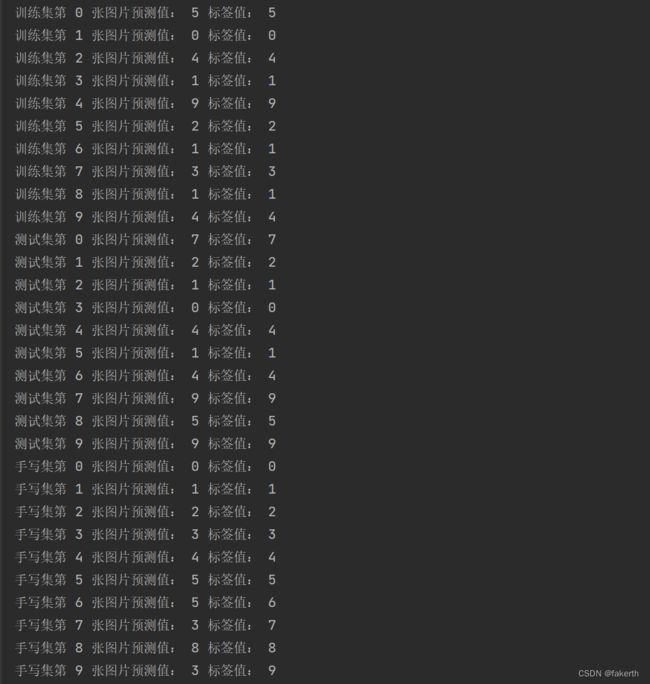

Testing generalization

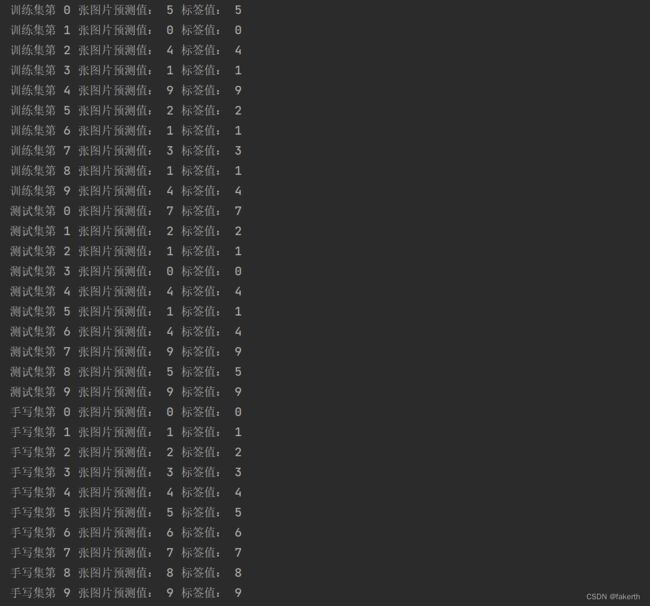

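The trained parameters ought to be put to use. For the MNIST training and test data, we simply load the parameter file and run inference (recognition). Note that the input must be in NCHW format: N images, each with C channels, height H, and width W. We take 10 images from each set.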
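To make the NCHW layout concrete, here is a small NumPy sketch. It uses an all-zero array as a stand-in for the MNIST images returned by `load_mnist(flatten=False)`; the array size of 100 is an arbitrary choice for illustration.

```python
import numpy as np

# Stand-in for MNIST images in NCHW layout: 100 images, 1 channel, 28x28 pixels
x = np.zeros((100, 1, 28, 28), dtype=np.float32)

batch = x[:10]                          # first 10 images, still 4-D
single = x[0]                           # indexing one image drops the N axis
single_batch = single[np.newaxis, ...]  # restore N=1 so it is a valid batch again

print(batch.shape)         # (10, 1, 28, 28)
print(single.shape)        # (1, 28, 28)
print(single_batch.shape)  # (1, 1, 28, 28)
```

Slicing with `x[:10]` keeps all four axes, which is why the sampled images can be passed to `predict` directly.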

def train_img():
    (x_train, t_train), (x_test, t_test) = load_mnist(flatten=False)
    t = 10  # number of images to sample
    x_train_sample, t_train_sample = x_train[:t], t_train[:t]
    network = SimpleConvNet(input_dim=(1, 28, 28),
                            conv_param={'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                            hidden_size=100, output_size=10, weight_init_std=0.01)
    network.load_params("params.pkl")
    a = network.predict(x_train_sample)
    for i in range(t):
        print("Training set image", i, "predicted:", np.argmax(a[i]), "label:", t_train_sample[i])

def test_img():
    (x_train, t_train), (x_test, t_test) = load_mnist(flatten=False)
    t = 10  # number of images to sample
    x_test_sample, t_test_sample = x_test[:t], t_test[:t]
    network = SimpleConvNet(input_dim=(1, 28, 28),
                            conv_param={'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                            hidden_size=100, output_size=10, weight_init_std=0.01)
    network.load_params("params.pkl")
    a = network.predict(x_test_sample)
    for i in range(t):
        print("Test set image", i, "predicted:", np.argmax(a[i]), "label:", t_test_sample[i])

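Why not test it on my own handwriting as well? I used the same 10 handwritten digits as before.

While we're at it, the image processing is wrapped into a single function: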

def deal_img(filename):
    kernel_size = (3, 3)
    img = cv2.imread(filename)
    img_gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)  # convert from BGR to grayscale
    ret, thresh2 = cv2.threshold(img_gray, 127, 255, cv2.THRESH_BINARY)  # pixels > threshold become 255, all others 0
    kernel = np.ones(kernel_size, np.uint8)
    thresh2 = cv2.erode(thresh2, kernel, iterations=1)  # erode the white background, which thickens the dark strokes
    ret, thresh2 = cv2.threshold(thresh2, 127, 255, cv2.THRESH_BINARY_INV)  # pixels > threshold become 0, all others 255
    thresh2 = cv2.resize(thresh2, (28, 28))  # resize to 28x28 (fx/fy have no effect when dsize is given)
    image_out = thresh2.reshape(1, 1, thresh2.shape[0], thresh2.shape[1])  # (H, W) -> (N, C, H, W)
    return image_out

def else_img():
    network = SimpleConvNet(input_dim=(1, 28, 28),
                            conv_param={'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                            hidden_size=100, output_size=10, weight_init_std=0.01)
    network.load_params("params.pkl")
    x = ["0.png", "1.png", "2.png", "3.png", "4.png", "5.png", "6.png", "7.png", "8.png", "9.png"]
    t = np.arange(10)
    for i in range(10):
        image_out = deal_img(x[i])
        a = network.predict(image_out)
        print("Handwritten image", i, "predicted:", np.argmax(a), "label:", t[i])

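The final recognition results are as follows. Three of the 10 handwritten digits were still misclassified, and the image processing is probably responsible for a large share of that. At this point it dawned on me: if I write white digits on a black background, can't I skip most of the image processing altogether?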
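The reason this works is that MNIST digits are stored as bright strokes on a dark background, so dark ink on white paper has to be inverted before it resembles the training data. A minimal NumPy sketch of that inversion, using a made-up 3×3 patch in place of a real scan:

```python
import numpy as np

# Hypothetical 8-bit grayscale patch: a dark vertical stroke (0) on white paper (255)
paper = np.array([[255, 0, 255],
                  [255, 0, 255],
                  [255, 0, 255]], dtype=np.uint8)

# Invert so the stroke becomes bright on a dark background, as in MNIST
mnist_like = 255 - paper

print(mnist_like)
```

Writing white on black from the start makes this step, and the thresholding around it, unnecessary.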

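I wrote 10 new digits:

Sure enough, all of them were recognized. The images still need some processing, just much less of it: they still have to be converted to a single channel: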

def deal_img2(filename):
    img = cv2.imread(filename)
    img_gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)  # convert from BGR to grayscale
    thresh2 = cv2.resize(img_gray, (28, 28))  # resize to 28x28 (fx/fy have no effect when dsize is given)
    image_out = thresh2.reshape(1, 1, thresh2.shape[0], thresh2.shape[1])  # (H, W) -> (N, C, H, W)
    return image_out
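One thing to keep in mind: `load_mnist` in the book's code scales pixel values to the range 0.0 to 1.0 when called with its default `normalize=True`, as the training script does, whereas the arrays returned by the image-processing functions here are still 8-bit values from 0 to 255. The following is a sketch of how the scales could be matched. `to_network_input` is a hypothetical helper of mine, not part of the original program, and I have not tested whether it changes the predictions reported in this post.

```python
import numpy as np

def to_network_input(gray):
    """Turn an (H, W) uint8 grayscale image into a (1, 1, H, W) float32 array in [0, 1]."""
    scaled = gray.astype(np.float32) / 255.0
    return scaled.reshape(1, 1, gray.shape[0], gray.shape[1])

# Stand-in for a 28x28 grayscale image read from disk (all pixels white)
gray = np.full((28, 28), 255, dtype=np.uint8)
x = to_network_input(gray)

print(x.shape, x.dtype, x.max())
```

In the original functions this would amount to dividing the resized image by 255.0 before the final reshape.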

The complete program is as follows:

# coding: utf-8
import sys, os
import cv2
sys.path.append(os.pardir)  # so that modules in the parent directory can be imported
import numpy as np
import matplotlib.pyplot as plt
from dataset.mnist import load_mnist
from simple_convnet import SimpleConvNet
from common.trainer import Trainer
from PIL import Image


def img_show(img):
    pil_img = Image.fromarray(np.uint8(img))
    pil_img.show()

def train_img():
    (x_train, t_train), (x_test, t_test) = load_mnist(flatten=False)
    t = 10  # number of images to sample
    x_train_sample, t_train_sample = x_train[:t], t_train[:t]
    network = SimpleConvNet(input_dim=(1, 28, 28),
                            conv_param={'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                            hidden_size=100, output_size=10, weight_init_std=0.01)
    network.load_params("params.pkl")
    a = network.predict(x_train_sample)
    for i in range(t):
        print("Training set image", i, "predicted:", np.argmax(a[i]), "label:", t_train_sample[i])

def test_img():
    (x_train, t_train), (x_test, t_test) = load_mnist(flatten=False)
    t = 10  # number of images to sample
    x_test_sample, t_test_sample = x_test[:t], t_test[:t]
    network = SimpleConvNet(input_dim=(1, 28, 28),
                            conv_param={'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                            hidden_size=100, output_size=10, weight_init_std=0.01)
    network.load_params("params.pkl")
    a = network.predict(x_test_sample)
    for i in range(t):
        print("Test set image", i, "predicted:", np.argmax(a[i]), "label:", t_test_sample[i])

def deal_img(filename):
    kernel_size = (3, 3)
    img = cv2.imread(filename)
    img_gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)  # convert from BGR to grayscale
    ret, thresh2 = cv2.threshold(img_gray, 127, 255, cv2.THRESH_BINARY)  # pixels > threshold become 255, all others 0
    kernel = np.ones(kernel_size, np.uint8)
    thresh2 = cv2.erode(thresh2, kernel, iterations=1)  # erode the white background, which thickens the dark strokes
    ret, thresh2 = cv2.threshold(thresh2, 127, 255, cv2.THRESH_BINARY_INV)  # pixels > threshold become 0, all others 255
    thresh2 = cv2.resize(thresh2, (28, 28))  # resize to 28x28 (fx/fy have no effect when dsize is given)
    image_out = thresh2.reshape(1, 1, thresh2.shape[0], thresh2.shape[1])  # (H, W) -> (N, C, H, W)
    return image_out

def deal_img2(filename):
    img = cv2.imread(filename)
    img_gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)  # convert from BGR to grayscale
    thresh2 = cv2.resize(img_gray, (28, 28))  # resize to 28x28 (fx/fy have no effect when dsize is given)
    image_out = thresh2.reshape(1, 1, thresh2.shape[0], thresh2.shape[1])  # (H, W) -> (N, C, H, W)
    return image_out

def else_img():
    network = SimpleConvNet(input_dim=(1, 28, 28),
                            conv_param={'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                            hidden_size=100, output_size=10, weight_init_std=0.01)
    network.load_params("params.pkl")
    # First set: dark digits on a white background (to be processed with deal_img)
    x = ["0.png", "1.png", "2.png", "3.png", "4.png", "5.png", "6.png", "7.png", "8.png", "9.png"]
    # Second set: white digits on a black background (to be processed with deal_img2)
    x1 = ["10.png", "11.png", "12.png", "13.png", "14.png", "15.png", "16.png", "17.png", "18.png", "19.png"]
    t = np.arange(10)
    for i in range(10):
        image_out = deal_img2(x1[i])
        a = network.predict(image_out)
        print("Handwritten image", i, "predicted:", np.argmax(a), "label:", t[i])

train_img()
test_img()
else_img()