MobileNetV2: Inverted Residuals and Linear Bottlenecks

Related posts:

MobileNetV1: "MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications"

"ShuffleNet: An Extremely Efficient Convolutional Neural Network for Mobile Devices"

Annotated source code of the MobileNetV2 classification model in PyTorch

Explanation of the groups parameter of nn.Conv in PyTorch

Open-source code: https://github.com/xxcheng0708/Pytorch_Image_Classifier_Template

Note: this post is mainly for my own reference. If I have misunderstood anything, I will fix it when I have time.

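Standard residual block:

1. Use a 1x1 convolution to reduce the number of channels

2. Use an NxN convolution to extract features

3. Use a 1x1 convolution to increase the number of channels back to the input channel count

4. Add the block's input to its output to form the shortcut connection

Note: the standard residual block is wide at both ends and narrow in the middle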

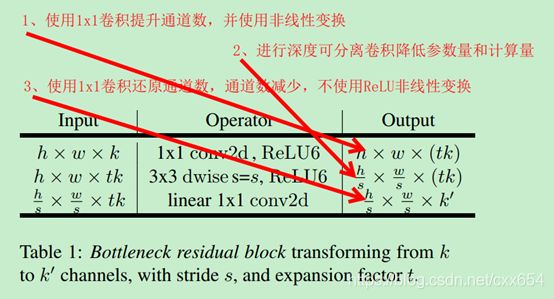

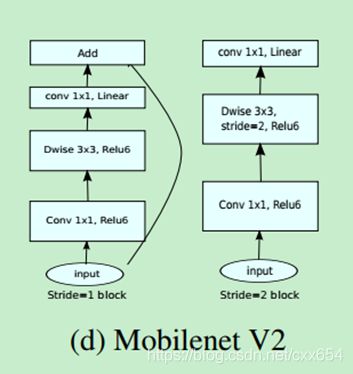
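The four steps of the standard residual block can be sketched as plain channel and parameter arithmetic. This is a minimal illustration rather than code from the paper or from torchvision: the helper names `conv_params` and `bottleneck_plan`, the 256-channel input, and the reduction factor of 4 are assumptions chosen for the example, and batch-norm parameters are ignored.

```python
def conv_params(in_ch, out_ch, kernel_size, groups=1):
    """Weight count of a bias-free 2D convolution."""
    assert in_ch % groups == 0 and out_ch % groups == 0
    return out_ch * (in_ch // groups) * kernel_size * kernel_size


def bottleneck_plan(in_ch, reduction=4, kernel_size=3):
    """Return (name, in_ch, out_ch, kernel) for each conv of a standard bottleneck block.

    The reduction factor of 4 is an assumption made for this illustration.
    """
    mid_ch = in_ch // reduction
    return [
        ("1x1 reduce", in_ch, mid_ch, 1),           # step 1: fewer channels
        ("NxN conv", mid_ch, mid_ch, kernel_size),  # step 2: feature extraction
        ("1x1 expand", mid_ch, in_ch, 1),           # step 3: back to the input width
    ]


plan = bottleneck_plan(256)
# Channel widths along the block: wide, narrow, narrow, wide.
widths = [plan[0][1]] + [out_ch for _, _, out_ch, _ in plan]
total = sum(conv_params(i, o, k) for _, i, o, k in plan)

print(widths)  # [256, 64, 64, 256]
print(total)   # 69632
```

Because the output width equals the input width, step 4 (adding the input to the output) needs no extra projection in this sketch.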

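Inverted residual block:

1. Use a 1x1 convolution to increase the number of channels

2. Use a 3x3 depthwise convolution to extract features in the expanded space

3. Use a 1x1 convolution with no activation (the linear bottleneck) to reduce the number of channels; when the shortcut is used, the reduced count equals the input channel count

4. Add the block's input to its output to form the shortcut connection (only when the stride is 1 and the input and output channel counts match)

Note: the inverted residual block is narrow at both ends and wide in the middle. The wide middle uses a depthwise convolution, which together with the 1x1 projection forms a depthwise separable convolution, to keep the parameter count and computation low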

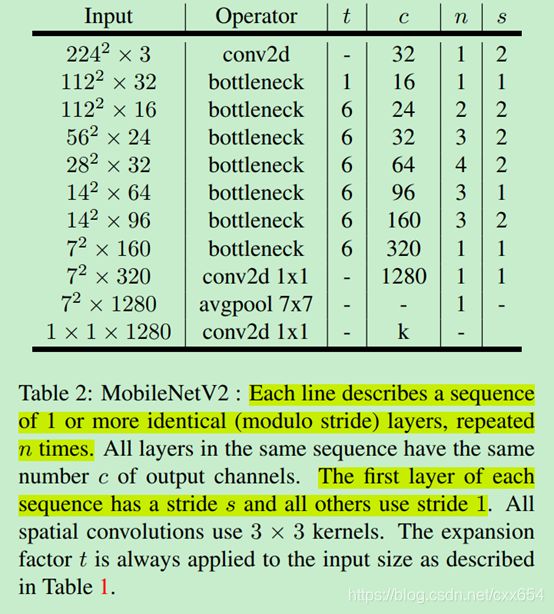
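To see why the wide middle of the inverted residual block is affordable, the weight counts can be compared with and without the depthwise convolution. The sketch below is illustrative only: `conv_params` and `inverted_residual_params` are hypothetical helper names, the 24-channel input with expansion ratio 6 is picked as an example configuration, and batch-norm parameters are ignored.

```python
def conv_params(in_ch, out_ch, kernel_size, groups=1):
    """Weight count of a bias-free 2D convolution."""
    assert in_ch % groups == 0 and out_ch % groups == 0
    return out_ch * (in_ch // groups) * kernel_size * kernel_size


def inverted_residual_params(in_ch, out_ch, expand_ratio, depthwise=True):
    """Per-layer weight counts of an inverted residual block.

    With depthwise=False the middle 3x3 layer is a regular convolution,
    which is only used here as a point of comparison.
    """
    hidden = in_ch * expand_ratio
    groups = hidden if depthwise else 1
    return {
        "expand_1x1": conv_params(in_ch, hidden, 1),
        "middle_3x3": conv_params(hidden, hidden, 3, groups=groups),
        "project_1x1": conv_params(hidden, out_ch, 1),
    }


dw = inverted_residual_params(24, 24, 6, depthwise=True)
full = inverted_residual_params(24, 24, 6, depthwise=False)

print(dw)                  # {'expand_1x1': 3456, 'middle_3x3': 1296, 'project_1x1': 3456}
print(full["middle_3x3"])  # 186624
```

In this configuration the depthwise 3x3 layer has 144 times fewer weights than a regular 3x3 convolution over the same 144 channels, so most of the block's weights sit in the two 1x1 convolutions.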

MobileNetV2 network structure:

Source code of the MobileNetV2 inverted residual block in PyTorch:

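```python
from torch import nn


# ConvBNReLU is the Conv2d + BatchNorm2d + ReLU6 helper used by this version of the
# torchvision source; it is reproduced here so that the snippet is self-contained.
class ConvBNReLU(nn.Sequential):
    def __init__(self, in_planes, out_planes, kernel_size=3, stride=1, groups=1, norm_layer=None):
        padding = (kernel_size - 1) // 2
        if norm_layer is None:
            norm_layer = nn.BatchNorm2d
        super(ConvBNReLU, self).__init__(
            nn.Conv2d(in_planes, out_planes, kernel_size, stride, padding, groups=groups, bias=False),
            norm_layer(out_planes),
            nn.ReLU6(inplace=True),
        )


class InvertedResidual(nn.Module):
    def __init__(self, inp, oup, stride, expand_ratio, norm_layer=None):
        super(InvertedResidual, self).__init__()
        self.stride = stride
        assert stride in [1, 2]

        if norm_layer is None:
            norm_layer = nn.BatchNorm2d

        # Width of the expanded middle part of the block
        hidden_dim = int(round(inp * expand_ratio))
        # The shortcut is only valid when input and output have the same shape
        self.use_res_connect = self.stride == 1 and inp == oup

        layers = []
        if expand_ratio != 1:
            # pw: 1x1 convolution that expands the channels
            layers.append(ConvBNReLU(inp, hidden_dim, kernel_size=1, norm_layer=norm_layer))
        layers.extend([
            # dw: first step of the depthwise separable convolution,
            # a grouped convolution with groups=hidden_dim
            ConvBNReLU(hidden_dim, hidden_dim, stride=stride, groups=hidden_dim, norm_layer=norm_layer),
            # pw-linear: second step of the depthwise separable convolution,
            # a 1x1 convolution that mixes channels, with no activation afterwards
            nn.Conv2d(hidden_dim, oup, 1, 1, 0, bias=False),
            norm_layer(oup),
        ])
        self.conv = nn.Sequential(*layers)

    def forward(self, x):
        if self.use_res_connect:
            # Use the residual connection
            return x + self.conv(x)
        else:
            # No residual connection
            return self.conv(x)
```

MobileNetV2 network structure in PyTorch: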

```text
MobileNetV2(
  (features): Sequential(
    (0): ConvBNReLU(
      (0): Conv2d(3, 32, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)
      (1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (2): ReLU6(inplace=True)
    )
    (1): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=32, bias=False)
          (1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): Conv2d(32, 16, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (2): BatchNorm2d(16, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (2): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(16, 96, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(96, 96, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), groups=96, bias=False)
          (1): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(96, 24, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (3): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(24, 144, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(144, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(144, 144, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=144, bias=False)
          (1): BatchNorm2d(144, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(144, 24, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (4): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(24, 144, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(144, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(144, 144, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), groups=144, bias=False)
          (1): BatchNorm2d(144, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(144, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (5): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(32, 192, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(192, 192, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=192, bias=False)
          (1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(192, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (6): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(32, 192, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(192, 192, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=192, bias=False)
          (1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(192, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (7): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(32, 192, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(192, 192, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), groups=192, bias=False)
          (1): BatchNorm2d(192, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(192, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (8): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(64, 384, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(384, 384, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=384, bias=False)
          (1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(384, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (9): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(64, 384, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(384, 384, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=384, bias=False)
          (1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(384, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (10): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(64, 384, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(384, 384, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=384, bias=False)
          (1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(384, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (11): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(64, 384, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(384, 384, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=384, bias=False)
          (1): BatchNorm2d(384, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(384, 96, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (12): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(96, 576, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(576, 576, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=576, bias=False)
          (1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(576, 96, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (13): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(96, 576, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(576, 576, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=576, bias=False)
          (1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(576, 96, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (14): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(96, 576, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(576, 576, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), groups=576, bias=False)
          (1): BatchNorm2d(576, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(576, 160, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (15): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(160, 960, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(960, 960, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=960, bias=False)
          (1): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(960, 160, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (16): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(160, 960, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(960, 960, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=960, bias=False)
          (1): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(960, 160, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(160, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (17): InvertedResidual(
      (conv): Sequential(
        (0): ConvBNReLU(
          (0): Conv2d(160, 960, kernel_size=(1, 1), stride=(1, 1), bias=False)
          (1): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (1): ConvBNReLU(
          (0): Conv2d(960, 960, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), groups=960, bias=False)
          (1): BatchNorm2d(960, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          (2): ReLU6(inplace=True)
        )
        (2): Conv2d(960, 320, kernel_size=(1, 1), stride=(1, 1), bias=False)
        (3): BatchNorm2d(320, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      )
    )
    (18): ConvBNReLU(
      (0): Conv2d(320, 1280, kernel_size=(1, 1), stride=(1, 1), bias=False)
      (1): BatchNorm2d(1280, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (2): ReLU6(inplace=True)
    )
  )
  (classifier): Sequential(
    (0): Dropout(p=0.2, inplace=False)
    (1): Linear(in_features=1280, out_features=1000, bias=True)
  )
)
```
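The printout above can be cross-checked against the block logic in `InvertedResidual` with a small pure-Python sketch. The `SETTINGS` table of (t, c, n, s) values (expansion ratio, output channels, repeats, stride of the first repeat) is not listed in this post; it is assumed here to be the default width-1.0 configuration, and `plan_blocks` is a hypothetical helper that only mirrors the `hidden_dim`, `expand_ratio != 1`, and `use_res_connect` decisions without building any layers.

```python
# (t, c, n, s): expansion ratio, output channels, repeats, stride of the first repeat.
# Assumed default configuration; it is not given in the text of this post.
SETTINGS = [
    (1, 16, 1, 1),
    (6, 24, 2, 2),
    (6, 32, 3, 2),
    (6, 64, 4, 2),
    (6, 96, 3, 1),
    (6, 160, 3, 2),
    (6, 320, 1, 1),
]


def plan_blocks(settings, stem_channels=32):
    """Expand the table into one dict per InvertedResidual block."""
    blocks = []
    inp = stem_channels
    for t, c, n, s in settings:
        for i in range(n):
            # Only the first block of each group may downsample.
            stride = s if i == 0 else 1
            blocks.append({
                "inp": inp,
                "oup": c,
                "stride": stride,
                "hidden_dim": int(round(inp * t)),
                "has_expand_layer": t != 1,
                "use_res_connect": stride == 1 and inp == c,
            })
            inp = c
    return blocks


blocks = plan_blocks(SETTINGS)

# features[0] is the stem convolution, so blocks[k] corresponds to features[k + 1].
for idx, block in enumerate(blocks, start=1):
    print(idx, block)

total_stride = 2  # the stem convolution has stride 2
for block in blocks:
    total_stride *= block["stride"]
print(total_stride)  # 32
```

Under these assumptions the plan yields 17 blocks whose channel counts and strides match features (1) to (17) in the printout, and the overall downsampling factor of 32 would turn a 224x224 input into a 7x7 feature map before the classifier.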