语义分割模型总结

之前看了一段时间OoD在语义分割中的应用,并且学习了一些基本的语义分割模型,总结记录

本文目录

-

-

- 语义分割

-

- FCN

- Unet

- SegNet(实时语义分割)

- Deeplab v1(VGG16)

-

- 感受野

- Deeplab v2(Resnet)

- Deeplab v3

-

语义分割

语义分割一直存着语义信息和细节信息的矛盾。语义信息足够,局部细节信息不足,细节就会模糊,边缘就会不精准;细节信息准确,语义信息欠缺,像素点预测就会错误。CNN能够很好地编码语义信息和细节信息,整合到一个局部到全局的特征金字塔中。

FCN

FCN (FCN:Fully Convolutional Networks )

- FCN发布于2014年,是语义分割领域全卷积网络的开山之作,U-Net也在其之后

- 其主要思路是将图像分类的网络改良成语义分割的网络,通过将分类器(全连接层)变成上采样层来恢复特征图的尺寸,进行端到端训练

- 分类器变成上采样,这部分思想作者主要的解释是全连接层是一种特殊的卷积

- 选择了AlexNet、GoogLeNet和VGG作为backbone(主干网络),VGG效果最好,但是推理最慢

1)插值:双线性插值;(2)转置卷积(反卷积);(3)反池化。上小节的“Shift-and-stitch”也是。文章用了双线性插值初始化的转置卷积。

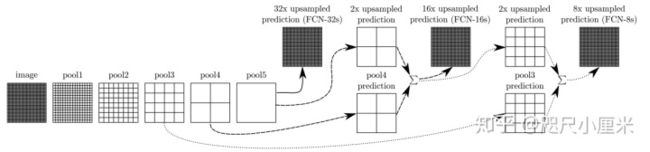

最核心的思想是特征图的融合:假设最后的输出为pool5产生的x,利用转置卷积上采样,放大32倍,得到FCN-32s;将x上采样放大2倍,和pool4产生的特征图直接相加,再上采样放大16倍,得到FCN-16s;将FCN-16s进行上采样放大2倍,与pool3产生的特征图直接相加,在放大8倍,得到FCN-8s。在实验中,FCN-8s的效果最好

Unet

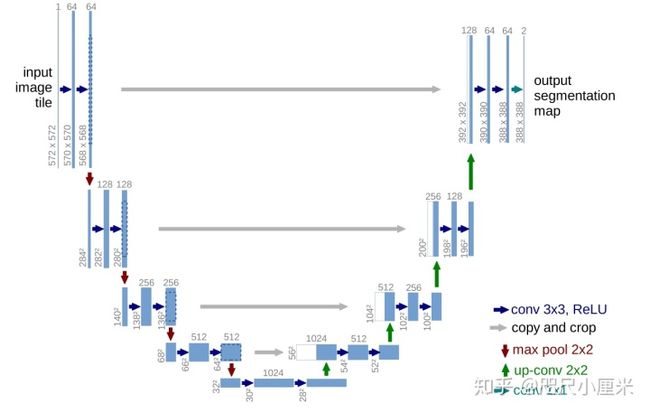

- U-Net发表于2015年,用于医学细胞分割

- 编码器-解码器架构,四次下采样(maxpooling),四次上采样(转置卷积),形成了U型结构

- U-Net最核心的一个思想是特征图的拼接

- 可以应对小样本的数据集进行较快、有效地分割,能够泛化到很多应用场景中去

在在上采样的过程中会丢失部分语义特征,通过拼接的方式,可以恢复部分的语义信息,从而保证分割的精度。

SegNet(实时语义分割)

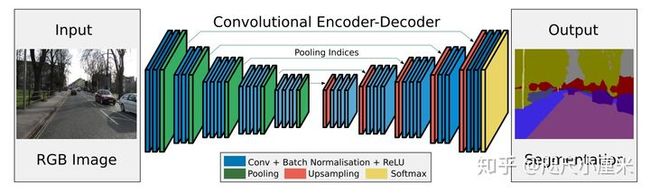

SegNet:A Deep Convolutional Encoder-Decoder Architecture for Image Segmentation

SegNet发布于2015年,使用编码器-解码器结构

其backbone是2个VGG16,去掉全连接层(13层),对应形成编码器-解码器架构

最核心的想法是提出了maxpool的索引来上采样的方法,从而免去了学习上采样的需要,在推理阶段节省了内存

作者说道这个idea是来自于无监督特征学习。在解码器中重新使用编码器池化时的索引下标有这么几个优点:1. 能改善边缘的情况;2. 减少了模型的参数;3. 这种能容易就能整合到任何的编码器-解码器结构中,只需要稍稍改动

文章采用的数据集是CamVid road scene segmentation 和 SUN RGB-D indoor scene segmentation。之所以不用主流的Pascal VOC12,是因为作者认为VOC12的背景太不相同了,所以可能分割起来比较容易

总得来说,SegNet的性能比较一般,不如同时期的DeepLab v1,但是因为它只存储特征映射的maxpool索引,所以最推理阶段内存占用少,更为高效。

Deeplab v1(VGG16)

Semantic Image Segmentation with Deep Convolutional Nets and Fully Connected CRFs

出发点:

- 弥补池化带来的信息丢失

- 像素之间的概率关系并没有被考虑

DCNN应用在语义分割上主要有两个问题:下采样和空间不变性。

在下采样上,现在几乎所有的DCNN都会使用max-pooling来进行下采样,这导致了分辨率的降低,从而引起了细节信息的丢失。本文提出了空洞卷积(‘atrous’ with holes algorithm)来解决这个问题。

在空间不变性上,作者谈到限制DCNN空间准确率一个原因是从分类器获得以对象为中心的决策需要空间变换的不变性。为了提高模型捕捉细节的能力,模型使用了全连接条件随机场(fully-connected CRF)。

如何改善?

- 空洞卷积(dilated convolution)

增加reception field(感受野),参数dilation rate(kernel的间隔数),下图原始卷积核的dilation rate = 1,第二张图dilation rate = 2

max-pooling会降低特征图的分辨率,而利用反卷积等上采样方法会增加时空复杂度,也比较粗糙,因此利用空洞卷积来扩大感受野,相当于下采样-卷积-上采样的过程被一次空洞卷积所取代。值得一提的是,文中并没有详细讲解空洞卷积,网络结构基本是VGG16拿到全连接层换上空洞卷积。

感受野

The receptive field is defined as the region in the input space that a particular CNN’s feature is looking at (i.e. be affected by).

在卷积神经网络中,感受野的定义是 卷积神经网络每一层输出的特征图(feature map)上的像素点在原始图像上映射的区域大小。

[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-vIn5jfn1-1649127684946)(C:\Users\Administrator\AppData\Roaming\Typora\typora-user-images\image-20220223205530203.png)]

原始输入为5,stride = 2, padding = 1, kernel = 3, 则第一层输出大小为3x3, 感受野为3, 第二层输出大小为2x2,感受野为7

感受野计算公式:

R j = R j − 1 + ( k − 1 ) ∗ J j − 1 J i = J i − 1 ∗ s t r i d e J 0 = 1 , R 0 = 1 R_j = R_{j-1} + (k-1) * J_{j-1} \\ J_i = J_{i-1} * stride \\ J_0 = 1,\ R_0 = 1 Rj=Rj−1+(k−1)∗Jj−1Ji=Ji−1∗strideJ0=1, R0=1

Deeplab v2(Resnet)

- 第一个贡献是我们突出了卷积(如转置卷积、空洞卷积)在密集预测任务中的作用。空洞卷积允许我们有效地控制深度卷积网络中计算特征响应的分辨率(感受野)。这能允许我们有效地扩大卷积核的视场来整合更多的上下文信息,而不需要增加额外的参数或者计算量。

- 第二个贡献是,我们提出了空洞空间金字塔池化(atrous spatial pyramid pooling,ASPP),在多个尺度上鲁棒地分割图像。ASPP使用多个采样率和有效视场的卷积核来检测传入的卷积特征,从而以多个尺度捕获目标和图像的上下文内容。

- 第三个贡献是,我们通过结合DCNN和概率图模型的方法来改进对象边界的定位。DCNN中通常采用max-pooling和downsampling来实现不变性。我们通过将DCNN的输出和全连接条件随机场(CRF)结合来解决这个问题,在定性和定量上显示了CRF能够改善定位的性能。

但是deeplab的网络和分类网络的不同在于:

- 将全连接层变成了卷积层

- 通过空洞卷积层提高特征分辨率,使我们能够每8个像素而不是原始网络中的每32个像素计算特征响应

Deeplab v3

Deeplab v3:Rethinking Atrous Convolution for Semantic Image Segmentation

Atrous Spatial Pyramid Pooling

Different from most encoder-decoder designs, Deeplab offers a different approach to semantic segmentation. It presents an architecture for controlling signal decimation and learning multi-scale contextual features.

Deeplab uses an ImageNet pre-trained ResNet as its main feature extractor network. However, it proposes a new Residual block for multi-scale feature learning. Instead of regular convolutions, the last ResNet block uses atrous convolutions. Also, each convolution (within this new block) uses different dilation rates to capture multi-scale context.

Additionally, on top of this new block, it uses Atrous Spatial Pyramid Pooling (ASPP). ASPP uses dilated convolutions with different rates as an attempt of classifying regions of an arbitrary scale.

class _AtrousSpatialPyramidPoolingModule(nn.Module):

"""

operations performed:

1x1 x depth

3x3 x depth dilation 6

3x3 x depth dilation 12

3x3 x depth dilation 18

image pooling

concatenate all together

Final 1x1 conv

"""

def __init__(self, in_dim, reduction_dim=256, output_stride=16, rates=(6, 12, 18)):

super(_AtrousSpatialPyramidPoolingModule, self).__init__()

# Check if we are using distributed BN and use the nn from encoding.nn

# library rather than using standard pytorch.nn

if output_stride == 8:

rates = [2 * r for r in rates]

elif output_stride == 16:

pass

else:

raise 'output stride of {} not supported'.format(output_stride)

self.features = []

# 1x1

self.features.append(

nn.Sequential(nn.Conv2d(in_dim, reduction_dim, kernel_size=1, bias=False),

Norm2d(reduction_dim), nn.ReLU(inplace=True)))

# other rates

for r in rates:

self.features.append(nn.Sequential(

nn.Conv2d(in_dim, reduction_dim, kernel_size=3,

dilation=r, padding=r, bias=False),

Norm2d(reduction_dim),

nn.ReLU(inplace=True)

))

self.features = torch.nn.ModuleList(self.features)

# img level features

self.img_pooling = nn.AdaptiveAvgPool2d(1)

self.img_conv = nn.Sequential(

nn.Conv2d(in_dim, reduction_dim, kernel_size=1, bias=False),

Norm2d(reduction_dim), nn.ReLU(inplace=True))

def forward(self, x):

x_size = x.size()

img_features = self.img_pooling(x)

img_features = self.img_conv(img_features)

img_features = Upsample(img_features, x_size[2:])

out = img_features

for f in self.features:

y = f(x)

out = torch.cat((out, y), 1)

return out