[NLP] Sentiment Classification of Weibo Comments with HuggingFace BERT

[Reference: "HuggingFace Study Notes 2: Training a Text Classification Task with a BERT Model", 呆萌的代Ma's blog, CSDN]

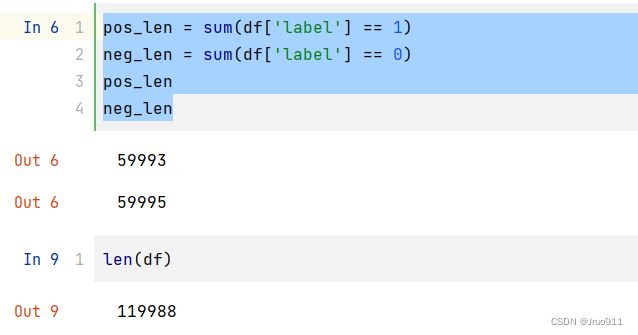

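Dataset: [Reference: "Sentiment Analysis of Weibo Comments (Binary Classification) with LSTM+CNN and Pretrained GloVe Word Vectors", 你们卷的我睡不着QAQ's blog, CSDN]

The whole run took roughly twenty minutes on a Colab GPU.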

Text processing

import pandas as pd
import numpy as np

df = pd.read_csv("weibo_senti_100k.csv", encoding="gbk")
df.head()

import re

df.insert(2, 'sentence', "")  # add a new, empty column

# We only judge polarity, so the order of sentences does not need to be
# preserved and all symbols can be stripped out
for i in range(len(df)):
    content = df.loc[i, 'content']  # row index, column name
    temp = re.sub('[^\u4e00-\u9fa5]+', '', content)  # remove non-Chinese characters
    df.loc[i, 'sentence'] = temp

df.to_csv('weibo_senti_100k_sentence.csv')  # save the cleaned data
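To make the cleaning step concrete, here is a minimal sketch of the same regular expression applied to a single string. The helper name `keep_chinese` and the sample string are illustrative assumptions, not part of the original code.

```python
import re

# Same character class as the loop above: anything outside U+4E00..U+9FA5 is removed
NON_CHINESE = re.compile('[^\u4e00-\u9fa5]+')

def keep_chinese(text):
    """Hypothetical helper: drop every character outside the U+4E00..U+9FA5 range."""
    return NON_CHINESE.sub('', text)

sample = '真不错,讲得非常好!Good 123'
cleaned = keep_chinese(sample)
print(cleaned)
```

Note that Latin letters, digits, and emoji are dropped along with punctuation, so a comment written entirely in English becomes an empty string. With pandas, the same pattern can also be applied to the whole column at once via `df['content'].str.replace(pattern, '', regex=True)`, which avoids the row-by-row loop.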

To save memory, drop the content column

df = df.drop('content', axis=1)

Wrapping the data

import torch

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

print(torch.cuda.is_available())

print(device)

True

cuda:0

# Split the dataset
from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(df['sentence'].values,  # ndarray
                                                    df['label'].values,
                                                    train_size=0.7,
                                                    random_state=100)

from torch.utils.data import Dataset, DataLoader

class MyDataset(Dataset):
    def __init__(self, X, y):
        self.X = X
        self.y = y

    def __getitem__(self, idx):
        return self.X[idx], self.y[idx]

    def __len__(self):
        return len(self.X)

train_dataset = MyDataset(X_train, y_train)
test_dataset = MyDataset(X_test, y_test)

next(iter(train_dataset))

('刘姐回复菩提天泉好可爱哈哈扎西德勒', 1)

batch_size = 32

train_loader = DataLoader(dataset=train_dataset, batch_size=batch_size, shuffle=False)

test_loader = DataLoader(dataset=test_dataset, batch_size=batch_size, shuffle=False)

# next(iter(train_loader))

for i, batch in enumerate(train_loader):
    print(batch)
    break

[

('刘姐回复菩提天泉好可爱哈哈扎西德勒', ...,'玻璃陶瓷家具股引领涨势亮点原来在此哈哈', '如此去三亚的理由是不是更多啦亲们不要放过机会太开心'),

tensor([1, 0, 1, 1, 1, 1, 0, 0, 1, 1, 1, 0, 0, 1, 0, 1, 1, 1, 1, 0, 1, 1, 0, 1,1, 0, 1, 1, 0, 0, 1, 1])

]
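The progress bars later in this post show 2625 training batches and 1125 test batches. The sketch below reproduces those counts from the split ratio and the batch size. It assumes the CSV holds 119,988 rows (a figure from the public description of weibo_senti_100k, not stated in this post) and that `train_test_split` rounds the training share down.

```python
import math

n_rows = 119_988      # assumption: row count of weibo_senti_100k.csv
train_size = 0.7
batch_size = 32

n_train = math.floor(train_size * n_rows)  # training share, rounded down
n_test = n_rows - n_train                  # the remainder goes to the test set

# The last, partially filled batch still counts as a batch
train_batches = math.ceil(n_train / batch_size)
test_batches = math.ceil(n_test / batch_size)
print(n_train, n_test, train_batches, test_batches)
```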

BERT model

from torch import nn
from torch.optim import AdamW  # transformers.AdamW is deprecated in favor of the PyTorch optimizer
from transformers import BertModel, BertTokenizer
from tqdm import tqdm

num_class = 2

class BertClassificationModel(nn.Module):
    def __init__(self, hidden_size=768):  # BERT's final output dimension defaults to 768
        super().__init__()
        model_name = 'bert-base-chinese'
        # Load the tokenizer
        self.tokenizer = BertTokenizer.from_pretrained(pretrained_model_name_or_path=model_name)
        # Load the pretrained model
        self.bert = BertModel.from_pretrained(pretrained_model_name_or_path=model_name)

        for p in self.bert.parameters():  # freeze the BERT parameters
            p.requires_grad = False
        self.fc = nn.Linear(hidden_size, num_class)

    def forward(self, batch_sentences):  # a batch of raw strings, length batch_size
        # Tokenize and encode the batch
        sentences_tokenizer = self.tokenizer(list(batch_sentences),
                                             truncation=True,
                                             padding=True,
                                             max_length=512,
                                             add_special_tokens=True)
        input_ids = torch.tensor(sentences_tokenizer['input_ids']).to(device)
        attention_mask = torch.tensor(sentences_tokenizer['attention_mask']).to(device)
        bert_out = self.bert(input_ids=input_ids, attention_mask=attention_mask)

        last_hidden_state = bert_out[0]  # [batch_size, sequence_length, hidden_size]
        bert_cls_hidden_state = last_hidden_state[:, 0, :]  # hidden state of the [CLS] token
        fc_out = self.fc(bert_cls_hidden_state)  # [batch_size, num_class]
        return fc_out

model = BertClassificationModel()
model = model.to(device)
optimizer = AdamW(model.parameters(), lr=1e-4)
loss_func = nn.CrossEntropyLoss()
loss_func = loss_func.to(device)
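Because the BERT weights are frozen, only the final linear layer is actually trained. The following sketch uses a random tensor as a stand-in for BERT's output (an assumption made so the example runs without downloading the model). It shows the [CLS] slicing and counts the trainable parameters in the head.

```python
import torch
from torch import nn

batch_size, seq_len, hidden_size, num_class = 4, 10, 768, 2

# Stand-in for bert_out[0]: [batch_size, sequence_length, hidden_size]
last_hidden_state = torch.randn(batch_size, seq_len, hidden_size)

# Position 0 holds the [CLS] token, used here as the sentence representation
cls_hidden_state = last_hidden_state[:, 0, :]   # [batch_size, hidden_size]

fc = nn.Linear(hidden_size, num_class)
logits = fc(cls_hidden_state)                   # [batch_size, num_class]

# Weights (768 * 2) plus biases (2)
trainable = sum(p.numel() for p in fc.parameters() if p.requires_grad)
print(logits.shape, trainable)
```

With so few trainable parameters, each step is cheap to update, but the forward pass through BERT still dominates the running time.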

Training

def train():
    model.train()
    for i, (data, labels) in enumerate(tqdm(train_loader)):
        labels = labels.to(device)  # keep the labels on the same device as the logits
        out = model(data)  # [batch_size, num_class]
        loss = loss_func(out, labels)
        loss.backward()
        optimizer.step()
        optimizer.zero_grad()

        if i % 5 == 0:
            pred = out.argmax(dim=-1)
            acc = (pred == labels).sum().item() / len(labels)
            print(i, loss.item(), acc)  # metrics for the current batch only

train()

0%| | 1/2625 [00:00<22:30, 1.94it/s] 0 0.6587909460067749 0.59375
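As a sanity check on the first logged loss: with two classes, a classifier that assigns equal probability to both has a cross-entropy of ln 2 ≈ 0.693, so a starting value near 0.66 is about what one would expect from an untrained head. Below is a minimal pure-Python sketch of the per-sample quantity that `nn.CrossEntropyLoss` averages over a batch. The function name `cross_entropy` is illustrative.

```python
import math

def cross_entropy(logits, label):
    """Negative log-softmax of the true class for a single sample."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[label]

chance_loss = cross_entropy([0.0, 0.0], 1)  # equal logits: pure guessing
print(chance_loss, math.log(2))
```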

Testing

def test():
    model.eval()
    correct = 0
    total = 0
    with torch.no_grad():
        for i, (data, labels) in enumerate(tqdm(test_loader)):
            out = model(data)  # [batch_size, num_class]
            pred = out.argmax(dim=1)
            correct += (pred.cpu() == labels).sum().item()
            total += len(labels)
    print(correct / total)

test()

100%|██████████| 1125/1125 [07:35<00:00, 2.47it/s] 0.8822957468677946

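Note: this experiment trained for only one epoch. Interested readers can train for a few more epochs, which I expect would bring the accuracy above 95%.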
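One possible way to train for several epochs is to wrap the training and evaluation functions in a loop. The sketch below is generic: `run_epochs` is a hypothetical helper, and the two lambdas are placeholders standing in for the `train()` and `test()` functions defined above.

```python
def run_epochs(train_fn, eval_fn, num_epochs):
    """Hypothetical helper: call train_fn, then eval_fn, once per epoch."""
    history = []
    for epoch in range(1, num_epochs + 1):
        train_fn()                 # one full pass over the training data
        history.append(eval_fn())  # whatever the evaluation function returns
    return history

# Placeholder callables so the sketch runs on its own
calls = []
history = run_epochs(lambda: calls.append('train'), lambda: len(calls), num_epochs=3)
print(history)
```

In this post's setting the call would be `run_epochs(train, test, num_epochs=3)`. Since `test()` prints the accuracy rather than returning it, the history would only hold `None` values unless `test()` is changed to return `correct / total`.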

torch.save(model, 'BertClassificationModel.pth')  # save the model
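`torch.save(model, ...)` pickles the whole object, which ties the file to the exact class definition. A commonly recommended alternative is to save only the `state_dict`. The sketch below uses a small `nn.Linear` as a stand-in for the trained classifier, and the file name is an assumption.

```python
import os
import tempfile

import torch
from torch import nn

model_a = nn.Linear(768, 2)  # stand-in for the trained model
path = os.path.join(tempfile.mkdtemp(), 'classifier_state.pth')  # hypothetical file name

torch.save(model_a.state_dict(), path)      # save the parameters only

model_b = nn.Linear(768, 2)                 # rebuild the architecture first
model_b.load_state_dict(torch.load(path))   # then restore the weights
same = torch.equal(model_a.weight, model_b.weight)
print(same)
```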

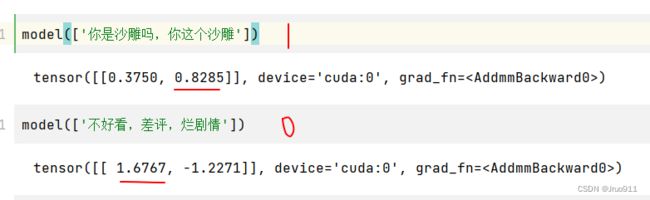

Prediction

# model = torch.load('BertClassificationModel.pth')

text='真不错,讲得非常好'

out=model([text])

out

tensor([[-0.2593, 1.1362]], device='cuda:0', grad_fn=<AddmmBackward0>)

out=out.argmax(dim=1)

out

tensor([1], device='cuda:0')
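The raw outputs are logits, not probabilities. To read them as a confidence, one can apply softmax. A minimal pure-Python sketch using the two values printed above:

```python
import math

logits = [-0.2593, 1.1362]  # values printed for the sample sentence

shift = max(logits)  # shift by the max for numerical stability
exps = [math.exp(x - shift) for x in logits]
probs = [e / sum(exps) for e in exps]
predicted = probs.index(max(probs))
print(probs, predicted)
```

Under this reading the model gives class 1 a probability of roughly 0.80. Class 1 is presumably the positive label, which matches the sample shown earlier, although the post does not state the label mapping explicitly.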