Vision Transformer (ViT) Code Analysis: A Step-by-Step Tutorial

Contents

- Preface

- 1. Code Analysis

- 1.1. DropPath Module

- 1.2. Patch Embedding

- 1.3. Multi-Head Attention

- 1.4. MLP

- 1.5. Block

- 1.6. VisionTransformer

- 2. Building the ViT Models

- 3. Complete Code

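Preface

In this post we walk through the code implementation of Vision Transformer. If the theory behind ViT is unfamiliar, Hung-yi Lee's lecture videos and the blog posts listed below are good references, so I won't repeat it here.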

- 【機器學習2021】自注意力機制 (Self-attention) (上)

- 【機器學習2021】自注意力機制 (Self-attention) (下)

- 【機器學習2021】Transformer (上)

- 【機器學習2021】Transformer (下)

- 详解Transformer中Self-Attention以及Multi-Head Attention

- Vision Transformer详解

1. Code Analysis

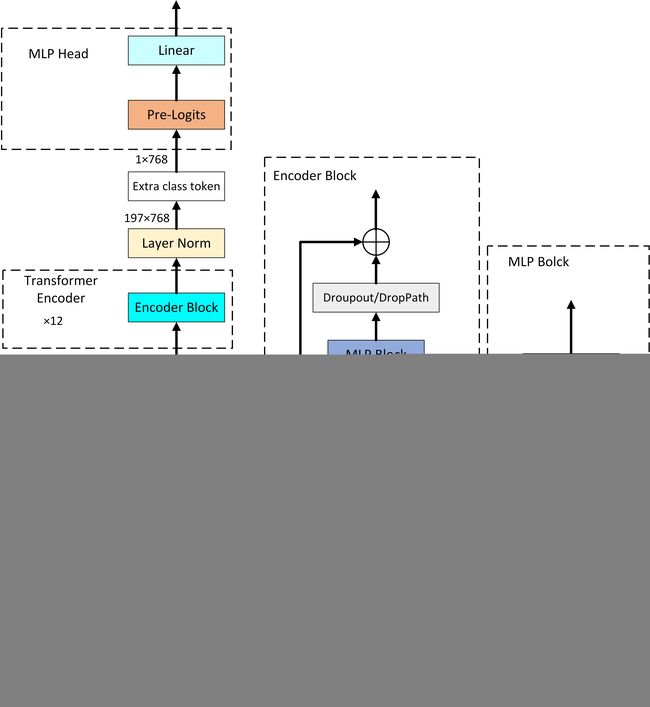

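As the diagram above shows, ViT consists of three main parts: Patch Embedding, the Transformer Encoder, and the MLP Head. The code analysis below follows the same structure: we first look at what each small module does, then at the overall ViT architecture.

1.1. DropPath Module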

def drop_path(x, drop_prob: float = 0., training: bool = False):
    """
    Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).
    This is the same as the DropConnect impl I created for EfficientNet, etc networks, however,
    the original name is misleading as 'Drop Connect' is a different form of dropout in a separate paper...
    See discussion: https://github.com/tensorflow/tpu/issues/494#issuecomment-532968956 ... I've opted for
    changing the layer and argument names to 'drop path' rather than mix DropConnect as a layer name and use
    'survival rate' as the argument.
    """
    if drop_prob == 0. or not training:
        return x
    # Probability of keeping the branch
    keep_prob = 1 - drop_prob
    # One mask value per sample: shape (B, 1, ..., 1) with x.ndim - 1 trailing ones,
    # e.g. (B, 1, 1) for ViT tokens of shape (B, N, C), or (B, 1, 1, 1) for 4-D feature maps
    shape = (x.shape[0],) + (1,) * (x.ndim - 1)  # work with diff dim tensors, not just 2D ConvNets
    random_tensor = keep_prob + torch.rand(shape, dtype=x.dtype, device=x.device)
    # floor_() is the in-place version of floor: each entry becomes 1 (keep the sample)
    # with probability keep_prob, otherwise 0 (drop the sample)
    random_tensor.floor_()  # binarize
    # Zero the dropped samples and divide the kept ones by keep_prob
    # so that the expected value of the output stays the same
    output = x.div(keep_prob) * random_tensor
    return output


class DropPath(nn.Module):
    """
    Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).
    """
    def __init__(self, drop_prob=None):
        super(DropPath, self).__init__()
        self.drop_prob = drop_prob

    def forward(self, x):
        return drop_path(x, self.drop_prob, self.training)

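Each Encoder Block applies DropPath twice, so let's briefly look at what it does. DropPath randomly drops entire branches of a multi-branch structure, whereas Dropout randomly deactivates individual neurons. Suppose the forward pass contains the following line:

x = x + self.drop_path( self.conv(x) )

During training, each sample in the batch then has probability drop_prob of having its self.conv(x) branch replaced by zeros, so that sample passes through the block unchanged via the shortcut. For an input tensor x of shape [B, C, H, W], roughly a fraction drop_prob of the B samples skip the residual branch and follow only the identity mapping. Note that drop_path must not be applied to the whole output as shown below, because a dropped sample would then be zeroed out entirely, shortcut included:

x = self.drop_path(x)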
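To make the per-sample behaviour concrete, here is a minimal plain-Python sketch of the same masking rule, written without PyTorch so it runs anywhere. The helper name `drop_path_mask`, the batch size and the `drop_prob` value are illustrative assumptions, not part of the original code. It shows that each sample receives a factor of either 0 or 1/keep_prob and that the average factor stays close to 1.

```python
import math
import random


def drop_path_mask(batch_size, drop_prob, rng):
    """Return one scale factor per sample: 0.0 (dropped) or 1 / keep_prob (kept)."""
    keep_prob = 1.0 - drop_prob
    # floor(keep_prob + u) with u uniform in [0, 1) is 1 with probability keep_prob, else 0
    return [math.floor(keep_prob + rng.random()) / keep_prob for _ in range(batch_size)]


rng = random.Random(0)  # fixed seed, only to make the sketch reproducible
mask = drop_path_mask(batch_size=10000, drop_prob=0.2, rng=rng)

values = sorted(set(mask))          # the distinct factors that occur
mean_scale = sum(mask) / len(mask)  # close to 1.0, so the expected output is unchanged
print(values, round(mean_scale, 3))
```

Multiplying the branch output of sample i by mask[i] is what drop_path does with random_tensor; the division by keep_prob is the reason the layer can simply be switched off at inference time.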

1.2. Patch Embedding

With DropPath explained, let's move on to Patch Embedding. Here is the code first.

class PatchEmbed(nn.Module):
    """
    2D Image to Patch Embedding
    """
    def __init__(self, img_size=224, patch_size=16, in_c=3, embed_dim=768, norm_layer=None):
        super().__init__()
        img_size = (img_size, img_size)
        patch_size = (patch_size, patch_size)
        self.img_size = img_size
        self.patch_size = patch_size
        # (14, 14) for a 224x224 image with 16x16 patches
        self.grid_size = (img_size[0] // patch_size[0], img_size[1] // patch_size[1])
        # 14 * 14 = 196
        self.num_patches = self.grid_size[0] * self.grid_size[1]
        # embed_dim differs between model variants
        self.proj = nn.Conv2d(in_c, embed_dim, kernel_size=patch_size, stride=patch_size)
        # Use norm_layer if one is passed in; otherwise nn.Identity, which does nothing
        self.norm = norm_layer(embed_dim) if norm_layer else nn.Identity()

    def forward(self, x):
        # Read the input size
        B, C, H, W = x.shape
        # Raise an error if the input height and width differ from the preset values:
        # this ViT implementation requires a fixed input image size
        assert H == self.img_size[0] and W == self.img_size[1], \
            f"Input image size ({H}*{W}) doesn't match model ({self.img_size[0]}*{self.img_size[1]})."
        # proj: [B, 3, 224, 224] -> [B, 768, 14, 14]
        # flatten: [B, C, H, W] -> [B, C, HW]
        # transpose: [B, C, HW] -> [B, HW, C]
        # After the convolution, flatten from dimension 2 onwards, then swap dimensions 1 and 2
        # so that the tensor is laid out as [batch, tokens, channels]
        x = self.proj(x).flatten(2).transpose(1, 2)
        x = self.norm(x)
        # print(x.shape)
        return x

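Let's check what this module outputs. Uncomment the print statement at the end of forward and feed in a 224×224 image; the output is:

torch.Size([1, 196, 768])

For an input tensor of shape (1, 3, 224, 224), Patch Embedding produces a tensor of shape [1, 196, 768]: the 2-D image has been cut into 196 patches of 16×16 pixels, and each patch has been projected to a 768-dimensional vector. The Transformer can now model it, because it expects a sequence of token vectors rather than a 2-D image.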
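The shape arithmetic behind that output can be checked without running the model. The following is a small plain-Python sketch; the function name `patch_embed_shape` is a hypothetical helper introduced only for illustration, and it assumes square images and square patches as in the PatchEmbed class above.

```python
def patch_embed_shape(batch, img_size=224, patch_size=16, embed_dim=768):
    """Shape of the PatchEmbed output for a square image and square patches."""
    grid = img_size // patch_size   # patches per side
    num_patches = grid * grid       # sequence length before the class token is added
    return (batch, num_patches, embed_dim)


print(patch_embed_shape(1))                 # (1, 196, 768)
print(patch_embed_shape(1, patch_size=32))  # (1, 49, 768)

# Each 16x16 RGB patch holds 16 * 16 * 3 raw values, which for ViT-B/16
# happens to equal the embedding dimension of 768.
raw_values_per_patch = 16 * 16 * 3
print(raw_values_per_patch)                 # 768
```

A larger patch size shortens the token sequence, which is why the /32 variants are cheaper to run than the /16 variants at the same image size.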

1.3. Multi-Head Attention

The module below is the core of ViT. Let's go through the code line by line to see how the Transformer attention mechanism is implemented.

class Attention(nn.Module):
    def __init__(self,
                 dim,  # dimension of the input tokens, 768 for ViT-Base
                 num_heads=8,  # 8 heads by default; ViT-Base is instantiated with 12
                 qkv_bias=False,
                 qk_scale=None,
                 attn_drop_ratio=0.,
                 proj_drop_ratio=0.):
        super(Attention, self).__init__()
        self.num_heads = num_heads
        # Per-head dimension: the embedding is split evenly across the heads
        head_dim = dim // num_heads
        # Scaling factor: the q @ k^T scores are divided by sqrt(d_k)
        self.scale = qk_scale or head_dim ** -0.5
        # A single linear layer produces q, k and v together
        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
        self.attn_drop = nn.Dropout(attn_drop_ratio)
        # After the heads are concatenated they are projected by W^O, which, like q, k, v,
        # is implemented as a linear layer
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(proj_drop_ratio)

    def forward(self, x):
        # [batch_size, num_patches + 1, total_embed_dim], i.e. (B, 197, 768)
        B, N, C = x.shape
        # qkv(): -> [batch_size, num_patches + 1, 3 * total_embed_dim]
        # reshape: -> [batch_size, num_patches + 1, 3, num_heads, embed_dim_per_head], i.e. (B, 197, 3, 12, 64)
        # permute: -> [3, batch_size, num_heads, num_patches + 1, embed_dim_per_head], i.e. (3, B, 12, 197, 64)
        # C // self.num_heads: the q, k, v dimension of each head
        # nn.Linear accepts multi-dimensional input but only acts on the last dimension;
        # permute() reorders the dimensions
        qkv = self.qkv(x).reshape(B, N, 3, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)
        # [batch_size, num_heads, num_patches + 1, embed_dim_per_head]
        # Obtain q, k, v by indexing the first dimension
        q, k, v = qkv[0], qkv[1], qkv[2]  # make torchscript happy (cannot use tensor as tuple)
        # transpose: -> [batch_size, num_heads, embed_dim_per_head, num_patches + 1]
        # @: multiply -> [batch_size, num_heads, num_patches + 1, num_patches + 1]
        # @ is matrix multiplication; for multi-dimensional tensors only the last two dimensions are multiplied
        # q and k of each head are multiplied; for B = 1 the result has shape (1, 12, 197, 197)
        attn = (q @ k.transpose(-2, -1)) * self.scale
        # Softmax over the last dimension, i.e. over each row
        attn = attn.softmax(dim=-1)
        # The softmax output goes through a dropout layer
        attn = self.attn_drop(attn)
        # @: multiply -> [batch_size, num_heads, num_patches + 1, embed_dim_per_head]
        # transpose: -> [batch_size, num_patches + 1, num_heads, embed_dim_per_head]
        # reshape: -> [batch_size, num_patches + 1, total_embed_dim]
        # The attention weights are multiplied by v to get a weighted sum of shape (1, 12, 197, 64).
        # Dimensions 1 and 2 are then swapped and the result is reshaped; this reshape is what
        # concatenates the heads, giving a final shape of (1, 197, 768)
        x = (attn @ v).transpose(1, 2).reshape(B, N, C)
        # Output projection W^O, a linear layer
        x = self.proj(x)
        # Followed by a dropout layer
        x = self.proj_drop(x)
        return x

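Almost every line above is commented, but one statement may still be puzzling:

qkv = self.qkv(x).reshape(B, N, 3, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)

You may wonder how x, a multi-dimensional tensor, can be passed through a Linear layer. nn.Linear accepts inputs with any number of leading dimensions and applies its transformation to the last dimension only. For more on how nn.Linear is used, see the post 关于 torch.nn.Linear 的输入维度问题 (on the input dimensions of torch.nn.Linear).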

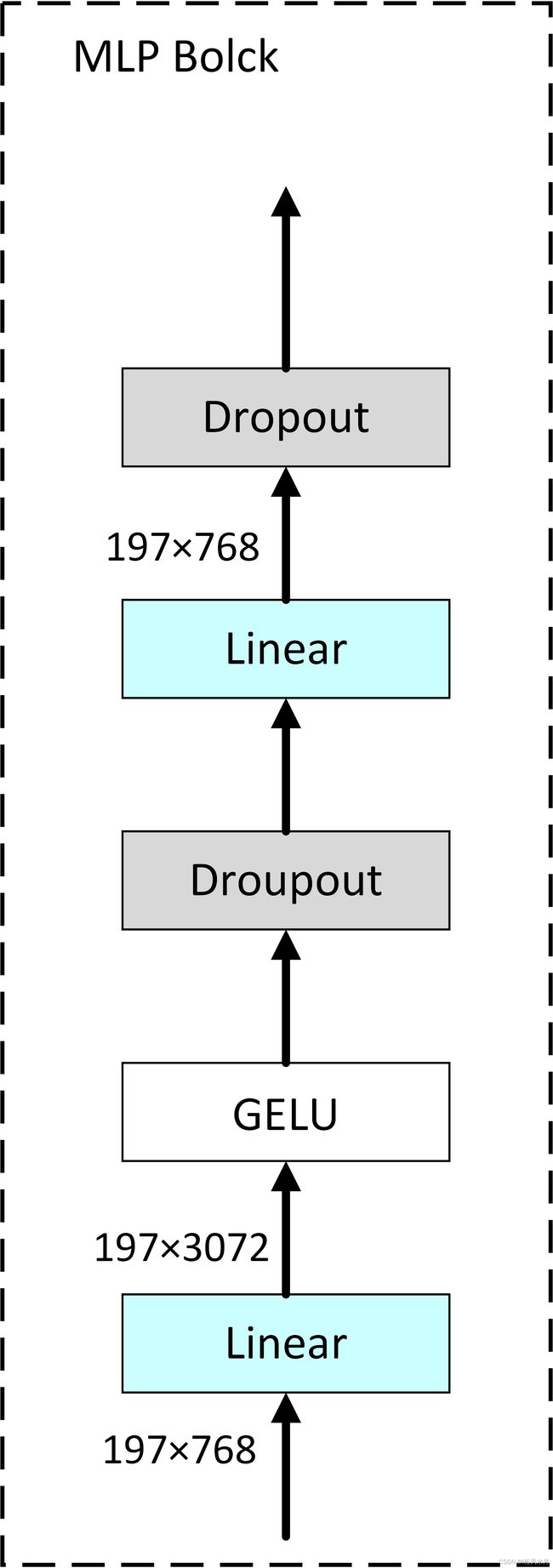
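The computation performed per head in Attention.forward can be sketched in plain Python on a tiny example. This is an illustrative single-head version only, with no batching, no dropout and no learned projections; the function names and the toy q, k, v values are assumptions made for the sketch, not code from the original implementation.

```python
import math


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def single_head_attention(q, k, v):
    """Scaled dot-product attention for one head; q, k, v are lists of token vectors."""
    scale = len(q[0]) ** -0.5  # same role as head_dim ** -0.5 in the Attention class
    scores = [[scale * sum(a * b for a, b in zip(qi, kj)) for kj in k] for qi in q]
    weights = [softmax(row) for row in scores]  # one row per query token
    out = [[sum(w * vj[c] for w, vj in zip(row, v)) for c in range(len(v[0]))]
           for row in weights]
    return weights, out


# Three toy tokens with a per-head dimension of 2
q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
v = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
weights, out = single_head_attention(q, k, v)
print(len(weights), len(weights[0]))  # 3 3: an N x N attention matrix
print(len(out), len(out[0]))          # 3 2: one output vector per token
```

Each row of weights sums to 1, so every output vector is a weighted average of the rows of v. The multi-head version runs this for every head in parallel and concatenates the results before the final projection.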

1.4. MLP

With the core of ViT covered, the rest is relatively simple: it mostly assembles these modules. Before the assembly, let's look at the MLP structure first:

class Mlp(nn.Module):
    """
    MLP as used in Vision Transformer, MLP-Mixer and related networks
    """
    def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
        super().__init__()
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        self.fc1 = nn.Linear(in_features, hidden_features)
        self.act = act_layer()
        self.fc2 = nn.Linear(hidden_features, out_features)
        self.drop = nn.Dropout(drop)

    def forward(self, x):
        x = self.fc1(x)
        x = self.act(x)
        x = self.drop(x)
        x = self.fc2(x)
        x = self.drop(x)
        return x

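The MLP structure is simple and the code is easy to follow. One point is worth noting: in ViT the first linear layer expands the feature dimension by a factor of 4 and the second one projects it back. The Mlp class itself defaults hidden_features to in_features; the factor of 4 comes from the Block module, which passes hidden_features = dim * mlp_ratio with mlp_ratio = 4.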
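As a quick sanity check of that expansion, the sketch below counts the parameters of one such MLP for ViT-Base in plain Python. The helper names `linear_params` and `mlp_params` are hypothetical and exist only for this illustration; the counts assume both linear layers have a bias, which is the nn.Linear default.

```python
def linear_params(in_features, out_features, bias=True):
    """Number of weights (plus optional biases) in a fully connected layer."""
    return in_features * out_features + (out_features if bias else 0)


def mlp_params(dim, mlp_ratio=4.0):
    """Hidden width and total parameter count of fc1 + fc2."""
    hidden = int(dim * mlp_ratio)  # same expression as mlp_hidden_dim in Block
    total = linear_params(dim, hidden) + linear_params(hidden, dim)
    return hidden, total


hidden, total = mlp_params(768)
print(hidden)  # 3072
print(total)   # 4722432
```

So each of the 12 blocks in ViT-Base carries roughly 4.7 million parameters in its MLP alone.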

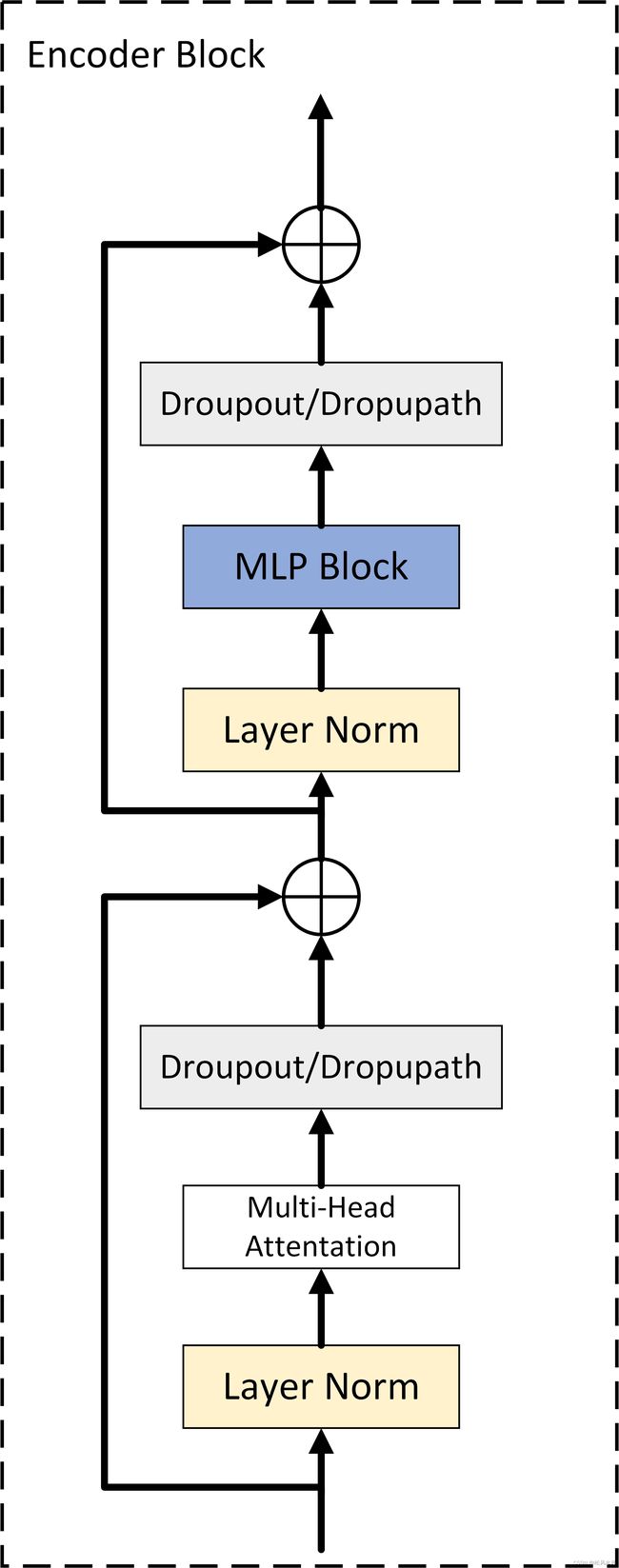

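1.5. Block

The MLP code is simple enough that it needs little further explanation. Next comes the Block module: the Transformer Encoder of ViT-Base is obtained by stacking it 12 times. The structure of the Encoder Block is shown in the figure.

Let's analyze the code: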

class Block(nn.Module):
    def __init__(self,
                 dim,
                 num_heads,
                 mlp_ratio=4.,  # expansion ratio of the first linear layer in the MLP
                 qkv_bias=False,
                 qk_scale=None,
                 drop_ratio=0.,  # dropout rate after the attention output projection and inside the MLP
                 attn_drop_ratio=0.,  # dropout rate applied to the attention weights after the softmax
                 drop_path_ratio=0.,  # DropPath rate shown in the block diagram
                 act_layer=nn.GELU,
                 norm_layer=nn.LayerNorm):
        super(Block, self).__init__()
        # First LayerNorm
        self.norm1 = norm_layer(dim)
        # Instantiate the Attention class described above
        self.attn = Attention(dim, num_heads=num_heads, qkv_bias=qkv_bias, qk_scale=qk_scale,
                              attn_drop_ratio=attn_drop_ratio, proj_drop_ratio=drop_ratio)
        # NOTE: drop path for stochastic depth, we shall see if this is better than dropout here
        # Instantiate DropPath if the rate is greater than 0, otherwise do nothing
        self.drop_path = DropPath(drop_path_ratio) if drop_path_ratio > 0. else nn.Identity()
        # Second LayerNorm
        self.norm2 = norm_layer(dim)
        # The first linear layer expands the dimension by mlp_ratio (4x by default)
        mlp_hidden_dim = int(dim * mlp_ratio)
        # Instantiate the MLP
        self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=act_layer, drop=drop_ratio)

    def forward(self, x):
        x = x + self.drop_path(self.attn(self.norm1(x)))
        x = x + self.drop_path(self.mlp(self.norm2(x)))
        return x

1.6. VisionTransformer

All the building blocks are now in place. The last step is to assemble them into the VisionTransformer module. Here is the code:

class VisionTransformer(nn.Module):
    def __init__(self, img_size=224, patch_size=16, in_c=3, num_classes=1000,
                 embed_dim=768, depth=12, num_heads=12, mlp_ratio=4.0, qkv_bias=True,
                 qk_scale=None, representation_size=None, distilled=False, drop_ratio=0.,
                 attn_drop_ratio=0., drop_path_ratio=0., embed_layer=PatchEmbed, norm_layer=None,
                 act_layer=None):
        """
        Args:
            img_size (int, tuple): input image size
            patch_size (int, tuple): patch size
            in_c (int): number of input channels
            num_classes (int): number of classes for classification head
            embed_dim (int): embedding dimension
            depth (int): depth of transformer, i.e. how many times the Block above is stacked
            num_heads (int): number of attention heads
            mlp_ratio (int): ratio of mlp hidden dim to embedding dim
            qkv_bias (bool): enable bias for qkv if True
            qk_scale (float): override default qk scale of head_dim ** -0.5 if set
            representation_size (Optional[int]): enable and set representation layer (pre-logits) to this value if set.
                This is the number of units in the pre-logits linear layer of the final MLP head. The default is None,
                in which case no pre-logits layer is built and the head is a single linear layer.
            distilled (bool): model includes a distillation token and head as in DeiT models.
                Kept for DeiT compatibility; it can be ignored here.
            drop_ratio (float): dropout rate
            attn_drop_ratio (float): attention dropout rate
            drop_path_ratio (float): stochastic depth rate
            embed_layer (nn.Module): patch embedding layer
            norm_layer: (nn.Module): normalization layer
        """
        super(VisionTransformer, self).__init__()
        self.num_classes = num_classes
        self.num_features = self.embed_dim = embed_dim  # num_features for consistency with other models
        # distilled can be ignored, so self.num_tokens = 1
        self.num_tokens = 2 if distilled else 1
        # norm_layer defaults to None, so nn.LayerNorm is used, with eps fixed through partial.
        # partial fixes some arguments of a callable and returns a new callable.
        norm_layer = norm_layer or partial(nn.LayerNorm, eps=1e-6)
        # act_layer defaults to GELU
        act_layer = act_layer or nn.GELU
        # Build PatchEmbed through embed_layer
        self.patch_embed = embed_layer(img_size=img_size, patch_size=patch_size, in_c=in_c, embed_dim=embed_dim)
        # Total number of patches, 196
        num_patches = self.patch_embed.num_patches
        # Create the class token with shape (1, 1, 768). It is allocated as zeros, re-initialized with
        # trunc_normal_ below and learned during training. It is later concatenated with the patch
        # tokens, which turns the 196 tokens into 197 tokens of dimension 768.
        self.cls_token = nn.Parameter(torch.zeros(1, 1, embed_dim))
        # Not used here, because distilled defaults to False
        self.dist_token = nn.Parameter(torch.zeros(1, 1, embed_dim)) if distilled else None
        # Create the learnable position embedding with shape (1, 197, 768)
        self.pos_embed = nn.Parameter(torch.zeros(1, num_patches + self.num_tokens, embed_dim))
        # Dropout applied after the position embedding is added
        self.pos_drop = nn.Dropout(p=drop_ratio)
        # Build an evenly spaced sequence of depth values from 0 to drop_path_ratio, so that every
        # Encoder Block gets a different drop rate. The default is 0 and can be changed by the caller.
        dpr = [x.item() for x in torch.linspace(0, drop_path_ratio, depth)]  # stochastic depth decay rule
        # Build the blocks: create a list of depth (12) Block instances, then wrap them in
        # nn.Sequential and assign the result to self.blocks
        self.blocks = nn.Sequential(*[
            Block(dim=embed_dim, num_heads=num_heads, mlp_ratio=mlp_ratio, qkv_bias=qkv_bias, qk_scale=qk_scale,
                  drop_ratio=drop_ratio, attn_drop_ratio=attn_drop_ratio, drop_path_ratio=dpr[i],
                  norm_layer=norm_layer, act_layer=act_layer)
            for i in range(depth)
        ])
        # Final normalization layer
        self.norm = norm_layer(embed_dim)
        # Ignore distilled and look only at representation_size: if it is given, a pre-logits layer
        # is built in the MLP head. Otherwise self.has_logits = False and
        # self.pre_logits = nn.Identity(), i.e. there is no pre-logits layer.
        if representation_size and not distilled:
            self.has_logits = True
            self.num_features = representation_size
            self.pre_logits = nn.Sequential(OrderedDict([
                ("fc", nn.Linear(embed_dim, representation_size)),
                ("act", nn.Tanh())
            ]))
        else:
            self.has_logits = False
            self.pre_logits = nn.Identity()
        # Final linear layer of the network; its output size is the number of classes (requires num_classes > 0)
        self.head = nn.Linear(self.num_features, num_classes) if num_classes > 0 else nn.Identity()
        self.head_dist = None
        if distilled:
            self.head_dist = nn.Linear(self.embed_dim, self.num_classes) if num_classes > 0 else nn.Identity()
        # Weight initialization
        nn.init.trunc_normal_(self.pos_embed, std=0.02)
        if self.dist_token is not None:
            nn.init.trunc_normal_(self.dist_token, std=0.02)
        nn.init.trunc_normal_(self.cls_token, std=0.02)
        self.apply(_init_vit_weights)

    def forward_features(self, x):
        # [B, C, H, W] -> [B, num_patches, embed_dim]
        # Apply the patch embedding first
        x = self.patch_embed(x)  # [B, 196, 768]
        # [1, 1, 768] -> [B, 1, 768]
        # Expand cls_token along the batch dimension to batch_size copies
        cls_token = self.cls_token.expand(x.shape[0], -1, -1)
        # self.dist_token is None by default
        if self.dist_token is None:
            # Concatenate along dim=1; output shape: [B, 197, 768]
            x = torch.cat((cls_token, x), dim=1)  # [B, 197, 768]
        else:
            x = torch.cat((cls_token, self.dist_token.expand(x.shape[0], -1, -1), x), dim=1)
        # Add the position embedding, then apply dropout
        x = self.pos_drop(x + self.pos_embed)
        # Pass through the blocks built above
        x = self.blocks(x)
        # Then through the final normalization layer
        x = self.norm(x)
        # Extract the output that corresponds to cls_token, i.e. the class vector
        if self.dist_token is None:
            # Take index 0 along the token dimension for every sample in the batch
            return self.pre_logits(x[:, 0])
        else:
            return x[:, 0], x[:, 1]

    def forward(self, x):
        # Pass x to forward_features() first; for B = 1 the output shape is (1, 768)
        x = self.forward_features(x)
        # self.head_dist is None by default, so the else branch below is executed
        if self.head_dist is not None:
            x, x_dist = self.head(x[0]), self.head_dist(x[1])
            if self.training and not torch.jit.is_scripting():
                # during training, return both classifier predictions
                return x, x_dist
            else:
                # during inference, return the average of both classifier predictions
                return (x + x_dist) / 2
        else:
            # Output shape is (1, 1000) for B = 1, matching the 1000 classes
            x = self.head(x)
        return x
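The stochastic depth decay rule in the constructor, torch.linspace(0, drop_path_ratio, depth), can be illustrated with a plain-Python stand-in. The `linspace` helper below is written for this sketch (it is not the PyTorch function), and drop_path_ratio = 0.1 is an assumed example value, since the constructor default is 0.

```python
def linspace(start, stop, num):
    """Evenly spaced values from start to stop inclusive, like torch.linspace."""
    if num == 1:
        return [float(start)]
    step = (stop - start) / (num - 1)
    return [start + i * step for i in range(num)]


# Assumed example: 12 blocks and a maximum drop-path rate of 0.1
dpr = linspace(0.0, 0.1, 12)
for i, rate in enumerate(dpr):
    print(f"block {i:2d}: drop_path_ratio = {rate:.4f}")
```

The first block is never dropped and the rate grows linearly with depth, so the deepest block is the one skipped most often during training.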

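2. Building the ViT Models

We have now analyzed all the ViT code and built VisionTransformer. Is that everything? Not quite: below we wrap VisionTransformer in factory functions so that each model variant can be created with a single call. As usual, here is the code: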

def vit_base_patch16_224_in21k(num_classes: int = 21843, has_logits: bool = True):
    # num_classes: number of classes. The original weights were pre-trained on ImageNet-21k, hence 21843.
    # has_logits: whether to build the pre-logits layer. It is present during pre-training;
    # without it the head consists of the final linear layer only.
    """
    ViT-Base model (ViT-B/16) from original paper (https://arxiv.org/abs/2010.11929).
    ImageNet-21k weights @ 224x224, source https://github.com/google-research/vision_transformer.
    weights ported from official Google JAX impl:
    https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-vitjx/jx_vit_base_patch16_224_in21k-e5005f0a.pth
    """
    # Create the model
    # img_size: input image size
    # patch_size: size of each image patch
    # embed_dim: dimension of each patch vector, i.e. the length of the vectors fed to multi-head attention
    # depth: number of stacked Block modules
    # num_heads: number of attention heads
    # representation_size: used by the pre-logits layer
    # num_classes: number of classes
    model = VisionTransformer(img_size=224,
                              patch_size=16,
                              embed_dim=768,
                              depth=12,
                              num_heads=12,
                              representation_size=768 if has_logits else None,
                              num_classes=num_classes)
    return model


def vit_base_patch32_224_in21k(num_classes: int = 21843, has_logits: bool = True):
    """
    ViT-Base model (ViT-B/32) from original paper (https://arxiv.org/abs/2010.11929).
    ImageNet-21k weights @ 224x224, source https://github.com/google-research/vision_transformer.
    weights ported from official Google JAX impl:
    https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-vitjx/jx_vit_base_patch32_224_in21k-8db57226.pth
    """
    model = VisionTransformer(img_size=224,
                              patch_size=32,
                              embed_dim=768,
                              depth=12,
                              num_heads=12,
                              representation_size=768 if has_logits else None,
                              num_classes=num_classes)
    return model


def vit_large_patch16_224_in21k(num_classes: int = 21843, has_logits: bool = True):
    """
    ViT-Large model (ViT-L/16) from original paper (https://arxiv.org/abs/2010.11929).
    ImageNet-21k weights @ 224x224, source https://github.com/google-research/vision_transformer.
    weights ported from official Google JAX impl:
    https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-vitjx/jx_vit_large_patch16_224_in21k-606da67d.pth
    """
    model = VisionTransformer(img_size=224,
                              patch_size=16,
                              embed_dim=1024,
                              depth=24,
                              num_heads=16,
                              representation_size=1024 if has_logits else None,
                              num_classes=num_classes)
    return model


def vit_large_patch32_224_in21k(num_classes: int = 21843, has_logits: bool = True):
    """
    ViT-Large model (ViT-L/32) from original paper (https://arxiv.org/abs/2010.11929).
    ImageNet-21k weights @ 224x224, source https://github.com/google-research/vision_transformer.
    weights ported from official Google JAX impl:
    https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-vitjx/jx_vit_large_patch32_224_in21k-9046d2e7.pth
    """
    model = VisionTransformer(img_size=224,
                              patch_size=32,
                              embed_dim=1024,
                              depth=24,
                              num_heads=16,
                              representation_size=1024 if has_logits else None,
                              num_classes=num_classes)
    return model


def vit_huge_patch14_224_in21k(num_classes: int = 21843, has_logits: bool = True):
    """
    ViT-Huge model (ViT-H/14) from original paper (https://arxiv.org/abs/2010.11929).
    ImageNet-21k weights @ 224x224, source https://github.com/google-research/vision_transformer.
    NOTE: converted weights not currently available, too large for github release hosting.
    """
    model = VisionTransformer(img_size=224,
                              patch_size=14,
                              embed_dim=1280,
                              depth=32,
                              num_heads=16,
                              representation_size=1280 if has_logits else None,
                              num_classes=num_classes)
    return model


# Model test
if __name__ == "__main__":
    model = vit_base_patch16_224_in21k(num_classes=1000, has_logits=False)
    data = torch.rand(1, 3, 224, 224)
    out = model(data)
    # print(out.shape)

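As the code shows, there are five models in total, growing in size from Base to Large to Huge. The configuration parameters were explained above for vit_base_patch16_224_in21k; the other models take the same parameters with different values.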
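To compare the five variants side by side, the following plain-Python sketch derives a few quantities from the arguments used in the factory functions above. The `CONFIGS` table and the `summarize` helper are illustrative names introduced here; the numbers in the table are copied from the code, and mlp_ratio = 4 is the constructor default.

```python
# (patch_size, embed_dim, depth, num_heads), copied from the factory functions above
CONFIGS = {
    "vit_base_patch16_224_in21k": (16, 768, 12, 12),
    "vit_base_patch32_224_in21k": (32, 768, 12, 12),
    "vit_large_patch16_224_in21k": (16, 1024, 24, 16),
    "vit_large_patch32_224_in21k": (32, 1024, 24, 16),
    "vit_huge_patch14_224_in21k": (14, 1280, 32, 16),
}


def summarize(patch_size, embed_dim, depth, num_heads, img_size=224):
    """Derive sequence length and layer widths for one configuration."""
    num_patches = (img_size // patch_size) ** 2
    return {
        "tokens": num_patches + 1,           # patch tokens plus the class token
        "head_dim": embed_dim // num_heads,  # per-head width inside Attention
        "mlp_hidden": embed_dim * 4,         # mlp_ratio = 4 is the constructor default
        "blocks": depth,
    }


summary = {name: summarize(*cfg) for name, cfg in CONFIGS.items()}
for name, info in summary.items():
    print(name, info)
```

The Base and Large variants keep a per-head width of 64 and scale the number of heads and blocks, while the Huge variant widens each head to 80 and, because of its 14×14 patches, works on the longest token sequence.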

3. Complete Code

"""

original code from rwightman:

https://github.com/rwightman/pytorch-image-models/blob/master/timm/models/vision_transformer.py

"""

from functools import partial

from collections import OrderedDict

import torch

import torch.nn as nn

def drop_path(x, drop_prob: float = 0., training: bool = False):
    """
    Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).
    This is the same as the DropConnect impl I created for EfficientNet, etc networks, however,
    the original name is misleading as 'Drop Connect' is a different form of dropout in a separate paper...
    See discussion: https://github.com/tensorflow/tpu/issues/494#issuecomment-532968956 ... I've opted for
    changing the layer and argument names to 'drop path' rather than mix DropConnect as a layer name and use
    'survival rate' as the argument.
    """
    if drop_prob == 0. or not training:
        return x
    keep_prob = 1 - drop_prob
    shape = (x.shape[0],) + (1,) * (x.ndim - 1)  # work with diff dim tensors, not just 2D ConvNets
    random_tensor = keep_prob + torch.rand(shape, dtype=x.dtype, device=x.device)
    random_tensor.floor_()  # binarize
    output = x.div(keep_prob) * random_tensor
    return output


class DropPath(nn.Module):
    """
    Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).
    """
    def __init__(self, drop_prob=None):
        super(DropPath, self).__init__()
        self.drop_prob = drop_prob

    def forward(self, x):
        return drop_path(x, self.drop_prob, self.training)

class PatchEmbed(nn.Module):
    """
    2D Image to Patch Embedding
    """
    def __init__(self, img_size=224, patch_size=16, in_c=3, embed_dim=768, norm_layer=None):
        super().__init__()
        # (224, 224)
        img_size = (img_size, img_size)
        # (16, 16)
        patch_size = (patch_size, patch_size)
        self.img_size = img_size
        self.patch_size = patch_size
        # (14, 14)
        self.grid_size = (img_size[0] // patch_size[0], img_size[1] // patch_size[1])
        # 196
        self.num_patches = self.grid_size[0] * self.grid_size[1]
        self.proj = nn.Conv2d(in_c, embed_dim, kernel_size=patch_size, stride=patch_size)
        self.norm = norm_layer(embed_dim) if norm_layer else nn.Identity()

    def forward(self, x):
        B, C, H, W = x.shape
        assert H == self.img_size[0] and W == self.img_size[1], \
            f"Input image size ({H}*{W}) doesn't match model ({self.img_size[0]}*{self.img_size[1]})."
        # flatten: [B, C, H, W] -> [B, C, HW]
        # transpose: [B, C, HW] -> [B, HW, C]
        x = self.proj(x).flatten(2).transpose(1, 2)
        x = self.norm(x)
        # print(x.shape)
        return x

class Attention(nn.Module):
    def __init__(self,
                 dim,  # dimension of the input tokens
                 num_heads=8,
                 qkv_bias=False,
                 qk_scale=None,
                 attn_drop_ratio=0.,
                 proj_drop_ratio=0.):
        super(Attention, self).__init__()
        self.num_heads = num_heads
        head_dim = dim // num_heads
        self.scale = qk_scale or head_dim ** -0.5
        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
        self.attn_drop = nn.Dropout(attn_drop_ratio)
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(proj_drop_ratio)

    def forward(self, x):
        # [batch_size, num_patches + 1, total_embed_dim]
        B, N, C = x.shape
        # qkv(): -> [batch_size, num_patches + 1, 3 * total_embed_dim]
        # reshape: -> [batch_size, num_patches + 1, 3, num_heads, embed_dim_per_head]
        # permute: -> [3, batch_size, num_heads, num_patches + 1, embed_dim_per_head]
        qkv = self.qkv(x).reshape(B, N, 3, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)
        # [batch_size, num_heads, num_patches + 1, embed_dim_per_head]
        q, k, v = qkv[0], qkv[1], qkv[2]  # make torchscript happy (cannot use tensor as tuple)
        # transpose: -> [batch_size, num_heads, embed_dim_per_head, num_patches + 1]
        # @: multiply -> [batch_size, num_heads, num_patches + 1, num_patches + 1]
        attn = (q @ k.transpose(-2, -1)) * self.scale
        attn = attn.softmax(dim=-1)
        attn = self.attn_drop(attn)
        # @: multiply -> [batch_size, num_heads, num_patches + 1, embed_dim_per_head]
        # transpose: -> [batch_size, num_patches + 1, num_heads, embed_dim_per_head]
        # reshape: -> [batch_size, num_patches + 1, total_embed_dim]
        # print((attn @ v).shape)
        x = (attn @ v).transpose(1, 2).reshape(B, N, C)
        # print(x.shape)
        x = self.proj(x)
        x = self.proj_drop(x)
        return x

class Mlp(nn.Module):
    """
    MLP as used in Vision Transformer, MLP-Mixer and related networks
    """
    def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
        super().__init__()
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        self.fc1 = nn.Linear(in_features, hidden_features)
        self.act = act_layer()
        self.fc2 = nn.Linear(hidden_features, out_features)
        self.drop = nn.Dropout(drop)

    def forward(self, x):
        x = self.fc1(x)
        x = self.act(x)
        x = self.drop(x)
        x = self.fc2(x)
        x = self.drop(x)
        return x

class Block(nn.Module):
    def __init__(self,
                 dim,
                 num_heads,
                 mlp_ratio=4.,
                 qkv_bias=False,
                 qk_scale=None,
                 drop_ratio=0.,
                 attn_drop_ratio=0.,
                 drop_path_ratio=0.,
                 act_layer=nn.GELU,
                 norm_layer=nn.LayerNorm):
        super(Block, self).__init__()
        self.norm1 = norm_layer(dim)
        self.attn = Attention(dim, num_heads=num_heads, qkv_bias=qkv_bias, qk_scale=qk_scale,
                              attn_drop_ratio=attn_drop_ratio, proj_drop_ratio=drop_ratio)
        # NOTE: drop path for stochastic depth, we shall see if this is better than dropout here
        self.drop_path = DropPath(drop_path_ratio) if drop_path_ratio > 0. else nn.Identity()
        self.norm2 = norm_layer(dim)
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=act_layer, drop=drop_ratio)

    def forward(self, x):
        x = x + self.drop_path(self.attn(self.norm1(x)))
        x = x + self.drop_path(self.mlp(self.norm2(x)))
        return x

class VisionTransformer(nn.Module):
    def __init__(self, img_size=224, patch_size=16, in_c=3, num_classes=1000,
                 embed_dim=768, depth=12, num_heads=12, mlp_ratio=4.0, qkv_bias=True,
                 qk_scale=None, representation_size=None, distilled=False, drop_ratio=0.,
                 attn_drop_ratio=0., drop_path_ratio=0., embed_layer=PatchEmbed, norm_layer=None,
                 act_layer=None):
        """
        Args:
            img_size (int, tuple): input image size
            patch_size (int, tuple): patch size
            in_c (int): number of input channels
            num_classes (int): number of classes for classification head
            embed_dim (int): embedding dimension
            depth (int): depth of transformer
            num_heads (int): number of attention heads
            mlp_ratio (int): ratio of mlp hidden dim to embedding dim
            qkv_bias (bool): enable bias for qkv if True
            qk_scale (float): override default qk scale of head_dim ** -0.5 if set
            representation_size (Optional[int]): enable and set representation layer (pre-logits) to this value if set
            distilled (bool): model includes a distillation token and head as in DeiT models
            drop_ratio (float): dropout rate
            attn_drop_ratio (float): attention dropout rate
            drop_path_ratio (float): stochastic depth rate
            embed_layer (nn.Module): patch embedding layer
            norm_layer: (nn.Module): normalization layer
        """
        super(VisionTransformer, self).__init__()
        self.num_classes = num_classes
        self.num_features = self.embed_dim = embed_dim  # num_features for consistency with other models
        self.num_tokens = 2 if distilled else 1
        norm_layer = norm_layer or partial(nn.LayerNorm, eps=1e-6)
        act_layer = act_layer or nn.GELU

        self.patch_embed = embed_layer(img_size=img_size, patch_size=patch_size, in_c=in_c, embed_dim=embed_dim)
        num_patches = self.patch_embed.num_patches

        self.cls_token = nn.Parameter(torch.zeros(1, 1, embed_dim))
        self.dist_token = nn.Parameter(torch.zeros(1, 1, embed_dim)) if distilled else None
        self.pos_embed = nn.Parameter(torch.zeros(1, num_patches + self.num_tokens, embed_dim))
        self.pos_drop = nn.Dropout(p=drop_ratio)

        dpr = [x.item() for x in torch.linspace(0, drop_path_ratio, depth)]  # stochastic depth decay rule
        self.blocks = nn.Sequential(*[
            Block(dim=embed_dim, num_heads=num_heads, mlp_ratio=mlp_ratio, qkv_bias=qkv_bias, qk_scale=qk_scale,
                  drop_ratio=drop_ratio, attn_drop_ratio=attn_drop_ratio, drop_path_ratio=dpr[i],
                  norm_layer=norm_layer, act_layer=act_layer)
            for i in range(depth)
        ])
        self.norm = norm_layer(embed_dim)

        # Representation layer
        if representation_size and not distilled:
            self.has_logits = True
            self.num_features = representation_size
            self.pre_logits = nn.Sequential(OrderedDict([
                ("fc", nn.Linear(embed_dim, representation_size)),
                ("act", nn.Tanh())
            ]))
        else:
            self.has_logits = False
            self.pre_logits = nn.Identity()

        # Classifier head(s)
        self.head = nn.Linear(self.num_features, num_classes) if num_classes > 0 else nn.Identity()
        self.head_dist = None
        if distilled:
            self.head_dist = nn.Linear(self.embed_dim, self.num_classes) if num_classes > 0 else nn.Identity()

        # Weight init
        nn.init.trunc_normal_(self.pos_embed, std=0.02)
        if self.dist_token is not None:
            nn.init.trunc_normal_(self.dist_token, std=0.02)
        nn.init.trunc_normal_(self.cls_token, std=0.02)
        self.apply(_init_vit_weights)

    def forward_features(self, x):
        # [B, C, H, W] -> [B, num_patches, embed_dim]
        x = self.patch_embed(x)  # [B, 196, 768]
        # [1, 1, 768] -> [B, 1, 768]
        cls_token = self.cls_token.expand(x.shape[0], -1, -1)
        if self.dist_token is None:
            x = torch.cat((cls_token, x), dim=1)  # [B, 197, 768]
        else:
            x = torch.cat((cls_token, self.dist_token.expand(x.shape[0], -1, -1), x), dim=1)

        x = self.pos_drop(x + self.pos_embed)
        x = self.blocks(x)
        x = self.norm(x)
        if self.dist_token is None:
            return self.pre_logits(x[:, 0])
        else:
            return x[:, 0], x[:, 1]

    def forward(self, x):
        x = self.forward_features(x)
        if self.head_dist is not None:
            x, x_dist = self.head(x[0]), self.head_dist(x[1])
            if self.training and not torch.jit.is_scripting():
                # during training, return both classifier predictions
                return x, x_dist
            else:
                # during inference, return the average of both classifier predictions
                return (x + x_dist) / 2
        else:
            x = self.head(x)
        return x

def _init_vit_weights(m):
    """
    ViT weight initialization
    :param m: module
    """
    if isinstance(m, nn.Linear):
        nn.init.trunc_normal_(m.weight, std=.01)
        if m.bias is not None:
            nn.init.zeros_(m.bias)
    elif isinstance(m, nn.Conv2d):
        nn.init.kaiming_normal_(m.weight, mode="fan_out")
        if m.bias is not None:
            nn.init.zeros_(m.bias)
    elif isinstance(m, nn.LayerNorm):
        nn.init.zeros_(m.bias)
        nn.init.ones_(m.weight)

def vit_base_patch16_224_in21k(num_classes: int = 21843, has_logits: bool = True):
    """
    ViT-Base model (ViT-B/16) from original paper (https://arxiv.org/abs/2010.11929).
    ImageNet-21k weights @ 224x224, source https://github.com/google-research/vision_transformer.
    weights ported from official Google JAX impl:
    https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-vitjx/jx_vit_base_patch16_224_in21k-e5005f0a.pth
    """
    model = VisionTransformer(img_size=224,
                              patch_size=16,
                              embed_dim=768,
                              depth=12,
                              num_heads=12,
                              representation_size=768 if has_logits else None,
                              num_classes=num_classes)
    return model


def vit_base_patch32_224_in21k(num_classes: int = 21843, has_logits: bool = True):
    """
    ViT-Base model (ViT-B/32) from original paper (https://arxiv.org/abs/2010.11929).
    ImageNet-21k weights @ 224x224, source https://github.com/google-research/vision_transformer.
    weights ported from official Google JAX impl:
    https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-vitjx/jx_vit_base_patch32_224_in21k-8db57226.pth
    """
    model = VisionTransformer(img_size=224,
                              patch_size=32,
                              embed_dim=768,
                              depth=12,
                              num_heads=12,
                              representation_size=768 if has_logits else None,
                              num_classes=num_classes)
    return model


def vit_large_patch16_224_in21k(num_classes: int = 21843, has_logits: bool = True):
    """
    ViT-Large model (ViT-L/16) from original paper (https://arxiv.org/abs/2010.11929).
    ImageNet-21k weights @ 224x224, source https://github.com/google-research/vision_transformer.
    weights ported from official Google JAX impl:
    https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-vitjx/jx_vit_large_patch16_224_in21k-606da67d.pth
    """
    model = VisionTransformer(img_size=224,
                              patch_size=16,
                              embed_dim=1024,
                              depth=24,
                              num_heads=16,
                              representation_size=1024 if has_logits else None,
                              num_classes=num_classes)
    return model


def vit_large_patch32_224_in21k(num_classes: int = 21843, has_logits: bool = True):
    """
    ViT-Large model (ViT-L/32) from original paper (https://arxiv.org/abs/2010.11929).
    ImageNet-21k weights @ 224x224, source https://github.com/google-research/vision_transformer.
    weights ported from official Google JAX impl:
    https://github.com/rwightman/pytorch-image-models/releases/download/v0.1-vitjx/jx_vit_large_patch32_224_in21k-9046d2e7.pth
    """
    model = VisionTransformer(img_size=224,
                              patch_size=32,
                              embed_dim=1024,
                              depth=24,
                              num_heads=16,
                              representation_size=1024 if has_logits else None,
                              num_classes=num_classes)
    return model


def vit_huge_patch14_224_in21k(num_classes: int = 21843, has_logits: bool = True):
    """
    ViT-Huge model (ViT-H/14) from original paper (https://arxiv.org/abs/2010.11929).
    ImageNet-21k weights @ 224x224, source https://github.com/google-research/vision_transformer.
    NOTE: converted weights not currently available, too large for github release hosting.
    """
    model = VisionTransformer(img_size=224,
                              patch_size=14,
                              embed_dim=1280,
                              depth=32,
                              num_heads=16,
                              representation_size=1280 if has_logits else None,
                              num_classes=num_classes)
    return model

if __name__ == "__main__":
    model = vit_base_patch16_224_in21k(num_classes=5, has_logits=False)
    data = torch.rand(1, 3, 224, 224)
    out = model(data)
    # print(out.shape)

That concludes the full code analysis of the ViT framework. Corrections and feedback are welcome.