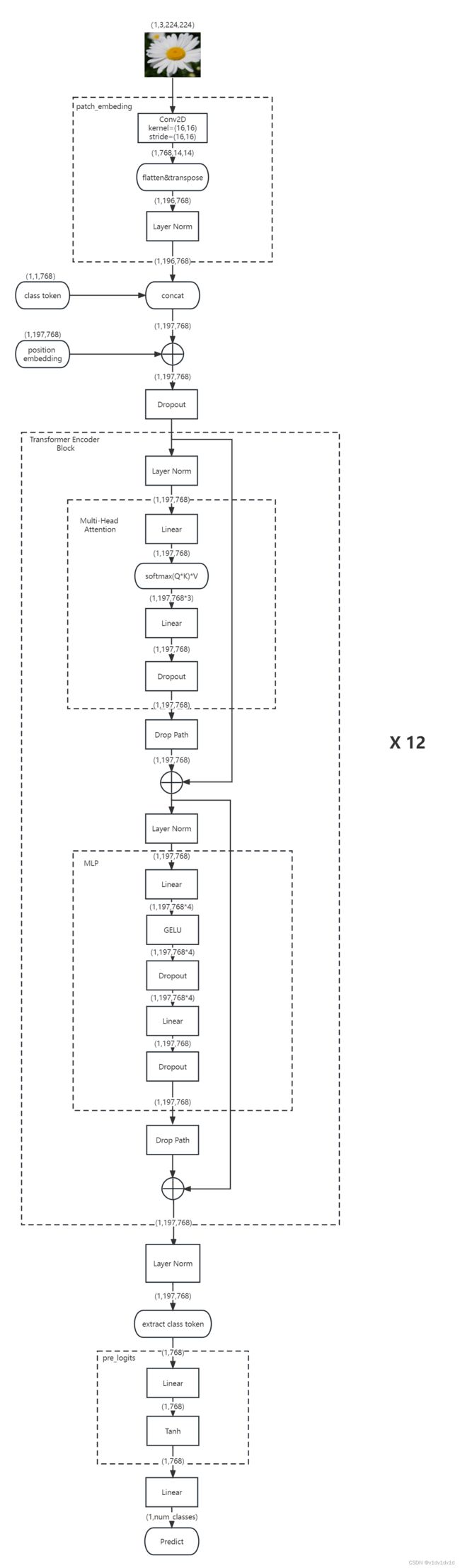

Vision Transformer

Vision Transformer代码实现

Vision Transformer

代码参考链接

# vit模型中使用的正则化方法

# 类似于dropout

# 其含义为:在一个batch中,有drop_prob概率使若干个样本不会经过主干传播(例如linear层),而是以0为值进行传播

def drop_path(x,drop_prob=0.,training = False):

if drop_prob == 0. or not training:

return x

keep_prob = 1 - drop_prob

shape = (x.shape[0],) + (1,) * (x.ndim - 1)

random_tensor = keep_prob + torch.rand(shape, dtype=x.dtype, device=x.device)

random_tensor.floor_() # binarize

output = x.div(keep_prob) * random_tensor

return output

class DropPath(nn.Module):

"""

Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).

"""

def __init__(self, drop_prob=None):

super(DropPath, self).__init__()

self.drop_prob = drop_prob

def forward(self, x):

return drop_path(x, self.drop_prob, self.training)

class PatchEmbed(nn.Module):

# img_size 为图片大小

# patch_size 为分割成的patch的大小

# in_c 为输入土图片的维度 RGB图片的 in_c = 3

# embed_dim 为将patch 映射成vector的大小,类似于transformer中的 d_model 和 d_word_vec

# norm_layer 为规定的正则化方法

def __init__(self, img_size=224, patch_size=16, in_c=3, embed_dim=768, norm_layer=None):

super().__init__()

img_size = (img_size, img_size)

patch_size = (patch_size, patch_size)

self.img_size = img_size

self.patch_size = patch_size

self.grid_size = (img_size[0] // patch_size[0], img_size[1] // patch_size[1])

# 最后patch的数量类似于transformer中的句子长度 n = 图片长度*图片宽度/patch大小/patch大小

self.num_patches = self.grid_size[0] * self.grid_size[1]

# self.proj 将图片映射成 self.num_pathes 个 维度为 embed_dim的向量组

self.proj = nn.Conv2d(in_c, embed_dim, kernel_size=patch_size, stride=patch_size)

self.norm = norm_layer(embed_dim) if norm_layer else nn.Identity()

def forward(self,x):

# X的维度为(batchsize,channel,图片长度,图片宽度)

B,C,H,W = x.shape

assert H == self.img_size[0] and W == self.img_size[1], \

f"Input image size ({H}*{W}) doesn't match model ({self.img_size[0]}*{self.img_size[1]})."

#(batchsize,in_c ,图片长度,图片宽度) proj(x) -> (batchsize,embed_dim,self.grid_size[0],self.grid_size[1])

# self.grid_size[0] 是根据 原图片长度 与 卷积核大小、stride计算出来的 self.gird_size[1] 同理

#(batchsize,embed_dim,self.grid_size[0],self.grid_size[1]) flatten(2) ->

# (batchsize , embed_dim , self.grid_size[0]*self.grid_size[1]) transpose(1,2) ->

# (batchsize , self.grid_size[0]*self.grid_size[1],embed_dim)

# 此时x就将一batch图片转化成了 nlp中的序列 可以输入到transformer中了

x = self.proj(x).flatten(2).transpose(1, 2)

x = self.norm(x)

return x

class Attention(nn.Module):

# dim为 token的维度大小

# num_heads 为多头注意力机制中的head个数

# attn_drop_ratio 为 注意力机制中 ScaledDotProductAttention 中的Layer norm中的p

# proj_drop_ratop 为 concat之后是否经过 Layer norm

def __init__(self,

dim, # 输入token的dim

num_heads=8,

qkv_bias=False,

qk_scale=None,

attn_drop_ratio=0.,

proj_drop_ratio=0.):

super(Attention, self).__init__()

self.num_heads = num_heads

head_dim = dim // num_heads

# 和 transformer中的 操作有区别。

# transformer中的多头注意力机制中的head_dim = dim,最后concat成的dim 为 n_head * dim

# vit 中的 head_dim = dim / n_head,最后concat成的dim 为 dim

self.scale = qk_scale or head_dim ** -0.5

self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)

self.attn_drop = nn.Dropout(attn_drop_ratio)

self.proj = nn.Linear(dim, dim)

self.proj_drop = nn.Dropout(proj_drop_ratio)

def forward(self,x):

# 这里的num_patches比self.num_patches多了1 多的 1 为 classes 标记 用来分类

# x (bacth_size,num_patches,dim)

B,N,C = x.shape

# (bacth_size,num_patches,dim) -> self.qkv(x) -> (bacth_size,num_patches,dim*3)

# (bacth_size,num_patches,dim*3) -> reshape -> (bacth_size,num_patches,3,n_head,每个head的dim=dim/n_head)

#(bacth_size,num_patches,3,n_head,每个head的dim=dim/n_head) -> permute -> (3,bacth_size,n_head,num_patches,每个head的dim=dim/n_head)

qkv = self.qkv(x).reshape(B, N, 3, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)

q = qkv[0]

k = qkv[1]

v = qkv[2]

# q,k,v (bacth_size,n_head,num_patches,每个head的dim=dim/n_head)

attn = (q @ k.transpose(-2,-1)) * self.scale

# attn (bacth_size,n_head,num_patches,num_patches) 表示patches之间的相似度

attn = attn.softmax(dim = -1)

attn = self.attn_drop(attn)

# (attn @ v) : (bacth_size,n_head,num_patches,每个head的dim=dim/n_head)

# (bacth_size,n_head,num_patches,每个head的dim=dim/n_head) -> transpose -> (bacth_size,num_patches,n_head,每个head的dim=dim/n_head)

# (bacth_size,num_patches,n_head,每个head的dim=dim/n_head) -> reshape -> (bacth_size,num_patches,dim)

x = (attn @ v).transpose(1,2).reshape(B,N,C)

# 在transformer中 每个head的dim 就等于 dim,最后通过一个linear层将n_head *dim 重新映射成 dim,即proj操作

# (bacth_size,num_patches,dim) -> proj -> (bacth_size,num_patches,dim)

x = self.proj(x)

x = self.proj_drop(x)

return x

class Mlp(nn.Module):

"""

MLP as used in Vision Transformer, MLP-Mixer and related networks

"""

def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):

super().__init__()

out_features = out_features or in_features

hidden_features = hidden_features or in_features

self.fc1 = nn.Linear(in_features, hidden_features)

self.act = act_layer()

self.fc2 = nn.Linear(hidden_features, out_features)

self.drop = nn.Dropout(drop)

def forward(self, x):

x = self.fc1(x)

x = self.act(x)

x = self.drop(x)

x = self.fc2(x)

x = self.drop(x)

return x

class Block(nn.Module):

# dim 为patch维度大小

# num_heads 为多头注意力机制中的n_head

# mlp_ratio 为 mlp层中的hidden层的维度,一般默认设置为(dim*4)

# drop_ratio 为全连接层和attention层最后通过的dropout

# atte_drop_ratio 为注意力机制中q k 矩阵乘积 softmax完毕之后通过的dropout层的参数

# drop_path_ratio 为drop_path 正则化的参数 block中多层注意力和mlp完毕后会经过drop_path

def __init__(self,

dim,

num_heads,

mlp_ratio=4.,

qkv_bias=False,

qk_scale=None,

drop_ratio=0.,

attn_drop_ratio=0.,

drop_path_ratio=0.,

act_layer=nn.GELU,

norm_layer=nn.LayerNorm):

super(Block, self).__init__()

self.norm1 = norm_layer(dim)

self.attn = Attention(dim, num_heads=num_heads, qkv_bias=qkv_bias, qk_scale=qk_scale,

attn_drop_ratio=attn_drop_ratio, proj_drop_ratio=drop_ratio)

# NOTE: drop path for stochastic depth, we shall see if this is better than dropout here

self.drop_path = DropPath(drop_path_ratio) if drop_path_ratio > 0. else nn.Identity()

self.norm2 = norm_layer(dim)

mlp_hidden_dim = int(dim * mlp_ratio)

self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=act_layer, drop=drop_ratio)

def forward(self, x):

x = x + self.drop_path(self.attn(self.norm1(x)))

x = x + self.drop_path(self.mlp(self.norm2(x)))

return x

class VisionTransformer(nn.Module):

# img_size 为图片的大小

# patch_size 为一个patch块的大小

# in_c 为通道数 RGB图像为3

# num_classes 为classification的分类数

# embed_dim 为贯穿模型始终的dim(注意【class】)

# depth 为重复堆叠block的次数

# nun_head 为 n_head

# mlp_ratio 为 mlp层中hidden层大小,一般为dim * 4

# representation_size 在最后的mlp head 做分类时是否额外加上一层linear层 representation_size代表hidden层个数

# distilled DERT 中使用(暂时不懂)

# drop_ratio为全连接层和attention层最后通过的dropout

# atte_drop_ratio 为注意力机制中q k 矩阵乘积 softmax完毕之后通过的dropout层的参数

# drop_path_ratio 为drop_path 正则化的参数 block中多层注意力和mlp完毕后会经过drop_path

def __init__(self, img_size=224, patch_size=16, in_c=3, num_classes=1000,

embed_dim=768, depth=12, num_heads=12, mlp_ratio=4.0, qkv_bias=True,

qk_scale=None, representation_size=None, distilled=False, drop_ratio=0.,

attn_drop_ratio=0., drop_path_ratio=0., embed_layer=PatchEmbed, norm_layer=None,

act_layer=None):

super(VisionTransformer, self).__init__()

self.num_classes = num_classes

self.num_features = self.embed_dim = embed_dim # num_features for consistency with other models

# distilled 在Dert中除了添加【class】以外还需要添加dist_token

self.num_tokens = 2 if distilled else 1

norm_layer = norm_layer or partial(nn.LayerNorm, eps=1e-6)

act_layer = act_layer or nn.GELU

self.patch_embed = embed_layer(img_size=img_size, patch_size=patch_size, in_c=in_c, embed_dim=embed_dim)

# 还能这么用??

num_patches = self.patch_embed.num_patches

#添加class token(1,1,embed_dim) 分别对应 batch_size,patch的个数,dim

# 添加完class token 之后 输入的序列就变成了 batch_size, patch个数+1 , dim

self.cls_token = nn.Parameter(torch.zeros(1, 1, embed_dim))

self.dist_token = nn.Parameter(torch.zeros(1, 1, embed_dim)) if distilled else None

# pos_embed 跟 拼接之后的数据相对应

self.pos_embed = nn.Parameter(torch.zeros(1, num_patches + self.num_tokens, embed_dim))

self.pos_drop = nn.Dropout(p=drop_ratio)

# 针对于每一个block ,执行不同程度的drop_path,越后面的层drop程度越大

dpr = [x.item() for x in torch.linspace(0, drop_path_ratio, depth)] # stochastic depth decay rule

self.blocks = nn.Sequential(*[

Block(dim=embed_dim, num_heads=num_heads, mlp_ratio=mlp_ratio, qkv_bias=qkv_bias, qk_scale=qk_scale,

drop_ratio=drop_ratio, attn_drop_ratio=attn_drop_ratio, drop_path_ratio=dpr[i],

norm_layer=norm_layer, act_layer=act_layer)

for i in range(depth)

])

self.norm = norm_layer(embed_dim)

# 如果传入representation_size,则代表在mlp_head 最后分类的时候额外添加一个隐藏层为representation_size大小的linear层

# 激活函数使用tanh

# Representation layer

if representation_size and not distilled:

self.has_logits = True

self.num_features = representation_size

self.pre_logits = nn.Sequential(OrderedDict([

("fc", nn.Linear(embed_dim, representation_size)),

("act", nn.Tanh())

]))

else:

self.has_logits = False

self.pre_logits = nn.Identity()

# Classifier head(s)

# 最后进行分类

self.head = nn.Linear(self.num_features, num_classes) if num_classes > 0 else nn.Identity()

self.head_dist = None

if distilled:

self.head_dist = nn.Linear(self.embed_dim, self.num_classes) if num_classes > 0 else nn.Identity()

# Weight init

nn.init.trunc_normal_(self.pos_embed, std=0.02)

if self.dist_token is not None:

nn.init.trunc_normal_(self.dist_token, std=0.02)

nn.init.trunc_normal_(self.cls_token, std=0.02)

self.apply(_init_vit_weights)

def forward_features(self, x):

# x 初始 (batch_size,channel,height,width) -> patch_embed(x) -> (batch_size,num_patches,dim)

x = self.patch_embed(x) # [B, 196, 768]

# cls_token 初始为(1,1,dim) -> expand (batch_size,1,dim) :这样就能和x concat

cls_token = self.cls_token.expand(x.shape[0], -1, -1)

# 在vit中 dist_token 为 None

# x 和 cls_token concat 之后就变成 (batch_size,num_patches+1,dim)

# 在DERT中 dist_token 为 True

# x 和 cls_token、dist_token concat 之后就变成 (batch_size,num_patches+2,dim)

if self.dist_token is None:

x = torch.cat((cls_token, x), dim=1) # [B, 197, 768]

else:

x = torch.cat((cls_token, self.dist_token.expand(x.shape[0], -1, -1), x), dim=1)

# 添加位置编码 shape不变 (batch_size,num_patches+1,dim)

x = self.pos_drop(x + self.pos_embed)

# 经过一系列 block块后x的shape也不会变 (batch_size,num_patches+1,dim)

x = self.blocks(x)

x = self.norm(x)

# vit中只利用 [class] 来对模型进行预测

# pre_logits 提取所有batch的第二个维度的第0个index做预测

# 此刻返回的数据为 (batch_size,dim) !!!!!!!!!!!!

if self.dist_token is None:

return self.pre_logits(x[:, 0])

else:

return x[:, 0], x[:, 1]

def forward(self, x):

# x 初始 (batch_size,channel,height,width) -> forward_feature(x) -> (batch_size,dim)

x = self.forward_features(x)

# vit中 self.head_dist 为 None

if self.head_dist is not None:

x, x_dist = self.head(x[0]), self.head_dist(x[1])

if self.training and not torch.jit.is_scripting():

return x, x_dist

else:

return (x + x_dist) / 2

else:

x = self.head(x)

return x

def _init_vit_weights(m):

if isinstance(m, nn.Linear):

nn.init.trunc_normal_(m.weight, std=.01)

if m.bias is not None:

nn.init.zeros_(m.bias)

elif isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode="fan_out")

if m.bias is not None:

nn.init.zeros_(m.bias)

elif isinstance(m, nn.LayerNorm):

nn.init.zeros_(m.bias)

nn.init.ones_(m.weight)