Optimization Algorithms - The RMSProp Algorithm

Contents

- The RMSProp Algorithm

- 1 - The Algorithm

- 2 - Implementation from Scratch

- 3 - Concise Implementation

- 4 - Summary

The RMSProp Algorithm

1 - The Algorithm

import math
import torch
from d2l import torch as d2l

d2l.set_figsize()

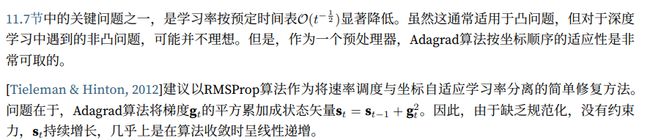

# Plot the weight that a squared gradient from x steps ago receives
gammas = [0.95, 0.9, 0.8, 0.7]
for gamma in gammas:
    x = torch.arange(40).detach().numpy()
    d2l.plt.plot(x, (1 - gamma) * gamma ** x, label=f'gamma = {gamma:.2f}')
d2l.plt.xlabel('time')

Text(0.5, 0, 'time')

![Plot of the weights (1 - gamma) * gamma ** x over time for each gamma](https://yingziimage.oss-cn-beijing.aliyuncs.com/img/202209161925234.svg)
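The curves above show the weight (1 - gamma) * gamma ** x given to a squared gradient observed x steps ago. As a quick check of why this behaves like an average, the following plain-Python sketch sums these weights. The helper names `leaky_weights` and `weight_summary` are hypothetical and only serve this illustration. Over a long window the weights add up to nearly 1, and the newest 1 / (1 - gamma) steps carry roughly two thirds of the total.

```python
def leaky_weights(gamma, n):
    """Weights (1 - gamma) * gamma**t for t = 0, ..., n - 1 (t = 0 is the newest step)."""
    return [(1 - gamma) * gamma ** t for t in range(n)]


def weight_summary(gamma, n=40):
    weights = leaky_weights(gamma, n)
    window = round(1 / (1 - gamma))   # rough "memory length" of the average
    total = sum(weights)              # geometric series: equals 1 - gamma**n
    recent = sum(weights[:window])    # share carried by the newest `window` steps
    return window, total, recent


for gamma in [0.95, 0.9, 0.8, 0.7]:
    window, total, recent = weight_summary(gamma)
    print(f'gamma = {gamma:.2f}: window = {window}, '
          f'sum of 40 weights = {total:.4f}, '
          f'share of newest {window} steps = {recent:.4f}')
```

A larger gamma spreads the weight over a longer history, which is why its curve starts lower and decays more slowly.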

2 - Implementation from Scratch

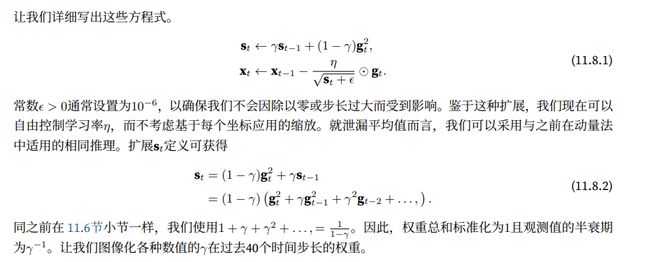

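As before, we use the quadratic function $f(\mathbf{x}) = 0.1x_1^2 + 2x_2^2$ to observe the trajectory of RMSProp. Recall from Section 11.7 that when we used Adagrad with a learning rate of 0.4, the variables moved very slowly in the later stages of the algorithm because the learning rate decayed too quickly. This does not happen with RMSProp, because η is controlled separately.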

def rmsprop_2d(x1, x2, s1, s2):
    # Gradients of f_2d with respect to x1 and x2
    g1, g2, eps = 0.2 * x1, 4 * x2, 1e-6
    # Leaky average of the squared gradients
    s1 = gamma * s1 + (1 - gamma) * g1 ** 2
    s2 = gamma * s2 + (1 - gamma) * g2 ** 2
    # Per-coordinate scaled update
    x1 -= eta / math.sqrt(s1 + eps) * g1
    x2 -= eta / math.sqrt(s2 + eps) * g2
    return x1, x2, s1, s2

def f_2d(x1, x2):
    return 0.1 * x1 ** 2 + 2 * x2 ** 2

eta, gamma = 0.4, 0.9
d2l.show_trace_2d(f_2d, d2l.train_2d(rmsprop_2d))

epoch 20, x1: -0.010599, x2: 0.000000

![RMSProp optimization trajectory on f_2d](https://yingziimage.oss-cn-beijing.aliyuncs.com/img/202209161925235.svg)
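To make the contrast with Adagrad concrete, here is a minimal, self-contained sketch in plain Python that runs both update rules on the same objective for 20 steps. It assumes a starting point of (-5, -2) with zero-initialized state. The names `grad`, `adagrad_step`, `rmsprop_step`, and `run` are hypothetical helpers for this sketch and are not part of d2l.

```python
import math

ETA, GAMMA, EPS = 0.4, 0.9, 1e-6


def grad(x1, x2):
    # Gradient of f(x) = 0.1 * x1**2 + 2 * x2**2
    return 0.2 * x1, 4 * x2


def adagrad_step(x1, x2, s1, s2):
    g1, g2 = grad(x1, x2)
    s1 += g1 ** 2   # the sum only grows, so the effective step keeps shrinking
    s2 += g2 ** 2
    x1 -= ETA / math.sqrt(s1 + EPS) * g1
    x2 -= ETA / math.sqrt(s2 + EPS) * g2
    return x1, x2, s1, s2


def rmsprop_step(x1, x2, s1, s2):
    g1, g2 = grad(x1, x2)
    s1 = GAMMA * s1 + (1 - GAMMA) * g1 ** 2   # leaky average forgets old gradients
    s2 = GAMMA * s2 + (1 - GAMMA) * g2 ** 2
    x1 -= ETA / math.sqrt(s1 + EPS) * g1
    x2 -= ETA / math.sqrt(s2 + EPS) * g2
    return x1, x2, s1, s2


def run(step, steps=20, start=(-5.0, -2.0)):
    # Assumed starting point and zero state; adjust to match your own setup
    x1, x2 = start
    s1 = s2 = 0.0
    for _ in range(steps):
        x1, x2, s1, s2 = step(x1, x2, s1, s2)
    return x1, x2


ada_x1, ada_x2 = run(adagrad_step)
rms_x1, rms_x2 = run(rmsprop_step)
print(f'Adagrad: x1 = {ada_x1:.6f}, x2 = {ada_x2:.6f}')
print(f'RMSProp: x1 = {rms_x1:.6f}, x2 = {rms_x2:.6f}')
```

Under these assumptions, x1 should end much closer to 0 with RMSProp than with Adagrad, whose accumulated sum of squared gradients keeps shrinking the effective step size.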

Next, we implement RMSProp for use in a deep network.

def init_rmsprop_states(feature_dim):
    # One state tensor per parameter: weights and bias
    s_w = torch.zeros((feature_dim, 1))
    s_b = torch.zeros(1)
    return (s_w, s_b)

def rmsprop(params, states, hyperparams):
    gamma, eps = hyperparams['gamma'], 1e-6
    for p, s in zip(params, states):
        with torch.no_grad():
            # Leaky average of the squared gradient, updated in place
            s[:] = gamma * s + (1 - gamma) * torch.square(p.grad)
            p[:] -= hyperparams['lr'] * p.grad / torch.sqrt(s + eps)
        p.grad.data.zero_()

We set the initial learning rate to 0.01 and the weighting term γ to 0.9. That is, s aggregates on average over the past $\frac{1}{1-\gamma} = 10$ observations of the squared gradient.
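The figure of 10 can be traced back to unrolling the update. The following is a sketch of that step, where $\mathbf{s}_t$ and $\mathbf{g}_t$ denote the state and the gradient at step $t$ (notation assumed for this sketch) and the state is initialized to zero:

```latex
\begin{aligned}
\mathbf{s}_t &= \gamma\,\mathbf{s}_{t-1} + (1-\gamma)\,\mathbf{g}_t^2 \\
             &= (1-\gamma)\left(\mathbf{g}_t^2 + \gamma\,\mathbf{g}_{t-1}^2
                + \gamma^2\,\mathbf{g}_{t-2}^2 + \cdots\right), \\
1 + \gamma + \gamma^2 + \cdots &= \frac{1}{1-\gamma} = 10
\quad \text{for } \gamma = 0.9 .
\end{aligned}
```

Because of the factor $(1-\gamma)$, the weights sum to 1, so $\mathbf{s}_t$ is a weighted average of past squared gradients, and $\frac{1}{1-\gamma}$ serves as a rough measure of how many past observations contribute to it.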

data_iter, feature_dim = d2l.get_data_ch11(batch_size=10)
d2l.train_ch11(rmsprop, init_rmsprop_states(feature_dim),
               {'lr': 0.01, 'gamma': 0.9}, data_iter, feature_dim);

loss: 0.245, 0.005 sec/epoch

![Training loss curve for the from-scratch RMSProp implementation](https://yingziimage.oss-cn-beijing.aliyuncs.com/img/202209161925236.svg)

3 - Concise Implementation

We can train the model directly with the RMSProp implementation provided by the deep learning framework.

trainer = torch.optim.RMSprop

d2l.train_concise_ch11(trainer,{'lr':0.01,'alpha':0.9},data_iter)

loss: 0.243, 0.005 sec/epoch

![Training loss curve for torch.optim.RMSprop](https://yingziimage.oss-cn-beijing.aliyuncs.com/img/202209161925237.svg)
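In `torch.optim.RMSprop`, the argument `alpha` plays the role of γ, which is why the call above passes `'alpha': 0.9`. One detail may account for small differences from the from-scratch version: as far as the PyTorch documentation describes the update, the constant `eps` is added after taking the square root, whereas the from-scratch code adds it inside the square root. The following plain-Python sketch compares one step of each variant on a single scalar parameter, without momentum or centering. The helper names are hypothetical, and the second function is an assumption about the documented rule rather than the library's actual code.

```python
import math


def step_eps_inside(p, s, g, lr=0.01, gamma=0.9, eps=1e-6):
    """From-scratch variant: p -= lr * g / sqrt(s + eps)."""
    s = gamma * s + (1 - gamma) * g ** 2
    return p - lr * g / math.sqrt(s + eps), s


def step_eps_outside(p, s, g, lr=0.01, alpha=0.9, eps=1e-8):
    """Assumed to mirror the documented rule: p -= lr * g / (sqrt(s) + eps)."""
    s = alpha * s + (1 - alpha) * g ** 2
    return p - lr * g / (math.sqrt(s) + eps), s


# One step from p = 1.0 with zero state and gradient 0.5
p_in, s_in = step_eps_inside(1.0, 0.0, 0.5)
p_out, s_out = step_eps_outside(1.0, 0.0, 0.5)
print(f'eps inside sqrt:  p = {p_in:.8f}')
print(f'eps outside sqrt: p = {p_out:.8f}')
print(f'difference:       {abs(p_in - p_out):.2e}')
```

For gradients of ordinary magnitude the two steps nearly coincide. The placement of `eps` matters mainly when the average of the squared gradients is close to zero.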

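4 - Summary

- RMSProp is very similar to Adagrad, as both use the square of the gradient to scale coefficients.

- RMSProp shares the leaky average with momentum. However, RMSProp uses this technique to adjust the coefficient-wise preconditioner.

- In practice, the learning rate needs to be scheduled by the experimenter.

- The coefficient γ determines how long the history is when adjusting the per-coordinate scale.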