pytorch情感分析入门4-Convolutional Sentiment Analysis

这一节中,我们将使用卷积神经网络(Convolutional Neaural Network,CNN)来实现句子的情感分类。CNN常用于图像分析,由一个或多个卷积层,及后面的一个或多个线性层组成。卷积层最大的优势是其权重可以通过后向传播学习。学习权重的直观想法是,卷积层就像特征提取器一样,提取图像中对CNN目标最重要的部分。关于权重学习的直观想法是,卷积层好比特征提取器,可以提取对于你的CNN的目标图像上最重要的部分。

CNN处理文本,就和3*3的filter扫描图像上的一小块一样,1*2的filter会扫描文本中的两个连续单词,比如bi-gram。上一节中,我们的FastText模型对于bi-grams的使用,是将其手动添加到文本的末尾。然而,在CNN中,我们在文本中使用多有不同大小的filter,来扫描bi-grams,tri-grams and/or n-grams。这里的直觉是,文本中的某些bi-grams,tri-grams以及n-grams will be a good indication of the final sentiment.

数据准备

因为卷积层期望batch在第一维,所以我们在Filed中设置 batch_first=True。

import torch

from torchtext.legacy import data

from torchtext.legacy import datasets

import random

import numpy as np

SEED = 1234

random.seed(SEED)

np.random.seed(SEED)

torch.manual_seed(SEED)

torch.backends.cudnn.deterministic = True

TEXT = data.Field(tokenize = 'spacy',

tokenizer_language = 'en_core_web_sm',

batch_first = True)

LABEL = data.LabelField(dtype = torch.float)

train_data, test_data = datasets.IMDB.splits(TEXT, LABEL)

train_data, valid_data = train_data.split(random_state = random.seed(SEED))

#构建词典,加载预训练word embedings

MAX_VOCAB_SIZE = 25_000

TEXT.build_vocab(train_data,

max_size = MAX_VOCAB_SIZE,

vectors = "glove.6B.100d",

unk_init = torch.Tensor.normal_)

LABEL.build_vocab(train_data)

#iterators

BATCH_SIZE = 64

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

train_iterator, valid_iterator, test_iterator = data.BucketIterator.splits(

(train_data, valid_data, test_data),

batch_size = BATCH_SIZE,

device = device)搭建模型

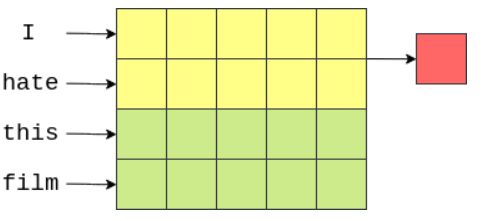

我们使用大小为[n*emb_dim]的filter。这能够包含n个连续的单词,因为他们的宽度为emb_dim维。如图所示,有4个5维的tensor。一个能够包含两个单词(bi grams)的filter大小为[2*5],图中的黄色,filter中的每个元素都有一个对应的权重。filter的输出是其所覆盖的所有元素的加权和。

filter向下移动来覆盖下一个bi grams,计算下一个输出。

输出的向量大小为句子长度-filter高度+1。

我们模型有大量filters。Idea是每个filter会学习提取不同的特征。在上述例子中,我们希望每个[2*emb_dim] filters 能 looking for the occurence of different bi-grams。

我们模型的下一步是在卷积层的输出上使用池化。这和FastText 模型中我们使用F.avg_pool,对每个词向量计算平均值相似,但在这里我们取每个维度上的最大值。下面是一个省略了激活函数的示例:

这里的Idea是,最大值是决定评论情绪的“最重要的”特征,它对应于评论中的“最重要的”n-gram。我们不需要知道最重要的n-grams具体是什么。通过反向传播,filter的权重被改变,因此每当看到高度指示情绪的某些n-grams时,filter的输出就是一个“高”值。如果这个“高”值是输出中的最大值,那么它将通过最大池化层。

由于我们的模型有100个3种不同大小的filter,这意味着我们有300个模型认为重要的n-grams。我们将这些数据连接到一个的向量中,并输入线性层来预测情绪。我们可以将这个线性层的权重视为取自300个n-grams中的“weighting up the evidence”并做出最终决定。

池化层的另一个特点是可以处理不同长度的句子。卷积层输出的大小取决于输入的大小,不同batch包含不同长度的句子。如果没有最大池化层,线性层的输入将取决于输入句子的大小(不是我们想要的)。解决这一问题的方法之一是将所有句子裁剪/填充为相同的长度。但是对于最大池化层,我们总是知道线性层的输入是filter的总数。注意:如果你的句子比使用的最大filter短,则有例外。

具体实现

我们使用nn.Conv2d实现卷积层、其中的参数,in_channels为1,及text本身;output_channels为filters的数量,kernel_size是filter的大小,即[n*emb_dim],其中n即n-grams。

输入CNNs的第一维是batch dimension,第二维是channel dimension。因为text通常没有channel dimension,所以我们unqueeze我们的向量来创造一个。

最后,我们对concatenated filter outputs执行dropout,然后将它们输入线性层来进行预测。

import torch.nn as nn

import torch.nn.functional as F

class CNN(nn.Module):

def __init__(self, vocab_size, embedding_dim, n_filters, filter_sizes, output_dim,

dropout, pad_idx):

super().__init__()

self.embedding = nn.Embedding(vocab_size, embedding_dim, padding_idx = pad_idx)

self.conv_0 = nn.Conv2d(in_channels = 1,

out_channels = n_filters,

kernel_size = (filter_sizes[0], embedding_dim))

self.conv_1 = nn.Conv2d(in_channels = 1,

out_channels = n_filters,

kernel_size = (filter_sizes[1], embedding_dim))

self.conv_2 = nn.Conv2d(in_channels = 1,

out_channels = n_filters,

kernel_size = (filter_sizes[2], embedding_dim))

self.fc = nn.Linear(len(filter_sizes) * n_filters, output_dim)

self.dropout = nn.Dropout(dropout)

def forward(self, text):

#text = [batch size, sent len]

embedded = self.embedding(text)

#embedded = [batch size, sent len, emb dim]

embedded = embedded.unsqueeze(1)

#embedded = [batch size, 1, sent len, emb dim]

conved_0 = F.relu(self.conv_0(embedded).squeeze(3))

conved_1 = F.relu(self.conv_1(embedded).squeeze(3))

conved_2 = F.relu(self.conv_2(embedded).squeeze(3))

#conved_n = [batch size, n_filters, sent len - filter_sizes[n] + 1]

pooled_0 = F.max_pool1d(conved_0, conved_0.shape[2]).squeeze(2)

pooled_1 = F.max_pool1d(conved_1, conved_1.shape[2]).squeeze(2)

pooled_2 = F.max_pool1d(conved_2, conved_2.shape[2]).squeeze(2)

#pooled_n = [batch size, n_filters]

cat = self.dropout(torch.cat((pooled_0, pooled_1, pooled_2), dim = 1))

#cat = [batch size, n_filters * len(filter_sizes)]

return self.fc(cat)目前CNN模型只能使用3个不同大小的filter,但我们实际上可以改进模型的代码,使其更通用,能采用任意数量的filter。我们把所有的卷积层都放在nn.ModuleList,这是用于保存PyTorch nn.Modules列表的函数。如果我们只是使用标准的Python列表,列表内的模块不能被列表外的任何模块“看到”,这将导致一些错误。

我们现在可以传递一个任意大小的filter列表。在forward方法中,我们遍历列表,应用每个卷积层来获得一个卷积输出列表,先将其输入最大池化层,再将其连接在一起并通过dropout层和线性层。

class CNN(nn.Module):

def __init__(self, vocab_size, embedding_dim, n_filters, filter_sizes, output_dim,

dropout, pad_idx):

super().__init__()

self.embedding = nn.Embedding(vocab_size, embedding_dim, padding_idx = pad_idx)

self.convs = nn.ModuleList([

nn.Conv2d(in_channels = 1,

out_channels = n_filters,

kernel_size = (fs, embedding_dim))

for fs in filter_sizes

])

self.fc = nn.Linear(len(filter_sizes) * n_filters, output_dim)

self.dropout = nn.Dropout(dropout)

def forward(self, text):

#text = [batch size, sent len]

embedded = self.embedding(text)

#embedded = [batch size, sent len, emb dim]

embedded = embedded.unsqueeze(1)

#embedded = [batch size, 1, sent len, emb dim]

conved = [F.relu(conv(embedded)).squeeze(3) for conv in self.convs]

#conved_n = [batch size, n_filters, sent len - filter_sizes[n] + 1]

pooled = [F.max_pool1d(conv, conv.shape[2]).squeeze(2) for conv in conved]

#pooled_n = [batch size, n_filters]

cat = self.dropout(torch.cat(pooled, dim = 1))

#cat = [batch size, n_filters * len(filter_sizes)]

return self.fc(cat)#创建实例

INPUT_DIM = len(TEXT.vocab)

EMBEDDING_DIM = 100

N_FILTERS = 100

FILTER_SIZES = [3,4,5]

OUTPUT_DIM = 1

DROPOUT = 0.5

PAD_IDX = TEXT.vocab.stoi[TEXT.pad_token]

model = CNN(INPUT_DIM, EMBEDDING_DIM, N_FILTERS, FILTER_SIZES, OUTPUT_DIM, DROPOUT, PAD_IDX)

#

def count_parameters(model):

return sum(p.numel() for p in model.parameters() if p.requires_grad)

print(f'The model has {count_parameters(model):,} trainable parameters')

#

pretrained_embeddings = TEXT.vocab.vectors

model.embedding.weight.data.copy_(pretrained_embeddings)

#

UNK_IDX = TEXT.vocab.stoi[TEXT.unk_token]

model.embedding.weight.data[UNK_IDX] = torch.zeros(EMBEDDING_DIM)

model.embedding.weight.data[PAD_IDX] = torch.zeros(EMBEDDING_DIM)

模型训练

#Training is the same as before. We initialize the optimizer, loss function (criterion) and place the model and criterion on the GPU (if available)

import torch.optim as optim

optimizer = optim.Adam(model.parameters())

criterion = nn.BCEWithLogitsLoss()

model = model.to(device)

criterion = criterion.to(device)

#

def binary_accuracy(preds, y):

"""

Returns accuracy per batch, i.e. if you get 8/10 right, this returns 0.8, NOT 8

"""

#round predictions to the closest integer

rounded_preds = torch.round(torch.sigmoid(preds))

correct = (rounded_preds == y).float() #convert into float for division

acc = correct.sum() / len(correct)

return acc

#Note: as we are using dropout again, we must remember to use model.train() to ensure the dropout is "turned on" while training.

def train(model, iterator, optimizer, criterion):

epoch_loss = 0

epoch_acc = 0

model.train()

for batch in iterator:

optimizer.zero_grad()

predictions = model(batch.text).squeeze(1)

loss = criterion(predictions, batch.label)

acc = binary_accuracy(predictions, batch.label)

loss.backward()

optimizer.step()

epoch_loss += loss.item()

epoch_acc += acc.item()

return epoch_loss / len(iterator), epoch_acc / len(iterator)

#Note: again, as we are now using dropout, we must remember to use model.eval() to ensure the dropout is "turned off" while evaluating.

def evaluate(model, iterator, criterion):

epoch_loss = 0

epoch_acc = 0

model.eval()

with torch.no_grad():

for batch in iterator:

predictions = model(batch.text).squeeze(1)

loss = criterion(predictions, batch.label)

acc = binary_accuracy(predictions, batch.label)

epoch_loss += loss.item()

epoch_acc += acc.item()

return epoch_loss / len(iterator), epoch_acc / len(iterator)

#

import time

def epoch_time(start_time, end_time):

elapsed_time = end_time - start_time

elapsed_mins = int(elapsed_time / 60)

elapsed_secs = int(elapsed_time - (elapsed_mins * 60))

return elapsed_mins, elapsed_secs

#

N_EPOCHS = 5

best_valid_loss = float('inf')

for epoch in range(N_EPOCHS):

start_time = time.time()

train_loss, train_acc = train(model, train_iterator, optimizer, criterion)

valid_loss, valid_acc = evaluate(model, valid_iterator, criterion)

end_time = time.time()

epoch_mins, epoch_secs = epoch_time(start_time, end_time)

if valid_loss < best_valid_loss:

best_valid_loss = valid_loss

torch.save(model.state_dict(), 'tut4-model.pt')

print(f'Epoch: {epoch+1:02} | Epoch Time: {epoch_mins}m {epoch_secs}s')

print(f'\tTrain Loss: {train_loss:.3f} | Train Acc: {train_acc*100:.2f}%')

print(f'\t Val. Loss: {valid_loss:.3f} | Val. Acc: {valid_acc*100:.2f}%')

#

model.load_state_dict(torch.load('tut4-model.pt'))

test_loss, test_acc = evaluate(model, test_iterator, criterion)

print(f'Test Loss: {test_loss:.3f} | Test Acc: {test_acc*100:.2f}%')用户输入

输入语句必须至少与所使用的最大filter高度一样。我们修改predict_sentiment函数,使其也接受最小长度参数。如果tokenized input sentence小于min_len tokens,我们添加(

import spacy

nlp = spacy.load('en_core_web_sm')

def predict_sentiment(model, sentence, min_len = 5):

model.eval()

tokenized = [tok.text for tok in nlp.tokenizer(sentence)]

if len(tokenized) < min_len:

tokenized += [''] * (min_len - len(tokenized))

indexed = [TEXT.vocab.stoi[t] for t in tokenized]

tensor = torch.LongTensor(indexed).to(device)

tensor = tensor.unsqueeze(0)

prediction = torch.sigmoid(model(tensor))

return prediction.item()

#predict_sentiment(model, "This film is terrible")

#predict_sentiment(model, "This film is great")