LSTM & MultiheadAttention Input Dimensions

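I recently ran into some problems because I was unsure about the dimensions of the input tensors these modules expect, so I am going over them again here and writing them down for future reference.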


LSTM

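LSTM is a variant of the RNN that effectively alleviates the exploding and vanishing gradient problems of plain RNNs.

Memory cell

The LSTM introduces a new memory cell $c_t$ that carries information through the recurrence linearly and, at the same time, outputs information to the external state $h_t$ of the hidden layer. At each time step $t$, $c_t$ records the history up to the current time step.

Gating mechanism

The LSTM uses a gating mechanism to control the paths along which information flows. The gates are similar to gates in a digital circuit, with 0 meaning closed and 1 meaning open, except that LSTM gates take continuous values between 0 and 1.

The three gates of the LSTM are the forget gate $f_t$, the input gate $i_t$, and the output gate $o_t$:

- $f_t$ controls how much information of the previous memory cell $c_{t-1}$ is forgotten.

- $i_t$ controls how much information of the current candidate state $\tilde{c}_t$ is stored.

- $o_t$ controls how much information of the current memory cell $c_t$ is output to the external state $h_t$.

LSTM structure

Figure 1 shows the structure of the LSTM: an LSTM network is a chain of connected LSTM cells.

The key to the LSTM is the memory cell, the horizontal line running along the top of the figure.

The memory cell works like a conveyor belt. It runs straight down the entire chain with only a few minor linear interactions, so information can easily flow along it unchanged.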

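LSTM computation

Forget gate

In this step, the forget gate reads $h_{t-1}$ and $x_t$, passes them through a sigmoid, and outputs a value between 0 and 1 for each number in the memory cell $c_{t-1}$; 1 means "keep completely" and 0 means "discard completely".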

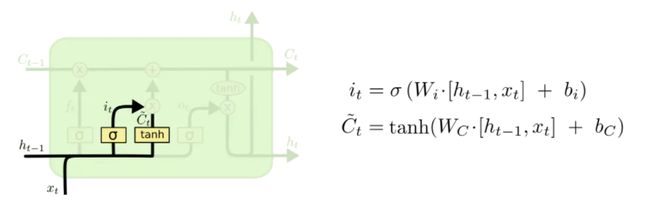
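
In the standard formulation the forget gate can be written as follows. The symbols $W_f$ and $b_f$ (weight matrix and bias of the gate) and the bracket $[h_{t-1}, x_t]$ for concatenation are notation assumed here, not taken from the text above.

```latex
f_t = \sigma\left(W_f \cdot [h_{t-1}, x_t] + b_f\right)
```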

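Input gate

The input gate determines what information is stored in the memory cell. This step has two parts:

- A sigmoid layer again outputs values in [0, 1] that decide how much of the candidate state $\tilde{c}_t$ is stored.

- A tanh layer creates the candidate state $\tilde{c}_t$.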
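
Written out in the standard formulation, the two parts look like this. The weights $W_i$, $W_c$ and biases $b_i$, $b_c$ are assumed notation, and $[h_{t-1}, x_t]$ denotes concatenation.

```latex
\begin{aligned}
i_t &= \sigma\left(W_i \cdot [h_{t-1}, x_t] + b_i\right) \\
\tilde{c}_t &= \tanh\left(W_c \cdot [h_{t-1}, x_t] + b_c\right)
\end{aligned}
```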

Updating the memory cell

Next, the old cell state is updated from $c_{t-1}$ to $c_t$.

The old state $c_{t-1}$ is first multiplied by $f_t$, which discards the information the forget gate has chosen to forget; then $i_t * \tilde{c}_t$ is added, which gives the new memory cell $c_t$.
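
As a single equation, with $*$ denoting element-wise multiplication as in the text:

```latex
c_t = f_t * c_{t-1} + i_t * \tilde{c}_t
```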

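Output gate

The output gate $o_t$ passes information from the internal state to the external state $h_t$. What reaches the external state is again a filtered version: a sigmoid layer first decides which parts of the memory cell are passed on; the cell state is then put through a tanh layer (giving values in [-1, 1]) and multiplied by the output of the output gate, so the external state receives only the part that the output gate selected.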
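
In the standard formulation this step reads as follows. $W_o$ and $b_o$ are assumed notation for the weight and bias of the output gate, and $*$ is element-wise multiplication.

```latex
\begin{aligned}
o_t &= \sigma\left(W_o \cdot [h_{t-1}, x_t] + b_o\right) \\
h_t &= o_t * \tanh(c_t)
\end{aligned}
```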

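PyTorch implementation of an LSTM cell

    import torch
    import torch.nn as nn


    class LSTMCell(nn.Module):
        def __init__(self, input_size, hidden_size, cell_size, output_size):
            super().__init__()
            # h_t = o_t * tanh(c_t), so h and c must have the same size here
            assert hidden_size == cell_size
            self.hidden_size = hidden_size  # size of the hidden state h, i.e. the number of hidden units in the cell
            self.cell_size = cell_size  # size of the memory cell c
            # Gates: each gate needs its own weights (sharing one layer
            # would make all gates compute the same values)
            self.forget_gate = nn.Linear(input_size + hidden_size, cell_size)
            self.input_gate = nn.Linear(input_size + hidden_size, cell_size)
            self.candidate = nn.Linear(input_size + hidden_size, cell_size)
            self.output_gate = nn.Linear(input_size + hidden_size, cell_size)
            self.output = nn.Linear(hidden_size, output_size)
            self.sigmoid = nn.Sigmoid()
            self.tanh = nn.Tanh()
            self.softmax = nn.LogSoftmax(dim=1)

        def forward(self, input, hidden, cell):
            # Concatenate the input x with h: (N, input_size + hidden_size)
            combined = torch.cat((input, hidden), 1)
            # Forget gate
            f_gate = self.sigmoid(self.forget_gate(combined))
            # Input gate and candidate state
            i_gate = self.sigmoid(self.input_gate(combined))
            z_state = self.tanh(self.candidate(combined))
            # Output gate
            o_gate = self.sigmoid(self.output_gate(combined))
            # Update the memory cell
            cell = torch.add(torch.mul(cell, f_gate), torch.mul(z_state, i_gate))
            # Update the hidden state h
            hidden = torch.mul(self.tanh(cell), o_gate)
            output = self.output(hidden)
            output = self.softmax(output)
            return output, hidden, cell

        def initHidden(self):
            return torch.zeros(1, self.hidden_size)

        def initCell(self):
            return torch.zeros(1, self.cell_size)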
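
One way to check the gate equations is to redo a single step by hand and compare it with the built-in `torch.nn.LSTMCell`. The sketch below assumes the documented parameter layout of `nn.LSTMCell`, in which the four gate blocks are stacked in the order input, forget, candidate, output; the sizes are arbitrary illustration values.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
input_size, hidden_size, batch = 3, 4, 2  # arbitrary sizes for illustration

cell = nn.LSTMCell(input_size, hidden_size)
x = torch.randn(batch, input_size)
h_prev = torch.randn(batch, hidden_size)
c_prev = torch.randn(batch, hidden_size)

# Reference result from the built-in cell
h_ref, c_ref = cell(x, (h_prev, c_prev))

# Manual step: all four gate pre-activations are computed in one matmul,
# then split into i (input), f (forget), g (candidate), o (output)
gates = x @ cell.weight_ih.T + cell.bias_ih + h_prev @ cell.weight_hh.T + cell.bias_hh
i, f, g, o = gates.chunk(4, dim=1)
i, f, o = torch.sigmoid(i), torch.sigmoid(f), torch.sigmoid(o)
g = torch.tanh(g)                  # candidate state
c_new = f * c_prev + i * g         # update the memory cell
h_new = o * torch.tanh(c_new)      # update the hidden state

print(torch.allclose(h_new, h_ref, atol=1e-5))
print(torch.allclose(c_new, c_ref, atol=1e-5))
```

Note that the built-in cell keeps separate input-to-hidden and hidden-to-hidden weights instead of concatenating $x_t$ and $h_{t-1}$; the two forms are equivalent.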

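LSTM in PyTorch

Parameters

- input_size – number of features in the input

- hidden_size – number of features in the hidden state h

- num_layers – number of recurrent layers, i.e. how many LSTM layers (each with the same number of units) are stacked vertically. Default: 1

- bias – whether the layers use the bias weights b_ih and b_hh. Default: True

- batch_first – whether the first dimension of the input and output tensors is the batch size. Default: False

- dropout – if non-zero, adds a dropout layer after every RNN layer except the last one, with dropout probability equal to this value. Default: 0

- bidirectional – whether the RNN is bidirectional. Default: False

- proj_size – if > 0, uses an LSTM with projections of the corresponding size. Default: 0

The most important of these are hidden_size and num_layers. hidden_size is the number of hidden units in the LSTM cell. num_layers is the depth of the stack, i.e. the number of hidden layers.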

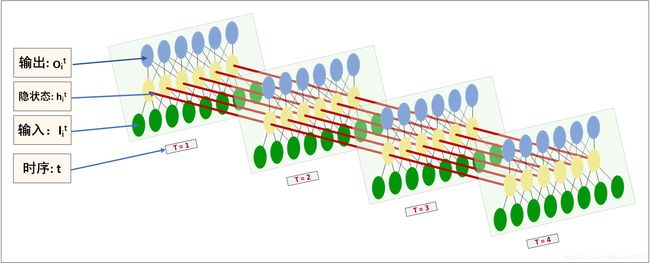

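This figure shows the LSTM's forward pass drawn in the style of an MLP (ignore the symbols on the left; the output and the hidden state are in fact the same thing), which makes the hidden_size parameter easier to understand. hidden_size means much the same thing in every module: it is the first (or last) dimension of the weight matrix W. In terms of the equations above, hidden_size sets the corresponding dimension of every W.

This figure makes the num_layers parameter easy to understand: it is simply stacking in depth, and every blue block is an LSTM cell. The only difference is that the first layer takes $x_t, h_{t-1}^{(0)}, c_{t-1}^{(0)}$ as input, whereas an intermediate layer $k$ takes $h_t^{(k-1)}, h_{t-1}^{(k)}, c_{t-1}^{(k)}$.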
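
The effect of hidden_size and num_layers can be seen directly in the parameter shapes of `nn.LSTM`. A minimal sketch with arbitrary sizes (10 input features, 20 hidden units, 2 layers): every weight has `4 * hidden_size` rows because the four gate matrices are stacked, and the second layer's input weights have `hidden_size` columns because that layer consumes the hidden state of the layer below.

```python
import torch.nn as nn

lstm = nn.LSTM(input_size=10, hidden_size=20, num_layers=2)

# Collect the shape of every learnable parameter by name
shapes = {name: tuple(param.shape) for name, param in lstm.named_parameters()}
for name, shape in shapes.items():
    print(name, shape)

# weight_ih_l0: (80, 10)  layer 0 reads x_t          (4 * 20 rows, input_size cols)
# weight_hh_l0: (80, 20)  layer 0 reads h_{t-1}^{(0)}
# weight_ih_l1: (80, 20)  layer 1 reads h_t^{(0)} from the layer below
# weight_hh_l1: (80, 20)  layer 1 reads h_{t-1}^{(1)}
```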

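Inputs: input, (h_0, c_0)

- input: shape (L, N, H_in) when batch_first = False, or (N, L, H_in) when batch_first = True; contains the input sequences of the batch. The input can also be a packed variable-length sequence.

- h_0: shape (D∗num_layers, N, H_out); the initial hidden state for each sequence in the batch. Defaults to zeros if (h_0, c_0) is not provided.

- c_0: shape (D∗num_layers, N, H_cell); the initial cell state for each sequence in the batch. Defaults to zeros if (h_0, c_0) is not provided.

Outputs: output, (h_n, c_n)

- output: shape (L, N, D∗H_out) when batch_first = False, or (N, L, D∗H_out) when batch_first = True; contains the output features (h_t) of the last LSTM layer for every time step.

- h_n: shape (D∗num_layers, N, H_out); the hidden state at the final time step for each sequence in the batch.

- c_n: shape (D∗num_layers, N, H_cell); the cell state at the final time step for each sequence in the batch.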

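Explanation of the symbols

- N = batch size

- L = sequence length

- D = 2 if bidirectional = True, otherwise 1

- H_in = input feature size (input_size)

- H_cell = number of hidden units (hidden_size)

- H_out = proj_size if proj_size > 0, otherwise the number of hidden units (hidden_size)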
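
The shapes listed above can be verified with a few random tensors. This is a minimal sketch with arbitrary sizes; it assumes a PyTorch version that supports `proj_size` (1.8 or later). The comments give the expected shapes in the notation above.

```python
import torch
import torch.nn as nn

N, L, H_in, hidden_size, num_layers = 4, 7, 10, 20, 2

# batch_first=False (default): input is (L, N, H_in); bidirectional gives D = 2
lstm = nn.LSTM(H_in, hidden_size, num_layers=num_layers, bidirectional=True)
output, (h_n, c_n) = lstm(torch.randn(L, N, H_in))
print(output.shape)  # (L, N, D * H_out)           -> 7, 4, 40
print(h_n.shape)     # (D * num_layers, N, H_out)  -> 4, 4, 20
print(c_n.shape)     # (D * num_layers, N, H_cell) -> 4, 4, 20

# batch_first=True only swaps the first two dims of input and output;
# h_n and c_n keep the layout (D * num_layers, N, ...)
lstm_bf = nn.LSTM(H_in, hidden_size, num_layers=num_layers, batch_first=True)
output_bf, (h_bf, c_bf) = lstm_bf(torch.randn(N, L, H_in))
print(output_bf.shape)  # (N, L, H_out)          -> 4, 7, 20
print(h_bf.shape)       # (num_layers, N, H_out) -> 2, 4, 20

# proj_size > 0: h is projected down to proj_size, c keeps hidden_size
lstm_proj = nn.LSTM(H_in, hidden_size, proj_size=5)
output_p, (h_p, c_p) = lstm_proj(torch.randn(L, N, H_in))
print(output_p.shape)  # (L, N, H_out)  -> 7, 4, 5
print(h_p.shape)       # (1, N, H_out)  -> 1, 4, 5
print(c_p.shape)       # (1, N, H_cell) -> 1, 4, 20
```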

MultiheadAttention

Self-Attention computation
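
For reference, the standard scaled dot-product form of self-attention is given below. The symbols are the usual Transformer notation and are assumed here: $X$ is the input sequence, $W^Q$, $W^K$, $W^V$ are learned projections, and $d_k$ is the key dimension.

```latex
\begin{aligned}
Q &= X W^Q, \quad K = X W^K, \quad V = X W^V \\
\mathrm{Attention}(Q, K, V) &= \mathrm{softmax}\left(\frac{Q K^\top}{\sqrt{d_k}}\right) V
\end{aligned}
```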

Multi-head Attention computation
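
In the standard formulation, multi-head attention runs $h$ attention operations in parallel on separately projected inputs and concatenates the results. The per-head projections $W_i^Q$, $W_i^K$, $W_i^V$ and the output projection $W^O$ are the usual notation, assumed here.

```latex
\begin{aligned}
\mathrm{head}_i &= \mathrm{Attention}(Q W_i^Q, K W_i^K, V W_i^V) \\
\mathrm{MultiHead}(Q, K, V) &= \mathrm{Concat}(\mathrm{head}_1, \dots, \mathrm{head}_h)\, W^O
\end{aligned}
```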

PyTorch implementation of a MultiheadAttention module

    import torch
    import torch.nn as nn


    class Attention(nn.Module):
        '''
        Attention Module used to perform self-attention operation allowing the model to attend
        information from different representation subspaces on an input sequence of embeddings.
        The sequence of operations is as follows :-

        Input -> Query, Key, Value -> ReshapeHeads -> Query.TransposedKey -> Softmax -> Dropout
        -> AttentionScores.Value -> ReshapeHeadsBack -> Output

        Note: this variant takes a 4-D input (batch_size, data_aug, cha_tim_dim, embed_dim) and
        attends over cha_tim_dim. The scores are not scaled by 1 / sqrt(reduced_dim).

        Args:
            embed_dim: Dimension size of the hidden embedding
            heads: Number of parallel attention heads (Default=8)
            activation: Optional activation function to be applied to the input while transforming to query, key and value matrixes (Default=None)
            dropout: Dropout value for the layer on attention_scores (Default=0.1)

        Methods:
            _reshape_heads(inp) :-
            Changes the input sequence embeddings to reduced dimension according to the number
            of attention heads to parallelize attention operation
            (batch_size, data_aug, cha_tim_dim, embed_dim) -> (batch_size * heads, data_aug, cha_tim_dim, reduced_dim)

            _reshape_heads_back(inp) :-
            Changes the reduced dimension due to parallel attention heads back to the original
            embedding size
            (batch_size * heads, data_aug, cha_tim_dim, reduced_dim) -> (batch_size, data_aug, cha_tim_dim, embed_dim)

            forward(inp) :-
            Performs the self-attention operation on the input sequence embedding.
            Returns the output of self-attention as well as attention scores
            (batch_size, data_aug, cha_tim_dim, embed_dim) -> (batch_size, data_aug, cha_tim_dim, embed_dim), (batch_size * heads, data_aug, cha_tim_dim, cha_tim_dim)

        Examples:
            >>> attention = Attention(embed_dim, heads, activation, dropout)
            >>> out, weights = attention(inp)
        '''

        def __init__(self, embed_dim, heads=8, activation=None, dropout=0.1):
            super(Attention, self).__init__()
            self.heads = heads
            self.embed_dim = embed_dim
            self.query = nn.Linear(embed_dim, embed_dim)
            self.key = nn.Linear(embed_dim, embed_dim)
            self.value = nn.Linear(embed_dim, embed_dim)
            self.softmax = nn.Softmax(dim=-1)
            if activation == 'relu':
                self.activation = nn.ReLU()
            elif activation == 'elu':
                self.activation = nn.ELU()
            else:
                self.activation = nn.Identity()
            self.dropout = nn.Dropout(dropout)

        def forward(self, inp):
            # inp: (batch_size, data_aug, cha_tim_dim, embed_dim)
            batch_size, data_aug, cha_tim_dim, embed_dim = inp.size()
            assert embed_dim == self.embed_dim

            query = self.activation(self.query(inp))
            key = self.activation(self.key(inp))
            value = self.activation(self.value(inp))

            # output of _reshape_heads(): (batch_size * heads, data_aug, cha_tim_dim, reduced_dim) | reduced_dim = embed_dim // heads
            query = self._reshape_heads(query)
            key = self._reshape_heads(key)
            value = self._reshape_heads(value)

            # attention_scores: (batch_size * heads, data_aug, cha_tim_dim, cha_tim_dim) | Softmaxed along the last dimension
            attention_scores = self.softmax(torch.matmul(query, key.transpose(2, 3)))

            # out: (batch_size * heads, data_aug, cha_tim_dim, reduced_dim)
            out = torch.matmul(self.dropout(attention_scores), value)

            # output of _reshape_heads_back(): (batch_size, data_aug, cha_tim_dim, embed_dim)
            out = self._reshape_heads_back(out)
            return out, attention_scores

        def _reshape_heads(self, inp):
            # inp: (batch_size, data_aug, cha_tim_dim, embed_dim)
            batch_size, data_aug, cha_tim_dim, embed_dim = inp.size()
            reduced_dim = self.embed_dim // self.heads
            assert reduced_dim * self.heads == self.embed_dim

            out = inp.reshape(batch_size, data_aug, cha_tim_dim, self.heads, reduced_dim)
            out = out.permute(0, 3, 1, 2, 4)
            out = out.reshape(-1, data_aug, cha_tim_dim, reduced_dim)
            # out: (batch_size * heads, data_aug, cha_tim_dim, reduced_dim)
            return out

        def _reshape_heads_back(self, inp):
            # Not shown in the original listing; reconstructed here as the
            # inverse of _reshape_heads(), following the docstring.
            # inp: (batch_size * heads, data_aug, cha_tim_dim, reduced_dim)
            batch_size_mul_heads, data_aug, cha_tim_dim, reduced_dim = inp.size()
            batch_size = batch_size_mul_heads // self.heads

            out = inp.reshape(batch_size, self.heads, data_aug, cha_tim_dim, reduced_dim)
            out = out.permute(0, 2, 3, 1, 4)
            out = out.reshape(batch_size, data_aug, cha_tim_dim, self.embed_dim)
            # out: (batch_size, data_aug, cha_tim_dim, embed_dim)
            return out
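
The head reshaping described in the docstring can be checked in isolation. Below is a minimal sketch for the plain 3-D case (batch_size, seq_len, embed_dim); `split_heads` and `merge_heads` are hypothetical helper names used only for this illustration, not part of the class above.

```python
import torch


def split_heads(x, heads):
    # (batch_size, seq_len, embed_dim) -> (batch_size * heads, seq_len, reduced_dim)
    batch_size, seq_len, embed_dim = x.shape
    reduced_dim = embed_dim // heads
    assert reduced_dim * heads == embed_dim
    x = x.reshape(batch_size, seq_len, heads, reduced_dim)
    x = x.permute(0, 2, 1, 3)  # move the head axis next to the batch axis
    return x.reshape(batch_size * heads, seq_len, reduced_dim)


def merge_heads(x, heads):
    # (batch_size * heads, seq_len, reduced_dim) -> (batch_size, seq_len, embed_dim)
    batch_heads, seq_len, reduced_dim = x.shape
    batch_size = batch_heads // heads
    x = x.reshape(batch_size, heads, seq_len, reduced_dim)
    x = x.permute(0, 2, 1, 3)  # put the head axis back next to reduced_dim
    return x.reshape(batch_size, seq_len, heads * reduced_dim)


x = torch.randn(2, 5, 16)
per_head = split_heads(x, heads=4)
restored = merge_heads(per_head, heads=4)
print(per_head.shape)            # batch 2 * 4 heads, seq_len 5, reduced_dim 4
print(torch.equal(restored, x))  # the round trip only moves data, so it is exact
```

The permute before each reshape matters: reshaping directly from (batch_size, seq_len, embed_dim) to (batch_size * heads, seq_len, reduced_dim) would mix time steps across heads.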

MultiheadAttention in PyTorch

Input tensor dimensions
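
A minimal sketch of the shapes `torch.nn.MultiheadAttention` expects and returns, following the official documentation listed in the references. The sizes are arbitrary; L is the target (query) length, S the source (key/value) length, N the batch size and E the embedding dimension. The `batch_first` argument assumes PyTorch 1.9 or later.

```python
import torch
import torch.nn as nn

N, L, S, E, num_heads = 4, 7, 9, 16, 8  # E must be divisible by num_heads

# batch_first=False (default): query is (L, N, E), key and value are (S, N, E)
mha = nn.MultiheadAttention(embed_dim=E, num_heads=num_heads)
query = torch.randn(L, N, E)
key = value = torch.randn(S, N, E)
attn_output, attn_weights = mha(query, key, value)
print(attn_output.shape)   # (L, N, E)
print(attn_weights.shape)  # (N, L, S), averaged over the heads by default

# batch_first=True: query is (N, L, E), key and value are (N, S, E)
mha_bf = nn.MultiheadAttention(embed_dim=E, num_heads=num_heads, batch_first=True)
x = torch.randn(N, L, E)
out_bf, w_bf = mha_bf(x, x, x)  # self-attention: query = key = value
print(out_bf.shape)  # (N, L, E)
print(w_bf.shape)    # (N, L, L)
```

Unlike `nn.LSTM`, there is no separate hidden-state tensor here, so `batch_first` affects every input and the attention output; only the attention weights always have the batch dimension first.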

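References

LSTM详解 (A Detailed Explanation of LSTM)

Pytorch LSTM模型 参数详解 (PyTorch LSTM Model: Parameters Explained)

[译] 理解 LSTM 网络 ([Translation] Understanding LSTM Networks)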

https://pytorch.org/docs/stable/generated/torch.nn.MultiheadAttention.html?highlight=attention#torch.nn.MultiheadAttention