Big Data Platform Setup (3): Hadoop HA Cluster with Zookeeper

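Articles in the big data platform series:

1. Big Data Platform Setup (1): Preparing the Base Environment

2. Big Data Platform Setup (2): Hadoop Cluster Setup

3. Big Data Platform Setup (3): Hadoop HA Cluster with Zookeeper

4. Big Data Platform Setup (4): HBase HA Cluster with Zookeeper

5. Big Data Platform Setup (5): Hive Setup

The platform is built on Apache Hadoop 3.3.4.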

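Contents

- Big Data Platform Setup (3): Hadoop HA Cluster with Zookeeper
  - I. Deployment architecture
  - II. Node layout of the Hadoop cluster
  - III. Set up the Zookeeper cluster
    - 1. Deploy Zookeeper on hnode1
      - 1). Extract the installation package
      - 2). Configure environment variables
      - 3). Configure Zookeeper
      - 4). Create the myid file in the zkData directory
    - 2. Deploy Zookeeper on hnode2
      - 1). Copy the Zookeeper installation directory from hnode1
      - 2). Configure environment variables
      - 3). Change myid
    - 3. Deploy Zookeeper on hnode3
      - 1). Copy the Zookeeper installation directory from hnode1
      - 2). Configure environment variables
      - 3). Change myid
  - IV. Switch the Hadoop configuration to HA mode
    - 1. Edit core-site.xml on hnode1
    - 2. Edit hdfs-site.xml on hnode1
    - 3. Edit yarn-site.xml on hnode1
    - 4. Sync the Hadoop configuration changed on hnode1 to hnode2
    - 5. Sync the Hadoop configuration changed on hnode1 to hnode3
    - 6. Sync the Hadoop configuration changed on hnode1 to hnode4
    - 7. Sync the Hadoop configuration changed on hnode1 to hnode5
    - 8. Delete and recreate Hadoop's data directory (/opt/hadoop/data)
  - V. Initialize and start the Hadoop cluster
    - 1. Start the Zookeeper cluster
      - 1). Start Zookeeper on hnode1
      - 2). Start Zookeeper on hnode2
      - 3). Start Zookeeper on hnode3
    - 2. Start the JournalNode process on each configured JournalNode host
      - 1). Start the JournalNode on hnode1
      - 2). Start the JournalNode on hnode2
      - 3). Start the JournalNode on hnode3
    - 3. Format the NameNode (pick one NameNode, hnode1, and format it first)
    - 4. Copy the metadata generated on hnode1 to the other NameNode (hnode2)
    - 5. Format ZKFC
    - 6. Start the Hadoop cluster
  - VI. Verify the state of the Hadoop cluster
    - 1. Check HDFS
    - 2. Check DataNodes
    - 3. Check the HistoryServer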

I. Deployment architecture

![]()

II. Node layout of the Hadoop cluster

| No. | Host | NameNode | Zookeeper | JournalNode | DataNode | ResourceManager |
|---|---|---|---|---|---|---|
| 1 | hnode1 | √ | √ | √ | - | √ |
| 2 | hnode2 | √ | √ | √ | - | √ |
| 3 | hnode3 | - | √ | √ | √ | - |
| 4 | hnode4 | - | - | - | √ | - |
| 5 | hnode5 | - | - | - | √ | - |

III. Set up the Zookeeper cluster

1. Deploy Zookeeper on hnode1

1). Extract the installation package

[root@hnode1 ~]# cd /opt/

[root@hnode1 opt]# mkdir -p /opt/zk

[root@hnode1 opt]# tar -xzvf ./apache-zookeeper-3.8.0-bin.tar.gz -C /opt/zk

[root@hnode1 opt]# cd /opt/zk/apache-zookeeper-3.8.0-bin

2). Configure environment variables

[root@hnode1 apache-zookeeper-3.8.0-bin]# vim /etc/profile

#Zookeeper

export ZOOKEEPER_HOME=/opt/zk/apache-zookeeper-3.8.0-bin

export PATH=$PATH:$ZOOKEEPER_HOME/bin

[root@hnode1 apache-zookeeper-3.8.0-bin]# source /etc/profile

3). Configure Zookeeper

[root@hnode1 apache-zookeeper-3.8.0-bin]# mkdir zkData

[root@hnode1 apache-zookeeper-3.8.0-bin]# cd conf

[root@hnode1 conf]# cp ./zoo_sample.cfg ./zoo.cfg

[root@hnode1 conf]# vim ./zoo.cfg

dataDir=/opt/zk/apache-zookeeper-3.8.0-bin/zkData

# List every node in the ensemble

server.1=hnode1:2888:3888

server.2=hnode2:2888:3888

server.3=hnode3:2888:3888

4). Create the myid file in the zkData directory

The number in myid must match this host's server.N entry in zoo.cfg, so hnode1 gets 1.

[root@hnode1 conf]# cd ../zkData/

[root@hnode1 zkData]# vim myid

1

2. Deploy Zookeeper on hnode2

1). Copy the Zookeeper installation directory from hnode1

[root@hnode2 ~]# cd /opt/

[root@hnode2 opt]# mkdir zk

[root@hnode2 opt]# cd zk

[root@hnode2 zk]# scp -r root@hnode1:/opt/zk/apache-zookeeper-3.8.0-bin ./

2). Configure environment variables

[root@hnode2 zk]# vim /etc/profile

#Zookeeper

export ZOOKEEPER_HOME=/opt/zk/apache-zookeeper-3.8.0-bin

export PATH=$PATH:$ZOOKEEPER_HOME/bin

[root@hnode2 zk]# source /etc/profile

3). Change myid

[root@hnode2 zk]# cd apache-zookeeper-3.8.0-bin/zkData/

[root@hnode2 zkData]# vim myid

2

3. Deploy Zookeeper on hnode3

1). Copy the Zookeeper installation directory from hnode1

[root@hnode3 ~]# cd /opt/

[root@hnode3 opt]# mkdir zk

[root@hnode3 opt]# cd zk

[root@hnode3 zk]# scp -r root@hnode1:/opt/zk/apache-zookeeper-3.8.0-bin ./

2). Configure environment variables

[root@hnode3 zk]# vim /etc/profile

#Zookeeper

export ZOOKEEPER_HOME=/opt/zk/apache-zookeeper-3.8.0-bin

export PATH=$PATH:$ZOOKEEPER_HOME/bin

[root@hnode3 zk]# source /etc/profile

3). Change myid

[root@hnode3 zk]# cd apache-zookeeper-3.8.0-bin/zkData/

[root@hnode3 zkData]# vim myid

3
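Since the only per-node difference is the number in myid, it can be derived from zoo.cfg instead of being typed by hand. The following is a minimal sketch, not part of the original procedure: the helper name `myid_for_host` is hypothetical, and it assumes each host's short hostname is exactly the name used in its `server.N` line.

```shell
#!/bin/sh
# myid_for_host <zoo.cfg> <hostname> (hypothetical helper)
# Print the N of the "server.N=<hostname>:..." line, or nothing if no line matches.
myid_for_host() {
    cfg=$1
    host=$2
    # Drop the "server." prefix, then split on "=" and ":" so that
    # field 1 is the id and field 2 is the hostname.
    sed -n 's/^server\.//p' "$cfg" |
        awk -F'[=:]' -v h="$host" '$2 == h { print $1; exit }'
}

# Possible use on each Zookeeper node (assumes ZOOKEEPER_HOME is set as above):
#   myid_for_host "$ZOOKEEPER_HOME/conf/zoo.cfg" "$(hostname -s)" \
#       > "$ZOOKEEPER_HOME/zkData/myid"
```

An empty result means the host is not listed in zoo.cfg, which is worth checking before Zookeeper is started.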

IV. Switch the Hadoop configuration to HA mode

1. Edit core-site.xml on hnode1

[root@hnode1 hadoop]# cd /opt/hadoop/hadoop-3.3.4/etc/hadoop/

[root@hnode1 hadoop]# vim core-site.xml

<configuration>
  <property>
    <name>io.file.buffer.size</name>
    <value>131072</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/opt/hadoop/data</value>
  </property>
  <property>
    <name>hadoop.http.staticuser.user</name>
    <value>root</value>
  </property>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://cluster</value>
  </property>
  <property>
    <name>fs.trash.interval</name>
    <value>1440</value>
  </property>
  <property>
    <name>ha.zookeeper.quorum</name>
    <value>hnode1:2181,hnode2:2181,hnode3:2181</value>
  </property>
  <property>
    <name>hadoop.zk.address</name>
    <value>hnode1:2181,hnode2:2181,hnode3:2181</value>
  </property>
  <property>
    <name>ha.zookeeper.session-timeout.ms</name>
    <value>10000</value>
    <description>Session timeout, in ms, for Hadoop's connection to Zookeeper</description>
  </property>
</configuration>

2. Edit hdfs-site.xml on hnode1

[root@hnode1 hadoop]# vim hdfs-site.xml

<configuration>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>/opt/hadoop/data/namenode</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>/opt/hadoop/data/datanode</value>
  </property>
  <property>
    <name>dfs.replication</name>
    <value>2</value>
  </property>
  <property>
    <name>dfs.permissions.enabled</name>
    <value>false</value>
  </property>
  <property>
    <name>dfs.webhdfs.enabled</name>
    <value>true</value>
  </property>
  <property>
    <name>dfs.nameservices</name>
    <value>cluster</value>
  </property>
  <property>
    <name>dfs.ha.namenodes.cluster</name>
    <value>nn1,nn2</value>
  </property>
  <property>
    <name>dfs.namenode.rpc-address.cluster.nn1</name>
    <value>hnode1:8020</value>
  </property>
  <property>
    <name>dfs.namenode.rpc-address.cluster.nn2</name>
    <value>hnode2:8020</value>
  </property>
  <property>
    <name>dfs.namenode.http-address.cluster.nn1</name>
    <value>hnode1:50070</value>
  </property>
  <property>
    <name>dfs.namenode.http-address.cluster.nn2</name>
    <value>hnode2:50070</value>
  </property>
  <property>
    <name>dfs.namenode.shared.edits.dir</name>
    <value>qjournal://hnode1:8485;hnode2:8485;hnode3:8485/cluster</value>
  </property>
  <property>
    <name>dfs.journalnode.edits.dir</name>
    <value>/opt/hadoop/data/journal</value>
  </property>
  <property>
    <name>dfs.client.failover.proxy.provider.cluster</name>
    <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
  </property>
  <property>
    <name>dfs.ha.fencing.methods</name>
    <value>sshfence</value>
  </property>
  <property>
    <name>dfs.ha.fencing.ssh.private-key-files</name>
    <value>/root/.ssh/id_rsa</value>
  </property>
  <property>
    <name>dfs.ha.automatic-failover.enabled</name>
    <value>true</value>
  </property>
</configuration>
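The HA property names embed the nameservice and the NameNode ids (`dfs.ha.namenodes.cluster`, `dfs.namenode.rpc-address.cluster.nn1`, and so on), so a typo in one of them is easy to miss. A rough sanity check could look like the sketch below. The helpers `get_prop` and `check_ha_namenodes` are hypothetical, and the awk parsing assumes every `<name>` and `<value>` element sits on a single line; it is not a real XML parser.

```shell
#!/bin/sh
# get_prop <file> <property-name> (hypothetical helper)
# Print the first <value> that follows a <name> equal to <property-name>.
get_prop() {
    awk -v want="$2" '
        /<name>/ {
            name = $0
            sub(/.*<name>[ \t]*/, "", name)
            sub(/[ \t]*<\/name>.*/, "", name)
        }
        /<value>/ && name == want {
            val = $0
            sub(/.*<value>[ \t]*/, "", val)
            sub(/[ \t]*<\/value>.*/, "", val)
            print val
            exit
        }
    ' "$1"
}

# check_ha_namenodes <hdfs-site.xml> (hypothetical helper)
# Verify that every id in dfs.ha.namenodes.<nameservice> has an rpc-address.
check_ha_namenodes() {
    file=$1
    ns=$(get_prop "$file" dfs.nameservices)
    ids=$(get_prop "$file" "dfs.ha.namenodes.$ns")
    for id in $(echo "$ids" | tr ',' ' '); do
        addr=$(get_prop "$file" "dfs.namenode.rpc-address.$ns.$id")
        if [ -z "$addr" ]; then
            echo "missing dfs.namenode.rpc-address.$ns.$id" >&2
            return 1
        fi
        echo "$id -> $addr"
    done
}

# Possible use:
#   check_ha_namenodes /opt/hadoop/hadoop-3.3.4/etc/hadoop/hdfs-site.xml
```

With the hdfs-site.xml from this step, the check would be expected to list nn1 and nn2 with their hnode1:8020 and hnode2:8020 addresses.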

3. Edit yarn-site.xml on hnode1

[root@hnode1 hadoop]# vim yarn-site.xml

<configuration>
  <property>
    <name>yarn.resourcemanager.connect.retry-interval.ms</name>
    <value>10000</value>
  </property>
  <property>
    <name>yarn.resourcemanager.ha.enabled</name>
    <value>true</value>
  </property>
  <property>
    <name>yarn.resourcemanager.ha.automatic-failover.enabled</name>
    <value>true</value>
  </property>
  <property>
    <name>yarn.resourcemanager.recovery.enabled</name>
    <value>true</value>
    <description>Running jobs are not affected while the RM restarts</description>
  </property>
  <property>
    <name>yarn.resourcemanager.store.class</name>
    <value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>
    <description>How application state is persisted; HA only supports ZKRMStateStore</description>
  </property>
  <property>
    <name>yarn.resourcemanager.cluster-id</name>
    <value>cluster</value>
  </property>
  <property>
    <name>yarn.resourcemanager.ha.rm-ids</name>
    <value>rm1,rm2</value>
  </property>
  <property>
    <name>yarn.resourcemanager.scheduler.class</name>
    <value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler</value>
  </property>
  <property>
    <name>yarn.resourcemanager.work-preserving-recovery.enabled</name>
    <value>true</value>
  </property>
  <property>
    <name>yarn.resourcemanager.hostname.rm1</name>
    <value>hnode2</value>
  </property>
  <property>
    <name>yarn.resourcemanager.address.rm1</name>
    <value>hnode2:8032</value>
  </property>
  <property>
    <name>yarn.resourcemanager.scheduler.address.rm1</name>
    <value>hnode2:8030</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.https.address.rm1</name>
    <value>hnode2:8090</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.address.rm1</name>
    <value>hnode2:8088</value>
  </property>
  <property>
    <name>yarn.resourcemanager.resource-tracker.address.rm1</name>
    <value>hnode2:8031</value>
  </property>
  <property>
    <name>yarn.resourcemanager.admin.address.rm1</name>
    <value>hnode2:8033</value>
  </property>
  <property>
    <name>yarn.resourcemanager.hostname.rm2</name>
    <value>hnode3</value>
  </property>
  <property>
    <name>yarn.resourcemanager.address.rm2</name>
    <value>hnode3:8032</value>
  </property>
  <property>
    <name>yarn.resourcemanager.scheduler.address.rm2</name>
    <value>hnode3:8030</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.https.address.rm2</name>
    <value>hnode3:8090</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.address.rm2</name>
    <value>hnode3:8088</value>
  </property>
  <property>
    <name>yarn.resourcemanager.resource-tracker.address.rm2</name>
    <value>hnode3:8031</value>
  </property>
  <property>
    <name>yarn.resourcemanager.admin.address.rm2</name>
    <value>hnode3:8033</value>
  </property>
  <property>
    <description>Address where the localizer IPC is.</description>
    <name>yarn.nodemanager.localizer.address</name>
    <value>hnode2:8040</value>
  </property>
  <property>
    <description>Address where the localizer IPC is.</description>
    <name>yarn.nodemanager.address</name>
    <value>hnode2:8050</value>
  </property>
  <property>
    <description>NM Webapp address.</description>
    <name>yarn.nodemanager.webapp.address</name>
    <value>hnode2:8042</value>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.nodemanager.local-dirs</name>
    <value>/tmp/hadoop/yarn/local</value>
  </property>
  <property>
    <name>yarn.nodemanager.log-dirs</name>
    <value>/tmp/hadoop/yarn/log</value>
  </property>
  <property>
    <name>yarn.nodemanager.resource.memory-mb</name>
    <value>2048</value>
  </property>
  <property>
    <name>yarn.nodemanager.resource.cpu-vcores</name>
    <value>2</value>
  </property>
  <property>
    <name>yarn.scheduler.minimum-allocation-mb</name>
    <value>2048</value>
  </property>
  <property>
    <name>yarn.log-aggregation-enable</name>
    <value>true</value>
  </property>
  <property>
    <name>yarn.log-aggregation.retain-seconds</name>
    <value>86400</value>
  </property>
  <property>
    <name>yarn.nodemanager.vmem-check-enabled</name>
    <value>false</value>
  </property>
  <property>
    <name>yarn.application.classpath</name>
    <value>/opt/hadoop/hadoop-3.3.4/etc/hadoop:/opt/hadoop/hadoop-3.3.4/share/hadoop/common/lib/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/common/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/hdfs:/opt/hadoop/hadoop-3.3.4/share/hadoop/hdfs/lib/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/hdfs/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/mapreduce/lib/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/mapreduce/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/yarn:/opt/hadoop/hadoop-3.3.4/share/hadoop/yarn/lib/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/yarn/*</value>
  </property>
</configuration>

4. Sync the Hadoop configuration changed on hnode1 to hnode2

Copy core-site.xml, hdfs-site.xml and yarn-site.xml from hnode1 to hnode2.

[root@hnode2 opt]# cd /opt/hadoop/hadoop-3.3.4/etc/hadoop/

[root@hnode2 hadoop]# rm -rf core-site.xml

[root@hnode2 hadoop]# rm -rf hdfs-site.xml

[root@hnode2 hadoop]# rm -rf yarn-site.xml

[root@hnode2 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/core-site.xml ./

[root@hnode2 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/hdfs-site.xml ./

[root@hnode2 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/yarn-site.xml ./

5. Sync the Hadoop configuration changed on hnode1 to hnode3

Copy core-site.xml, hdfs-site.xml and yarn-site.xml from hnode1 to hnode3.

[root@hnode3 opt]# cd /opt/hadoop/hadoop-3.3.4/etc/hadoop/

[root@hnode3 hadoop]# rm -rf core-site.xml

[root@hnode3 hadoop]# rm -rf hdfs-site.xml

[root@hnode3 hadoop]# rm -rf yarn-site.xml

[root@hnode3 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/core-site.xml ./

[root@hnode3 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/hdfs-site.xml ./

[root@hnode3 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/yarn-site.xml ./

6. Sync the Hadoop configuration changed on hnode1 to hnode4

Copy core-site.xml, hdfs-site.xml and yarn-site.xml from hnode1 to hnode4.

[root@hnode4 opt]# cd /opt/hadoop/hadoop-3.3.4/etc/hadoop/

[root@hnode4 hadoop]# rm -rf core-site.xml

[root@hnode4 hadoop]# rm -rf hdfs-site.xml

[root@hnode4 hadoop]# rm -rf yarn-site.xml

[root@hnode4 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/core-site.xml ./

[root@hnode4 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/hdfs-site.xml ./

[root@hnode4 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/yarn-site.xml ./

7. Sync the Hadoop configuration changed on hnode1 to hnode5

Copy core-site.xml, hdfs-site.xml and yarn-site.xml from hnode1 to hnode5.

[root@hnode5 opt]# cd /opt/hadoop/hadoop-3.3.4/etc/hadoop/

[root@hnode5 hadoop]# rm -rf core-site.xml

[root@hnode5 hadoop]# rm -rf hdfs-site.xml

[root@hnode5 hadoop]# rm -rf yarn-site.xml

[root@hnode5 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/core-site.xml ./

[root@hnode5 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/hdfs-site.xml ./

[root@hnode5 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/yarn-site.xml ./
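Steps 4 to 7 repeat the same three copies on four hosts, so they can be folded into one loop. The sketch below is an alternative, not the procedure used above: it pushes the files from hnode1 instead of pulling them on each target, the function name `sync_conf` is hypothetical, and it assumes root on hnode1 can SSH to the other nodes. By default it only prints the commands; pass `run` to execute them.

```shell
#!/bin/sh
CONF_DIR=/opt/hadoop/hadoop-3.3.4/etc/hadoop

# sync_conf [run] (hypothetical helper, to be run on hnode1)
# Copy the three edited files to hnode2..hnode5.
# Without the "run" argument the scp commands are printed, not executed.
sync_conf() {
    mode=${1:-print}
    for host in hnode2 hnode3 hnode4 hnode5; do
        for f in core-site.xml hdfs-site.xml yarn-site.xml; do
            if [ "$mode" = run ]; then
                # scp overwrites the target, so no prior rm is needed.
                scp "$CONF_DIR/$f" "root@$host:$CONF_DIR/$f"
            else
                echo "scp $CONF_DIR/$f root@$host:$CONF_DIR/$f"
            fi
        done
    done
}

# Possible use on hnode1:
#   sync_conf        # dry run, prints 12 scp commands
#   sync_conf run    # actually copy the files
```

Printing first makes it easy to confirm the host list and paths before anything is overwritten.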

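8. Delete and recreate Hadoop's data directory (/opt/hadoop/data)

This cluster had already been initialized in the previous article, so the data directory has to be deleted and recreated. If your Hadoop cluster is being deployed for the first time and has never been initialized, skip this step.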
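No command is given for this step; one way to do it is sketched below. The function name `reset_data_dir` is hypothetical, and running it on every node that was part of the earlier, non-HA cluster is an assumption. It permanently removes whatever HDFS data the directory holds.

```shell
#!/bin/sh
# reset_data_dir <dir> (hypothetical helper)
# Remove <dir> with everything in it, then recreate it empty.
reset_data_dir() {
    dir=${1:?usage: reset_data_dir <dir>}
    # Guard against wiping the filesystem root by accident.
    if [ "$dir" = "/" ]; then
        echo "refusing to remove /" >&2
        return 1
    fi
    rm -rf -- "$dir" && mkdir -p -- "$dir"
}

# Possible use on each node (destroys existing HDFS data):
#   reset_data_dir /opt/hadoop/data
```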

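V. Initialize and start the Hadoop cluster

1. Start the Zookeeper cluster

1). Start Zookeeper on hnode1

Because everything here runs as root, start-dfs.sh and stop-dfs.sh fail with the errors below for the new JournalNode and ZKFC daemons, so first add the following settings to both scripts.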

ERROR: Attempting to operate on hdfs journalnode as root

ERROR: but there is no HDFS_JOURNALNODE_USER defined. Aborting operation.

Stopping ZK Failover Controllers on NN hosts [hnode1 hnode2]

ERROR: Attempting to operate on hdfs zkfc as root

ERROR: but there is no HDFS_ZKFC_USER defined. Aborting operation.

[root@hnode1 opt]# cd /opt/hadoop/hadoop-3.3.4/sbin

[root@hnode1 sbin]# vim start-dfs.sh

Add the following at the top of start-dfs.sh:

HDFS_JOURNALNODE_USER=root

HDFS_ZKFC_USER=root

[root@hnode1 sbin]# vim stop-dfs.sh

Add the following at the top of stop-dfs.sh:

HDFS_JOURNALNODE_USER=root

HDFS_ZKFC_USER=root

[root@hnode1 sbin]# zkServer.sh start

2). Start Zookeeper on hnode2

[root@hnode2 opt]# zkServer.sh start

3). Start Zookeeper on hnode3

[root@hnode3 opt]# zkServer.sh start
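As an alternative to editing start-dfs.sh and stop-dfs.sh in step 1), the Hadoop 3 shell scripts also pick up these `HDFS_*_USER` variables from etc/hadoop/hadoop-env.sh, which keeps the setting in one file that is synced with the rest of the configuration. The sketch below appends them idempotently; the helper names `ensure_line` and `set_root_users` are hypothetical, and the hadoop-env.sh path is assumed from the install directory used in this article.

```shell
#!/bin/sh
# ensure_line <file> <line> (hypothetical helper)
# Append <line> to <file> unless an identical line is already present.
ensure_line() {
    grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# set_root_users <hadoop-env.sh> (hypothetical helper)
# Declare root as the user for the JournalNode and ZKFC daemons.
set_root_users() {
    for v in HDFS_JOURNALNODE_USER HDFS_ZKFC_USER; do
        ensure_line "$1" "export $v=root"
    done
}

# Possible use:
#   set_root_users /opt/hadoop/hadoop-3.3.4/etc/hadoop/hadoop-env.sh
```

Running it twice leaves the file unchanged the second time, so it is safe to include in a setup script.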

2. Start the JournalNode process on each configured JournalNode host

1). Start the JournalNode on hnode1

[root@hnode1 opt]# hadoop-daemon.sh start journalnode

2). Start the JournalNode on hnode2

[root@hnode2 opt]# hadoop-daemon.sh start journalnode

3). Start the JournalNode on hnode3

[root@hnode3 opt]# hadoop-daemon.sh start journalnode

3. Format the NameNode (pick one NameNode, hnode1, and format it first)

[root@hnode1 hadoop]# hdfs namenode -format

4. Copy the metadata generated on hnode1 to the other NameNode (hnode2)

[root@hnode2 hadoop]# scp -r root@hnode1:/opt/hadoop/data /opt/hadoop/

5. Format ZKFC

[root@hnode1 hadoop]# hdfs zkfc -formatZK

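6. Start the Hadoop cluster

For the hadoop.sh script, see Big Data Platform Setup (2): Hadoop Cluster Setup.

Running hadoop.sh start sometimes fails to bring up HDFS: the NameNodes try to connect to port 8485 before the JournalNodes have finished starting, so the connection fails. If that happens, run hadoop-daemon.sh start journalnode on every JournalNode host, then run hadoop.sh start again.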

[root@hnode1 hadoop]# cd /opt/hadoop

[root@hnode1 hadoop]# ./hadoop.sh start
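The startup race on port 8485 described in this step can also be avoided by waiting until the JournalNodes accept connections before starting the rest of the cluster. Below is a minimal retry sketch; `wait_until` is a hypothetical helper, and the commented usage assumes `nc` (netcat) with the `-z` flag is installed, which the original setup does not mention.

```shell
#!/bin/sh
# wait_until <tries> <delay-seconds> <command...> (hypothetical helper)
# Rerun the command until it succeeds; give up after <tries> attempts.
wait_until() {
    tries=$1
    delay=$2
    shift 2
    i=0
    while [ "$i" -lt "$tries" ]; do
        # Stop as soon as the command exits with status 0.
        "$@" && return 0
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}

# Possible use on hnode1 (assumes nc is available):
#   for h in hnode1 hnode2 hnode3; do
#       wait_until 30 2 nc -z "$h" 8485 || {
#           echo "JournalNode on $h is not reachable" >&2
#           exit 1
#       }
#   done
#   cd /opt/hadoop && ./hadoop.sh start
```

The same helper works for any readiness check, since the probe is just a command passed as arguments.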

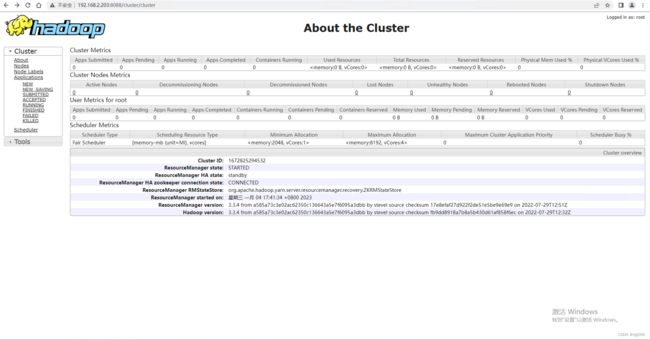

VI. Verify the state of the Hadoop cluster

1. Check HDFS

http://hnode1:8088

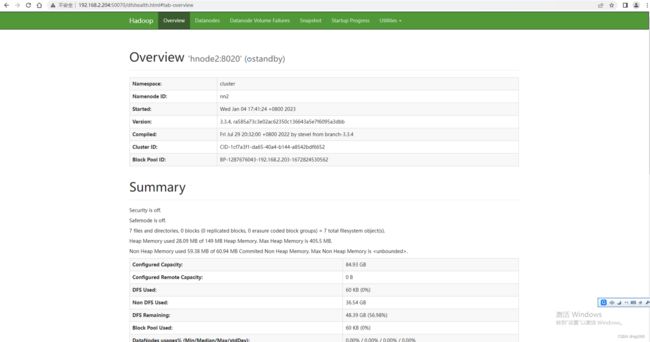

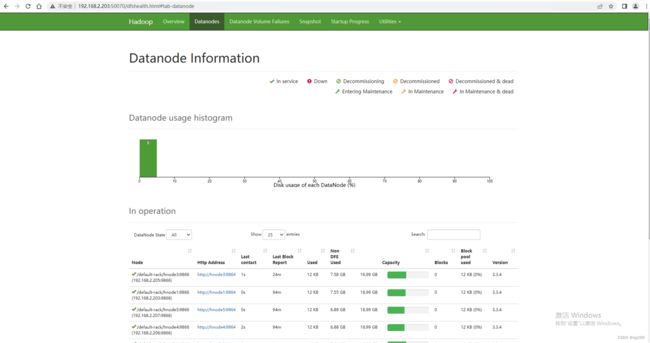

2. Check DataNodes

http://hnode1:50070

1). State of the active NameNode

2). State of the standby NameNode

3). State of the DataNodes

3. Check the HistoryServer

http://hnode2:19888/jobhistory
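Besides the web pages, the HA state can be checked from the command line with `hdfs haadmin -getServiceState` and `yarn rmadmin -getServiceState`. The sketch below wraps them in a hypothetical `print_ha_states` function and assumes the ids nn1/nn2 and rm1/rm2 from the configuration files in section IV; one of each pair should report active and the other standby.

```shell
#!/bin/sh
# print_ha_states (hypothetical helper)
# Report the HA state of both NameNodes and both ResourceManagers.
print_ha_states() {
    for id in nn1 nn2; do
        echo "NameNode $id: $(hdfs haadmin -getServiceState "$id")"
    done
    for id in rm1 rm2; do
        echo "ResourceManager $id: $(yarn rmadmin -getServiceState "$id")"
    done
}

# Possible use on any node with the Hadoop binaries on PATH:
#   print_ha_states
```

To exercise automatic failover, one option is to stop the active NameNode and rerun the function to see whether the standby has taken over.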