yolov5训练部署全链路教程

1. Yolov5简介

YOLOv5 模型是 Ultralytics 公司于 2020 年 6 月 9 日公开发布的。YOLOv5 模型是基于 YOLOv3 模型基础上改进而来的,有 YOLOv5s、YOLOv5m、YOLOv5l、YOLOv5x 四个模型。YOLOv5 相比YOLOv4 而言,在检测平均精度降低不多的基础上,具有均值权重文件更小,训练时间和推理速度更短的特点。YOLOv5 的网络结构分为输入端BackboneNeck、Head 四个部分。本教程针对目标检测算法yolov5的训练和部署到EASY-EAI-Nano(RV1126)进行说明,而数据标注方法可以参考我们往期的文章《Labelimg的安装与使用》。以下为YOLOv5训练部署的大致流程:

2. 准备数据集

2.1 数据集下载

本教程以口罩检测为例,数据集的百度网盘下载链接为:

https://pan.baidu.com/s/1vtxWurn1Mqu-wJ017eaQrw 提取码:6666

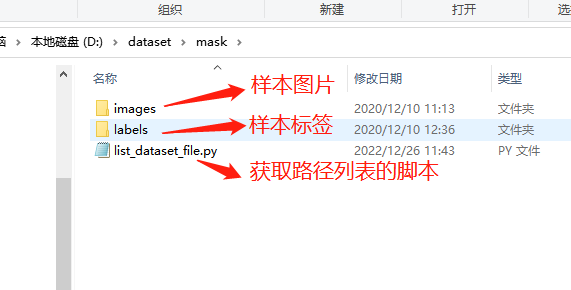

解压完成后得到以下三个文件:

2.2 生成路径列表

在数据集目录下执行脚本list_dataset_file.py:

执行现象如下图所示:

得到训练样本列表文件train.txt和验证样本列表文件valid.txt,如下图所示:

3. 下载yolov5训练源码

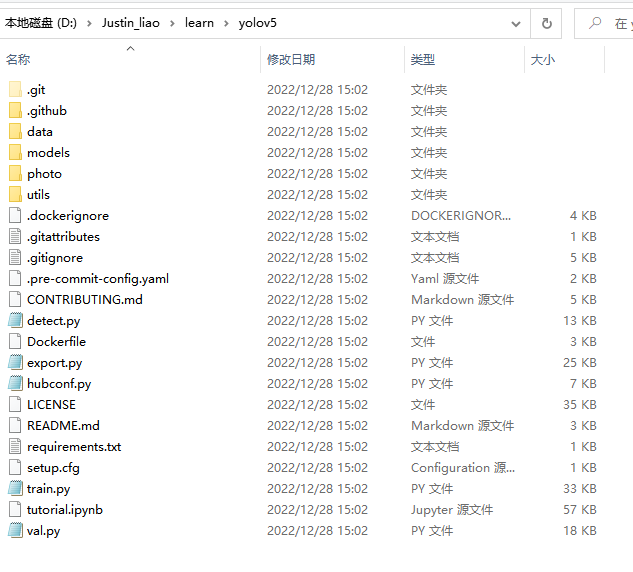

通过git工具,在PC端克隆远程仓库(注:此处可能会因网络原因造成卡顿,请耐心等待):

git clone https://github.com/EASY-EAI/yolov5.git得到下图所示目录:

4. 训练算法模型

切换到yolov5的工作目录,接下来以训练一个口罩检测模型为例进行说明。需要修改data/mask.yaml里面的train.txt和valid.txt的路径。

执行下列脚本训练算法模型:

python train.py --data mask.yaml --cfg yolov5s.yaml --weights "" --batch-size 64开始训练模型,如下图所示:

关于算法精度结果可以查看./runs/train/results.csv获得。

5. 在PC端进行模型预测

训练完毕后,在./runs/train/exp/weights/best.pt生成通过验证集测试的最好结果的模型。同时可以执行模型预测,初步评估模型的效果:

python detect.py --source data/images --weights ./runs/train/exp/weights/best.pt --conf 0.56. pt模型转换为onnx模型

算法部署到EASY-EAI-Nano需要转换为RKNN模型,而转换RKNN之前可以把模型先转换为ONNX模型,同时会生成best.anchors.txt:

python export.py --include onnx --rknpu RV1126 --weights ./runs/train/exp/weights/best.pt生成如下图所示:

7. 转换为rknn模型环境搭建

onnx模型需要转换为rknn模型才能在EASY-EAI-Nano运行,所以需要先搭建rknn-toolkit模型转换工具的环境。当然tensorflow、tensroflow lite、caffe、darknet等也是通过类似的方法进行模型转换,只是本教程onnx为例。

7.1 概述

模型转换环境搭建流程如下所示:

7.2 下载模型转换工具

为了保证模型转换工具顺利运行,请下载网盘里”AI算法开发/RKNN-Toolkit模型转换工具/rknn-toolkit-v1.7.1/docker/rknn-toolkit-1.7.1-docker.tar.gz”。网盘下载链接:https://pan.baidu.com/s/1LUtU_-on7UB3kvloJlAMkA 提取码:teuc

7.3 把工具移到ubuntu18.04

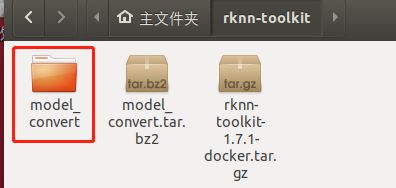

把下载完成的docker镜像移到我司的虚拟机ubuntu18.04的rknn-toolkit目录,如下图所示:

7.4 运行模型转换工具环境

7.4.1 打开终端

在该目录打开终端:

7.4.2 加载docker镜像

执行以下指令加载模型转换工具docker镜像:

docker load --input /home/developer/rknn-toolkit/rknn-toolkit-1.7.1-docker.tar.gz7.4.3 进入镜像bash环境

执行以下指令进入镜像bash环境:

docker run -t -i --privileged -v /dev/bus/usb:/dev/bus/usb rknn-toolkit:1.7.1 /bin/bash现象如下图所示:

7.4.4 测试环境

输入“python”加载python相关库,尝试加载rknn库,如下图环境测试成功:

至此,模型转换工具环境搭建完成。

8. rknn模型转换流程介绍

EASY EAI Nano支持.rknn后缀的模型的评估及运行,对于常见的tensorflow、tensroflow lite、caffe、darknet、onnx和Pytorch模型都可以通过我们提供的 toolkit 工具将其转换至 rknn 模型,而对于其他框架训练出来的模型,也可以先将其转至 onnx 模型再转换为 rknn 模型。模型转换操作流程如下图所示:

8.1 模型转换Demo下载

下载百度网盘链接:https://pan.baidu.com/s/1uAiQ6edeGIDvQ7HAm7p0jg

提取码:6666把model_convert.tar.bz2解压到虚拟机,如下图所示:

8.2 进入模型转换工具docker环境

执行以下指令把工作区域映射进docker镜像,其中/home/developer/rknn-toolkit/model_convert为工作区域,/test为映射到docker镜像,/dev/bus/usb:/dev/bus/usb为映射usb到docker镜像:

docker run -t -i --privileged -v /dev/bus/usb:/dev/bus/usb -v /home/developer/rknn-toolkit/model_convert:/test rknn-toolkit:1.7.1 /bin/bash执行成功如下图所示:

8.3 模型转换操作说明

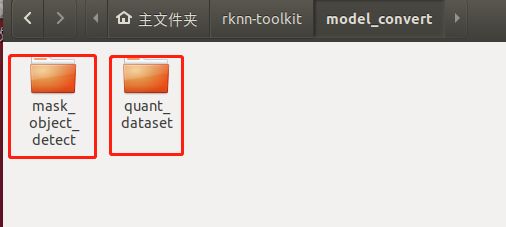

8.3.1 模型转换Demo目录结构

模型转换测试Demo由mask_object_detect和quant_dataset组成。coco_object_detect存放软件脚本,quant_dataset存放量化模型所需的数据。如下图所示:

mask_object_detect文件夹存放以下内容,如下图所示:

8.3.2 生成量化图片列表

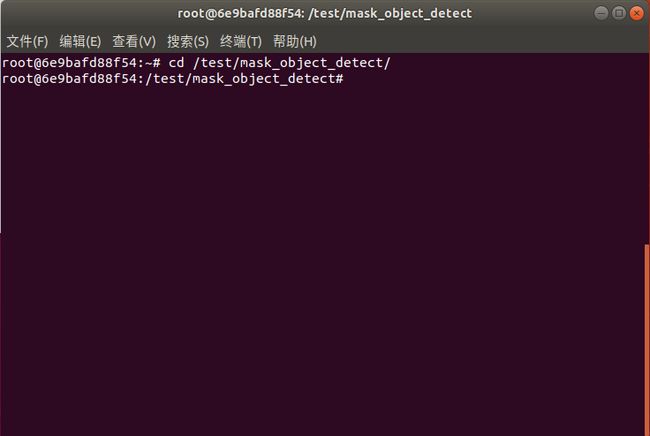

在docker环境切换到模型转换工作目录:

cd /test/mask_object_detect/如下图所示:

执行gen_list.py生成量化图片列表:

python gen_list.py命令行现象如下图所示:

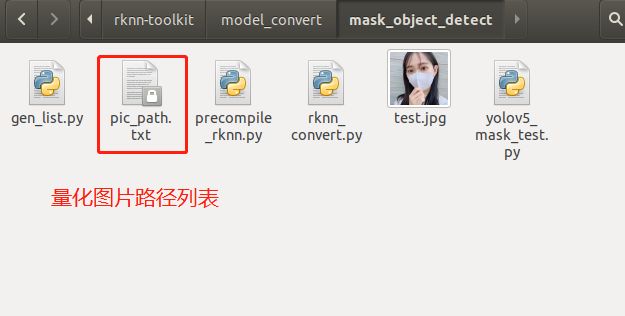

生成“量化图片列表”如下文件夹所示:

8.3.3 onnx模型转换为rknn模型

rknn_convert.py脚本默认进行int8量化操作,脚本代码清单如下所示:

import os

import urllib

import traceback

import time

import sys

import numpy as np

import cv2

from rknn.api import RKNN

ONNX_MODEL = 'best.onnx'

RKNN_MODEL = './yolov5_mask_rv1126.rknn'

DATASET = './pic_path.txt'

QUANTIZE_ON = True

if __name__ == '__main__':

# Create RKNN object

rknn = RKNN(verbose=True)

if not os.path.exists(ONNX_MODEL):

print('model not exist')

exit(-1)

# pre-process config

print('--> Config model')

rknn.config(reorder_channel='0 1 2',

mean_values=[[0, 0, 0]],

std_values=[[255, 255, 255]],

optimization_level=3,

target_platform = 'rv1126',

output_optimize=1,

quantize_input_node=QUANTIZE_ON)

print('done')

# Load ONNX model

print('--> Loading model')

ret = rknn.load_onnx(model=ONNX_MODEL)

if ret != 0:

print('Load yolov5 failed!')

exit(ret)

print('done')

# Build model

print('--> Building model')

ret = rknn.build(do_quantization=QUANTIZE_ON, dataset=DATASET)

if ret != 0:

print('Build yolov5 failed!')

exit(ret)

print('done')

# Export RKNN model

print('--> Export RKNN model')

ret = rknn.export_rknn(RKNN_MODEL)

if ret != 0:

print('Export yolov5rknn failed!')

exit(ret)

print('done')

把onnx模型best.onnx放到mask_object_detect目录,并执行rknn_convert.py脚本进行模型转换:

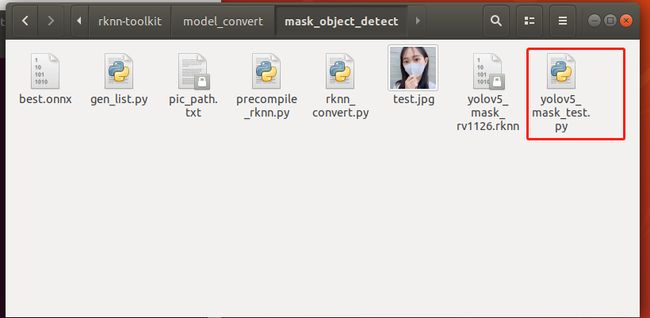

python rknn_convert.py生成模型如下图所示,此模型可以在rknn环境和EASY EAI Nano环境运行:

8.3.4 运行rknn模型

用yolov5_mask_test.py脚本在PC端的环境下可以运行rknn的模型,如下图所示:

yolov5_mask_test.py脚本程序清单如下所示:

import os

import urllib

import traceback

import time

import sys

import numpy as np

import cv2

import random

from rknn.api import RKNN

RKNN_MODEL = 'yolov5_mask_rv1126.rknn'

IMG_PATH = './test.jpg'

DATASET = './dataset.txt'

BOX_THRESH = 0.25

NMS_THRESH = 0.6

IMG_SIZE = 640

CLASSES = ("head", "mask")

def sigmoid(x):

return 1 / (1 + np.exp(-x))

def xywh2xyxy(x):

# Convert [x, y, w, h] to [x1, y1, x2, y2]

y = np.copy(x)

y[:, 0] = x[:, 0] - x[:, 2] / 2 # top left x

y[:, 1] = x[:, 1] - x[:, 3] / 2 # top left y

y[:, 2] = x[:, 0] + x[:, 2] / 2 # bottom right x

y[:, 3] = x[:, 1] + x[:, 3] / 2 # bottom right y

return y

def process(input, mask, anchors):

anchors = [anchors[i] for i in mask]

grid_h, grid_w = map(int, input.shape[0:2])

box_confidence = sigmoid(input[..., 4])

box_confidence = np.expand_dims(box_confidence, axis=-1)

box_class_probs = sigmoid(input[..., 5:])

box_xy = sigmoid(input[..., :2])*2 - 0.5

col = np.tile(np.arange(0, grid_w), grid_w).reshape(-1, grid_w)

row = np.tile(np.arange(0, grid_h).reshape(-1, 1), grid_h)

col = col.reshape(grid_h, grid_w, 1, 1).repeat(3, axis=-2)

row = row.reshape(grid_h, grid_w, 1, 1).repeat(3, axis=-2)

grid = np.concatenate((col, row), axis=-1)

box_xy += grid

box_xy *= int(IMG_SIZE/grid_h)

box_wh = pow(sigmoid(input[..., 2:4])*2, 2)

box_wh = box_wh * anchors

box = np.concatenate((box_xy, box_wh), axis=-1)

return box, box_confidence, box_class_probs

def filter_boxes(boxes, box_confidences, box_class_probs):

"""Filter boxes with box threshold. It's a bit different with origin yolov5 post process!

# Arguments

boxes: ndarray, boxes of objects.

box_confidences: ndarray, confidences of objects.

box_class_probs: ndarray, class_probs of objects.

# Returns

boxes: ndarray, filtered boxes.

classes: ndarray, classes for boxes.

scores: ndarray, scores for boxes.

"""

box_scores = box_confidences * box_class_probs

box_classes = np.argmax(box_class_probs, axis=-1)

box_class_scores = np.max(box_scores, axis=-1)

pos = np.where(box_confidences[...,0] >= BOX_THRESH)

boxes = boxes[pos]

classes = box_classes[pos]

scores = box_class_scores[pos]

return boxes, classes, scores

def nms_boxes(boxes, scores):

"""Suppress non-maximal boxes.

# Arguments

boxes: ndarray, boxes of objects.

scores: ndarray, scores of objects.

# Returns

keep: ndarray, index of effective boxes.

"""

x = boxes[:, 0]

y = boxes[:, 1]

w = boxes[:, 2] - boxes[:, 0]

h = boxes[:, 3] - boxes[:, 1]

areas = w * h

order = scores.argsort()[::-1]

keep = []

while order.size > 0:

i = order[0]

keep.append(i)

xx1 = np.maximum(x[i], x[order[1:]])

yy1 = np.maximum(y[i], y[order[1:]])

xx2 = np.minimum(x[i] + w[i], x[order[1:]] + w[order[1:]])

yy2 = np.minimum(y[i] + h[i], y[order[1:]] + h[order[1:]])

w1 = np.maximum(0.0, xx2 - xx1 + 0.00001)

h1 = np.maximum(0.0, yy2 - yy1 + 0.00001)

inter = w1 * h1

ovr = inter / (areas[i] + areas[order[1:]] - inter)

inds = np.where(ovr <= NMS_THRESH)[0]

order = order[inds + 1]

keep = np.array(keep)

return keep

def yolov5_post_process(input_data):

masks = [[0, 1, 2], [3, 4, 5], [6, 7, 8]]

anchors = [[10, 13], [16, 30], [33, 23], [30, 61], [62, 45],

[59, 119], [116, 90], [156, 198], [373, 326]]

boxes, classes, scores = [], [], []

for input,mask in zip(input_data, masks):

b, c, s = process(input, mask, anchors)

b, c, s = filter_boxes(b, c, s)

boxes.append(b)

classes.append(c)

scores.append(s)

boxes = np.concatenate(boxes)

boxes = xywh2xyxy(boxes)

classes = np.concatenate(classes)

scores = np.concatenate(scores)

nboxes, nclasses, nscores = [], [], []

for c in set(classes):

inds = np.where(classes == c)

b = boxes[inds]

c = classes[inds]

s = scores[inds]

keep = nms_boxes(b, s)

nboxes.append(b[keep])

nclasses.append(c[keep])

nscores.append(s[keep])

if not nclasses and not nscores:

return None, None, None

boxes = np.concatenate(nboxes)

classes = np.concatenate(nclasses)

scores = np.concatenate(nscores)

return boxes, classes, scores

def scale_coords(x1, y1, x2, y2, dst_width, dst_height):

dst_top, dst_left, dst_right, dst_bottom = 0, 0, 0, 0

gain = 0

if dst_width > dst_height:

image_max_len = dst_width

gain = IMG_SIZE / image_max_len

resized_height = dst_height * gain

height_pading = (IMG_SIZE - resized_height)/2

print("height_pading:", height_pading)

y1 = (y1 - height_pading)

y2 = (y2 - height_pading)

print("gain:", gain)

dst_x1 = int(x1 / gain)

dst_y1 = int(y1 / gain)

dst_x2 = int(x2 / gain)

dst_y2 = int(y2 / gain)

return dst_x1, dst_y1, dst_x2, dst_y2

def plot_one_box(x, img, color=None, label=None, line_thickness=None):

tl = line_thickness or round(0.002 * (img.shape[0] + img.shape[1]) / 2) + 1 # line/font thickness

color = color or [random.randint(0, 255) for _ in range(3)]

c1, c2 = (int(x[0]), int(x[1])), (int(x[2]), int(x[3]))

cv2.rectangle(img, c1, c2, color, thickness=tl, lineType=cv2.LINE_AA)

if label:

tf = max(tl - 1, 1) # font thickness

t_size = cv2.getTextSize(label, 0, fontScale=tl / 3, thickness=tf)[0]

c2 = c1[0] + t_size[0], c1[1] - t_size[1] - 3

cv2.rectangle(img, c1, c2, color, -1, cv2.LINE_AA) # filled

cv2.putText(img, label, (c1[0], c1[1] - 2), 0, tl / 3, [225, 255, 255], thickness=tf, lineType=cv2.LINE_AA)

def draw(image, boxes, scores, classes):

"""Draw the boxes on the image.

# Argument:

image: original image.

boxes: ndarray, boxes of objects.

classes: ndarray, classes of objects.

scores: ndarray, scores of objects.

all_classes: all classes name.

"""

for box, score, cl in zip(boxes, scores, classes):

x1, y1, x2, y2 = box

print('class: {}, score: {}'.format(CLASSES[cl], score))

print('box coordinate x1,y1,x2,y2: [{}, {}, {}, {}]'.format(x1, y1, x2, y2))

x1 = int(x1)

y1 = int(y1)

x2 = int(x2)

y2 = int(y2)

dst_x1, dst_y1, dst_x2, dst_y2 = scale_coords(x1, y1, x2, y2, image.shape[1], image.shape[0])

#print("img.cols:", image.cols)

plot_one_box((dst_x1, dst_y1, dst_x2, dst_y2), image, label='{0} {1:.2f}'.format(CLASSES[cl], score))

'''

cv2.rectangle(image, (dst_x1, dst_y1), (dst_x2, dst_y2), (255, 0, 0), 2)

cv2.putText(image, '{0} {1:.2f}'.format(CLASSES[cl], score),

(dst_x1, dst_y1 - 6),

cv2.FONT_HERSHEY_SIMPLEX,

0.6, (0, 0, 255), 2)

'''

def letterbox(im, new_shape=(640, 640), color=(0, 0, 0)):

# Resize and pad image while meeting stride-multiple constraints

shape = im.shape[:2] # current shape [height, width]

if isinstance(new_shape, int):

new_shape = (new_shape, new_shape)

# Scale ratio (new / old)

r = min(new_shape[0] / shape[0], new_shape[1] / shape[1])

# Compute padding

ratio = r, r # width, height ratios

new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))

dw, dh = new_shape[1] - new_unpad[0], new_shape[0] - new_unpad[1] # wh padding

dw /= 2 # divide padding into 2 sides

dh /= 2

if shape[::-1] != new_unpad: # resize

im = cv2.resize(im, new_unpad, interpolation=cv2.INTER_LINEAR)

top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))

left, right = int(round(dw - 0.1)), int(round(dw + 0.1))

im = cv2.copyMakeBorder(im, top, bottom, left, right, cv2.BORDER_CONSTANT, value=color) # add border

return im, ratio, (dw, dh)

if __name__ == '__main__':

# Create RKNN object

rknn = RKNN(verbose=True)

print('--> Loading model')

ret = rknn.load_rknn(RKNN_MODEL)

if ret != 0:

print('load rknn model failed')

exit(ret)

print('done')

# init runtime environment

print('--> Init runtime environment')

ret = rknn.init_runtime()

# ret = rknn.init_runtime('rv1126', device_id='1126')

if ret != 0:

print('Init runtime environment failed')

exit(ret)

print('done')

# Set inputs

img = cv2.imread(IMG_PATH)

letter_img, ratio, (dw, dh) = letterbox(img, new_shape=(IMG_SIZE, IMG_SIZE))

letter_img = cv2.cvtColor(letter_img, cv2.COLOR_BGR2RGB)

# Inference

print('--> Running model')

outputs = rknn.inference(inputs=[letter_img])

print('--> inference done')

# post process

input0_data = outputs[0]

input1_data = outputs[1]

input2_data = outputs[2]

input0_data = input0_data.reshape([3,-1]+list(input0_data.shape[-2:]))

input1_data = input1_data.reshape([3,-1]+list(input1_data.shape[-2:]))

input2_data = input2_data.reshape([3,-1]+list(input2_data.shape[-2:]))

input_data = list()

input_data.append(np.transpose(input0_data, (2, 3, 0, 1)))

input_data.append(np.transpose(input1_data, (2, 3, 0, 1)))

input_data.append(np.transpose(input2_data, (2, 3, 0, 1)))

print('--> transpose done')

boxes, classes, scores = yolov5_post_process(input_data)

print('--> get result done')

#img_1 = cv2.cvtColor(img, cv2.COLOR_RGB2BGR)

if boxes is not None:

draw(img, boxes, scores, classes)

cv2.imwrite('./result.jpg', img)

#cv2.imshow("post process result", img_1)

#cv2.waitKeyEx(0)

rknn.release()

执行后得到result.jpg如下图所示:

8.3.5 模型预编译

由于rknn模型用NPU API在EASY EAI Nano加载的时候启动速度会好慢,在评估完模型精度没问题的情况下,建议进行模型预编译。预编译的时候需要通过EASY EAI Nano主板的环境,所以请务必接上adb口与ubuntu保证稳定连接。板子端接线如下图所示,拨码开关需要是adb:

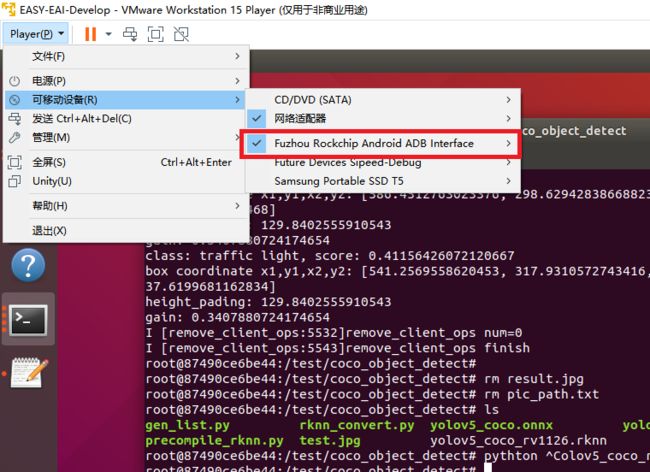

虚拟机要保证接上adb设备:

由于在虚拟机里ubuntu环境与docker环境对adb设备资源是竞争关系,所以需要关掉ubuntu环境下的adb服务,且在docker里面通过apt-get安装adb软件包。以下指令在ubuntu环境与docker环境里各自执行:

在docker环境里执行adb devices,现象如下图所示则设备连接成功:

运行precompile_rknn.py脚本把模型执行预编译:

python precompile_rknn.py执行效果如下图所示,生成预编译模型yolov5_mask_rv1126_pre.rknn:

至此预编译部署完成,模型转换步骤已全部完成。生成如下预编译后的int8量化模型:

9. 模型部署示例

9.1 模型部署示例介绍

本小节展示yolov5模型的在EASY EAI Nano的部署过程,该模型仅经过简单训练供示例使用,不保证模型精度。

9.2 准备工作

9.2.1 硬件准备

EASY EAI Nano开发板,microUSB数据线,带linux操作系统的电脑。需保证EASY EAI Nano与linux系统保持adb连接。

9.2.2 交叉编译环境准备

本示例需要交叉编译环境的支持,可以参考在线文档“入门指南/开发环境准备/安装交叉编译工具链”。链接为:https://www.easy-eai.com/document_details/3/135。

9.2.3 文件下载

下载yolov5 C Demo示例文件。百度网盘链接:https://pan.baidu.com/s/1XmxU9Putp_qSYTSQPqxMDQ提取码:6666下载解压后如下图所示:

9.3 在EASY EAI Nano运行yolov5 demo

9.3.1 解压yolov5 demo

下载程序包移至ubuntu环境后,执行以下指令解压:

tar -xvf yolov5_detect_C_demo.tar.bz29.3.2 编译yolov5 demo

执行以下脚本编译demo:

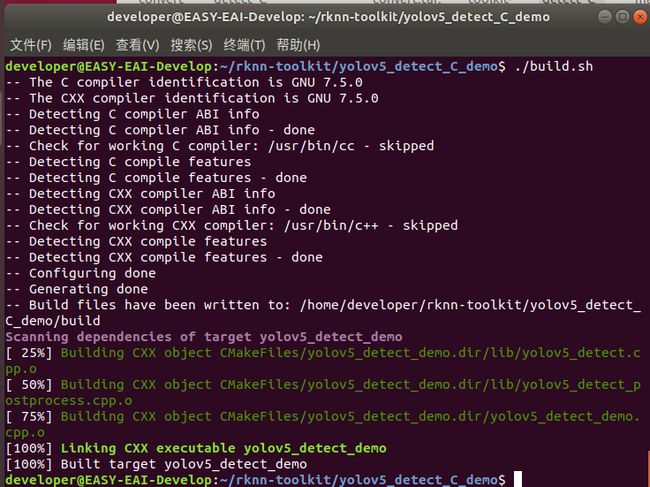

./build.sh编译成功后如下图所示:

9.3.3 执行yolov5 demo

执行以下指令把可执行程序推送到开发板端:

adb push yolov5_detect_demo_release/ /userdata登录到开发板执行程序:

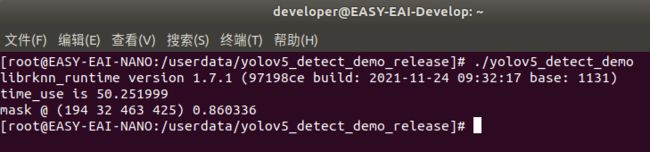

adb shell执行结果如下图所示,算法执行时间为50ms:

取回测试图片:

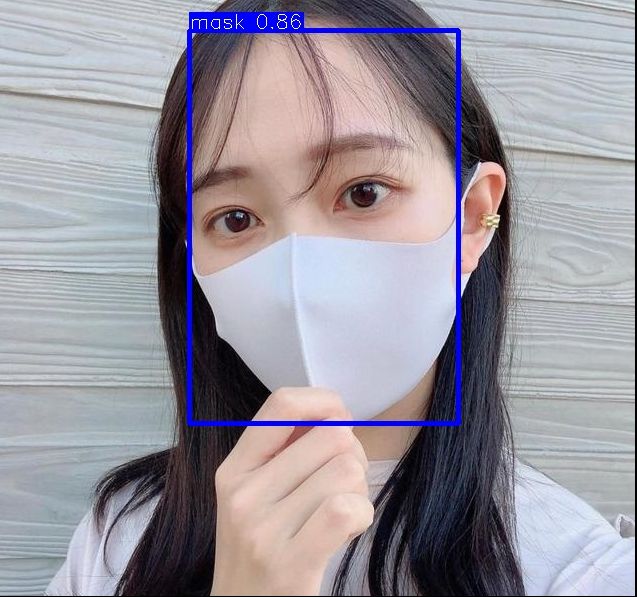

adb pull /userdata/yolov5_detect_demo_release/result.jpg .测试结果如下图所示:

10. 基于摄像头的AI Demo

10.1 摄像头Demo介绍

本小节展示yolov5模型的在EASY EAI Nano执行摄像头Demo的过程,该模型仅经过简单训练供示例使用,不保证模型精度。

10.2 准备工作

10.2.1 硬件准备

EASY-EAI-Nano人工智能开发套件(包括:EASY EAI Nano开发板,双目摄像头,5寸高清屏幕,microUSB数据线),带linux操作系统的电脑,。需保证EASY EAI Nano与linux系统保持adb连接。

10.2.2 交叉编译环境准备

本示例需要交叉编译环境的支持,可以参考在线文档“入门指南/开发环境准备/安装交叉编译工具链”。链接为:https://www.easy-eai.com/document_details/3/135。

10.2.3 文件下载

摄像头识别Demo的程序源码可以通过百度网盘下载:

https://pan.baidu.com/s/18cAp4yT_LhDZ5XAHG-L1lw(提取码:6666 )。

下载解压后如下图所示:

10.3 在EASY EAI Nano运行yolov5 demo

10.3.1 解压yolov5 camera demo

下载程序包移至ubuntu环境后,执行以下指令解压:

tar -xvf yolov5_detect_camera_demo.tar.tar.bz210.3.2 编译yolov5 camera demo

执行以下脚本编译demo:

./build.sh编译成功后如下图所示:

10.3.3 执行yolov5 camera demo

执行以下指令把可执行程序推送到开发板端:

adb push yolov5_detect_camera_demo_release/ /userdata登录到开发板执行程序:

adb shell测试结果如下图所示:

11. 资料下载

| 资料名称 |

链接 |

| 训练代码github |

https://github.com/EASY-EAI/yolov5 |

| 算法教程完整源码包 |

https://pan.baidu.com/s/1-78z8joPYOaGEVFg0I_WZA 提取码:6666 |

| 硬件外设库源码github |

https://github.com/EASY-EAI/EASY-EAI-Toolkit-C-SDK |

12. 硬件使用

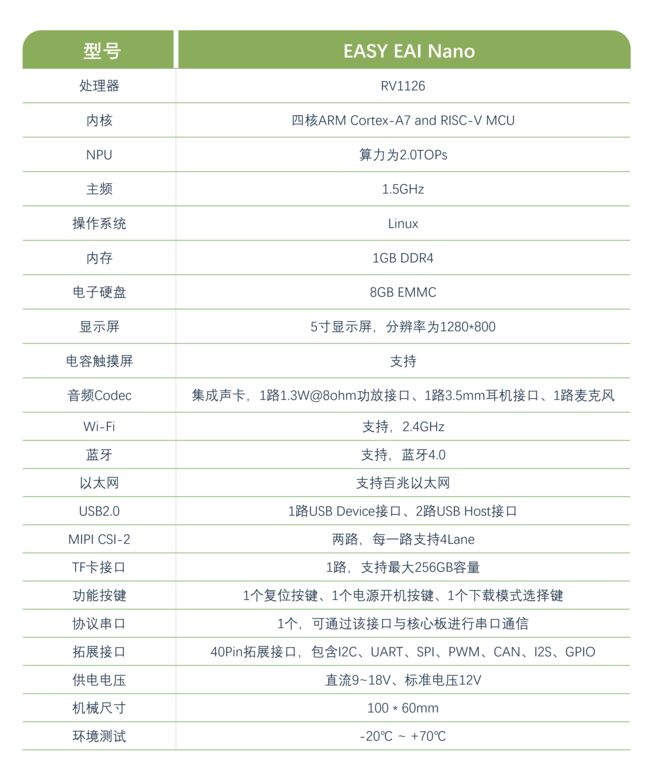

本教程使用的是EASY EAI nano(RV1126)开发板EASY EAI Nano是基于瑞芯微RV1126 处理器设计,具有四核[email protected]与NPU@2Tops AI边缘计算能力。实现AI运算的功耗不及所需GPU的10%。配套AI算法工具完善,支持Tensorflow、Pytorch、Caffe、MxNet、DarkNet、ONNX等主流AI框架直接转换和部署。有丰富的软硬件开发资料,而且外设资源丰富,接口齐全,还有丰富的功能配件可供选择。集成有以太网、Wi-Fi 等通信外设。摄像头、显示屏(带电容触摸)、喇叭、麦克风等交互外设。2 路 USB Host 接口、1 路 USB Device 调试接口。集成协议串口、TF 卡、IO 拓展接口(兼容树莓派/Jetson nano拓展接口)等通用外设。内置人脸识别、安全帽监测、人体骨骼点识别、火焰检测、车辆检测等各类 AI 算法,并提供完整的 Linux 开发包供客户二次开发。

EASY-EAI-Nano产品在线文档:

https://www.easy-eai.com/document_details/3/143

本篇文章到这里就结束啦,关于我们更多请前往官网了解

https://www.easy-eai.com

后续更多教程关注我们,不定时更新各种新品及活动