【深一点学习】我用CPU也能跟着沐神实现单发多框检测(SSD),从底层了解目标检测任务的实现过程,需要什么样的方法调用。《动手学深度学习》Yes,沐神,Yes

-

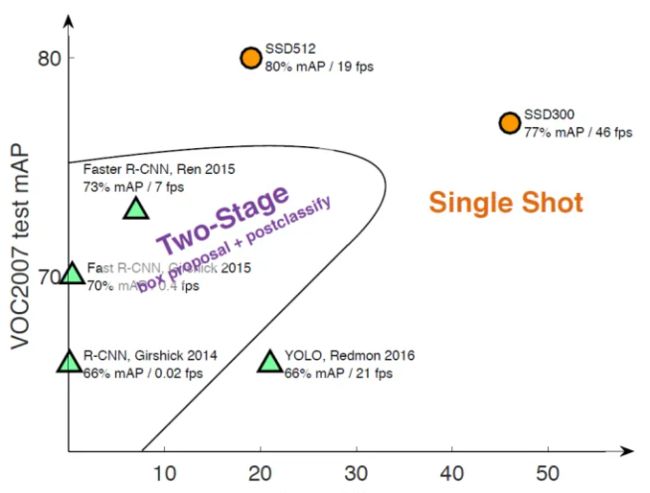

目标检测近年来已经取得了很重要的进展,主流的算法主要分为两个类型[1611.06612] RefineNet: Multi-Path Refinement Networks for High-Resolution Semantic Segmentation (arxiv.org):(1)two-stage方法,如R-CNN系算法,其主要思路是先通过启发式方法(selective search)或者CNN网络(RPN)产生一系列稀疏的候选框,然后对这些候选框进行分类与回归,two-stage方法的优势是准确度高;(2)one-stage方法,如Yolo和SSD,其主要思路是均匀地在图片的不同位置进行密集抽样,抽样时可以采用不同尺度和长宽比,然后利用CNN提取特征后直接进行分类与回归,整个过程只需要一步,所以其优势是速度快,但是均匀的密集采样的一个重要缺点是训练比较困难,这主要是因为正样本与负样本(背景)极其不均衡[1708.02002] Focal Loss for Dense Object Detection (arxiv.org),导致模型准确度稍低。

-

分别介绍了边界框、锚框、多尺度目标检测和用于目标检测的数据集。 现在我们已经准备好使用这样的背景知识来设计一个目标检测模型:单发多框检测(SSD)[1512.02325] SSD: Single Shot MultiBox Detector (arxiv.org)。 该模型简单、快速且被广泛使用。尽管这只是其中一种目标检测模型,但本节中的一些设计原则和实现细节也适用于其他模型。

-

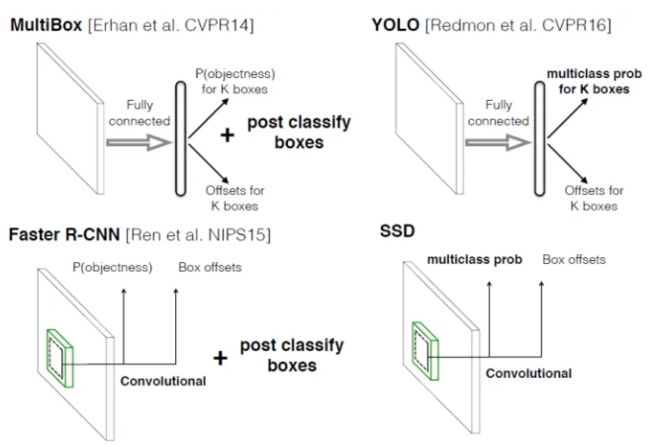

Single shot指明了SSD算法属于one-stage方法,MultiBox指明了SSD是多框预测。从上图也可以看到,SSD算法在准确度和速度(除了SSD512)上都比Yolo要好很多。下图给出了不同算法的基本框架图,对于Faster R-CNN,其先通过CNN得到候选框,然后再进行分类与回归,而Yolo与SSD可以一步到位完成检测。

-

采用多尺度特征图用于检测

-

采用卷积进行检测

- 与Yolo最后采用全连接层不同,SSD直接采用卷积对不同的特征图来进行提取检测结果。对于形状为 m ∗ n ∗ p m*n*p m∗n∗p 的特征图,只需要采用 3 ∗ 3 ∗ p 3*3*p 3∗3∗p 这样比较小的卷积核得到检测值。

-

设置先验框

-

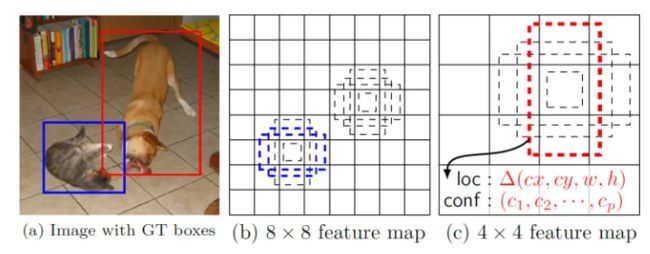

相比Yolo,SSD采用CNN来直接进行检测,而不是像Yolo那样在全连接层之后做检测。其实采用卷积直接做检测只是SSD相比Yolo的其中一个不同点,另外还有两个重要的改变,一是SSD提取了不同尺度的特征图来做检测,大尺度特征图(较靠前的特征图)可以用来检测小物体,而小尺度特征图(较靠后的特征图)用来检测大物体;二是SSD采用了不同尺度和长宽比的先验框(Prior boxes, Default boxes,在Faster R-CNN中叫做锚,Anchors)。Yolo算法缺点是难以检测小目标,而且定位不准,但是这几点重要改进使得SSD在一定程度上克服这些缺点。目标检测|SSD原理与实现 - 知乎 (zhihu.com)

-

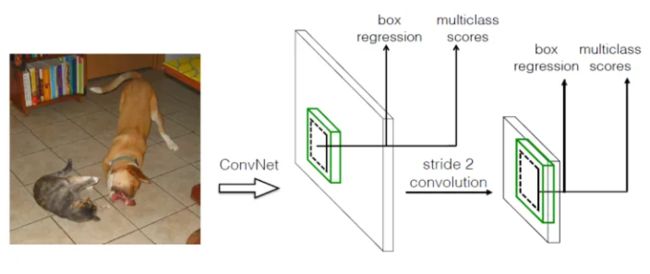

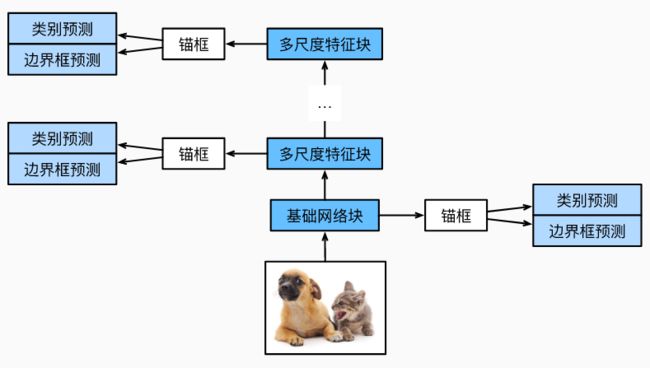

下图描述了单发多框检测模型的设计。 此模型主要由基础网络组成,其后是几个多尺度特征块。 基本网络用于从输入图像中提取特征,因此它可以使用深度卷积神经网络。 单发多框检测论文中选用了在分类层之前截断的VGG,现在也常用ResNet替代。 我们可以设计基础网络,使它输出的高和宽较大。 这样一来,基于该特征图生成的锚框数量较多,可以用来检测尺寸较小的目标。 接下来的每个多尺度特征块将上一层提供的特征图的高和宽缩小(如减半),并使特征图中每个单元在输入图像上的感受野变得更广阔。13.7. 单发多框检测(SSD) — 动手学深度学习 2.0.0 documentation (d2l.ai)

-

通过深度神经网络分层表示图像的多尺度目标检测的设计。 由于接近上图顶部的多尺度特征图较小,但具有较大的感受野,它们适合检测较少但较大的物体。 简而言之,通过多尺度特征块,单发多框检测生成不同大小的锚框,并通过预测边界框的类别和偏移量来检测大小不同的目标,因此这是一个多尺度目标检测模型。

类别预测层

-

设目标类别的数量为 q q q 。这样一来,锚框有个类别 q + 1 q+1 q+1,其中0类是背景。 在某个尺度下,设特征图的高和宽分别为 h h h和 w w w。 如果以其中每个单元为中心生成 a a a个锚框,那么我们需要对 h ∗ w ∗ a h*w*a h∗w∗a个锚框进行分类。 如果使用全连接层作为输出,很容易导致模型参数过多。使用卷积层的通道来输出类别预测的方法, 单发多框检测采用同样的方法来降低模型复杂度。

-

具体来说,类别预测层使用一个保持输入高和宽的卷积层。 这样一来,输出和输入在特征图宽和高上的空间坐标一一对应。 考虑输出和输入同一空间坐标(x、y):输出特征图上(x、y)坐标的通道里包含了以输入特征图(x、y)坐标为中心生成的所有锚框的类别预测。 因此输出通道数为 a ∗ ( q + 1 ) a*(q+1) a∗(q+1),其中索引为 i ∗ ( q + 1 ) + j ( 0 ≤ j ≤ q ) i*(q+1)+j(0\leq j\leq q) i∗(q+1)+j(0≤j≤q) 的通道代表了索引为的锚框有关类别索引为 j 的预测。

-

在下面,我们定义了这样一个类别预测层,通过参数

num_anchors和num_classes分别指定了 a a a 和 q q q 。 该图层使用填充为1的的 3 ∗ 3 3*3 3∗3 卷积层。此卷积层的输入和输出的宽度和高度保持不变。

边界框预测层

- 边界框预测层的设计与类别预测层的设计类似。 唯一不同的是,这里需要为每个锚框预测4个偏移量,而不是 q + 1 q+1 q+1 个类别。

连结多尺度的预测

-

正如我们所提到的,单发多框检测使用多尺度特征图来生成锚框并预测其类别和偏移量。 在不同的尺度下,特征图的形状或以同一单元为中心的锚框的数量可能会有所不同。 因此,不同尺度下预测输出的形状可能会有所不同。

-

在以下示例中,我们为同一个小批量构建两个不同比例(

Y1和Y2)的特征图,其中Y2的高度和宽度是Y1的一半。 以类别预测为例,假设Y1和Y2的每个单元分别生成了5个和3个锚框。 进一步假设目标类别的数量为 10,对于特征图Y1和Y2,类别预测输出中的通道数分别为 5 ∗ ( 10 + 1 ) = 55 5*(10+1)=55 5∗(10+1)=55 和 3 ∗ ( 10 + 1 ) = 33 3*(10+1)=33 3∗(10+1)=33,其中任一输出的形状是(批量大小,通道数,高度,宽度)。 -

除了批量大小这一维度外,其他三个维度都具有不同的尺寸。 为了将这两个预测输出链接起来以提高计算效率,我们将把这些张量转换为更一致的格式。通道维包含中心相同的锚框的预测结果。我们首先将通道维移到最后一维。 因为不同尺度下批量大小仍保持不变,我们可以将预测结果转成二维的(批量大小,高*宽*通道数)的格式,以方便之后在维度 1 上的连结。这样一来,尽管

Y1和Y2在通道数、高度和宽度方面具有不同的大小,我们仍然可以在同一个小批量的两个不同尺度上连接这两个预测输出。 -

%matplotlib inline import torch import torchvision from torch import nn from torch.nn import functional as F # 类别预测层 def cls_predictor(num_inputs, num_anchors, num_classes): return nn.Conv2d(num_inputs, num_anchors * (num_classes + 1), kernel_size=3, padding=1) # 边界框预测层 def bbox_predictor(num_inputs, num_anchors): return nn.Conv2d(num_inputs, num_anchors * 4, kernel_size=3, padding=1) def forward(x, block): # 需要手动调用,在pytorch中是自动调用(类内方法) return block(x) Y1 = forward(torch.zeros((2, 8, 20, 20)), cls_predictor(8, 5, 10)) Y2 = forward(torch.zeros((2, 16, 10, 10)), cls_predictor(16, 3, 10)) print(Y1.shape, Y2.shape) # 连结多尺度 def flatten_pred(pred): return torch.flatten(pred.permute(0, 2, 3, 1), start_dim=1) def concat_preds(preds): return torch.cat([flatten_pred(p) for p in preds], dim=1) print(concat_preds([Y1, Y2]).shape) -

torch.Size([2, 55, 20, 20]) torch.Size([2, 33, 10, 10]) torch.Size([2, 25300]) # 55*20*20=22000 33*10*10=3300 22000+3300=25300

高和宽减半,下采样

-

为了在多个尺度下检测目标,我们在下面定义了高和宽减半块

down_sample_blk,该模块将输入特征图的高度和宽度减半。 事实上,该块应用了在subsec_vgg-blocks中的VGG模块设计。 更具体地说,每个高和宽减半块由两个填充为 1 的 3 ∗ 3 3*3 3∗3卷积层、以及步幅为 2 的 2 ∗ 2 2*2 2∗2最大汇聚层组成。 我们知道,填充为 1 的 3 ∗ 3 3*3 3∗3 卷积层不改变特征图的形状。但是,其后的$2*2 $最大汇聚层将输入特征图的高度和宽度减少了一半。 对于此高和宽减半块的输入和输出特征图,因为 1 ∗ 2 + ( 3 − 1 ) + ( 3 − 1 ) = 6 1*2+(3-1)+(3-1)=6 1∗2+(3−1)+(3−1)=6,所以输出中的每个单元在输入上都有一个 6 ∗ 6 6*6 6∗6的感受野。因此,高和宽减半块会扩大每个单元在其输出特征图中的感受野。我们构建的高和宽减半块会更改输入通道的数量,并将输入特征图的高度和宽度减半。 -

def down_sample_blk(in_channels, out_channels): blk = [] for _ in range(2): blk.append(nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1)) blk.append(nn.BatchNorm2d(out_channels)) blk.append(nn.ReLU()) in_channels = out_channels blk.append(nn.MaxPool2d(2)) return nn.Sequential(*blk) forward(torch.zeros((2, 3, 20, 20)), down_sample_blk(3, 10)).shape #下采样测试 -

torch.Size([2, 10, 10, 10])

基本网络块

-

基本网络块用于从输入图像中抽取特征。 为了计算简洁,我们构造了一个小的基础网络,该网络串联3个高和宽减半块,并逐步将通道数翻倍。 给定输入图像的形状为 256 ∗ 256 256*256 256∗256 ,此基本网络块输出的特征图形状为 32 ∗ 32 , 32 = 256 2 3 32*32,32=\frac{256}{2^3} 32∗32,32=23256。

-

def base_net(): blk = [] num_filters = [3, 16, 32, 64] for i in range(len(num_filters) - 1): blk.append(down_sample_blk(num_filters[i], num_filters[i+1])) return nn.Sequential(*blk) forward(torch.zeros((2, 3, 256, 256)), base_net()).shape -

torch.Size([2, 64, 32, 32])

完整的模型

-

完整的单发多框检测模型由五个模块组成。每个块生成的特征图既用于生成锚框,又用于预测这些锚框的类别和偏移量。在这五个模块中,第一个是基本网络块,第二个到第四个是高和宽减半块,最后一个模块使用全局最大池将高度和宽度都降到1。从技术上讲,第二到第五个区块都是上文图中的多尺度特征块。现在我们为每个块定义前向传播。与图像分类任务不同,此处的输出包括:CNN特征图

Y;在当前尺度下根据Y生成的锚框;预测的这些锚框的类别和偏移量(基于Y)。 -

回想一下,在上文图中,一个较接近顶部的多尺度特征块是用于检测较大目标的,因此需要生成更大的锚框。 在上面的前向传播中,在每个多尺度特征块上,我们通过调用的

multibox_prior函数的sizes参数传递两个比例值的列表。 在下面,0.2和1.05之间的区间被均匀分成五个部分,以确定五个模块的在不同尺度下的较小值:0.2、0.37、0.54、0.71和0.88。 之后,他们较大的值由 0.2 ∗ 0.37 = 0.272 \sqrt{0.2*0.37}=0.272 0.2∗0.37=0.272、 0.37 ∗ 0.54 = 0.447 \sqrt{0.37*0.54}=0.447 0.37∗0.54=0.447等给出。现在,我们就可以按如下方式定义完整的模型TinySSD了。 -

我们创建一个模型实例,然后使用它对一个 256 ∗ 256 256*256 256∗256像素的小批量图像

X执行前向传播。第一个模块输出特征图的形状为 32 ∗ 32 32*32 32∗32。 回想一下,第二到第四个模块为高和宽减半块,第五个模块为全局汇聚层。 由于以特征图的每个单元为中心有 4 个锚框生成,因此在所有五个尺度下,每个图像总共生成 ( 3 2 2 + 1 6 2 + 8 2 + 4 2 + 1 ) ∗ 4 = 5444 (32^2+16^2+8^2+4^2+1)*4=5444 (322+162+82+42+1)∗4=5444个锚框。 -

def multibox_prior(data, sizes, ratios): """Generate anchor boxes with different shapes centered on each pixel. Defined in :numref:`sec_anchor`""" in_height, in_width = data.shape[-2:] device, num_sizes, num_ratios = data.device, len(sizes), len(ratios) boxes_per_pixel = (num_sizes + num_ratios - 1) size_tensor = torch.tensor(sizes, device=device) ratio_tensor = torch.tensor(ratios, device=device) # Offsets are required to move the anchor to the center of a pixel. Since # a pixel has height=1 and width=1, we choose to offset our centers by 0.5 offset_h, offset_w = 0.5, 0.5 steps_h = 1.0 / in_height # Scaled steps in y axis steps_w = 1.0 / in_width # Scaled steps in x axis # Generate all center points for the anchor boxes center_h = (torch.arange(in_height, device=device) + offset_h) * steps_h center_w = (torch.arange(in_width, device=device) + offset_w) * steps_w shift_y, shift_x = torch.meshgrid(center_h, center_w) shift_y, shift_x = shift_y.reshape(-1), shift_x.reshape(-1) # Generate `boxes_per_pixel` number of heights and widths that are later # used to create anchor box corner coordinates (xmin, xmax, ymin, ymax) w = torch.cat((size_tensor * torch.sqrt(ratio_tensor[0]), sizes[0] * torch.sqrt(ratio_tensor[1:])))\ * in_height / in_width # Handle rectangular inputs h = torch.cat((size_tensor / torch.sqrt(ratio_tensor[0]), sizes[0] / torch.sqrt(ratio_tensor[1:]))) # Divide by 2 to get half height and half width anchor_manipulations = torch.stack((-w, -h, w, h)).T.repeat( in_height * in_width, 1) / 2 # Each center point will have `boxes_per_pixel` number of anchor boxes, so # generate a grid of all anchor box centers with `boxes_per_pixel` repeats out_grid = torch.stack([shift_x, shift_y, shift_x, shift_y],dim=1).repeat_interleave(boxes_per_pixel, dim=0) output = out_grid + anchor_manipulations return output.unsqueeze(0) def get_blk(i): if i == 0: blk = base_net() elif i == 1: blk = down_sample_blk(64, 128) elif i == 4: blk = nn.AdaptiveMaxPool2d((1,1)) else: blk = down_sample_blk(128, 128) return blk def blk_forward(X, blk, size, ratio, cls_predictor, bbox_predictor): Y = blk(X) anchors = multibox_prior(Y, sizes=size, ratios=ratio) cls_preds = cls_predictor(Y) bbox_preds = bbox_predictor(Y) return (Y, anchors, cls_preds, bbox_preds) sizes = [[0.2, 0.272], [0.37, 0.447], [0.54, 0.619], [0.71, 0.79],[0.88, 0.961]] ratios = [[1, 2, 0.5]] * 5 num_anchors = len(sizes[0]) + len(ratios[0]) - 1 class TinySSD(nn.Module): def __init__(self, num_classes, **kwargs): super(TinySSD, self).__init__(**kwargs) self.num_classes = num_classes idx_to_in_channels = [64, 128, 128, 128, 128] for i in range(5): # 即赋值语句self.blk_i=get_blk(i) setattr(self, f'blk_{i}', get_blk(i)) setattr(self, f'cls_{i}', cls_predictor(idx_to_in_channels[i],num_anchors, num_classes)) setattr(self, f'bbox_{i}', bbox_predictor(idx_to_in_channels[i],num_anchors)) def forward(self, X): anchors, cls_preds, bbox_preds = [None] * 5, [None] * 5, [None] * 5 for i in range(5): # getattr(self,'blk_%d'%i)即访问self.blk_i X, anchors[i], cls_preds[i], bbox_preds[i] = blk_forward( X, getattr(self, f'blk_{i}'), sizes[i], ratios[i], getattr(self, f'cls_{i}'), getattr(self, f'bbox_{i}')) anchors = torch.cat(anchors, dim=1) cls_preds = concat_preds(cls_preds) cls_preds = cls_preds.reshape( cls_preds.shape[0], -1, self.num_classes + 1) bbox_preds = concat_preds(bbox_preds) return anchors, cls_preds, bbox_preds net = TinySSD(num_classes=1) X = torch.zeros((32, 3, 256, 256)) anchors, cls_preds, bbox_preds = net(X) print('output anchors:', anchors.shape) print('output class preds:', cls_preds.shape) print('output bbox preds:', bbox_preds.shape) -

output anchors: torch.Size([1, 5444, 4]) output class preds: torch.Size([32, 5444, 2]) output bbox preds: torch.Size([32, 21776]) # 5444*4=21776

读取数据集和初始化

- 我们读取 (1条消息) 在多个尺度下生成不同数量和不同大小的锚框,从而在多个尺度下检测不同大小的目标。附加调通《动手学深度学习》课堂数据加载代码,为目标检测实战作准备_羞儿的博客-CSDN博客 中描述的香蕉检测数据集。香蕉检测数据集中,目标的类别数为1。 定义好模型后,我们需要初始化其参数并定义优化算法。

定义损失函数和评价函数

- 目标检测有两种类型的损失。第一种有关锚框类别的损失:我们可以简单地复用之前图像分类问题里一直使用的交叉熵损失函数来计算; 第二种有关正类锚框偏移量的损失:预测偏移量是一个回归问题。 但是,对于这个回归问题,我们在这里不使用平方损失,而是使用 L 1 L_1 L1 范数损失,即预测值和真实值之差的绝对值。 掩码变量

bbox_masks令负类锚框和填充锚框不参与损失的计算。 最后,我们将锚框类别和偏移量的损失相加,以获得模型的最终损失函数。我们可以沿用准确率评价分类结果。 由于偏移量使用了 L 1 L_1 L1 范数损失,我们使用平均绝对误差来评价边界框的预测结果。这些预测结果是从生成的锚框及其预测偏移量中获得的。

训练模型

-

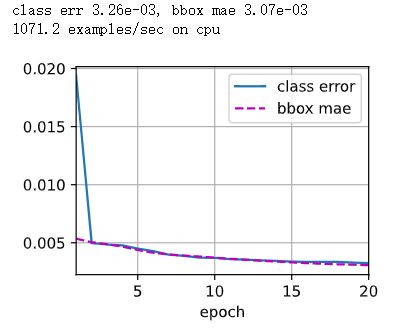

在训练模型时,我们需要在模型的前向传播过程中生成多尺度锚框(

anchors),并预测其类别(cls_preds)和偏移量(bbox_preds)。 然后,我们根据标签信息Y为生成的锚框标记类别(cls_labels)和偏移量(bbox_labels)。 最后,我们根据类别和偏移量的预测和标注值计算损失函数。为了代码简洁,这里没有评价测试数据集。 -

import time from IPython import display import matplotlib_inline import matplotlib.pyplot as plt def set_axes(axes, xlabel, ylabel, xlim, ylim, xscale, yscale, legend): """Set the axes for matplotlib. Defined in :numref:`sec_calculus`""" axes.set_xlabel(xlabel) axes.set_ylabel(ylabel) axes.set_xscale(xscale) axes.set_yscale(yscale) axes.set_xlim(xlim) axes.set_ylim(ylim) if legend: axes.legend(legend) axes.grid() def set_figsize(figsize=(3.5, 2.5)): use_svg_display() # 设置图的尺寸 plt.rcParams['figure.figsize'] = figsize def use_svg_display(): """Use svg format to display plot in jupyter""" matplotlib_inline.backend_inline.set_matplotlib_formats('svg') def load_data_bananas(batch_size): """Load the banana detection dataset. Defined in :numref:`sec_object-detection-dataset`""" train_iter = torch.utils.data.DataLoader(BananasDataset(is_train=True), batch_size, shuffle=True) val_iter = torch.utils.data.DataLoader(BananasDataset(is_train=False), batch_size) return train_iter, val_iter def try_gpu(i=0): """Return gpu(i) if exists, otherwise return cpu(). Defined in :numref:`sec_use_gpu`""" if torch.cuda.device_count() >= i + 1: return torch.device(f'cuda:{i}') return torch.device('cpu') class Timer: """Record multiple running times.""" def __init__(self): """Defined in :numref:`subsec_linear_model`""" self.times = [] self.start() def start(self): """Start the timer.""" self.tik = time.time() def stop(self): """Stop the timer and record the time in a list.""" self.times.append(time.time() - self.tik) return self.times[-1] def avg(self): """Return the average time.""" return sum(self.times) / len(self.times) def sum(self): """Return the sum of time.""" return sum(self.times) def cumsum(self): """Return the accumulated time.""" return np.array(self.times).cumsum().tolist() class Animator: """For plotting data in animation.""" def __init__(self, xlabel=None, ylabel=None, legend=None, xlim=None, ylim=None, xscale='linear', yscale='linear', fmts=('-', 'm--', 'g-.', 'r:'), nrows=1, ncols=1, figsize=(3.5, 2.5)): """Defined in :numref:`sec_softmax_scratch`""" # Incrementally plot multiple lines if legend is None: legend = [] use_svg_display() self.fig, self.axes = plt.subplots(nrows, ncols, figsize=figsize) if nrows * ncols == 1: self.axes = [self.axes, ] # Use a lambda function to capture arguments self.config_axes = lambda: set_axes(self.axes[0], xlabel, ylabel, xlim, ylim, xscale, yscale, legend) self.X, self.Y, self.fmts = None, None, fmts def add(self, x, y): # Add multiple data points into the figure if not hasattr(y, "__len__"): y = [y] n = len(y) if not hasattr(x, "__len__"): x = [x] * n if not self.X: self.X = [[] for _ in range(n)] if not self.Y: self.Y = [[] for _ in range(n)] for i, (a, b) in enumerate(zip(x, y)): if a is not None and b is not None: self.X[i].append(a) self.Y[i].append(b) self.axes[0].cla() for x, y, fmt in zip(self.X, self.Y, self.fmts): self.axes[0].plot(x, y, fmt) self.config_axes() display.display(self.fig) display.clear_output(wait=True) class Accumulator: """For accumulating sums over `n` variables.""" def __init__(self, n): """Defined in :numref:`sec_softmax_scratch`""" self.data = [0.0] * n def add(self, *args): self.data = [a + float(b) for a, b in zip(self.data, args)] def reset(self): self.data = [0.0] * len(self.data) def __getitem__(self, idx): return self.data[idx] def box_iou(boxes1, boxes2): """Compute pairwise IoU across two lists of anchor or bounding boxes. Defined in :numref:`sec_anchor`""" box_area = lambda boxes: ((boxes[:, 2] - boxes[:, 0]) *(boxes[:, 3] - boxes[:, 1])) # Shape of `boxes1`, `boxes2`, `areas1`, `areas2`: (no. of boxes1, 4), # (no. of boxes2, 4), (no. of boxes1,), (no. of boxes2,) areas1 = box_area(boxes1) areas2 = box_area(boxes2) # Shape of `inter_upperlefts`, `inter_lowerrights`, `inters`: (no. of # boxes1, no. of boxes2, 2) inter_upperlefts = torch.max(boxes1[:, None, :2], boxes2[:, :2]) inter_lowerrights = torch.min(boxes1[:, None, 2:], boxes2[:, 2:]) inters = (inter_lowerrights - inter_upperlefts).clamp(min=0) # Shape of `inter_areas` and `union_areas`: (no. of boxes1, no. of boxes2) inter_areas = inters[:, :, 0] * inters[:, :, 1] union_areas = areas1[:, None] + areas2 - inter_areas return inter_areas / union_areas def assign_anchor_to_bbox(ground_truth, anchors, device, iou_threshold=0.5): """Assign closest ground-truth bounding boxes to anchor boxes. Defined in :numref:`sec_anchor`""" num_anchors, num_gt_boxes = anchors.shape[0], ground_truth.shape[0] # Element x_ij in the i-th row and j-th column is the IoU of the anchor # box i and the ground-truth bounding box j jaccard = box_iou(anchors, ground_truth) # Initialize the tensor to hold the assigned ground-truth bounding box for # each anchor anchors_bbox_map = torch.full((num_anchors,), -1, dtype=torch.long, device=device) # Assign ground-truth bounding boxes according to the threshold max_ious, indices = torch.max(jaccard, dim=1) anc_i = torch.nonzero(max_ious >= 0.5).reshape(-1) box_j = indices[max_ious >= 0.5] anchors_bbox_map[anc_i] = box_j col_discard = torch.full((num_anchors,), -1) row_discard = torch.full((num_gt_boxes,), -1) for _ in range(num_gt_boxes): max_idx = torch.argmax(jaccard) # Find the largest IoU box_idx = (max_idx % num_gt_boxes).long() anc_idx = (max_idx / num_gt_boxes).long() anchors_bbox_map[anc_idx] = box_idx jaccard[:, box_idx] = col_discard jaccard[anc_idx, :] = row_discard return anchors_bbox_map def box_corner_to_center(boxes): """Convert from (upper-left, lower-right) to (center, width, height). Defined in :numref:`sec_bbox`""" x1, y1, x2, y2 = boxes[:, 0], boxes[:, 1], boxes[:, 2], boxes[:, 3] cx = (x1 + x2) / 2 cy = (y1 + y2) / 2 w = x2 - x1 h = y2 - y1 boxes = torch.stack((cx, cy, w, h), axis=-1) return boxes def offset_boxes(anchors, assigned_bb, eps=1e-6): """Transform for anchor box offsets. Defined in :numref:`subsec_labeling-anchor-boxes`""" c_anc = box_corner_to_center(anchors) c_assigned_bb = box_corner_to_center(assigned_bb) offset_xy = 10 * (c_assigned_bb[:, :2] - c_anc[:, :2]) / c_anc[:, 2:] offset_wh = 5 * torch.log(eps + c_assigned_bb[:, 2:] / c_anc[:, 2:]) offset = torch.concat([offset_xy, offset_wh], axis=1) return offset def multibox_target(anchors, labels): """Label anchor boxes using ground-truth bounding boxes. Defined in :numref:`subsec_labeling-anchor-boxes`""" batch_size, anchors = labels.shape[0], anchors.squeeze(0) batch_offset, batch_mask, batch_class_labels = [], [], [] device, num_anchors = anchors.device, anchors.shape[0] for i in range(batch_size): label = labels[i, :, :] anchors_bbox_map = assign_anchor_to_bbox(label[:, 1:], anchors, device) bbox_mask = ((anchors_bbox_map >= 0).float().unsqueeze(-1)).repeat( 1, 4) # Initialize class labels and assigned bounding box coordinates with # zeros class_labels = torch.zeros(num_anchors, dtype=torch.long,device=device) assigned_bb = torch.zeros((num_anchors, 4), dtype=torch.float32,device=device) # Label classes of anchor boxes using their assigned ground-truth # bounding boxes. If an anchor box is not assigned any, we label its # class as background (the value remains zero) indices_true = torch.nonzero(anchors_bbox_map >= 0) bb_idx = anchors_bbox_map[indices_true] class_labels[indices_true] = label[bb_idx, 0].long() + 1 assigned_bb[indices_true] = label[bb_idx, 1:] # Offset transformation offset = offset_boxes(anchors, assigned_bb) * bbox_mask batch_offset.append(offset.reshape(-1)) batch_mask.append(bbox_mask.reshape(-1)) batch_class_labels.append(class_labels) bbox_offset = torch.stack(batch_offset) bbox_mask = torch.stack(batch_mask) class_labels = torch.stack(batch_class_labels) return (bbox_offset, bbox_mask, class_labels) import requests #下载数据需要,差不多200M import zipfile import hashlib import os import pandas as pd DATA_HUB = dict() DATA_URL = 'http://d2l-data.s3-accelerate.amazonaws.com/' DATA_HUB['banana-detection'] = (DATA_URL + 'banana-detection.zip','5de26c8fce5ccdea9f91267273464dc968d20d72') def download(name, cache_dir=os.path.join('..', 'data')): """Download a file inserted into DATA_HUB, return the local filename. Defined in :numref:`sec_kaggle_house`""" assert name in DATA_HUB, f"{name} does not exist in {DATA_HUB}." url, sha1_hash = DATA_HUB[name] os.makedirs(cache_dir, exist_ok=True) fname = os.path.join(cache_dir, url.split('/')[-1]) if os.path.exists(fname): # 有文件名就下载好了,后面就不下载了 sha1 = hashlib.sha1() with open(fname, 'rb') as f: while True: data = f.read(1048576) if not data: break sha1.update(data) if sha1.hexdigest() == sha1_hash: return fname # Hit cache print(f'Downloading {fname} from {url}...') r = requests.get(url, stream=True, verify=True) with open(fname, 'wb') as f: f.write(r.content) return fname def download_extract(name, folder=None): """Download and extract a zip/tar file. Defined in :numref:`sec_kaggle_house`""" fname = download(name) base_dir = os.path.dirname(fname) data_dir, ext = os.path.splitext(fname) if ext == '.zip': fp = zipfile.ZipFile(fname, 'r') elif ext in ('.tar', '.gz'): fp = tarfile.open(fname, 'r') else: assert False, 'Only zip/tar files can be extracted.' fp.extractall(base_dir) return os.path.join(base_dir, folder) if folder else data_dir def read_data_bananas(is_train=True): """Read the banana detection dataset images and labels. Defined in :numref:`sec_object-detection-dataset`""" data_dir = download_extract('banana-detection') csv_fname = os.path.join(data_dir, 'bananas_train' if is_train else 'bananas_val', 'label.csv') csv_data = pd.read_csv(csv_fname) csv_data = csv_data.set_index('img_name') images, targets = [], [] for img_name, target in csv_data.iterrows(): images.append(torchvision.io.read_image( os.path.join(data_dir, 'bananas_train' if is_train else 'bananas_val', 'images', f'{img_name}'))) # Here `target` contains (class, upper-left x, upper-left y, # lower-right x, lower-right y), where all the images have the same # banana class (index 0) targets.append(list(target)) return images, torch.tensor(targets).unsqueeze(1) / 256 class BananasDataset(torch.utils.data.Dataset): """一个用于加载香蕉检测数据集的自定义数据集""" def __init__(self, is_train): self.features, self.labels = read_data_bananas(is_train) print('read ' + str(len(self.features)) + (f' training examples' if is_train else f' validation examples')) def __getitem__(self, idx): return (self.features[idx].float(), self.labels[idx]) def __len__(self): return len(self.features) batch_size = 32 # 设置训练参数 train_iter, _ = load_data_bananas(batch_size) device, net =try_gpu(), TinySSD(num_classes=1) # 定义网络,本次训练的只有香蕉一个类 trainer = torch.optim.SGD(net.parameters(), lr=0.2, weight_decay=5e-4) # 优化器为SGD cls_loss = nn.CrossEntropyLoss(reduction='none') # 分类损失为交叉熵 bbox_loss = nn.L1Loss(reduction='none') # 定位回归损失为L1 def calc_loss(cls_preds, cls_labels, bbox_preds, bbox_labels, bbox_masks): batch_size, num_classes = cls_preds.shape[0], cls_preds.shape[2] cls = cls_loss(cls_preds.reshape(-1, num_classes),cls_labels.reshape(-1)).reshape(batch_size, -1).mean(dim=1) bbox = bbox_loss(bbox_preds * bbox_masks, bbox_labels * bbox_masks).mean(dim=1) return cls + bbox # 损失计算 def cls_eval(cls_preds, cls_labels): # 由于类别预测结果放在最后一维,argmax需要指定最后一维。 return float((cls_preds.argmax(dim=-1).type(cls_labels.dtype) == cls_labels).sum()) def bbox_eval(bbox_preds, bbox_labels, bbox_masks): return float((torch.abs((bbox_labels - bbox_preds) * bbox_masks)).sum()) num_epochs, timer = 20, Timer() animator = Animator(xlabel='epoch', xlim=[1, num_epochs], legend=['class error', 'bbox mae']) net = net.to(device) for epoch in range(num_epochs): # 训练精确度的和,训练精确度的和中的示例数 # 绝对误差的和,绝对误差的和中的示例数 metric = Accumulator(4) net.train() for features, target in train_iter: timer.start() trainer.zero_grad() X, Y = features.to(device), target.to(device) # 生成多尺度的锚框,为每个锚框预测类别和偏移量 anchors, cls_preds, bbox_preds = net(X) # 为每个锚框标注类别和偏移量 bbox_labels, bbox_masks, cls_labels = multibox_target(anchors, Y) # 根据类别和偏移量的预测和标注值计算损失函数 l = calc_loss(cls_preds, cls_labels, bbox_preds, bbox_labels, bbox_masks) l.mean().backward() trainer.step() metric.add(cls_eval(cls_preds, cls_labels), cls_labels.numel(), bbox_eval(bbox_preds, bbox_labels, bbox_masks), bbox_labels.numel()) cls_err, bbox_mae = 1 - metric[0] / metric[1], metric[2] / metric[3] animator.add(epoch + 1, (cls_err, bbox_mae)) print(f'class err {cls_err:.2e}, bbox mae {bbox_mae:.2e}') print(f'{len(train_iter.dataset) / timer.stop():.1f} examples/sec on 'f'{str(device)}')

预测目标

-

在预测阶段,我们希望能把图像里面所有我们感兴趣的目标检测出来。在下面,我们读取并调整测试图像的大小,然后将其转成卷积层需要的四维格式。使用下面的

multibox_detection函数,我们可以根据锚框及其预测偏移量得到预测边界框。然后,通过非极大值抑制来移除相似的预测边界框。最后,我们筛选所有置信度不低于0.9的边界框,做为最终输出。 -

单发多框检测是一种多尺度目标检测模型。基于基础网络块和各个多尺度特征块,单发多框检测生成不同数量和不同大小的锚框,并通过预测这些锚框的类别和偏移量检测不同大小的目标。在训练单发多框检测模型时,损失函数是根据锚框的类别和偏移量的预测及标注值计算得出的。

-

import numpy as np def bbox_to_rect(bbox, color): """Convert bounding box to matplotlib format. Defined in :numref:`sec_bbox`""" # Convert the bounding box (upper-left x, upper-left y, lower-right x, # lower-right y) format to the matplotlib format: ((upper-left x, # upper-left y), width, height) return plt.Rectangle( xy=(bbox[0], bbox[1]), width=bbox[2]-bbox[0], height=bbox[3]-bbox[1], fill=False, edgecolor=color, linewidth=2) def nms(boxes, scores, iou_threshold): """Sort confidence scores of predicted bounding boxes. Defined in :numref:`subsec_predicting-bounding-boxes-nms`""" B = torch.argsort(scores, dim=-1, descending=True) keep = [] # Indices of predicted bounding boxes that will be kept while B.numel() > 0: i = B[0] keep.append(i) if B.numel() == 1: break iou = box_iou(boxes[i, :].reshape(-1, 4),boxes[B[1:], :].reshape(-1, 4)).reshape(-1) inds = torch.nonzero(iou <= iou_threshold).reshape(-1) B = B[inds + 1] return torch.tensor(keep, device=boxes.device) def box_center_to_corner(boxes): """Convert from (center, width, height) to (upper-left, lower-right). Defined in :numref:`sec_bbox`""" cx, cy, w, h = boxes[:, 0], boxes[:, 1], boxes[:, 2], boxes[:, 3] x1 = cx - 0.5 * w y1 = cy - 0.5 * h x2 = cx + 0.5 * w y2 = cy + 0.5 * h boxes = torch.stack((x1, y1, x2, y2), axis=-1) return boxes def offset_inverse(anchors, offset_preds): """Predict bounding boxes based on anchor boxes with predicted offsets. Defined in :numref:`subsec_labeling-anchor-boxes`""" anc = box_corner_to_center(anchors) pred_bbox_xy = (offset_preds[:, :2] * anc[:, 2:] / 10) + anc[:, :2] pred_bbox_wh = torch.exp(offset_preds[:, 2:] / 5) * anc[:, 2:] pred_bbox = torch.concat((pred_bbox_xy, pred_bbox_wh), axis=1) predicted_bbox = box_center_to_corner(pred_bbox) return predicted_bbox def multibox_detection(cls_probs, offset_preds, anchors, nms_threshold=0.5, pos_threshold=0.009999999): """Predict bounding boxes using non-maximum suppression. Defined in :numref:`subsec_predicting-bounding-boxes-nms`""" device, batch_size = cls_probs.device, cls_probs.shape[0] anchors = anchors.squeeze(0) num_classes, num_anchors = cls_probs.shape[1], cls_probs.shape[2] out = [] for i in range(batch_size): cls_prob, offset_pred = cls_probs[i], offset_preds[i].reshape(-1, 4) conf, class_id = torch.max(cls_prob[1:], 0) predicted_bb = offset_inverse(anchors, offset_pred) keep = nms(predicted_bb, conf, nms_threshold) # Find all non-`keep` indices and set the class to background all_idx = torch.arange(num_anchors, dtype=torch.long, device=device) combined = torch.cat((keep, all_idx)) uniques, counts = combined.unique(return_counts=True) non_keep = uniques[counts == 1] all_id_sorted = torch.cat((keep, non_keep)) class_id[non_keep] = -1 class_id = class_id[all_id_sorted] conf, predicted_bb = conf[all_id_sorted], predicted_bb[all_id_sorted] # Here `pos_threshold` is a threshold for positive (non-background) # predictions below_min_idx = (conf < pos_threshold) class_id[below_min_idx] = -1 conf[below_min_idx] = 1 - conf[below_min_idx] pred_info = torch.cat((class_id.unsqueeze(1), conf.unsqueeze(1),predicted_bb), dim=1) out.append(pred_info) return torch.stack(out) def show_bboxes(axes, bboxes, labels=None, colors=None): """Show bounding boxes. Defined in :numref:`sec_anchor`""" def make_list(obj, default_values=None): if obj is None: obj = default_values elif not isinstance(obj, (list, tuple)): obj = [obj] return obj labels = make_list(labels) colors = make_list(colors, ['b', 'g', 'r', 'm', 'c']) for i, bbox in enumerate(bboxes): color = colors[i % len(colors)] rect = bbox_to_rect(bbox.detach().numpy(), color) axes.add_patch(rect) if labels and len(labels) > i: text_color = 'k' if color == 'w' else 'w' axes.text(rect.xy[0], rect.xy[1], labels[i], va='center', ha='center', fontsize=9, color=text_color, bbox=dict(facecolor=color, lw=0)) X = torchvision.io.read_image('../../data/banana.jpeg').unsqueeze(0).float() img = X.squeeze(0).permute(1, 2, 0).long() def predict(X): net.eval() anchors, cls_preds, bbox_preds = net(X.to(device)) cls_probs = F.softmax(cls_preds, dim=2).permute(0, 2, 1) output = multibox_detection(cls_probs, bbox_preds, anchors) idx = [i for i, row in enumerate(output[0]) if row[0] != -1] return output[0, idx] output = predict(X) def mydisplay(img, output, threshold): set_figsize((5, 5)) fig = plt.imshow(img) for row in output: score = float(row[1]) if score < threshold: continue h, w = img.shape[0:2] bbox = [row[2:6] * torch.tensor((w, h, w, h), device=row.device)] show_bboxes(fig.axes, bbox, '%.2f' % score, 'w') mydisplay(img, output.cpu(), threshold=0.9) -

由于沐神的课堂中只提供了数据集训练集和验证集,并没有上面检测的

../../data/banana.jpeg。我上传原图如下方便获取。

思考

-

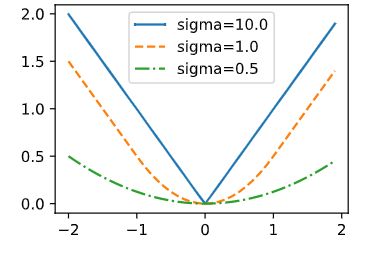

能通过改进损失函数来改进单发多框检测吗?例如,将预测偏移量用到的 L 1 L_1 L1范数损失替换为 L 1 L_1 L1平滑范数损失。它在零点附近使用平方函数从而更加平滑,这是通过一个超参数来控制平滑区域的:

-

f ( x ) = { ( δ ∗ x ) 2 / 2 i f ∣ x ∣ < 1 / δ 2 ∣ x ∣ − 0.5 / δ 2 otherwise f(x) = \begin{cases} (\delta*x)^2/2 &{if~~ |x|<1/\delta^2}\\ |x|-0.5/\delta^2 &\text{otherwise}\\ \end{cases} f(x)={(δ∗x)2/2∣x∣−0.5/δ2if ∣x∣<1/δ2otherwise

-

当 δ \delta δ 非常大时,这种损失类似于 L 1 L_1 L1 范数损失。当它的值较小时,损失函数较平滑。

-

-

def smooth_l1(data, scalar): out = [] for i in data: if abs(i) < 1 / (scalar ** 2): out.append(((scalar * i) ** 2) / 2) else: out.append(abs(i) - 0.5 / (scalar ** 2)) return torch.tensor(out) sigmas = [10, 1, 0.5] lines = ['-', '--', '-.'] x = torch.arange(-2, 2, 0.1) set_figsize() for l, s in zip(lines, sigmas): y = smooth_l1(x, scalar=s) plt.plot(x, y, l, label='sigma=%.1f' % s) plt.legend(); -

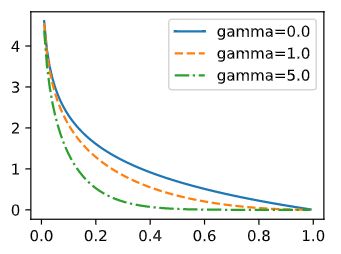

此外,在类别预测时,实验中使用了交叉熵损失:设真实类别 j 的预测概率是 p j p_j pj,交叉熵损失为 − l o g p j -logp_j −logpj。我们还可以使用焦点损失。给定超参数 γ > 0 \gamma>0 γ>0和 α > 0 \alpha>0 α>0,此损失的定义为: − α ( 1 − p j ) γ l o g p j -\alpha(1-p_j)^{\gamma}logp_j −α(1−pj)γlogpj。可以看到,增大 γ \gamma γ 可以有效地减少正类预测概率较大时(例如 p j > 0.5 p_j>0.5 pj>0.5)的相对损失,因此训练可以更集中在那些错误分类的困难示例上。

-

def focal_loss(gamma, x): return -(1 - x) ** gamma * torch.log(x) x = torch.arange(0.01, 1, 0.01) for l, gamma in zip(lines, [0, 1, 5]): y = plt.plot(x, focal_loss(gamma, x), l, label='gamma=%.1f' % gamma) plt.legend(); -

还可以当目标比图像小得多时,模型可以将输入图像调大;通常会存在大量的负锚框。为了使类别分布更加平衡,我们可以将负锚框的高和宽减半;在损失函数中,给类别损失和偏移损失设置不同比重的超参数;使用其他方法评估目标检测模型,例如单发多框检测论文中的方法。