d2l Language Models: Generating Minibatch Sequences

This post summarizes how the dataset is processed for language models.

Contents

1. n-grams

1.1 Unigrams

1.2 Bigrams

1.3 Trigrams

2. Random sampling

2.1 Random across batches

2.2 Sequential across batches

3. Encapsulation

1. n-grams

1.1 Unigrams

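from d2l import torch as d2l

tokens = d2l.tokenize(d2l.read_time_machine())

# A text line is not necessarily a sentence or a paragraph, so we concatenate all text lines

corpus = [token for line in tokens for token in line]

vocab = d2l.Vocab(corpus)

Let's look at what we get: corpus is every word of the original txt flattened into a 1-D list; token_freqs is the list of (token, frequency) pairs counted as unigrams.

vocab.token_freqs[:10], corpus[:20]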

'''

([('the', 2261),

('i', 1267),

('and', 1245),

('of', 1155),

('a', 816),

('to', 695),

('was', 552),

('in', 541),

('that', 443),

('my', 440)],

['the',

'time',

'machine',

'by',

'h',

'g',

'wells',

'i',

'the',

'time',

'traveller',

'for',

'so',

'it',

'will',

'be',

'convenient',

'to',

'speak',

'of'])

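'''

1.2 Bigrams

A bigram joins two consecutive words and treats them as a single token. The token list is a list of tuples, where each tuple consists of two adjacent words from the original txt.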

bigram_tokens = [pair for pair in zip(corpus[:-1], corpus[1:])]

bigram_vocab = d2l.Vocab(bigram_tokens)

# Inspect the results

bigram_vocab.token_freqs[:10], bigram_tokens[:5]

'''

([(('of', 'the'), 309),

(('in', 'the'), 169),

(('i', 'had'), 130),

(('i', 'was'), 112),

(('and', 'the'), 109),

(('the', 'time'), 102),

(('it', 'was'), 99),

(('to', 'the'), 85),

(('as', 'i'), 78),

(('of', 'a'), 73)],

[('the', 'time'),

('time', 'machine'),

('machine', 'by'),

('by', 'h'),

('h', 'g')])

'''

Here is how the pairing of adjacent words is implemented:

bigram_tokens = [pair for pair in zip(corpus[:-1], corpus[1:])]

bigram_tokens[:5]

'''

[('the', 'time'),

('time', 'machine'),

('machine', 'by'),

('by', 'h'),

('h', 'g')]

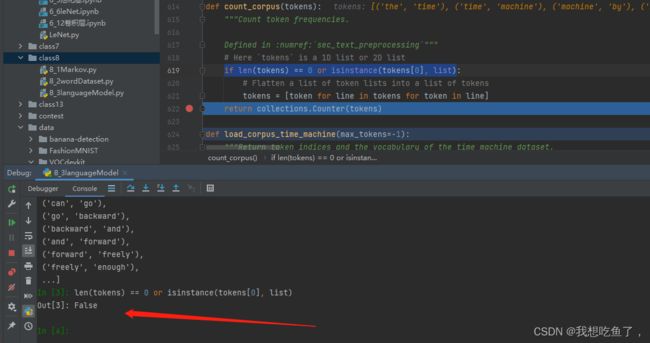

'''传入Vocab的tokens为一个list,如果是2维list则会展平成1维;如果本身就为1维则直接对里面的每个元素进行操作

注意,二元语法中传入Vocab的bigram_tokens是如图组成的二元语法,里面每个元素为前后相邻两个字符串为元组的list,在计数的时候不会被拉成1维的list,因为其本身每个需要计数的元素就是bigram_tokens里面的每个元素(二元元组)
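The flattening rule described above can be sketched without d2l. This is a minimal illustration, not the library's code: `count_tokens` and `toy_corpus` are hypothetical names, and the check on the first element is an assumption about how Vocab decides whether to flatten.

```python
from collections import Counter

def count_tokens(tokens):
    """Hypothetical helper: flatten only a list of lists, then count."""
    if len(tokens) > 0 and isinstance(tokens[0], list):
        tokens = [token for line in tokens for token in line]
    return Counter(tokens)

toy_corpus = ['the', 'time', 'machine', 'by', 'the', 'time', 'traveller']

# Pair each word with its successor; a tuple is hashable, so it is counted whole
toy_bigrams = list(zip(toy_corpus[:-1], toy_corpus[1:]))
bigram_counts = count_tokens(toy_bigrams)

# A list of lists (tokenized lines) is flattened before counting
line_counts = count_tokens([['the', 'time'], ['the', 'machine']])

print(bigram_counts.most_common(2))
print(line_counts.most_common(1))
```

Because the elements of `toy_bigrams` are tuples rather than lists, the flattening branch is skipped and each pair is counted as one token.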

1.3 Trigrams

The same approach applies:

trigram_tokens = [triple for triple in zip(

    corpus[:-2], corpus[1:-1], corpus[2:])]

trigram_vocab = d2l.Vocab(trigram_tokens)

trigram_vocab.token_freqs[:10]

'''

[(('the', 'time', 'traveller'), 59),

(('the', 'time', 'machine'), 30),

(('the', 'medical', 'man'), 24),

(('it', 'seemed', 'to'), 16),

(('it', 'was', 'a'), 15),

...]

'''

Implementation details:

corpus[-7:], corpus[:-2][-5:], corpus[1:-1][-5:], corpus[2:][-5:]

'''

(['lived', 'on', 'in', 'the', 'heart', 'of', 'man'],

['lived', 'on', 'in', 'the', 'heart'],

['on', 'in', 'the', 'heart', 'of'],

['in', 'the', 'heart', 'of', 'man'])

'''

2. Random sampling

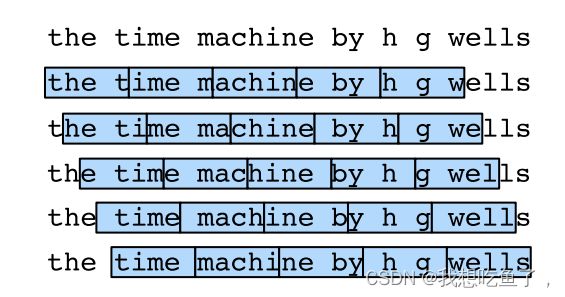

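Task: given corpus, a 1-D list of all words concatenated together, output X and Y, each of shape (bs, T), partitioned as in the figure above. Note that the first k tokens (a random offset) and any trailing tokens that cannot fill a full block of T are discarded.

2.1 Random across batches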

import random

import torch

def seq_data_iter_random(corpus, batch_size, num_steps):  #@save
    """Generate minibatches of subsequences using random sampling."""
    # Partition the sequence starting from a random offset;
    # the random range includes num_steps - 1
    corpus = corpus[random.randint(0, num_steps - 1):]
    # Subtract 1 because we need to account for the labels
    num_subseqs = (len(corpus) - 1) // num_steps
    # Starting indices of the subsequences of length num_steps
    initial_indices = list(range(0, num_subseqs * num_steps, num_steps))
    # With random sampling, subsequences from two adjacent random
    # minibatches are not necessarily adjacent in the original sequence
    random.shuffle(initial_indices)

    def data(pos):
        # Return the sequence of length num_steps starting at pos
        return corpus[pos: pos + num_steps]

    num_batches = num_subseqs // batch_size
    for i in range(0, batch_size * num_batches, batch_size):
        # Here, initial_indices holds the random starting indices of the subsequences
        initial_indices_per_batch = initial_indices[i: i + batch_size]
        X = [data(j) for j in initial_indices_per_batch]
        Y = [data(j + 1) for j in initial_indices_per_batch]
        yield torch.tensor(X), torch.tensor(Y)

num_steps corresponds to T: the number of tokens in each subsequence block.

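corpus is trimmed by a random offset k: the first k tokens are simply dropped.

num_subseqs is the number of blocks of length T that the trimmed corpus can form, rounded down. One token is held back for the label, so here it is 31 // 5 = 6.

initial_indices holds the starting index of each subsequence block.

num_batches is the number of blocks divided by bs, rounded down.

In the for loop, i advances in steps of bs, so that each batch takes bs starting positions and none is used twice (see the example below).

initial_indices_per_batch takes bs starting positions from the list of block starting indices.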
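The bookkeeping above can be traced by hand on a toy sequence. The sketch below is only an illustration under stated assumptions: the offset is fixed at 3 (the original draws it at random) so that the arithmetic reproduces 31 // 5 = 6, the shuffle is left out to keep the result deterministic, and `batch_starts` and `index_groups` are hypothetical names.

```python
batch_size, num_steps = 2, 5
offset = 3  # assumed fixed value; seq_data_iter_random picks it at random

corpus = list(range(35))[offset:]  # 32 tokens remain after trimming

# Hold back one token for the label, then count full blocks of length T
num_subseqs = (len(corpus) - 1) // num_steps
# Start index of every block, before shuffling
initial_indices = list(range(0, num_subseqs * num_steps, num_steps))
# Number of full minibatches of bs blocks each
num_batches = num_subseqs // batch_size
# i moves in steps of bs, so the slices never share a start index
batch_starts = list(range(0, batch_size * num_batches, batch_size))
index_groups = [initial_indices[i: i + batch_size] for i in batch_starts]

print(num_subseqs, num_batches)
print(initial_indices)
print(index_groups)
```

With shuffling enabled, the same slices would be cut from a permuted `initial_indices`, which is why the blocks inside one minibatch are not adjacent in the original sequence.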

my_seq = list(range(35))

for X, Y in seq_data_iter_random(my_seq, batch_size=2, num_steps=5):

    print('X: ', X, '\nY:', Y)

'''

X: tensor([[22, 23, 24, 25, 26],

[ 2, 3, 4, 5, 6]])

Y: tensor([[23, 24, 25, 26, 27],

[ 3, 4, 5, 6, 7]])

X: tensor([[17, 18, 19, 20, 21],

[ 7, 8, 9, 10, 11]])

Y: tensor([[18, 19, 20, 21, 22],

[ 8, 9, 10, 11, 12]])

X: tensor([[12, 13, 14, 15, 16],

[27, 28, 29, 30, 31]])

Y: tensor([[13, 14, 15, 16, 17],

[28, 29, 30, 31, 32]])

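'''

Both X and Y have shape (bs, T).

Y is X shifted by one token, so within a row: 22 predicts 23; 22, 23 predict 24; 22, 23, 24 predict 25; and so on.

2.2 Sequential across batches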
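The prefix-to-target relation can be spelled out for one row of the output. This is a small sketch using the first row of the first minibatch printed above; `X_row`, `Y_row`, and `pairs` are hypothetical names.

```python
X_row = [22, 23, 24, 25, 26]
Y_row = [23, 24, 25, 26, 27]

# At time step t the model has seen X_row[:t + 1] and must predict Y_row[t]
pairs = [(X_row[:t + 1], Y_row[t]) for t in range(len(X_row))]

for context, target in pairs:
    print(context, '->', target)
```

Each row therefore supplies T training targets, one per time step.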

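def seq_data_iter_sequential(corpus, batch_size, num_steps):  #@save
    """Generate minibatches of subsequences using sequential partitioning."""
    # Partition the sequence starting from a random offset
    offset = random.randint(0, num_steps)
    num_tokens = ((len(corpus) - offset - 1) // batch_size) * batch_size
    Xs = torch.tensor(corpus[offset: offset + num_tokens])
    Ys = torch.tensor(corpus[offset + 1: offset + 1 + num_tokens])
    Xs, Ys = Xs.reshape(batch_size, -1), Ys.reshape(batch_size, -1)
    num_batches = Xs.shape[1] // num_steps
    for i in range(0, num_steps * num_batches, num_steps):
        X = Xs[:, i: i + num_steps]
        Y = Ys[:, i: i + num_steps]
        yield X, Y

num_batches is the number of minibatches that can be produced; trailing tokens that cannot fill a complete minibatch are discarded.

As in the random version, the core computation is (number of blocks) // bs, where number of blocks = (usable tokens) // num_steps (T). Here it is written as (num_tokens // bs) // num_steps, which gives the same value.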
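To see the sequential layout without torch, here is a rough sketch with plain Python lists. It is not the d2l function: `sequential_batches` is a hypothetical name, the offset is fixed at 0 by default instead of being drawn at random, and the reshape is imitated by slicing the token list into `batch_size` rows.

```python
def sequential_batches(corpus, batch_size, num_steps, offset=0):
    """Hypothetical list-based sketch of sequential partitioning."""
    num_tokens = ((len(corpus) - offset - 1) // batch_size) * batch_size
    xs = corpus[offset: offset + num_tokens]
    ys = corpus[offset + 1: offset + 1 + num_tokens]
    # Imitate reshape(batch_size, -1): cut the tokens into batch_size rows
    row_len = num_tokens // batch_size
    x_rows = [xs[r * row_len: (r + 1) * row_len] for r in range(batch_size)]
    y_rows = [ys[r * row_len: (r + 1) * row_len] for r in range(batch_size)]
    num_batches = row_len // num_steps
    for i in range(0, num_steps * num_batches, num_steps):
        X = [row[i: i + num_steps] for row in x_rows]
        Y = [row[i: i + num_steps] for row in y_rows]
        yield X, Y

batches = list(sequential_batches(list(range(35)), batch_size=2, num_steps=5))
for X, Y in batches:
    print('X:', X, 'Y:', Y)

# Both orders of floor division give the same number of minibatches
same_count = (34 // 2) // 5 == (34 // 5) // 2
```

Row r of one minibatch continues exactly where row r of the previous minibatch stopped, which is the property that distinguishes this scheme from random sampling.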

3. Encapsulation

class SeqDataLoader:  #@save
    """An iterator that loads sequence data."""
    def __init__(self, batch_size, num_steps, use_random_iter, max_tokens):
        if use_random_iter:
            self.data_iter_fn = d2l.seq_data_iter_random
        else:
            self.data_iter_fn = d2l.seq_data_iter_sequential
        self.corpus, self.vocab = d2l.load_corpus_time_machine(max_tokens)
        self.batch_size, self.num_steps = batch_size, num_steps

    def __iter__(self):
        return self.data_iter_fn(self.corpus, self.batch_size, self.num_steps)

A note on the __iter__ method: it returns the generator produced by data_iter_fn, so every for loop over the loader starts a new pass over the data.
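The role of `__iter__` can be illustrated with a toy stand-in. This is a sketch of the general Python pattern, not of d2l itself: `count_up` and `ReiterableLoader` are hypothetical names, and `count_up` merely plays the part of a data iterator function.

```python
def count_up(limit, step):
    """Hypothetical generator function standing in for a data iterator."""
    for start in range(0, limit, step):
        yield start

class ReiterableLoader:
    """Hypothetical loader: stores the arguments, builds a generator per pass."""
    def __init__(self, iter_fn, *args):
        self.iter_fn, self.args = iter_fn, args

    def __iter__(self):
        # Called once per for loop, so each loop gets a new generator
        return self.iter_fn(*self.args)

loader = ReiterableLoader(count_up, 10, 5)
first_pass = list(loader)
second_pass = list(loader)

# A bare generator, in contrast, is exhausted after one pass
bare_generator = count_up(10, 5)
bare_first = list(bare_generator)
bare_second = list(bare_generator)

print(first_pass, second_pass, bare_first, bare_second)
```

Wrapping the generator function in a class with `__iter__` is what lets the same loader object be reused across training epochs.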

def load_data_time_machine(batch_size, num_steps,  #@save
                           use_random_iter=False, max_tokens=10000):
    """Return the iterator and the vocabulary of the time machine dataset."""
    data_iter = SeqDataLoader(
        batch_size, num_steps, use_random_iter, max_tokens)
    return data_iter, data_iter.vocab