Building a K8s Cluster with Orange Pi 4 and Raspberry Pi 4B, Part 8: TiDB

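Contents

1. Overview

2. Preparation

3. Installation

3.1 Follow the official TiDB v1.5 installation guide

3.2 Prepare the storage classes

3.3 Create the CRDs

3.4 Run the operator

3.5 Create the cluster/dashboard/monitor pods

3.6 Set up access (Ingress & ports)

4. Container status after installation

5. Problems encountered

6. References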

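1. Overview

- Set up a TiDB cluster as a cloud-native distributed database running on Kubernetes.

- Expose it through Ingress, then access and test it via subdomains.

- Use local-volume-provisioner (kubernetes-sigs/sig-storage-local-static-provisioner, a static provisioner of local volumes) as the storage solution. Note: using the NFS StorageClass that was set up as the default earlier as TiDB's storage class prevents the PD and TiKV pods from starting.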

| Mount directory | Storage directory | StorageClass | Notes |
|---|---|---|---|
| /mnt/tidb/ssd | /data0/tidb/ssd | ssd-storage | Used by TiKV |
| /mnt/tidb/sharedssd | /data0/tidb/sharedssd | shared-ssd-storage | Used by PD |
| /mnt/tidb/monitoring | /data0/tidb/monitoring | monitoring-storage | Used for monitoring data |
| /mnt/tidb/backup | /data0/tidb/backup | backup-storage | Used for TiDB Binlog and backup data |
| ... | ... | ... | ... |

2. Preparation

Download the required files:

| File | Description | Source | Notes |
|---|---|---|---|
| local-volume-provisioner.yaml | Local storage objects | https://raw.githubusercontent.com/pingcap/tidb-operator/master/examples/local-pv/local-volume-provisioner.yaml | Adjust the paths |
| crd.yaml | | https://raw.githubusercontent.com/pingcap/tidb-operator/master/manifests/crd.yaml | |
| tidb-cluster.yaml | | https://raw.githubusercontent.com/pingcap/tidb-operator/master/examples/basic/tidb-cluster.yaml | Set the matching StorageClass names |
| tidb-dashboard.yaml | | https://raw.githubusercontent.com/pingcap/tidb-operator/master/examples/basic/tidb-dashboard.yaml | |
| tidb-monitor.yaml | | https://raw.githubusercontent.com/pingcap/tidb-operator/master/examples/basic/tidb-monitor.yaml | |
| tidb-ng-monitor.yaml | | "Access TiDB Dashboard" (PingCAP Docs) | |
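The files with a direct URL in the table above could be fetched in one go. The following is a minimal sketch, not the author's procedure: the function names `manifest_urls` and `fetch_manifests` and the `REF` variable are hypothetical, and `REF` defaults to `master` as in the table. tidb-ng-monitor.yaml is left out because the table gives no download URL for it.

```shell
#!/bin/sh
# Branch or tag of pingcap/tidb-operator to download from (assumption: master, as in the table).
REF="${REF:-master}"
BASE_URL="https://raw.githubusercontent.com/pingcap/tidb-operator/${REF}"

# Repository paths of the manifests listed in the table.
MANIFESTS="examples/local-pv/local-volume-provisioner.yaml
manifests/crd.yaml
examples/basic/tidb-cluster.yaml
examples/basic/tidb-dashboard.yaml
examples/basic/tidb-monitor.yaml"

# Print the full URL of every manifest, one per line.
manifest_urls() {
  for path in $MANIFESTS; do
    echo "${BASE_URL}/${path}"
  done
}

# Download every manifest into the directory given as $1.
fetch_manifests() {
  dest="$1"
  mkdir -p "$dest"
  manifest_urls | while read -r url; do
    curl -fsSL -o "${dest}/$(basename "$url")" "$url"
  done
}
```

For example, `fetch_manifests /k8s_apps/tidb/1.5.0-beta.1` would place the files in the directory used by the commands later in this article. The paths and StorageClass names inside the files still have to be edited by hand.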

3. Installation

3.1 Follow the official TiDB v1.5 installation guide

"Deploy TiDB on General Kubernetes" (PingCAP Docs) describes how to deploy a TiDB cluster on a standard Kubernetes cluster with TiDB Operator:

https://docs.pingcap.com/zh/tidb-in-kubernetes/v1.5/deploy-on-general-kubernetes

3.2 Prepare the storage classes

local-volume-provisioner.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: "monitoring-storage"
provisioner: "kubernetes.io/no-provisioner"
volumeBindingMode: "WaitForFirstConsumer"
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: "ssd-storage"
provisioner: "kubernetes.io/no-provisioner"
volumeBindingMode: "WaitForFirstConsumer"
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: "shared-ssd-storage"
provisioner: "kubernetes.io/no-provisioner"
volumeBindingMode: "WaitForFirstConsumer"
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: "backup-storage"
provisioner: "kubernetes.io/no-provisioner"
volumeBindingMode: "WaitForFirstConsumer"
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: local-provisioner-config
  namespace: kube-system
data:
  setPVOwnerRef: "true"
  nodeLabelsForPV: |
    - kubernetes.io/hostname
  storageClassMap: |
    ssd-storage:
      hostDir: /mnt/tidb/ssd
      mountDir: /mnt/tidb/ssd
    shared-ssd-storage:
      hostDir: /mnt/tidb/sharedssd
      mountDir: /mnt/tidb/sharedssd
    monitoring-storage:
      hostDir: /mnt/tidb/monitoring
      mountDir: /mnt/tidb/monitoring
    backup-storage:
      hostDir: /mnt/tidb/backup
      mountDir: /mnt/tidb/backup
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: local-volume-provisioner
  namespace: kube-system
  labels:
    app: local-volume-provisioner
spec:
  selector:
    matchLabels:
      app: local-volume-provisioner
  template:
    metadata:
      labels:
        app: local-volume-provisioner
    spec:
      serviceAccountName: local-storage-admin
      containers:
        #- image: "quay.io/external_storage/local-volume-provisioner:v2.3.4"
        - image: "quay.io/external_storage/local-volume-provisioner:v2.5.0"
          name: provisioner
          securityContext:
            privileged: true
          env:
            - name: MY_NODE_NAME
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
            - name: MY_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
            - name: JOB_CONTAINER_IMAGE
              #value: "quay.io/external_storage/local-volume-provisioner:v2.3.4"
              value: "quay.io/external_storage/local-volume-provisioner:v2.5.0"
          resources:
            requests:
              cpu: 100m
              memory: 100Mi
            limits:
              cpu: 100m
              memory: 100Mi
          volumeMounts:
            - mountPath: /etc/provisioner/config
              name: provisioner-config
              readOnly: true
            - mountPath: /mnt/tidb/ssd
              name: local-ssd
              mountPropagation: "HostToContainer"
            - mountPath: /mnt/tidb/sharedssd
              name: local-sharedssd
              mountPropagation: "HostToContainer"
            - mountPath: /mnt/tidb/backup
              name: local-backup
              mountPropagation: "HostToContainer"
            - mountPath: /mnt/tidb/monitoring
              name: local-monitoring
              mountPropagation: "HostToContainer"
      volumes:
        - name: provisioner-config
          configMap:
            name: local-provisioner-config
        - name: local-ssd
          hostPath:
            path: /mnt/tidb/ssd
        - name: local-sharedssd
          hostPath:
            path: /mnt/tidb/sharedssd
        - name: local-backup
          hostPath:
            path: /mnt/tidb/backup
        - name: local-monitoring
          hostPath:
            path: /mnt/tidb/monitoring
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: local-storage-admin
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: local-storage-provisioner-pv-binding
  namespace: kube-system
subjects:
  - kind: ServiceAccount
    name: local-storage-admin
    namespace: kube-system
roleRef:
  kind: ClusterRole
  name: system:persistent-volume-provisioner
  apiGroup: rbac.authorization.k8s.io
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: local-storage-provisioner-node-clusterrole
  namespace: kube-system
rules:
  - apiGroups: [""]
    resources: ["nodes"]
    verbs: ["get"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: local-storage-provisioner-node-binding
  namespace: kube-system
subjects:
  - kind: ServiceAccount
    name: local-storage-admin
    namespace: kube-system
roleRef:
  kind: ClusterRole
  name: local-storage-provisioner-node-clusterrole
  apiGroup: rbac.authorization.k8s.io

kubectl apply -f /k8s_apps/tidb/1.5.0-beta.1/local-volume-provisioner.yaml
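After applying the manifest, it can help to confirm that the provisioner runs on every node and, once the mount points exist, that PVs were discovered for each StorageClass. A minimal sketch, assuming the `app=local-volume-provisioner` label and the `kube-system` namespace from the DaemonSet above; the function names are hypothetical.

```shell
#!/bin/sh
# Show the provisioner pods created by the DaemonSet, one per node.
provisioner_pods() {
  kubectl get pods -n kube-system -l app=local-volume-provisioner -o wide
}

# Count the discovered PVs per StorageClass.
# Prints lines such as "<count> <storage class name>".
count_pv_by_class() {
  kubectl get pv --no-headers \
    -o custom-columns=CLASS:.spec.storageClassName | sort | uniq -c
}
```

An empty result from `count_pv_by_class` right after the apply is expected: the provisioner only creates PVs for mount points it finds under the configured directories.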

Prepare the mount points for the PVs by running the following script on the target storage server:

for i in $(seq 1 5); do
  mkdir -p /data0/tidb/ssd/vol${i}
  mkdir -p /mnt/tidb/ssd/vol${i}
  mount --bind /data0/tidb/ssd/vol${i} /mnt/tidb/ssd/vol${i}
done

for i in $(seq 1 5); do
  mkdir -p /data0/tidb/sharedssd/vol${i}
  mkdir -p /mnt/tidb/sharedssd/vol${i}
  mount --bind /data0/tidb/sharedssd/vol${i} /mnt/tidb/sharedssd/vol${i}
done

for i in $(seq 1 5); do
  mkdir -p /data0/tidb/monitoring/vol${i}
  mkdir -p /mnt/tidb/monitoring/vol${i}
  mount --bind /data0/tidb/monitoring/vol${i} /mnt/tidb/monitoring/vol${i}
done

The available PVs then show up in KubeSphere. They get bound once the pods that use these StorageClasses start; without available PVs, those pods report errors.
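Bind mounts created with `mount --bind` last only until the next reboot. The following is a minimal sketch, assuming the same /data0/tidb and /mnt/tidb layout as the script above, that prints matching /etc/fstab entries so the mounts can be restored at boot. The function name `fstab_entries` is hypothetical and not part of the original setup.

```shell
#!/bin/sh
# Print /etc/fstab bind-mount entries for one storage directory.
# $1: directory name under /data0/tidb and /mnt/tidb (ssd, sharedssd, monitoring)
# $2: number of volumes (the script above creates 5 per directory)
fstab_entries() {
  class="$1"
  count="$2"
  for i in $(seq 1 "$count"); do
    printf '%s %s none bind 0 0\n' \
      "/data0/tidb/${class}/vol${i}" "/mnt/tidb/${class}/vol${i}"
  done
}
```

Running `fstab_entries ssd 5` prints five lines that can be reviewed and then appended to /etc/fstab on the storage node; the same call works for `sharedssd` and `monitoring`.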

3.3 Create the CRDs

kubectl create -f /k8s_apps/tidb/1.5.0-beta.1/crd.yaml

3.4 Run the operator

kubectl create namespace tidb-admin

# Add the PingCAP chart repository first if it is not configured yet
helm repo add pingcap https://charts.pingcap.org/

helm install --namespace tidb-admin tidb-operator pingcap/tidb-operator --version v1.5.0-beta.1

kubectl get pods --namespace tidb-admin -l app.kubernetes.io/instance=tidb-operator

3.5 Create the cluster/dashboard/monitor pods

kubectl create namespace tidb-cluster

kubectl -n tidb-cluster apply -f /k8s_apps/tidb/1.5.0-beta.1/tidb-cluster.yaml

kubectl -n tidb-cluster apply -f /k8s_apps/tidb/1.5.0-beta.1/tidb-dashboard.yaml

kubectl -n tidb-cluster apply -f /k8s_apps/tidb/1.5.0-beta.1/tidb-monitor.yaml

kubectl -n tidb-cluster apply -f /k8s_apps/tidb/1.5.0-beta.1/tidb-ng-monitor.yaml

3.6 Set up access (Ingress & ports)

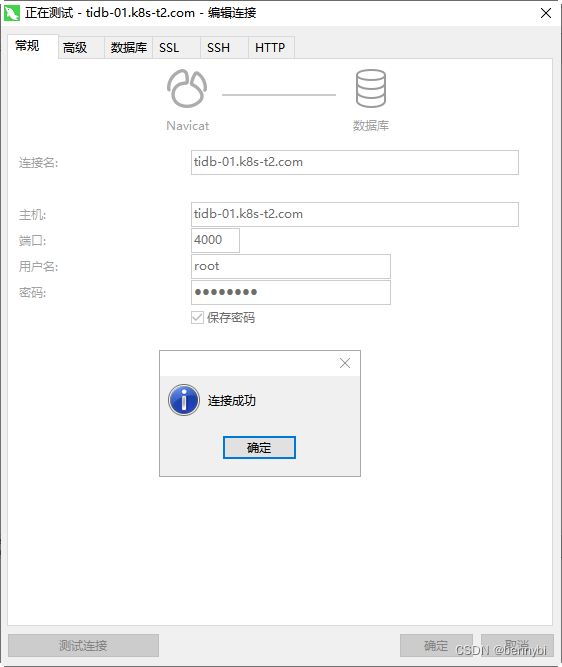

编辑客户机hosts

192.168.0.103 tidb-01.k8s-t2.com

# db连接访问4000端口

192.168.0.103 tidb-pd.k8s-t2.com

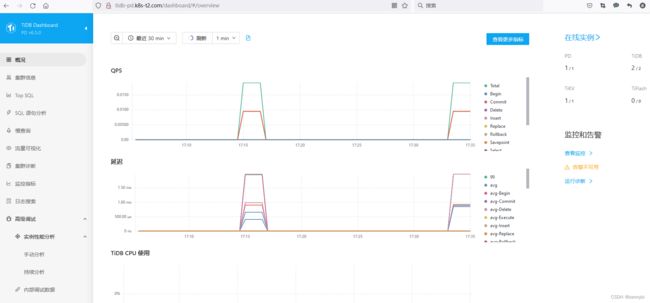

# 管理后台, /dashboard 访问 TiDB Dashboard 页面,默认用户名为 root,密码为空

192.168.0.103 tidb-grafana.k8s-t2.com

# 访问 TiDB 的 Grafana 界面,默认用户名和密码都为 admin

192.168.0.103 tidb-prometheus.k8s-t2.com

# 访问 TiDB 的 Prometheus 管理界面ingress.yaml

# Ingress
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: tidb-pd
  namespace: tidb-cluster
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/affinity: cookie
    nginx.ingress.kubernetes.io/session-cookie-name: stickounet
    nginx.ingress.kubernetes.io/session-cookie-expires: "172800"
    nginx.ingress.kubernetes.io/session-cookie-max-age: "172800"
spec:
  rules:
    - host: tidb-pd.k8s-t2.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: basic-pd
                port:
                  number: 2379
  ingressClassName: nginx
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: tidb-grafana
  namespace: tidb-cluster
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/affinity: cookie
    nginx.ingress.kubernetes.io/session-cookie-name: stickounet
    nginx.ingress.kubernetes.io/session-cookie-expires: "172800"
    nginx.ingress.kubernetes.io/session-cookie-max-age: "172800"
spec:
  rules:
    - host: tidb-grafana.k8s-t2.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: basic-grafana
                port:
                  number: 3000
  ingressClassName: nginx
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: tidb-prometheus
  namespace: tidb-cluster
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/affinity: cookie
    nginx.ingress.kubernetes.io/session-cookie-name: stickounet
    nginx.ingress.kubernetes.io/session-cookie-expires: "172800"
    nginx.ingress.kubernetes.io/session-cookie-max-age: "172800"
spec:
  rules:
    - host: tidb-prometheus.k8s-t2.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: basic-prometheus
                port:
                  number: 9090
  ingressClassName: nginx
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: tidb-db01
  namespace: tidb-cluster
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/affinity: cookie
    nginx.ingress.kubernetes.io/session-cookie-name: stickounet
    nginx.ingress.kubernetes.io/session-cookie-expires: "172800"
    nginx.ingress.kubernetes.io/session-cookie-max-age: "172800"
spec:
  rules:
    - host: tidb-01.k8s-t2.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: basic-tidb
                port:
                  number: 4000
  ingressClassName: nginx

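Reaching the database on port 4000 through the domain name requires some additional port exposure (see: Building a K8s Cluster with Orange Pi 4 and Raspberry Pi 4B, Part 5: Public Port Access Configuration, bennybi's blog on CSDN).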
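The extra step is needed because Ingress rules route HTTP(S) traffic, while database clients talk to TiDB using the MySQL protocol over plain TCP. A minimal sketch of two ways to connect, assuming the `basic-tidb` service and the `tidb-01.k8s-t2.com` host name used above and a `mysql` client on the client machine; the function names and the local port 14000 are illustrative assumptions.

```shell
#!/bin/sh
# Quick test without any port exposure: forward local port 14000
# to port 4000 of the basic-tidb service (blocks until interrupted).
tidb_port_forward() {
  kubectl port-forward -n tidb-cluster svc/basic-tidb 14000:4000
}

# Open a MySQL session as root (empty password by default).
# $1: host (default tidb-01.k8s-t2.com), $2: port (default 4000)
tidb_connect() {
  host="${1:-tidb-01.k8s-t2.com}"
  port="${2:-4000}"
  mysql --comments -h "$host" -P "$port" -u root
}
```

With the port forward running in one terminal, `tidb_connect 127.0.0.1 14000` connects through it; once port 4000 is exposed as described in Part 5, a plain `tidb_connect` uses the domain name.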

4. Container status after installation
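One way to inspect the pods from the command line is sketched below, assuming the `tidb-cluster` namespace created in section 3.5. The function names are hypothetical; the filter simply reads the STATUS column of `kubectl get pods --no-headers`.

```shell
#!/bin/sh
# Read `kubectl get pods --no-headers` output on stdin and print the name and
# status of every pod whose STATUS column is neither Running nor Completed.
pods_not_running() {
  awk '$3 != "Running" && $3 != "Completed" { print $1, $3 }'
}

# List pods in the tidb-cluster namespace that still need attention.
tidb_pods_not_running() {
  kubectl -n tidb-cluster get pods --no-headers | pods_not_running
}
```

An empty result from `tidb_pods_not_running` means every pod in the namespace reports Running or Completed; a pod stuck in Pending often points back to missing PVs (see section 5).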

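5. Problems encountered

local-volume-provisioner:v2.3.4 has no build for the ARM architecture, so pulling the image fails.

Fix: edit local-volume-provisioner.yaml and change the version to image: "quay.io/external_storage/local-volume-provisioner:v2.5.0"

The PV mount points were not configured correctly, so the PD and TiKV pods could not start.

Fix: see the storage preparation steps above (section 3.2).

Initialization with tidb-initializer.yaml fails with Init: Error.

Cause: the image it uses, tnir/mysqlclient, is published only for amd64 and not for arm64, so the initialization script cannot run. Conclusion: it cannot be used for now.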
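Both image problems above come down to a missing arm64 build, which can be checked before deploying. A minimal sketch, assuming a Docker CLI with `docker manifest inspect` available on the workstation; the function names are hypothetical, and the check is a plain text match on the `architecture` field of the verbose manifest output.

```shell
#!/bin/sh
# Succeed if the manifest JSON read from stdin lists the architecture in $1.
manifest_has_arch() {
  grep -Eq "\"architecture\": *\"$1\""
}

# Succeed if image $1 is published for architecture $2 (e.g. arm64).
image_supports_arch() {
  docker manifest inspect --verbose "$1" | manifest_has_arch "$2"
}
```

For example, `image_supports_arch tnir/mysqlclient arm64 || echo "no arm64 build"` flags an image before it ends up in a pod spec on the Orange Pi or Raspberry Pi nodes.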

6. References

- Deploy TiDB on General Kubernetes, PingCAP Docs

- Deploy a TiDB Cluster on ARM64 Machines, PingCAP Docs

- TiDB on K8s in Practice: Deployment, blog of TiDB 社区干货传送门 on CSDN

- Using local-volume-provisioner, Jianshu

- https://github.com/kubernetes-sigs/sig-storage-local-static-provisioner/blob/master/docs/operations.md#sharing-a-disk-filesystem-by-multiple-filesystem-pvs