[Dive into Deep Learning: Line-by-Line Code Walkthrough Series] 18. Network in Network (NiN)

Video: Dive into Deep Learning, Network in Network (NiN)

Course homepage: https://courses.d2l.ai/zh-v2/

Textbook: https://zh-v2.d2l.ai/

1. The NiN network

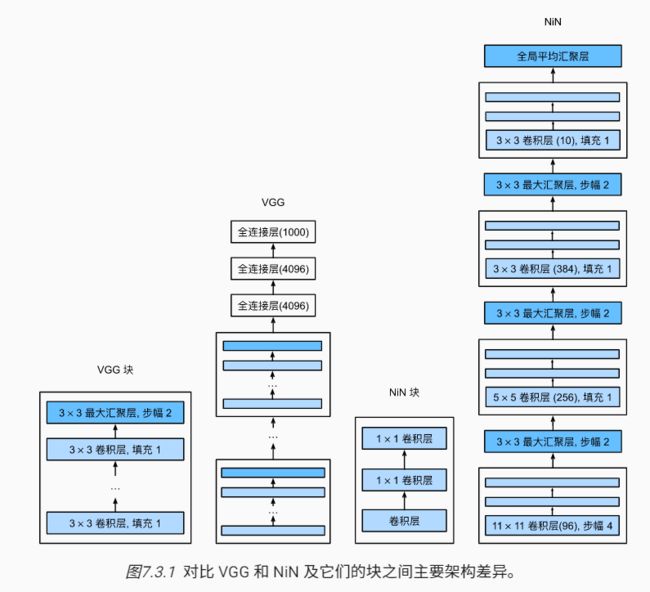

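NiN architecture

Summary:

- A NiN block is one convolutional layer followed by two 1×1 convolutional layers; the 1×1 layers add nonlinearity at every pixel.

- NiN replaces the fully connected layers used in VGG and AlexNet with a global average pooling layer.

- As a result it has fewer parameters and is less prone to overfitting.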
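To make the "fewer parameters" point concrete, here is a rough count in plain Python. It compares a NiN-style head (one NiN block with 10 output channels, followed by parameter-free global average pooling) with a hypothetical AlexNet-style head of two 4096-unit fully connected layers plus a 10-way output layer, both sitting on an assumed 384×5×5 feature map. The helper names `conv_params` and `linear_params` and the 4096-unit sizes are illustrative assumptions, not part of the original code.

```python
def conv_params(in_ch, out_ch, k):
    """Weights plus biases of a k×k convolution."""
    return in_ch * out_ch * k * k + out_ch


def linear_params(in_features, out_features):
    """Weights plus biases of a fully connected layer."""
    return in_features * out_features + out_features


# NiN-style head: a 3×3 convolution to 10 channels, then two 1×1 convolutions.
# The global average pooling that follows has no parameters.
nin_head = conv_params(384, 10, 3) + 2 * conv_params(10, 10, 1)

# Hypothetical AlexNet-style head on the flattened 384×5×5 feature map.
fc_head = (linear_params(384 * 5 * 5, 4096)
           + linear_params(4096, 4096)
           + linear_params(4096, 10))

print(f"NiN head: {nin_head:,} parameters")
print(f"FC head:  {fc_head:,} parameters")
```

Under these assumptions the NiN head has 34,790 parameters, against roughly 56 million for the fully connected head.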

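import torch
from torch import nn
from d2l import torch as d2l
import os

# Allow duplicate OpenMP runtimes to coexist; works around the "OMP: Error #15" crash seen in some environments
os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

"====================1. NiN block===================="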

def nin_block(in_channels, out_channels, kernel_size, strides, padding):
    return nn.Sequential(
        nn.Conv2d(in_channels, out_channels, kernel_size, strides, padding),
        nn.ReLU(),
        # Two 1×1 convolutions take the place of fully connected layers; they keep the channel count unchanged
        nn.Conv2d(out_channels, out_channels, kernel_size=1), nn.ReLU(),
        nn.Conv2d(out_channels, out_channels, kernel_size=1), nn.ReLU())
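Why a 1×1 convolution can stand in for a fully connected layer is easier to see on a tiny example. The sketch below uses plain nested lists rather than PyTorch so that the arithmetic stays visible; `conv1x1` and `dense` are hypothetical helpers written for this illustration, and the numbers are made up. It checks that the 1×1 convolution output at each pixel equals a fully connected layer applied to that pixel's channel vector, with the same weights shared across all positions.

```python
def conv1x1(x, weight, bias):
    """Apply a 1×1 convolution to x, a nested list shaped [C_in][H][W].

    weight is [C_out][C_in] and bias is [C_out]; the result is [C_out][H][W].
    """
    c_in, h, w = len(x), len(x[0]), len(x[0][0])
    out = []
    for o, row in enumerate(weight):
        plane = [[bias[o] + sum(row[c] * x[c][i][j] for c in range(c_in))
                  for j in range(w)]
                 for i in range(h)]
        out.append(plane)
    return out


def dense(vec, weight, bias):
    """A fully connected layer applied to a single channel vector."""
    return [b + sum(wi * v for wi, v in zip(row, vec))
            for row, b in zip(weight, bias)]


# Tiny example: 2 input channels, 3 output channels, a 2×2 image.
x = [[[1.0, 2.0], [3.0, 4.0]],
     [[0.5, 0.0], [-1.0, 2.0]]]
weight = [[1.0, 0.0], [0.0, 1.0], [2.0, -1.0]]
bias = [0.0, 1.0, -0.5]

y = conv1x1(x, weight, bias)

# Every pixel of the conv output equals the dense layer applied to that
# pixel's channel vector, using the same weights at every position.
for i in range(2):
    for j in range(2):
        pixel = [x[c][i][j] for c in range(2)]
        assert [y[o][i][j] for o in range(3)] == dense(pixel, weight, bias)
```

In `nin_block` the two `nn.Conv2d(..., kernel_size=1)` layers play this role, each followed by a ReLU.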

2. The NiN model

"====================2. NiN model===================="

net = nn.Sequential(
    # 1 input channel, 96 output channels, 11×11 kernel, stride 4, no padding
    nin_block(1, 96, kernel_size=11, strides=4, padding=0),
    # Max pooling with a 3×3 window and stride 2
    nn.MaxPool2d(3, stride=2),
    nin_block(96, 256, kernel_size=5, strides=1, padding=2),
    nn.MaxPool2d(3, stride=2),
    nin_block(256, 384, kernel_size=3, strides=1, padding=1),
    nn.MaxPool2d(3, stride=2),
    nn.Dropout(0.5),
    # There are 10 label classes, so the last block has 10 output channels
    nin_block(384, 10, kernel_size=3, strides=1, padding=1),
    # Global average pooling layer
    nn.AdaptiveAvgPool2d((1, 1)),
    # Flatten the 4-D output into a 2-D output of shape (batch size, 10)
    nn.Flatten())
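The last two layers turn a 10-channel feature map into 10 class scores without any learned weights. As a minimal sketch of what global average pooling computes for a single sample, the plain-Python function below averages each channel over its spatial positions; `global_avg_pool` and the example values are made up for illustration and are not the PyTorch implementation.

```python
def global_avg_pool(x):
    """Average each channel of x, shaped [C][H][W], down to one number."""
    return [sum(sum(row) for row in plane) / (len(plane) * len(plane[0]))
            for plane in x]


# Two channels of a 2×2 feature map (values chosen for illustration).
feature_map = [[[1.0, 2.0], [3.0, 4.0]],
               [[0.0, 0.0], [0.0, 8.0]]]

scores = global_avg_pool(feature_map)
print(scores)  # [2.5, 2.0]
```

In the model above there are 10 channels instead of 2, so the result is one score per class.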

# Check the output shape of each block
X = torch.rand(size=(1, 1, 224, 224))
for layer in net:
    X = layer(X)
    print(layer.__class__.__name__, 'output shape:\t', X.shape)

'''
Output:
Sequential output shape: torch.Size([1, 96, 54, 54])
MaxPool2d output shape: torch.Size([1, 96, 26, 26])
Sequential output shape: torch.Size([1, 256, 26, 26])
MaxPool2d output shape: torch.Size([1, 256, 12, 12])
Sequential output shape: torch.Size([1, 384, 12, 12])
MaxPool2d output shape: torch.Size([1, 384, 5, 5])
Dropout output shape: torch.Size([1, 384, 5, 5])
Sequential output shape: torch.Size([1, 10, 5, 5])
AdaptiveAvgPool2d output shape: torch.Size([1, 10, 1, 1])
Flatten output shape: torch.Size([1, 10])
'''
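The spatial sizes in this output can be checked by hand with the usual formula floor((n + 2p - k) / s) + 1 for input size n, kernel size k, stride s, and padding p. The sketch below applies it layer by layer in plain Python; `out_size` and the `layers` table are hypothetical names introduced here, and the 1×1 convolutions, Dropout, and ReLU are left out because they do not change the spatial size.

```python
def out_size(n, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer (floor mode)."""
    return (n + 2 * padding - kernel) // stride + 1


# (description, kernel, stride, padding) for the first convolution of each
# NiN block and for each max pooling layer, in network order.
layers = [
    ("nin_block 11x11", 11, 4, 0),
    ("MaxPool2d", 3, 2, 0),
    ("nin_block 5x5", 5, 1, 2),
    ("MaxPool2d", 3, 2, 0),
    ("nin_block 3x3", 3, 1, 1),
    ("MaxPool2d", 3, 2, 0),
    ("nin_block 3x3", 3, 1, 1),
]

n = 224
sizes = []
for name, k, s, p in layers:
    n = out_size(n, k, s, p)
    sizes.append(n)
    print(f"{name}: {n} x {n}")
```

The computed sizes 54, 26, 26, 12, 12, 5, 5 match the printed shapes.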

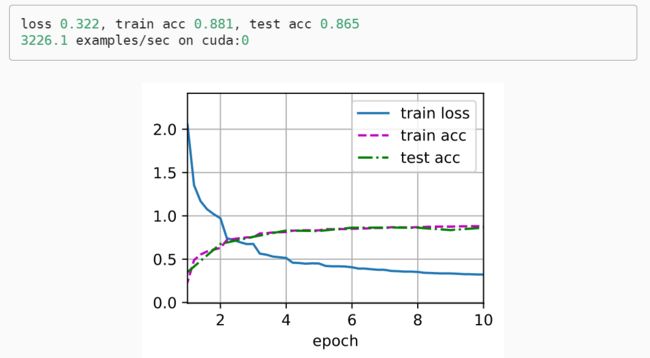

3. Training the model

"====================3. Training the model===================="

# Train the model on Fashion-MNIST. Training NiN is similar to training AlexNet and VGG.
lr, num_epochs, batch_size = 0.1, 10, 128
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=224)
d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())
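For a feel of what these hyperparameters imply, here is a back-of-the-envelope calculation in plain Python. It assumes the standard 60,000-image Fashion-MNIST training set, that the final partial batch is kept rather than dropped, and that inputs are stored as float32; the variable names are illustrative only.

```python
import math

num_train = 60_000  # size of the Fashion-MNIST training set
lr, num_epochs, batch_size = 0.1, 10, 128

# Assumes the final, smaller batch is kept rather than dropped.
steps_per_epoch = math.ceil(num_train / batch_size)
total_steps = steps_per_epoch * num_epochs

# One input batch after resizing: batch_size × 1 × 224 × 224 float32 values.
batch_bytes = batch_size * 1 * 224 * 224 * 4

print(steps_per_epoch, total_steps)
print(f"{batch_bytes / 2**20:.1f} MiB per input batch")
```

Resizing the 28×28 images to 224×224 makes each input 64 times larger, which is a large part of why training here is much slower than on the raw images.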