Hands-On Machine Learning: study notes, ch. 2

PS: These are personal notes; you may want to skip them.

Book materials: https://github.com/ageron/handson-ml2

2.1 Getting the data

import pandas as pd

data = pd.read_csv(r"C:\Users\cyan\Desktop\AI\ML\handson-ml2\datasets\housing\housing.csv")

data.head()

data.info()

RangeIndex: 20640 entries, 0 to 20639

Data columns (total 10 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 longitude 20640 non-null float64

1 latitude 20640 non-null float64

2 housing_median_age 20640 non-null float64

3 total_rooms 20640 non-null float64

4 total_bedrooms 20433 non-null float64

5 population 20640 non-null float64

6 households 20640 non-null float64

7 median_income 20640 non-null float64

8 median_house_value 20640 non-null float64

9 ocean_proximity 20640 non-null object

dtypes: float64(9), object(1)

memory usage: 1.6+ MB
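The info() output above lists 20433 non-null values for total_bedrooms out of 20640 entries, so 207 values are missing in that column. A minimal sketch of counting missing values directly, on a made-up three-row frame (the numbers are illustrative and `toy` is a hypothetical name, not part of the notebook):

```python
import numpy as np
import pandas as pd

# Hypothetical toy frame with one missing total_bedrooms value
toy = pd.DataFrame({
    "total_rooms": [880.0, 7099.0, 1467.0],
    "total_bedrooms": [129.0, np.nan, 190.0],
})

# isna() marks missing cells; sum() counts them per column
missing_per_column = toy.isna().sum()
print(missing_per_column)
```

Running `data.isna().sum()` on the real frame should give the same kind of per-column summary.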

data.columns

Index(['longitude', 'latitude', 'housing_median_age', 'total_rooms',

'total_bedrooms', 'population', 'households', 'median_income',

'median_house_value', 'ocean_proximity'],

dtype='object')

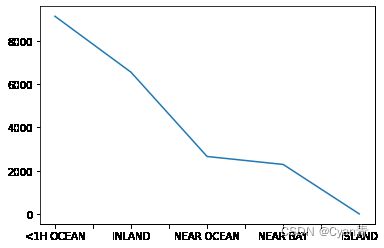

data['ocean_proximity'].value_counts().plot()

data.describe()

| | longitude | latitude | housing_median_age | total_rooms | total_bedrooms | population | households | median_income | median_house_value |
|---|---|---|---|---|---|---|---|---|---|
| count | 20640.000000 | 20640.000000 | 20640.000000 | 20640.000000 | 20433.000000 | 20640.000000 | 20640.000000 | 20640.000000 | 20640.000000 |
| mean | -119.569704 | 35.631861 | 28.639486 | 2635.763081 | 537.870553 | 1425.476744 | 499.539680 | 3.870671 | 206855.816909 |
| std | 2.003532 | 2.135952 | 12.585558 | 2181.615252 | 421.385070 | 1132.462122 | 382.329753 | 1.899822 | 115395.615874 |
| min | -124.350000 | 32.540000 | 1.000000 | 2.000000 | 1.000000 | 3.000000 | 1.000000 | 0.499900 | 14999.000000 |
| 25% | -121.800000 | 33.930000 | 18.000000 | 1447.750000 | 296.000000 | 787.000000 | 280.000000 | 2.563400 | 119600.000000 |
| 50% | -118.490000 | 34.260000 | 29.000000 | 2127.000000 | 435.000000 | 1166.000000 | 409.000000 | 3.534800 | 179700.000000 |
| 75% | -118.010000 | 37.710000 | 37.000000 | 3148.000000 | 647.000000 | 1725.000000 | 605.000000 | 4.743250 | 264725.000000 |
| max | -114.310000 | 41.950000 | 52.000000 | 39320.000000 | 6445.000000 | 35682.000000 | 6082.000000 | 15.000100 | 500001.000000 |

import matplotlib.pyplot as plt

# IPython magic for inline plots; it is not available when running a plain script in PyCharm. With it, plt.show() can be omitted.

%matplotlib inline

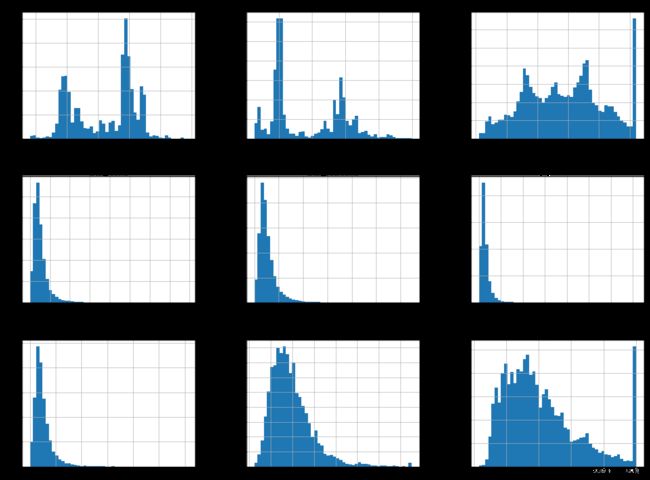

#data.hist(bins=100,figsize=(20,15),column = 'longitude') # plot a single column

# Plot histograms

data.hist(bins=50,figsize=(20,15)) # bins is the number of bars; each bar's height is the count of values that fall within its width

# plt.show()
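To make the meaning of bins concrete, here is a minimal sketch of the same kind of equal-width binning using np.histogram on a handful of made-up values (the array `values` is hypothetical, not taken from housing.csv):

```python
import numpy as np

# Hypothetical toy values
values = np.array([1, 2, 2, 3, 3, 3, 4, 9])

# 4 equal-width bins spanning [min, max] = [1, 9], so each bin is 2 wide
counts, edges = np.histogram(values, bins=4)

print(edges)   # bin boundaries
print(counts)  # number of values that fall into each bin
```

Each bin is closed on the left and open on the right, except the last one, which also includes the maximum value.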

# Split into training and test sets

import numpy as np

# Custom split function

def split_train_test(data, test_ratio):
    shuffled_indices = np.random.permutation(len(data)) # random permutation of 0 .. len(data)-1
    test_set_size = int(len(data) * test_ratio)
    test_indices = shuffled_indices[:test_set_size]
    train_indices = shuffled_indices[test_set_size:]
    return data.iloc[train_indices], data.iloc[test_indices]

train_data,test_data = split_train_test(data,.2)

len(train_data),len(test_data)

(16512, 4128)
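The custom function above draws a different permutation on every run, so the test set changes each time. One possible fix, sketched here as an assumption rather than the book's approach, is to pass a fixed seed to a local random generator. The name `split_train_test_seeded`, the `seed` parameter, and the `toy` frame are hypothetical:

```python
import numpy as np
import pandas as pd

def split_train_test_seeded(data, test_ratio, seed=42):
    """Hypothetical seeded variant of the custom split function."""
    rng = np.random.default_rng(seed)              # local, reproducible generator
    shuffled_indices = rng.permutation(len(data))  # shuffled positions 0 .. len(data)-1
    test_set_size = int(len(data) * test_ratio)
    test_indices = shuffled_indices[:test_set_size]
    train_indices = shuffled_indices[test_set_size:]
    return data.iloc[train_indices], data.iloc[test_indices]

# Toy frame standing in for the housing data
toy = pd.DataFrame({"x": range(10)})
train_a, test_a = split_train_test_seeded(toy, 0.2)
train_b, test_b = split_train_test_seeded(toy, 0.2)

print(len(train_a), len(test_a))
print(test_a.index.equals(test_b.index))  # same seed, same test rows
```

A fixed seed only keeps the split stable while the dataset itself is unchanged; adding or reordering rows changes which rows land in the test set.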

from sklearn.model_selection import train_test_split

# Split the dataset with sklearn; random_state plays a role similar to np.random.seed(42), so every run yields the same test set

train_set, test_set = train_test_split(data, test_size=0.2, random_state=42)

len(train_set),len(test_set)

(16512, 4128)
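A quick way to check the random_state claim is to split the same data twice and compare the results. A minimal sketch on a made-up frame (`toy` is hypothetical):

```python
import pandas as pd
from sklearn.model_selection import train_test_split

# Toy frame standing in for the housing data
toy = pd.DataFrame({"x": range(100)})

# Two independent calls with the same random_state
_, test_1 = train_test_split(toy, test_size=0.2, random_state=42)
_, test_2 = train_test_split(toy, test_size=0.2, random_state=42)

same_split = test_1.index.equals(test_2.index)
print(len(test_1), same_split)
```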

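# Purely random sampling is not always a sound way to build the test set; its distribution should match that of the full dataset

# Create an income category attribute so that the split can follow the distribution of median income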

data["income_cat"] = pd.cut(data["median_income"],
                            bins=[0., 1.5, 3.0, 4.5, 6., np.inf],
                            labels=[1, 2, 3, 4, 5])
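To see how pd.cut assigns categories with these bin edges, here is a small sketch on a few made-up income values (the series `incomes` is hypothetical). By default each interval is open on the left and closed on the right, so 1.5 falls into category 1 and 4.5 into category 3:

```python
import numpy as np
import pandas as pd

# Hypothetical median_income values
incomes = pd.Series([0.5, 1.5, 2.0, 4.5, 5.9, 15.0])

# Same bin edges and labels as in the notes
cats = pd.cut(incomes,
              bins=[0., 1.5, 3.0, 4.5, 6., np.inf],
              labels=[1, 2, 3, 4, 5])

print(cats.tolist())  # [1, 1, 2, 3, 4, 5]
```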

# Stratified sampling

from sklearn.model_selection import StratifiedShuffleSplit

split = StratifiedShuffleSplit(n_splits=1, test_size=0.2, random_state=42)

for train_index, test_index in split.split(data, data["income_cat"]):
    strat_train_set = data.loc[train_index]
    strat_test_set = data.loc[test_index]

# Compare the income category proportions in the test set with those in the full dataset

strat_test_set["income_cat"].value_counts() / len(strat_test_set),data["income_cat"].value_counts() / len(data)

(3 0.350533

2 0.318798

4 0.176357

5 0.114341

1 0.039971

Name: income_cat, dtype: float64,

3 0.350581

2 0.318847

4 0.176308

5 0.114438

1 0.039826

Name: income_cat, dtype: float64)
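The proportions above match to about three decimal places. To see how a purely random split compares, the sketch below builds both kinds of test set on synthetic data and puts the proportions side by side. It assumes a made-up category distribution and uses the `stratify` argument of train_test_split as a shortcut instead of StratifiedShuffleSplit; `toy`, `proportions`, and `compare` are hypothetical names:

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)

# Synthetic stand-in for income_cat with unequal category sizes
toy = pd.DataFrame({
    "income_cat": rng.choice([1, 2, 3, 4, 5], size=2000,
                             p=[0.04, 0.32, 0.35, 0.18, 0.11])
})

# Purely random test set versus one stratified on income_cat
_, rand_test = train_test_split(toy, test_size=0.2, random_state=42)
_, strat_test = train_test_split(toy, test_size=0.2, random_state=42,
                                 stratify=toy["income_cat"])

def proportions(df):
    """Share of rows in each income category."""
    return df["income_cat"].value_counts(normalize=True).sort_index()

compare = pd.DataFrame({
    "overall": proportions(toy),
    "stratified": proportions(strat_test),
    "random": proportions(rand_test),
})
# Relative deviation from the overall proportions, in percent
compare["strat_err_%"] = 100 * (compare["stratified"] / compare["overall"] - 1)
compare["rand_err_%"] = 100 * (compare["random"] / compare["overall"] - 1)
print(compare)
```

The stratified column should track the overall proportions closely by construction, while the random column can drift, most visibly for the small categories.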

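# Remove the added income_cat attribute

strat_test_set.drop("income_cat",axis=1,inplace=True)

strat_train_set.drop("income_cat",axis=1,inplace=True)

# Equivalent loop form, a bit more compact. Run it instead of the two lines above, not after them: dropping an already removed column raises a KeyError.

for set_ in (strat_train_set, strat_test_set):
    set_.drop("income_cat", axis=1, inplace=True)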
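If the cell may be run more than once, one option is to drop the column without inplace and pass errors="ignore", so that a missing column is skipped instead of raising. A minimal sketch on made-up frames (`toy_train` and `toy_test` are hypothetical stand-ins for the stratified sets):

```python
import pandas as pd

# Hypothetical stand-ins for strat_train_set and strat_test_set
toy_train = pd.DataFrame({"median_income": [1.0, 2.0], "income_cat": [1, 2]})
toy_test = pd.DataFrame({"median_income": [3.0], "income_cat": [2]})

# drop() returns a new frame; errors="ignore" skips columns that are absent
toy_train, toy_test = (
    df.drop(columns="income_cat", errors="ignore")
    for df in (toy_train, toy_test)
)

# Running the same drop again is harmless
toy_train = toy_train.drop(columns="income_cat", errors="ignore")

print(list(toy_train.columns), list(toy_test.columns))
```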