Note: this deployment guide is based on https://github.com/opsnull/follow-me-install-kubernetes-cluster. Please consider giving the author a star.

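kube-proxy runs on every worker node. It watches the apiserver for changes to Service and Endpoints objects and creates routing rules that load-balance traffic across each service's backends.

This document describes how to deploy kube-proxy in ipvs mode.

Note: unless stated otherwise, every operation in this document is run on the k8s-master1 node, which then distributes files to the remote nodes and runs commands on them.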

Create the kube-proxy certificate

Create the certificate signing request:

```bash
cd /opt/k8s/work
cat > kube-proxy-csr.json <<EOF
{
  "CN": "system:kube-proxy",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "BeiJing",
      "L": "BeiJing",
      "O": "k8s",
      "OU": "4Paradigm"
    }
  ]
}
EOF
```

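- CN: sets the certificate's User to system:kube-proxy;
- The predefined ClusterRoleBinding system:node-proxier binds User system:kube-proxy to ClusterRole system:node-proxier, which grants permission to call the proxy-related kube-apiserver APIs;
- kube-proxy uses this certificate only as a client certificate, so the hosts field is left empty.

Generate the certificate and private key: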

```bash
cd /opt/k8s/work
cfssl gencert -ca=/opt/k8s/work/ca.pem \
  -ca-key=/opt/k8s/work/ca-key.pem \
  -config=/opt/k8s/work/ca-config.json \
  -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy
ls kube-proxy*
```
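Because the RBAC binding keys off the certificate's CN, it can be worth confirming the subject of the generated kube-proxy.pem before building the kubeconfig. A minimal sketch, assuming the openssl CLI is installed; cert_has_subject is a hypothetical helper, not part of cfssl:

```bash
#!/usr/bin/env bash
# Return 0 if the PEM certificate $1 has CN=$2 and, when given, O=$3.
# With -nameopt RFC2253 openssl prints e.g. "subject=CN=system:kube-proxy,OU=4Paradigm,O=k8s,...".
cert_has_subject() {
  local cert=$1 cn=$2 org=${3:-}
  local subject
  subject=$(openssl x509 -in "$cert" -noout -subject -nameopt RFC2253) || return 1
  # The CN must match exactly: it is followed by a comma or ends the line.
  echo "$subject" | grep -Eq "CN=${cn}(,|$)" || return 1
  if [ -n "$org" ]; then
    # O= must be a whole RDN, so OU=... does not count as a match.
    echo "$subject" | grep -Eq "(^|[=, ])O=${org}(,|$)" || return 1
  fi
}
```

For example, `cert_has_subject kube-proxy.pem system:kube-proxy k8s && echo OK` run in /opt/k8s/work should print OK.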

Create and distribute the kubeconfig file

```bash
cd /opt/k8s/work
source /opt/k8s/bin/environment.sh
kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/k8s/work/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \
  --client-certificate=kube-proxy.pem \
  --client-key=kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig
```

--embed-certs=true: embeds the contents of ca.pem, kube-proxy.pem and kube-proxy-key.pem in the generated kube-proxy.kubeconfig file (without it, only the certificate file paths are written);
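With --embed-certs=true, kubectl stores the base64-encoded data under the *-data keys instead of file paths, so the kubeconfig can be copied to other nodes on its own. A rough text-level check, assuming the standard key names (certificate-authority-data, client-certificate-data, client-key-data); kubeconfig_is_embedded is a hypothetical helper, not a kubectl command, and it does not parse YAML:

```bash
#!/usr/bin/env bash
# Return 0 if the kubeconfig $1 carries certificate data inline rather than file paths.
kubeconfig_is_embedded() {
  local cfg=$1 key
  for key in certificate-authority-data client-certificate-data client-key-data; do
    grep -q "^[[:space:]]*${key}:" "$cfg" || { echo "missing ${key}" >&2; return 1; }
  done
  # Path-style keys (the same names without the -data suffix) should not appear.
  if grep -Eq '^[[:space:]]*(certificate-authority|client-certificate|client-key):' "$cfg"; then
    echo "found file-path references" >&2
    return 1
  fi
}
```

For example, `kubeconfig_is_embedded kube-proxy.kubeconfig && echo embedded`.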

Distribute the kubeconfig file:

```bash
cd /opt/k8s/work
source /opt/k8s/bin/environment.sh
for node_name in k8s-node1 k8s-node2 k8s-node3
do
  echo ">>> ${node_name}"
  scp kube-proxy.kubeconfig root@${node_name}:/etc/kubernetes/
done
```

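Create the kube-proxy configuration file

Since v1.10, some kube-proxy parameters can be set in a configuration file instead of on the command line. You can generate such a file with the --write-config-to option, or consult the source file that defines the kubeproxyconfig types.

Create the kube-proxy config file template: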

```bash
cd /opt/k8s/work
# environment.sh defines CLUSTER_CIDR, which the unquoted heredoc expands below
source /opt/k8s/bin/environment.sh
cat > kube-proxy-config.yaml.template <<EOF
kind: KubeProxyConfiguration
apiVersion: kubeproxy.config.k8s.io/v1alpha1
clientConnection:
  kubeconfig: "/etc/kubernetes/kube-proxy.kubeconfig"
bindAddress: ##NODE_IP##
clusterCIDR: ${CLUSTER_CIDR}
healthzBindAddress: ##NODE_IP##:10256
hostnameOverride: ##NODE_NAME##
metricsBindAddress: ##NODE_IP##:10249
mode: "ipvs"
EOF
```

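- bindAddress: the listen address;
- clientConnection.kubeconfig: the kubeconfig file used to connect to the apiserver;
- clusterCIDR: kube-proxy uses --cluster-cidr to tell cluster-internal traffic from external traffic; kube-proxy SNATs requests to Service IPs only when --cluster-cidr or --masquerade-all is specified;
- hostnameOverride: must match the value used by kubelet; otherwise kube-proxy cannot find its Node after starting and will not create any ipvs rules;
- mode: use ipvs mode;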
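The clusterCIDR rule comes down to a subnet-membership test on the source address of a request to a Service IP. The pure-bash sketch below only illustrates that test; it is not kube-proxy's implementation, and 172.30.0.0/16 is a hypothetical stand-in for the real CLUSTER_CIDR from environment.sh:

```bash
#!/usr/bin/env bash
# Convert a dotted-quad IPv4 address (e.g. 172.30.22.2) to a 32-bit integer.
ip_to_int() {
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  echo $(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))
}

# Return 0 if IPv4 address $1 lies inside CIDR $2 (e.g. 172.30.0.0/16).
in_cidr() {
  local ip=$1 cidr=$2
  local net=${cidr%/*} bits=${cidr#*/}
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}

# Label a source address relative to the cluster CIDR ($2, else CLUSTER_CIDR,
# else the hypothetical default 172.30.0.0/16).
classify_source() {
  local cidr=${2:-${CLUSTER_CIDR:-172.30.0.0/16}}
  if in_cidr "$1" "$cidr"; then
    echo "internal (pod network)"
  else
    echo "external"
  fi
}
```

Under these assumptions a Pod address such as 172.30.1.2 is classified as internal, while a node address such as 192.168.161.171 is external, which is the kind of source kube-proxy would masquerade.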

Create and distribute the kube-proxy configuration file for each node:

```bash
cd /opt/k8s/work
source /opt/k8s/bin/environment.sh
# Indices 3..5 of NODE_NAMES/NODE_IPS are assumed to be the worker nodes (k8s-node1..k8s-node3)
for (( i=3; i < 6; i++ ))
do
  echo ">>> ${NODE_NAMES[i]}"
  sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" kube-proxy-config.yaml.template > kube-proxy-config-${NODE_NAMES[i]}.yaml.template
  scp kube-proxy-config-${NODE_NAMES[i]}.yaml.template root@${NODE_NAMES[i]}:/etc/kubernetes/kube-proxy-config.yaml
done
```
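Since a mismatched hostnameOverride silently leaves a node without ipvs rules, it may be worth checking each rendered file before relying on it. A minimal sketch, assuming the expected node name is the same NODE_NAMES entry that kubelet registered with; check_rendered_config is a hypothetical helper:

```bash
#!/usr/bin/env bash
# Sanity-check a rendered kube-proxy config: no ##...## placeholders left,
# and the top-level hostnameOverride equals the expected node name $2.
check_rendered_config() {
  local file=$1 node_name=$2 value
  if grep -q '##[A-Z_]*##' "$file"; then
    echo "${file}: unreplaced placeholder" >&2
    return 1
  fi
  # Read the hostnameOverride value and strip optional double quotes.
  value=$(awk -F': *' '$1 == "hostnameOverride" { gsub(/"/, "", $2); print $2 }' "$file")
  if [ "$value" != "$node_name" ]; then
    echo "${file}: hostnameOverride='${value}', expected '${node_name}'" >&2
    return 1
  fi
}
```

For example, after sourcing environment.sh: `for (( i=3; i < 6; i++ )); do check_rendered_config kube-proxy-config-${NODE_NAMES[i]}.yaml.template ${NODE_NAMES[i]}; done`.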

Example of the substituted kube-proxy-config.yaml file: kube-proxy.config.yaml

Create and distribute the kube-proxy systemd unit file

```bash
cd /opt/k8s/work
source /opt/k8s/bin/environment.sh
cat > kube-proxy.service <<EOF
[Unit]
Description=Kubernetes Kube-Proxy Server
Documentation=https://github.com/GoogleCloudPlatform/kubernetes
After=network.target

[Service]
WorkingDirectory=${K8S_DIR}/kube-proxy
ExecStart=/opt/k8s/bin/kube-proxy \\
  --config=/etc/kubernetes/kube-proxy-config.yaml \\
  --logtostderr=true \\
  --v=2
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF
```
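The heredoc delimiter EOF is unquoted, so while writing kube-proxy.service the shell expands ${K8S_DIR} and turns each \\ into a single line-continuation backslash. If environment.sh was not sourced, or the delimiter was quoted, the unit ends up with an empty or literal path. A rough grep-based check, with check_unit_file as a hypothetical helper:

```bash
#!/usr/bin/env bash
# Look for leftovers of a failed heredoc expansion in a generated unit file.
check_unit_file() {
  local unit=$1
  # A literal ${...} means the variable was not expanded (quoted heredoc).
  if grep -q '\${' "$unit"; then
    echo "${unit}: unexpanded variable" >&2; return 1
  fi
  # Two backslashes at the end of a line mean \\ was not collapsed to \.
  if grep -q '\\\\$' "$unit"; then
    echo "${unit}: double backslash at line end" >&2; return 1
  fi
  # An empty K8S_DIR turns the working directory into /kube-proxy.
  if grep -q '^WorkingDirectory=/kube-proxy$' "$unit"; then
    echo "${unit}: K8S_DIR looks empty" >&2; return 1
  fi
}
```

For example, `check_unit_file kube-proxy.service && echo OK` before distributing the file.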

Example of the resulting unit file: kube-proxy.service

Distribute the kube-proxy systemd unit file:

```bash
cd /opt/k8s/work
source /opt/k8s/bin/environment.sh
for node_name in k8s-node1 k8s-node2 k8s-node3
do
  echo ">>> ${node_name}"
  scp kube-proxy.service root@${node_name}:/etc/systemd/system/
done
```

Start the kube-proxy service

```bash
cd /opt/k8s/work
source /opt/k8s/bin/environment.sh
for node_ip in 192.168.161.170 192.168.161.171 192.168.161.172
do
  echo ">>> ${node_ip}"
  ssh root@${node_ip} "mkdir -p ${K8S_DIR}/kube-proxy"
  ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-proxy && systemctl restart kube-proxy"
done
```

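- The working directory must be created first, because the unit's WorkingDirectory points to it and systemd cannot start the service otherwise;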

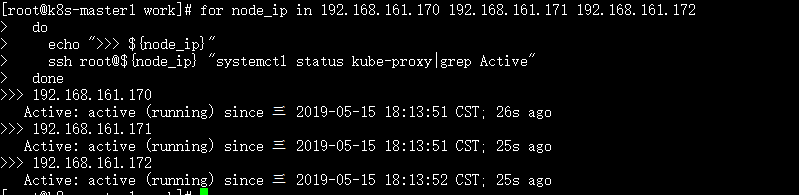
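The start step, like the distribution steps, loops over the nodes with ssh, and the mkdir has to succeed before the restart makes sense. A sketch of a hypothetical helper that runs one command per node and stops at the first failure; the SSH override is only there so the loop can be dry-run (for example SSH=echo):

```bash
#!/usr/bin/env bash
# Hypothetical helper: run one command on each node over ssh, in order,
# and stop at the first node where it fails.
run_on_nodes() {
  local cmd=$1 node
  shift
  for node in "$@"; do
    echo ">>> ${node}"
    # SSH is unquoted on purpose so a value such as "ssh -o ConnectTimeout=5" splits into words.
    ${SSH:-ssh} "root@${node}" "$cmd" || { echo "failed on ${node}" >&2; return 1; }
  done
}

# Create the WorkingDirectory and (re)start kube-proxy in one remote command;
# the && chain skips the restart if mkdir fails. K8S_DIR comes from environment.sh.
start_kube_proxy() {
  if [ -z "${K8S_DIR:-}" ]; then
    echo "K8S_DIR is not set; source /opt/k8s/bin/environment.sh first" >&2
    return 1
  fi
  run_on_nodes "mkdir -p ${K8S_DIR}/kube-proxy && systemctl daemon-reload && systemctl enable kube-proxy && systemctl restart kube-proxy" "$@"
}
```

For example, `start_kube_proxy 192.168.161.170 192.168.161.171 192.168.161.172` after sourcing environment.sh.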

Check the startup result

```bash
source /opt/k8s/bin/environment.sh
for node_ip in 192.168.161.170 192.168.161.171 192.168.161.172
do
  echo ">>> ${node_ip}"
  ssh root@${node_ip} "systemctl status kube-proxy|grep Active"
done
```

Make sure the status is active (running); otherwise, check the logs on that node to find the cause:

```bash
journalctl -u kube-proxy
```
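The status check and the log lookup can be combined so that logs are fetched only for nodes that are not running. A sketch with hypothetical function names, matching the same Active line that the status loop greps for:

```bash
#!/usr/bin/env bash
# Return 0 if a `systemctl status` "Active:" line reports "active (running)".
is_running_line() {
  case "$1" in
    *"Active: active (running)"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Hypothetical wrapper: report each node's state and, for nodes that are not
# running, print the last 30 lines of the kube-proxy journal.
check_kube_proxy() {
  local node_ip line
  for node_ip in "$@"; do
    line=$(ssh "root@${node_ip}" "systemctl status kube-proxy | grep Active")
    if is_running_line "$line"; then
      echo ">>> ${node_ip}: running"
    else
      echo ">>> ${node_ip}: not running, recent logs:"
      ssh "root@${node_ip}" "journalctl -u kube-proxy --no-pager -n 30"
    fi
  done
}
```

For example, `check_kube_proxy 192.168.161.170 192.168.161.171 192.168.161.172`.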

Check the listening ports and metrics

```
[root@k8s-node2 work]# sudo netstat -lnpt|grep kube-prox
tcp        0      0 192.168.161.171:10256   0.0.0.0:*               LISTEN      3295/kube-proxy
tcp        0      0 192.168.161.171:10249   0.0.0.0:*               LISTEN      3295/kube-proxy
```

- 10249: HTTP Prometheus metrics port;
- 10256: HTTP healthz port;
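Beyond confirming that the ports are listening, both endpoints can be queried over HTTP: kube-proxy serves /healthz on the healthz port and Prometheus metrics on /metrics. A small sketch, assuming curl is installed on the machine running it; the function names are hypothetical:

```bash
#!/usr/bin/env bash
# Print the kube-proxy healthz and metrics URLs for node IP $1
# (ports 10256 and 10249, matching healthzBindAddress and metricsBindAddress).
kube_proxy_urls() {
  local ip=$1
  echo "http://${ip}:10256/healthz"
  echo "http://${ip}:10249/metrics"
}

# Query both endpoints with a 5-second timeout and show the first lines of each reply.
probe_kube_proxy() {
  local url
  for url in $(kube_proxy_urls "$1"); do
    echo ">>> ${url}"
    curl -s --max-time 5 "$url" | head -n 5
  done
}
```

For example, `probe_kube_proxy 192.168.161.171` on a host that can reach k8s-node2.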

View the ipvs routing rules

```bash
source /opt/k8s/bin/environment.sh
for node_ip in 192.168.161.170 192.168.161.171 192.168.161.172
do
  echo ">>> ${node_ip}"
  ssh root@${node_ip} "/usr/sbin/ipvsadm -ln"
done
```

Expected output:

```
[root@k8s-master1 work]# for node_ip in 192.168.161.170 192.168.161.171 192.168.161.172
> do
> echo ">>> ${node_ip}"
> ssh root@${node_ip} "/usr/sbin/ipvsadm -ln"
> done
>>> 192.168.161.170
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  10.254.0.1:443 rr
  -> 192.168.161.150:6443         Masq    1      0          0
  -> 192.168.161.151:6443         Masq    1      0          0
  -> 192.168.161.152:6443         Masq    1      0          0
>>> 192.168.161.171
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  10.254.0.1:443 rr
  -> 192.168.161.150:6443         Masq    1      0          0
  -> 192.168.161.151:6443         Masq    1      0          0
  -> 192.168.161.152:6443         Masq    1      0          0
>>> 192.168.161.172
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  10.254.0.1:443 rr
  -> 192.168.161.150:6443         Masq    1      0          0
  -> 192.168.161.151:6443         Masq    1      0          0
  -> 192.168.161.152:6443         Masq    1      0          0
```

This shows that all requests to port 443 of the kubernetes cluster IP (10.254.0.1) are forwarded, round-robin, to port 6443 of the kube-apiserver instances.
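To check the forwarding without reading the whole table by eye, the real servers behind the kubernetes Service can be pulled out of ipvsadm -ln output. A minimal awk-based sketch; ipvs_backends is a hypothetical helper and expects the output of one node at a time:

```bash
#!/usr/bin/env bash
# Read `ipvsadm -ln` output on stdin and print the real servers ("->" lines)
# listed under the virtual service given as $1 (e.g. 10.254.0.1:443).
ipvs_backends() {
  awk -v vip="$1" '
    # A TCP or UDP line starts a new virtual service; remember whether it is the one we want.
    $1 == "TCP" || $1 == "UDP" { inside = ($2 == vip); next }
    # Any other non-arrow line (banner, column header, >>> marker) ends the current service.
    $1 != "->" { inside = 0; next }
    # Real-server lines start with "->"; the column header comes before any service line.
    inside { print $2 }
  '
}
```

For example, `ssh root@192.168.161.170 /usr/sbin/ipvsadm -ln | ipvs_backends 10.254.0.1:443` should list 192.168.161.150:6443, 192.168.161.151:6443 and 192.168.161.152:6443.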