DenseNet 和 FractalNet学习笔记

文章目录

- 网络结构

-

- 模型细节

-

- 下采样

- 增长率

- 代码实现

- FractalNet 模型(2016)

网络结构

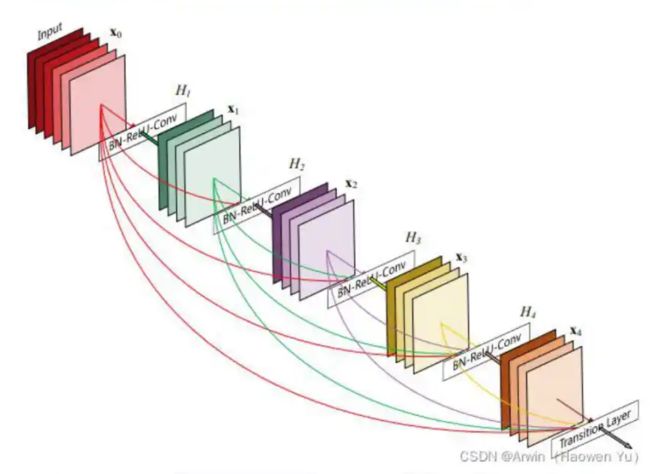

- 假设输入为一个图片X0,经过一个L层的神经网络,第l层的特征输出记作Xl,那么残差连接的公式如下所示: x l = H l ( X l − 1 ) + X l − 1 x_l=H_l(X_l-1)+X_{l-1} xl=Hl(Xl−1)+Xl−1

- 对于ResNet而言,I层的输出是!-1层的输出加上对I-1层输出的非线性变换。

对与DensNet而言,I层的输出是之前所有层的输出集合,公式如下所示: x l = H l ( [ x o , x 1 , . . , x l − 1 ] ) x_l = H_l([x_o,x_1,.., x_{l-1}]) xl=Hl([xo,x1,..,xl−1]) - 其中[]代表concatenation(拼接),既将第0层到 l-1层的所有输出feature map在channel维度上组合在一起.这里所用到的非线性变换H为BN+ReLU+Conv(3×3)的组合。所以从这两个公式就能看出DenseNet和ResNet在本质上的区别。

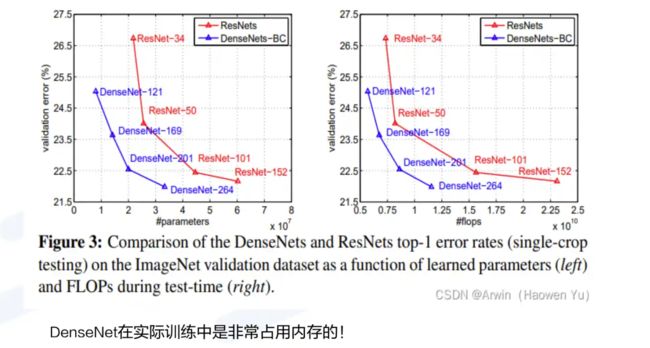

- 虽然这些残差模块中的连线很多看起来很夸张,但是它们代表的操作只是一个空间上的拼接,所以Densenet相比传统的卷积神经网络可训练参数量更少,只是为了在网络深层实现拼接操作,必须把之前的计算结果保存下来,这就比较占内存了。这是DenseNet的一大缺点。

模型细节

下采样

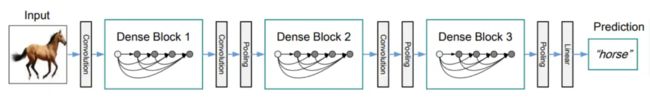

- 由于在DenseNet中需要对不同层的feature map进行cat操作,所以需要不同层的feature map保持相同的feature size,这就限制了网络中Down sampling的实现.为了使用Down sampling,作者将DenseNet分为多个stage,每个stage包含多个Dense blocks,如下图所示:在同一个Denseblock中要求feature size保持相同大小,在不同Denseblock之间设置transition layers实现Down sampling,在作者的实验中transition layer由BN +Conv(kernel size 1×1)+ average-pooling(kernel size 2 × 2)组成.注意这里1X1是为了对channel数量进行降维;而池化才是为了降低特征图的尺寸。

增长率

- 在Denseblock中,假设每一个卷积操作的输出为K个feature map,那么第i层网络的输入便为(i- 1)×K+ (上一个Dense Block的输出channel) ,这个K在论文中的名字叫做Growthrate,默认是等于32的,这里我们可以看到DenseNet和现有网络的一个主要的不同点:DenseNet可以接受较少的特征图数量(32)作为网络层的输出。

- 下采样是为了特征的转移,减少计算量是次要的

- FLOPS:计算复杂度

代码实现

import torch.nn as nn

import torch

class BasicBlock(nn.Module):

expansion = 1

def __init__(self, in_channel, out_channel, stride=1, downsample=None, **kwargs):

super(BasicBlock, self).__init__()

self.conv1 = nn.Conv2d(in_channels=in_channel, out_channels=out_channel, kernel_size=3, stride=stride, padding=1, bias=False)

self.bn1 = nn.BatchNorm2d(out_channel)

self.relu = nn.ReLU()

self.conv2 = nn.Conv2d(in_channels=out_channel, out_channels=out_channel, kernel_size=3, stride=1, padding=1, bias=False)

self.bn2 = nn.BatchNorm2d(out_channel)

self.downsample = downsample

def forward(self, x):

identity = x

if self.downsample is not None:

identity = self.downsample(x)

out = self.conv1(x)

out = self.bn1(out)

out = self.relu(out)

out = self.conv2(out)

out = self.bn2(out)

out += identity

out = self.relu(out)

return out

class Bottleneck(nn.Module):

"""

注意:原论文中,在虚线残差结构的主分支上,第一个1x1卷积层的步距是2,第二个3x3卷积层步距是1。

但在pytorch官方实现过程中是第一个1x1卷积层的步距是1,第二个3x3卷积层步距是2,

这么做的好处是能够在top1上提升大概0.5%的准确率。

可参考Resnet v1.5 https://ngc.nvidia.com/catalog/model-scripts/nvidia:resnet_50_v1_5_for_pytorch

"""

expansion = 4

def __init__(self, in_channel, out_channel, stride=1, downsample=None,

groups=1, width_per_group=64):

super(Bottleneck, self).__init__()

width = int(out_channel * (width_per_group / 64.)) * groups

self.conv1 = nn.Conv2d(in_channels=in_channel, out_channels=width, kernel_size=1, stride=1, bias=False) # squeeze channels

self.bn1 = nn.BatchNorm2d(width)

# -----------------------------------------

self.conv2 = nn.Conv2d(in_channels=width, out_channels=width, groups=groups, kernel_size=3, stride=stride, bias=False, padding=1)

self.bn2 = nn.BatchNorm2d(width)

# -----------------------------------------

self.conv3 = nn.Conv2d(in_channels=width, out_channels=out_channel*self.expansion, kernel_size=1, stride=1, bias=False) # unsqueeze channels

self.bn3 = nn.BatchNorm2d(out_channel*self.expansion)

self.relu = nn.ReLU(inplace=True)

self.downsample = downsample

def forward(self, x):

identity = x

if self.downsample is not None:

identity = self.downsample(x)

out = self.conv1(x)

out = self.bn1(out)

out = self.relu(out)

out = self.conv2(out)

out = self.bn2(out)

out = self.relu(out)

out = self.conv3(out)

out = self.bn3(out)

out += identity

out = self.relu(out)

return out

class ResNet(nn.Module):

def __init__(self,

block,

blocks_num,

num_classes=1000,

include_top=True,

groups=1,

width_per_group=64):

super(ResNet, self).__init__()

self.include_top = include_top

self.in_channel = 64

self.groups = groups

self.width_per_group = width_per_group

self.conv1 = nn.Conv2d(3, self.in_channel, kernel_size=7, stride=2, padding=3, bias=False)

self.bn1 = nn.BatchNorm2d(self.in_channel)

self.relu = nn.ReLU(inplace=True)

self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)

self.layer1 = self._make_layer(block, 64, blocks_num[0])

self.layer2 = self._make_layer(block, 128, blocks_num[1], stride=2)

self.layer3 = self._make_layer(block, 256, blocks_num[2], stride=2)

self.layer4 = self._make_layer(block, 512, blocks_num[3], stride=2)

if self.include_top:

self.avgpool = nn.AdaptiveAvgPool2d((1, 1)) # output size = (1, 1)

self.fc = nn.Linear(512 * block.expansion, num_classes)

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')

def _make_layer(self, block, channel, block_num, stride=1):

downsample = None

if stride != 1 or self.in_channel != channel * block.expansion:

downsample = nn.Sequential(

nn.Conv2d(self.in_channel, channel * block.expansion, kernel_size=1, stride=stride, bias=False),

nn.BatchNorm2d(channel * block.expansion))

layers = []

layers.append(block(self.in_channel,

channel,

downsample=downsample,

stride=stride,

groups=self.groups,

width_per_group=self.width_per_group))

self.in_channel = channel * block.expansion

for _ in range(1, block_num):

layers.append(block(self.in_channel,

channel,

groups=self.groups,

width_per_group=self.width_per_group))

return nn.Sequential(*layers)

def forward(self, x):

x = self.conv1(x)

x = self.bn1(x)

x = self.relu(x)

x = self.maxpool(x)

x = self.layer1(x)

x = self.layer2(x)

x = self.layer3(x)

x = self.layer4(x)

if self.include_top:

x = self.avgpool(x)

x = torch.flatten(x, 1)

x = self.fc(x)

return x

# # resnet34 pre-train parameters https://download.pytorch.org/models/resnet34-333f7ec4.pth

# def resnet_samll(num_classes=1000, include_top=True):

# return ResNet(BasicBlock, [3, 4, 6, 3], num_classes=num_classes, include_top=include_top)

# # resnet50 pre-train parameters https://download.pytorch.org/models/resnet50-19c8e357.pth

# def resnet(num_classes=1000, include_top=True):

# return ResNet(Bottleneck, [3, 4, 6, 3], num_classes=num_classes, include_top=include_top)

# # resnet101 pre-train parameters https://download.pytorch.org/models/resnet101-5d3b4d8f.pth

# def resnet_big(num_classes=1000, include_top=True):

# return ResNet(Bottleneck, [3, 4, 23, 3], num_classes=num_classes, include_top=include_top)

# # resneXt pre-train parameters https://download.pytorch.org/models/resnext50_32x4d-7cdf4587.pth

# def resnext(num_classes=1000, include_top=True):

# groups = 32

# width_per_group = 4

# return ResNet(Bottleneck, [3, 4, 6, 3],

# num_classes=num_classes,

# include_top=include_top,

# groups=groups,

# width_per_group=width_per_group)

# # resneXt_big pre-train parameters https://download.pytorch.org/models/resnext101_32x8d-8ba56ff5.pth

# def resnext_big(num_classes=1000, include_top=True):

# groups = 32

# width_per_group = 8

# return ResNet(Bottleneck, [3, 4, 23, 3],

# num_classes=num_classes,

# include_top=include_top,

# groups=groups,

# width_per_group=width_per_group)

def resnet34(num_classes=1000, include_top=True):

# https://download.pytorch.org/models/resnet34-333f7ec4.pth

return ResNet(BasicBlock, [3, 4, 6, 3], num_classes=num_classes, include_top=include_top)

def resnet50(num_classes=1000, include_top=True):

# https://download.pytorch.org/models/resnet50-19c8e357.pth

return ResNet(Bottleneck, [3, 4, 6, 3], num_classes=num_classes, include_top=include_top)

def resnet101(num_classes=1000, include_top=True):

# https://download.pytorch.org/models/resnet101-5d3b4d8f.pth

return ResNet(Bottleneck, [3, 4, 23, 3], num_classes=num_classes, include_top=include_top)

def resnext50_32x4d(num_classes=1000, include_top=True):

# https://download.pytorch.org/models/resnext50_32x4d-7cdf4587.pth

groups = 32

width_per_group = 4

return ResNet(Bottleneck, [3, 4, 6, 3],

num_classes=num_classes,

include_top=include_top,

groups=groups,

width_per_group=width_per_group)

def resnext101_32x8d(num_classes=1000, include_top=True):

# https://download.pytorch.org/models/resnext101_32x8d-8ba56ff5.pth

groups = 32

width_per_group = 8

return ResNet(Bottleneck, [3, 4, 23, 3],

num_classes=num_classes,

include_top=include_top,

groups=groups,

width_per_group=width_per_group)

FractalNet 模型(2016)

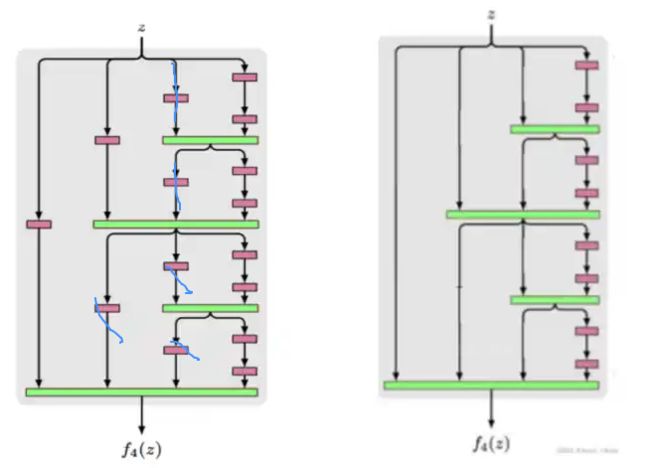

- FractalNet(分型网络),2016年Gustav Larsson首次提出,这个网络跟DenseNet有些类似,因此这里做简单的介绍。

- 分形网络不像resNet那样连一条捷径,而是通过不同长度的子路径组合,网络选择合适的子路径集合提升模型表现

- 分形网络体现的一种特性为:浅层子网提供更迅速的回答,深层子网提供更准确的回答。

- 这里的fC不是CNN中常用到的全连接层,而是指分形次数为C的模块。

- fC模块的表达式如下:

f 1 = c o n v ( z ) f_1=conv(z) f1=conv(z) f C + 1 = [ ( f C ⋅ f C ) ( z ) ] ⊕ [ c o n v ( z ) ] f_{C+1}=[(f_C·f_C)(z)]\oplus[conv(z)] fC+1=[(fC⋅fC)(z)]⊕[conv(z)] - 其中,⊕是一个聚合(join)操作,本文推荐使用均值,而非常见的concat或 addition。

- 网络结构看完了,FratalNet并不存在像ResNet那样skip connect的结构。但是,实际上如果把fC模块改成:

f C + 1 = [ ( f C ⋅ f C ) ( z ) ] ⊕ z f_{C+1}=[(f_C·f_C)(z)]\oplus z fC+1=[(fC⋅fC)(z)]⊕z - 就是 DenseNet

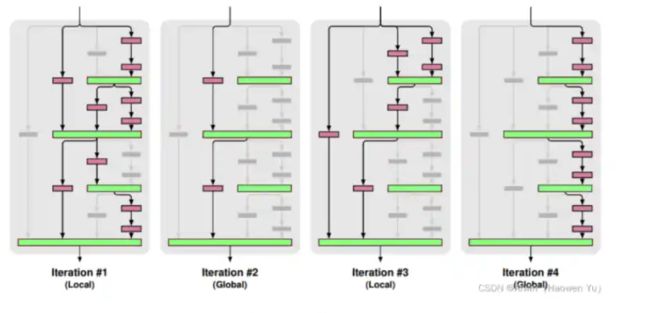

- 最后,路径舍弃(Drop path)也是FractalNet的贡献之一,可以看作一种新的正则化规则。对路径舍弃采用了50%局部以及50%全局的混合采样:

局部:连接层以固定几率舍弃每个输入,但我们保证至少一个输入保留。如图第1、3个。全局:为了整个网络选出每条路径,并限制其为单列结构,激励每列成为有力的预测器,每列只做卷积。如图第2、4个。

老师博客