WeekT8 - Cat and Dog Recognition 1 (VGG-16)

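Table of Contents

- 1. Import data
- 2. Data preprocessing: loading the data
- 3. Configure the dataset
- 4. Visualize the data
- 5. Build the VGG-16 network
- 5. Compile
- 6. Train the model
- 7. Evaluate the model
- 8. Prediction
- 9. Notes on tqdm
  - 9.1 Back to the error I ran into
- 10. The serious bug in the article: not found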

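- This post is a study log for the 365天深度学习训练营 (365-Day Deep Learning Training Camp)
- Reference article: 365天深度学习训练营-第8周:猫狗识别 (Week 8: Cat and Dog Recognition; readable by camp members only)
- Original author: K同学啊 | tutoring and custom projects available
- Source: K同学的学习圈子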

● Difficulty: consolidating the basics ⭐⭐

● Language: Python3, TensorFlow2

Requirements:

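- Understand model.train_on_batch() and put it to use
- Understand tqdm and use it to display a progress bar

Going further (optional):

- The code in this article contains a serious BUG; find it and explain it in writing

Exploration (fairly difficult):

- Modify the code to fix the BUG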

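Other notes: this article drops the model.fit() training method used in earlier weeks and switches to model.train_on_batch().

Comparison of the two methods:

● model.fit(): very simple to use and friendly to beginners.

● model.train_on_batch(): a lower-level API that leaves more room for customization. This article also adds a progress bar, which makes it easier to follow training as it runs and to print metrics as soon as they are available.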
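To make the difference between the two styles concrete, here is a framework-free toy sketch. It is not Keras: `TinyLinearModel` and its methods are hypothetical stand-ins that only mimic the shape of the two calls, assuming a one-parameter model trained with plain SGD. The point is that a `fit`-style method hides the epoch and batch loops, while a `train_on_batch`-style method hands them to the caller.

```python
class TinyLinearModel:
    """Toy model y = w * x trained with plain SGD on mean squared error.

    Hypothetical stand-in for illustration only; this is not the Keras API.
    """

    def __init__(self, lr=0.1):
        self.w = 0.0
        self.lr = lr

    def train_on_batch(self, xs, ys):
        """Run one gradient step on a single batch and return the batch loss."""
        n = len(xs)
        loss = sum((self.w * x - y) ** 2 for x, y in zip(xs, ys)) / n
        grad = sum(2 * (self.w * x - y) * x for x, y in zip(xs, ys)) / n
        self.w -= self.lr * grad
        return loss

    def fit(self, batches, epochs):
        """Convenience wrapper: the epoch and batch loops are hidden from the caller."""
        for _ in range(epochs):
            for xs, ys in batches:
                self.train_on_batch(xs, ys)


# Two batches drawn from y = 2x
batches = [([1.0, 2.0], [2.0, 4.0]), ([3.0], [6.0])]

# Style 1: one call, no control over what happens between batches
auto = TinyLinearModel()
auto.fit(batches, epochs=20)

# Style 2: the caller owns the loops and can log or adjust anything per batch
manual = TinyLinearModel()
for epoch in range(20):
    for xs, ys in batches:
        loss = manual.train_on_batch(xs, ys)
        # custom per-batch logic (progress bar, learning-rate changes, ...) goes here

print(auto.w, manual.w)
```

Both styles perform the same updates here; the manual loop simply exposes a place to put a progress bar or a learning-rate change, which is what the training section of this article relies on.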

1. Import data

2. Data preprocessing: loading the data

3. Configure the dataset

4. Visualize the data

5. Build the VGG-16 network

from tensorflow.keras import layers, models, Input
from tensorflow.keras.models import Model
from tensorflow.keras.layers import Conv2D, MaxPooling2D, Dense, Flatten, Dropout

def VGG16(nb_classes, input_shape):
    input_tensor = Input(shape=input_shape)
    # 1st block
    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv1')(input_tensor)
    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv2')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block1_pool')(x)
    # 2nd block
    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv1')(x)
    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv2')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block2_pool')(x)
    # 3rd block
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv1')(x)
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv2')(x)
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block3_pool')(x)
    # 4th block
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv1')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv2')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block4_pool')(x)
    # 5th block
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv1')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv2')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block5_pool')(x)
    # fully connected layers
    x = Flatten()(x)
    x = Dense(4096, activation='relu', name='fc1')(x)
    x = Dense(4096, activation='relu', name='fc2')(x)
    output_tensor = Dense(nb_classes, activation='softmax', name='predictions')(x)

    model = Model(input_tensor, output_tensor)
    return model

model = VGG16(1000, (img_width, img_height, 3))
model.summary()

Model: "model"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
input_1 (InputLayer)         [(None, 224, 224, 3)]     0
block1_conv1 (Conv2D)        (None, 224, 224, 64)      1792
block1_conv2 (Conv2D)        (None, 224, 224, 64)      36928
block1_pool (MaxPooling2D)   (None, 112, 112, 64)      0
block2_conv1 (Conv2D)        (None, 112, 112, 128)     73856
block2_conv2 (Conv2D)        (None, 112, 112, 128)     147584
block2_pool (MaxPooling2D)   (None, 56, 56, 128)       0
block3_conv1 (Conv2D)        (None, 56, 56, 256)       295168
block3_conv2 (Conv2D)        (None, 56, 56, 256)       590080
block3_conv3 (Conv2D)        (None, 56, 56, 256)       590080
block3_pool (MaxPooling2D)   (None, 28, 28, 256)       0
block4_conv1 (Conv2D)        (None, 28, 28, 512)       1180160
block4_conv2 (Conv2D)        (None, 28, 28, 512)       2359808
block4_conv3 (Conv2D)        (None, 28, 28, 512)       2359808
block4_pool (MaxPooling2D)   (None, 14, 14, 512)       0
block5_conv1 (Conv2D)        (None, 14, 14, 512)       2359808
block5_conv2 (Conv2D)        (None, 14, 14, 512)       2359808
block5_conv3 (Conv2D)        (None, 14, 14, 512)       2359808
block5_pool (MaxPooling2D)   (None, 7, 7, 512)         0
flatten (Flatten)            (None, 25088)             0
fc1 (Dense)                  (None, 4096)              102764544
fc2 (Dense)                  (None, 4096)              16781312
predictions (Dense)          (None, 1000)              4097000
=================================================================
Total params: 138,357,544
Trainable params: 138,357,544
Non-trainable params: 0
_________________________________________________________________
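The parameter counts in the summary can be checked by hand. A 3x3 convolution has (3 * 3 * in_channels + 1) * out_channels parameters, and a dense layer has (in_units + 1) * out_units, where the +1 is the bias. The sketch below redoes that arithmetic in plain Python; the helper names are made up for this illustration and are not part of Keras.

```python
def conv_params(in_channels, out_channels, kernel=3):
    """Weights plus biases of a kernel x kernel convolution layer."""
    return (kernel * kernel * in_channels + 1) * out_channels


def dense_params(in_units, out_units):
    """Weights plus biases of a fully connected layer."""
    return (in_units + 1) * out_units


def vgg16_params(nb_classes, input_channels=3, final_spatial=7):
    """Total parameter count of the VGG-16 layout defined above."""
    # Output channels of the 13 convolution layers, block by block
    conv_plan = [64, 64, 128, 128, 256, 256, 256, 512, 512, 512, 512, 512, 512]
    total = 0
    channels = input_channels
    for out_channels in conv_plan:
        total += conv_params(channels, out_channels)
        channels = out_channels
    # A 224x224 input is halved by five pooling layers, leaving a 7x7x512 map
    flat = final_spatial * final_spatial * channels
    total += dense_params(flat, 4096)        # fc1
    total += dense_params(4096, 4096)        # fc2
    total += dense_params(4096, nb_classes)  # predictions
    return total


print(vgg16_params(1000))  # 138357544, the total reported by model.summary()
print(vgg16_params(2))     # 134268738
```

The second call is only a what-if: the article builds the model with 1000 output units, and with two output units (one per class for cats and dogs) the final layer would shrink from 4,097,000 to 8,194 parameters.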

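5. Compile

Before the model can be trained, it needs a few more settings. These are added in the compile step:

● Loss function (loss): measures how far the model's predictions are from the true labels during training; training tries to minimize this value.

● Optimizer (optimizer): determines how the model is updated based on the data it sees and its loss function.

● Metrics (metrics): used to monitor the training and testing steps. The example here uses accuracy, i.e. the fraction of images that are classified correctly.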
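The compile call itself is not shown in this article, so the following is only an illustration of what a loss and a metric compute on one batch. It assumes integer class labels and a sparse categorical cross-entropy loss, which is a common pairing with a softmax output but is an assumption here, not something the source confirms. The function names are hypothetical plain-Python helpers, not Keras functions.

```python
import math


def sparse_categorical_crossentropy(probs, labels):
    """Mean of -log(probability assigned to the true class) over the batch."""
    return sum(-math.log(p[y]) for p, y in zip(probs, labels)) / len(labels)


def accuracy(probs, labels):
    """Fraction of samples whose highest-probability class equals the label."""
    hits = 0
    for p, y in zip(probs, labels):
        predicted = max(range(len(p)), key=lambda k: p[k])
        hits += int(predicted == y)
    return hits / len(labels)


# Softmax outputs for a batch of 4 images over 2 classes (made-up numbers)
probs = [[0.9, 0.1], [0.2, 0.8], [0.6, 0.4], [0.3, 0.7]]
labels = [0, 1, 1, 1]

batch_loss = sparse_categorical_crossentropy(probs, labels)
batch_accuracy = accuracy(probs, labels)
print(f"loss={batch_loss:.4f} accuracy={batch_accuracy:.2f}")
```

The third sample is misclassified, so accuracy is 3/4, while the loss also reflects how confident each of the four predictions was.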

6. Train the model

from tqdm import tqdm
import tensorflow.keras.backend as K

epochs = 10
lr = 1e-4

# Record training statistics for later analysis
history_train_loss = []
history_train_accuracy = []
history_val_loss = []
history_val_accuracy = []

for epoch in range(epochs):
    train_total = len(train_ds)
    val_total = len(val_ds)

    # tqdm arguments:
    #   total: expected number of iterations
    #   ncols: width of the progress bar
    #   mininterval: minimum interval between display updates, in seconds (default: 0.1)
    with tqdm(total=train_total, desc=f'Epoch {epoch + 1}/{epochs}', mininterval=1, ncols=100) as pbar:
        # Decay the learning rate by a factor of 0.92 at the start of every epoch
        lr = lr * 0.92
        K.set_value(model.optimizer.lr, lr)

        for image, label in train_ds:
            # Train on one batch. Put simply, train_on_batch is a more advanced
            # way to train than model.fit(). For details on train_on_batch, see
            # this article: https://www.yuque.com/mingtian-fkmxf/hv4lcq/ztt4gy
            history = model.train_on_batch(image, label)

            train_loss = history[0]
            train_accuracy = history[1]

            pbar.set_postfix({"loss": "%.4f" % train_loss,
                              "accuracy": "%.4f" % train_accuracy,
                              "lr": K.get_value(model.optimizer.lr)})
            pbar.update(1)
        history_train_loss.append(train_loss)
        history_train_accuracy.append(train_accuracy)

    print('Validation started!')

    with tqdm(total=val_total, desc=f'Epoch {epoch + 1}/{epochs}', mininterval=0.3, ncols=100) as pbar:
        for image, label in val_ds:
            history = model.test_on_batch(image, label)

            val_loss = history[0]
            val_accuracy = history[1]

            pbar.set_postfix({"loss": "%.4f" % val_loss,
                              "accuracy": "%.4f" % val_accuracy})
            pbar.update(1)
        history_val_loss.append(val_loss)
        history_val_accuracy.append(val_accuracy)

    print('Validation finished!')
    print("Validation loss: %.4f" % val_loss)
    print("Validation accuracy: %.4f" % val_accuracy)
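The training loop multiplies lr by 0.92 before the first batch of every epoch, so even the first epoch runs slightly below the initial 1e-4. The short sketch below reproduces just that arithmetic, with no TensorFlow involved; `lr_schedule` is a hypothetical helper written for this illustration.

```python
def lr_schedule(initial_lr, decay, epochs):
    """Learning rate used in each epoch when the decay is applied before training."""
    lrs = []
    lr = initial_lr
    for _ in range(epochs):
        lr = lr * decay  # same update as `lr = lr*0.92` in the loop
        lrs.append(lr)
    return lrs


lrs = lr_schedule(initial_lr=1e-4, decay=0.92, epochs=10)
for epoch, lr in enumerate(lrs, start=1):
    print(f"epoch {epoch:2d}: lr = {lr:.3e}")
```

After ten epochs the rate has fallen to 1e-4 * 0.92**10, a little under half of its starting value.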

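Some notes:

(1) Training takes a long time: I trained on the CPU, and one epoch took about 40 min at 6.60 s/it. Training all 10 epochs therefore took a little over 6 hours, from just before 6 pm until midnight.

(2) I ran into an error (discussed later):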

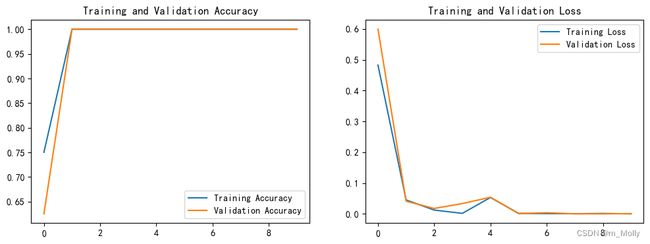

7. Evaluate the model

import matplotlib.pyplot as plt

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))
plt.subplot(1, 2, 1)
plt.plot(epochs_range, history_train_accuracy, label='Training Accuracy')
plt.plot(epochs_range, history_val_accuracy, label='Validation Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)
plt.plot(epochs_range, history_train_loss, label='Training Loss')
plt.plot(epochs_range, history_val_loss, label='Validation Loss')
plt.legend(loc='upper right')
plt.title('Training and Validation Loss')
plt.show()
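These curves plot one value per epoch, and in the training loop that value is whatever the last batch of the epoch returned. Assuming each train_on_batch / test_on_batch call reports metrics for that batch alone, a single batch can be a noisy stand-in for the whole epoch. The source does not say whether this is the "serious BUG" the exercise refers to, so treat the sketch below as one candidate explanation. It compares the last-batch value with a size-weighted mean over all batches, using made-up numbers and a hypothetical helper name.

```python
def epoch_summary(batch_values, batch_sizes):
    """Return (last-batch value, size-weighted mean over all batches)."""
    last = batch_values[-1]
    weighted_mean = sum(v * n for v, n in zip(batch_values, batch_sizes)) / sum(batch_sizes)
    return last, weighted_mean


# Made-up per-batch losses for one epoch; the final batch is smaller
batch_losses = [0.9, 0.5, 0.1]
batch_sizes = [8, 8, 4]

last_loss, mean_loss = epoch_summary(batch_losses, batch_sizes)
print(f"last batch: {last_loss:.2f}, epoch mean: {mean_loss:.2f}")
```

In a loop like the one in this article, the same idea would mean collecting every batch's loss and accuracy inside the inner for loop and appending their mean to the history lists once per epoch.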

8. Prediction

import numpy as np
import tensorflow as tf

# Use the trained model (model) to look at some predictions
plt.figure(figsize=(18, 3))  # figure is 18 wide and 3 high
plt.suptitle("Prediction results")

for images, labels in val_ds.take(1):
    for i in range(8):
        ax = plt.subplot(1, 8, i + 1)

        # Show the image
        plt.imshow(images[i].numpy())

        # Add a batch dimension to the image
        img_array = tf.expand_dims(images[i], 0)

        # Use the model to predict the class of the image
        predictions = model.predict(img_array)
        plt.title(class_names[np.argmax(predictions)])

        plt.axis("off")

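9. Notes on tqdm

tqdm is a progress bar library for Python. It has two main modes of operation:

(1) Iterator-based: tqdm(iterator)

(2) Manual updates

This article uses mode (2).

In a progress bar that ends in [00:06<00:00, 16.04it/s], the first time (00:06) is the elapsed time, the second (00:00) is the estimated remaining time, and 16.04it/s means 16.04 iterations per second.

A simple example: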

import time
from tqdm import tqdm

# Idle for 0.5 s
def action():
    time.sleep(0.5)

with tqdm(total=100000, desc='Example', leave=True, ncols=100, unit='B', unit_scale=True) as pbar:
    for i in range(10):
        # Idle for 0.5 s
        action()
        # Advance the progress bar by 10000 units
        pbar.update(10000)

# Example: 100%|███████████████████████████████████████████████████| 100k/100k [00:05<00:00, 19.6kB/s]
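The fields in the sample output line can be reproduced with simple arithmetic: the rate is the work done divided by the elapsed time, and the remaining time is the work left divided by that rate. The sketch below is a simplified model with hypothetical helper names, not tqdm's own code (tqdm smooths its rate estimate). It assumes about 5.1 s elapsed, i.e. ten 0.5 s sleeps plus a little overhead.

```python
def format_mmss(seconds):
    """Format a duration in seconds as MM:SS, truncating fractions of a second."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes:02d}:{secs:02d}"


def progress_stats(done, total, elapsed):
    """Return (elapsed MM:SS, estimated remaining MM:SS, rate in units per second)."""
    rate = done / elapsed
    remaining = (total - done) / rate
    return format_mmss(elapsed), format_mmss(remaining), rate


elapsed_str, remaining_str, rate = progress_stats(done=100_000, total=100_000, elapsed=5.1)
print(f"[{elapsed_str}<{remaining_str}, {rate / 1000:.1f}kB/s]")  # [00:05<00:00, 19.6kB/s]
```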

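9.1 Back to the error I ran into

ModuleNotFoundError: No module named 'tqdm'

From what I found online, tqdm was supposed to ship with Python, but the import failed, so I tried installing it again. The installer reported that it already existed in the environment, and when I reopened and reran the code the import worked. (tqdm is actually a third-party package rather than part of the standard library, so it has to be installed in whichever environment the code runs in.)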
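When an import fails even though the package seems to be installed, the code is often running under a different interpreter or environment from the one the package was installed into. The standard-library check below prints which interpreter is active and whether it can locate a module. `is_installed` is a helper name made up for this sketch, and the environment mismatch is only a plausible explanation for the error above, not a confirmed diagnosis.

```python
import importlib.util
import sys


def is_installed(module_name):
    """Return True if the current interpreter can locate the top-level module."""
    return importlib.util.find_spec(module_name) is not None


# The interpreter that is actually running this code
print("Interpreter:", sys.executable)

for name in ("json", "tqdm"):
    status = "available" if is_installed(name) else "missing"
    print(f"{name}: {status}")
```

If tqdm is reported as missing, installing it with the pip that belongs to the printed interpreter and then restarting the session is usually enough.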