Merging multiple video streams with ffmpeg

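In a video conferencing system you sometimes need to composite several video streams into one picture and push the result as a single stream. Implementing this directly at a low level is not easy, but ffmpeg makes it much more convenient: its filter functionality can produce the merged picture.

First, here are the ffmpeg objects that are needed, together with a few required fields.

ffmpeg version: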

version 4.3

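Headers needed by the code below:

    #include <stdio.h>
    #include <stdlib.h>
    #include <string.h>
    #include <time.h>
    #include <libavfilter/avfilter.h>
    #include <libavfilter/buffersink.h>
    #include <libavfilter/buffersrc.h>
    #include <libavutil/avassert.h>
    #include <libavutil/imgutils.h>
    #include <libavutil/opt.h>

The required data structures are as follows: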

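Each input stream needs the following fields:

    typedef struct Stream {
        int x;                          // left edge inside the output picture
        int y;                          // top edge inside the output picture
        int width;                      // width of the input frames
        int height;                     // height of the input frames
        int format;                     // see AVPixelFormat
        AVFilterContext* buffersrc_ctx;
        const AVFilter* buffersrc;      // avfilter_get_by_name() returns a const pointer
        AVFilterInOut* output;
        AVFrame* inputFrame;
    } Stream;

The output stream needs the following fields: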

    typedef struct Merge {
        AVFilterGraph* filter_graph;
        AVFilterContext* buffersink_ctx;
        const AVFilter* buffersink;     // avfilter_get_by_name() returns a const pointer
        AVFilterInOut* input;
        const char* filters_descr;
        AVFrame* outputFrame;
        unsigned char* outputBuffer;
        int outputWidth;
        int outputHeight;
        int outputFormat;               // see AVPixelFormat
        Stream* streams[128];
        int streamCount;
    } Merge;

The main flow is as follows:

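1. Construct the output stream and the input streams

Construct the output stream. It needs a resolution and an output pixel format.

    Merge* Merge_Create(int outputWidth, int outputHeight, int outputFormat) {
        Merge* merge = malloc(sizeof(Merge));
        if (merge == NULL)
            return NULL;
        memset(merge, 0, sizeof(Merge));
        merge->outputWidth = outputWidth;
        merge->outputHeight = outputHeight;
        merge->outputFormat = outputFormat;
        return merge;
    }

Add the input streams. Each input stream needs its position and size inside the output picture, plus its own pixel format.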

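    Stream* Merge_AddStream(Merge* merge, int x, int y, int width, int height, int format) {
        Stream* stream;
        // streams[] has room for 128 entries
        if (merge->streamCount >= 128)
            return NULL;
        stream = malloc(sizeof(Stream));
        if (stream == NULL)
            return NULL;
        // zero the new stream (the original zeroed merge here, which wiped its state)
        memset(stream, 0, sizeof(Stream));
        stream->x = x;
        stream->y = y;
        stream->width = width;
        stream->height = height;
        stream->format = format;
        merge->streams[merge->streamCount++] = stream;
        return stream;
    }

2. Initialize the filters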

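The main filter description used has the form filters_descr = "[in0]pad=1280:640:0:0:black[x0];[x0][in1]overlay=640:0[x1];[x1][in2]overlay=600:0[x2];[x2]null[out]"; The first input is padded onto a black canvas of the output size, every further input is overlaid on the previous intermediate result at its own position, and the last intermediate result is passed through to [out].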
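To make the structure of this description concrete, here is a minimal standalone sketch that assembles the same kind of string from a list of positions. `Pos` and `build_filter_descr` are hypothetical names introduced only for illustration; they are not part of ffmpeg or of the code in this article, and the sketch only builds the text without calling any ffmpeg API.

```c
#include <stdio.h>

/* Hypothetical helper type (an assumption, not an ffmpeg type):
 * top-left corner of one input inside the output picture. */
typedef struct { int x, y; } Pos;

/*
 * Writes a chain of the form
 *   [in0]pad=W:H:x0:y0:black[x0];[x0][in1]overlay=x1:y1[x1];...;[xN-1]null[out]
 * into dst. Returns 0 on success, -1 if count < 1 or dst is too small.
 */
int build_filter_descr(char *dst, size_t cap, int out_w, int out_h,
                       const Pos *pos, int count)
{
    size_t used;
    int n;

    if (count < 1 || cap == 0)
        return -1;

    /* First input: pad it onto a black canvas of the output size. */
    n = snprintf(dst, cap, "[in0]pad=%d:%d:%d:%d:black[x0];",
                 out_w, out_h, pos[0].x, pos[0].y);
    if (n < 0 || (size_t)n >= cap)
        return -1;
    used = (size_t)n;

    /* Every further input is overlaid on the previous intermediate result. */
    for (int i = 1; i < count; i++) {
        n = snprintf(dst + used, cap - used, "[x%d][in%d]overlay=%d:%d[x%d];",
                     i - 1, i, pos[i].x, pos[i].y, i);
        if (n < 0 || (size_t)n >= cap - used)
            return -1;
        used += (size_t)n;
    }

    /* Route the last intermediate label to the sink label "out". */
    n = snprintf(dst + used, cap - used, "[x%d]null[out]", count - 1);
    if (n < 0 || (size_t)n >= cap - used)
        return -1;
    return 0;
}
```

Called with a 1280x640 output and the positions (0,0), (640,0), (600,0), this yields the example string quoted above. Checking the return value of every `snprintf` call is one way to avoid relying on a fixed per-stream size estimate for the buffer.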

    static void Merge_Deinit(Merge* merge); // forward declaration; defined in step 5

    // Initialize the Merge
    int Merge_Init(Merge* merge) {
        char args[512];
        char name[8];
        char* filters_descr = NULL;
        int ret;
        // the pad filter below is built from streams[0], so at least one stream is required
        if (merge->streamCount < 1)
        {
            printf("no input stream\n");
            goto fail;
        }
        //avfilter_register_all(); // older versions may need this line
        merge->buffersink = avfilter_get_by_name("buffersink");
        av_assert0(merge->buffersink);
        merge->input = avfilter_inout_alloc();
        if (merge->input == NULL)
        {
            printf("alloc inout fail\n");
            goto fail;
        }
        merge->filter_graph = avfilter_graph_alloc();
        // check the graph here (the original re-checked merge->input)
        if (merge->filter_graph == NULL)
        {
            printf("alloc graph fail\n");
            goto fail;
        }
        ret = avfilter_graph_create_filter(&merge->buffersink_ctx, merge->buffersink, "out", NULL, NULL, merge->filter_graph);
        if (ret < 0)
        {
            printf("graph create fail\n");
            goto fail;
        }
        merge->input->name = av_strdup("out");
        merge->input->filter_ctx = merge->buffersink_ctx;
        merge->input->pad_idx = 0;
        merge->input->next = NULL;
        merge->outputFrame = av_frame_alloc();
        if (merge->outputFrame == NULL)
        {
            printf("alloc frame fail\n");
            goto fail;
        }
        merge->outputBuffer = (unsigned char*)av_malloc(av_image_get_buffer_size(merge->outputFormat, merge->outputWidth, merge->outputHeight, 1));
        if (merge->outputBuffer == NULL)
        {
            printf("alloc buffer fail\n");
            goto fail;
        }
        // restrict the sink to the requested output pixel format
        enum AVPixelFormat pix_fmts[2] = { 0, AV_PIX_FMT_NONE };
        pix_fmts[0] = merge->outputFormat;
        ret = av_opt_set_int_list(merge->buffersink_ctx, "pix_fmts", pix_fmts, AV_PIX_FMT_NONE, AV_OPT_SEARCH_CHILDREN);
        if (ret < 0) {
            printf("set opt fail\n");
            goto fail;
        }
        // create one buffer source per input stream, named in0, in1, ...
        Stream** streams = merge->streams;
        for (int i = 0; i < merge->streamCount; i++)
        {
            streams[i]->buffersrc = avfilter_get_by_name("buffer");
            av_assert0(streams[i]->buffersrc);
            snprintf(args, sizeof(args), "video_size=%dx%d:pix_fmt=%d:time_base=%d/%d:pixel_aspect=%d/%d", streams[i]->width, streams[i]->height, streams[i]->format, 1, 90000, 1, 1);
            snprintf(name, sizeof(name), "in%d", i);
            ret = avfilter_graph_create_filter(&streams[i]->buffersrc_ctx, streams[i]->buffersrc, name, args, NULL, merge->filter_graph);
            if (ret < 0)
            {
                printf("stream graph create fail\n");
                goto fail;
            }
            streams[i]->output = avfilter_inout_alloc();
            if (streams[i]->output == NULL)
            {
                printf("alloc inout fail\n");
                goto fail;
            }
            streams[i]->output->name = av_strdup(name);
            streams[i]->output->filter_ctx = streams[i]->buffersrc_ctx;
            streams[i]->output->pad_idx = 0;
            streams[i]->output->next = NULL;
            streams[i]->inputFrame = av_frame_alloc();
            if (streams[i]->inputFrame == NULL)
            {
                printf("alloc frame fail\n");
                goto fail;
            }
            streams[i]->inputFrame->format = streams[i]->format;
            streams[i]->inputFrame->width = streams[i]->width;
            streams[i]->inputFrame->height = streams[i]->height;
            // chain the outputs into one linked list starting at streams[0]->output
            if (i > 0)
            {
                streams[i - 1]->output->next = streams[i]->output;
            }
        }
        /*filters_descr = "[in0]pad=1280:640:0:0:black[x0];[x0][in1]overlay=640:0[x1];[x1][in2]overlay=600:0[x2];[x2]null[out]";*/
        filters_descr = malloc(sizeof(char) * merge->streamCount * 128);
        if (filters_descr == NULL)
        {
            printf("alloc string fail\n");
            goto fail;
        }
        char single_descr[128];
        // first stream: pad to the output size
        snprintf(single_descr, sizeof(single_descr), "[in0]pad=%d:%d:%d:%d:black[x0];", merge->outputWidth, merge->outputHeight, streams[0]->x, streams[0]->y);
        strcpy(filters_descr, single_descr);
        // remaining streams: overlay each one on the previous result
        int i = 1;
        for (; i < merge->streamCount; i++)
        {
            snprintf(single_descr, sizeof(single_descr), "[x%d][in%d]overlay=%d:%d[x%d];", i - 1, i, streams[i]->x, streams[i]->y, i);
            strcat(filters_descr, single_descr);
        }
        snprintf(single_descr, sizeof(single_descr), "[x%d]null[out]", i - 1);
        strcat(filters_descr, single_descr);
        // on return, merge->input and streams[0]->output hold whatever pads are still open
        ret = avfilter_graph_parse_ptr(merge->filter_graph, filters_descr, &merge->input, &streams[0]->output, NULL);
        if (ret < 0)
        {
            printf("graph parse fail\n");
            goto fail;
        }
        // configure the filter graph
        ret = avfilter_graph_config(merge->filter_graph, NULL);
        if (ret < 0)
        {
            printf("graph config fail\n");
            goto fail;
        }
        if (filters_descr != NULL)
            free(filters_descr);
        return 0;
    fail:
        if (filters_descr != NULL)
            free(filters_descr);
        Merge_Deinit(merge); // run deinitialization
        return -1;
    }

3. Write the input streams
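Merge_WriteStream takes each input frame as one contiguous buffer and maps it onto the frame's planes with `av_image_fill_arrays` using an alignment of 1. As a rough illustration of what that buffer is expected to look like, the sketch below computes the plane offsets for the specific case of planar YUV 4:2:0 (`AV_PIX_FMT_YUV420P`, value 0, as used in the call example later on). `Yuv420pLayout` and `yuv420p_layout` are hypothetical names for this illustration only, and other pixel formats have different layouts.

```c
#include <stddef.h>

/* Hypothetical helper type (an assumption, not an ffmpeg type):
 * where each plane starts in a tightly packed YUV420P buffer. */
typedef struct {
    size_t y_offset;   /* start of the Y plane */
    size_t u_offset;   /* start of the U plane */
    size_t v_offset;   /* start of the V plane */
    int    y_stride;   /* bytes per Y row */
    int    c_stride;   /* bytes per U or V row */
    size_t total;      /* total buffer size in bytes */
} Yuv420pLayout;

/* Layout of a width x height YUV420P image with no row padding (alignment 1). */
Yuv420pLayout yuv420p_layout(int width, int height)
{
    Yuv420pLayout l;
    /* Chroma planes are subsampled by 2 in both directions, rounded up. */
    int cw = (width + 1) / 2;
    int ch = (height + 1) / 2;
    size_t y_size = (size_t)width * (size_t)height;
    size_t c_size = (size_t)cw * (size_t)ch;

    l.y_offset = 0;
    l.u_offset = y_size;
    l.v_offset = y_size + c_size;
    l.y_stride = width;
    l.c_stride = cw;
    l.total    = y_size + 2 * c_size;
    return l;
}
```

For a 960x540 input this gives a 777600-byte buffer with U starting at byte 518400 and V at byte 648000. A capture source that delivers padded rows or a different format would need converting or repacking before it is handed to Merge_WriteStream.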

    void Merge_WriteStream(Merge* merge, Stream* stream, const unsigned char* buffer, int timestamp) {
        // point the frame's planes at the caller's buffer (alignment 1, no row padding)
        av_image_fill_arrays(stream->inputFrame->data, stream->inputFrame->linesize, buffer, stream->format, stream->width, stream->height, 1);
        // pts is interpreted in the buffer source's time base (1/90000, set in Merge_Init)
        stream->inputFrame->pts = timestamp;
        if (av_buffersrc_write_frame(stream->buffersrc_ctx, stream->inputFrame) < 0) {
            printf("Error while add frame.\n");
        }
    }

4. Merge the streams

Calling the function below returns the data of one merged frame. The functions from steps 3 and 4 can be called at a fixed frame rate.

    const unsigned char* Merge_Merge(Merge* merge) {
        // pull one composited frame from the sink
        int ret = av_buffersink_get_frame(merge->buffersink_ctx, merge->outputFrame);
        if (ret < 0) {
            printf("Error while get frame.\n");
            return NULL;
        }
        // copy the frame's planes into one contiguous buffer
        av_image_copy_to_buffer(merge->outputBuffer,
            av_image_get_buffer_size(merge->outputFormat, merge->outputWidth, merge->outputHeight, 1),
            merge->outputFrame->data, merge->outputFrame->linesize, merge->outputFrame->format, merge->outputFrame->width, merge->outputFrame->height, 1);
        av_frame_unref(merge->outputFrame);
        return merge->outputBuffer;
    }

5. Finish: deinitialize and destroy the objects

    static void Merge_Deinit(Merge* merge)
    {
        if (merge->input != NULL)
            avfilter_inout_free(&merge->input);
        if (merge->filter_graph != NULL)
            avfilter_graph_free(&merge->filter_graph);
        if (merge->outputFrame != NULL)
            av_frame_free(&merge->outputFrame);
        // av_freep also resets the pointer, so a second Merge_Deinit call
        // (Merge_Init failure followed by Merge_Destroy) does not free it twice
        if (merge->outputBuffer != NULL)
            av_freep(&merge->outputBuffer);
        for (int i = 0; i < merge->streamCount; i++)
        {
            Stream* stream = merge->streams[i];
            if (stream->inputFrame != NULL)
                av_frame_free(&stream->inputFrame);
            free(stream);
        }
        merge->streamCount = 0;
    }

    void Merge_Destroy(Merge* merge) {
        Merge_Deinit(merge);
        free(merge);
    }

Example call flow:

    int main() {
        int flag = 1;
        // 1920x1080 output; pixel format 0 is AV_PIX_FMT_YUV420P
        Merge* merge = Merge_Create(1920, 1080, 0);
        Stream* stream1 = Merge_AddStream(merge, 0, 0, 960, 540, 0);
        Stream* stream2 = Merge_AddStream(merge, 960, 540, 960, 540, 0);
        Stream* stream3 = Merge_AddStream(merge, 0, 540, 960, 540, 0);
        if (Merge_Init(merge) != 0)
        {
            Merge_Destroy(merge);
            return -1;
        }
        while (flag)
        {
            clock_t time = clock();
            unsigned char* buffer1;
            unsigned char* buffer2;
            unsigned char* buffer3;
            // get the data of each stream
            ...
            // get the data of each stream - end
            Merge_WriteStream(merge, stream1, buffer1, time);
            Merge_WriteStream(merge, stream2, buffer2, time);
            Merge_WriteStream(merge, stream3, buffer3, time);
            // Merge_Merge returns a pointer to const data
            const unsigned char* mergedBuffer = Merge_Merge(merge);
            // display, or encode and push the stream
            ...
            // display, or encode and push the stream - end
        }
        Merge_Destroy(merge);
    }

Note that the functions above are best used from a single thread. Multithreaded use may require further changes, or see:

https://download.csdn.net/download/u013113678/32899063
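One detail of the call example is worth a second look: it passes `clock()` as the timestamp, while the buffer sources in Merge_Init are configured with a time base of 1/90000. `clock()` reports processor time in units of `CLOCKS_PER_SEC`, so the two scales do not generally match. One possible alternative, sketched below under the assumption of a constant frame rate, is to derive the timestamp from a frame counter. `frame_pts` is a hypothetical helper name, not something the original code defines.

```c
#include <stdint.h>

/*
 * Presentation timestamp of frame number `frame_index` (0-based) at a
 * constant `fps`, expressed in ticks of 1/time_base_den seconds.
 * Assumes fps > 0. Multiplying before dividing keeps the result exact
 * whenever time_base_den is a multiple of fps.
 */
int64_t frame_pts(int64_t frame_index, int fps, int time_base_den)
{
    return frame_index * time_base_den / fps;
}
```

With the 1/90000 time base, 25 fps gives a step of 3600 ticks per frame. Merge_WriteStream takes the timestamp as an `int`, and a 32-bit int holds a bit under 6.7 hours of 90 kHz ticks, so a long-running session would probably want that parameter widened to `int64_t`, which is the type of `AVFrame.pts`.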

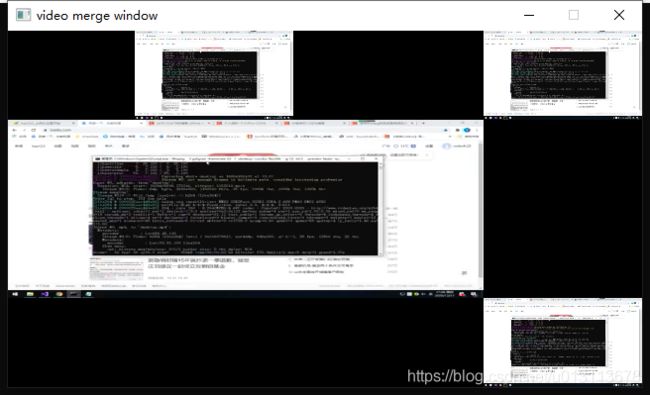

效果如下
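The call example places its three streams by hand. If the streams are meant to sit on a regular grid, the positions can be computed instead. The following is only a sketch of that idea: `Cell` and `grid_cell` are hypothetical names, and it assumes every input frame already has exactly the cell size, since the filter description built in Merge_Init pads and overlays but does not scale.

```c
/* Hypothetical helper type (an assumption, not part of the article's code):
 * position and size of one grid cell in the output picture. */
typedef struct { int x, y, width, height; } Cell;

/*
 * Cell number `index` (row-major, 0-based) of a cols x rows grid laid over
 * an out_w x out_h picture. Assumes cols > 0 and rows > 0; any remainder
 * from the integer division is left unused at the right and bottom edges.
 */
Cell grid_cell(int out_w, int out_h, int cols, int rows, int index)
{
    Cell c;
    c.width  = out_w / cols;
    c.height = out_h / rows;
    c.x = (index % cols) * c.width;
    c.y = (index / cols) * c.height;
    return c;
}
```

For a 1920x1080 output and a 2x2 grid, cells 0, 3 and 2 correspond to the positions (0,0), (960,540) and (0,540) used in the call example, each 960x540. A cell `c` would then be passed on as `Merge_AddStream(merge, c.x, c.y, c.width, c.height, 0)`.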