INT8 quantization and inference of ONNX models with TensorRT (trt)

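Update, 2022-04-06:

A few concepts worth getting straight:

1. The ONNX model itself must have dynamic dimensions; otherwise it can only be converted to a TRT engine with static dimensions.

2. A single optimization profile is enough: set the minimum and maximum dimensions, and use the most common dimensions as the optimum. At inference time, the input shape has to be bound to the execution context.

3. builder and config share many of the same settings. If you use config, you do not need to set the same parameters on builder.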
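Point 2 can be sketched as follows. `profile_shapes` and `bind_input_shapes` are hypothetical helper names, not part of TensorRT. The sketch assumes an NCHW input whose dynamic dimensions are marked -1, and it assumes the TensorRT 7/8 Python API (`get_binding_index`, `set_binding_shape`, `all_binding_shapes_specified`); later TensorRT releases deprecate these index-based binding calls.

```python
def profile_shapes(input_shape, max_batch_size, min_wh, max_wh, opt_batch=8):
    """Return (min, opt, max) shapes for one NCHW input of a single profile.

    input_shape uses -1 for dynamic dims, e.g. (-1, 3, -1, -1).
    """
    channels, height, width = input_shape[1:]
    # opt must satisfy min <= opt <= max, so clamp the preferred batch size.
    opt_batch = max(1, min(opt_batch, max_batch_size))
    if height == -1 and width == -1:
        # Dynamic spatial dims: the most common size is assumed to be the minimum.
        min_hw, opt_hw, max_hw = tuple(min_wh), tuple(min_wh), tuple(max_wh)
    else:
        min_hw = opt_hw = max_hw = (height, width)
    return ((1, channels, *min_hw),
            (opt_batch, channels, *opt_hw),
            (max_batch_size, channels, *max_hw))


def bind_input_shapes(engine, context, shapes):
    """Bind concrete input shapes before running a dynamic-shape engine.

    shapes maps input name -> shape, e.g. {"input": (4, 3, 160, 160)}.
    Assumes profile 0 is active (the only profile in this setup).
    """
    for name, shape in shapes.items():
        idx = engine.get_binding_index(name)
        context.set_binding_shape(idx, tuple(shape))
    # True once every dynamic input has a concrete shape.
    return context.all_binding_shapes_specified
```

The three tuples returned by `profile_shapes` are what would be passed as `min`, `opt` and `max` to `profile.set_shape`.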

import tensorrt as trt


def onnx_2_trt(onnx_filename, engine_filename, mode='fp32', max_batch_size=1,
               min_wh=(160, 160), max_wh=(320, 320), int8_calib=None):
    ''' convert onnx to tensorrt engine, use mode of ['fp32', 'fp16', 'int8']
    :return: trt engine
    '''
    assert mode in ['fp32', 'fp16', 'int8'], "mode should be in ['fp32', 'fp16', 'int8']"

    G_LOGGER = trt.Logger(trt.Logger.WARNING)

    # The ONNX parser in TRT 7 requires a network created with EXPLICIT_BATCH.
    EXPLICIT_BATCH = 1 << (int)(trt.NetworkDefinitionCreationFlag.EXPLICIT_BATCH)

    with trt.Builder(G_LOGGER) as builder, builder.create_network(EXPLICIT_BATCH) as network, \
            trt.OnnxParser(network, G_LOGGER) as parser:
        print('Loading ONNX file from path %s...' % (onnx_filename))
        with open(onnx_filename, 'rb') as model:
            print('Beginning ONNX file parsing')
            if not parser.parse(model.read()):
                for e in range(parser.num_errors):
                    print(parser.get_error(e))
                raise TypeError('Parser parse failed.')
        print('Completed parsing of ONNX file')

        # wujp 2022-03-29: when config is used, the same settings need not be set on builder.
        builder.max_batch_size = max_batch_size  # max_batch_size is not available on config
        #builder.max_workspace_size = 1 << 30
        if mode == 'int8':
            assert (builder.platform_has_fast_int8 == True), 'not support int8'
            #builder.int8_mode = True
            #builder.int8_calibrator = calib
        elif mode == 'fp16':
            assert (builder.platform_has_fast_fp16 == True), 'not support fp16'
            #builder.fp16_mode = True

        profile = builder.create_optimization_profile()
        inputs = [network.get_input(i) for i in range(network.num_inputs)]
        # opt must lie between min and max, so clamp the optimum batch size
        # (the original hard-coded 8, which exceeds the default max_batch_size of 1).
        opt_batch = min(8, max_batch_size)
        for inp in inputs:
            fbs, shape = inp.shape[0], inp.shape[1:]
            if (shape[1] == -1 and shape[2] == -1):  # height and width are both dynamic
                profile.set_shape(inp.name, min=(1, shape[0], *min_wh), opt=(opt_batch, shape[0], *min_wh),
                                  max=(max_batch_size, shape[0], *max_wh))
            else:
                profile.set_shape(inp.name, min=(1, *shape), opt=(opt_batch, *shape), max=(max_batch_size, *shape))

        config = builder.create_builder_config()
        config.max_workspace_size = 1 << 30
        if mode == 'int8':
            config.set_flag(trt.BuilderFlag.INT8)
            config.int8_calibrator = int8_calib
        elif mode == 'fp16':
            config.set_flag(trt.BuilderFlag.FP16)
        config.add_optimization_profile(profile)
        # Without the next line TensorRT warns:
        # [TensorRT] WARNING: Calibration Profile is not defined. Runing calibration with Profile 0
        config.set_calibration_profile(profile)

        print('Building an engine from file %s; this may take a while...' % (onnx_filename))
        #engine = builder.build_cuda_engine(network, config)
        engine = builder.build_engine(network, config)
        print('Created engine success! ')

        # Save the plan file
        print('Saving TRT engine file to path %s...' % (engine_filename))
        with open(engine_filename, 'wb') as f:
            f.write(engine.serialize())
        print('Engine file has already saved to %s!' % (engine_filename))
        return engine

--------------------------------------------------------------------

2022-03-27

The code below works with a batch size of 1 but not with larger batches.

Contents

Generating the trt model

1. Code used

2. ONNX model and images

3. Modifying the code

4. Results

Inference with the trt model

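Generating the trt model

1. Code used

https://github.com/rmccorm4/tensorrt-utils.git

2. ONNX model and images

Model: an ONNX model with a dynamic batch input (here assumed to be mob_w160_h160.onnx, with input [batchsize, 3, 160, 160]).

Images: a set of images (say 1024 of them); no other description files are needed.

Create a subfolder under tensorrt-utils/int8/calibration/ (here called my) and put the model and the images into it.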
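To make the role of the image folder concrete, the sketch below shows one way calibration images could be collected and grouped into fixed-size batches. `list_calibration_images` and `make_batches` are hypothetical helpers, not functions from tensorrt-utils; the accepted extensions and the choice to drop an incomplete final batch are assumptions.

```python
import os

IMAGE_EXTS = (".jpg", ".jpeg", ".png")


def list_calibration_images(directory, max_size=1024):
    """Return up to max_size image paths from directory, sorted by file name."""
    names = sorted(n for n in os.listdir(directory) if n.lower().endswith(IMAGE_EXTS))
    return [os.path.join(directory, n) for n in names[:max_size]]


def make_batches(files, batch_size=32):
    """Split files into full batches; a trailing partial batch is dropped."""
    usable = len(files) - len(files) % batch_size
    return [files[i:i + batch_size] for i in range(0, usable, batch_size)]
```

With 1024 images and a calibration batch size of 32, this yields 32 full batches.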

3. Modifying the code

Go to the tensorrt-utils/int8/calibration/ directory.

Modify main in onnx_to_tensorrt.py; the changes are mainly the paths and the number of calibration images.

def main():
    my_dir = os.path.dirname(os.path.realpath(__file__)) + '/my/'
    parser = argparse.ArgumentParser(description="Creates a TensorRT engine from the provided ONNX file.\n")
    parser.add_argument("--onnx", help="The ONNX model file to convert to TensorRT",
                        default=my_dir+'mob_w160_h160.onnx')
    parser.add_argument("-o", "--output", type=str, help="The path at which to write the engine",
                        default=my_dir+'mob_w160_h160.trt')
    parser.add_argument("-b", "--max-batch-size", type=int, default=32, help="The max batch size for the TensorRT engine input")
    parser.add_argument("-v", "--verbosity", action="count", help="Verbosity for logging. (None) for ERROR, (-v) for INFO/WARNING/ERROR, (-vv) for VERBOSE.")
    # If explicit-batch is False, the following error occurs:
    # In node -1 (importModel): INVALID_VALUE: Assertion failed: !_importer_ctx.network()->hasImplicitBatchDimension() &&
    # "This version of the ONNX parser only supports TensorRT INetworkDefinitions with an explicit batch dimension. Please ensure the network was created using the EXPLICIT_BATCH NetworkDefinitionCreationFlag."
    parser.add_argument("--explicit-batch", action='store_true', help="Set trt.NetworkDefinitionCreationFlag.EXPLICIT_BATCH.",
                        default=True)
    # If explicit-precision is True, the following error occurs:
    # "python: ../builder/cudnnBuilderWeightConverters.cpp:162: std::vector nvinfer1::cudnn::makeConvDeconvInt8Weights(nvinfer1::ConvolutionParameters&,
    # const nvinfer1::rt::EngineTensor&, const nvinfer1::rt::EngineTensor&, float, bool, bool): Assertion `sI.count() == 1' failed."
    parser.add_argument("--explicit-precision", action='store_true', help="Set trt.NetworkDefinitionCreationFlag.EXPLICIT_PRECISION.",
                        default=False)
    parser.add_argument("--gpu-fallback", action='store_true', help="Set trt.BuilderFlag.GPU_FALLBACK.")
    parser.add_argument("--refittable", action='store_true', help="Set trt.BuilderFlag.REFIT.")
    parser.add_argument("--debug", action='store_true', help="Set trt.BuilderFlag.DEBUG.")
    parser.add_argument("--strict-types", action='store_true', help="Set trt.BuilderFlag.STRICT_TYPES.")
    parser.add_argument("--fp16", action="store_true", help="Attempt to use FP16 kernels when possible.")
    parser.add_argument("--int8", action="store_true",
                        help="Attempt to use INT8 kernels when possible. This should generally be used in addition to the --fp16 flag. "
                             "ONLY SUPPORTS RESNET-LIKE MODELS SUCH AS RESNET50/VGG16/INCEPTION/etc.",
                        default=True)
    parser.add_argument("--calibration-cache", help="(INT8 ONLY) The path to read/write from calibration cache.",
                        default=my_dir+"calibration.cache")
    parser.add_argument("--calibration-data", help="(INT8 ONLY) The directory containing {*.jpg, *.jpeg, *.png} files to use for calibration. (ex: Imagenet Validation Set)",
                        default=my_dir+'images/')
    parser.add_argument("--calibration-batch-size", help="(INT8 ONLY) The batch size to use during calibration.", type=int, default=32)
    parser.add_argument("--max-calibration-size", help="(INT8 ONLY) The max number of data to calibrate on from --calibration-data.", type=int,
                        default=1024)
    parser.add_argument("-p", "--preprocess_func", type=str, default=None, help="(INT8 ONLY) Function defined in 'processing.py' to use for pre-processing calibration data.")
    parser.add_argument("-s", "--simple", action="store_true", help="Use SimpleCalibrator with random data instead of ImagenetCalibrator for INT8 calibration.")
    args, _ = parser.parse_known_args()
    # The rest of main needs no changes.

Modify preprocess_imagenet in processing.py: change the width and height to 160 and use your own mean and standard deviation.

#def preprocess_imagenet(image, channels=3, height=224, width=224):

def preprocess_imagenet(image, channels=3, height=160, width=160):

"""Pre-processing for Imagenet-based Image Classification Models:

resnet50, vgg16, mobilenet, etc. (Doesn't seem to work for Inception)

Parameters

----------

image: PIL.Image

The image resulting from PIL.Image.open(filename) to preprocess

channels: int

The number of channels the image has (Usually 1 or 3)

height: int

The desired height of the image (usually 224 for Imagenet data)

width: int

The desired width of the image (usually 224 for Imagenet data)

Returns

-------

img_data: numpy array

The preprocessed image data in the form of a numpy array

"""

# Get the image in CHW format

resized_image = image.resize((width, height), Image.ANTIALIAS)

img_data = np.asarray(resized_image).astype(np.float32)

if len(img_data.shape) == 2:

# For images without a channel dimension, we stack

img_data = np.stack([img_data] * 3)

logger.debug("Received grayscale image. Reshaped to {:}".format(img_data.shape))

else:

img_data = img_data.transpose([2, 0, 1])

#mean_vec = np.array([0.485, 0.456, 0.406])

#stddev_vec = np.array([0.229, 0.224, 0.225])

my_mean = np.array([104, 117, 123])

assert img_data.shape[0] == channels

for i in range(img_data.shape[0]):

# Scale each pixel to [0, 1] and normalize per channel.

#img_data[i, :, :] = (img_data[i, :, :] / 255 - mean_vec[i]) / stddev_vec[i]

img_data[i, :, :] = img_data[i, :, :] - my_mean[i]

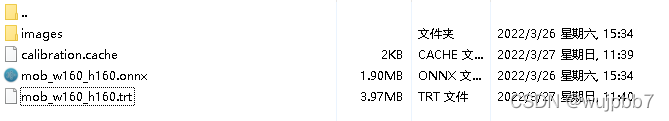

return img_data4、结果

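The mob_w160_h160.trt generated in my can be used for inference; calibration.cache is of little use afterwards.

Why is the trt file so much larger than the onnx file? Because of the dynamic batch: onnx_to_tensorrt.py builds optimization profiles for five batch sizes, [1, 8, 16, 32, 64].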
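With several optimization profiles in one engine, TensorRT 7/8 exposes a separate set of binding indices for each profile, which matters when a profile other than the first is selected for a given batch size. The sketch below shows the index arithmetic only. `bindings_for_profile` is a hypothetical helper; it assumes the counts are read from `engine.num_bindings` and `engine.num_optimization_profiles`.

```python
def bindings_for_profile(num_bindings, num_profiles, profile_index):
    """Return the binding indices that belong to one optimization profile.

    num_bindings is the engine-wide total, i.e. (inputs + outputs) * num_profiles.
    """
    if not 0 <= profile_index < num_profiles:
        raise ValueError("profile_index out of range")
    per_profile = num_bindings // num_profiles
    start = profile_index * per_profile
    return list(range(start, start + per_profile))
```

For a model with one input and one output built with five profiles, the engine has 10 bindings, and the fourth profile (index 3) owns bindings 6 and 7.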

Inference with the trt model

Go to the tensorrt-utils/inference/ directory.

Modify main in infer.py; the changes are mainly the paths and the model input.

def main():
    import os
    my_dir = os.path.dirname(os.path.realpath(__file__)) + '/../int8/calibration/my/'
    parser = argparse.ArgumentParser()
    #parser.add_argument("-e", "--engine", required=True, type=str,
    #                    help="Path to TensorRT engine file.")
    parser.add_argument("-e", "--engine", type=str, help="Path to TensorRT engine file.",
                        default=my_dir+'mob_w160_h160.trt')
    parser.add_argument("-s", "--seed", type=int, default=42,
                        help="Random seed for reproducibility.")
    args = parser.parse_args()

    ...

    # Generate random inputs based on profile shapes
    #host_inputs = get_random_inputs(engine, context, input_binding_idxs, seed=args.seed)

    # Replace host_inputs with the real input (single-batch example, reading from test.jpg).
    # host_inputs is a list with one element per model input; each element is an
    # np.array of shape [N,3,160,160]. This needs `import cv2` at the top of infer.py.
    my_mean = np.array([104, 117, 123], dtype=np.float32)  # same mean as in preprocess_imagenet
    batch_img = []
    img = cv2.imread('test.jpg')
    img = np.float32(img)
    img = cv2.resize(img, (160,160))
    img -= my_mean
    img = img.transpose(2, 0, 1)
    batch_img.append(img)
    host_inputs = [np.array(batch_img)]

    ...
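For more than one image, the same preprocessing can be applied to a whole batch at once. This is a minimal sketch, assuming the images are already decoded as 160x160 HWC arrays in the same channel order as the mean values; `make_batch` is a hypothetical helper, and the synthetic images only stand in for real ones.

```python
import numpy as np

MY_MEAN = np.array([104, 117, 123], dtype=np.float32)  # per-channel mean from the text


def make_batch(images, mean=MY_MEAN):
    """Stack HWC images into one contiguous float32 NCHW array."""
    batch = np.stack([np.asarray(img, dtype=np.float32) for img in images])  # N,H,W,C
    batch = batch - mean                 # broadcasts over the trailing channel axis
    batch = batch.transpose(0, 3, 1, 2)  # N,C,H,W, still a non-contiguous view
    return np.ascontiguousarray(batch)   # contiguous memory for host-to-device copies


# Synthetic stand-ins for four decoded images
images = [np.full((160, 160, 3), MY_MEAN, dtype=np.uint8) for _ in range(4)]
host_inputs = [make_batch(images)]
```

The explicit `np.ascontiguousarray` matters because `transpose` only returns a view; copying a non-contiguous array to the device would otherwise transfer the data in the wrong layout or fail.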

Note: do not run any non-TRT CUDA operations between create_execution_context and execute_v2 (for example, initializing an onnxruntime session whose EP is CUDA). Otherwise execution fails with baffling errors such as the following.

With the synchronous execute_v2 (the error is only visible after setting context.debug_sync = True):

[TensorRT] ERROR: safeContext.cpp (184) - Cudnn Error in configure: 7 (CUDNN_STATUS_MAPPING_ERROR)

[TensorRT] ERROR: FAILED_EXECUTION: std::exception

With the asynchronous execute_async (for complete asynchronous inference code, see https://github.com/RizhaoCai/PyTorch_ONNX_TensorRT):

[TensorRT] ERROR: ../rtSafe/cuda/reformat.cu (740) - Cuda Error in NCHWToNCQHW4: 400 (invalid resource handle)

[TensorRT] ERROR: FAILED_EXECUTION: std::exception
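One way to respect this constraint is to finish every other CUDA initialization before the execution context is created. The sketch below only illustrates the ordering. `run_once` and `init_other_cuda_users` are hypothetical names; `engine` is assumed to be a deserialized TensorRT 7/8 engine, and `bindings` an already prepared list of device pointers.

```python
def run_once(engine, bindings, init_other_cuda_users=None, debug_sync=False):
    """Create the context only after all other CUDA users are initialized."""
    # 1. Let every non-TensorRT CUDA user (e.g. an onnxruntime CUDA session)
    #    finish its initialization first.
    if init_other_cuda_users is not None:
        init_other_cuda_users()
    # 2. Only now create the execution context.
    context = engine.create_execution_context()
    context.debug_sync = debug_sync  # surfaces errors from synchronous execution
    # 3. Execute with nothing else in between.
    if not context.execute_v2(bindings):
        raise RuntimeError("TensorRT execute_v2 failed")
    return context
```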