MindSpore (昇思) 25-Day Learning Camp, Day 1 | Quick Start

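Introduction to MindSpore

MindSpore (昇思) is an all-scenario deep learning framework with three goals: easy development, efficient execution, and unified deployment across all scenarios.

Easy development means friendly APIs and low debugging difficulty. Efficient execution covers computation efficiency, data preprocessing efficiency, and distributed training efficiency. All-scenario means the framework supports cloud, edge, and device-side scenarios alike.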

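Hands-on

This section uses the MindSpore API to quickly build a simple deep learning model.

MindSpore provides a pipeline-based data engine that achieves efficient data preprocessing through datasets (Dataset) and data transforms (Transforms).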

import mindspore
from mindspore import nn
from mindspore.dataset import vision, transforms
from mindspore.dataset import MnistDataset

# Download data from open datasets
from download import download

url = "https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/" \
      "notebook/datasets/MNIST_Data.zip"
path = download(url, "./", kind="zip", replace=True)

Downloading data from https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/notebook/datasets/MNIST_Data.zip (10.3 MB)

file_sizes: 100%|███████████████████████████| 10.8M/10.8M [00:00<00:00, 101MB/s]

Extracting zip file…

Successfully downloaded / unzipped to ./

The MNIST dataset has the following directory structure:

MNIST_Data
├── train
│   ├── train-images-idx3-ubyte (60000 training images)
│   └── train-labels-idx1-ubyte (60000 training labels)
└── test
    ├── t10k-images-idx3-ubyte (10000 test images)
    └── t10k-labels-idx1-ubyte (10000 test labels)

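Once the data has been downloaded, create the dataset objects.

MindSpore datasets use a data processing pipeline, in which you specify operations such as map, batch, and shuffle. Here we use map to transform the image data and the labels, then pack the processed dataset into batches of size 64.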
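To make the map-then-batch idea concrete, here is a minimal plain-Python sketch of a lazy pipeline. The helpers `map_op` and `batch_op` are hypothetical stand-ins that only mimic the behaviour described above; they are not MindSpore APIs, and the real engine works differently internally.

```python
from itertools import islice

def map_op(rows, fn):
    """Lazily apply fn to every row (hypothetical stand-in for a map step)."""
    for row in rows:
        yield fn(row)

def batch_op(rows, batch_size):
    """Group consecutive rows into lists of batch_size; the last may be shorter."""
    it = iter(rows)
    while True:
        chunk = list(islice(it, batch_size))
        if not chunk:
            return
        yield chunk

raw = range(10)  # stand-in for 10 raw samples
pipeline = batch_op(map_op(raw, lambda v: v / 255.0), 4)
batches = list(pipeline)  # nothing is computed until the pipeline is consumed
print([len(b) for b in batches])  # [4, 4, 2]
```

Because both helpers are generators, each sample is transformed only when a batch is actually requested, which is the core idea of a pipeline.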

train_dataset = MnistDataset('MNIST_Data/train')

test_dataset = MnistDataset('MNIST_Data/test')

Print the column names in the dataset; they are needed when preprocessing the dataset.

print(train_dataset.get_col_names())

['image', 'label']

def datapipe(dataset, batch_size):
    image_transforms = [
        vision.Rescale(1.0 / 255.0, 0),
        vision.Normalize(mean=(0.1307,), std=(0.3081,)),
        vision.HWC2CHW()
    ]
    label_transform = transforms.TypeCast(mindspore.int32)

    dataset = dataset.map(image_transforms, 'image')
    dataset = dataset.map(label_transform, 'label')
    dataset = dataset.batch(batch_size)
    return dataset

# Map vision transforms and batch dataset

train_dataset = datapipe(train_dataset, 64)

test_dataset = datapipe(test_dataset, 64)
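The three image transforms can be checked by hand. The sketch below reproduces the arithmetic in plain Python on a tiny 2x2 single-channel image, assuming Rescale computes `pixel * rescale + shift` and Normalize computes `(value - mean) / std`. The helper names are hypothetical and the nested lists only stand in for image arrays.

```python
MEAN, STD = 0.1307, 0.3081

def rescale(pixel, scale=1.0 / 255.0, shift=0.0):
    """Map a 0..255 pixel value into 0..1."""
    return pixel * scale + shift

def normalize(value, mean=MEAN, std=STD):
    """Standardize a value with the given mean and standard deviation."""
    return (value - mean) / std

def hwc_to_chw(image):
    """Reorder a nested list from [H][W][C] to [C][H][W]."""
    height, width, channels = len(image), len(image[0]), len(image[0][0])
    return [[[image[h][w][c] for w in range(width)] for h in range(height)]
            for c in range(channels)]

# Tiny 2x2 image with one channel, pixel values in 0..255 (HWC layout).
image_hwc = [[[0], [255]],
             [[128], [64]]]

processed = hwc_to_chw(
    [[[normalize(rescale(p)) for p in pixel] for pixel in row] for row in image_hwc]
)
shape = (len(processed), len(processed[0]), len(processed[0][0]))
print(shape)  # (1, 2, 2)
print([round(v, 4) for v in processed[0][0]])  # [-0.4242, 2.8215]
```

A black pixel ends up at about -0.42 and a white pixel at about 2.82, so the inputs the network sees are roughly centred around zero.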

for image, label in test_dataset.create_tuple_iterator():
    print(f"Shape of image [N, C, H, W]: {image.shape} {image.dtype}")
    print(f"Shape of label: {label.shape} {label.dtype}")
    break

Shape of image [N, C, H, W]: (64, 1, 28, 28) Float32

Shape of label: (64,) Int32

for data in test_dataset.create_dict_iterator():
    print(f"Shape of image [N, C, H, W]: {data['image'].shape} {data['image'].dtype}")
    print(f"Shape of label: {data['label'].shape} {data['label'].dtype}")
    break

Shape of image [N, C, H, W]: (64, 1, 28, 28) Float32

Shape of label: (64,) Int32
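The batch size of 64 also determines how many steps one epoch takes. Here is a quick arithmetic sketch, assuming the incomplete final batch is kept rather than dropped, which agrees with the `/938` step counter in the training log further down. `batch_stats` is a hypothetical helper, not part of MindSpore.

```python
import math

def batch_stats(num_samples, batch_size, drop_remainder=False):
    """Return (number of batches, size of the last batch)."""
    if drop_remainder:
        return num_samples // batch_size, batch_size
    num_batches = math.ceil(num_samples / batch_size)
    last = num_samples - (num_batches - 1) * batch_size
    return num_batches, last

print(batch_stats(60000, 64))  # (938, 32)
print(batch_stats(10000, 64))  # (157, 16)
```

So the first 937 training batches hold 64 images each and the last one holds 32, while the test set yields 157 batches.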

# Define model
class Network(nn.Cell):
    def __init__(self):
        super().__init__()
        self.flatten = nn.Flatten()
        self.dense_relu_sequential = nn.SequentialCell(
            nn.Dense(28*28, 512),
            nn.ReLU(),
            nn.Dense(512, 512),
            nn.ReLU(),
            nn.Dense(512, 10)
        )

    def construct(self, x):
        x = self.flatten(x)
        logits = self.dense_relu_sequential(x)
        return logits

model = Network()
print(model)

Network<
  (flatten): Flatten<>
  (dense_relu_sequential): SequentialCell<
    (0): Dense
    (1): ReLU<>
    (2): Dense
    (3): ReLU<>
    (4): Dense
    >
  >
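To get a feel for the size of this network, the number of trainable parameters can be worked out from the layer shapes alone. This is a small sketch, assuming every Dense layer carries a bias vector; `dense_params` is a hypothetical helper.

```python
def dense_params(in_features, out_features, has_bias=True):
    """Weights plus optional bias of one fully connected layer."""
    return in_features * out_features + (out_features if has_bias else 0)

# (inputs, outputs) of the three Dense layers in Network
layers = [(28 * 28, 512), (512, 512), (512, 10)]
per_layer = [dense_params(i, o) for i, o in layers]
print(per_layer)       # [401920, 262656, 5130]
print(sum(per_layer))  # 669706
```

Most of the roughly 670 thousand parameters sit in the first layer, which connects all 784 input pixels to 512 hidden units.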

# Instantiate loss function and optimizer

loss_fn = nn.CrossEntropyLoss()

optimizer = nn.SGD(model.trainable_params(), 1e-2)
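As a rough intuition for the loss values in the training log, the sketch below computes softmax cross-entropy for a single sample in plain Python. It is an illustration of the formula only, not MindSpore's implementation, and `cross_entropy` is a hypothetical helper.

```python
import math

def cross_entropy(logits, label):
    """Softmax cross-entropy for one sample, using the log-sum-exp trick."""
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum_exp - logits[label]

uniform = cross_entropy([0.0] * 10, label=3)            # no preference among 10 classes
confident = cross_entropy([0.0] * 9 + [10.0], label=9)  # strong, correct prediction
print(round(uniform, 4))    # 2.3026, i.e. ln(10)
print(confident < uniform)  # True
```

A model that guesses uniformly over ten digits scores ln(10), about 2.30, which is close to the first loss value of 2.324437 logged at the start of epoch 1.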

# 1. Define forward function
def forward_fn(data, label):
    logits = model(data)
    loss = loss_fn(logits, label)
    return loss, logits

# 2. Get gradient function
grad_fn = mindspore.value_and_grad(forward_fn, None, optimizer.parameters, has_aux=True)

# 3. Define function of one-step training
def train_step(data, label):
    (loss, _), grads = grad_fn(data, label)
    optimizer(grads)
    return loss
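The three steps above follow a common pattern: a forward function returns the loss plus an auxiliary output, a gradient function returns both the outputs and the gradients, and the optimizer applies an update. The toy sketch below imitates that shape with a single scalar weight. It uses finite differences instead of automatic differentiation, and `value_and_grad_fd` is a hypothetical helper, so treat it as an illustration of the data flow rather than of how MindSpore computes gradients.

```python
def forward_fn(w, x, target):
    """Toy forward pass: returns (loss, auxiliary output)."""
    pred = w * x
    loss = (pred - target) ** 2
    return loss, pred

def value_and_grad_fd(fn, eps=1e-6):
    """Wrap fn so it returns ((loss, aux), d loss / d w) via central differences."""
    def wrapped(w, *args):
        loss, aux = fn(w, *args)
        loss_plus, _ = fn(w + eps, *args)
        loss_minus, _ = fn(w - eps, *args)
        return (loss, aux), (loss_plus - loss_minus) / (2 * eps)
    return wrapped

grad_fn = value_and_grad_fd(forward_fn)

w, learning_rate = 0.0, 1e-2
(loss, pred), grad = grad_fn(w, 2.0, 4.0)
w_new = w - learning_rate * grad  # plain SGD update
print(loss, round(grad, 4), round(w_new, 4))  # 16.0 -16.0 0.16
```

The weight moves from 0.0 towards the value 2.0 that would make the prediction exact, which is what each `train_step` does for every parameter of the network.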

def train(model, dataset):
    size = dataset.get_dataset_size()
    model.set_train()
    for batch, (data, label) in enumerate(dataset.create_tuple_iterator()):
        loss = train_step(data, label)

        if batch % 100 == 0:
            loss, current = loss.asnumpy(), batch
            print(f"loss: {loss:>7f} [{current:>3d}/{size:>3d}]")

def test(model, dataset, loss_fn):
    num_batches = dataset.get_dataset_size()
    model.set_train(False)
    total, test_loss, correct = 0, 0, 0
    for data, label in dataset.create_tuple_iterator():
        pred = model(data)
        total += len(data)
        test_loss += loss_fn(pred, label).asnumpy()
        correct += (pred.argmax(1) == label).asnumpy().sum()
    test_loss /= num_batches
    correct /= total
    print(f"Test: \n Accuracy: {(100*correct):>0.1f}%, Avg loss: {test_loss:>8f} \n")
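The bookkeeping inside `test` can be illustrated without any framework. In the sketch below the logits, labels, and per-batch losses are made-up numbers, and `evaluate` is a hypothetical helper that mirrors the same two averages: accuracy over samples and loss over batches.

```python
def argmax(values):
    """Index of the largest entry."""
    return max(range(len(values)), key=values.__getitem__)

def evaluate(batches):
    """batches: list of (logits_per_sample, labels, batch_loss) tuples."""
    total = correct = 0
    loss_sum = 0.0
    for logits, labels, batch_loss in batches:
        total += len(labels)
        loss_sum += batch_loss
        correct += sum(argmax(row) == y for row, y in zip(logits, labels))
    return correct / total, loss_sum / len(batches)

batches = [
    ([[0.1, 2.0, 0.3], [1.5, 0.2, 0.1]], [1, 0], 0.40),  # both predictions correct
    ([[0.9, 0.1, 0.0]], [2], 1.20),                      # prediction wrong
]
accuracy, avg_loss = evaluate(batches)
print(f"Accuracy: {100 * accuracy:.1f}%, Avg loss: {avg_loss:.2f}")  # Accuracy: 66.7%, Avg loss: 0.80
```

Note that the loss is averaged per batch, so a smaller final batch counts as much as a full one; for MNIST with batches of 64 the effect is small.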

epochs = 3
for t in range(epochs):
    print(f"Epoch {t+1}\n-------------------------------")
    train(model, train_dataset)
    test(model, test_dataset, loss_fn)
print("Done!")

Epoch 1

loss: 2.324437 [ 0/938]

loss: 1.895081 [100/938]

loss: 0.988387 [200/938]

loss: 0.488328 [300/938]

loss: 0.471783 [400/938]

loss: 0.339676 [500/938]

loss: 0.491736 [600/938]

loss: 0.280132 [700/938]

loss: 0.527453 [800/938]

loss: 0.341056 [900/938]

Test:

Accuracy: 90.6%, Avg loss: 0.327401

Epoch 2

loss: 0.243178 [ 0/938]

loss: 0.454176 [100/938]

loss: 0.350472 [200/938]

loss: 0.294072 [300/938]

loss: 0.305126 [400/938]

loss: 0.258275 [500/938]

loss: 0.143015 [600/938]

loss: 0.266470 [700/938]

loss: 0.135980 [800/938]

loss: 0.417509 [900/938]

Test:

Accuracy: 92.8%, Avg loss: 0.250266

Epoch 3

loss: 0.335155 [ 0/938]

loss: 0.258822 [100/938]

loss: 0.388704 [200/938]

loss: 0.175153 [300/938]

loss: 0.232374 [400/938]

loss: 0.101277 [500/938]

loss: 0.221097 [600/938]

loss: 0.348889 [700/938]

loss: 0.111365 [800/938]

loss: 0.239588 [900/938]

Test:

Accuracy: 93.7%, Avg loss: 0.212484

Done!

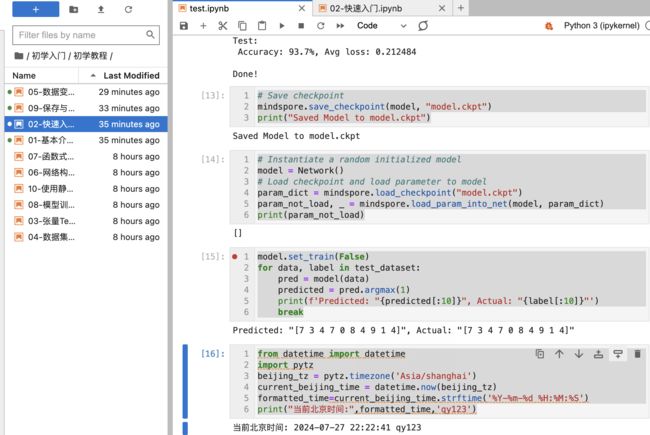

# Save checkpoint

mindspore.save_checkpoint(model, "model.ckpt")

print("Saved Model to model.ckpt")

Saved Model to model.ckpt

# Instantiate a random initialized model

model = Network()

# Load checkpoint and load parameter to model

param_dict = mindspore.load_checkpoint("model.ckpt")

param_not_load, _ = mindspore.load_param_into_net(model, param_dict)

print(param_not_load)

[]
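The empty list printed above means that every parameter of the network found a matching entry in the checkpoint. The plain-dict sketch below shows that kind of bookkeeping. The parameter names and the `load_params` helper are hypothetical, and this is not how MindSpore implements loading.

```python
def load_params(model_params, checkpoint):
    """Copy matching entries from checkpoint into model_params.

    Returns (model names missing from the checkpoint,
             checkpoint names the model does not use).
    """
    not_loaded = [name for name in model_params if name not in checkpoint]
    unused = [name for name in checkpoint if name not in model_params]
    for name in model_params:
        if name in checkpoint:
            model_params[name] = checkpoint[name]
    return not_loaded, unused

model_params = {"dense.weight": [0.0, 0.0], "dense.bias": [0.0]}   # freshly initialised
checkpoint = {"dense.weight": [0.5, -0.5], "dense.bias": [0.1]}    # saved values
not_loaded, unused = load_params(model_params, checkpoint)
print(not_loaded)                    # []
print(model_params["dense.weight"])  # [0.5, -0.5]
```

A non-empty first list would signal that the model definition and the checkpoint no longer match, for example after renaming a layer.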

model.set_train(False)
for data, label in test_dataset:
    pred = model(data)
    predicted = pred.argmax(1)
    print(f'Predicted: "{predicted[:10]}", Actual: "{label[:10]}"')
    break

Predicted: "[7 3 4 7 0 8 4 9 1 4]", Actual: "[7 3 4 7 0 8 4 9 1 4]"

from datetime import datetime
import pytz

# Check-in stamp: print the current Beijing time together with the user name
beijing_tz = pytz.timezone('Asia/Shanghai')
current_beijing_time = datetime.now(beijing_tz)
formatted_time = current_beijing_time.strftime('%Y-%m-%d %H:%M:%S')
print("Current Beijing time:", formatted_time, 'qy123')

Current Beijing time: 2024-07-27 22:22:41 qy123
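If `pytz` is not installed, the same stamp can be produced with the standard library alone. This is a minimal sketch, assuming Beijing time can be treated as a fixed UTC+8 offset with no daylight saving; `beijing_time_string` is a hypothetical helper.

```python
from datetime import datetime, timedelta, timezone

# Assumption: Beijing time is a fixed UTC+8 offset (no daylight saving).
BEIJING = timezone(timedelta(hours=8), name="CST")

def beijing_time_string(now_utc=None):
    """Format a UTC datetime (default: now) as Beijing local time."""
    now_utc = now_utc or datetime.now(timezone.utc)
    return now_utc.astimezone(BEIJING).strftime('%Y-%m-%d %H:%M:%S')

fixed = datetime(2024, 7, 27, 14, 22, 41, tzinfo=timezone.utc)
print(beijing_time_string(fixed))  # 2024-07-27 22:22:41
```

Passing an explicit UTC datetime keeps the function easy to test, while calling it without arguments prints the current time.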