Hadoop successfully deployed on Red Hat Linux 5 (three machines)

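After a week of on-and-off tinkering, I finally got this Hadoop deployment working while on a business trip.

My environment is VMware + Red Hat Linux 5, with one namenode and one datanode. I ran into quite a few problems during configuration, which are listed one by one further down.

Later I added another datanode to the slaves file, and that worked too. So the repeated attempts of the past few days were not wasted. Next I will start writing a distributed search engine in Windows + Cygwin + Eclipse.

Here are the results of the successful run.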

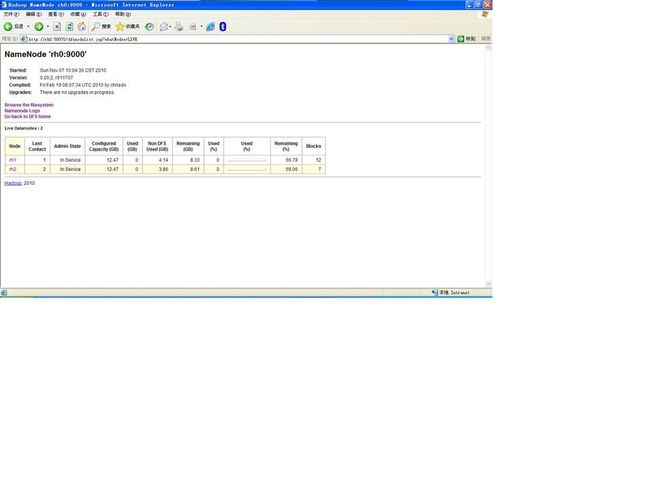

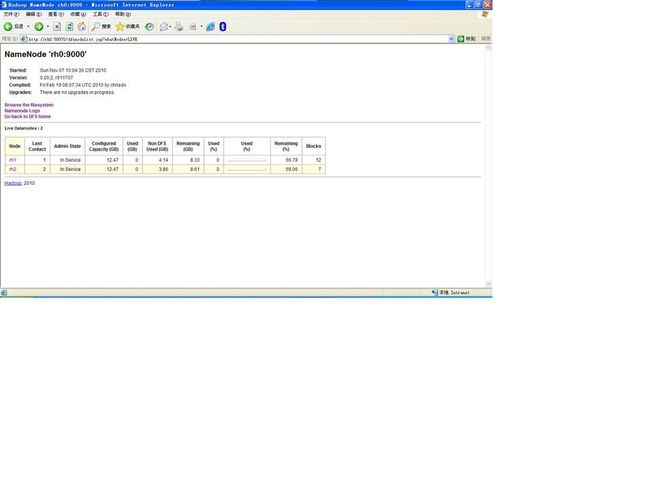

[Console screenshots]

    [root@rh0 bin]# hadoop fs -lsr /tmp
    drwxr-xr-x - root supergroup 0 2010-11-07 09:41 /tmp/hadoop-root
    drwxr-xr-x - root supergroup 0 2010-11-07 09:41 /tmp/hadoop-root/tmp
    drwxr-xr-x - root supergroup 0 2010-11-07 09:41 /tmp/hadoop-root/tmp/mapred
    drwx-wx-wx - root supergroup 0 2010-11-07 09:42 /tmp/hadoop-root/tmp/mapred/system
    [root@rh0 bin]# hadoop dfsadmin -report
    Configured Capacity: 13391486976 (12.47 GB)
    Present Capacity: 8943796224 (8.33 GB)
    DFS Remaining: 8943742976 (8.33 GB)
    DFS Used: 53248 (52 KB)
    DFS Used%: 0%
    Under replicated blocks: 0
    Blocks with corrupt replicas: 0
    Missing blocks: 0
    -------------------------------------------------
    Datanodes available: 1 (1 total, 0 dead)
    Name: 192.168.126.101:50010
    Decommission Status : Normal
    Configured Capacity: 13391486976 (12.47 GB)
    DFS Used: 53248 (52 KB)
    Non DFS Used: 4447690752 (4.14 GB)
    DFS Remaining: 8943742976(8.33 GB)
    DFS Used%: 0%
    DFS Remaining%: 66.79%
    Last contact: Sun Nov 07 09:42:18 CST 2010
    [root@rh0 bin]# ./hadoop fs -put /usr/local/cjd/b.txt /tmp/hadoop-root/tmp/cjd/b.txt
    [root@rh0 bin]# ./hadoop jar hadoop-0.20.2-examples.jar wordcount /tmp/hadoop-root/tmp/cjd /tmp/hadoop-root/tmp/output-dir
    10/11/07 09:43:03 INFO input.FileInputFormat: Total input paths to process : 1
    10/11/07 09:43:03 INFO mapred.JobClient: Running job: job_201011070941_0001
    10/11/07 09:43:04 INFO mapred.JobClient: map 0% reduce 0%
    10/11/07 09:43:15 INFO mapred.JobClient: map 100% reduce 0%
    10/11/07 09:43:36 INFO mapred.JobClient: map 100% reduce 100%
    10/11/07 09:43:39 INFO mapred.JobClient: Job complete: job_201011070941_0001
    10/11/07 09:43:39 INFO mapred.JobClient: Counters: 17
    10/11/07 09:43:39 INFO mapred.JobClient:   Job Counters
    10/11/07 09:43:39 INFO mapred.JobClient:     Launched reduce tasks=1
    10/11/07 09:43:39 INFO mapred.JobClient:     Launched map tasks=1
    10/11/07 09:43:39 INFO mapred.JobClient:     Data-local map tasks=1
    10/11/07 09:43:39 INFO mapred.JobClient:   FileSystemCounters
    10/11/07 09:43:39 INFO mapred.JobClient:     FILE_BYTES_READ=1836
    10/11/07 09:43:39 INFO mapred.JobClient:     HDFS_BYTES_READ=1366
    10/11/07 09:43:39 INFO mapred.JobClient:     FILE_BYTES_WRITTEN=3704
    10/11/07 09:43:39 INFO mapred.JobClient:     HDFS_BYTES_WRITTEN=1306
    10/11/07 09:43:39 INFO mapred.JobClient:   Map-Reduce Framework
    10/11/07 09:43:39 INFO mapred.JobClient:     Reduce input groups=131
    10/11/07 09:43:39 INFO mapred.JobClient:     Combine output records=131
    10/11/07 09:43:39 INFO mapred.JobClient:     Map input records=31
    10/11/07 09:43:39 INFO mapred.JobClient:     Reduce shuffle bytes=1836
    10/11/07 09:43:39 INFO mapred.JobClient:     Reduce output records=131
    10/11/07 09:43:39 INFO mapred.JobClient:     Spilled Records=262
    10/11/07 09:43:39 INFO mapred.JobClient:     Map output bytes=2055
    10/11/07 09:43:39 INFO mapred.JobClient:     Combine input records=179
    10/11/07 09:43:39 INFO mapred.JobClient:     Map output records=179
    10/11/07 09:43:39 INFO mapred.JobClient:     Reduce input records=131
    [root@rh0 bin]# hadoop dfsadmin -report
    Configured Capacity: 13391486976 (12.47 GB)
    Present Capacity: 8943679754 (8.33 GB)
    DFS Remaining: 8943587328 (8.33 GB)
    DFS Used: 92426 (90.26 KB)
    DFS Used%: 0%
    Under replicated blocks: 0
    Blocks with corrupt replicas: 0
    Missing blocks: 0
    -------------------------------------------------
    Datanodes available: 1 (1 total, 0 dead)
    Name: 192.168.126.101:50010
    Decommission Status : Normal
    Configured Capacity: 13391486976 (12.47 GB)
    DFS Used: 92426 (90.26 KB)
    Non DFS Used: 4447807222 (4.14 GB)
    DFS Remaining: 8943587328(8.33 GB)
    DFS Used%: 0%
    DFS Remaining%: 66.79%
    Last contact: Sun Nov 07 09:46:22 CST 2010
    [root@rh0 bin]# hadoop fs -lsr /tmp
    drwxr-xr-x - root supergroup 0 2010-11-07 09:41 /tmp/hadoop-root
    drwxr-xr-x - root supergroup 0 2010-11-07 09:43 /tmp/hadoop-root/tmp
    drwxr-xr-x - root supergroup 0 2010-11-07 09:42 /tmp/hadoop-root/tmp/cjd
    -rw-r--r-- 1 root supergroup 1366 2010-11-07 09:42 /tmp/hadoop-root/tmp/cjd/b.txt
    drwxr-xr-x - root supergroup 0 2010-11-07 09:41 /tmp/hadoop-root/tmp/mapred
    drwx-wx-wx - root supergroup 0 2010-11-07 09:43 /tmp/hadoop-root/tmp/mapred/system
    -rw------- 1 root supergroup 4 2010-11-07 09:42 /tmp/hadoop-root/tmp/mapred/system/jobtracker.info
    drwxr-xr-x - root supergroup 0 2010-11-07 09:43 /tmp/hadoop-root/tmp/output-dir
    drwxr-xr-x - root supergroup 0 2010-11-07 09:43 /tmp/hadoop-root/tmp/output-dir/_logs
    drwxr-xr-x - root supergroup 0 2010-11-07 09:43 /tmp/hadoop-root/tmp/output-dir/_logs/history
    -rw-r--r-- 1 root supergroup 29085 2010-11-07 09:43 /tmp/hadoop-root/tmp/output-dir/_logs/history/rh0_1289094087484_job_201011070941_0001_conf.xml
    -rw-r--r-- 1 root supergroup 7070 2010-11-07 09:43 /tmp/hadoop-root/tmp/output-dir/_logs/history/rh0_1289094087484_job_201011070941_0001_root_word+count
    -rw-r--r-- 1 root supergroup 1306 2010-11-07 09:43 /tmp/hadoop-root/tmp/output-dir/part-r-00000
    [root@rh0 bin]# hadoop fs -get /tmp/hadoop-root/tmp/output-dir /usr/local/cjd/b
    [root@rh0 bin]#

[Listing the nodes after adding one] Two datanodes are now available.

Datanodes available: 2 (2 total, 0 dead)

    [root@rh0 bin]# hadoop dfsadmin -report
    Configured Capacity: 26782973952 (24.94 GB)
    Present Capacity: 18191843343 (16.94 GB)
    DFS Remaining: 18191613952 (16.94 GB)
    DFS Used: 229391 (224.01 KB)
    DFS Used%: 0%
    Under replicated blocks: 0
    Blocks with corrupt replicas: 0
    Missing blocks: 0
    -------------------------------------------------
    Datanodes available: 2 (2 total, 0 dead)
    Name: 192.168.126.102:50010
    Decommission Status : Normal
    Configured Capacity: 13391486976 (12.47 GB)
    DFS Used: 53263 (52.01 KB)
    Non DFS Used: 4143476721 (3.86 GB)
    DFS Remaining: 9247956992(8.61 GB)
    DFS Used%: 0%
    DFS Remaining%: 69.06%
    Last contact: Sun Nov 07 10:06:55 CST 2010
    Name: 192.168.126.101:50010
    Decommission Status : Normal
    Configured Capacity: 13391486976 (12.47 GB)
    DFS Used: 176128 (172 KB)
    Non DFS Used: 4447653888 (4.14 GB)
    DFS Remaining: 8943656960(8.33 GB)
    DFS Used%: 0%
    DFS Remaining%: 66.79%
    Last contact: Sun Nov 07 10:06:55 CST 2010
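To check the node count without reading the whole report, the summary line can be pulled out with standard tools. This is only a minimal sketch: the function name datanodes_available is hypothetical, and it assumes the report keeps the "Datanodes available: N (...)" line format shown in the 0.20.2 output above.

```shell
# Hypothetical helper (not part of Hadoop): read `hadoop dfsadmin -report`
# output on stdin and print the number from the
# "Datanodes available: N (...)" summary line.
datanodes_available() {
    sed -n 's/^Datanodes available: \([0-9][0-9]*\).*/\1/p' | head -n 1
}

# Usage on the namenode (assumes `hadoop` is on PATH):
#   hadoop dfsadmin -report | datanodes_available
```

If the printed number is lower than the number of entries in the slaves file, at least one datanode has not registered with the namenode.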

[Problems encountered]

0. Add export HADOOP_HOME=/usr/local/cjd/hadoop/hadoop-0.20.2 to /etc/profile; otherwise the datanode does not seem to know where Hadoop is installed.

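1. "Name node is in safe mode": run the following command to leave safe mode.

bin/hadoop dfsadmin -safemode leave

The -safemode option accepts enter, leave, get and wait; "off" is not one of them, so bin/hadoop dfsadmin -safemode off is not needed.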

2. If a datanode fails to start after the configuration files have been changed, and its log shows the following error:

Incompatible namespaceIDs in /tmp/hadoop-root/tmp/dfs/data: namenode namespaceID = 1952086391; datanode namespaceID = 1896626371

then go to the /tmp/hadoop-root/tmp/dfs/data/current/ directory on the datanode, open the VERSION file, and change namespaceID=xxxx to the namenode value from the message above: 1952086391. Restart Hadoop afterwards.
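The manual edit above can also be scripted. The following is a minimal sketch, not the procedure actually used here: set_namespace_id is a hypothetical helper name, and it assumes VERSION is a plain key=value file with a single namespaceID line. It keeps a backup copy next to the original.

```shell
# Hypothetical helper: set namespaceID in a datanode VERSION file.
#   $1 = path to the VERSION file
#   $2 = namespaceID that the error message reports for the namenode
set_namespace_id() {
    version_file=$1
    new_id=$2
    # Keep a backup so the previous value can be restored.
    cp "$version_file" "$version_file.bak" || return 1
    # Rewrite only the namespaceID line; all other lines pass through as-is.
    sed "s/^namespaceID=.*/namespaceID=$new_id/" "$version_file.bak" > "$version_file"
}

# Usage on the datanode, with the values from the error above:
#   set_namespace_id /tmp/hadoop-root/tmp/dfs/data/current/VERSION 1952086391
```

Stop the datanode before running it, then restart Hadoop as described above.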

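3. Use IP addresses rather than machine names in the XML configuration files; IPs are the safer choice. The masters and slaves files should contain IPs as well.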
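As an illustration of the IP-only rule for the two node lists, here is a minimal sketch. The helper name write_node_lists is hypothetical, and the assignment of addresses is an assumption inferred from the output in this post: 192.168.126.100 (rh0) for the masters file, 192.168.126.101 and 192.168.126.102 as datanodes.

```shell
# Hypothetical helper: write the masters and slaves files using IPs only.
#   $1 = Hadoop conf directory
write_node_lists() {
    conf_dir=$1
    # masters: assumed to be the rh0 machine (192.168.126.100)
    printf '%s\n' 192.168.126.100 > "$conf_dir/masters"
    # slaves: one datanode IP per line
    printf '%s\n' 192.168.126.101 192.168.126.102 > "$conf_dir/slaves"
}

# Usage (assumes HADOOP_HOME is set as in item 0):
#   write_node_lists "$HADOOP_HOME/conf"
```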

4. On every datanode, run telnet namenode 9000. If the connection succeeds, the datanode can reach the namenode port.

5. Problem when starting wordcount:
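Where telnet is not installed, the same connectivity check can be approximated with bash alone. This is a sketch under assumptions: port_open is a hypothetical name, it relies on bash's /dev/tcp redirection and on the coreutils timeout command (older systems may lack timeout, in which case that prefix can be dropped), and 192.168.126.100 is assumed to be the namenode address.

```shell
# Hypothetical helper: succeed if a TCP connection to host:port can be opened.
#   $1 = host or IP, $2 = port
port_open() {
    # bash treats /dev/tcp/HOST/PORT specially and opens a TCP socket;
    # timeout bounds the attempt to 3 seconds.
    timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# Usage on each datanode (192.168.126.100 is assumed to be the namenode):
#   port_open 192.168.126.100 9000 && echo reachable || echo "NOT reachable"
```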

192.168.126.100: Address 192.168.126.100 maps to rh0, but this does not map back to the address - POSSIBLE BREAK-IN ATTEMPT!

This is handled as follows: make sure /etc/xxx/xx/host looks like this:

    # that require network functionality will fail.
    #127.0.0.1 rh0 localhost    [this setting causes the error]
    127.0.0.1 localhost
    ::1 localhost6.localdomain6 localhost6
    192.168.126.100 rh0
    192.168.126.101 rh1
    192.168.126.102 rh2
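The mistake in the commented-out line is that the machine name rh0 sits on the loopback line, so the name no longer maps back to 192.168.126.100. A small check for that situation could look like the sketch below; on_loopback_line is a hypothetical helper, and the path of the hosts file is passed in by the caller.

```shell
# Hypothetical helper: succeed if <hostname> appears on an uncommented
# loopback line (127.0.0.1 or ::1) of the given hosts file.
#   $1 = hosts file, $2 = hostname to look for (for example rh0)
on_loopback_line() {
    awk -v name="$2" '
        /^[ \t]*#/ { next }    # skip comment lines
        $1 == "127.0.0.1" || $1 == "::1" {
            for (i = 2; i <= NF; i++) if ($i == name) found = 1
        }
        END { exit (found ? 0 : 1) }
    ' "$1"
}

# Usage on each machine, with that machine's own name:
#   on_loopback_line /path/to/hosts rh0 && echo "rh0 is bound to loopback, fix it"
```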