hadoop2.0 相关问题(持续更新)

搭建了一个hadoop2.0的测试集群,使用的是QJM HA方案,搭建配置过程就不在这里说了,晚上有很多资料。把遇到的一些问题总结一下:

配置HA的时候,hdfs-site.xml文件中:

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>false</value>

</property>

我们在这里使用的是收到恢复故障,如果使用自动恢复,需要配置:

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/qingwu.fu/.ssh/id_rsa</value>

</property>

公司安全规定不能设置无密码登陆,自己修改 /etc/hosts.allow ,ssh无密码登陆可以使用。过了几分钟就不好使了,查看 /etc/hosts.allow 文件,发现又恢复回去了。

由于是测试集群,就没有向安全组申请,不过在ssh可以无密码登陆的时候测试过自动恢复,挺好用的。

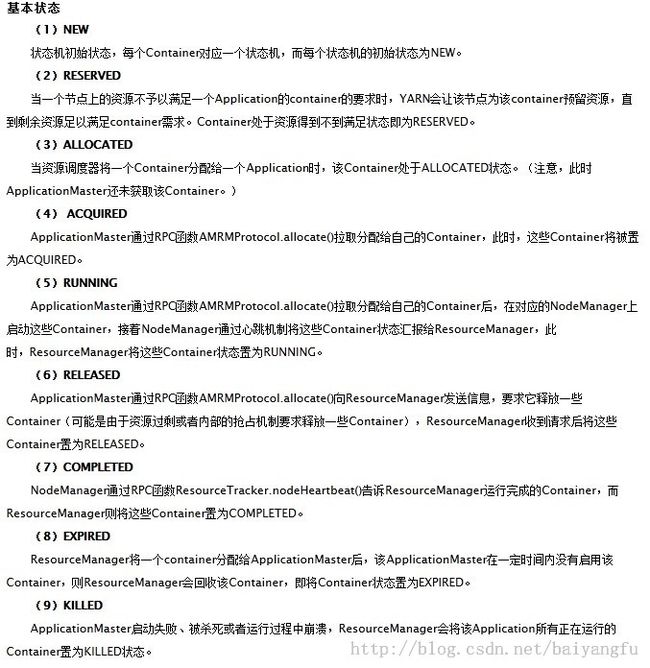

集群搭好以后,跑了一下wordcount 程序,发现有一点问题,任务提交以后总是不执行,原因是 nodemanager 在起container的时候总是处于reserved状态。hadoop2.0 也不会把这样的状态当成是错误,导致找了很长时间才找到问题的所在。首先需要了解一下container的几种基本状态:

原来 container处于reserved状态是由于所需要的资源不能满足,等待nodemanager的资源达到container的需要才运行。

知道这一点就能推断出应该跟nodemanager的资源设置问题,也就是内存设置问题:

yarn-site.xml中与内存相关配置

<property>

<name>yarn.scheduler.minimum-allocation-mb</name>

<value>512</value>

<descript>The minimum allocation for every container request at the RM, in MBs. Memory requests lower than this won't take effect, and the specified value will get allocated at minimum.</descript>

</property>

<property>

<name>yarn.scheduler.maximum-allocation-mb</name>

<value>4096</value>

</property>

<!-- configuration for nodemanager -->

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>4096</value>

<descript>Amount of physical memory, in MB, that can be allocated for containers.</descript>

</property>

mapred-site.xml中与内存相关配置:

<property>

<name>mapreduce.map.memory.mb</name>

<value>1024</value>

</property>

<property>

<name>mapreduce.map.java.opts</name>

<value>-Xmx1024M</value>

</property>

注意:mapreduce.map.memory.mb 和 yarn.scheduler.minimum-allocation-mb 的设置不要大于yarn.nodemanager.resource.memory-mb

查看namenode的镜像目录,会发现有很多的edit文件,而且是每隔一秒就会生成一个,这个跟以下配置有关:

<property>

<name>dfs.namenode.name.dir</name>

<value>/export1/hadoop2/hdfs/namenode</value>

</property>

<property>

<name>dfs.namenode.edits.dir</name>

<value>${dfs.namenode.name.dir}</value>

<description>Determines where on the local filesystem the DFS name node

should store the transaction (edits) file. If this is a comma-delimited list

of directories then the transaction file is replicated in all of the

directories, for redundancy. Default value is same as dfs.namenode.name.dir

</description>

</property>

<property>

<name>dfs.namenode.num.extra.edits.retained</name>

<value>1000000</value>

<description>The number of extra transactions which should be retained

beyond what is minimally necessary for a NN restart. This can be useful for

audit purposes or for an HA setup where a remote Standby Node may have

been offline for some time and need to have a longer backlog of retained

edits in order to start again.

Typically each edit is on the order of a few hundred bytes, so the default

of 1 million edits should be on the order of hundreds of MBs or low GBs.

NOTE: Fewer extra edits may be retained than value specified for this setting

if doing so would mean that more segments would be retained than the number

configured by dfs.namenode.max.extra.edits.segments.retained.

</description>

</property>

<property>

<name>dfs.ha.tail-edits.period</name>

<value>60</value>

<description>

How often, in seconds, the StandbyNode should check for new

finalized log segments in the shared edits log.

</description>

</property>