The playback plugin in GStreamer

http://blog.sina.com.cn/s/articlelist_2160998997_4_1.html

1. Basic introduction to the playback plugin

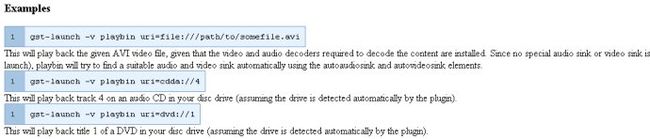

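Early versions shipped both playbin and playbin2. In recent versions playbin2 is stable and has replaced playbin, which is no longer maintained. The official documentation describes it as follows: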

Playbin2 provides a stand-alone everything-in-one abstraction for an audio and/or video player.

playbin2 is considered stable now. It is the preferred playback API now, and replaces the old playbin element, which is no longer supported.

In the example above no audio or video sink is specified, so playbin is responsible for finding the most suitable elements.

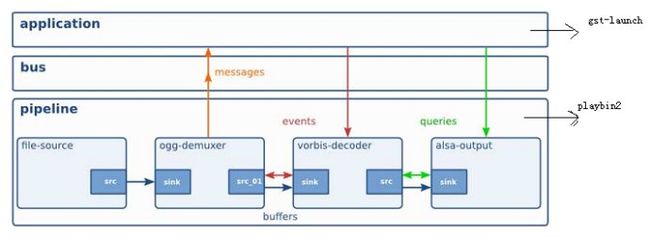

In this diagram, gst-launch is the application and playbin is the pipeline.

You can also skip playbin and describe your own pipeline directly:

gst-launch gnlfilesource location=file:///tmp/myfile.mov start=0 duration=2000000000 ! autovideosink

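gst-launch drives the pipeline by stepping its state machine from state to state. It also receives the messages the pipeline posts and handles them, leaving the loop once an EOS message arrives. In general, the application drives the pipeline through events and decides how to control it based on the messages the pipeline posts.

So playbin is just a pipeline, and a pipeline needs an application to create and drive it. gst-launch is one such application.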

1. Creating the pipeline

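int main (int argc, char *argv[])
{
  /* gst-launch expects the pipeline to be described on the command line, so
   * parsing the command line tells it which elements the pipeline contains.
   * gst-launch parses these elements, looks them up among the registered
   * plugins, and builds the pipeline. With the pipeline in hand, pointers to
   * the elements inside it can be obtained from the pipeline's data
   * structure, so the application can call functions implemented by the
   * elements, such as change_state. */
  pipeline =
      (GstElement *) gst_parse_launchv ((const gchar **) argvn, &error);
}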

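2. Externally visible functions of pipelines and elements

GStreamer defines a set of functions that an element is expected to implement and that the application can call. They are declared as function pointers in the class struct and are normally assigned in class_init(). A pipeline is also a subclass several levels deep (single inheritance, not multiple inheritance): it inherits from GObject, GstObject, GstElement and GstBin.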

struct _GstPlayBinClass

{

GstPipelineClass parent_class;

.....

}

struct _GstPipelineClass {

GstBinClass parent_class;

...

}

struct _GstBinClass {

GstElementClass parent_class;

....

}

struct _GstElementClass

{

GstObjectClass parent_class;

....

}

struct _GstObjectClass {

GObjectClass parent_class;

....

}

Inheritance chain:

GObjectClass -> GstObjectClass -> GstElementClass -> GstBinClass -> GstPipelineClass -> GstPlayBinClass
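The chain above works in plain C because each class struct embeds its parent as its first member, so a pointer to the derived class struct can be cast to any ancestor type. Below is a minimal sketch of that layout trick. The `Toy*` types and `toy_*` functions are hypothetical stand-ins written for this illustration; they are not GStreamer types.

```c
/* Hypothetical stand-ins for GstElementClass / GstBinClass / GstPipelineClass. */
typedef struct ToyElementClass {
    int (*change_state)(int transition);   /* virtual method slot */
} ToyElementClass;

typedef struct ToyBinClass {
    ToyElementClass parent_class;          /* must be the first member */
    int (*handle_message)(int type);
} ToyBinClass;

typedef struct ToyPipelineClass {
    ToyBinClass parent_class;              /* must be the first member */
} ToyPipelineClass;

static int toy_pipeline_change_state(int transition) { return transition + 100; }
static int toy_bin_handle_message(int type) { return type * 2; }

/* Mirrors the casts at the top of a class_init(): the same address is viewed
 * through each ancestor's type, and the method slots are filled in. */
static void toy_pipeline_class_init(ToyPipelineClass *klass)
{
    ToyElementClass *element_klass = (ToyElementClass *) klass;
    ToyBinClass *bin_klass = (ToyBinClass *) klass;

    element_klass->change_state = toy_pipeline_change_state;
    bin_klass->handle_message = toy_bin_handle_message;
}

/* What an application-facing wrapper does: look up the slot and call it. */
static int toy_element_change_state(ToyElementClass *klass, int transition)
{
    return klass->change_state(transition);
}
```

Because the parent struct sits at offset 0, `(ToyElementClass *) klass` and `klass` refer to the same memory, which is why the three casts in gst_play_bin_class_init() are safe.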

For example:

In gst-launch, calling set_state() on a GstElement goes through the following chain:

static GstElement *pipeline = NULL;

...

gst_element_set_state (pipeline,GST_STATE_PLAYING);

-> result = (oclass->set_state) (element, state);

-> klass->set_state = GST_DEBUG_FUNCPTR (gst_element_set_state_func);

-> transition = (GstStateChange) GST_STATE_TRANSITION (current, next);

ret = gst_element_change_state (element, transition);

-> ret = (oclass->change_state) (element, transition);

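The current state and the target state may not be adjacent, and every change has to follow the state machine's transition rules, so intermediate transitions may be needed. Going from NULL to PLAYING, for example, passes through NULL->READY->PAUSED->PLAYING. These intermediate states are handled by examining the return value ret against the target state and scheduling the next transition toward it, which is done by the following function: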

gst_element_continue_state

-> message = gst_message_new_state_changed (GST_OBJECT_CAST (element),

old_state, old_next, pending);

gst_element_post_message (element, message);

set_state() has to respect the transitions the state machine allows:

typedef enum

{

GST_STATE_CHANGE_NULL_TO_READY = (GST_STATE_NULL<<3) | GST_STATE_READY,

GST_STATE_CHANGE_READY_TO_PAUSED = (GST_STATE_READY<<3) | GST_STATE_PAUSED,

GST_STATE_CHANGE_PAUSED_TO_PLAYING = (GST_STATE_PAUSED<<3) | GST_STATE_PLAYING,

GST_STATE_CHANGE_PLAYING_TO_PAUSED = (GST_STATE_PLAYING<<3) | GST_STATE_PAUSED,

GST_STATE_CHANGE_PAUSED_TO_READY = (GST_STATE_PAUSED<<3) | GST_STATE_READY,

GST_STATE_CHANGE_READY_TO_NULL = (GST_STATE_READY<<3) | GST_STATE_NULL

} GstStateChange;
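The enum packs a (current, next) pair into one integer, three bits per state, and a jump such as NULL to PLAYING is broken into single steps. The sketch below reproduces both ideas in isolation. The `Toy*` names are hypothetical, and the numeric state values are assumed to mirror GstState in GStreamer 0.10 (worth checking against gstelement.h).

```c
/* Toy copies of the state values; assumed to mirror GstState in 0.10. */
typedef enum {
    TOY_STATE_VOID_PENDING = 0,
    TOY_STATE_NULL = 1,
    TOY_STATE_READY = 2,
    TOY_STATE_PAUSED = 3,
    TOY_STATE_PLAYING = 4
} ToyState;

/* Pack (current, next) into one integer, 3 bits per state. */
#define TOY_TRANSITION(cur, next)   (((cur) << 3) | (next))
#define TOY_TRANSITION_CURRENT(t)   ((t) >> 3)
#define TOY_TRANSITION_NEXT(t)      ((t) & 0x7)

/* The state one step closer to target: states are ordered, so move by
 * exactly one in the direction of the target. */
static ToyState toy_next_state(ToyState current, ToyState target)
{
    if (current < target) return (ToyState) (current + 1);
    if (current > target) return (ToyState) (current - 1);
    return current;
}

/* Walk from current to target, recording every single-step transition.
 * Returns the number of transitions written to out (at most max). */
static int toy_plan_transitions(ToyState current, ToyState target,
                                int *out, int max)
{
    int n = 0;
    while (current != target && n < max) {
        ToyState next = toy_next_state(current, target);
        out[n++] = TOY_TRANSITION(current, next);
        current = next;
    }
    return n;
}
```

Planning NULL to PLAYING with this helper yields three transitions, matching NULL_TO_READY, READY_TO_PAUSED and PAUSED_TO_PLAYING in the enum above.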

PlayBin therefore exposes the function interfaces of all these classes:

struct _GstPlayBinClass

{

GstPipelineClass parent_class;

void (*about_to_finish) (GstPlayBin * playbin);

void (*video_changed) (GstPlayBin * playbin);

void (*audio_changed) (GstPlayBin * playbin);

void (*text_changed) (GstPlayBin * playbin);

void (*video_tags_changed) (GstPlayBin * playbin, gint stream);

void (*audio_tags_changed) (GstPlayBin * playbin, gint stream);

void (*text_tags_changed) (GstPlayBin * playbin, gint stream);

GstTagList *(*get_video_tags) (GstPlayBin * playbin, gint stream);

GstTagList *(*get_audio_tags) (GstPlayBin * playbin, gint stream);

GstTagList *(*get_text_tags) (GstPlayBin * playbin, gint stream);

GstBuffer *(*convert_frame) (GstPlayBin * playbin, GstCaps * caps);

GstPad *(*get_video_pad) (GstPlayBin * playbin, gint stream);

GstPad *(*get_audio_pad) (GstPlayBin * playbin, gint stream);

GstPad *(*get_text_pad) (GstPlayBin * playbin, gint stream);

};

static void

gst_play_bin_class_init (GstPlayBinClass * klass)

{

GObjectClass *gobject_klass;

GstElementClass *gstelement_klass;

GstBinClass *gstbin_klass;

gobject_klass = (GObjectClass *) klass;

gstelement_klass = (GstElementClass *) klass;

gstbin_klass = (GstBinClass *) klass;

parent_class = g_type_class_peek_parent (klass);

gobject_klass->set_property = gst_play_bin_set_property;

gobject_klass->get_property = gst_play_bin_get_property;

gobject_klass->finalize = gst_play_bin_finalize;

klass->get_video_tags = gst_play_bin_get_video_tags;

klass->get_audio_tags = gst_play_bin_get_audio_tags;

klass->get_text_tags = gst_play_bin_get_text_tags;

klass->convert_frame = gst_play_bin_convert_frame;

klass->get_video_pad = gst_play_bin_get_video_pad;

klass->get_audio_pad = gst_play_bin_get_audio_pad;

klass->get_text_pad = gst_play_bin_get_text_pad;

gstelement_klass->change_state =

GST_DEBUG_FUNCPTR (gst_play_bin_change_state);

gstelement_klass->query = GST_DEBUG_FUNCPTR (gst_play_bin_query);

gstbin_klass->handle_message =

GST_DEBUG_FUNCPTR (gst_play_bin_handle_message);

}

Through the pipeline pointer and the element pointers, the application can call the class functions of each element, for example:

bus = gst_element_get_bus (pipeline);

gst_element_set_index (pipeline, index);

gst_element_set_state (pipeline, GST_STATE_PAUSED);

Creating a message:

// Create a new element-specific message

GstMessage *gst_message_new_element (GstObject * src, GstStructure * structure)

{

return gst_message_new_custom (GST_MESSAGE_ELEMENT, src, structure); // generic message constructor

}

All supported message types:

static GstMessageQuarks message_quarks[] = {

{GST_MESSAGE_UNKNOWN, "unknown", 0},

{GST_MESSAGE_EOS, "eos", 0},

{GST_MESSAGE_ERROR, "error", 0},

{GST_MESSAGE_WARNING, "warning", 0},

{GST_MESSAGE_INFO, "info", 0},

{GST_MESSAGE_TAG, "tag", 0},

{GST_MESSAGE_BUFFERING, "buffering", 0},

{GST_MESSAGE_STATE_CHANGED, "state-changed", 0},

{GST_MESSAGE_STATE_DIRTY, "state-dirty", 0},

{GST_MESSAGE_STEP_DONE, "step-done", 0},

{GST_MESSAGE_CLOCK_PROVIDE, "clock-provide", 0},

{GST_MESSAGE_CLOCK_LOST, "clock-lost", 0},

{GST_MESSAGE_NEW_CLOCK, "new-clock", 0},

{GST_MESSAGE_STRUCTURE_CHANGE, "structure-change", 0},

{GST_MESSAGE_STREAM_STATUS, "stream-status", 0},

{GST_MESSAGE_APPLICATION, "application", 0},

{GST_MESSAGE_ELEMENT, "element", 0},

{GST_MESSAGE_SEGMENT_START, "segment-start", 0},

{GST_MESSAGE_SEGMENT_DONE, "segment-done", 0},

{GST_MESSAGE_DURATION, "duration", 0},

{GST_MESSAGE_LATENCY, "latency", 0},

{GST_MESSAGE_ASYNC_START, "async-start", 0},

{GST_MESSAGE_ASYNC_DONE, "async-done", 0},

{GST_MESSAGE_REQUEST_STATE, "request-state", 0},

{GST_MESSAGE_STEP_START, "step-start", 0},

{GST_MESSAGE_QOS, "qos", 0},

{GST_MESSAGE_PROGRESS, "progress", 0},

{0, NULL, 0}

};

// Post a message on the bus

gboolean gst_bus_post (GstBus * bus, GstMessage * message)

Example:

s = gst_structure_new ("test_message", "msg_id", G_TYPE_INT, i, NULL);

m = gst_message_new_element (NULL, s);

GST_LOG ("posting element message");

gst_bus_post (test_bus, m);

In gst-launch the application handles the messages itself:

static EventLoopResult

event_loop (GstElement * pipeline, gboolean blocking, GstState target_state)

{

bus = gst_element_get_bus (GST_ELEMENT (pipeline));

while (TRUE) {

message = gst_bus_poll (bus, GST_MESSAGE_ANY, blocking ? -1 : 0); // fetch a message from the bus, then handle it

switch (GST_MESSAGE_TYPE (message)) {

case GST_MESSAGE_NEW_CLOCK:

{

GstClock *clock;

gst_message_parse_new_clock (message, &clock);

PRINT ("New clock: %s\n", (clock ? GST_OBJECT_NAME (clock) : "NULL"));

break;

}

case GST_MESSAGE_CLOCK_LOST:

PRINT ("Clock lost, selecting a new one\n");

gst_element_set_state (pipeline, GST_STATE_PAUSED);

gst_element_set_state (pipeline, GST_STATE_PLAYING);

break;

......

}

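Another way to handle messages:

Since every message travels to the application over the bus, the other option is to install a watch function on the bus that handles the messages the pipeline sends to the application: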

bus = gst_pipeline_get_bus (GST_PIPELINE (play));

gst_bus_add_watch (bus, my_bus_callback, loop);

gst_object_unref (bus);

static gboolean

my_bus_callback (GstBus *bus,

GstMessage *message,

gpointer data)

{

GMainLoop *loop = data;

switch (GST_MESSAGE_TYPE (message)) {

case GST_MESSAGE_ERROR: {

GError *err;

gchar *debug;

gst_message_parse_error (message, &err, &debug);

g_print ("Error: %s\n", err->message);

g_error_free (err);

g_free (debug);

g_main_loop_quit (loop);

break;

}

case GST_MESSAGE_EOS:

g_main_loop_quit (loop);

break;

.....

}
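Both approaches share one idea: elements post messages into a queue and the application drains it in order until a terminating message shows up. The sketch below models only that idea with a fixed-size FIFO. All `Toy*` names are hypothetical, and the real GstBus is thread-safe and integrates with the GLib main loop, which this single-threaded toy does not attempt.

```c
#include <stddef.h>

typedef enum { TOY_MSG_EOS, TOY_MSG_ERROR, TOY_MSG_STATE_CHANGED } ToyMsgType;

#define TOY_BUS_CAPACITY 16

/* A fixed-size FIFO standing in for the bus. */
typedef struct {
    ToyMsgType queue[TOY_BUS_CAPACITY];
    size_t head;   /* index of the oldest message */
    size_t count;  /* number of queued messages */
} ToyBus;

static void toy_bus_init(ToyBus *bus) { bus->head = 0; bus->count = 0; }

/* Element side: queue a message. Returns 0 if the bus is full. */
static int toy_bus_post(ToyBus *bus, ToyMsgType type)
{
    if (bus->count == TOY_BUS_CAPACITY) return 0;
    bus->queue[(bus->head + bus->count) % TOY_BUS_CAPACITY] = type;
    bus->count++;
    return 1;
}

/* Application side: take the oldest message. Returns 0 if the bus is empty. */
static int toy_bus_pop(ToyBus *bus, ToyMsgType *type)
{
    if (bus->count == 0) return 0;
    *type = bus->queue[bus->head];
    bus->head = (bus->head + 1) % TOY_BUS_CAPACITY;
    bus->count--;
    return 1;
}

/* Drain the bus in order and stop at the first EOS or ERROR, as the loops
 * above do. Returns how many messages were handled, including the one that
 * ended the loop; *last receives the type of the last handled message. */
static int toy_event_loop(ToyBus *bus, ToyMsgType *last)
{
    int handled = 0;
    ToyMsgType type;

    while (toy_bus_pop(bus, &type)) {
        handled++;
        *last = type;
        if (type == TOY_MSG_EOS || type == TOY_MSG_ERROR) break;
    }
    return handled;
}
```

Messages posted after the EOS stay queued, which corresponds to the application leaving its loop and no longer reading the bus.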

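3. playbin2

playbin2 is a pipeline driven by the application. A pipeline is also an element, so it contains a four-state state machine:

NULL, READY, PAUSED, PLAYING

Like any ordinary plugin element, playbin2 first has to implement the class functions that the application calls, and it keeps its state machine moving along the state transition graph automatically.

When it needs to communicate with the application, it posts a message, which reaches the application through the bus. How an element posts a message was covered in the previous section.

It can also define signals and bind handler functions to them (an event-based approach):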

enum

{

SIGNAL_ABOUT_TO_FINISH,

SIGNAL_CONVERT_FRAME,

SIGNAL_VIDEO_CHANGED,

SIGNAL_AUDIO_CHANGED,

SIGNAL_TEXT_CHANGED,

SIGNAL_VIDEO_TAGS_CHANGED,

SIGNAL_AUDIO_TAGS_CHANGED,

SIGNAL_TEXT_TAGS_CHANGED,

SIGNAL_GET_VIDEO_TAGS,

SIGNAL_GET_AUDIO_TAGS,

SIGNAL_GET_TEXT_TAGS,

SIGNAL_GET_VIDEO_PAD,

SIGNAL_GET_AUDIO_PAD,

SIGNAL_GET_TEXT_PAD,

LAST_SIGNAL

};

When a signal is emitted, its handler function is called automatically:

g_signal_emit (G_OBJECT (playbin), gst_play_bin_signals[signal], 0, NULL);

g_signal_emit (G_OBJECT (playbin),gst_play_bin_signals[SIGNAL_ABOUT_TO_FINISH], 0, NULL);

Binding a signal to a handler, method 1: in class_init():

gst_play_bin_signals[SIGNAL_GET_AUDIO_PAD] =

g_signal_new ("get-audio-pad", G_TYPE_FROM_CLASS (klass),

G_SIGNAL_RUN_LAST | G_SIGNAL_ACTION,

G_STRUCT_OFFSET (GstPlayBinClass, get_audio_pad ), NULL, NULL,

gst_play_marshal_OBJECT__INT, GST_TYPE_PAD, 1, G_TYPE_INT);

klass->get_audio_pad = gst_play_bin_get_audio_pad;

The other way to bind a signal to a handler (a callback function) is g_signal_connect:

group->pad_added_id = g_signal_connect (uridecodebin, "pad-added",

G_CALLBACK (pad_added_cb), group);

group->pad_removed_id = g_signal_connect (uridecodebin, "pad-removed",

G_CALLBACK (pad_removed_cb), group);

group->no_more_pads_id = g_signal_connect (uridecodebin, "no-more-pads",

G_CALLBACK (no_more_pads_cb), group);

group->notify_source_id = g_signal_connect (uridecodebin, "notify::source",

G_CALLBACK (notify_source_cb), group);
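The connect calls above store the returned handler id in the group so the handler can be disconnected later. The following sketch shows the bare mechanism: a table of (signal name, callback, user data) entries, an id per entry, and an emit function that calls every matching callback. Everything named `toy_*` or `Toy*` is hypothetical; GObject's real signal system is far richer (marshallers, return values, run stages).

```c
#include <string.h>

#define TOY_MAX_HANDLERS 8

typedef void (*ToyCallback)(void *instance, void *user_data);

typedef struct {
    const char *signal_name;  /* NULL marks a free slot */
    ToyCallback callback;
    void *user_data;
} ToyHandler;

typedef struct {
    ToyHandler handlers[TOY_MAX_HANDLERS];
} ToyObject;

static void toy_object_init(ToyObject *obj)
{
    int i;
    for (i = 0; i < TOY_MAX_HANDLERS; i++)
        obj->handlers[i].signal_name = NULL;
}

/* Returns a handler id greater than 0, or 0 when no slot is free. */
static unsigned long toy_signal_connect(ToyObject *obj, const char *name,
                                        ToyCallback cb, void *user_data)
{
    int i;
    for (i = 0; i < TOY_MAX_HANDLERS; i++) {
        if (obj->handlers[i].signal_name == NULL) {
            obj->handlers[i].signal_name = name;
            obj->handlers[i].callback = cb;
            obj->handlers[i].user_data = user_data;
            return (unsigned long) i + 1;
        }
    }
    return 0;
}

static void toy_signal_handler_disconnect(ToyObject *obj, unsigned long id)
{
    if (id >= 1 && id <= TOY_MAX_HANDLERS)
        obj->handlers[id - 1].signal_name = NULL;
}

/* Calls every handler connected to name; returns how many were called. */
static int toy_signal_emit(ToyObject *obj, const char *name)
{
    int i, called = 0;
    for (i = 0; i < TOY_MAX_HANDLERS; i++) {
        ToyHandler *h = &obj->handlers[i];
        if (h->signal_name != NULL && strcmp(h->signal_name, name) == 0) {
            h->callback(obj, h->user_data);
            called++;
        }
    }
    return called;
}

/* Example callback: counts how many pads were announced. */
static void toy_pad_added_cb(void *instance, void *user_data)
{
    (void) instance;
    (*(int *) user_data)++;
}
```

The user_data pointer plays the role of `group` in the playbin2 calls: it carries the per-connection context into the callback.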

playsink:

playbin2 loads playsink as its sink element.

static void gst_play_bin_init (GstPlayBin * playbin)

{

playbin->playsink = g_object_new (GST_TYPE_PLAY_SINK, NULL);

gst_bin_add (GST_BIN_CAST (playbin), GST_ELEMENT_CAST (playbin->playsink));

}

playsink is covered in a separate blog post.

It can handle both audio and video files and features

- automatic file type recognition and based on that automatic selection and usage of the right audio/video/subtitle demuxers/decoders

- visualisations for audio files

- subtitle support for video files. Subtitles can be stored in external files.

- stream selection between different video/audio/subtitles streams

- meta info (tag) extraction

- easy access to the last video frame

- buffering when playing streams over a network

- volume control with mute option

Usage

A playbin2 element can be created just like any other element using gst_element_factory_make(). The file/URI to play should be set via the "uri" property. This must be an absolute URI, relative file paths are not allowed. Example URIs are file:///home/joe/movie.avi or http://www.joedoe.com/foo.ogg

Playbin is a GstPipeline. It will notify the application of everything that's happening (errors, end of stream, tags found, state changes, etc.) by posting messages on its GstBus. The application needs to watch the bus.

Playback can be initiated by setting the element to PLAYING state using gst_element_set_state(). Note that the state change will take place in the background in a separate thread; when the function returns, playback is probably not happening yet and any errors might not have occurred yet. Applications using playbin should ideally be written to deal with things completely asynchronously.

When playback has finished (an EOS message has been received on the bus) or an error has occurred (an ERROR message has been received on the bus) or the user wants to play a different track, playbin should be set back to READY or NULL state, then the "uri" property should be set to the new location and then playbin be set to PLAYING state again.

Seeking can be done using gst_element_seek_simple() or gst_element_seek() on the playbin element. Again, the seek will not be executed instantaneously, but will be done in a background thread. When the seek call returns the seek will most likely still be in process. An application may wait for the seek to finish (or fail) using gst_element_get_state() with -1 as the timeout, but this will block the user interface and is not recommended at all.

Applications may query the current position and duration of the stream via gst_element_query_position() and gst_element_query_duration() and setting the format passed to GST_FORMAT_TIME. If the query was successful, the duration or position will have been returned in units of nanoseconds.

2. Code walkthrough

The code is in gst-plugins-base-0.10.30\gst\playback.

2.1 Data structures

// Instance data structure

struct _GstPlayBin

{

GstPipeline parent;

GStaticRecMutex lock;

GstSourceGroup groups[2];

GstSourceGroup *curr_group;

GstSourceGroup *next_group;

guint connection_speed;

gint current_video;

gint current_audio;

gint current_text;

guint64 buffer_duration;

guint buffer_size;

GstPlaySink *playsink;

GstElement *source;

GMutex *dyn_lock;

gint shutdown;

GMutex *elements_lock;

guint32 elements_cookie;

GList *elements;

gboolean have_selector;

GstElement *audio_sink;

GstElement *video_sink;

GstElement *text_sink;

struct

{

gboolean valid;

GstFormat format;

gint64 duration;

} duration[5];

guint64 ring_buffer_max_size;

};

struct _GstPipeline {

GstBin bin;

GstClock *fixed_clock;

GstClockTime stream_time;

GstClockTime delay;

GstPipelinePrivate *priv;

gpointer _gst_reserved[GST_PADDING-1];

};

struct _GstPipelineClass {

GstBinClass parent_class;

gpointer _gst_reserved[GST_PADDING];

};

// Class struct holding the function pointers

struct _GstPlayBinClass

{

GstPipelineClass parent_class; // playbin is a pipeline type

void (*about_to_finish) (GstPlayBin * playbin);

void (*video_changed) (GstPlayBin * playbin);

void (*audio_changed) (GstPlayBin * playbin);

void (*text_changed) (GstPlayBin * playbin);

void (*video_tags_changed) (GstPlayBin * playbin, gint stream);

void (*audio_tags_changed) (GstPlayBin * playbin, gint stream);

void (*text_tags_changed) (GstPlayBin * playbin, gint stream);

GstTagList *(*get_video_tags) (GstPlayBin * playbin, gint stream);

GstTagList *(*get_audio_tags) (GstPlayBin * playbin, gint stream);

GstTagList *(*get_text_tags) (GstPlayBin * playbin, gint stream);

GstBuffer *(*convert_frame) (GstPlayBin * playbin, GstCaps * caps);

GstPad *(*get_video_pad) (GstPlayBin * playbin, gint stream);

GstPad *(*get_audio_pad) (GstPlayBin * playbin, gint stream);

GstPad *(*get_text_pad) (GstPlayBin * playbin, gint stream);

};

How the classes above relate to each other:

struct _GstSourceGroup

{

GstPlayBin *playbin;

GMutex *lock;

gboolean valid;

gboolean active;

gchar *uri;

gchar *suburi;

GValueArray *streaminfo;

GstElement *source;

GPtrArray *video_channels;

GPtrArray *audio_channels;

GPtrArray *text_channels;

GstElement *audio_sink;

GstElement *video_sink;

GstElement *uridecodebin;

GstElement *suburidecodebin;

gint pending;

gboolean sub_pending;

gulong pad_added_id;

gulong pad_removed_id;

gulong no_more_pads_id;

gulong notify_source_id;

gulong drained_id;

gulong autoplug_factories_id;

gulong autoplug_select_id;

gulong autoplug_continue_id;

gulong sub_pad_added_id;

gulong sub_pad_removed_id;

gulong sub_no_more_pads_id;

gulong sub_autoplug_continue_id;

gulong block_id;

GMutex *stream_changed_pending_lock;

GList *stream_changed_pending;

GstSourceSelect selector[PLAYBIN_STREAM_LAST];

};

Initialization:

gst_play_bin_class_init (GstPlayBinClass * klass)

{

/* Mainly assigns the function pointers: */

gst_play_bin_set_property()/gst_play_bin_get_property()/gst_play_bin_finalize()/

gst_play_bin_get_video_tags()/gst_play_bin_get_audio_tags()/gst_play_bin_get_text_tags()

gst_play_bin_convert_frame()/gst_play_bin_get_video_pad()/gst_play_bin_get_audio_pad()

gst_play_bin_get_text_pad()/gst_play_bin_change_state()/gst_play_bin_query()/

gst_play_bin_handle_message()

/* In addition, the class declares the properties it supports: */

enum

{

PROP_0,

PROP_FLAGS,

PROP_MUTE,

PROP_VOLUME,

PROP_FONT_DESC,

PROP_SUBTITLE_ENCODING,

PROP_VIS_PLUGIN,

PROP_FRAME,

PROP_AV_OFFSET,

PROP_LAST

};

/* The class also defines several signals and their handler functions: */

gst_play_bin_signals[SIGNAL_VIDEO_CHANGED] =

g_signal_new ("video-changed", G_TYPE_FROM_CLASS (klass),

G_SIGNAL_RUN_LAST,

G_STRUCT_OFFSET (GstPlayBinClass, video_changed), NULL, NULL,

gst_marshal_VOID__VOID, G_TYPE_NONE, 0, G_TYPE_NONE);

enum

{

SIGNAL_ABOUT_TO_FINISH,

SIGNAL_CONVERT_FRAME,

SIGNAL_VIDEO_CHANGED,

SIGNAL_AUDIO_CHANGED,

SIGNAL_TEXT_CHANGED,

SIGNAL_VIDEO_TAGS_CHANGED,

SIGNAL_AUDIO_TAGS_CHANGED,

SIGNAL_TEXT_TAGS_CHANGED,

SIGNAL_GET_VIDEO_TAGS,

SIGNAL_GET_AUDIO_TAGS,

SIGNAL_GET_TEXT_TAGS,

SIGNAL_GET_VIDEO_PAD,

SIGNAL_GET_AUDIO_PAD,

SIGNAL_GET_TEXT_PAD,

SIGNAL_SOURCE_SETUP,

LAST_SIGNAL

};

}

In playbin's instance initialization:

gst_play_bin_init (GstPlayBin * playbin)

{

// Two groups are set up so that playback can switch between them (ping-pong configuration)

GstSourceGroup groups[2];

GstSourceGroup *curr_group;

GstSourceGroup *next_group;

}

The functions behind the function pointers defined above are then implemented.

static void gst_play_bin_set_property (GObject * object, guint prop_id,

const GValue * value, GParamSpec * pspec)

{

/* Each property has its own setter, which stores the value in the group
 * data structure as part of the pipeline configuration. */

switch (prop_id) {

case PROP_URI:

gst_play_bin_set_uri (playbin, g_value_get_string (value));

break;

case PROP_SUBURI:

gst_play_bin_set_suburi (playbin, g_value_get_string (value));

break;

....

}

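Core function:

// State machine control: transitions among the four states NULL/READY/PAUSED/PLAYING. Going from NULL to PLAYING

// passes through READY and PAUSED; these intermediate states and transitions are handled automatically.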

static GstStateChangeReturn gst_play_bin_change_state (GstElement * element, GstStateChange transition)

{

// Mainly handles the two groups according to the state machine and drives the state of each element in the pipeline

switch (transition) {

case GST_STATE_CHANGE_NULL_TO_READY:

memset (&playbin->duration, 0, sizeof (playbin->duration));

break;

case GST_STATE_CHANGE_READY_TO_PAUSED:

GST_LOG_OBJECT (playbin, "clearing shutdown flag");

memset (&playbin->duration, 0, sizeof (playbin->duration));

g_atomic_int_set (&playbin->shutdown, 0);

if (!setup_next_source (playbin, GST_STATE_READY)) {

ret = GST_STATE_CHANGE_FAILURE;

goto failure;

}

break;

......

}

Each state has its own requirements: in the READY state all required resources must have been allocated, which includes creating the elements.

setup_next_source()->activate_group()->

static gboolean

activate_group (GstPlayBin * playbin, GstSourceGroup * group, GstState target)

{

// If an audio/video sink was set through a property, use it directly

if (playbin->audio_sink)

group->audio_sink = gst_object_ref (playbin->audio_sink);

if (playbin->video_sink)

group->video_sink = gst_object_ref (playbin->video_sink);

// Create uridecodebin and add it to playbin

uridecodebin = gst_element_factory_make ("uridecodebin", NULL);

if (!uridecodebin)

goto no_decodebin;

gst_bin_add (GST_BIN_CAST (playbin), uridecodebin);

group->uridecodebin = gst_object_ref (uridecodebin);

// Connect signal handlers on the decodebin

group->pad_added_id = g_signal_connect (uridecodebin, "pad-added",

G_CALLBACK (pad_added_cb), group);

group->pad_removed_id = g_signal_connect (uridecodebin, "pad-removed",

G_CALLBACK (pad_removed_cb), group);

group->no_more_pads_id = g_signal_connect (uridecodebin, "no-more-pads",

G_CALLBACK (no_more_pads_cb), group);

group->notify_source_id = g_signal_connect (uridecodebin, "notify::source",

G_CALLBACK (notify_source_cb), group);

group->drained_id =

g_signal_connect (uridecodebin, "drained", G_CALLBACK (drained_cb),

group);

group->autoplug_factories_id =

g_signal_connect (uridecodebin, "autoplug-factories",

G_CALLBACK (autoplug_factories_cb), group);

group->autoplug_select_id =

g_signal_connect (uridecodebin, "autoplug-select",

G_CALLBACK (autoplug_select_cb), group);

group->autoplug_continue_id =

g_signal_connect (uridecodebin, "autoplug-continue",

G_CALLBACK (autoplug_continue_cb), group);

}

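Inside playbin, communication and processing are driven by signals. Internally it loads the matching plugins according to the media type (the type_found() function) to assemble the pipeline.

Note:

playbin is itself a pipeline, so the user does not need to create another pipeline around it.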

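#include <gst/gst.h>

gint
main (gint argc, gchar * argv[])
{
  GstElement *player;
  GstStateChangeReturn res;

  gst_init (&argc, &argv);

  /* The URI is taken from the first command-line argument. */
  if (argc < 2) {
    g_print ("usage: %s <uri>\n", argv[0]);
    return -1;
  }

  player = gst_element_factory_make ("playbin", "player");
  g_assert (player);

  g_object_set (G_OBJECT (player), "uri", argv[1], NULL);

  /* The state change normally completes asynchronously, so set_state() may
   * return GST_STATE_CHANGE_ASYNC; only GST_STATE_CHANGE_FAILURE means that
   * playback could not be started. */
  res = gst_element_set_state (player, GST_STATE_PLAYING);
  if (res == GST_STATE_CHANGE_FAILURE) {
    g_print ("could not play\n");
    return -1;
  }

  g_main_loop_run (g_main_loop_new (NULL, TRUE));

  return 0;
}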

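Question 1: the early playbin required the user to specify the type of each element inside playbin, while the new playbin does not. So what does it base the creation of the pipeline's elements on?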

DecodeBin:

struct _GstURIDecodeBin

{

GstBin parent_instance;

GMutex *lock;

GMutex *factories_lock;

guint32 factories_cookie;

GList *factories;

gchar *uri;

guint connection_speed;

GstCaps *caps;

gchar *encoding;

gboolean is_stream;

gboolean is_download;

gboolean need_queue;

guint64 buffer_duration;

guint buffer_size;

gboolean download;

gboolean use_buffering;

GstElement *source;

GstElement *queue;

GstElement *typefind;

guint have_type_id;

GSList *decodebins;

GSList *pending_decodebins;

GHashTable *streams;

gint numpads;

guint src_np_sig_id;

guint src_nmp_sig_id;

gint pending;

gboolean async_pending;

gboolean expose_allstreams;

guint64 ring_buffer_max_size;

};

struct _GstURIDecodeBinClass

{

GstBinClass parent_class;

void (*unknown_type) (GstElement * element, GstPad * pad, GstCaps * caps);

gboolean (*autoplug_continue) (GstElement * element, GstPad * pad,

GstCaps * caps);

GValueArray *(*autoplug_factories) (GstElement * element, GstPad * pad,

GstCaps * caps);

GValueArray *(*autoplug_sort) (GstElement * element, GstPad * pad,

GstCaps * caps, GValueArray * factories);

GstAutoplugSelectResult (*autoplug_select) (GstElement * element,

GstPad * pad, GstCaps * caps, GstElementFactory * factory);

void (*drained) (GstElement * element);

};

Definition of the playback plugin:

static gboolean

plugin_init (GstPlugin * plugin)

{

gboolean res;

gst_pb_utils_init ();

#ifdef ENABLE_NLS

GST_DEBUG ("binding text domain %s to locale dir %s", GETTEXT_PACKAGE,

LOCALEDIR);

bindtextdomain (GETTEXT_PACKAGE, LOCALEDIR);

bind_textdomain_codeset (GETTEXT_PACKAGE, "UTF-8");

#endif

g_type_class_ref (GST_TYPE_STREAM_SELECTOR);

res = gst_play_bin2_plugin_init (plugin);

res &= gst_play_sink_plugin_init (plugin);

res &= gst_subtitle_overlay_plugin_init (plugin);

res &= gst_decode_bin_plugin_init (plugin);

res &= gst_uri_decode_bin_plugin_init (plugin);

return res;

}

GST_PLUGIN_DEFINE (GST_VERSION_MAJOR,

GST_VERSION_MINOR,

"playback",

"various playback elements", plugin_init, VERSION, GST_LICENSE,

GST_PACKAGE_NAME, GST_PACKAGE_ORIGIN)

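This declares a plugin whose initialization calls the element registration functions of decodebin, playbin2, playsink, subtitle_overlay and so on.

Summary: an element is really built around two structs. One struct holds the element's instance data; the other holds pointers to the member functions the element supports. Implementing an element essentially means implementing those functions.

The code contains lines like this: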

result = GST_ELEMENT_CLASS (parent_class)->change_state (element, transition);

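Here parent_class is the file-level pointer that class_init() obtained with g_type_class_peek_parent(), that is, the class struct of the parent type. GST_ELEMENT_CLASS (parent_class) casts that pointer to GstElementClass *, so the line calls the parent class's change_state implementation (chaining up). The cast is valid because every class struct embeds its parent as its first member: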

struct _GstElementClass

{

GstObjectClass parent_class; // parent class

.....

}
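The `GST_ELEMENT_CLASS (parent_class)->change_state (element, transition)` line quoted above is the usual GObject way of chaining up: an overriding method does its own work and then calls the implementation stored in the parent's class struct. The sketch below shows that pattern without GObject. The `Toy*` types, the trace buffer, and the tag strings are all hypothetical and exist only to make the call order visible.

```c
#include <string.h>

typedef struct ToyElementClass {
    /* Appends a tag to trace so that the call order is visible. */
    void (*change_state)(char *trace, size_t size);
} ToyElementClass;

typedef struct ToyPlayBinClass {
    ToyElementClass parent_class;   /* first member, as in the structs above */
} ToyPlayBinClass;

/* Set during class_init, like the static parent_class pointer in an element's
 * source file. */
static ToyElementClass *parent_class = NULL;

/* Append tag to trace if it still fits in a buffer of the given size. */
static void toy_trace(char *trace, size_t size, const char *tag)
{
    if (strlen(trace) + strlen(tag) + 1 <= size)
        strcat(trace, tag);
}

/* The parent's implementation of the virtual method. */
static void toy_element_change_state(char *trace, size_t size)
{
    toy_trace(trace, size, "[element]");
}

/* The parent's own class struct, holding the parent's implementation. */
static ToyElementClass toy_element_class = { toy_element_change_state };

/* The override: do our part, then chain up to the parent's implementation. */
static void toy_play_bin_change_state(char *trace, size_t size)
{
    toy_trace(trace, size, "[playbin:before]");
    parent_class->change_state(trace, size);
    toy_trace(trace, size, "[playbin:after]");
}

static void toy_play_bin_class_init(ToyPlayBinClass *klass)
{
    ToyElementClass *element_klass = (ToyElementClass *) klass;

    /* Stand-in for g_type_class_peek_parent (klass). */
    parent_class = &toy_element_class;
    element_klass->change_state = toy_play_bin_change_state;
}
```

Calling through the derived class's slot runs the override, which in turn runs the parent's code in the middle. This is how an element's change_state can add its own setup and teardown around the base-class behaviour.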

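Part 2: how the playback plugin works

Command: gst-launch -v playbin2 uri=file:///xxx.avi

A complete player needs the following parts:

How does playbin obtain these parts, including the types of the parser and the codec?

The "Plugin Writer's Guide" has a chapter called "Pad caps negotiation":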

Caps negotiation is the process where elements configure themselves and each other for

streaming a particular media format over their pads

Note first that caps belong to pads, not to elements.

struct _GstCaps {

GType type;

gint refcount;

GstCapsFlags flags;

GPtrArray *structs;

gpointer _gst_reserved[GST_PADDING];

};

上面的定义表示element可以根据pad上的media type来配置自己。比如某个parser element支持多个格式

包括AVI/MKV/MP4/FLV等,那么该parser element需要配置成哪种格式取决于当前播放的是什么格式的

文件,因此需要根据传输过来的文件格式来确定当前element需要配置成哪种格式,同样对codec也有

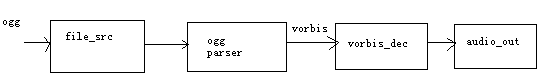

类似的要求。如下面的audio player的例子:

在上面的例子中,ogg_parser的src_pad 需要根据parser出来的audio标准来确定,当parser出来的是

vorbis音频数据时,在ogg_parser的srcpad上设置caps=vorbis,而vorbis_dec的sinkPad上需要能

接收vorbis数据。

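Some elements support multiple caps, for example a decoder that supports several standards, so a "caps negotiation" process is needed to decide which caps to use at the moment. A pad can also be set to fixed caps, in which case only that one caps can be used on the pad and no negotiation is needed.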
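Reduced to its core, negotiation between two linked pads means finding a format that both sides accept. The sketch below treats caps as plain lists of media-type strings and picks the first common entry. This is a deliberate simplification: real GstCaps also carry fields such as rate or width, and the function names here are hypothetical.

```c
#include <stddef.h>
#include <string.h>

/* Returns the first media type in src_caps that sink_caps also accepts, or
 * NULL when the two pads have nothing in common. Both lists end with NULL. */
static const char *toy_negotiate(const char *const *src_caps,
                                 const char *const *sink_caps)
{
    size_t i, j;

    for (i = 0; src_caps[i] != NULL; i++)
        for (j = 0; sink_caps[j] != NULL; j++)
            if (strcmp(src_caps[i], sink_caps[j]) == 0)
                return src_caps[i];
    return NULL;
}

/* A pad with fixed caps offers exactly one format, so "negotiation" reduces
 * to asking whether the peer accepts that format. Returns 1 or 0. */
static int toy_accepts(const char *const *caps, const char *media_type)
{
    size_t i;

    for (i = 0; caps[i] != NULL; i++)
        if (strcmp(caps[i], media_type) == 0)
            return 1;
    return 0;
}
```

With a parser src pad offering only `audio/x-vorbis` and a decoder sink pad accepting `audio/mpeg` and `audio/x-vorbis`, the result is `audio/x-vorbis`; against a video-only sink the result is NULL and the link would fail.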

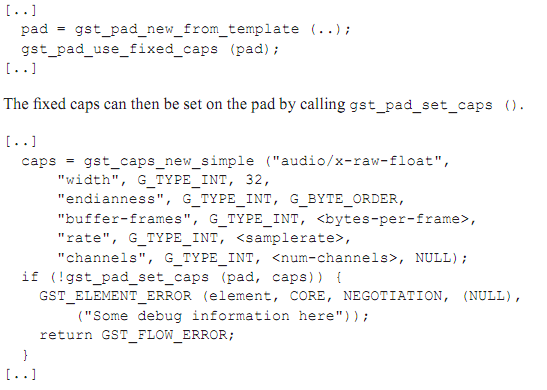

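1. Fixed caps

Whether a pad uses fixed caps is set on the pad by calling gst_pad_use_fixed_caps():

2. Downstream caps negotiation

There are two cases when setting caps on a source pad:

2.1 The caps for the srcpad are provided by the element's sinkpad (sinkpad and srcpad have the same caps)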

When renegotiation takes place, some may merely need to "forward" the renegotiation

backwards upstream. For those elements, all (downstream) caps negotiation can be done in

something that we call the _setcaps () function. This function is called when a buffer

is pushed over a pad, but the format on this buffer is not the same as the format that

was previously negotiated.

There may also be cases where the filter actually is able to change the format of the

stream. In those cases, it will negotiate a new format.

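As the description above shows, _setcaps() is called to reset the caps to match the buffer's format only when a data buffer has already been pushed to the pad and the format carried by the buffer differs from the previously negotiated caps.

2.2 The element's sinkpad and srcpad have different caps, which therefore have to be set explicitly

For example, the sinkpad of a videodec element carries a compressed bitstream while its output is in YUV format, so the caps differ. The sinkpad's caps cannot simply be applied to the srcpad as in the previous case.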

gboolean gst_pad_set_caps (GstPad * pad, GstCaps * caps)

{

setcaps = GST_PAD_SETCAPSFUNC (pad); // pointer to the setcaps() function registered on the pad

setcaps (pad, caps);

}

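3. Upstream caps negotiation

An example is video display: different screen resolutions require the decoded YUV resolution to change accordingly (assuming the decoder includes post-processing). Here the negotiation is a requirement that a change in the downstream element places on the upstream element.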

Upstream caps renegotiation is done in the gst_pad_alloc_buffer ()-function.

An element requesting a buffer from downstream has to specify the type of that buffer. If

renegotiation is to take place, this type will no longer apply, and the downstream element will

set a new caps on the provided buffer. The element should then reconfigure itself to push

buffers with the returned caps. The source pad’s setcaps will be called once the buffer is

pushed.

This negotiation is implemented in gst_pad_alloc_buffer().

Some caps-related functions:

GstCaps * gst_pad_get_negotiated_caps (GstPad * pad)

{

caps = GST_PAD_CAPS (pad);//#define GST_PAD_CAPS(pad) (GST_PAD_CAST(pad)->caps)

}

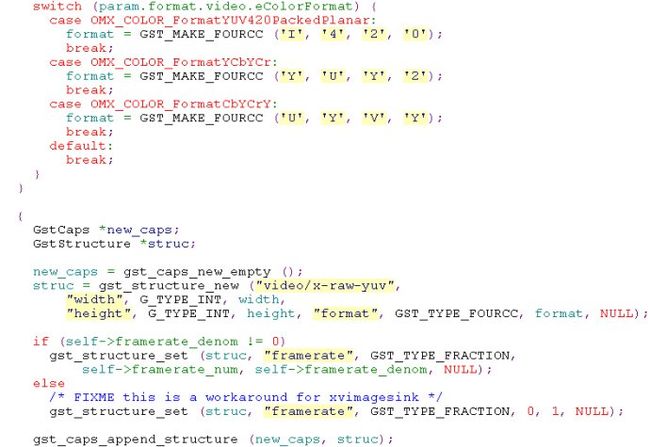

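If no caps has been set, a function has to be provided to set it. Below is the definition in gstomx_base_videodec.c:

In this example, if no caps has been set or the caps changes, the setcaps function (a callback) provided by the element can be called to set it. It mainly sets up the structure data.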

outloop():

if (G_LIKELY (self->in_port->enabled)) {

GstCaps *caps = NULL;

caps = gst_pad_get_negotiated_caps (self->srcpad);

if (!caps) { // no caps has been set yet, so go and set one

GST_WARNING_OBJECT (self, "faking settings changed notification");

if (gomx->settings_changed_cb)

gomx->settings_changed_cb (gomx);

} else {

GST_LOG_OBJECT (self, "caps already fixed: %" GST_PTR_FORMAT, caps);

gst_caps_unref (caps);

}

}

Next, how playbin2 determines the elements and the caps on their pads:

The playbin2 code has the group data structure; init_group() initializes the media_list of several selectors for video, audio and subtitles.

The selector data structure:

struct _GstSourceSelect

{

const gchar *media_list[8];

SourceSelectGetMediaCapsFunc get_media_caps;

GstPlaySinkType type;

GstElement *selector;

GPtrArray *channels;

GstPad *srcpad;

GstPad *sinkpad;

gulong src_event_probe_id;

gulong sink_event_probe_id;

GstClockTime group_start_accum;

};

The audio, video and text sinks can be set through properties (PROP_VIDEO_SINK/PROP_AUDIO_SINK/PROP_TEXT_SINK).

activate_group() creates the uridecodebin:

{

uridecodebin = gst_element_factory_make ("uridecodebin", NULL);

if (!uridecodebin)

goto no_decodebin;

gst_bin_add (GST_BIN_CAST (playbin), uridecodebin);

group->pad_added_id = g_signal_connect (uridecodebin, "pad-added",

G_CALLBACK (pad_added_cb), group);

}

Overall, elements are found through the caps set on pads, as several playbin functions show:

The function gst_factory_list_filter() uses caps to find the elements that can handle those caps.
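As a rough model of what filtering factories by caps means, the sketch below keeps a table of factories, each with one accepted media type and a rank, collects those matching a given media type, and then picks the highest-ranked candidate. The `ToyFactory` type, its fields and both functions are hypothetical; the real function works on GstElementFactory objects and full GstCaps, and the rank-based pick is an assumption about how a caller might choose among the matches.

```c
#include <stddef.h>
#include <string.h>

typedef struct {
    const char *name;       /* factory name */
    const char *sink_caps;  /* the one media type accepted on the sink pad */
    int rank;               /* higher rank is preferred */
} ToyFactory;

/* Collects pointers to every factory whose sink caps equal media_type,
 * preserving table order. Returns the number of matches stored in out
 * (at most max). */
static size_t toy_factory_list_filter(const ToyFactory *factories, size_t n,
                                      const char *media_type,
                                      const ToyFactory **out, size_t max)
{
    size_t i, found = 0;

    for (i = 0; i < n && found < max; i++)
        if (strcmp(factories[i].sink_caps, media_type) == 0)
            out[found++] = &factories[i];
    return found;
}

/* Picks the highest-ranked candidate; the first one wins a tie.
 * Returns NULL for an empty list. */
static const ToyFactory *toy_factory_pick(const ToyFactory *const *candidates,
                                          size_t n)
{
    const ToyFactory *best = NULL;
    size_t i;

    for (i = 0; i < n; i++)
        if (best == NULL || candidates[i]->rank > best->rank)
            best = candidates[i];
    return best;
}
```

An empty result from the filter step corresponds to the case where no installed plugin can handle the stream.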

The following function also uses caps to determine the element.

From the playbin2 documentation:

Advanced Usage: specifying the audio and video sink

By default, if no audio sink or video sink has been specified via the "audio-sink" or "video-sink" property, playbin will use the autoaudiosink and autovideosink elements to find the first-best available output method. This should work in most cases, but is not always desirable. Often either the user or application might want to specify more explicitly what to use for audio and video output. (If the user does not specify an audio sink and a video sink, playbin looks for the most suitable sinks on its own; it is better for the user to specify them.)

If the application wants more control over how audio or video should be output, it may create the audio/video sink elements itself (for example using gst_element_factory_make()) and provide them to playbin using the "audio-sink" or "video-sink" property.

GNOME-based applications, for example, will usually want to create gconfaudiosink and gconfvideosink elements and make playbin use those, so that output happens to whatever the user has configured in the GNOME Multimedia System Selector configuration dialog.

The sink elements do not necessarily need to be ready-made sinks. It is possible to create container elements that look like a sink to playbin, but in reality contain a number of custom elements linked together. This can be achieved by creating a GstBin and putting elements in there and linking them, and then creating a sink GstGhostPad for the bin and pointing it to the sink pad of the first element within the bin. This can be used for a number of purposes, for example to force output to a particular format or to modify or observe the data before it is output.

It is also possible to 'suppress' audio and/or video output by using 'fakesink' elements (or capture it from there using the fakesink element's "handoff" signal, which, nota bene, is fired from the streaming thread!).