Linear Regression & Ridge Regression的matlab实现

背景与原理:

给定一些离散点,利用线性回归过程获取多项式逼近拟合回归曲线。

Polynomial Curve Fitting

其中

a是需要求的位置系数。

于是根据mean-squared error,我们需要最小化

即minimize

其中RSS意为Residual sum of squares.

我们为方便起见,将参数与变量归整化为矩阵形式

需要做的就是求Js(a)的最小值。

根据梯度下降法,我们有

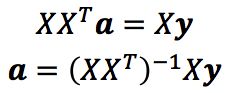

取deltaJs = 0,则有

此处XX'根据矩阵相乘秩<=秩(X) and 秩(X'),当样本数大于特征数时,(XX')非奇异。

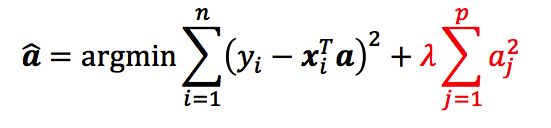

以上方法会有overfitting的问题,为了增加过拟合的penalty,加上一个累积参数

于是有

此时系数矩阵a为

应用:

利用该方法获得线性分类器。

实现代码:

Linear Regression:

function w = linear_regression(X, y) %LINEAR_REGRESSION Linear Regression. % % INPUT: X: training sample features, P-by-N matrix. % y: training sample labels, 1-by-N row vector. % % OUTPUT: w: learned perceptron parameters, (P+1)-by-1 column vector. % % YOUR CODE HERE [P, N] = size(X); X = [ones(1,N);X]; a = inv(X*X')*X*y'; w = a; end

function w = ridge(X, y, lambda) %RIDGE Ridge Regression. % % INPUT: X: training sample features, P-by-N matrix. % y: training sample labels, 1-by-N row vector. % lambda: regularization parameter. % % OUTPUT: w: learned parameters, (P+1)-by-1 column vector. % % YOUR CODE HERE [P, N] = size(X); X = [ones(1,N);X]; a = inv(X*X'+lambda*eye(P+1))*X*y'; w = a; end

顶层调用模块:

%% Part1: Linear Regression

nRep = 1000; % number of replicates

nTrain = 100; % number of training data

nTest = 1000;

total_iter = 0;

enTrain = 0; % error number of train set

enTest = 0; % error number of test set

E_train = 0; % error rate of train set

E_test = 0; % error rate of test set

for i = 1:nRep

% [X, y, w_f] = mkdata(nTrain);

% w_g = linear_regression(X, y);

% Compute training, testing error

[X, y, w_f] = mkdata(nTrain+nTest);

X_train = X( : , 1:nTrain );

y_train = y( 1 , 1:nTrain ); % true label

X_test = X( : , (nTrain+1):(nTrain+nTest) );

y_test = y( 1 , (nTrain+1):(nTrain+nTest) ); % true label

w_g = linear_regression(X_train, y_train);

[error_rate_train,error_rate_test] = error_rate(w_g, X_train, ...

X_test, y_train, ...

y_test, nTrain, nTest);

E_train = E_train + error_rate_train;

E_test = E_test + error_rate_test;

end

E_train = E_train / nRep;

E_test = E_test / nRep;

fprintf('E_train is %f, E_test is %f.\n', E_train, E_test);

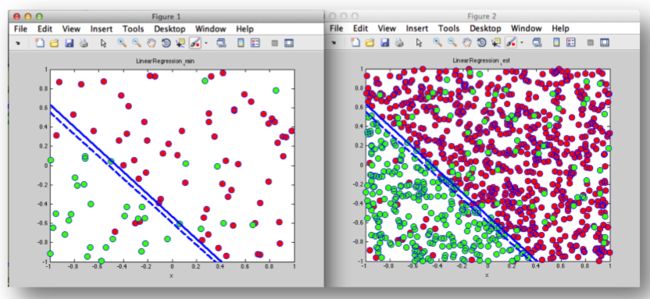

plotdata(X_train, y_train, w_f, w_g, 'Linear Regression_train');

plotdata(X_test, y_test, w_f, w_g, 'Linear Regression_test');

%fprintf('E_train is %f, E_test is %f.\n', E_train, E_test);

%plotdata(X, y, w_f, w_g, 'Linear Regression');

%% Part2: Linear Regression with noisy data

nRep = 1000; % number of replicates

nTrain = 100; % number of training data

nTest = 1000;

total_iter = 0;

enTrain = 0; % error number of train set

enTest = 0; % error number of test set

E_train = 0; % error rate of train set

E_test = 0; % error rate of test set

% for i = 1:nRep

% [X, y, w_f] = mkdata(nTrain, 'noisy');

% w_g = linear_regression(X, y);

% % Compute training, testing error

% end

for i = 1:nRep

% [X, y, w_f] = mkdata(nTrain);

% w_g = linear_regression(X, y);

% Compute training, testing error

[X, y, w_f] = mkdata(nTrain+nTest, 'noisy');

X_train = X( : , 1:nTrain );

y_train = y( 1 , 1:nTrain ); % true label

X_test = X( : , (nTrain+1):(nTrain+nTest) );

y_test = y( 1 , (nTrain+1):(nTrain+nTest) ); % true label

w_g = linear_regression(X_train, y_train);

[error_rate_train,error_rate_test] = error_rate(w_g, X_train, ...

X_test, y_train, ...

y_test, nTrain, nTest);

E_train = E_train + error_rate_train;

E_test = E_test + error_rate_test;

end

E_train = E_train / nRep;

E_test = E_test / nRep;

fprintf('E_train is %f, E_test is %f.\n', E_train, E_test);

plotdata(X_train, y_train, w_f, w_g, 'Linear Regression_train');

plotdata(X_test, y_test, w_f, w_g, 'Linear Regression_test');

%fprintf('E_train is %f, E_test is %f.\n', E_train, E_test);

% plotdata(X, y, w_f, w_g, 'Linear Regression: noisy');

function [X, y, w] = mkdata(N, noisy)

%MKDATA Generate data set.

%

% INPUT: N: number of samples.

% noisy: if or not add noise to y.

%

% OUTPUT: X: sample features, P-by-N matrix.

% y: sample labels, 1-by-N row vector.

% w: target function parameters, (P+1)-by-1 column vector.

%

range = [-1, 1];

dim = 2;

X = rand(dim, N)*(range(2)-range(1)) + range(1);

while true

Xsample = [ones(1, dim); rand(dim, dim)*(range(2)-range(1)) + range(1)];

w = null(Xsample', 'r');

y = sign(w'*[ones(1, N); X]);

if all(y)

break;

end

end

if nargin == 2

if noisy == 'noisy'

idx = randsample(N,N/10);

y(idx) = -y(idx);

end

end

end

绘图模块:

function plotdata(X, y, wf, wg, desc)

%PLOTDATA Plot data set.

%

% INPUT: X: sample features, P-by-N matrix.

% y: sample labels, 1-by-N row vector.

% wf: true target function parameters, (P+1)-by-1 column vector.

% wg: learnt target function parameters, (P+1)-by-1 column vector.

% desc: title of figure.

%

figure;

if size(X, 1) ~= 2

disp('WTF');

return;

end

plot(X(1, y == 1), X(2, y == 1), 'o', 'MarkerFaceColor', 'r', ...

'MarkerSize', 10);

hold on;

plot(X(1, y == -1), X(2, y == -1), 'o', 'MarkerFaceColor', 'g', ...

'MarkerSize', 10);

hold on;

f = ezplot(@(x) (-wf(2)/wf(3))*x-wf(1)/wf(3), [-1 1 -1 1]);

set(f, 'Color', 'b', 'LineWidth', 2, 'LineStyle', '-');

hold on;

g = ezplot(@(x) (-wg(2)/wg(3))*x-wg(1)/wg(3), [-1 1 -1 1]);

set(g, 'Color', 'b', 'LineWidth', 2, 'LineStyle', '--');

title(desc);

hold off;

end

Linear Regression结果:训练集错误率3.7%,测试集错误率4.7%

带噪声的训练、测试结果: