Hadoop 提取KPI 进行海量Web日志分析

Hadoop 提取KPI 进行海量Web日志分析

Web日志包含着网站最重要的信息,通过日志分析,我们可以知道网站的访问量,哪个网页访问人数最多,哪个网页最有价值等。一般中型的网站(10W的PV以上),每天会产生1G以上Web日志文件。大型或超大型的网站,可能每小时就会产生10G的数据量。

- Web日志分析概述

- 需求分析:KPI指标设计

- 算法模型:Hadoop并行算法

- 架构设计:日志KPI系统架构

- 程序开发:MapReduce程序实现

1. Web日志分析概述

Web日志由Web服务器产生,可能是Nginx, Apache, Tomcat等。从Web日志中,我们可以获取网站每类页面的PV值(PageView,页面访问量)、独立IP数;稍微复杂一些的,可以计算得出用户所检索的关键词排行榜、用户停留时间最高的页面等;更复杂的,构建广告点击模型、分析用户行为特征等等。

在Web日志中,每条日志通常代表着用户的一次访问行为,例如下面就是一条nginx日志:

222.68.172.190 - - [18/Sep/2013:06:49:57 +0000] "GET /images/my.jpg HTTP/1.1" 200 19939

"http://www.angularjs.cn/A00n" "Mozilla/5.0 (Windows NT 6.1)

AppleWebKit/537.36 (KHTML, like Gecko) Chrome/29.0.1547.66 Safari/537.36"拆解为以下8个变量

- remote_addr: 记录客户端的ip地址, 222.68.172.190

- remote_user: 记录客户端用户名称, –

- time_local: 记录访问时间与时区, [18/Sep/2013:06:49:57 +0000]

- request: 记录请求的url与http协议, “GET /images/my.jpg HTTP/1.1”

- status: 记录请求状态,成功是200, 200

- body_bytes_sent: 记录发送给客户端文件主体内容大小, 19939

- http_referer: 用来记录从那个页面链接访问过来的, “http://www.angularjs.cn/A00n”

- http_user_agent: 记录客户浏览器的相关信息, “Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/29.0.1547.66 Safari/537.36”

注:要更多的信息,则要用其它手段去获取,通过js代码单独发送请求,使用cookies记录用户的访问信息。

利用这些日志信息,我们可以深入挖掘网站的秘密了。

少量数据的情况

少量数据的情况(10Mb,100Mb,10G),在单机处理尚能忍受的时候,我可以直接利用各种Unix/Linux工具,awk、grep、sort、join等都是日志分析的利器,再配合perl, python,正则表达工,基本就可以解决所有的问题。

例如,我们想从上面提到的nginx日志中得到访问量最高前10个IP,实现很简单:

~ cat access.log.10 | awk '{a[$1]++} END {for(b in a) print b"\t"a[b]}' | sort -k2 -r | head -n 10

163.177.71.12 972

101.226.68.137 972

183.195.232.138 971

50.116.27.194 97

14.17.29.86 96

61.135.216.104 94

61.135.216.105 91

61.186.190.41 9

59.39.192.108 9

220.181.51.212 9海量数据的情况

当数据量每天以10G、100G增长的时候,单机处理能力已经不能满足需求。我们就需要增加系统的复杂性,用计算机集群,存储阵列来解决。在Hadoop出现之前,海量数据存储,和海量日志分析都是非常困难的。只有少数一些公司,掌握着高效的并行计算,分步式计算,分步式存储的核心技术。

Hadoop的出现,大幅度的降低了海量数据处理的门槛,让小公司甚至是个人都能力,搞定海量数据。并且,Hadoop非常适用于日志分析系统。

2.需求分析:KPI指标设计

下面我们将从一个公司案例出发来全面的解释,如何用进行 海量Web日志分析,提取KPI数据 。

案例介绍

某电子商务网站,在线团购业务。每日PV数100w,独立IP数5w。用户通常在工作日上午10:00-12:00和下午15:00-18:00访问量最大。日间主要是通过PC端浏览器访问,休息日及夜间通过移动设备访问较多。网站搜索浏量占整个网站的80%,PC用户不足1%的用户会消费,移动用户有5%会消费。

通过简短的描述,我们可以粗略地看出,这家电商网站的经营状况,并认识到愿意消费的用户从哪里来,有哪些潜在的用户可以挖掘,网站是否存在倒闭风险等。

KPI指标设计

- PV(PageView): 页面访问量统计

- IP: 页面独立IP的访问量统计

- Time: 用户每小时PV的统计

- Source: 用户来源域名的统计

- Browser: 用户的访问设备统计

从商业的角度,个人网站的特征与电商网站不太一样,没有转化率,同时跳出率也比较高。从技术的角度,同样都关注KPI指标设计。

3.算法模型:Hadoop并行算法

并行算法的设计:

PV(PageView): 页面访问量统计

Map过程{key:request,value:1}

Reduce过程{key:request,value:求和(sum)}

IP: 页面独立IP的访问量统计

Map: {key:request,value:remote_addr}

Reduce: {key:request,value:去重再求和(sum(unique))}

Time: 用户每小时PV的统计

Map: {key:time_local,value:1}

Reduce: {key:time_local,value:求和(sum)}

Source: 用户来源域名的统计

Map: {key:http_referer,value:1}

Reduce: {key:http_referer,value:求和(sum)}

Browser: 用户的访问设备统计

Map: {key:http_user_agent,value:1}

Reduce: {key:http_user_agent,value:求和(sum)}

4.架构设计:日志KPI系统架构

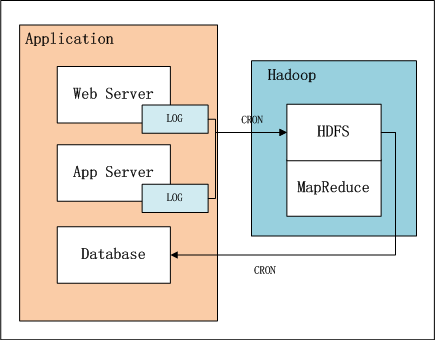

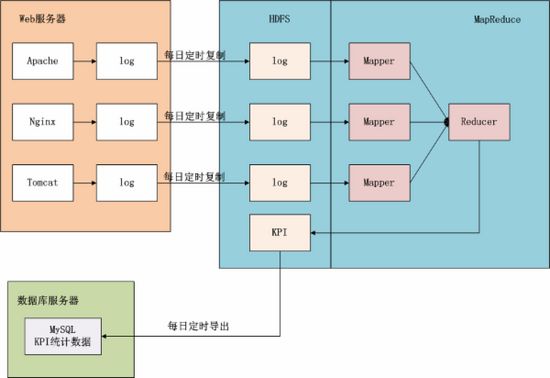

上图中,左边是Application业务系统,右边是Hadoop的HDFS, MapReduce。

1.日志是由业务系统产生的,我们可以设置web服务器每天产生一个新的目录,目录下面会产生多个日志文件,每个日志文件64M。

2.设置系统定时器CRON,夜间在0点后,向HDFS导入昨天的日志文件。

3.完成导入后,设置系统定时器,启动MapReduce程序,提取并计算统计指标。

4.完成计算后,设置系统定时器,从HDFS导出统计指标数据到数据库,方便以后的即使查询。

上面这幅图,我们可以看得更清楚,数据是如何流动的。蓝色背景的部分是在Hadoop中的,接下来我们的任务就是完成MapReduce的程序实现。

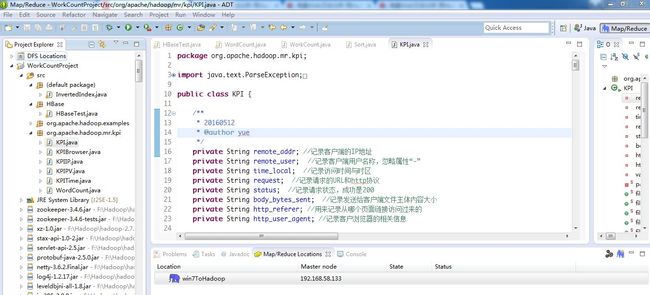

5.程序开发2:MapReduce程序实现

开发流程:

- 对日志行的解析

- Map函数实现

- Reduce函数实现

启动程序实现

1). 对日志行的解析

整体代码

package org.apache.hadoop.mr.kpi;

import java.text.ParseException;

import java.text.SimpleDateFormat;

import java.util.Date;

import java.util.HashSet;

import java.util.Locale;

import java.util.Set;

public class KPI {

/** * 20160512 * @author yue */

private String remote_addr; //记录客户端的IP地址

private String remote_user; //记录客户端用户名称,忽略属性“-”

private String time_local; //记录访问时间与时区

private String request; //记录请求的URL和http协议

private String status; //记录请求状态,成功是200

private String body_bytes_sent; //记录发送给客户端文件主体内容大小

private String http_referer; //用来记录从哪个页面链接访问过来的

private String http_user_agent; //记录客户浏览器的相关信息

private boolean valid = true ; //判断数据是否合法

private static KPI parser(String line){

System.out.println(line);

KPI kpi = new KPI();

String[] arr = line.split(" ");

if (arr.length>11){

kpi.setRemote_addr(arr[0]);

kpi.setRemote_user(arr[1]);

kpi.setTime_local(arr[3].substring(1));

kpi.setRequest(arr[6]);

kpi.setStatus(arr[8]);

kpi.setBody_bytes_sent(arr[9]);

kpi.setHttp_referer(arr[10]);

if(arr.length>12){

kpi.setHttp_user_agent(arr[11] + " " + arr[12]);

} else {

kpi.setHttp_user_agent(arr[11]);

}

if(Integer.parseInt(kpi.getStatus()) >= 400){

//大于400,http錯誤

kpi.setValid(false);

}

}else{

kpi.setValid(false);

}

return kpi;

}

/** * 按page的pv分类 * pageview:页面访问量统计 * @return */

public static KPI filterPVs(String line){

KPI kpi = parser(line);

Set<String> pages = new HashSet<String>();

pages.add("/about/");

pages.add("/black-ip-clustor/");

pages.add("/cassandra-clustor/");

pages.add("/finance-rhive-repurchase/");

pages.add("/hadoop-familiy-roadmap/");

pages.add("/hadoop-hive-intro/");

pages.add("/hadoop-zookeeper-intro/");

pages.add("/hadoop-mahout-roadmap/");

if(!pages.contains(kpi.getRequest())){

kpi.setValid(false);

}

return kpi;

}

/** * 按page的独立IP分类 * @return */

public static KPI filterIPs(String line){

KPI kpi = parser(line);

Set<String> pages = new HashSet<String>();

pages.add("/about/");

pages.add("/black-ip-clustor/");

pages.add("/cassandra-clustor/");

pages.add("/finance-rhive-repurchase/");

pages.add("/hadoop-familiy-roadmap/");

pages.add("/hadoop-hive-intro/");

pages.add("/hadoop-zookeeper-intro/");

pages.add("/hadoop-mahout-roadmap/");

if (!pages.contains(kpi.getRequest())){

kpi.setValid(false);

}

return kpi;

}

/** * PV按浏览器分类 * @return */

public static KPI filterBroswer(String line){

return parser(line);

}

/** * PV按小时分类 * @return */

public static KPI filterTime(String line){

return parser(line);

}

/** * Pv按访问域名分类 * @return */

public static KPI filterDomain(String line){

return parser(line);

}

public String getRemote_addr() {

return remote_addr;

}

public void setRemote_addr(String remote_addr) {

this.remote_addr = remote_addr;

}

public String getRemote_user() {

return remote_user;

}

public void setRemote_user(String remote_user) {

this.remote_user = remote_user;

}

public String getTime_local() {

return time_local;

}

public Date getTime_local_Date() throws ParseException{

SimpleDateFormat df = new SimpleDateFormat("dd/MMM/yyyy:HH:mm:ss",Locale.US);

return df.parse(this.time_local);

}

public String getTime_local_Date_hour() throws ParseException{

SimpleDateFormat df = new SimpleDateFormat("yyyyMMddHH");

return df.format(this.getTime_local_Date());

}

public void setTime_local(String time_local) {

this.time_local = time_local;

}

public String getRequest() {

return request;

}

public void setRequest(String request) {

this.request = request;

}

public String getStatus() {

return status;

}

public void setStatus(String status) {

this.status = status;

}

public String getBody_bytes_sent() {

return body_bytes_sent;

}

public void setBody_bytes_sent(String body_bytes_sent) {

this.body_bytes_sent = body_bytes_sent;

}

public String getHttp_referer() {

return http_referer;

}

public String getHttp_referer_domain(){

if(http_referer.length()<8){

return http_referer;

}

String str = this.http_referer.replace("\\", "").replace("http://", "").replace("https://", "");

return str.indexOf("/")>0?str.substring(0, str.indexOf("/")):str;

}

public void setHttp_referer(String http_referer) {

this.http_referer = http_referer;

}

public String getHttp_user_agent() {

return http_user_agent;

}

public void setHttp_user_agent(String http_user_agent) {

this.http_user_agent = http_user_agent;

}

public boolean isValid() {

return valid;

}

public void setValid(boolean valid) {

this.valid = valid;

}

@Override

public String toString() {

StringBuilder sb = new StringBuilder();

sb.append("valid:" + this.valid);

sb.append("\nremote_addr:" + this.remote_addr);

sb.append("\nremote_user:" + this.remote_user);

sb.append("\ntime_local:" + this.time_local);

sb.append("\nrequest:" + this.request);

sb.append("\nstatus:" + this.status);

sb.append("\nbody_bytes_sent:" + this.body_bytes_sent);

sb.append("\nhttp_referer:" + this.http_referer);

sb.append("\nhttp_user_agent:" + this.http_user_agent);

return super.toString();

}

public static void main(String[] args) {

String line = "222.68.172.190 - - [18/Sep/2013:06:49:57 +0000] \"GET /images/my.jpg HTTP/1.1\" 200 19939 \"http://www.angularjs.cn/A00n\" \"Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/29.0.1547.66 Safari/537.36\"";

System.out.println(line);

KPI kpi = new KPI();

String[] arr = line.split(" ");

kpi.setRemote_addr(arr[0]);

kpi.setRemote_user(arr[1]);

kpi.setTime_local(arr[3].substring(1));

kpi.setRequest(arr[6]);

kpi.setStatus(arr[8]);

kpi.setBody_bytes_sent(arr[9]);

kpi.setHttp_referer(arr[10]);

kpi.setHttp_user_agent(arr[11] + " " + arr[12]);

System.out.println(kpi);

try {

SimpleDateFormat df = new SimpleDateFormat("yyyy.MM.dd:HH:mm:ss",Locale.US);

System.out.println(df.format(kpi.getTime_local_Date()));

System.out.println(kpi.getTime_local_Date_hour());

System.out.println(kpi.getHttp_referer_domain());

} catch (ParseException e) {

e.printStackTrace();

}

}

}从日志文件中,取一行通过main函数写一个简单的解析测试。

控制台输出:

我们看到日志行,被正确的解析成了kpi对象的属性。我们把解析过程,单独封装成一个方法。

private static KPI parser(String line) {

System.out.println(line);

KPI kpi = new KPI();

String[] arr = line.split(" ");

if (arr.length > 11) {

kpi.setRemote_addr(arr[0]);

kpi.setRemote_user(arr[1]);

kpi.setTime_local(arr[3].substring(1));

kpi.setRequest(arr[6]);

kpi.setStatus(arr[8]);

kpi.setBody_bytes_sent(arr[9]);

kpi.setHttp_referer(arr[10]);

if (arr.length > 12) {

kpi.setHttp_user_agent(arr[11] + " " + arr[12]);

} else {

kpi.setHttp_user_agent(arr[11]);

}

if (Integer.parseInt(kpi.getStatus()) >= 400) {// 大于400,HTTP错误

kpi.setValid(false);

}

} else {

kpi.setValid(false);

}

return kpi;

}对map方法,reduce方法,启动方法,我们单独写一个类来实现

下面将分别介绍MapReduce的实现类:

- PV:org.apache.hadoop.mr.kpi.KPIPV.java

- IP: org.apache.hadoop.mr.kpi.KPIIP.java

- Time: org.apache.hadoop.mr.kpi.KPITime.java

- Browser: org.apache.hadoop.mr.kpi.KPIBrowser.java

1). PV:org.apache.hadoop.mr.kpi.KPIPV.java

package org.apache.hadoop.mr.kpi;

import java.io.IOException;

import java.util.Iterator;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapred.FileInputFormat;

import org.apache.hadoop.mapred.FileOutputFormat;

import org.apache.hadoop.mapred.JobClient;

import org.apache.hadoop.mapred.JobConf;

import org.apache.hadoop.mapred.MapReduceBase;

import org.apache.hadoop.mapred.Mapper;

import org.apache.hadoop.mapred.OutputCollector;

import org.apache.hadoop.mapred.Reducer;

import org.apache.hadoop.mapred.Reporter;

import org.apache.hadoop.mapred.TextInputFormat;

import org.apache.hadoop.mapred.TextOutputFormat;

public class KPIPV {

/** * @author yue * 20160512 */

public static class KPIPVMapper extends MapReduceBase implements Mapper<Object ,Text ,Text,IntWritable>{

private IntWritable one = new IntWritable(1);

private Text word = new Text();

public void map(Object key, Text value,

OutputCollector<Text, IntWritable> output, Reporter reporter)

throws IOException {

KPI kpi = KPI.filterPVs(value.toString());

if(kpi.isValid()){

word.set(kpi.getRequest());

output.collect(word, one);

}

}

}

public static class KPIPVReducer extends MapReduceBase implements Reducer<Text,IntWritable,Text,IntWritable>{

private IntWritable result = new IntWritable();

public void reduce(Text key, Iterator<IntWritable> values,

OutputCollector<Text, IntWritable> output, Reporter reporter)

throws IOException {

int sum = 0;

while(values.hasNext()){

sum += values.next().get();

}

result.set(sum);

output.collect(key, result);

}

}

public static void main(String[] args) throws Exception{

String input = "hdfs://192.168.37.134:9000/user/hdfs/log_kpi";

String output = "hdfs://192.168.37.134:9000/user/hdfs/log_kpi/pv";

JobConf conf = new JobConf(KPIPV.class);

conf.setJobName("KPIPV");

conf.setMapOutputKeyClass(Text.class);

conf.setMapOutputValueClass(IntWritable.class);

conf.setOutputKeyClass(Text.class);

conf.setOutputValueClass(IntWritable.class);

conf.setMapperClass(KPIPVMapper.class);

conf.setCombinerClass(KPIPVReducer.class);

conf.setReducerClass(KPIPVReducer.class);

conf.setInputFormat(TextInputFormat.class);

conf.setOutputFormat(TextOutputFormat.class);

FileInputFormat.setInputPaths(conf, new Path(input));

FileOutputFormat.setOutputPath(conf, new Path(output));

JobClient.runJob(conf);

System.exit(0);

}

}

在程序中会调用KPI类的方法

KPI kpi = KPI.filterPVs(value.toString());我们运行一下KPIPV.java

用hadoop命令查看HDFS文件

~ hadoop fs -cat /user/hdfs/log_kpi/pv/part-00000

/about 5

/black-ip-list/ 2

/cassandra-clustor/ 3

/finance-rhive-repurchase/ 13

/hadoop-family-roadmap/ 13

/hadoop-hive-intro/ 14

/hadoop-mahout-roadmap/ 20

/hadoop-zookeeper-intro/ 62). IP: org.apache.hadoop.mr.kpi.KPIIP.java

package org.apache.hadoop.mr.kpi;

import java.io.IOException;

import java.util.HashSet;

import java.util.Iterator;

import java.util.Set;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapred.FileInputFormat;

import org.apache.hadoop.mapred.FileOutputFormat;

import org.apache.hadoop.mapred.JobClient;

import org.apache.hadoop.mapred.JobConf;

import org.apache.hadoop.mapred.MapReduceBase;

import org.apache.hadoop.mapred.Mapper;

import org.apache.hadoop.mapred.OutputCollector;

import org.apache.hadoop.mapred.Reducer;

import org.apache.hadoop.mapred.Reporter;

import org.apache.hadoop.mapred.TextInputFormat;

import org.apache.hadoop.mapred.TextOutputFormat;

import org.apache.hadoop.mr.kpi.KPIIP.KPIIPMapper.KPIIPReducer;

public class KPIIP {

/** * @author yue * 20160512 */

public static class KPIIPMapper extends MapReduceBase implements Mapper<Object,Text,Text,Text>{

private Text word = new Text();

private Text ips = new Text();

public void map(Object key, Text value,

OutputCollector<Text, Text> output, Reporter reporter)

throws IOException {

KPI kpi = KPI.filterIPs(value.toString());

if(kpi.isValid()){

word.set(kpi.getRequest());

ips.set(kpi.getRemote_addr());

output.collect(word, ips);

}

}

public static class KPIIPReducer extends MapReduceBase implements Reducer<Text,Text,Text,Text>{

private Text result = new Text();

private Set<String>count = new HashSet<String>();

public void reduce(Text key, Iterator<Text> values,

OutputCollector<Text, Text> output, Reporter reporter)

throws IOException {

while(values.hasNext()){

count.add(values.next().toString());

}

result.set(String.valueOf(count.size()));

output.collect(key, result);

}

}

}

public static void main(String[] args) throws Exception{

String input = "hdfs://192.168.37.134:9000/user/hdfs/log_kpi";

String output = "hdfs://192.168.37.134:9000/user/hdfs/log_kpi/ip";

JobConf conf = new JobConf(KPIIP.class);

conf.setJobName("KPIIP");

conf.setMapOutputKeyClass(Text.class);

conf.setMapOutputValueClass(Text.class);

conf.setOutputKeyClass(Text.class);

conf.setOutputValueClass(Text.class);

conf.setMapperClass(KPIIPMapper.class);

conf.setCombinerClass(KPIIPReducer.class);

conf.setReducerClass(KPIIPReducer.class);

conf.setInputFormat(TextInputFormat.class);

conf.setOutputFormat(TextOutputFormat.class);

FileInputFormat.setInputPaths(conf, new Path(input));

FileOutputFormat.setOutputPath(conf, new Path(output));

JobClient.runJob(conf);

System.exit(0);

}

}

3). Time: org.apache.hadoop.mr.kpi.KPITime.java

package org.apache.hadoop.mr.kpi;

import java.io.IOException;

import java.text.ParseException;

import java.util.Iterator;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapred.FileInputFormat;

import org.apache.hadoop.mapred.FileOutputFormat;

import org.apache.hadoop.mapred.JobClient;

import org.apache.hadoop.mapred.JobConf;

import org.apache.hadoop.mapred.MapReduceBase;

import org.apache.hadoop.mapred.Mapper;

import org.apache.hadoop.mapred.OutputCollector;

import org.apache.hadoop.mapred.Reducer;

import org.apache.hadoop.mapred.Reporter;

import org.apache.hadoop.mapred.TextInputFormat;

import org.apache.hadoop.mapred.TextOutputFormat;

public class KPITime {

/** * @author yue 20160512 */

public static class KPITimeMapper extends MapReduceBase implements

Mapper<Object, Text, Text, IntWritable> {

private IntWritable one = new IntWritable(1);

private Text word = new Text();

public void map(Object key, Text value,

OutputCollector<Text, IntWritable> output, Reporter reporter)

throws IOException {

KPI kpi = KPI.filterTime(value.toString());

if(kpi.isValid()){

try {

word.set(kpi.getTime_local_Date_hour());

output.collect(word, one);

} catch (ParseException e) {

e.printStackTrace();

}

}

}

}

public static class KPITimeReducer extends MapReduceBase implements

Reducer<Text,IntWritable,Text,IntWritable>{

private IntWritable result = new IntWritable();

public void reduce(Text key, Iterator<IntWritable> values,

OutputCollector<Text, IntWritable> output, Reporter reporter)

throws IOException {

int sum = 0;

while(values.hasNext()){

sum+=values.next().get();

}

result.set(sum);

output.collect(key, result);

}

}

public static void main(String[] args) throws Exception{

String input = "hdfs://192.168.37.134:9000/user/hdfs/log_kpi";

String output = "hdfs://192.168.37.134:9000/user/hdfs/log_kpi/time";

JobConf conf = new JobConf(KPITime.class);

conf.setJobName("KPITime");

conf.setOutputKeyClass(Text.class);

conf.setOutputValueClass(IntWritable.class);

conf.setMapperClass(KPITimeMapper.class);

conf.setCombinerClass(KPITimeReducer.class);

conf.setReducerClass(KPITimeReducer.class);

conf.setInputFormat(TextInputFormat.class);

conf.setOutputFormat(TextOutputFormat.class);

FileInputFormat.setInputPaths(conf, new Path(input));

FileOutputFormat.setOutputPath(conf, new Path(output));

JobClient.runJob(conf);

System.exit(0);

}

}

4). Browser: org.apache.hadoop.mr.kpi.KPIBrowser.java

package org.apache.hadoop.mr.kpi;

import java.io.IOException;

import java.util.Iterator;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapred.FileInputFormat;

import org.apache.hadoop.mapred.FileOutputFormat;

import org.apache.hadoop.mapred.JobClient;

import org.apache.hadoop.mapred.JobConf;

import org.apache.hadoop.mapred.MapReduceBase;

import org.apache.hadoop.mapred.Mapper;

import org.apache.hadoop.mapred.OutputCollector;

import org.apache.hadoop.mapred.Reducer;

import org.apache.hadoop.mapred.Reporter;

import org.apache.hadoop.mapred.TextInputFormat;

import org.apache.hadoop.mapred.TextOutputFormat;

public class KPIBrowser {

/** * 20160512 * @author yue */

public static class KPIBrowserMapper extends MapReduceBase implements Mapper<Object,Text,Text,IntWritable>{

private IntWritable one = new IntWritable(1);

private Text word = new Text();

public void map(Object key,Text value,OutputCollector<Text,IntWritable> output , Reporter reporter) throws IOException{

KPI kpi = KPI.filterBroswer(value.toString());

if(kpi.isValid()){

word.set(kpi.getHttp_user_agent());

output.collect(word, one);

}

}

}

public static class KPIBrowserReducer extends MapReduceBase implements Reducer<Text,IntWritable,Text,IntWritable>{

private IntWritable result = new IntWritable();

public void reduce(Text key, Iterator<IntWritable> values,OutputCollector<Text, IntWritable> output, Reporter reporter)throws IOException {

int sum = 0;

while(values.hasNext()){

sum+= values.next().get();

}

result.set(sum);

output.collect(key, result);

}

}

public static void main(String[] args) throws Exception{

String input = "hdfs://192.168.37.134:9000/user/hdfs/log_kpi";

String output = "hdfs://192.168.37.134:9000/user/hdfs/log_kpi/browser";

JobConf conf = new JobConf(KPIBrowser.class);

conf.setJobName("KPIBrowser");

conf.setOutputKeyClass(Text.class);

conf.setOutputValueClass(IntWritable.class);

conf.setMapperClass(KPIBrowserMapper.class);

conf.setCombinerClass(KPIBrowserReducer.class);

conf.setReducerClass(KPIBrowserReducer.class);

conf.setInputFormat(TextInputFormat.class);

conf.setOutputFormat(TextOutputFormat.class);

FileInputFormat.setInputPaths(conf, new Path(input));

FileOutputFormat.setOutputPath(conf, new Path(output));

JobClient.runJob(conf);

System.exit(0);

}

}