[Hadoop]Hadoop异常汇总,不定期更新

异常1:jps命令查看NameNode未启动

重新启动linux虚拟机后,发现hadoop start-all.sh命令启动不了NameNode。并百度搜索了很多网站,并未能成功结果。这里提供一个出现该问题的一个可能(笔者也是遇到的这样的问题)。

问题描述:jps命令查看NameNode未启动

尝试格式化等操作,并不难解决这个问题。后来发现重启后linux虚拟机的IP地址有所变化!!!

解决步骤:

vi /etc/hosts

异常2:格式化namenode失败

异常描述:

命令 hadoop namenode -format 没有显示格式化成功的信息。

解决办法,删除

删除conf文件中,core-site.xml指定的文件夹(提示:命令 rm -rf /usr/local/hadoop/tmp)

就可以正常格式化了。

异常3:按照官网步骤执行 sbin/start-dfs.sh 有错误

异常信息:

[root@hadoop hadoop]# sbin/start-dfs.sh

15/10/18 05:22:43 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Starting namenodes on [hadoop]

hadoop: Error: JAVA_HOME is not set and could not be found.

localhost: Error: JAVA_HOME is not set and could not be found.

Starting secondary namenodes [0.0.0.0]

0.0.0.0: Error: JAVA_HOME is not set and could not be found.

15/10/18 05:22:49 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

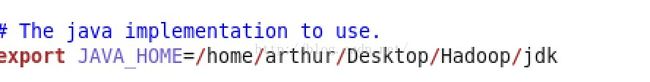

原因:没设置hadoop-env.sh中的JAVA_HOME(其实笔者已经配置好了JAVA_HOME),但是不知道为什么还是不行,于是还是在文件中进行了修改,有效解决。如图1所示。

异常4:hadoop启动报localhost:Error:JAVA_HOME is not set and could not be found 错误。

原因:虽然echo $JAVA_HOME是有值的,但是还是报错,解决方法:

解决办法:

修改/etc/hadoop/hadoop-env.sh中设JAVA_HOME。

应当使用绝对路径。

export JAVA_HOME=$JAVA_HOME //错误,不能这么改

export JAVA_HOME=/usr/java/jdk1.6.0_45 //正确,应该这么改

异常5:通过mac下eclipse访问ubuntu虚拟机的HDFS系统报错。

错误如下。

Exception in thread "main" java.net.ConnectException: Call From MacBook-Pro.local/192.168.1.102 to 172.16.100.146:9000 failed on connection exception: java.net.ConnectException: Connection refused; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method) at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:57) at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45) at java.lang.reflect.Constructor.newInstance(Constructor.java:526) at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:792) at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:732) at org.apache.hadoop.ipc.Client.call(Client.java:1480) at org.apache.hadoop.ipc.Client.call(Client.java:1407) at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:229) at com.sun.proxy.$Proxy7.getBlockLocations(Unknown Source) at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.getBlockLocations(ClientNamenodeProtocolTranslatorPB.java:255) at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57) at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) at java.lang.reflect.Method.invoke(Method.java:606) at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:187) at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:102) at com.sun.proxy.$Proxy8.getBlockLocations(Unknown Source) at org.apache.hadoop.hdfs.DFSClient.callGetBlockLocations(DFSClient.java:1240) at org.apache.hadoop.hdfs.DFSClient.getLocatedBlocks(DFSClient.java:1227) at org.apache.hadoop.hdfs.DFSClient.getLocatedBlocks(DFSClient.java:1215) at org.apache.hadoop.hdfs.DFSInputStream.fetchLocatedBlocksAndGetLastBlockLength(DFSInputStream.java:303) at org.apache.hadoop.hdfs.DFSInputStream.openInfo(DFSInputStream.java:269) at org.apache.hadoop.hdfs.DFSInputStream.<init>(DFSInputStream.java:261) at org.apache.hadoop.hdfs.DFSClient.open(DFSClient.java:1540) at org.apache.hadoop.hdfs.DistributedFileSystem$3.doCall(DistributedFileSystem.java:303) at org.apache.hadoop.hdfs.DistributedFileSystem$3.doCall(DistributedFileSystem.java:299) at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81) at org.apache.hadoop.hdfs.DistributedFileSystem.open(DistributedFileSystem.java:299) at org.apache.hadoop.fs.FileSystem.open(FileSystem.java:767) at org.apache.hadoop.fs.FsUrlConnection.connect(FsUrlConnection.java:50) at org.apache.hadoop.fs.FsUrlConnection.getInputStream(FsUrlConnection.java:59) at java.net.URL.openStream(URL.java:1037) at hdfs.App1.main(App1.java:14) Caused by: java.net.ConnectException: Connection refused at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method) at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:739) at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206) at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531) at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:495) at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:609) at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:707) at org.apache.hadoop.ipc.Client$Connection.access$2800(Client.java:370) at org.apache.hadoop.ipc.Client.getConnection(Client.java:1529) at org.apache.hadoop.ipc.Client.call(Client.java:1446) ... 27 more

解决方案:

修改etc/hadoop/core-site.xml文件配置。将原先的localhost修改为 该虚拟机的IP地址即可解决。

异常6:

org.apache.hadoop.security.AccessControlException: Permission denied:

2015-11-22 22:46:35,602 WARN [main] util.NativeCodeLoader (NativeCodeLoader.java:<clinit>(62)) - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable Exception in thread "main" org.apache.hadoop.security.AccessControlException: Permission denied: user=arthur, access=EXECUTE, inode="/he111":taodj:supergroup:d--------- at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.check(FSPermissionChecker.java:319) at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkTraverse(FSPermissionChecker.java:259) at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:205) at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:190) at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:1698) at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:1682) at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkTraverse(FSDirectory.java:1670) at org.apache.hadoop.hdfs.server.namenode.FSDirMkdirOp.mkdirs(FSDirMkdirOp.java:60) at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirs(FSNamesystem.java:3900) at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.mkdirs(NameNodeRpcServer.java:978) at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.mkdirs(ClientNamenodeProtocolServerSideTranslatorPB.java:622) at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java) at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:616) at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:969) at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2049) at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2045) at java.security.AccessController.doPrivileged(Native Method) at javax.security.auth.Subject.doAs(Subject.java:422) at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1657) at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2043) at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method) at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:57) at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45) at java.lang.reflect.Constructor.newInstance(Constructor.java:526) at org.apache.hadoop.ipc.RemoteException.instantiateException(RemoteException.java:106) at org.apache.hadoop.ipc.RemoteException.unwrapRemoteException(RemoteException.java:73) at org.apache.hadoop.hdfs.DFSClient.primitiveMkdir(DFSClient.java:3010) at org.apache.hadoop.hdfs.DFSClient.mkdirs(DFSClient.java:2978) at org.apache.hadoop.hdfs.DistributedFileSystem$21.doCall(DistributedFileSystem.java:1047) at org.apache.hadoop.hdfs.DistributedFileSystem$21.doCall(DistributedFileSystem.java:1043) at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81) at org.apache.hadoop.hdfs.DistributedFileSystem.mkdirsInternal(DistributedFileSystem.java:1043) at org.apache.hadoop.hdfs.DistributedFileSystem.mkdirs(DistributedFileSystem.java:1036) at org.apache.hadoop.fs.FileSystem.mkdirs(FileSystem.java:1877) at hdfs.App2.main(App2.java:30) Caused by: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.AccessControlException): Permission denied: user=arthur, access=EXECUTE, inode="/he111":taodj:supergroup:d--------- at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.check(FSPermissionChecker.java:319) at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkTraverse(FSPermissionChecker.java:259) at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:205) at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:190) at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:1698) at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:1682) at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkTraverse(FSDirectory.java:1670) at org.apache.hadoop.hdfs.server.namenode.FSDirMkdirOp.mkdirs(FSDirMkdirOp.java:60) at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirs(FSNamesystem.java:3900) at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.mkdirs(NameNodeRpcServer.java:978) at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.mkdirs(ClientNamenodeProtocolServerSideTranslatorPB.java:622) at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java) at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:616) at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:969) at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2049) at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2045) at java.security.AccessController.doPrivileged(Native Method) at javax.security.auth.Subject.doAs(Subject.java:422) at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1657) at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2043) at org.apache.hadoop.ipc.Client.call(Client.java:1476) at org.apache.hadoop.ipc.Client.call(Client.java:1407) at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:229) at com.sun.proxy.$Proxy7.mkdirs(Unknown Source) at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.mkdirs(ClientNamenodeProtocolTranslatorPB.java:558) at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57) at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) at java.lang.reflect.Method.invoke(Method.java:606) at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:187) at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:102) at com.sun.proxy.$Proxy8.mkdirs(Unknown Source) at org.apache.hadoop.hdfs.DFSClient.primitiveMkdir(DFSClient.java:3008) ... 8 more

解决方案:赋予权限,命令hadoop fs -chmod 777 /。即可解决

异常7:虚拟机重启后,IP地址更改后,发现Datanode没法启动。

解决办法:登录50070端口的网页,我的地址是http://172.16.47.130:50070,查看日志信息。异常如下。

java.io.EOFException: End of File Exception between local host is: "ubuntu/127.0.1.1"; destination host is: "localhost":9000; : java.io.EOFException; For more details see: http://wiki.apache.org/hadoop/EOFException at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method) at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62) at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45) at java.lang.reflect.Constructor.newInstance(Constructor.java:422) at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:792) at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:765) at org.apache.hadoop.ipc.Client.call(Client.java:1480) at org.apache.hadoop.ipc.Client.call(Client.java:1407) at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:229) at com.sun.proxy.$Proxy14.sendHeartbeat(Unknown Source) at org.apache.hadoop.hdfs.protocolPB.DatanodeProtocolClientSideTranslatorPB.sendHeartbeat(DatanodeProtocolClientSideTranslatorPB.java:153) at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.sendHeartBeat(BPServiceActor.java:553) at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.offerService(BPServiceActor.java:653) at org.apache.hadoop.hdfs.server.datanode.BPServiceActor.run(BPServiceActor.java:823) at java.lang.Thread.run(Thread.java:745) Caused by: java.io.EOFException at java.io.DataInputStream.readInt(DataInputStream.java:392) at org.apache.hadoop.ipc.Client$Connection.receiveRpcResponse(Client.java:1079) at org.apache.hadoop.ipc.Client$Connection.run(Client.java:974)

先删除core-site.xml下配置的tmp目录,再格式化namenode(PS:此步很危险,东西将会不会存在,慎重执行)。

重启,OK解决。

![[Hadoop]Hadoop异常汇总,不定期更新_第1张图片](http://img.e-com-net.com/image/info5/4acb3b7c52bc49b086979e974cd23cb2.jpg)

![[Hadoop]Hadoop异常汇总,不定期更新_第2张图片](http://img.e-com-net.com/image/info5/64a67665695f4e91a7ef309e6e9e28ef.jpg)