RHive

Installing RHive

```r
install.packages("RHive")
library(RHive)
```

```
Loading required package: rJava
Loading required package: Rserve
This is RHive 0.0-7. For overview type ‘?RHive’.
HIVE_HOME=/home/conan/hadoop/hive-0.9.0
call rhive.init() because HIVE_HOME is set.
```

4. RHive function library

```text
rhive.aggregate     rhive.connect       rhive.hdfs.exists   rhive.mapapply
rhive.assign        rhive.desc.table    rhive.hdfs.get      rhive.mrapply
rhive.basic.by      rhive.drop.table    rhive.hdfs.info     rhive.napply
rhive.basic.cut     rhive.env           rhive.hdfs.ls       rhive.query
rhive.basic.cut2    rhive.exist.table   rhive.hdfs.mkdirs   rhive.reduceapply
rhive.basic.merge   rhive.export        rhive.hdfs.put      rhive.rm
rhive.basic.mode    rhive.exportAll     rhive.hdfs.rename   rhive.sapply
rhive.basic.range   rhive.hdfs.cat      rhive.hdfs.rm       rhive.save
rhive.basic.scale   rhive.hdfs.chgrp    rhive.hdfs.tail     rhive.script.export
rhive.basic.t.test  rhive.hdfs.chmod    rhive.init          rhive.script.unexport
rhive.basic.xtabs   rhive.hdfs.chown    rhive.list.tables   rhive.size.table
rhive.big.query     rhive.hdfs.close    rhive.load          rhive.write.table
rhive.block.sample  rhive.hdfs.connect  rhive.load.table
rhive.close         rhive.hdfs.du       rhive.load.table2
```

Comparison of basic operations in Hive and RHive:

| Operation | Hive | RHive |
|---|---|---|
| Connect to Hive | `hive` shell | `rhive.connect("192.168.1.210")` |
| List all Hive tables | `show tables;` | `rhive.list.tables()` |
| Describe a table | `desc o_account;` | `rhive.desc.table('o_account')`, `rhive.desc.table('o_account',TRUE)` |
| Run an HQL query | `select * from o_account;` | `rhive.query('select * from o_account')` |
| List an HDFS directory | `dfs -ls /;` | `rhive.hdfs.ls()` |
| Show an HDFS file's contents | `dfs -cat /user/hive/warehouse/o_account/part-m-00000;` | `rhive.hdfs.cat('/user/hive/warehouse/o_account/part-m-00000')` |
| Disconnect | `quit;` | `rhive.close()` |

5. Basic RHive operations

```r
# Initialize
rhive.init()

# Connect to Hive
rhive.connect("192.168.1.210")

# List all tables
rhive.list.tables()
             tab_name
1 hive_algo_t_account
2           o_account
3         r_t_account

# Describe a table
rhive.desc.table('o_account')
     col_name data_type comment
1          id       int
2       email    string
3 create_date    string

# Run an HQL query
rhive.query("select * from o_account")
   id                email         create_date
1   1 [email protected] 2013-04-22 12:21:39
2   2 [email protected] 2013-04-22 12:21:39
3   3 [email protected] 2013-04-22 12:21:39
4   4 [email protected] 2013-04-22 12:21:39
5   5 [email protected] 2013-04-22 12:21:39
6   6 [email protected] 2013-04-22 12:21:39
7   7 [email protected] 2013-04-23 09:21:24
8   8 [email protected] 2013-04-23 09:21:24
9   9 [email protected] 2013-04-23 09:21:24
10 10 [email protected] 2013-04-23 09:21:24
11 11 [email protected] 2013-04-23 09:21:24

# Close the connection
rhive.close()
[1] TRUE
```

Creating a temporary table

```r
# Create a temporary table from o_account restricted to rows with id < 5
rhive.block.sample('o_account', subset="id<5")
[1] "rhive_sblk_1372238856"

rhive.query("select * from rhive_sblk_1372238856")
  id                email         create_date
1  1 [email protected] 2013-04-22 12:21:39
2  2 [email protected] 2013-04-22 12:21:39
3  3 [email protected] 2013-04-22 12:21:39
4  4 [email protected] 2013-04-22 12:21:39

# Inspect the table's files on HDFS
rhive.hdfs.ls('/user/hive/warehouse/rhive_sblk_1372238856/')
  permission owner      group length      modify-time
1  rw-r--r-- conan supergroup    141 2013-06-26 17:28
                                                 file
1 /user/hive/warehouse/rhive_sblk_1372238856/000000_0

rhive.hdfs.cat('/user/hive/warehouse/rhive_sblk_1372238856/000000_0')
[email protected] 12:21:39
[email protected] 12:21:39
[email protected] 12:21:39
[email protected] 12:21:39
```
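For comparison only, the row filter `subset="id<5"` corresponds to the following base R operation on an in-memory data frame. This is a local sketch, not how RHive evaluates the argument (the filter above runs on the Hive side); the `o_account` frame below is a hypothetical stand-in built from the ids and dates shown in the query output, with the e-mail column omitted.

```r
# Hypothetical local stand-in for o_account: ids 1-11 and the dates shown in the query output
o_account <- data.frame(
  id = 1:11,
  create_date = rep(c("2013-04-22 12:21:39", "2013-04-23 09:21:24"), times = c(6, 5)),
  stringsAsFactors = FALSE
)

# The same predicate as subset="id<5", evaluated locally by base R
sampled <- subset(o_account, id < 5)
print(sampled)
```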

Binning a column into ranges

```r
rhive.basic.cut('o_account', 'id', breaks='0:100:3')
[1] "rhive_result_20130626173626"
attr(,"result:size")
[1] 443

rhive.query("select * from rhive_result_20130626173626")
                  email         create_date     id
1  [email protected] 2013-04-22 12:21:39  (0,3]
2  [email protected] 2013-04-22 12:21:39  (0,3]
3  [email protected] 2013-04-22 12:21:39  (0,3]
4  [email protected] 2013-04-22 12:21:39  (3,6]
5  [email protected] 2013-04-22 12:21:39  (3,6]
6  [email protected] 2013-04-22 12:21:39  (3,6]
7  [email protected] 2013-04-23 09:21:24  (6,9]
8  [email protected] 2013-04-23 09:21:24  (6,9]
9  [email protected] 2013-04-23 09:21:24  (6,9]
10 [email protected] 2013-04-23 09:21:24 (9,12]
11 [email protected] 2013-04-23 09:21:24 (9,12]
```
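Judging from the output, `breaks='0:100:3'` appears to mean start:end:step, i.e. break points 0, 3, 6, ..., 99, with right-closed intervals. Under that assumption (it is inferred from the output, not from RHive's documentation), base R's `cut()` reproduces the same labels for ids 1 to 11 locally:

```r
# Assumption: breaks='0:100:3' means start 0, end 100, step 3
breaks <- seq(0, 100, by = 3)  # 0, 3, 6, ..., 99

ids <- 1:11  # the id values of o_account shown above
# cut() builds right-closed intervals by default, matching labels such as (0,3]
bins <- cut(ids, breaks = breaks)

print(data.frame(id = ids, bin = bins))
```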

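4. Installing RHive (required on every host):

RHive is a package that extends R's computing power through Hive's high-performance queries. It makes calling HQL from R very easy, and it also allows R objects and functions to be used inside Hive. Pairing Hive, a platform whose data-processing capacity can in principle scale without limit, with R, a powerful data-mining environment, makes for an arguably ideal environment for big-data analysis and mining.

1. Installing the Rserve package:

RHive depends on Rserve, so R must be installed the way described in this article, that is, configured with the two options `--disable-nls --enable-R-shlib`.

`--enable-R-shlib` builds R as a shared library, so that packages such as Rserve, which depend on the R shared library, can be installed; the drawback is a performance drop of roughly 20%.

Rserve is installed online from CRAN:

```r
install.packages("Rserve")
```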

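Create an `Rserv.conf` file in the `$R_HOME` directory containing the line `remote enable`, then save and exit. Use `scp -r` to copy the Rserve package installed on the master node, together with `Rserv.conf`, to every slave node. With only a few nodes, you can also install Rserve and create `Rserv.conf` on each node separately.

```shell
# repeat for every slave node
scp -r /usr/lib64/R/library/Rserve slave1:/usr/lib64/R/library/
scp -r /usr/lib64/R/Rserv.conf slave3:/usr/lib64/R/
```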
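If you prefer to create `Rserv.conf` from R itself, a minimal sketch follows. `write_rserv_conf()` is a hypothetical helper, not part of Rserve or RHive; it writes only the `remote enable` line described above, and writing into `R.home()` (here `/usr/lib64/R`) normally requires root.

```r
# Hypothetical helper: write an Rserv.conf that enables remote connections.
# conf_dir defaults to R's home directory ($R_HOME, /usr/lib64/R in this guide).
write_rserv_conf <- function(conf_dir = R.home()) {
  path <- file.path(conf_dir, "Rserv.conf")
  writeLines("remote enable", path)  # the only setting this guide uses
  invisible(path)
}

# Usage, as root on the master node:
# write_rserv_conf()
```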

在所有节点启动Rserve

Rserve --RS-conf /usr/lib64/R/Rserv.conf

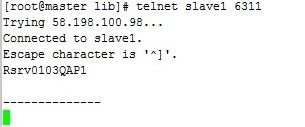

在master节点上telnet(如果未安装,通过shell命令yum install telnet安装)所有slave节点:

telnet slave1 6311
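telnet only checks that something is listening on port 6311. The same check can be scripted from R with base `socketConnection()`; `port_open()` is a hypothetical helper, and it does not verify that the listener is actually Rserve.

```r
# Hypothetical helper: TRUE if a TCP connection to host:port opens within `timeout` seconds.
# 6311 is the Rserve port checked with telnet above.
port_open <- function(host, port = 6311, timeout = 3) {
  con <- tryCatch(
    socketConnection(host = host, port = port, open = "r+",
                     blocking = TRUE, timeout = timeout),
    warning = function(w) NULL,  # a refused connection is reported as a warning first
    error = function(e) NULL
  )
  if (is.null(con)) return(FALSE)
  close(con)
  TRUE
}

# Usage on the master node (slave host names as used in this guide):
# sapply(c("slave1", "slave3"), port_open)
```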

2. Installing the RHive package:

Install RHive_0.0-7.tar.gz, then on the master and every slave node create an rhive data directory and grant it read/write permissions (ideally give `$R_HOME` 777 permissions):

```shell
# as root on the master node
R CMD INSTALL RHive_0.0-7.tar.gz
cd $R_HOME
mkdir -p rhive/data
chmod 777 -R rhive/data
```

On the master and the slaves, set the environment variable `RHIVE_DATA=/usr/lib64/R/rhive/data` in `/etc/profile`:

```shell
export RHIVE_DATA=/usr/lib64/R/rhive/data
```
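To confirm from R that the variable is visible and the directory is writable, a small check along these lines should work. `rhive_data_ok()` is a hypothetical helper, not part of RHive; R only sees the `/etc/profile` setting when it is started from a shell that has re-read that file (for example a new login shell).

```r
# Hypothetical helper: TRUE if `path` is non-empty, exists as a directory, and is writable.
rhive_data_ok <- function(path = Sys.getenv("RHIVE_DATA")) {
  nzchar(path) &&
    dir.exists(path) &&
    isTRUE(unname(file.access(path, mode = 2) == 0))  # mode 2 tests write permission
}

# Usage in a fresh R session on each node:
# rhive_data_ok()
```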

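Use `scp` to copy the RHive package installed on the master node to every slave node:

```shell
scp -r /usr/lib64/R/library/RHive slave1:/usr/lib64/R/library/
```

Check that the jar under HDFS has read/write permissions:

```shell
hadoop fs -ls /rhive/lib
```

After RHive is installed, the HDFS root contains no `rhive` directory or `lib` subdirectory, so you have to create them yourself and copy `rhive_udf.jar` from `/usr/lib64/R/library/RHive/java` into that directory:

```shell
hadoop fs -put /usr/lib64/R/library/RHive/java/rhive_udf.jar /rhive/lib
```

Otherwise, testing `rhive.connect()` fails with an error saying that the directory or file `/rhive/lib/rhive_udf.jar` does not exist.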
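Before calling `rhive.connect()`, you can check from R that the jar is in place. The sketch below assumes the `hadoop` command is on `PATH` and relies on `hadoop fs -test -e`, which exits with status 0 when the path exists; `hdfs_exists()` is a hypothetical helper.

```r
# Hypothetical helper: TRUE if `path` exists on HDFS, judged by the exit status
# of `hadoop fs -test -e <path>` (0 means the path exists).
hdfs_exists <- function(path, hadoop = "hadoop") {
  status <- suppressWarnings(
    system2(hadoop, c("fs", "-test", "-e", shQuote(path)),
            stdout = FALSE, stderr = FALSE)
  )
  identical(as.integer(status), 0L)
}

# Usage on a node with the Hadoop client installed:
# hdfs_exists("/rhive/lib/rhive_udf.jar")
```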

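Finally, start the Hive remote service on a Hive client (the master or any slave will do). RHive connects to HiveServer over Thrift, so the Thrift service must be running in the background:

```shell
nohup hive --service hiveserver &
```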

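3. Using and testing RHive:

1. RHive API

Functions that retrieve table information from Hive include:

- `rhive.list.tables`: returns the list of table names; supports a `pattern` argument (regular expression), similar to Hive's `show tables`
- `rhive.desc.table`: describes a table, like Hive's `desc table`
- `rhive.exist.table`: checks whether a table exists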

2. Testing

```r
> library("RHive")
Loading required package: Rserve
This is RHive 0.0-7. For overview type ‘?RHive’.
HIVE_HOME=/opt/cloudera/parcels/CDH-4.3.0-1.cdh4.3.0.p0.22/lib/hive
[1] "there is no slaves file of HADOOP. so you should pass hosts argument when you call rhive.connect()."
call rhive.init() because HIVE_HOME is set.
Warning message:
In file(file, "rt") :
  cannot open file '/etc/hadoop/conf/slaves': No such file or directory
> rhive.connect()
13/06/17 20:32:33 WARN conf.Configuration: fs.default.name is deprecated. Instead, use fs.defaultFS
13/06/17 20:32:33 WARN conf.Configuration: fs.default.name is deprecated. Instead, use fs.defaultFS
```

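This shows that the installation succeeded; the only problem is that no `slaves` file is configured. Create a `slaves` file in `/etc/hadoop/conf` listing the name of each slave to get rid of the warning.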
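The `slaves` file is plain text with one host name per line. A hypothetical helper for writing it from R follows; the host names in the usage line are placeholders, and writing to `/etc/hadoop/conf` normally requires root.

```r
# Hypothetical helper: write one slave host name per line into Hadoop's slaves file.
write_slaves <- function(hosts, conf_dir = "/etc/hadoop/conf") {
  stopifnot(is.character(hosts), length(hosts) > 0)
  path <- file.path(conf_dir, "slaves")
  writeLines(hosts, path)
  invisible(path)
}

# Usage, as root:
# write_slaves(c("slave1", "slave2", "slave3"))
```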

The RHive runtime environment looks like this:

```r
> rhive.env()
Hive Home Directory : /opt/cloudera/parcels/CDH-4.3.0-1.cdh4.3.0.p0.22/lib/hive
Hadoop Home Directory : /opt/cloudera/parcels/CDH-4.3.0-1.cdh4.3.0.p0.22/lib/hadoop
Hadoop Conf Directory : /etc/hadoop/conf
No RServe
Connected HiveServer : 127.0.0.1:10000
Connected HDFS : hdfs://master:8020
```

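3. A simple RHive application

Load the RHive package, connect to Hive, and fetch data:

```r
library(RHive)
rhive.connect(host = 'host_ip')  # 'host_ip' stands for the HiveServer address
d <- rhive.query('select * from emp limit 1000')
class(d)
# the table name is passed as a string, as in rhive.block.sample('o_account', ...)
m <- rhive.block.sample('data_sku', percent = 0.0001, seed = 0)
rhive.close()
```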

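Usually the host is already configured on the system, so you can simply call `rhive.connect()` without arguments; remember to call `rhive.close()` at the end. The HQL query loads the target data from Hive into the R environment, and the returned `d` is a data.frame.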
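Because it is easy to forget `rhive.close()`, especially when a query fails halfway, one option is to wrap the work in a function that closes the connection through `on.exit()`. `with_connection()` is a hypothetical helper; it takes the connect and disconnect calls as arguments, so it assumes nothing about RHive beyond the calls already used in this section.

```r
# Hypothetical helper: call connect(), run f(), and always call disconnect(),
# even if f() signals an error (on.exit handlers run while the error unwinds).
with_connection <- function(f, connect, disconnect) {
  connect()
  on.exit(disconnect(), add = TRUE)
  f()
}

# Usage with the RHive calls shown above ('host_ip' is a placeholder):
# d <- with_connection(
#   function() rhive.query('select * from emp limit 1000'),
#   connect    = function() rhive.connect(host = 'host_ip'),
#   disconnect = rhive.close
# )
```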

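In fact, `rhive.query` has many uses and can run most ordinary Hive operations, such as switching databases (schemas):

```r
rhive.query('use scheme1')
rhive.query('show tables')
rhive.query('drop table emp')
```

Note, however, that for large amounts of data you should use `rhive.big.query` and set its memlimit parameter.

To store an R object in Hive as a table, use:

```r
rhive.write.table(dat, tablename = 'usertable', sep = ',')
```

You can then use Hive statements such as join to assemble the data needed for modeling. At this point, readers with a concrete need will see that these few RHive functions are already enough to do some interesting things.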

- Note 1: Calling R functions from within Hive has not been tried yet; it will be covered in a future update.
- Note 2: `rhive.block.sample` requires Hive 0.8 or later.
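Hive expresses block sampling as `TABLESAMPLE(n PERCENT)`, a feature added in Hive 0.8, which is presumably why `rhive.block.sample` needs that version. Whether RHive emits exactly this statement is an assumption; `block_sample_hql()` below is a hypothetical helper that only builds an HQL string, which could then be run with `rhive.query()`. Because Hive samples whole HDFS blocks, the result is an approximate fraction of the data, not an exact row count.

```r
# Hypothetical helper: build a Hive block-sampling query.
# percent is on Hive's 0-100 scale; `where` is an optional HQL predicate.
block_sample_hql <- function(table, percent, where = NULL) {
  stopifnot(is.numeric(percent), percent > 0, percent <= 100)
  hql <- sprintf("SELECT * FROM %s TABLESAMPLE(%s PERCENT) s",
                 table, format(percent, scientific = FALSE))  # avoid "1e-04" in HQL
  if (!is.null(where)) hql <- paste(hql, "WHERE", where)
  hql
}

# Usage (after rhive.connect()):
# rhive.query(block_sample_hql("o_account", 10))
```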

Working with HDFS from RHive

```r
# List an HDFS directory
rhive.hdfs.ls()
  permission owner      group length      modify-time   file
1  rwxr-xr-x conan supergroup      0 2013-04-24 01:52 /hbase
2  rwxr-xr-x conan supergroup      0 2013-06-23 10:59  /home
3  rwxr-xr-x conan supergroup      0 2013-06-26 11:18 /rhive
4  rwxr-xr-x conan supergroup      0 2013-06-23 13:27   /tmp
5  rwxr-xr-x conan supergroup      0 2013-04-24 19:28  /user

# Show the contents of an HDFS file
rhive.hdfs.cat('/user/hive/warehouse/o_account/part-m-00000')
[email protected] 12:21:39
[email protected] 12:21:39
```