创新实训日记三:cnn模型改进

本周主要工作主要包括以下两个方面:

- 论文阅读,寻找新实现方法

- 现有模型的调整和改进

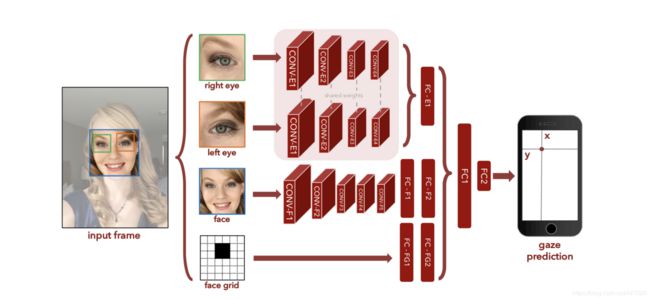

在新读的Eye Tracking for Everyone这篇论文中,作者通过cnns的训练实现了在手机和平板上的视线追踪功能,而且误差在1cm左右,精度较高。

而且对比于之前看的论文(Appearance-Based Gaze Estimation in the Wild)中需要坐标系变换和摄像头位置等参数进行图像的预处理等步骤,新阅读论文中在训练之前需要的图像预处理参数更少,而且由于我们也缺少摄像头等对应的参数,不容易实现原来的方法,我们决定采用新的模型结构在原数据集上进行一下测试

新的模型结构如下图所示

因为原数据集,缺少脸部完整的图像信息,因此在实际使用该模型的时候,我们只使用了左右眼部经过标准化后的图像,利用两个眼部图像分别进行卷积操作,最后喂入全连接层。

测试代码如下(相比于上一篇的cnn结构,添加了另外一只眼睛的图像做卷积操作,同时修改了loss函数为绝对值)

#!/usr/bin/env python3

# -*- coding: utf-8 -*-

""" @author: vali """

# coding:utf8

import tensorflow as tf

import numpy as np

import readMat as rm

import os

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

def weight_variable(shape):

''' 使用卷积神经网络会有很多权重和偏置需要创建,我们可以定义初始化函数便于重复使用 这里我们给权重制造一些随机噪声避免完全对称,使用截断的正态分布噪声,标准差为0.1 :param shape: 需要创建的权重Shape :return: 权重Tensor '''

initial = tf.random_normal(shape,stddev=0.01)

return tf.Variable(initial)

def bias_variable(shape):

''' 偏置生成函数,因为激活函数使用的是ReLU,我们给偏置增加一些小的正值(0.1)避免死亡节点(dead neurons) :param shape: :return: '''

initial = tf.constant(0.1, shape=shape)

return tf.Variable(initial)

def conv2d(x, W):

''' 卷积层接下来要重复使用,tf.nn.conv2d是Tensorflow中的二维卷积函数, :param x: 输入 例如[5, 5, 1, 32]代表 卷积核尺寸为5x5,1个通道,32个不同卷积核 :param W: 卷积的参数 strides:代表卷积模板移动的步长,都是1代表不遗漏的划过图片的每一个点. padding:代表边界处理方式,SAME代表输入输出同尺寸 :return: '''

return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding="SAME")

def max_pool_2x2(x):

''' tf.nn.max_pool是TensorFLow中最大池化函数.我们使用2x2最大池化 因为希望整体上缩小图片尺寸,因而池化层的strides设为横竖两个方向为2步长 :param x: :return: '''

return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")

def train(data,label,test_data,test_label,data1,label1,test_data1,test_label1):

# 使用占位符

x0 = tf.placeholder(tf.float32, [None, 36,60],'x0')# x为特征 right

x1 = tf.placeholder(tf.float32, [None, 36,60],'x1')# x1为特征 left

y0 = tf.placeholder(tf.float32, [None,2],'y0')

y1 = tf.placeholder(tf.float32, [None,2],'y1')

y_ = (y0+y1)/2# y_为label,取平均值

# 卷积中将1x2160转换为36x60x1 [-1,,,]代表样本数量不变 [,,,1]代表通道数

x_image0 = tf.reshape(x0, [-1, 36, 60, 1])

x_image1 = tf.reshape(x1, [-1, 36, 60, 1])

# 第一个卷积层 [5, 5, 1, 32]代表 卷积核尺寸为5x5,1个通道,32个不同卷积核

# 创建滤波器权值-->加偏置-->卷积-->池化

W_conv1 = weight_variable([5, 5, 1, 32])

b_conv1 = bias_variable([32])

h_conv1 = tf.nn.relu(conv2d(x_image0, W_conv1)+b_conv1) #36x60x1 与32个5x5x1滤波器 --> 36x60x32

h_pool1 = max_pool_2x2(h_conv1) # 36x60x32 -->18x30x32

h_conv1_1 = tf.nn.relu(conv2d(x_image1, W_conv1)+b_conv1) #36x60x1 与32个5x5x1滤波器 --> 36x60x32

h_pool1_1 = max_pool_2x2(h_conv1_1) # 36x60x32 -->18x30x32

# 第二层卷积层 卷积核依旧是5x5 通道为32 有64个不同的卷积核

W_conv2 = weight_variable([5, 5, 32, 64])

b_conv2 = bias_variable([64])

h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2) #18x30x32 与64个5x5x32滤波器 --> 18x30x64

h_pool2 = max_pool_2x2(h_conv2) #18x30x64 --> 9x15x64

h_conv2_1 = tf.nn.relu(conv2d(h_pool1_1, W_conv2) + b_conv2) #18x30x32 与64个5x5x32滤波器 --> 18x30x64

h_pool2_1 = max_pool_2x2(h_conv2_1) #18x30x64 --> 9x15x64

#tensor合并

h_pool2_merge = tf.concat([h_pool2,h_pool2_1],0) #18x15x64

# h_pool2_merge的大小为18x15x64 转为1-D 然后做FC层

W_fc1 = weight_variable([18*15*64, 1024])

b_fc1 = bias_variable([1024])

h_pool2_flat = tf.reshape(h_pool2_merge, [-1, 18*15*64]) #18x15x64 --> 17280

h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1) #FC层传播 17280--> 1024

# 使用Dropout层减轻过拟合,通过一个placeholder传入keep_prob比率控制

# 在训练中,我们随机丢弃一部分节点的数据来减轻过拟合,预测时则保留全部数据追求最佳性能

keep_prob = tf.placeholder(tf.float32)

h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

# 将Dropout层的输出连接到一个Softmax层,得到最后的概率输出

W_fc2 = weight_variable([1024, 2]) #2种输出可能

b_fc2 = bias_variable([2])

y_conv = (tf.matmul(h_fc1_drop, W_fc2) + b_fc2)

# 定义损失函数,使用均方误差 同时定义优化器 learning rate = 1e-4

cross_entropy = tf.reduce_mean(tf.abs((y_conv-y_)))

train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

# 定义评测准确率

#accuracy = (1-tf.abs(tf.abs(y_conv[0][0]-y_[0])/y_[0]))*(1-tf.abs(tf.abs(y_conv[0][1]-y_[1])/y_[1]))

accuracy = y_conv

#开始训练

#writer = tf.summary.FileWriter("./", tf.get_default_graph())

with tf.Session() as sess:

init_op = tf.global_variables_initializer() #初始化所有变量

sess.run(init_op)

STEPS = 100

for i in range(STEPS):

#batch = 5

batch_x = [data[i*5]]

batch_y = [label[i*5]]

batch_x1 = [data1[i*5]]

batch_y1 = [label1[i*5]]

for j in range(1,5):

batch_x = np.vstack((batch_x,[data[5*i+j]]))

batch_y = np.vstack((batch_y,[label[5*i+j]]))

batch_x1 = np.vstack((batch_x1,[data1[5*i+j]]))

batch_y1 = np.vstack((batch_y1,[label1[5*i+j]]))

if i % 2 == 0:

#train_accuracy = sess.run(accuracy, feed_dict={x:batch_x , y_:batch_y, keep_prob: 1.0})

train_cross_entropy= sess.run(cross_entropy,feed_dict={x0:batch_x , y0:batch_y,

x1:batch_x , y1:batch_y, keep_prob: 1.0})

print(i,train_cross_entropy)

sess.run(train_step, feed_dict={x0: batch_x, y0: batch_y,

x1: batch_x, y1: batch_y, keep_prob: 0.5})

#test

for i in range(10):

#batch = 5

batch_x = [test_data[5*i]]

batch_y = [test_label[5*i]]

batch_x1 = [test_data1[5*i]]

batch_y1 = [test_label1[5*i]]

for j in range(1,5):

batch_x = np.vstack((batch_x,[test_data[5*i+j]]))

batch_y = np.vstack((batch_y,[test_label[5*i+j]]))

batch_x1 = np.vstack((batch_x1,[test_data1[5*i+j]]))

batch_y1 = np.vstack((batch_y1,[test_label1[5*i+j]]))

#train_accuracy = sess.run(accuracy, feed_dict={x:batch_x , y_:batch_y, keep_prob: 1.0})

res= sess.run(y_conv,feed_dict={x0:batch_x , y0:batch_y,

x1:batch_x , y1:batch_y, keep_prob: 1.0})

loss = res - batch_y

count = 0

len = 0

for k in loss:

if(abs(k[0])<0.1 and abs(k[1])<0.1):

count+=1

len+=1

print(i,count/len)

if __name__=="__main__":

label,data = rm.getdata('day01.mat')

label1,data1 = rm.getdata('day01.mat',x=1)

test_label,test_data=rm.getdata('day02.mat')

test_label1,test_data1 = rm.getdata('day02.mat',x = 1)

train(data,label,test_data,test_label,data1,label1,test_data1,test_label1)