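[Deep Learning in Practice 03] Running YOLO with TensorFlow and a Source Code Walkthrough

This is the third article in the Deep Learning in Practice series. It focuses on running the code and analyzing the source.

If you repost this article, please cite the source: https://blog.csdn.net/c20081052/article/details/80260726

First, the code can be downloaded from: https://github.com/hizhangp/yolo_tensorflow

After downloading, I recommend reading the README carefully.

Structure of this article: I. YOLO source code walkthrough; II. Running the code

I. Source code walkthrough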

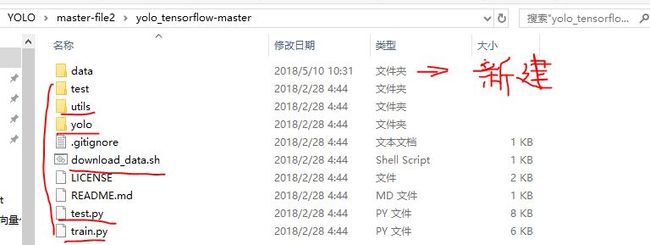

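Download the code archive YOLO_tensorflow-master.zip to a working directory of your choice and unzip it to get the project directory (I created the data folder myself).

To run train.py you need to download the pascal_voc dataset; to run test.py you need a weights file that someone else has already trained.

Put the training dataset in the pascal_voc folder (data/pascal_voc). Download link: https://pan.baidu.com/s/1kWshVhl (password: 89iu)

Put the model parameters in the weights folder. Download link: https://pan.baidu.com/s/1htt9YBE (password: ehw2)

Place the downloaded weights file in the data/weights folder, which is where test.py looks for it by default.

1. Main files in the utils folder: pascal_voc.py and timer.py.

pascal_voc.py, annotated: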

import os
import xml.etree.ElementTree as ET  # parses the VOC XML annotation files
import numpy as np
import cv2
import pickle
import copy
import yolo.config as cfg


class pascal_voc(object):  # data loader for the PASCAL VOC dataset
    def __init__(self, phase, rebuild=False):
        # devkit root: <working dir>/data/pascal_voc/VOCdevkit
        self.devkil_path = os.path.join(cfg.PASCAL_PATH, 'VOCdevkit')
        # devkit data: <working dir>/data/pascal_voc/VOCdevkit/VOC2007
        self.data_path = os.path.join(self.devkil_path, 'VOC2007')
        self.cache_path = cfg.CACHE_PATH  # see config.py in the yolo folder
        self.batch_size = cfg.BATCH_SIZE
        self.image_size = cfg.IMAGE_SIZE
        self.cell_size = cfg.CELL_SIZE
        self.classes = cfg.CLASSES
        # map each class name to an integer index 0, 1, 2, ...
        self.class_to_ind = dict(zip(self.classes, range(len(self.classes))))
        self.flipped = cfg.FLIPPED
        self.phase = phase  # 'train' or 'test'
        self.rebuild = rebuild
        self.cursor = 0  # read position inside gt_labels
        self.epoch = 1
        self.gt_labels = None
        self.prepare()

    def get(self):
        # image batch: batch_size x 448 x 448 x 3
        images = np.zeros(
            (self.batch_size, self.image_size, self.image_size, 3))
        # ground-truth batch: batch_size x 7 x 7 x 25; each cell stores a single
        # box (1 objectness flag + 4 coordinates + 20 class flags), so no extra
        # dimension is built for the second box
        labels = np.zeros(
            (self.batch_size, self.cell_size, self.cell_size, 25))
        count = 0
        while count < self.batch_size:  # fill one batch
            imname = self.gt_labels[self.cursor]['imname']  # image path stored in gt_labels
            flipped = self.gt_labels[self.cursor]['flipped']  # whether this sample is flipped
            images[count, :, :, :] = self.image_read(imname, flipped)
            labels[count, :, :, :] = self.gt_labels[self.cursor]['label']  # objectness, box, and class
            count += 1
            self.cursor += 1
            if self.cursor >= len(self.gt_labels):  # one epoch has been consumed
                np.random.shuffle(self.gt_labels)
                self.cursor = 0
                self.epoch += 1
        return images, labels  # resized, normalized images and their ground-truth labels

    def image_read(self, imname, flipped=False):
        image = cv2.imread(imname)
        image = cv2.resize(image, (self.image_size, self.image_size))
        image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB).astype(np.float32)
        image = (image / 255.0) * 2.0 - 1.0  # scale pixel values to [-1, 1]
        if flipped:
            image = image[:, ::-1, :]  # horizontal flip
        return image

    def prepare(self):  # optionally append flipped samples, then shuffle and return the labels
        gt_labels = self.load_labels()  # load the ground-truth labels
        if self.flipped:  # augment with horizontal flips
            print('Appending horizontally-flipped training examples ...')
            gt_labels_cp = copy.deepcopy(gt_labels)
            for idx in range(len(gt_labels_cp)):
                gt_labels_cp[idx]['flipped'] = True
                # mirror the grid along the column (x) axis
                gt_labels_cp[idx]['label'] =\
                    gt_labels_cp[idx]['label'][:, ::-1, :]
                for i in range(self.cell_size):
                    for j in range(self.cell_size):
                        if gt_labels_cp[idx]['label'][i, j, 0] == 1:
                            # mirror the box center x coordinate as well
                            gt_labels_cp[idx]['label'][i, j, 1] = \
                                self.image_size - 1 -\
                                gt_labels_cp[idx]['label'][i, j, 1]
            gt_labels += gt_labels_cp
        np.random.shuffle(gt_labels)  # shuffle the samples
        self.gt_labels = gt_labels
        return gt_labels

    def load_labels(self):
        # cache file: cache/pascal_train_gt_labels.pkl or cache/pascal_test_gt_labels.pkl
        cache_file = os.path.join(
            self.cache_path, 'pascal_' + self.phase + '_gt_labels.pkl')

        if os.path.isfile(cache_file) and not self.rebuild:
            print('Loading gt_labels from: ' + cache_file)  # reuse the cached labels
            with open(cache_file, 'rb') as f:
                gt_labels = pickle.load(f)
            return gt_labels

        print('Processing gt_labels from: ' + self.data_path)  # build the labels from the raw data

        if not os.path.exists(self.cache_path):  # create the cache directory if needed
            os.makedirs(self.cache_path)

        if self.phase == 'train':
            # training: <working dir>/data/pascal_voc/VOCdevkit/VOC2007/ImageSets/Main/trainval.txt
            txtname = os.path.join(
                self.data_path, 'ImageSets', 'Main', 'trainval.txt')
        else:
            # testing: <working dir>/data/pascal_voc/VOCdevkit/VOC2007/ImageSets/Main/test.txt
            txtname = os.path.join(
                self.data_path, 'ImageSets', 'Main', 'test.txt')
        with open(txtname, 'r') as f:
            self.image_index = [x.strip() for x in f.readlines()]

        gt_labels = []  # list of ground-truth entries
        for index in self.image_index:
            label, num = self.load_pascal_annotation(index)  # label tensor and object count
            if num == 0:  # skip images without objects
                continue
            imname = os.path.join(self.data_path, 'JPEGImages', index + '.jpg')  # image for this index
            gt_labels.append({'imname': imname,
                              'label': label,
                              'flipped': False})
        print('Saving gt_labels to: ' + cache_file)
        with open(cache_file, 'wb') as f:
            # cache the entries (image path, label tensor, flipped flag)
            pickle.dump(gt_labels, f)
        return gt_labels

    def load_pascal_annotation(self, index):  # reads the bounding boxes from one XML file
        """
        Load image and bounding boxes info from XML file in the PASCAL VOC
        format.
        """
        # image file: <working dir>/data/pascal_voc/VOCdevkit/VOC2007/JPEGImages/<index>.jpg
        imname = os.path.join(self.data_path, 'JPEGImages', index + '.jpg')
        im = cv2.imread(imname)
        h_ratio = 1.0 * self.image_size / im.shape[0]  # scale factors from the original size to 448
        w_ratio = 1.0 * self.image_size / im.shape[1]
        # im = cv2.resize(im, [self.image_size, self.image_size])

        label = np.zeros((self.cell_size, self.cell_size, 25))
        filename = os.path.join(self.data_path, 'Annotations', index + '.xml')  # annotation file
        tree = ET.parse(filename)  # parse the XML tree
        objs = tree.findall('object')  # all object entries

        for obj in objs:
            bbox = obj.find('bndbox')  # bounding box of this object
            # Make pixel indexes 0-based, rescale to 448 x 448, and clip to the image
            x1 = max(min((float(bbox.find('xmin').text) - 1) * w_ratio, self.image_size - 1), 0)
            y1 = max(min((float(bbox.find('ymin').text) - 1) * h_ratio, self.image_size - 1), 0)
            x2 = max(min((float(bbox.find('xmax').text) - 1) * w_ratio, self.image_size - 1), 0)
            y2 = max(min((float(bbox.find('ymax').text) - 1) * h_ratio, self.image_size - 1), 0)
            cls_ind = self.class_to_ind[obj.find('name').text.lower().strip()]  # class index
            boxes = [(x2 + x1) / 2.0, (y2 + y1) / 2.0, x2 - x1, y2 - y1]  # convert to (xc, yc, w, h)
            x_ind = int(boxes[0] * self.cell_size / self.image_size)  # grid column containing the center
            y_ind = int(boxes[1] * self.cell_size / self.image_size)  # grid row containing the center
            if label[y_ind, x_ind, 0] == 1:  # the cell already holds an object: keep the first one
                continue
            label[y_ind, x_ind, 0] = 1  # objectness flag
            label[y_ind, x_ind, 1:5] = boxes  # box coordinates
            label[y_ind, x_ind, 5 + cls_ind] = 1  # one-hot class

        return label, len(objs)  # label tensor and the number of objects in the XML file
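To make the label layout concrete, here is a minimal standalone sketch of the per-object arithmetic in load_pascal_annotation. The function name encode_box and the sample box are made up for illustration, and plain Python lists stand in for the NumPy array; it is not code from the repository.

```python
IMAGE_SIZE = 448
CELL_SIZE = 7
CLASSES = ['aeroplane', 'bicycle', 'bird', 'boat', 'bottle', 'bus',
           'car', 'cat', 'chair', 'cow', 'diningtable', 'dog', 'horse',
           'motorbike', 'person', 'pottedplant', 'sheep', 'sofa',
           'train', 'tvmonitor']


def encode_box(xmin, ymin, xmax, ymax, cls_name, img_w, img_h):
    """Hypothetical helper: return (row, col, 25-value vector) for one VOC box."""
    w_ratio = IMAGE_SIZE / img_w
    h_ratio = IMAGE_SIZE / img_h

    def clip(v):
        # keep the coordinate inside the 448 x 448 image
        return max(min(v, IMAGE_SIZE - 1), 0)

    # VOC pixel indexes are 1-based, hence the "- 1"
    x1 = clip((xmin - 1) * w_ratio)
    y1 = clip((ymin - 1) * h_ratio)
    x2 = clip((xmax - 1) * w_ratio)
    y2 = clip((ymax - 1) * h_ratio)
    xc, yc, w, h = (x1 + x2) / 2.0, (y1 + y2) / 2.0, x2 - x1, y2 - y1

    # the grid cell that contains the box center is responsible for the object
    col = int(xc * CELL_SIZE / IMAGE_SIZE)
    row = int(yc * CELL_SIZE / IMAGE_SIZE)

    vec = [0.0] * 25
    vec[0] = 1.0                                # objectness flag
    vec[1:5] = [xc, yc, w, h]                   # box in 448 x 448 pixel units
    vec[5 + CLASSES.index(cls_name)] = 1.0      # one-hot class
    return row, col, vec


# made-up example: a dog in a 500 x 375 image
row, col, vec = encode_box(101, 76, 301, 226, 'dog', img_w=500, img_h=375)
print(row, col, vec[1:5])
```

Note that the box is stored in pixel units of the resized image; loss_layer later divides it by image_size before comparing it with the predictions.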

timer.py, annotated (it is simply used for timing):

import time
import datetime


class Timer(object):
    '''
    A simple timer.
    '''

    def __init__(self):
        self.init_time = time.time()
        self.total_time = 0.
        self.calls = 0
        self.start_time = 0.
        self.diff = 0.
        self.average_time = 0.
        self.remain_time = 0.

    def tic(self):
        # using time.time instead of time.clock because time.clock
        # does not normalize for multithreading
        self.start_time = time.time()  # current system time

    def toc(self, average=True):
        self.diff = time.time() - self.start_time  # time elapsed since the last tic()
        self.total_time += self.diff  # accumulated elapsed time
        self.calls += 1  # number of calls
        self.average_time = self.total_time / self.calls  # average elapsed time per call
        if average:
            return self.average_time
        else:
            return self.diff

    def remain(self, iters, max_iters):  # estimates the time needed for the remaining iterations
        if iters == 0:
            self.remain_time = 0
        else:
            self.remain_time = (time.time() - self.init_time) * \
                (max_iters - iters) / iters
        return str(datetime.timedelta(seconds=int(self.remain_time)))
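The estimate in remain() is a simple proportion: the time elapsed so far, divided by the iterations done, times the iterations left. The sketch below isolates that formula with the elapsed time passed in explicitly so it can be checked by hand; estimate_remaining is a name made up for this illustration.

```python
import datetime


def estimate_remaining(elapsed_seconds, iters_done, max_iters):
    """Hypothetical helper mirroring the arithmetic of Timer.remain()."""
    if iters_done == 0:
        remaining = 0
    else:
        # average seconds per iteration so far, times the iterations left
        remaining = elapsed_seconds * (max_iters - iters_done) / iters_done
    return str(datetime.timedelta(seconds=int(remaining)))


# 10 iterations took 100 s, 100 iterations are left: 1000 s, i.e. 16 min 40 s
print(estimate_remaining(100.0, 10, 110))
```

Because the elapsed time is measured from the construction of the Timer, the estimate also includes data loading and checkpoint saving, not just the training step.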

2. Main files in the yolo folder: config.py and yolo_net.py.

config.py, annotated:

import os

#
# path and dataset parameter
#

DATA_PATH = 'data'
PASCAL_PATH = os.path.join(DATA_PATH, 'pascal_voc')  # <working dir>/data/pascal_voc
CACHE_PATH = os.path.join(PASCAL_PATH, 'cache')  # <working dir>/data/pascal_voc/cache
OUTPUT_DIR = os.path.join(PASCAL_PATH, 'output')  # <working dir>/data/pascal_voc/output
WEIGHTS_DIR = os.path.join(PASCAL_PATH, 'weights')  # <working dir>/data/pascal_voc/weights
WEIGHTS_FILE = None
# WEIGHTS_FILE = os.path.join(DATA_PATH, 'weights', 'YOLO_small.ckpt')

CLASSES = ['aeroplane', 'bicycle', 'bird', 'boat', 'bottle', 'bus',  # object classes
           'car', 'cat', 'chair', 'cow', 'diningtable', 'dog', 'horse',
           'motorbike', 'person', 'pottedplant', 'sheep', 'sofa',
           'train', 'tvmonitor']

FLIPPED = True  # whether to add horizontally flipped samples

#
# model parameter
#

IMAGE_SIZE = 448
CELL_SIZE = 7
BOXES_PER_CELL = 2
ALPHA = 0.1
DISP_CONSOLE = False

OBJECT_SCALE = 1.0  # these four values weight the terms of the loss function
NOOBJECT_SCALE = 1.0
CLASS_SCALE = 2.0
COORD_SCALE = 5.0

#
# solver parameter
#

GPU = ''
LEARNING_RATE = 0.0001
DECAY_STEPS = 30000
DECAY_RATE = 0.1
STAIRCASE = True
BATCH_SIZE = 45
MAX_ITER = 15000
SUMMARY_ITER = 10
SAVE_ITER = 1000

#
# test parameter
#

THRESHOLD = 0.2
IOU_THRESHOLD = 0.5

This file mainly holds the settings used when training the network, plus the two thresholds used at test time.
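One consequence of the solver settings is easy to miss. train.py feeds them to tf.train.exponential_decay, which computes learning_rate * decay_rate ^ (global_step / decay_steps), using integer division when staircase is true. The pure-Python sketch below reproduces that formula (decayed_lr is a name made up here, it is not a TensorFlow function) and shows that with MAX_ITER = 15000 and DECAY_STEPS = 30000 the rate never actually drops during a default run.

```python
def decayed_lr(step, base_lr=0.0001, decay_steps=30000,
               decay_rate=0.1, staircase=True):
    """Hypothetical re-implementation of the exponential decay formula."""
    if staircase:
        exponent = step // decay_steps   # the rate changes in discrete jumps
    else:
        exponent = step / decay_steps    # the rate decays continuously
    return base_lr * decay_rate ** exponent


for step in (1, 15000, 30000):
    print(step, decayed_lr(step))
```

To see a decay within 15000 iterations, one would have to lower DECAY_STEPS or set STAIRCASE to False; whether that helps training is not something this article tests.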

yolo_net.py, annotated:

import numpy as np
import tensorflow as tf
import yolo.config as cfg

slim = tf.contrib.slim


class YOLONet(object):  # the YOLO network
    def __init__(self, is_training=True):
        self.classes = cfg.CLASSES  # object classes
        self.num_class = len(self.classes)  # number of classes: 20
        self.image_size = cfg.IMAGE_SIZE  # input image size: 448
        self.cell_size = cfg.CELL_SIZE  # grid size: 7
        self.boxes_per_cell = cfg.BOXES_PER_CELL  # boxes predicted per grid cell: 2
        # length of the output vector: 7 x 7 x (20 + 2 x 5) = 1470
        self.output_size = (self.cell_size * self.cell_size) *\
            (self.num_class + self.boxes_per_cell * 5)
        self.scale = 1.0 * self.image_size / self.cell_size  # pixels per cell: 448 / 7 = 64
        # output[:boundary1] holds the 7 x 7 x 20 = 980 class probabilities
        self.boundary1 = self.cell_size * self.cell_size * self.num_class
        # output[boundary1:boundary2] holds the 7 x 7 x 2 = 98 box confidences
        # (boundary2 = 1078); the remaining 7 x 7 x 2 x 4 = 392 values are box coordinates
        self.boundary2 = self.boundary1 +\
            self.cell_size * self.cell_size * self.boxes_per_cell

        self.object_scale = cfg.OBJECT_SCALE  # 1.0, weight of the confidence loss where an object exists
        self.noobject_scale = cfg.NOOBJECT_SCALE  # 1.0, weight where no object exists (the paper seems to use 0.5)
        self.class_scale = cfg.CLASS_SCALE  # 2.0, weight of the class loss
        self.coord_scale = cfg.COORD_SCALE  # 5.0, weight of the coordinate loss

        self.learning_rate = cfg.LEARNING_RATE  # learning rate: 0.0001
        self.batch_size = cfg.BATCH_SIZE  # batch size: 45
        self.alpha = cfg.ALPHA  # leaky ReLU slope: 0.1

        # column index of every cell: a [2, 7, 7] array transposed to [7, 7, 2],
        # so that offset[row, col, box] == col
        self.offset = np.transpose(np.reshape(np.array(
            [np.arange(self.cell_size)] * self.cell_size * self.boxes_per_cell),
            (self.boxes_per_cell, self.cell_size, self.cell_size)), (1, 2, 0))

        # placeholder for the input images: 448 x 448, 3 channels
        self.images = tf.placeholder(
            tf.float32, [None, self.image_size, self.image_size, 3],
            name='images')
        # network output (the predictions)
        self.logits = self.build_network(
            self.images, num_outputs=self.output_size, alpha=self.alpha,
            is_training=is_training)

        if is_training:
            # placeholder for the labels (ground truth): [None, 7, 7, 25]
            self.labels = tf.placeholder(
                tf.float32,
                [None, self.cell_size, self.cell_size, 5 + self.num_class])
            self.loss_layer(self.logits, self.labels)  # register the loss terms
            self.total_loss = tf.losses.get_total_loss()  # sum of all registered losses, including regularization
            tf.summary.scalar('total_loss', self.total_loss)

    def build_network(self,  # builds the network: conv layers + pooling layers + fully connected layers
                      images,  # input images: [None, 448, 448, 3]
                      num_outputs,  # length of the output vector: 7 x 7 x 30 = 1470
                      alpha,
                      keep_prob=0.5,  # dropout keep probability
                      is_training=True,
                      scope='yolo'):  # variable scope name
        with tf.variable_scope(scope):
            with slim.arg_scope(
                [slim.conv2d, slim.fully_connected],
                activation_fn=leaky_relu(alpha),  # leaky ReLU activation
                weights_regularizer=slim.l2_regularizer(0.0005),  # L2 weight regularization
                # weights drawn from a truncated normal (mean 0.0, stddev 0.01)
                weights_initializer=tf.truncated_normal_initializer(0.0, 0.01)
            ):
                # zero-pad 3 rows/columns on every side of each image; the batch
                # and channel dimensions are not padded -> 454 x 454 x 3
                net = tf.pad(
                    images, np.array([[0, 0], [3, 3], [3, 3], [0, 0]]),
                    name='pad_1')
                # 64 filters, 7x7 kernel, stride 2; the input is already padded,
                # so padding='VALID' is used -> 224 x 224 x 64
                net = slim.conv2d(
                    net, 64, 7, 2, padding='VALID', scope='conv_2')
                net = slim.max_pool2d(net, 2, padding='SAME', scope='pool_3')  # 2x2 max pool, stride 2 -> 112 x 112 x 64
                net = slim.conv2d(net, 192, 3, scope='conv_4')  # 3x3 conv, 192 filters -> 112 x 112 x 192
                net = slim.max_pool2d(net, 2, padding='SAME', scope='pool_5')  # 2x2 max pool, stride 2 -> 56 x 56 x 192
                net = slim.conv2d(net, 128, 1, scope='conv_6')  # 1x1 conv -> 56 x 56 x 128
                net = slim.conv2d(net, 256, 3, scope='conv_7')  # 3x3 conv -> 56 x 56 x 256
                net = slim.conv2d(net, 256, 1, scope='conv_8')  # 1x1 conv -> 56 x 56 x 256
                net = slim.conv2d(net, 512, 3, scope='conv_9')  # 3x3 conv -> 56 x 56 x 512
                net = slim.max_pool2d(net, 2, padding='SAME', scope='pool_10')  # 2x2 max pool, stride 2 -> 28 x 28 x 512
                net = slim.conv2d(net, 256, 1, scope='conv_11')  # four (1x1, 256) + (3x3, 512) pairs, all 28 x 28
                net = slim.conv2d(net, 512, 3, scope='conv_12')
                net = slim.conv2d(net, 256, 1, scope='conv_13')
                net = slim.conv2d(net, 512, 3, scope='conv_14')
                net = slim.conv2d(net, 256, 1, scope='conv_15')
                net = slim.conv2d(net, 512, 3, scope='conv_16')
                net = slim.conv2d(net, 256, 1, scope='conv_17')
                net = slim.conv2d(net, 512, 3, scope='conv_18')
                net = slim.conv2d(net, 512, 1, scope='conv_19')  # 1x1 conv -> 28 x 28 x 512
                net = slim.conv2d(net, 1024, 3, scope='conv_20')  # 3x3 conv -> 28 x 28 x 1024
                net = slim.max_pool2d(net, 2, padding='SAME', scope='pool_21')  # 2x2 max pool, stride 2 -> 14 x 14 x 1024
                net = slim.conv2d(net, 512, 1, scope='conv_22')  # two (1x1, 512) + (3x3, 1024) pairs, all 14 x 14
                net = slim.conv2d(net, 1024, 3, scope='conv_23')
                net = slim.conv2d(net, 512, 1, scope='conv_24')
                net = slim.conv2d(net, 1024, 3, scope='conv_25')
                net = slim.conv2d(net, 1024, 3, scope='conv_26')  # 3x3 conv -> 14 x 14 x 1024
                # zero-pad 1 row/column on every side (batch and channel
                # dimensions untouched) -> 16 x 16 x 1024
                net = tf.pad(
                    net, np.array([[0, 0], [1, 1], [1, 1], [0, 0]]),
                    name='pad_27')
                # already padded, so padding='VALID'; 3x3 kernel, stride 2 -> 7 x 7 x 1024
                net = slim.conv2d(
                    net, 1024, 3, 2, padding='VALID', scope='conv_28')
                net = slim.conv2d(net, 1024, 3, scope='conv_29')  # two 3x3 convs with 1024 filters
                net = slim.conv2d(net, 1024, 3, scope='conv_30')  # -> 7 x 7 x 1024
                net = tf.transpose(net, [0, 3, 1, 2], name='trans_31')  # -> [batch, 1024, 7, 7]
                net = slim.flatten(net, scope='flat_32')  # -> [batch, 1024 * 7 * 7 = 50176]
                net = slim.fully_connected(net, 512, scope='fc_33')  # fully connected -> [batch, 512]
                net = slim.fully_connected(net, 4096, scope='fc_34')  # fully connected -> [batch, 4096]
                net = slim.dropout(  # dropout against overfitting
                    net, keep_prob=keep_prob, is_training=is_training,
                    scope='dropout_35')
                net = slim.fully_connected(  # linear output layer -> [batch, 1470]
                    net, num_outputs, activation_fn=None, scope='fc_36')
        return net  # [batch, 7 * 7 * 30]

    def calc_iou(self, boxes1, boxes2, scope='iou'):  # IoU between predicted boxes and ground truth
        """calculate ious
        Args:
          boxes1: 5-D tensor [BATCH_SIZE, CELL_SIZE, CELL_SIZE, BOXES_PER_CELL, 4]  ====> (x_center, y_center, w, h)
          boxes2: 5-D tensor [BATCH_SIZE, CELL_SIZE, CELL_SIZE, BOXES_PER_CELL, 4] ===> (x_center, y_center, w, h)
        Return:
          iou: 4-D tensor [BATCH_SIZE, CELL_SIZE, CELL_SIZE, BOXES_PER_CELL]
        """
        with tf.variable_scope(scope):
            # transform (x_center, y_center, w, h) to (x1, y1, x2, y2)
            boxes1_t = tf.stack([boxes1[..., 0] - boxes1[..., 2] / 2.0,  # x - w/2 = x1 (top left)
                                 boxes1[..., 1] - boxes1[..., 3] / 2.0,  # y - h/2 = y1 (top left)
                                 boxes1[..., 0] + boxes1[..., 2] / 2.0,  # x + w/2 = x2 (bottom right)
                                 boxes1[..., 1] + boxes1[..., 3] / 2.0],  # y + h/2 = y2 (bottom right)
                                axis=-1)  # stack along a new last axis

            boxes2_t = tf.stack([boxes2[..., 0] - boxes2[..., 2] / 2.0,
                                 boxes2[..., 1] - boxes2[..., 3] / 2.0,
                                 boxes2[..., 0] + boxes2[..., 2] / 2.0,
                                 boxes2[..., 1] + boxes2[..., 3] / 2.0],
                                axis=-1)

            # calculate the left up point & right down point of the overlap region
            lu = tf.maximum(boxes1_t[..., :2], boxes2_t[..., :2])
            rd = tf.minimum(boxes1_t[..., 2:], boxes2_t[..., 2:])

            # intersection
            intersection = tf.maximum(0.0, rd - lu)  # overlap width and height (0 if disjoint)
            inter_square = intersection[..., 0] * intersection[..., 1]  # overlap area

            # calculate the boxs1 square and boxs2 square
            square1 = boxes1[..., 2] * boxes1[..., 3]  # box1.w * box1.h
            square2 = boxes2[..., 2] * boxes2[..., 3]  # box2.w * box2.h

            union_square = tf.maximum(square1 + square2 - inter_square, 1e-10)

        return tf.clip_by_value(inter_square / union_square, 0.0, 1.0)  # clip the IoU to [0, 1]

    def loss_layer(self, predicts, labels, scope='loss_layer'):  # defines the loss function
        with tf.variable_scope(scope):
            predict_classes = tf.reshape(  # predicted class probabilities: batch x 7 x 7 x 20
                predicts[:, :self.boundary1],
                [self.batch_size, self.cell_size, self.cell_size, self.num_class])
            predict_scales = tf.reshape(  # predicted box confidences: batch x 7 x 7 x 2
                predicts[:, self.boundary1:self.boundary2],
                [self.batch_size, self.cell_size, self.cell_size, self.boxes_per_cell])
            predict_boxes = tf.reshape(  # predicted boxes: batch x 7 x 7 x 2 x 4 (four coordinates per box)
                predicts[:, self.boundary2:],
                [self.batch_size, self.cell_size, self.cell_size, self.boxes_per_cell, 4])

            response = tf.reshape(  # label channel 0: whether the cell contains an object
                labels[..., 0],
                [self.batch_size, self.cell_size, self.cell_size, 1])
            boxes = tf.reshape(  # label channels 1-4: box coordinates
                labels[..., 1:5],
                [self.batch_size, self.cell_size, self.cell_size, 1, 4])
            # each cell predicts boxes_per_cell boxes, so tile the ground-truth box
            # along that axis, then normalize the coordinates by the image size
            boxes = tf.tile(
                boxes, [1, 1, 1, self.boxes_per_cell, 1]) / self.image_size
            classes = labels[..., 5:]  # label channels 5-24: one-hot class

            offset = tf.reshape(
                tf.constant(self.offset, dtype=tf.float32),  # reshape offset from 7 x 7 x 2 to 1 x 7 x 7 x 2
                [1, self.cell_size, self.cell_size, self.boxes_per_cell])
            offset = tf.tile(offset, [self.batch_size, 1, 1, 1])  # tile to batch x 7 x 7 x 2
            # swap the row and column axes to get the row index of every cell;
            # this relies on the grid being square (it would not work for 7 x 8)
            offset_tran = tf.transpose(offset, (0, 2, 1, 3))
            predict_boxes_tran = tf.stack(
                [(predict_boxes[..., 0] + offset) / self.cell_size,  # (predicted x + column offset) / 7
                 (predict_boxes[..., 1] + offset_tran) / self.cell_size,  # (predicted y + row offset) / 7
                 tf.square(predict_boxes[..., 2]),  # the network predicts sqrt(w), so square it
                 tf.square(predict_boxes[..., 3])], axis=-1)  # same for h

            iou_predict_truth = self.calc_iou(predict_boxes_tran, boxes)  # IoU of every predicted box with the truth

            # calculate I tensor [BATCH_SIZE, CELL_SIZE, CELL_SIZE, BOXES_PER_CELL] (object mask)
            # largest IoU among the boxes of each cell (axis 3 is boxes_per_cell)
            object_mask = tf.reduce_max(iou_predict_truth, 3, keep_dims=True)
            # 1 for the box with the highest IoU in cells that contain an object, else 0
            object_mask = tf.cast(
                (iou_predict_truth >= object_mask), tf.float32) * response

            # calculate no_I tensor [CELL_SIZE, CELL_SIZE, BOXES_PER_CELL] (no-object mask)
            # a tensor of ones with the shape of object_mask, minus object_mask
            noobject_mask = tf.ones_like(
                object_mask, dtype=tf.float32) - object_mask

            # ground-truth boxes converted to the network's parametrization:
            # offsets relative to the cell and square roots of w and h
            boxes_tran = tf.stack(
                [boxes[..., 0] * self.cell_size - offset,
                 boxes[..., 1] * self.cell_size - offset_tran,
                 tf.sqrt(boxes[..., 2]),
                 tf.sqrt(boxes[..., 3])], axis=-1)

            # class_loss
            class_delta = response * (predict_classes - classes)  # class error, only in cells with an object
            # sum of squared errors over 7 x 7 x 20, averaged over the batch,
            # then scaled by class_scale
            class_loss = tf.reduce_mean(
                tf.reduce_sum(tf.square(class_delta), axis=[1, 2, 3]),
                name='class_loss') * self.class_scale

            # object_loss: confidence error of the boxes responsible for an object
            object_delta = object_mask * (predict_scales - iou_predict_truth)
            object_loss = tf.reduce_mean(
                tf.reduce_sum(tf.square(object_delta), axis=[1, 2, 3]),
                name='object_loss') * self.object_scale

            # noobject_loss: confidence error of the boxes without an object
            noobject_delta = noobject_mask * predict_scales
            noobject_loss = tf.reduce_mean(
                tf.reduce_sum(tf.square(noobject_delta), axis=[1, 2, 3]),
                name='noobject_loss') * self.noobject_scale

            # coord_loss
            coord_mask = tf.expand_dims(object_mask, 4)  # add a trailing axis to match the box tensor
            # only box j of cell i that is responsible for the object contributes
            boxes_delta = coord_mask * (predict_boxes - boxes_tran)
            coord_loss = tf.reduce_mean(
                tf.reduce_sum(tf.square(boxes_delta), axis=[1, 2, 3, 4]),  # squared differences of the four coordinates
                name='coord_loss') * self.coord_scale

            tf.losses.add_loss(class_loss)
            tf.losses.add_loss(object_loss)
            tf.losses.add_loss(noobject_loss)
            tf.losses.add_loss(coord_loss)

            tf.summary.scalar('class_loss', class_loss)  # the summaries below are for TensorBoard
            tf.summary.scalar('object_loss', object_loss)
            tf.summary.scalar('noobject_loss', noobject_loss)
            tf.summary.scalar('coord_loss', coord_loss)

            tf.summary.histogram('boxes_delta_x', boxes_delta[..., 0])
            tf.summary.histogram('boxes_delta_y', boxes_delta[..., 1])
            tf.summary.histogram('boxes_delta_w', boxes_delta[..., 2])
            tf.summary.histogram('boxes_delta_h', boxes_delta[..., 3])
            tf.summary.histogram('iou', iou_predict_truth)

def leaky_relu(alpha):  # returns a leaky ReLU activation with the given slope
    def op(inputs):
        return tf.nn.leaky_relu(inputs, alpha=alpha, name='leaky_relu')
    return op
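The layout of the 1470-value output vector and the box parametrization used in loss_layer can be checked with a few lines of plain Python. This is only a sketch under the configuration shown above; decode_box is a name made up for illustration and handles a single box rather than the whole tensor.

```python
CELL_SIZE = 7
NUM_CLASS = 20
BOXES_PER_CELL = 2
IMAGE_SIZE = 448

# layout of the flat output vector, as in YOLONet.__init__
boundary1 = CELL_SIZE * CELL_SIZE * NUM_CLASS                     # class probabilities
boundary2 = boundary1 + CELL_SIZE * CELL_SIZE * BOXES_PER_CELL    # + box confidences
output_size = CELL_SIZE * CELL_SIZE * (NUM_CLASS + BOXES_PER_CELL * 5)


def decode_box(raw, row, col):
    """Hypothetical helper: turn one raw prediction into pixel coordinates.

    raw is (x_offset, y_offset, sqrt_w, sqrt_h) as predicted for the
    grid cell at (row, col).
    """
    x_off, y_off, sqrt_w, sqrt_h = raw
    xc = (x_off + col) / CELL_SIZE * IMAGE_SIZE   # the column index shifts x
    yc = (y_off + row) / CELL_SIZE * IMAGE_SIZE   # the row index shifts y
    w = sqrt_w ** 2 * IMAGE_SIZE                  # the network predicts sqrt(w)
    h = sqrt_h ** 2 * IMAGE_SIZE                  # and sqrt(h)
    return xc, yc, w, h


print(boundary1, boundary2, output_size)
print(decode_box((0.5, 0.5, 0.5, 0.5), row=3, col=3))
```

A box predicted at the middle of the central cell with sqrt(w) = sqrt(h) = 0.5 therefore lands at the image center with a quarter of the image width and height. boxes_tran in loss_layer applies the inverse mapping to the ground truth.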

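The code I downloaded is fairly recent and differs somewhat from the annotations in some blog posts online. This file builds the YOLO network structure, so it is worth taking the time to understand it properly.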
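The spatial sizes inside build_network are easy to get wrong, so here is a small sketch that traces them. It assumes TensorFlow's usual output-size rules (ceil(size / stride) for 'SAME' padding, floor((size - kernel) / stride) + 1 for 'VALID') and only follows the layers that change the resolution; conv_out and trace_sizes are names made up for this illustration.

```python
import math


def conv_out(size, kernel, stride, padding):
    """Spatial output size of a conv or pooling layer."""
    if padding == 'SAME':
        return math.ceil(size / stride)
    return (size - kernel) // stride + 1   # 'VALID'


def trace_sizes(image_size=448):
    """Follow the layers of build_network that change the spatial size."""
    sizes = {}
    s = image_size + 2 * 3                                  # pad_1: 3 pixels per side
    s = sizes['conv_2'] = conv_out(s, 7, 2, 'VALID')
    s = sizes['pool_3'] = conv_out(s, 2, 2, 'SAME')
    s = sizes['pool_5'] = conv_out(s, 2, 2, 'SAME')
    s = sizes['pool_10'] = conv_out(s, 2, 2, 'SAME')
    s = sizes['pool_21'] = conv_out(s, 2, 2, 'SAME')
    s = s + 2 * 1                                           # pad_27: 1 pixel per side
    s = sizes['conv_28'] = conv_out(s, 3, 2, 'VALID')
    return sizes


sizes = trace_sizes()
flat_features = sizes['conv_28'] ** 2 * 1024   # input width of fc_33
print(sizes, flat_features)
```

All other convolutions use stride 1 with 'SAME' padding and leave the resolution unchanged, so the feature map entering the fully connected layers is 7 x 7 x 1024.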

3. train.py and test.py

train.py can be used to train your own weights file; the code as provided trains on the pascal_voc dataset. Annotated code:

import os
import argparse
import datetime
import tensorflow as tf
import yolo.config as cfg
from yolo.yolo_net import YOLONet
from utils.timer import Timer
from utils.pascal_voc import pascal_voc

slim = tf.contrib.slim  # TF-Slim, the lightweight high-level API TensorFlow introduced in 2016

# This script trains your own network weights on the pascal_voc 2007 data.


class Solver(object):

    def __init__(self, net, data):
        self.net = net
        self.data = data
        self.weights_file = cfg.WEIGHTS_FILE  # weights file, None by default
        self.max_iter = cfg.MAX_ITER  # 15000 by default
        self.initial_learning_rate = cfg.LEARNING_RATE  # initial learning rate: 0.0001
        self.decay_steps = cfg.DECAY_STEPS  # decay steps: 30000
        self.decay_rate = cfg.DECAY_RATE  # decay rate: 0.1
        self.staircase = cfg.STAIRCASE
        self.summary_iter = cfg.SUMMARY_ITER  # write a summary every 10 steps
        self.save_iter = cfg.SAVE_ITER  # save a checkpoint every 1000 steps
        self.output_dir = os.path.join(
            cfg.OUTPUT_DIR, datetime.datetime.now().strftime('%Y_%m_%d_%H_%M'))  # output/YYYY_MM_DD_HH_MM
        if not os.path.exists(self.output_dir):
            os.makedirs(self.output_dir)
        self.save_cfg()

        self.variable_to_restore = tf.global_variables()
        self.saver = tf.train.Saver(self.variable_to_restore, max_to_keep=None)
        self.ckpt_file = os.path.join(self.output_dir, 'yolo')  # checkpoint prefix: <output dir>/yolo
        self.summary_op = tf.summary.merge_all()
        self.writer = tf.summary.FileWriter(self.output_dir, flush_secs=60)

        self.global_step = tf.train.create_global_step()
        # exponentially decaying learning rate:
        # learning_rate = initial_learning_rate * decay_rate ^ (global_step / decay_steps)
        self.learning_rate = tf.train.exponential_decay(
            self.initial_learning_rate, self.global_step, self.decay_steps,
            self.decay_rate, self.staircase, name='learning_rate')
        self.optimizer = tf.train.GradientDescentOptimizer(
            learning_rate=self.learning_rate)
        self.train_op = slim.learning.create_train_op(
            self.net.total_loss, self.optimizer, global_step=self.global_step)

        gpu_options = tf.GPUOptions()
        config = tf.ConfigProto(gpu_options=gpu_options)
        self.sess = tf.Session(config=config)
        self.sess.run(tf.global_variables_initializer())

        if self.weights_file is not None:  # a weights file was given: restore from it
            print('Restoring weights from: ' + self.weights_file)
            self.saver.restore(self.sess, self.weights_file)

        self.writer.add_graph(self.sess.graph)

    def train(self):

        train_timer = Timer()  # times the training steps
        load_timer = Timer()  # times the data loading

        for step in range(1, self.max_iter + 1):  # up to 15000 iterations

            load_timer.tic()  # start timing the data loading
            images, labels = self.data.get()  # next batch of images and ground-truth labels
            load_timer.toc()  # stop timing the data loading
            feed_dict = {self.net.images: images,  # feed the images and labels to the placeholders
                         self.net.labels: labels}

            if step % self.summary_iter == 0:  # every 10 steps: also record a summary
                if step % (self.summary_iter * 10) == 0:  # every 100 steps: also fetch the loss and print a log line

                    train_timer.tic()  # start timing the training step
                    summary_str, loss, _ = self.sess.run(
                        [self.summary_op, self.net.total_loss, self.train_op],  # train and return the loss
                        feed_dict=feed_dict)
                    train_timer.toc()  # stop timing the training step

                    # log line; the pieces are wrapped in parentheses so that they form
                    # one string before format() is applied
                    log_str = ('{} Epoch: {}, Step: {}, Learning rate: {},'
                               ' Loss: {:5.3f}\nSpeed: {:.3f}s/iter,'
                               ' Load: {:.3f}s/iter, Remain: {}').format(
                        datetime.datetime.now().strftime('%m-%d %H:%M:%S'),
                        self.data.epoch,
                        int(step),
                        round(self.learning_rate.eval(session=self.sess), 6),
                        loss,
                        train_timer.average_time,
                        load_timer.average_time,
                        train_timer.remain(step, self.max_iter))
                    print(log_str)

                else:  # train and time the step, without printing
                    train_timer.tic()
                    summary_str, _ = self.sess.run(
                        [self.summary_op, self.train_op],
                        feed_dict=feed_dict)
                    train_timer.toc()

                self.writer.add_summary(summary_str, step)  # write the summary every 10 steps

            else:  # all other steps: train and time only, no summary
                train_timer.tic()
                self.sess.run(self.train_op, feed_dict=feed_dict)
                train_timer.toc()

            if step % self.save_iter == 0:  # save a checkpoint every 1000 steps
                print('{} Saving checkpoint file to: {}'.format(
                    datetime.datetime.now().strftime('%m-%d %H:%M:%S'),
                    self.output_dir))
                self.saver.save(
                    self.sess, self.ckpt_file, global_step=self.global_step)

    def save_cfg(self):  # saves the current configuration

        with open(os.path.join(self.output_dir, 'config.txt'), 'w') as f:  # written to <output dir>/config.txt
            cfg_dict = cfg.__dict__
            for key in sorted(cfg_dict.keys()):
                if key[0].isupper():  # only the upper-case settings
                    cfg_str = '{}: {}\n'.format(key, cfg_dict[key])
                    f.write(cfg_str)


def update_config_paths(data_dir, weights_file):
    cfg.DATA_PATH = data_dir
    cfg.PASCAL_PATH = os.path.join(data_dir, 'pascal_voc')
    cfg.CACHE_PATH = os.path.join(cfg.PASCAL_PATH, 'cache')
    cfg.OUTPUT_DIR = os.path.join(cfg.PASCAL_PATH, 'output')
    cfg.WEIGHTS_DIR = os.path.join(cfg.PASCAL_PATH, 'weights')  # weights are expected in pascal_voc/weights

    cfg.WEIGHTS_FILE = os.path.join(cfg.WEIGHTS_DIR, weights_file)


def main():
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', default="YOLO_small.ckpt", type=str)
    parser.add_argument('--data_dir', default="data", type=str)
    parser.add_argument('--threshold', default=0.2, type=float)
    parser.add_argument('--iou_threshold', default=0.5, type=float)
    parser.add_argument('--gpu', default='', type=str)
    args = parser.parse_args()

    if args.gpu is not None:  # copy the --gpu argument into the configuration
        cfg.GPU = args.gpu

    # if the given data directory differs from the configured one, update the paths;
    # note that cfg.WEIGHTS_FILE (and thus --weights) is only set inside this call
    if args.data_dir != cfg.DATA_PATH:
        update_config_paths(args.data_dir, args.weights)

    os.environ['CUDA_VISIBLE_DEVICES'] = cfg.GPU

    yolo = YOLONet()  # build the network
    pascal = pascal_voc('train')  # build the training data loader

    solver = Solver(yolo, pascal)  # combine the network and the data in a solver

    print('Start training ...')
    solver.train()
    print('Done training.')


if __name__ == '__main__':

    # example: python train.py --weights YOLO_small.ckpt --gpu 0
    # (this selects GPU 0; the default for --gpu is an empty string)
    main()
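The nested conditions in Solver.train() decide what happens besides the weight update at each step. As a reading aid, the sketch below lists those actions for a given step under the default SUMMARY_ITER = 10 and SAVE_ITER = 1000; actions_for_step is a name made up for this illustration and does not exist in the repository.

```python
def actions_for_step(step, summary_iter=10, save_iter=1000):
    """Hypothetical helper: what Solver.train() does at a given step."""
    actions = ['train']                       # the train op runs on every step
    if step % summary_iter == 0:
        actions.append('summary')             # TensorBoard summary is written
        if step % (summary_iter * 10) == 0:
            actions.append('print')           # loss and timing are printed
    if step % save_iter == 0:
        actions.append('save')                # a checkpoint is saved
    return actions


for step in (7, 10, 100, 1000):
    print(step, actions_for_step(step))
```

With the defaults, a full run of 15000 steps writes 1500 summaries, prints 150 log lines, and saves 15 checkpoints.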

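If you only want to try out the YOLO model, you can load weights trained by someone else (the download link is given at the start of this article), or load weights produced by train.py. test.py, annotated: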

import os
import cv2
import argparse
import numpy as np
import tensorflow as tf
import yolo.config as cfg
from yolo.yolo_net import YOLONet
from utils.timer import Timer

# This script loads a trained weights file and runs detection; the weights can be
# the downloaded YOLO_small.ckpt or ones you trained yourself.


class Detector(object):

    def __init__(self, net, weight_file):
        self.net = net
        self.weights_file = weight_file

        self.classes = cfg.CLASSES
        self.num_class = len(self.classes)
        self.image_size = cfg.IMAGE_SIZE
        self.cell_size = cfg.CELL_SIZE
        self.boxes_per_cell = cfg.BOXES_PER_CELL
        self.threshold = cfg.THRESHOLD
        self.iou_threshold = cfg.IOU_THRESHOLD
        self.boundary1 = self.cell_size * self.cell_size * self.num_class
        self.boundary2 = self.boundary1 +\
            self.cell_size * self.cell_size * self.boxes_per_cell

        self.sess = tf.Session()
        self.sess.run(tf.global_variables_initializer())

        print('Restoring weights from: ' + self.weights_file)
        self.saver = tf.train.Saver()
        self.saver.restore(self.sess, self.weights_file)  # load the weights

    def draw_result(self, img, result):  # draws boxes and class/score labels for the detections on img
        for i in range(len(result)):  # one iteration per detected object
            x = int(result[i][1])  # box center x
            y = int(result[i][2])  # box center y
            w = int(result[i][3] / 2)  # half of the box width
            h = int(result[i][4] / 2)  # half of the box height
            cv2.rectangle(img, (x - w, y - h), (x + w, y + h), (0, 255, 0), 2)  # the box itself
            cv2.rectangle(img, (x - w, y - h - 20),  # filled gray bar for the class name and score
                          (x + w, y - h), (125, 125, 125), -1)
            # pick the anti-aliasing flag according to the OpenCV version
            lineType = cv2.LINE_AA if cv2.__version__ > '3' else cv2.CV_AA
            cv2.putText(
                img, result[i][0] + ' : %.2f' % result[i][5],  # score printed with two decimals
                (x - w + 5, y - h - 7), cv2.FONT_HERSHEY_SIMPLEX, 0.5,
                (0, 0, 0), 1, lineType)

    def detect(self, img):  # runs object detection on one image
        img_h, img_w, _ = img.shape
        inputs = cv2.resize(img, (self.image_size, self.image_size))  # resize to 448 x 448
        inputs = cv2.cvtColor(inputs, cv2.COLOR_BGR2RGB).astype(np.float32)  # OpenCV loads BGR; convert to RGB
        inputs = (inputs / 255.0) * 2.0 - 1.0  # scale pixel values to [-1, 1]
        inputs = np.reshape(inputs, (1, self.image_size, self.image_size, 3))  # shape [1, 448, 448, 3]

        result = self.detect_from_cvmat(inputs)[0]

        for i in range(len(result)):
            # detections are in 448 x 448 coordinates; map them back to the original image size
            result[i][1] *= (1.0 * img_w / self.image_size)
            result[i][2] *= (1.0 * img_h / self.image_size)
            result[i][3] *= (1.0 * img_w / self.image_size)
            result[i][4] *= (1.0 * img_h / self.image_size)

        return result  # detections in the coordinates of the original image

    def detect_from_cvmat(self, inputs):  # inputs: [1, 448, 448, 3]
        net_output = self.sess.run(self.net.logits,  # raw network output
                                   feed_dict={self.net.images: inputs})
        results = []
        for i in range(net_output.shape[0]):  # loop over the images in the batch
            results.append(self.interpret_output(net_output[i]))

        return results  # detections in 448 x 448 coordinates, one list per image

    def interpret_output(self, output):
        # class-specific score of every box (98 boxes in total): [7, 7, 2, 20]
        probs = np.zeros((self.cell_size, self.cell_size,
                          self.boxes_per_cell, self.num_class))
        # output[0:7*7*20]: the 980 class probabilities; they are predicted per
        # cell and shared by both boxes of the cell, hence the shape [7, 7, 20]
        class_probs = np.reshape(
            output[0:self.boundary1],
            (self.cell_size, self.cell_size, self.num_class))
        # output[7*7*20:7*7*22]: 98 values reshaped to [7, 7, 2], the confidence
        # that each of the 98 boxes contains an object
        scales = np.reshape(
            output[self.boundary1:self.boundary2],
            (self.cell_size, self.cell_size, self.boxes_per_cell))
        # output[7*7*22:]: the coordinates of every box, reshaped to [7, 7, 2, 4]
        boxes = np.reshape(
            output[self.boundary2:],
            (self.cell_size, self.cell_size, self.boxes_per_cell, 4))
        offset = np.array(
            [np.arange(self.cell_size)] * self.cell_size * self.boxes_per_cell)
        offset = np.transpose(
            np.reshape(
                offset,
                [self.boxes_per_cell, self.cell_size, self.cell_size]),  # offset: [2, 7, 7] -> [7, 7, 2]
            (1, 2, 0))

        boxes[:, :, :, 0] += offset  # add the column index to x
        boxes[:, :, :, 1] += np.transpose(offset, (1, 0, 2))  # add the row index to y
        boxes[:, :, :, :2] = 1.0 * boxes[:, :, :, 0:2] / self.cell_size
        boxes[:, :, :, 2:] = np.square(boxes[:, :, :, 2:])  # the network predicts sqrt(w) and sqrt(h)

        boxes *= self.image_size  # convert from fractions of the image to 448 x 448 pixel coordinates

        for i in range(self.boxes_per_cell):
            for j in range(self.num_class):
                # confidence of box i in a cell * probability of class j in that cell
                probs[:, :, i, j] = np.multiply(
                    class_probs[:, :, j], scales[:, :, i])

        filter_mat_probs = np.array(probs >= self.threshold, dtype='bool')  # True where the score is at least 0.2
        filter_mat_boxes = np.nonzero(filter_mat_probs)  # indices (row, col, box, class) of the True entries
        boxes_filtered = boxes[filter_mat_boxes[0],
                               filter_mat_boxes[1], filter_mat_boxes[2]]
        probs_filtered = probs[filter_mat_probs]
        classes_num_filtered = np.argmax(
            filter_mat_probs, axis=3)[
            filter_mat_boxes[0], filter_mat_boxes[1], filter_mat_boxes[2]]

        argsort = np.array(np.argsort(probs_filtered))[::-1]  # sort by score, highest first
        boxes_filtered = boxes_filtered[argsort]  # boxes in sorted order
        probs_filtered = probs_filtered[argsort]  # scores in sorted order
        classes_num_filtered = classes_num_filtered[argsort]  # classes in sorted order

        # non-maximum suppression: zero the score of any box that overlaps a
        # higher-scoring box by more than iou_threshold
        for i in range(len(boxes_filtered)):
            if probs_filtered[i] == 0:
                continue
            for j in range(i + 1, len(boxes_filtered)):
                if self.iou(boxes_filtered[i], boxes_filtered[j]) > self.iou_threshold:
                    probs_filtered[j] = 0.0

        filter_iou = np.array(probs_filtered > 0.0, dtype='bool')
        boxes_filtered = boxes_filtered[filter_iou]
        probs_filtered = probs_filtered[filter_iou]
        classes_num_filtered = classes_num_filtered[filter_iou]

        result = []
        for i in range(len(boxes_filtered)):
            result.append(
                [self.classes[classes_num_filtered[i]],
                 boxes_filtered[i][0],
                 boxes_filtered[i][1],
                 boxes_filtered[i][2],
                 boxes_filtered[i][3],
                 probs_filtered[i]])

        return result  # class name, box (xc, yc, w, h), and score of every kept detection

    def iou(self, box1, box2):
        # tb: width of the overlap region (extent along x)
        tb = min(box1[0] + 0.5 * box1[2], box2[0] + 0.5 * box2[2]) - \
            max(box1[0] - 0.5 * box1[2], box2[0] - 0.5 * box2[2])
        # lr: height of the overlap region (extent along y)
        lr = min(box1[1] + 0.5 * box1[3], box2[1] + 0.5 * box2[3]) - \
            max(box1[1] - 0.5 * box1[3], box2[1] - 0.5 * box2[3])
        inter = 0 if tb < 0 or lr < 0 else tb * lr  # overlap area: tb * lr
        # IoU = inter / (area of box1 + area of box2 - inter)
        return inter / (box1[2] * box1[3] + box2[2] * box2[3] - inter)

    def camera_detector(self, cap, wait=10):  # detects on camera frames, waiting 10 ms between frames
        detect_timer = Timer()
        ret, _ = cap.read()

        while ret:
            ret, frame = cap.read()
            detect_timer.tic()
            result = self.detect(frame)
            detect_timer.toc()
            print('Average detecting time: {:.3f}s'.format(  # report the average detection time
                detect_timer.average_time))

            self.draw_result(frame, result)  # draw the detections
            cv2.imshow('Camera', frame)
            cv2.waitKey(wait)

            # note: a frame is read here and again at the top of the loop,
            # so only every other frame is processed
            ret, frame = cap.read()

    def image_detector(self, imname, wait=0):  # detects on one image file and shows it until a key is pressed
        detect_timer = Timer()
        image = cv2.imread(imname)

        detect_timer.tic()
        result = self.detect(image)
        detect_timer.toc()
        print('Average detecting time: {:.3f}s'.format(
            detect_timer.average_time))

        self.draw_result(image, result)
        cv2.imshow('Image', image)
        cv2.waitKey(wait)

def main():
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', default="YOLO_small.ckpt", type=str)
    parser.add_argument('--weight_dir', default='weights', type=str)
    parser.add_argument('--data_dir', default="data", type=str)
    parser.add_argument('--gpu', default='', type=str)
    args = parser.parse_args()

    os.environ['CUDA_VISIBLE_DEVICES'] = args.gpu

    yolo = YOLONet(False)
    weight_file = os.path.join(args.data_dir, args.weight_dir, args.weights)  # data/weights/YOLO_small.ckpt by default
    detector = Detector(yolo, weight_file)

    # detect from camera
    # cap = cv2.VideoCapture(-1)
    # detector.camera_detector(cap)

    # detect from image file
    imname = 'test/person.jpg'
    detector.image_detector(imname)


if __name__ == '__main__':
    main()
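The filtering at the end of interpret_output is a greedy non-maximum suppression over boxes in (x_center, y_center, w, h) format. The sketch below restates it in plain Python so the behavior can be tried without TensorFlow; the function nms and the sample boxes are made up for illustration, and like the code above it ignores the class when suppressing.

```python
def iou(box1, box2):
    """IoU of two boxes given as (x_center, y_center, w, h)."""
    overlap_w = min(box1[0] + 0.5 * box1[2], box2[0] + 0.5 * box2[2]) - \
        max(box1[0] - 0.5 * box1[2], box2[0] - 0.5 * box2[2])
    overlap_h = min(box1[1] + 0.5 * box1[3], box2[1] + 0.5 * box2[3]) - \
        max(box1[1] - 0.5 * box1[3], box2[1] - 0.5 * box2[3])
    inter = 0.0 if overlap_w < 0 or overlap_h < 0 else overlap_w * overlap_h
    return inter / (box1[2] * box1[3] + box2[2] * box2[3] - inter)


def nms(boxes, scores, iou_threshold=0.5):
    """Hypothetical helper: indices of the boxes kept, best score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    suppressed = set()
    keep = []
    for pos, i in enumerate(order):
        if i in suppressed:
            continue
        keep.append(i)
        # drop every lower-scoring box that overlaps box i too much
        for j in order[pos + 1:]:
            if j not in suppressed and iou(boxes[i], boxes[j]) > iou_threshold:
                suppressed.add(j)
    return keep


# made-up example: two nearly identical boxes and one far away
boxes = [(100, 100, 50, 50), (102, 100, 50, 50), (300, 300, 40, 40)]
scores = [0.9, 0.8, 0.7]
kept = nms(boxes, scores)
print(kept)
```

The second box overlaps the first with an IoU of about 0.92, so only the first and third boxes survive.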

To test a different image, replace test/person.jpg with the directory and file name of your own image.

To use frames captured from a camera instead, uncomment these two lines:

# cap = cv2.VideoCapture(-1)

# detector.camera_detector(cap)

and comment out the following two lines:

imname = 'test/person.jpg'

detector.image_detector(imname)

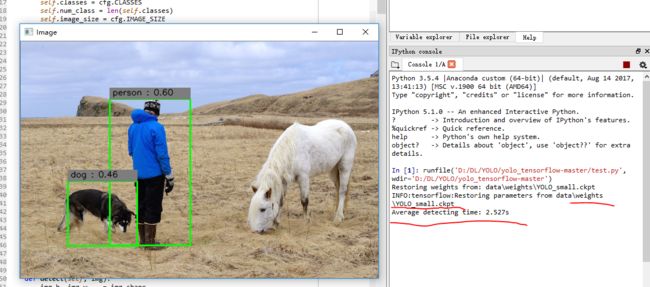

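My results are shown below. The environment is Windows 10, run from Spyder. I recommend TensorFlow 1.4 or later; it has to be a 1.x release, because the code depends on tf.contrib.slim, which TensorFlow 2.x does not include.

I put the downloaded YOLO_small.ckpt in the weights directory. Detection on a single image took 2.527 s.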

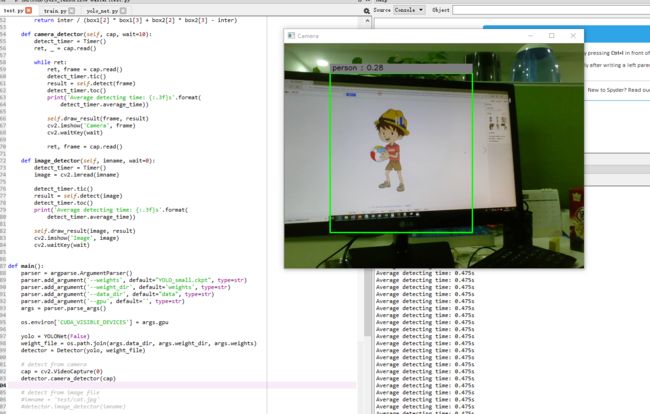

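If you get an error when running on video, try adding reuse=True to

with tf.variable_scope(scope): and it should work. Whether to pass 0, -1, or 1 as the camera index depends on your device.

YOLO does quite well on cartoon images; the only drawback is that the current model recognizes too few classes.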

References:

https://blog.csdn.net/qq1483661204/article/details/79681926

https://blog.csdn.net/qq_34784753/article/details/78803423