You Only Look Once: Unified, Real-Time Object Detection

Table of Contents

- Abstract

- 1. Introduction

- 2. Unified Detection

- 2.1. Network Design

- 2.2. Training

- 2.3. Inference

- 2.4. Limitations of YOLO

- 3. Comparison to Other Detection Systems

- 4. Experiments

- 4.1. Comparison to Other Real-Time Systems

- 4.2. VOC 2007 Error Analysis

- 4.3. Combining Fast R-CNN and YOLO

- 4.4. VOC 2012 Results

- 4.5. Generalizability: Person Detection in Artwork

- 5. Real-Time Detection In The Wild

- 6. Conclusion

Abstract

We present YOLO, a new approach to object detection. Prior work on object detection repurposes classifiers to perform detection. Instead, we frame object detection as a regression problem to spatially separated bounding boxes and associated class probabilities. A single neural network predicts bounding boxes and class probabilities directly from full images in one evaluation. Since the whole detection pipeline is a single network, it can be optimized end-to-end directly on detection performance.

Our unified architecture is extremely fast. Our base YOLO model processes images in real-time at 45 frames per second. A smaller version of the network, Fast YOLO, processes an astounding 155 frames per second while still achieving double the mAP of other real-time detectors. Compared to state-of-the-art detection systems, YOLO makes more localization errors but is less likely to predict false positives on background. Finally, YOLO learns very general representations of objects. It outperforms other detection methods, including DPM and R-CNN, when generalizing from natural images to other domains like artwork.

1. Introduction

Humans glance at an image and instantly know what objects are in the image, where they are, and how they interact. The human visual system is fast and accurate, allowing us to perform complex tasks like driving with little conscious thought. Fast, accurate algorithms for object detection would allow computers to drive cars without specialized sensors, enable assistive devices to convey real-time scene information to human users, and unlock the potential for general purpose, responsive robotic systems.

Current detection systems repurpose classifiers to perform detection. To detect an object, these systems take a classifier for that object and evaluate it at various locations and scales in a test image. Systems like deformable parts models (DPM) use a sliding window approach where the classifier is run at evenly spaced locations over the entire image [10]. More recent approaches like R-CNN use region proposal methods to first generate potential bounding boxes in an image and then run a classifier on these proposed boxes. After classification, post-processing is used to refine the bounding boxes, eliminate duplicate detections, and rescore the boxes based on other objects in the scene [13]. These complex pipelines are slow and hard to optimize because each individual component must be trained separately.

We reframe object detection as a single regression problem, straight from image pixels to bounding box coordinates and class probabilities. Using our system, you only look once (YOLO) at an image to predict what objects are present and where they are.

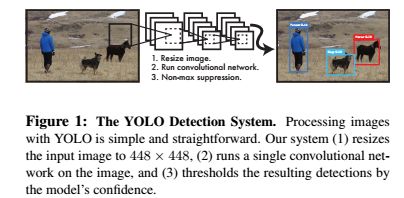

YOLO is refreshingly simple: see Figure 1. A single convolutional network simultaneously predicts multiple bounding boxes and class probabilities for those boxes. YOLO trains on full images and directly optimizes detection performance. This unified model has several benefits over traditional methods of object detection.

First, YOLO is extremely fast. Since we frame detection as a regression problem we don’t need a complex pipeline. We simply run our neural network on a new image at test time to predict detections. Our base network runs at 45 frames per second with no batch processing on a Titan X GPU and a fast version runs at more than 150 fps. This means we can process streaming video in real-time with less than 25 milliseconds of latency. Furthermore, YOLO achieves more than twice the mean average precision of other real-time systems. For a demo of our system running in real-time on a webcam please see our project webpage: http://pjreddie.com/yolo/.

Second, YOLO reasons globally about the image when making predictions. Unlike sliding window and region proposal-based techniques, YOLO sees the entire image during training and test time so it implicitly encodes contextual information about classes as well as their appearance. Fast R-CNN, a top detection method [14], mistakes background patches in an image for objects because it can’t see the larger context. YOLO makes less than half the number of background errors compared to Fast R-CNN.

Third, YOLO learns generalizable representations of objects. When trained on natural images and tested on artwork, YOLO outperforms top detection methods like DPM and R-CNN by a wide margin. Since YOLO is highly generalizable it is less likely to break down when applied to new domains or unexpected inputs.

YOLO still lags behind state-of-the-art detection systems in accuracy. While it can quickly identify objects in images it struggles to precisely localize some objects, especially small ones. We examine these tradeoffs further in our experiments.

All of our training and testing code is open source. A variety of pretrained models are also available to download.

2. Unified Detection

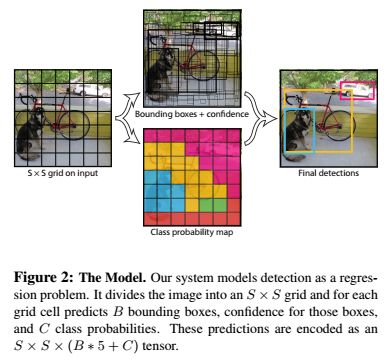

We unify the separate components of object detection into a single neural network. Our network uses features from the entire image to predict each bounding box. It also predicts all bounding boxes across all classes for an image simultaneously. This means our network reasons globally about the full image and all the objects in the image. The YOLO design enables end-to-end training and real-time speeds while maintaining high average precision.

Our system divides the input image into an $S \times S$ grid. If the center of an object falls into a grid cell, that grid cell is responsible for detecting that object.

Each grid cell predicts $B$ bounding boxes and confidence scores for those boxes. These confidence scores reflect how confident the model is that the box contains an object and also how accurate it thinks the predicted box is. Formally we define confidence as $\Pr(\text{Object}) * \text{IOU}^{\text{truth}}_{\text{pred}}$. If no object exists in that cell, the confidence scores should be zero. Otherwise we want the confidence score to equal the intersection over union (IOU) between the predicted box and the ground truth.
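
The IOU in the confidence definition can be illustrated with a minimal sketch. The corner format `(x_min, y_min, x_max, y_max)` and the function name `iou` are assumptions made for this example; the text does not prescribe a box representation for this computation.

```python
def iou(box_a, box_b):
    """Intersection over union of two axis-aligned boxes.

    Each box is (x_min, y_min, x_max, y_max); this format is an
    assumption of the sketch, not something fixed by the text.
    """
    # Corners of the overlapping rectangle (if any).
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])

    # Clamp at zero so disjoint boxes give no intersection.
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)

    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0
```

For a cell that contains an object, this value is the confidence target for the predicted box; for an empty cell the target is zero.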

Each bounding box consists of 5 predictions: $x$, $y$, $w$, $h$, and confidence. The $(x, y)$ coordinates represent the center of the box relative to the bounds of the grid cell. The width and height are predicted relative to the whole image. Finally the confidence prediction represents the IOU between the predicted box and any ground truth box. Each grid cell also predicts $C$ conditional class probabilities, $\Pr(\text{Class}_i \mid \text{Object})$. These probabilities are conditioned on the grid cell containing an object. We only predict one set of class probabilities per grid cell, regardless of the number of boxes $B$.
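
As an illustration of this parametrization, the sketch below converts one cell-relative prediction into pixel coordinates. The function name `decode_box`, the `(row, col)` cell indexing, and the default 448 × 448 image size are assumptions chosen for the example.

```python
def decode_box(row, col, x, y, w, h, S=7, img_w=448, img_h=448):
    """Convert a cell-relative prediction to pixel corner coordinates.

    (x, y) are offsets in [0, 1] within grid cell (row, col);
    (w, h) are fractions of the whole image, as described in the text.
    Returns (x_min, y_min, x_max, y_max) in pixels.
    """
    # Box center: cell index plus the offset, scaled from grid units to pixels.
    center_x = (col + x) / S * img_w
    center_y = (row + y) / S * img_h

    # Width and height are already relative to the full image.
    box_w = w * img_w
    box_h = h * img_h

    return (center_x - box_w / 2, center_y - box_h / 2,
            center_x + box_w / 2, center_y + box_h / 2)
```

For example, a prediction of `(0.5, 0.5, 1.0, 1.0)` in the middle cell of a 7 × 7 grid decodes to a box covering the whole image.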

At test time we multiply the conditional class probabilities and the individual box confidence predictions,

$$\Pr(\text{Class}_i \mid \text{Object}) * \Pr(\text{Object}) * \text{IOU}^{\text{truth}}_{\text{pred}} = \Pr(\text{Class}_i) * \text{IOU}^{\text{truth}}_{\text{pred}} \tag{1}$$

which gives us class-specific confidence scores for each box. These scores encode both the probability of that class appearing in the box and how well the predicted box fits the object.
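
A small sketch of this multiplication for a single grid cell follows. The function name `class_scores` and the plain-list representation are illustrative assumptions.

```python
def class_scores(class_probs, box_confidences):
    """Class-specific confidence scores for every box in one grid cell.

    class_probs: the C conditional class probabilities of the cell.
    box_confidences: the B box confidence predictions of the cell.
    Returns a B x C nested list: scores[j][c] = class_probs[c] * box_confidences[j].
    """
    return [[p * conf for p in class_probs] for conf in box_confidences]
```

Because a cell shares one set of class probabilities, every box in the cell ranks the classes in the same order; only the scale set by the box confidence differs.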

For evaluating YOLO on PASCAL VOC, we use $S = 7$, $B = 2$. PASCAL VOC has 20 labelled classes so $C = 20$. Our final prediction is a $7 \times 7 \times 30$ tensor.
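
The depth of 30 follows from the definitions above: each cell carries $B$ boxes with 5 values each plus $C$ class probabilities. A short sketch of that arithmetic, with hypothetical helper names:

```python
def output_shape(S, B, C):
    """Shape of the prediction tensor: S x S cells, each with B*5 + C values."""
    return (S, S, B * 5 + C)


def boxes_per_image(S, B):
    """Total number of bounding boxes predicted for one image."""
    return S * S * B
```

With $S = 7$, $B = 2$, $C = 20$ this gives a 7 × 7 × 30 tensor and 98 boxes per image.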

2.1. Network Design

We implement this model as a convolutional neural network and evaluate it on the PASCAL VOC detection dataset [9]. The initial convolutional layers of the network extract features from the image while the fully connected layers predict the output probabilities and coordinates. Our network architecture is inspired by the GoogLeNet model for image classification [34]. Our network has 24 convolutional layers followed by 2 fully connected layers. Instead of the inception modules used by GoogLeNet, we simply use $1 \times 1$ reduction layers followed by $3 \times 3$ convolutional layers, similar to Lin et al. [22]. The full network is shown in Figure 3.

We also train a fast version of YOLO designed to push the boundaries of fast object detection. Fast YOLO uses a neural network with fewer convolutional layers (9 instead of 24) and fewer filters in those layers. Other than the size of the network, all training and testing parameters are the same between YOLO and Fast YOLO.

The final output of our network is the $7 \times 7 \times 30$ tensor of predictions.

2.2. Training

We pretrain our convolutional layers on the ImageNet 1000-class competition dataset [30]. For pretraining we use the first 20 convolutional layers from Figure 3 followed by an average-pooling layer and a fully connected layer. We train this network for approximately a week and achieve a single crop top-5 accuracy of 88% on the ImageNet 2012 validation set, comparable to the GoogLeNet models in Caffe’s Model Zoo [24]. We use the Darknet framework for all training and inference [26].

We then convert the model to perform detection. Ren et al. show that adding both convolutional and connected layers to pretrained networks can improve performance [29]. Following their example, we add four convolutional layers and two fully connected layers with randomly initialized weights. Detection often requires fine-grained visual information so we increase the input resolution of the network from $224 \times 224$ to $448 \times 448$.

Our final layer predicts both class probabilities and bounding box coordinates. We normalize the bounding box width and height by the image width and height so that they fall between 0 and 1. We parametrize the bounding box x and y coordinates to be offsets of a particular grid cell location so they are also bounded between 0 and 1. We use a linear activation function for the final layer and all other layers use the following leaky rectified linear activation:

$$\phi(x) = \begin{cases} x, & \text{if } x > 0 \\ 0.1x, & \text{otherwise} \end{cases} \tag{2}$$
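
For reference, the leaky rectified linear activation can be written as a scalar function. The function name `leaky_relu` and the `slope` parameter are illustrative; the value 0.1 comes from the formula above.

```python
def leaky_relu(x, slope=0.1):
    """Leaky rectified linear activation: x for positive inputs, slope * x otherwise."""
    return x if x > 0 else slope * x
```

Unlike a plain rectifier, negative inputs keep a small non-zero gradient (here 0.1).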

We optimize for sum-squared error in the output of our model. We use sum-squared error because it is easy to optimize; however, it does not perfectly align with our goal of maximizing average precision. It weights localization error equally with classification error, which may not be ideal. Also, in every image many grid cells do not contain any object. This pushes the “confidence” scores of those cells towards zero, often overpowering the gradient from cells that do contain objects. This can lead to model instability, causing training to diverge early on.

To remedy this, we increase the loss from bounding box coordinate predictions and decrease the loss from confidence predictions for boxes that don’t contain objects. We use two parameters, $\lambda_{\text{coord}}$ and $\lambda_{\text{noobj}}$, to accomplish this. We set $\lambda_{\text{coord}} = 5$ and $\lambda_{\text{noobj}} = .5$.

Sum-squared error also equally weights errors in large boxes and small boxes. Our error metric should reflect that small deviations in large boxes matter less than in small boxes. To partially address this we predict the square root of the bounding box width and height instead of the width and height directly.
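
The effect of the square root can be checked numerically. The sketch below compares the squared error of the same absolute width deviation on a large and a small box, with and without the square root; the widths (0.8 and 0.1 of the image) and the deviation (0.05) are made-up illustration values.

```python
import math


def width_errors(w_true, deviation):
    """Squared error of a width prediction that is off by `deviation`.

    Returns (raw, rooted): the error on the width itself and the error
    on the square root of the width.
    """
    w_pred = w_true + deviation
    raw = (w_pred - w_true) ** 2
    rooted = (math.sqrt(w_pred) - math.sqrt(w_true)) ** 2
    return raw, rooted


large_raw, large_rooted = width_errors(0.8, 0.05)  # large box
small_raw, small_rooted = width_errors(0.1, 0.05)  # small box
```

The raw errors are the same for both boxes, while the rooted error is larger for the small box, which is the behavior the paragraph asks for.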

YOLO predicts multiple bounding boxes per grid cell. At training time we only want one bounding box predictor to be responsible for each object. We assign one predictor to be “responsible” for predicting an object based on which prediction has the highest current IOU with the ground truth. This leads to specialization between the bounding box predictors. Each predictor gets better at predicting certain sizes, aspect ratios, or classes of object, improving overall recall.
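
The assignment rule can be sketched in a few lines. The function name `responsible_predictor` is hypothetical, and the IOU of each of the cell's $B$ predictions with the ground truth box is assumed to be computed already.

```python
def responsible_predictor(ious):
    """Index of the predictor whose current box has the highest IOU with the ground truth.

    ious: list with one IOU value per bounding box predictor in the cell.
    Ties resolve to the lowest index (an arbitrary choice in this sketch).
    """
    return max(range(len(ious)), key=lambda j: ious[j])
```

Only the predictor returned here receives coordinate loss for that object during the training step.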

During training we optimize the following, multi-part loss function:

$$
\begin{aligned}
&\lambda_{\text{coord}} \sum_{i=0}^{S^2} \sum_{j=0}^{B} \mathbb{1}_{ij}^{\text{obj}} \left[ (x_i - \hat{x}_i)^2 + (y_i - \hat{y}_i)^2 \right] \\
&+ \lambda_{\text{coord}} \sum_{i=0}^{S^2} \sum_{j=0}^{B} \mathbb{1}_{ij}^{\text{obj}} \left[ \left(\sqrt{w_i} - \sqrt{\hat{w}_i}\right)^2 + \left(\sqrt{h_i} - \sqrt{\hat{h}_i}\right)^2 \right] \\
&+ \sum_{i=0}^{S^2} \sum_{j=0}^{B} \mathbb{1}_{ij}^{\text{obj}} \left(C_i - \hat{C}_i\right)^2 \\
&+ \lambda_{\text{noobj}} \sum_{i=0}^{S^2} \sum_{j=0}^{B} \mathbb{1}_{ij}^{\text{noobj}} \left(C_i - \hat{C}_i\right)^2 \\
&+ \sum_{i=0}^{S^2} \mathbb{1}_{i}^{\text{obj}} \sum_{c \in \text{classes}} \left(p_i(c) - \hat{p}_i(c)\right)^2
\end{aligned} \tag{3}
$$

where $\mathbb{1}_{i}^{\text{obj}}$ denotes whether an object appears in cell $i$ and $\mathbb{1}_{ij}^{\text{obj}}$ denotes that the $j$th bounding box predictor in cell $i$ is “responsible” for that prediction.

Note that the loss function only penalizes classification error if an object is present in that grid cell (hence the conditional class probability discussed earlier). It also only penalizes bounding box coordinate error if that predictor is “responsible” for the ground truth box (i.e. has the highest IOU of any predictor in that grid cell).
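
To make the structure of the loss concrete, here is a minimal sketch of the contribution of a single grid cell, following the description in the text: coordinate and size terms weighted by $\lambda_{\text{coord}} = 5$, square roots on width and height, and no-object confidence terms weighted by $\lambda_{\text{noobj}} = .5$. The function name, argument layout, and the treatment of non-responsible predictors in an occupied cell (confidence target of zero) are assumptions of this sketch, not a transcription of the authors' code.

```python
import math

LAMBDA_COORD = 5.0   # weight on coordinate and size errors (from the text)
LAMBDA_NOOBJ = 0.5   # weight on confidence errors for boxes without objects


def cell_loss(pred_boxes, pred_classes, truth_box=None, truth_classes=None,
              responsible=None, target_conf=0.0):
    """Sum-squared loss contribution of one grid cell.

    pred_boxes: list of B tuples (x, y, w, h, confidence), with w, h >= 0.
    pred_classes: list of C predicted class probabilities.
    truth_box: (x, y, w, h) of the object in this cell, or None if empty.
    truth_classes: one-hot list of C values (used only if truth_box is set).
    responsible: index of the responsible predictor (used only if truth_box is set).
    target_conf: confidence target for the responsible predictor, i.e. its IOU.
    """
    loss = 0.0
    for j, (x, y, w, h, conf) in enumerate(pred_boxes):
        if truth_box is not None and j == responsible:
            tx, ty, tw, th = truth_box
            # Localization terms, applied only to the responsible predictor.
            loss += LAMBDA_COORD * ((x - tx) ** 2 + (y - ty) ** 2)
            loss += LAMBDA_COORD * ((math.sqrt(w) - math.sqrt(tw)) ** 2
                                    + (math.sqrt(h) - math.sqrt(th)) ** 2)
            # Confidence should match the IOU with the ground truth.
            loss += (conf - target_conf) ** 2
        else:
            # No object assigned to this predictor: push confidence to zero.
            loss += LAMBDA_NOOBJ * conf ** 2
    if truth_box is not None:
        # Classification error is only penalized when the cell holds an object.
        loss += sum((p - t) ** 2 for p, t in zip(pred_classes, truth_classes))
    return loss
```

Summing this quantity over all $S^2$ cells gives the total loss for one image under these assumptions.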

We train the network for about 135 epochs on the training and validation data sets from PASCAL VOC 2007 and 2012. When testing on 2012 we also include the VOC 2007 test data for training. Throughout training we use a batch size of 64, a momentum of 0.9 and a decay of 0.0005.

Our learning rate schedule is as follows: For the first epochs we slowly raise the learning rate from $10^{-3}$ to $10^{-2}$. If we start at a high learning rate our model often diverges due to unstable gradients. We continue training with $10^{-2}$ for 75 epochs, then $10^{-3}$ for 30 epochs, and finally $10^{-4}$ for 30 epochs.
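
The schedule can be sketched as a function of the epoch index. The text does not say how many epochs the warm-up lasts or what shape it takes, so the linear ramp and the `warmup_epochs=5` default are assumptions, as is counting the warm-up inside the first 75 epochs so that the total stays at 135.

```python
def learning_rate(epoch, warmup_epochs=5):
    """Learning rate for a zero-based epoch index.

    Assumptions of this sketch: a linear warm-up over `warmup_epochs`
    epochs (hypothetical value), counted as part of the first 75 epochs.
    """
    if epoch < warmup_epochs:
        # Ramp linearly from 1e-3 towards 1e-2.
        fraction = epoch / warmup_epochs
        return 1e-3 + fraction * (1e-2 - 1e-3)
    if epoch < 75:
        return 1e-2
    if epoch < 105:   # next 30 epochs
        return 1e-3
    return 1e-4       # final 30 epochs
```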

To avoid overfitting we use dropout and extensive data augmentation. A dropout layer with rate = .5 after the first connected layer prevents co-adaptation between layers [18]. For data augmentation we introduce random scaling and translations of up to 20% of the original image size. We also randomly adjust the exposure and saturation of the image by up to a factor of 1.5 in the HSV color space.

2.3. Inference

Just like in training, predicting detections for a test image only requires one network evaluation. On PASCAL VOC the network predicts 98 bounding boxes per image and class probabilities for each box. YOLO is extremely fast at test time since it only requires a single network evaluation, unlike classifier-based methods.

The grid design enforces spatial diversity in the bounding box predictions. Often it is clear which grid cell an object falls into and the network only predicts one box for each object. However, some large objects or objects near the border of multiple cells can be well localized by multiple cells. Non-maximal suppression can be used to fix these multiple detections. While not critical to performance as it is for R-CNN or DPM, non-maximal suppression adds 2-3% in mAP.
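
A common greedy form of non-maximal suppression is sketched below for the detections of a single class. The IOU threshold of 0.5, the corner box format, and the function names are assumptions of this example; the text does not state which variant or threshold is used.

```python
def _iou(a, b):
    """IOU of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(detections, iou_threshold=0.5):
    """Greedy non-maximal suppression.

    detections: list of (score, box) pairs for one class.
    Keeps the highest-scoring box, drops any remaining box that overlaps
    a kept box by more than `iou_threshold`, and repeats.
    """
    kept = []
    for det in sorted(detections, key=lambda d: d[0], reverse=True):
        if all(_iou(det[1], k[1]) <= iou_threshold for k in kept):
            kept.append(det)
    return kept
```

Two neighboring cells that both localize the same large object would typically produce heavily overlapping boxes, of which only the higher-scoring one survives.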

2.4. Limitations of YOLO

YOLO imposes strong spatial constraints on bounding box predictions since each grid cell only predicts two boxes and can only have one class. This spatial constraint limits the number of nearby objects that our model can predict. Our model struggles with small objects that appear in groups, such as flocks of birds.

Since our model learns to predict bounding boxes from data, it struggles to generalize to objects in new or unusual aspect ratios or configurations. Our model also uses relatively coarse features for predicting bounding boxes since our architecture has multiple downsampling layers from the input image.

Finally, while we train on a loss function that approximates detection performance, our loss function treats errors the same in small bounding boxes versus large bounding boxes. A small error in a large box is generally benign but a small error in a small box has a much greater effect on IOU. Our main source of error is incorrect localizations.

3. Comparison to Other Detection Systems

Object detection is a core problem in computer vision. Detection pipelines generally start by extracting a set of robust features from input images (Haar [25], SIFT [23], HOG [4], convolutional features [6]). Then, classifiers [36, 21, 13, 10] or localizers [1, 32] are used to identify objects in the feature space. These classifiers or localizers are run either in sliding window fashion over the whole image or on some subset of regions in the image [35, 15, 39]. We compare the YOLO detection system to several top detection frameworks, highlighting key similarities and differences.

Deformable parts models.

Deformable parts models (DPM) use a sliding window approach to object detection [10]. DPM uses a disjoint pipeline to extract static features, classify regions, predict bounding boxes for high scoring regions, etc. Our system replaces all of these disparate parts with a single convolutional neural network. The network performs feature extraction, bounding box prediction, nonmaximal suppression, and contextual reasoning all concurrently. Instead of static features, the network trains the features in-line and optimizes them for the detection task. Our unified architecture leads to a faster, more accurate model than DPM.

R-CNN.

R-CNN and its variants use region proposals instead of sliding windows to find objects in images. Selective Search [35] generates potential bounding boxes, a convolutional network extracts features, an SVM scores the boxes, a linear model adjusts the bounding boxes, and non-max suppression eliminates duplicate detections. Each stage of this complex pipeline must be precisely tuned independently and the resulting system is very slow, taking more than 40 seconds per image at test time [14].

YOLO shares some similarities with R-CNN. Each grid cell proposes potential bounding boxes and scores those boxes using convolutional features. However, our system puts spatial constraints on the grid cell proposals which helps mitigate multiple detections of the same object. Our system also proposes far fewer bounding boxes, only 98 per image compared to about 2000 from Selective Search. Finally, our system combines these individual components into a single, jointly optimized model.

Other Fast Detectors.

Fast and Faster R-CNN focus on speeding up the R-CNN framework by sharing computation and using neural networks to propose regions instead of Selective Search [14] [28]. While they offer speed and accuracy improvements over R-CNN, both still fall short of real-time performance.

Many research efforts focus on speeding up the DPM pipeline [31] [38] [5]. They speed up HOG computation, use cascades, and push computation to GPUs. However, only 30Hz DPM [31] actually runs in real-time.

Instead of trying to optimize individual components of a large detection pipeline, YOLO throws out the pipeline entirely and is fast by design.

Detectors for single classes like faces or people can be highly optimized since they have to deal with much less variation [37]. YOLO is a general purpose detector that learns to detect a variety of objects simultaneously.

Deep MultiBox.

Unlike R-CNN, Szegedy et al. train a convolutional neural network to predict regions of interest [8] instead of using Selective Search. MultiBox can also perform single object detection by replacing the confidence prediction with a single class prediction. However, MultiBox cannot perform general object detection and is still just a piece in a larger detection pipeline, requiring further image patch classification. Both YOLO and MultiBox use a convolutional network to predict bounding boxes in an image but YOLO is a complete detection system.

OverFeat.

Sermanet et al. train a convolutional neural network to perform localization and adapt that localizer to perform detection [32]. OverFeat efficiently performs sliding window detection but it is still a disjoint system. OverFeat optimizes for localization, not detection performance. Like DPM, the localizer only sees local information when making a prediction. OverFeat cannot reason about global context and thus requires significant post-processing to produce coherent detections.

MultiGrasp.

Our work is similar in design to work on grasp detection by Redmon et al. [27]. Our grid approach to bounding box prediction is based on the MultiGrasp system for regression to grasps. However, grasp detection is a much simpler task than object detection. MultiGrasp only needs to predict a single graspable region for an image containing one object. It doesn’t have to estimate the size, location, or boundaries of the object or predict its class, only find a region suitable for grasping. YOLO predicts both bounding boxes and class probabilities for multiple objects of multiple classes in an image.

4. Experiments

First we compare YOLO with other real-time detection systems on PASCAL VOC 2007. To understand the differences between YOLO and R-CNN variants we explore the errors on VOC 2007 made by YOLO and Fast R-CNN, one of the highest performing versions of R-CNN [14]. Based on the different error profiles we show that YOLO can be used to rescore Fast R-CNN detections and reduce the errors from background false positives, giving a significant performance boost. We also present VOC 2012 results and compare mAP to current state-of-the-art methods. Finally, we show that YOLO generalizes to new domains better than other detectors on two artwork datasets.

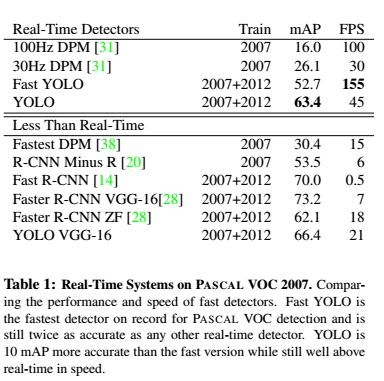

4.1. Comparison to Other Real-Time Systems

Many research efforts in object detection focus on making standard detection pipelines fast [5] [38] [31] [14] [17] [28]. However, only Sadeghi et al. actually produce a detection system that runs in real-time (30 frames per second or better) [31]. We compare YOLO to their GPU implementation of DPM which runs either at 30Hz or 100Hz. While the other efforts don’t reach the real-time milestone we also compare their relative mAP and speed to examine the accuracy-performance tradeoffs available in object detection systems.

Fast YOLO is the fastest object detection method on PASCAL; as far as we know, it is the fastest extant object detector. With 52.7% mAP, it is more than twice as accurate as prior work on real-time detection. YOLO pushes mAP to 63.4% while still maintaining real-time performance. We also train YOLO using VGG-16. This model is more accurate but also significantly slower than YOLO. It is useful for comparison to other detection systems that rely on VGG-16 but since it is slower than real-time the rest of the paper focuses on our faster models.

Fastest DPM effectively speeds up DPM without sacrificing much mAP but it still misses real-time performance by a factor of 2 [38]. It also is limited by DPM’s relatively low accuracy on detection compared to neural network approaches. R-CNN minus R replaces Selective Search with static bounding box proposals [20]. While it is much faster than R-CNN, it still falls short of real-time and takes a significant accuracy hit from not having good proposals.

Fast R-CNN speeds up the classification stage of R-CNN but it still relies on selective search which can take around 2 seconds per image to generate bounding box proposals. Thus it has high mAP but at 0.5 fps it is still far from real-time.

The recent Faster R-CNN replaces Selective Search with a neural network to propose bounding boxes, similar to Szegedy et al. [8]. In our tests, their most accurate model achieves 7 fps while a smaller, less accurate one runs at 18 fps. The VGG-16 version of Faster R-CNN is 10 mAP higher but is also 6 times slower than YOLO. The Zeiler-Fergus Faster R-CNN is only 2.5 times slower than YOLO but is also less accurate.
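
The speed ratios quoted in this section can be checked with a little arithmetic. The sketch below uses only frame rates stated in the text (YOLO's 45 fps comes from the abstract); the names `slowdown_vs_yolo` and `is_real_time` are illustrative and not from the paper.

```python
# Frame rates quoted in the text; 30 fps is the paper's real-time threshold.
REAL_TIME_FPS = 30.0
YOLO_FPS = 45.0  # base YOLO, as stated in the abstract

other_systems_fps = {
    "Fast R-CNN": 0.5,
    "Faster R-CNN (VGG-16)": 7.0,
    "Faster R-CNN (Zeiler-Fergus)": 18.0,
}


def slowdown_vs_yolo(other_fps: float) -> float:
    """How many times slower a system is than base YOLO."""
    return YOLO_FPS / other_fps


def is_real_time(fps: float) -> bool:
    """True if the frame rate meets the 30 fps real-time threshold."""
    return fps >= REAL_TIME_FPS


for name, fps in other_systems_fps.items():
    ratio = slowdown_vs_yolo(fps)
    print(f"{name}: {ratio:.1f}x slower than YOLO, real-time: {is_real_time(fps)}")
```

The 18 fps model comes out at exactly 2.5x, and the 7 fps model at a little over 6x, which matches the rounded figures in the paragraph above.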

4.2. VOC 2007 Error Analysis

To further examine the differences between YOLO and state-of-the-art detectors, we look at a detailed breakdown of results on VOC 2007. We compare YOLO to Fast R-CNN since Fast R-CNN is one of the highest performing detectors on PASCAL and its detections are publicly available.

We use the methodology and tools of Hoiem et al. [19]. For each category at test time we look at the top N predictions for that category. Each prediction is either correct or is classified by its type of error:

• Correct: correct class and $\text{IOU} > 0.5$

• Localization: correct class, $0.1 < \text{IOU} < 0.5$

• Similar: class is similar, $\text{IOU} > 0.1$

• Other: class is wrong, $\text{IOU} > 0.1$

• Background: $\text{IOU} < 0.1$ for any object
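
The categories above can be written as a small decision function. This is a minimal sketch, not the Hoiem et al. tool: it assumes the caller has already found the ground-truth object that best overlaps the prediction, and passes in that IOU together with flags for whether the predicted class is the same or merely similar. The function name, its parameters, and the handling of the exact boundary values (0.1 and 0.5) are assumptions made here.

```python
def classify_detection(iou: float, class_correct: bool, class_similar: bool = False) -> str:
    """Assign a prediction to one of the five categories listed above.

    iou           -- overlap with the best-matching ground-truth object (assumed input)
    class_correct -- predicted class equals the ground-truth class
    class_similar -- predicted class is in the same group as the ground-truth class
    """
    if class_correct and iou > 0.5:
        return "correct"
    if class_correct and iou > 0.1:
        # Right class, but the box overlaps the object too loosely.
        return "localization"
    if iou > 0.1:
        # Wrong class with a meaningful overlap: similar or unrelated class.
        return "similar" if class_similar else "other"
    # Negligible overlap with every object.
    return "background"


print(classify_detection(0.7, True))          # correct
print(classify_detection(0.3, True))          # localization
print(classify_detection(0.3, False, True))   # similar
print(classify_detection(0.05, False))        # background
```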

Figure 4 shows the breakdown of each error type averaged across all 20 classes.

YOLO struggles to localize objects correctly. Localization errors account for more of YOLO’s errors than all other sources combined. Fast R-CNN makes far fewer localization errors but far more background errors: 13.6% of its top detections are false positives that don’t contain any objects. Fast R-CNN is almost 3x more likely to predict background detections than YOLO.

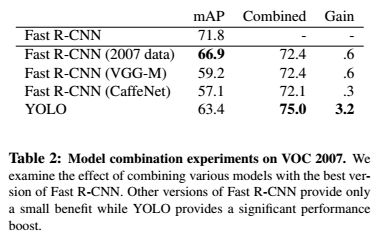

4.3. Combining Fast R-CNN and YOLO

YOLO makes far fewer background mistakes than Fast R-CNN. By using YOLO to eliminate background detections from Fast R-CNN we get a significant boost in performance. For every bounding box that R-CNN predicts, we check to see if YOLO predicts a similar box. If it does, we give that prediction a boost based on the probability predicted by YOLO and the overlap between the two boxes.

The best Fast R-CNN model achieves a mAP of 71.8% on the VOC 2007 test set. When combined with YOLO, its mAP increases by 3.2 percentage points to 75.0%. We also tried combining the top Fast R-CNN model with several other versions of Fast R-CNN. Those ensembles produced small increases in mAP, between 0.3% and 0.6%; see Table 2 for details.
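
The rescoring step described at the start of this section might look roughly like the sketch below. The text only says the boost depends on YOLO's predicted probability and the overlap between the two boxes; it does not give the formula. Everything specific here is therefore an assumption made for illustration: treating "similar" as same class with IOU of at least 0.5, adding `probability * IOU` to the score, and the names `iou` and `rescore`.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]   # (x1, y1, x2, y2)
Detection = Tuple[Box, str, float]        # (box, class label, score)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def rescore(frcnn: List[Detection], yolo: List[Detection],
            min_iou: float = 0.5) -> List[Detection]:
    """Boost each Fast R-CNN detection that YOLO also predicts.

    Hypothetical rule: boost = YOLO probability * IOU, taken from the
    best-supporting YOLO box of the same class with IOU >= min_iou.
    """
    rescored = []
    for box, label, score in frcnn:
        boost = 0.0
        for ybox, ylabel, yprob in yolo:
            if ylabel != label:
                continue
            overlap = iou(box, ybox)
            if overlap >= min_iou:
                boost = max(boost, yprob * overlap)
        rescored.append((box, label, score + boost))
    return rescored


frcnn_dets = [((0, 0, 10, 10), "cat", 0.6), ((50, 50, 60, 60), "cat", 0.6)]
yolo_dets = [((0, 0, 10, 10), "cat", 0.5)]
for det in rescore(frcnn_dets, yolo_dets):
    print(det)
```

Under this rule, a detection that YOLO does not confirm keeps its original score, so unsupported background detections fall in the ranking relative to confirmed ones.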

The boost from YOLO is not simply a byproduct of model ensembling, since there is little benefit from combining different versions of Fast R-CNN. Rather, it is precisely because YOLO makes different kinds of mistakes at test time that it is so effective at boosting Fast R-CNN’s performance.

Unfortunately, this combination doesn’t benefit from the speed of YOLO since we run each model separately and then combine the results. However, since YOLO is so fast, it doesn’t add any significant computational time compared to Fast R-CNN.

4.4. VOC 2012 Results

On the VOC 2012 test set, YOLO scores 57.9% mAP. This is lower than the current state of the art and closer to the original R-CNN using VGG-16; see Table 3. Our system struggles with small objects compared to its closest competitors. On categories like bottle, sheep, and tv/monitor, YOLO scores 8-10% lower than R-CNN or Feature Edit. However, on other categories like cat and train, YOLO achieves higher performance.

Our combined Fast R-CNN + YOLO model is one of the highest performing detection methods. Fast R-CNN gets a 2.3% improvement from the combination with YOLO, boosting it 5 spots up on the public leaderboard.

4.5. Generalizability: Person Detection in Artwork

Academic datasets for object detection draw the training and testing data from the same distribution. In real-world applications it is hard to predict all possible use cases, and the test data can diverge from what the system has seen before [3]. We compare YOLO to other detection systems on the Picasso Dataset [12] and the People-Art Dataset [3], two datasets for testing person detection on artwork.

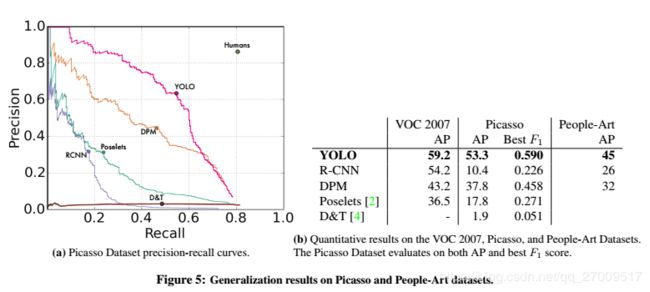

Figure 5 shows comparative performance between YOLO and other detection methods. For reference, we give VOC 2007 detection AP on person, where all models are trained only on VOC 2007 data. On Picasso, models are trained on VOC 2012, while on People-Art they are trained on VOC 2010.

R-CNN has high AP on VOC 2007. However, R-CNN drops off considerably when applied to artwork. R-CNN uses Selective Search for bounding box proposals, which is tuned for natural images. The classifier step in R-CNN only sees small regions and needs good proposals.

DPM maintains its AP well when applied to artwork. Prior work theorizes that DPM performs well because it has strong spatial models of the shape and layout of objects. Though DPM doesn’t degrade as much as R-CNN, it starts from a lower AP.

YOLO has good performance on VOC 2007, and its AP degrades less than other methods when applied to artwork. Like DPM, YOLO models the size and shape of objects, as well as relationships between objects and where objects commonly appear. Artwork and natural images are very different on a pixel level, but they are similar in terms of the size and shape of objects, so YOLO can still predict good bounding boxes and detections.

5. Real-Time Detection In The Wild

YOLO is a fast, accurate object detector, making it ideal for computer vision applications. We connect YOLO to a webcam and verify that it maintains real-time performance, including the time to fetch images from the camera and display the detections.

The resulting system is interactive and engaging. While YOLO processes images individually, when attached to a webcam it functions like a tracking system, detecting objects as they move around and change in appearance. A demo of the system and the source code can be found on our project website: http://pjreddie.com/yolo/.
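
The end-to-end timing check described in this section, which includes the time to fetch frames and display results, can be sketched as a simple loop. This is not the authors' demo code: `measure_fps` and the three stand-in callables are hypothetical placeholders, and a real setup would replace them with a camera read, a detector call, and a drawing routine.

```python
import time
from typing import Any, Callable, List


def measure_fps(fetch: Callable[[], Any],
                detect: Callable[[Any], List[Any]],
                display: Callable[[Any, List[Any]], None],
                n_frames: int = 50) -> float:
    """Average frames per second over the whole fetch -> detect -> display loop."""
    start = time.perf_counter()
    for _ in range(n_frames):
        frame = fetch()                 # time to grab the image counts
        detections = detect(frame)      # one detector pass per frame
        display(frame, detections)      # time to show the result counts too
    elapsed = time.perf_counter() - start
    return n_frames / elapsed if elapsed > 0 else float("inf")


# Stand-ins so the sketch runs without a camera or a trained model.
def fake_fetch() -> List[List[int]]:
    return [[0] * 8 for _ in range(8)]


def fake_detect(frame: Any) -> List[Any]:
    return []


def fake_display(frame: Any, detections: List[Any]) -> None:
    pass


fps = measure_fps(fake_fetch, fake_detect, fake_display)
print(f"end-to-end: {fps:.1f} fps, real-time: {fps >= 30.0}")
```

Timing the full loop rather than the detector alone is the point: a detector that runs at 45 fps in isolation only counts as real-time in practice if capture and display do not pull the total below 30 fps.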

6. Conclusion

We introduce YOLO, a unified model for object detection. Our model is simple to construct and can be trained directly on full images. Unlike classifier-based approaches, YOLO is trained on a loss function that directly corresponds to detection performance, and the entire model is trained jointly.

Fast YOLO is the fastest general-purpose object detector in the literature, and YOLO pushes the state of the art in real-time object detection. YOLO also generalizes well to new domains, making it ideal for applications that rely on fast, robust object detection.

reference:

https://www.bilibili.com/video/av23354360?from=search&seid=1593211955504758713