2019-CS224N-Assignment 1: Exploring Word Vectors

Work through the 2019 CS224N course carefully and study hard!

Stanford Assignment 1: http://web.stanford.edu/class/cs224n/assignments/a1_preview/exploring_word_vectors.html

First, import the required packages. No code needs to be written for this step:

# All Import Statements Defined Here

# Note: Do not add to this list.

# All the dependencies you need, can be installed by running .

# ----------------

import sys

assert sys.version_info[0]==3

assert sys.version_info[1] >= 5

from gensim.models import KeyedVectors

from gensim.test.utils import datapath

import pprint

import matplotlib.pyplot as plt

plt.rcParams['figure.figsize'] = [10, 5]

import nltk

nltk.download('reuters')

from nltk.corpus import reuters

import numpy as np

import random

import scipy as sp

from sklearn.decomposition import TruncatedSVD

from sklearn.decomposition import PCA

START_TOKEN = '<START>'

END_TOKEN = '<END>'

np.random.seed(0)

random.seed(0)

# ----------------

Part 1: Count-Based Word Vectors (10 points)

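Next comes the word-vector work: build a co-occurrence matrix, reduce its dimensionality with SVD, and finally plot the word vectors derived from the co-occurrence matrix.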

def read_corpus(category="crude"):
    """ Read files from the specified Reuter's category.
        Params:
            category (string): category name
        Return:
            list of lists, with words from each of the processed files
    """
    files = reuters.fileids(category)
    return [[START_TOKEN] + [w.lower() for w in list(reuters.words(f))] + [END_TOKEN] for f in files]

reuters_corpus = read_corpus()

pprint.pprint(reuters_corpus[:3], compact=True, width=100)

# Output:

[['<START>', 'japan', 'to', 'revise', 'long', '-', 'term', 'energy', 'demand', 'downwards', 'the',

'ministry', 'of', 'international', 'trade', 'and', 'industry', '(', 'miti', ')', 'will', 'revise',

'its', 'long', '-', 'term', 'energy', 'supply', '/', 'demand', 'outlook', 'by', 'august', 'to',

'meet', 'a', 'forecast', 'downtrend', 'in', 'japanese', 'energy', 'demand', ',', 'ministry',

'officials', 'said', '.', 'miti', 'is', 'expected', 'to', 'lower', 'the', 'projection', 'for',

'primary', 'energy', 'supplies', 'in', 'the', 'year', '2000', 'to', '550', 'mln', 'kilolitres',

'(', 'kl', ')', 'from', '600', 'mln', ',', 'they', 'said', '.', 'the', 'decision', 'follows',

'the', 'emergence', 'of', 'structural', 'changes', 'in', 'japanese', 'industry', 'following',

'the', 'rise', 'in', 'the', 'value', 'of', 'the', 'yen', 'and', 'a', 'decline', 'in', 'domestic',

'electric', 'power', 'demand', '.', 'miti', 'is', 'planning', 'to', 'work', 'out', 'a', 'revised',

'energy', 'supply', '/', 'demand', 'outlook', 'through', 'deliberations', 'of', 'committee',

'meetings', 'of', 'the', 'agency', 'of', 'natural', 'resources', 'and', 'energy', ',', 'the',

'officials', 'said', '.', 'they', 'said', 'miti', 'will', 'also', 'review', 'the', 'breakdown',

'of', 'energy', 'supply', 'sources', ',', 'including', 'oil', ',', 'nuclear', ',', 'coal', 'and',

'natural', 'gas', '.', 'nuclear', 'energy', 'provided', 'the', 'bulk', 'of', 'japan', "'", 's',

'electric', 'power', 'in', 'the', 'fiscal', 'year', 'ended', 'march', '31', ',', 'supplying',

'an', 'estimated', '27', 'pct', 'on', 'a', 'kilowatt', '/', 'hour', 'basis', ',', 'followed',

'by', 'oil', '(', '23', 'pct', ')', 'and', 'liquefied', 'natural', 'gas', '(', '21', 'pct', '),',

'they', 'noted', '.', '<END>'],

['<START>', 'energy', '/', 'u', '.', 's', '.', 'petrochemical', 'industry', 'cheap', 'oil',

'feedstocks', ',', 'the', 'weakened', 'u', '.', 's', '.', 'dollar', 'and', 'a', 'plant',

'utilization', 'rate', 'approaching', '90', 'pct', 'will', 'propel', 'the', 'streamlined', 'u',

'.', 's', '.', 'petrochemical', 'industry', 'to', 'record', 'profits', 'this', 'year', ',',

'with', 'growth', 'expected', 'through', 'at', 'least', '1990', ',', 'major', 'company',

'executives', 'predicted', '.', 'this', 'bullish', 'outlook', 'for', 'chemical', 'manufacturing',

'and', 'an', 'industrywide', 'move', 'to', 'shed', 'unrelated', 'businesses', 'has', 'prompted',

'gaf', 'corp', '&', 'lt', ';', 'gaf', '>,', 'privately', '-', 'held', 'cain', 'chemical', 'inc',

',', 'and', 'other', 'firms', 'to', 'aggressively', 'seek', 'acquisitions', 'of', 'petrochemical',

'plants', '.', 'oil', 'companies', 'such', 'as', 'ashland', 'oil', 'inc', '&', 'lt', ';', 'ash',

'>,', 'the', 'kentucky', '-', 'based', 'oil', 'refiner', 'and', 'marketer', ',', 'are', 'also',

'shopping', 'for', 'money', '-', 'making', 'petrochemical', 'businesses', 'to', 'buy', '.', '"',

'i', 'see', 'us', 'poised', 'at', 'the', 'threshold', 'of', 'a', 'golden', 'period', ',"', 'said',

'paul', 'oreffice', ',', 'chairman', 'of', 'giant', 'dow', 'chemical', 'co', '&', 'lt', ';',

'dow', '>,', 'adding', ',', '"', 'there', "'", 's', 'no', 'major', 'plant', 'capacity', 'being',

'added', 'around', 'the', 'world', 'now', '.', 'the', 'whole', 'game', 'is', 'bringing', 'out',

'new', 'products', 'and', 'improving', 'the', 'old', 'ones', '."', 'analysts', 'say', 'the',

'chemical', 'industry', "'", 's', 'biggest', 'customers', ',', 'automobile', 'manufacturers',

'and', 'home', 'builders', 'that', 'use', 'a', 'lot', 'of', 'paints', 'and', 'plastics', ',',

'are', 'expected', 'to', 'buy', 'quantities', 'this', 'year', '.', 'u', '.', 's', '.',

'petrochemical', 'plants', 'are', 'currently', 'operating', 'at', 'about', '90', 'pct',

'capacity', ',', 'reflecting', 'tighter', 'supply', 'that', 'could', 'hike', 'product', 'prices',

'by', '30', 'to', '40', 'pct', 'this', 'year', ',', 'said', 'john', 'dosher', ',', 'managing',

'director', 'of', 'pace', 'consultants', 'inc', 'of', 'houston', '.', 'demand', 'for', 'some',

'products', 'such', 'as', 'styrene', 'could', 'push', 'profit', 'margins', 'up', 'by', 'as',

'much', 'as', '300', 'pct', ',', 'he', 'said', '.', 'oreffice', ',', 'speaking', 'at', 'a',

'meeting', 'of', 'chemical', 'engineers', 'in', 'houston', ',', 'said', 'dow', 'would', 'easily',

'top', 'the', '741', 'mln', 'dlrs', 'it', 'earned', 'last', 'year', 'and', 'predicted', 'it',

'would', 'have', 'the', 'best', 'year', 'in', 'its', 'history', '.', 'in', '1985', ',', 'when',

'oil', 'prices', 'were', 'still', 'above', '25', 'dlrs', 'a', 'barrel', 'and', 'chemical',

'exports', 'were', 'adversely', 'affected', 'by', 'the', 'strong', 'u', '.', 's', '.', 'dollar',

',', 'dow', 'had', 'profits', 'of', '58', 'mln', 'dlrs', '.', '"', 'i', 'believe', 'the',

'entire', 'chemical', 'industry', 'is', 'headed', 'for', 'a', 'record', 'year', 'or', 'close',

'to', 'it', ',"', 'oreffice', 'said', '.', 'gaf', 'chairman', 'samuel', 'heyman', 'estimated',

'that', 'the', 'u', '.', 's', '.', 'chemical', 'industry', 'would', 'report', 'a', '20', 'pct',

'gain', 'in', 'profits', 'during', '1987', '.', 'last', 'year', ',', 'the', 'domestic',

'industry', 'earned', 'a', 'total', 'of', '13', 'billion', 'dlrs', ',', 'a', '54', 'pct', 'leap',

'from', '1985', '.', 'the', 'turn', 'in', 'the', 'fortunes', 'of', 'the', 'once', '-', 'sickly',

'chemical', 'industry', 'has', 'been', 'brought', 'about', 'by', 'a', 'combination', 'of', 'luck',

'and', 'planning', ',', 'said', 'pace', "'", 's', 'john', 'dosher', '.', 'dosher', 'said', 'last',

'year', "'", 's', 'fall', 'in', 'oil', 'prices', 'made', 'feedstocks', 'dramatically', 'cheaper',

'and', 'at', 'the', 'same', 'time', 'the', 'american', 'dollar', 'was', 'weakening', 'against',

'foreign', 'currencies', '.', 'that', 'helped', 'boost', 'u', '.', 's', '.', 'chemical',

'exports', '.', 'also', 'helping', 'to', 'bring', 'supply', 'and', 'demand', 'into', 'balance',

'has', 'been', 'the', 'gradual', 'market', 'absorption', 'of', 'the', 'extra', 'chemical',

'manufacturing', 'capacity', 'created', 'by', 'middle', 'eastern', 'oil', 'producers', 'in',

'the', 'early', '1980s', '.', 'finally', ',', 'virtually', 'all', 'major', 'u', '.', 's', '.',

'chemical', 'manufacturers', 'have', 'embarked', 'on', 'an', 'extensive', 'corporate',

'restructuring', 'program', 'to', 'mothball', 'inefficient', 'plants', ',', 'trim', 'the',

'payroll', 'and', 'eliminate', 'unrelated', 'businesses', '.', 'the', 'restructuring', 'touched',

'off', 'a', 'flurry', 'of', 'friendly', 'and', 'hostile', 'takeover', 'attempts', '.', 'gaf', ',',

'which', 'made', 'an', 'unsuccessful', 'attempt', 'in', '1985', 'to', 'acquire', 'union',

'carbide', 'corp', '&', 'lt', ';', 'uk', '>,', 'recently', 'offered', 'three', 'billion', 'dlrs',

'for', 'borg', 'warner', 'corp', '&', 'lt', ';', 'bor', '>,', 'a', 'chicago', 'manufacturer',

'of', 'plastics', 'and', 'chemicals', '.', 'another', 'industry', 'powerhouse', ',', 'w', '.',

'r', '.', 'grace', '&', 'lt', ';', 'gra', '>', 'has', 'divested', 'its', 'retailing', ',',

'restaurant', 'and', 'fertilizer', 'businesses', 'to', 'raise', 'cash', 'for', 'chemical',

'acquisitions', '.', 'but', 'some', 'experts', 'worry', 'that', 'the', 'chemical', 'industry',

'may', 'be', 'headed', 'for', 'trouble', 'if', 'companies', 'continue', 'turning', 'their',

'back', 'on', 'the', 'manufacturing', 'of', 'staple', 'petrochemical', 'commodities', ',', 'such',

'as', 'ethylene', ',', 'in', 'favor', 'of', 'more', 'profitable', 'specialty', 'chemicals',

'that', 'are', 'custom', '-', 'designed', 'for', 'a', 'small', 'group', 'of', 'buyers', '.', '"',

'companies', 'like', 'dupont', '&', 'lt', ';', 'dd', '>', 'and', 'monsanto', 'co', '&', 'lt', ';',

'mtc', '>', 'spent', 'the', 'past', 'two', 'or', 'three', 'years', 'trying', 'to', 'get', 'out',

'of', 'the', 'commodity', 'chemical', 'business', 'in', 'reaction', 'to', 'how', 'badly', 'the',

'market', 'had', 'deteriorated', ',"', 'dosher', 'said', '.', '"', 'but', 'i', 'think', 'they',

'will', 'eventually', 'kill', 'the', 'margins', 'on', 'the', 'profitable', 'chemicals', 'in',

'the', 'niche', 'market', '."', 'some', 'top', 'chemical', 'executives', 'share', 'the',

'concern', '.', '"', 'the', 'challenge', 'for', 'our', 'industry', 'is', 'to', 'keep', 'from',

'getting', 'carried', 'away', 'and', 'repeating', 'past', 'mistakes', ',"', 'gaf', "'", 's',

'heyman', 'cautioned', '.', '"', 'the', 'shift', 'from', 'commodity', 'chemicals', 'may', 'be',

'ill', '-', 'advised', '.', 'specialty', 'businesses', 'do', 'not', 'stay', 'special', 'long',

'."', 'houston', '-', 'based', 'cain', 'chemical', ',', 'created', 'this', 'month', 'by', 'the',

'sterling', 'investment', 'banking', 'group', ',', 'believes', 'it', 'can', 'generate', '700',

'mln', 'dlrs', 'in', 'annual', 'sales', 'by', 'bucking', 'the', 'industry', 'trend', '.',

'chairman', 'gordon', 'cain', ',', 'who', 'previously', 'led', 'a', 'leveraged', 'buyout', 'of',

'dupont', "'", 's', 'conoco', 'inc', "'", 's', 'chemical', 'business', ',', 'has', 'spent', '1',

'.', '1', 'billion', 'dlrs', 'since', 'january', 'to', 'buy', 'seven', 'petrochemical', 'plants',

'along', 'the', 'texas', 'gulf', 'coast', '.', 'the', 'plants', 'produce', 'only', 'basic',

'commodity', 'petrochemicals', 'that', 'are', 'the', 'building', 'blocks', 'of', 'specialty',

'products', '.', '"', 'this', 'kind', 'of', 'commodity', 'chemical', 'business', 'will', 'never',

'be', 'a', 'glamorous', ',', 'high', '-', 'margin', 'business', ',"', 'cain', 'said', ',',

'adding', 'that', 'demand', 'is', 'expected', 'to', 'grow', 'by', 'about', 'three', 'pct',

'annually', '.', 'garo', 'armen', ',', 'an', 'analyst', 'with', 'dean', 'witter', 'reynolds', ',',

'said', 'chemical', 'makers', 'have', 'also', 'benefitted', 'by', 'increasing', 'demand', 'for',

'plastics', 'as', 'prices', 'become', 'more', 'competitive', 'with', 'aluminum', ',', 'wood',

'and', 'steel', 'products', '.', 'armen', 'estimated', 'the', 'upturn', 'in', 'the', 'chemical',

'business', 'could', 'last', 'as', 'long', 'as', 'four', 'or', 'five', 'years', ',', 'provided',

'the', 'u', '.', 's', '.', 'economy', 'continues', 'its', 'modest', 'rate', 'of', 'growth', '.',

'<END>'],

['<START>', 'turkey', 'calls', 'for', 'dialogue', 'to', 'solve', 'dispute', 'turkey', 'said',

'today', 'its', 'disputes', 'with', 'greece', ',', 'including', 'rights', 'on', 'the',

'continental', 'shelf', 'in', 'the', 'aegean', 'sea', ',', 'should', 'be', 'solved', 'through',

'negotiations', '.', 'a', 'foreign', 'ministry', 'statement', 'said', 'the', 'latest', 'crisis',

'between', 'the', 'two', 'nato', 'members', 'stemmed', 'from', 'the', 'continental', 'shelf',

'dispute', 'and', 'an', 'agreement', 'on', 'this', 'issue', 'would', 'effect', 'the', 'security',

',', 'economy', 'and', 'other', 'rights', 'of', 'both', 'countries', '.', '"', 'as', 'the',

'issue', 'is', 'basicly', 'political', ',', 'a', 'solution', 'can', 'only', 'be', 'found', 'by',

'bilateral', 'negotiations', ',"', 'the', 'statement', 'said', '.', 'greece', 'has', 'repeatedly',

'said', 'the', 'issue', 'was', 'legal', 'and', 'could', 'be', 'solved', 'at', 'the',

'international', 'court', 'of', 'justice', '.', 'the', 'two', 'countries', 'approached', 'armed',

'confrontation', 'last', 'month', 'after', 'greece', 'announced', 'it', 'planned', 'oil',

'exploration', 'work', 'in', 'the', 'aegean', 'and', 'turkey', 'said', 'it', 'would', 'also',

'search', 'for', 'oil', '.', 'a', 'face', '-', 'off', 'was', 'averted', 'when', 'turkey',

'confined', 'its', 'research', 'to', 'territorrial', 'waters', '.', '"', 'the', 'latest',

'crises', 'created', 'an', 'historic', 'opportunity', 'to', 'solve', 'the', 'disputes', 'between',

'the', 'two', 'countries', ',"', 'the', 'foreign', 'ministry', 'statement', 'said', '.', 'turkey',

"'", 's', 'ambassador', 'in', 'athens', ',', 'nazmi', 'akiman', ',', 'was', 'due', 'to', 'meet',

'prime', 'minister', 'andreas', 'papandreou', 'today', 'for', 'the', 'greek', 'reply', 'to', 'a',

'message', 'sent', 'last', 'week', 'by', 'turkish', 'prime', 'minister', 'turgut', 'ozal', '.',

'the', 'contents', 'of', 'the', 'message', 'were', 'not', 'disclosed', '.', '<END>']]

Question 1.1: Implement distinct_words [code] (2 points)

def distinct_words(corpus):
    """ Determine a list of distinct words for the corpus.
        Params:
            corpus (list of list of strings): corpus of documents
        Return:
            corpus_words (list of strings): list of distinct words across the corpus, sorted (using python 'sorted' function)
            num_corpus_words (integer): number of distinct words across the corpus
    """
    corpus_words = []
    num_corpus_words = -1

    # ------------------
    # Write your implementation here.
    # A set comprehension removes duplicates; sorted() fixes the ordering.
    corpus_words = sorted(list({word for words in corpus for word in words}))
    num_corpus_words = len(corpus_words)
    # ------------------

    return corpus_words, num_corpus_words

# ---------------------

# Run this sanity check

# Note that this is not an exhaustive check for correctness.

# ---------------------

# Define toy corpus

test_corpus = ["START All that glitters isn't gold END".split(" "), "START All's well that ends well END".split(" ")]

test_corpus_words, num_corpus_words = distinct_words(test_corpus)

# Correct answers

ans_test_corpus_words = sorted(list(set(["START", "All", "ends", "that", "gold", "All's", "glitters", "isn't", "well", "END"])))

ans_num_corpus_words = len(ans_test_corpus_words)

# Test correct number of words

assert(num_corpus_words == ans_num_corpus_words), "Incorrect number of distinct words. Correct: {}. Yours: {}".format(ans_num_corpus_words, num_corpus_words)

# Test correct words

assert (test_corpus_words == ans_test_corpus_words), "Incorrect corpus_words.\nCorrect: {}\nYours: {}".format(str(ans_test_corpus_words), str(test_corpus_words))

# Print Success

print ("-" * 80)

print("Passed All Tests!")

print ("-" * 80)

Output:

--------------------------------------------------------------------------------

Passed All Tests!

--------------------------------------------------------------------------------

Question 1.2: Implement compute_co_occurrence_matrix [code] (3 points)

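Implement the co-occurrence matrix: for each center word, count how often every other word appears within the given window size around it.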

def compute_co_occurrence_matrix(corpus, window_size=4):
    """ Compute co-occurrence matrix for the given corpus and window_size (default of 4).

        Note: Each word in a document should be at the center of a window. Words near edges will have a smaller
              number of co-occurring words.

              For example, if we take the document "START All that glitters is not gold END" with window size of 4,
              "All" will co-occur with "START", "that", "glitters", "is", and "not".

        Params:
            corpus (list of list of strings): corpus of documents
            window_size (int): size of context window
        Return:
            M (numpy matrix of shape (number of corpus words, number of corpus words)):
                Co-occurrence matrix of word counts.
                The ordering of the words in the rows/columns should be the same as the ordering of the words given by the distinct_words function.
            word2Ind (dict): dictionary that maps word to index (i.e. row/column number) for matrix M.
    """
    words, num_words = distinct_words(corpus)
    M = None
    word2Ind = {}

    # ------------------
    # Write your implementation here.
    M = np.zeros((num_words, num_words))
    word2Ind = {word: i for i, word in enumerate(words)}
    for doc in corpus:
        for i, word in enumerate(doc):
            # Visit every position within window_size of the center word.
            for j in range(i - window_size, i + window_size + 1):
                # Skip positions that fall outside the document.
                if j < 0 or j >= len(doc):
                    continue
                # A word does not co-occur with its own position.
                if j != i:
                    M[word2Ind[word], word2Ind[doc[j]]] += 1
    # ------------------

    return M, word2Ind

# ---------------------

# Run this sanity check

# Note that this is not an exhaustive check for correctness.

# ---------------------

# Define toy corpus and get student's co-occurrence matrix

test_corpus = ["START All that glitters isn't gold END".split(" "), "START All's well that ends well END".split(" ")]

M_test, word2Ind_test = compute_co_occurrence_matrix(test_corpus, window_size=1)

# Correct M and word2Ind

M_test_ans = np.array(

[[0., 0., 0., 1., 0., 0., 0., 0., 1., 0.,],

[0., 0., 0., 1., 0., 0., 0., 0., 0., 1.,],

[0., 0., 0., 0., 0., 0., 1., 0., 0., 1.,],

[1., 1., 0., 0., 0., 0., 0., 0., 0., 0.,],

[0., 0., 0., 0., 0., 0., 0., 0., 1., 1.,],

[0., 0., 0., 0., 0., 0., 0., 1., 1., 0.,],

[0., 0., 1., 0., 0., 0., 0., 1., 0., 0.,],

[0., 0., 0., 0., 0., 1., 1., 0., 0., 0.,],

[1., 0., 0., 0., 1., 1., 0., 0., 0., 1.,],

[0., 1., 1., 0., 1., 0., 0., 0., 1., 0.,]]

)

word2Ind_ans = {'All': 0, "All's": 1, 'END': 2, 'START': 3, 'ends': 4, 'glitters': 5, 'gold': 6, "isn't": 7, 'that': 8, 'well': 9}

# Test correct word2Ind

assert (word2Ind_ans == word2Ind_test), "Your word2Ind is incorrect:\nCorrect: {}\nYours: {}".format(word2Ind_ans, word2Ind_test)

# Test correct M shape

assert (M_test.shape == M_test_ans.shape), "M matrix has incorrect shape.\nCorrect: {}\nYours: {}".format(M_test_ans.shape, M_test.shape)

# Test correct M values

for w1 in word2Ind_ans.keys():
    idx1 = word2Ind_ans[w1]
    for w2 in word2Ind_ans.keys():
        idx2 = word2Ind_ans[w2]
        student = M_test[idx1, idx2]
        correct = M_test_ans[idx1, idx2]
        if student != correct:
            print("Correct M:")
            print(M_test_ans)
            print("Your M: ")
            print(M_test)
            raise AssertionError("Incorrect count at index ({}, {})=({}, {}) in matrix M. Yours has {} but should have {}.".format(idx1, idx2, w1, w2, student, correct))

# Print Success

print ("-" * 80)

print("Passed All Tests!")

print ("-" * 80)

Output:

--------------------------------------------------------------------------------

Passed All Tests!

--------------------------------------------------------------------------------
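As a cross-check on the window logic above, here is a minimal sketch that counts co-occurrences for a single toy sentence with a plain `Counter` instead of a NumPy matrix. The helper name `window_counts` is an illustrative assumption and is not part of the assignment.

```python
from collections import Counter

def window_counts(doc, window_size=1):
    """Count (center, context) pairs within window_size tokens of each center word."""
    counts = Counter()
    for i, center in enumerate(doc):
        # Clip the window at the document boundaries.
        lo = max(0, i - window_size)
        hi = min(len(doc), i + window_size + 1)
        for j in range(lo, hi):
            if j != i:
                counts[(center, doc[j])] += 1
    return counts

doc = "START All that glitters isn't gold END".split(" ")
counts = window_counts(doc, window_size=1)

# With window_size=1, "All" neighbors "START" and "that" but not "gold".
print(counts[("All", "START")], counts[("All", "that")], counts[("All", "gold")])

# Co-occurrence is symmetric: (a, b) is counted as often as (b, a).
symmetric = all(counts[(b, a)] == c for (a, b), c in counts.items())
print(symmetric)
```

The symmetry check is a cheap property to test on any implementation, since every pair inside a window is visited once from each side.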

Question 1.3: Implement reduce_to_k_dim [code] (1 point)

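Reduce the dimensionality with SVD. If this is unfamiliar, consult the official scikit-learn documentation for usage (the URL is given in the docstring).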

def reduce_to_k_dim(M, k=2):
    """ Reduce a co-occurrence count matrix of dimensionality (num_corpus_words, num_corpus_words)
        to a matrix of dimensionality (num_corpus_words, k) using the following SVD function from Scikit-Learn:
            - http://scikit-learn.org/stable/modules/generated/sklearn.decomposition.TruncatedSVD.html

        Params:
            M (numpy matrix of shape (number of corpus words, number of corpus words)): co-occurrence matrix of word counts
            k (int): embedding size of each word after dimension reduction
        Return:
            M_reduced (numpy matrix of shape (number of corpus words, k)): matrix of k-dimensional word embeddings.
                    In terms of the SVD from math class, this actually returns U * S
    """
    n_iters = 10     # Use this parameter in your call to `TruncatedSVD`
    M_reduced = None
    print("Running Truncated SVD over %i words..." % (M.shape[0]))

    # ------------------
    # Write your implementation here.
    svd = TruncatedSVD(n_components=k, n_iter=n_iters)
    M_reduced = svd.fit_transform(M)
    # ------------------

    print("Done.")
    return M_reduced

The printed output is:

Running Truncated SVD over 10 words...

Done.

--------------------------------------------------------------------------------

Passed All Tests!

--------------------------------------------------------------------------------

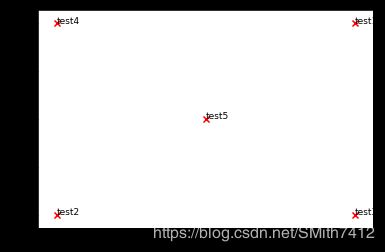
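The docstring of `reduce_to_k_dim` notes that the result corresponds to U * S. The following minimal sketch illustrates that idea with the exact `np.linalg.svd` rather than scikit-learn's randomized `TruncatedSVD`; the toy matrix `M_toy` is a made-up example. Singular vectors are only defined up to sign, so the columns may differ from a `TruncatedSVD` result by a sign flip.

```python
import numpy as np

# A small symmetric matrix standing in for a co-occurrence matrix (hypothetical values).
M_toy = np.array([[0., 2., 1.],
                  [2., 0., 3.],
                  [1., 3., 0.]])

# Full SVD: M_toy = U @ diag(S) @ Vt, with singular values in descending order.
U, S, Vt = np.linalg.svd(M_toy, full_matrices=False)

k = 2
# Keep the top-k components; broadcasting scales column i of U by S[i].
M_toy_reduced = U[:, :k] * S[:k]
print(M_toy_reduced.shape)

# Equivalent view: project the rows of M_toy onto the top-k right singular vectors.
print(np.allclose(M_toy_reduced, M_toy @ Vt[:k].T))
```

Each row of `M_toy_reduced` is the k-dimensional embedding of the corresponding word, which is the quantity that gets plotted later.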

Question 1.4: Implement plot_embeddings [code] (1 point)

Plotting

def plot_embeddings(M_reduced, word2Ind, words):
    """ Plot in a scatterplot the embeddings of the words specified in the list "words".
        NOTE: do not plot all the words listed in M_reduced / word2Ind.
        Include a label next to each point.

        Params:
            M_reduced (numpy matrix of shape (number of unique words in the corpus , k)): matrix of k-dimensional word embeddings
            word2Ind (dict): dictionary that maps word to indices for matrix M
            words (list of strings): words whose embeddings we want to visualize
    """
    # ------------------
    # Write your implementation here.
    for word in words:
        # Look up the row for this word and use its first two coordinates.
        coord = M_reduced[word2Ind[word]]
        x = coord[0]
        y = coord[1]
        plt.scatter(x, y, marker='x', color='red')
        plt.text(x, y, word, fontsize=9)
    plt.show()
    # ------------------

# ---------------------

# Run this sanity check

# Note that this is not an exhaustive check for correctness.

# The plot produced should look like the "test solution plot" depicted below.

# ---------------------

print ("-" * 80)

print ("Outputted Plot:")

M_reduced_plot_test = np.array([[1, 1], [-1, -1], [1, -1], [-1, 1], [0, 0]])

word2Ind_plot_test = {'test1': 0, 'test2': 1, 'test3': 2, 'test4': 3, 'test5': 4}

words = ['test1', 'test2', 'test3', 'test4', 'test5']

plot_embeddings(M_reduced_plot_test, word2Ind_plot_test, words)

print ("-" * 80)

Output:

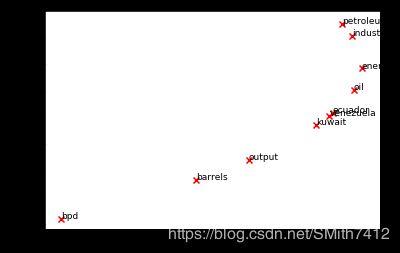

Question 1.5: Co-Occurrence Plot Analysis [written] (3 points)

Plot the embeddings obtained from the co-occurrence matrix.

# -----------------------------

# Run This Cell to Produce Your Plot

# ------------------------------

reuters_corpus = read_corpus()

M_co_occurrence, word2Ind_co_occurrence = compute_co_occurrence_matrix(reuters_corpus)

M_reduced_co_occurrence = reduce_to_k_dim(M_co_occurrence, k=2)

# Rescale (normalize) the rows to make them each of unit-length

M_lengths = np.linalg.norm(M_reduced_co_occurrence, axis=1)

M_normalized = M_reduced_co_occurrence / M_lengths[:, np.newaxis] # broadcasting

words = ['barrels', 'bpd', 'ecuador', 'energy', 'industry', 'kuwait', 'oil', 'output', 'petroleum', 'venezuela']

plot_embeddings(M_normalized, word2Ind_co_occurrence, words)

Output:

Running Truncated SVD over 8185 words...

Done.
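The rescaling step in the cell above divides each row by its Euclidean norm, so every embedding ends up on the unit circle and only its direction matters in the plot. A minimal sketch of the same broadcasting pattern on a made-up array `X` (a row of all zeros would cause a division by zero, which this sketch does not handle):

```python
import numpy as np

# Three hypothetical 2-d embeddings, one per row.
X = np.array([[3.0, 4.0],
              [0.0, 2.0],
              [-1.0, 1.0]])

lengths = np.linalg.norm(X, axis=1)      # shape (3,): one norm per row
X_unit = X / lengths[:, np.newaxis]      # (3, 2) / (3, 1) broadcasts row-wise

print(X_unit[0])                         # the row [3, 4] has norm 5
print(np.linalg.norm(X_unit, axis=1))    # every row now has length 1
```

Without `[:, np.newaxis]`, NumPy would try to align the length-3 vector with the last axis of `X` (length 2) and raise a shape error.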

Part 2: Prediction-Based Word Vectors (15 points)

Use SVD to reduce the 300-dimensional word2vec vectors to 2 dimensions.

def load_word2vec():
    """ Load Word2Vec Vectors
        Return:
            wv_from_bin: All 3 million embeddings, each length 300
    """
    import gensim.downloader as api
    wv_from_bin = api.load("word2vec-google-news-300")
    # Note: `.vocab` is the gensim 3.x API; gensim >= 4.0 uses `.key_to_index` instead.
    vocab = list(wv_from_bin.vocab.keys())
    print("Loaded vocab size %i" % len(vocab))
    return wv_from_bin

# -----------------------------------

# Run Cell to Load Word Vectors

# Note: This may take several minutes

# -----------------------------------

wv_from_bin = load_word2vec()

Output:

[==================================================] 100.0% 1662.8/1662.8MB downloaded

Loaded vocab size 3000000

Dimensionality reduction

def get_matrix_of_vectors(wv_from_bin, required_words=['barrels', 'bpd', 'ecuador', 'energy', 'industry', 'kuwait', 'oil', 'output', 'petroleum', 'venezuela']):
    """ Put the word2vec vectors into a matrix M.
        Param:
            wv_from_bin: KeyedVectors object; the 3 million word2vec vectors loaded from file
        Return:
            M: numpy matrix shape (num words, 300) containing the vectors
            word2Ind: dictionary mapping each word to its row number in M
    """
    import random
    words = list(wv_from_bin.vocab.keys())
    print("Shuffling words ...")
    random.shuffle(words)
    words = words[:10000]
    print("Putting %i words into word2Ind and matrix M..." % len(words))
    word2Ind = {}
    M = []
    curInd = 0
    # Add a random sample of 10000 vocabulary words.
    for w in words:
        try:
            M.append(wv_from_bin.word_vec(w))
            word2Ind[w] = curInd
            curInd += 1
        except KeyError:
            continue
    # Always include the words that will be plotted.
    for w in required_words:
        try:
            M.append(wv_from_bin.word_vec(w))
            word2Ind[w] = curInd
            curInd += 1
        except KeyError:
            continue
    M = np.stack(M)
    print("Done.")
    return M, word2Ind

# -----------------------------------------------------------------

# Run Cell to Reduce 300-Dimensional Word Embeddings to k Dimensions

# Note: This may take several minutes

# -----------------------------------------------------------------

M, word2Ind = get_matrix_of_vectors(wv_from_bin)

M_reduced = reduce_to_k_dim(M, k=2)

Output:

Shuffling words ...

Putting 10000 words into word2Ind and matrix M ...

Done.

Running Truncated SVD over 10010 words...

Done.

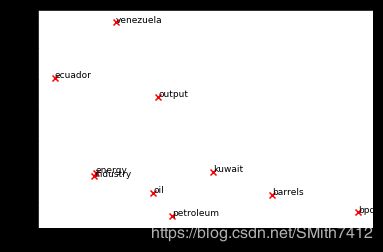

Question 2.1: Word2Vec Plot Analysis [written] (4 points)

words = ['barrels', 'bpd', 'ecuador', 'energy', 'industry', 'kuwait', 'oil', 'output', 'petroleum', 'venezuela']

plot_embeddings(M_reduced, word2Ind, words)

Result:

Question 2.2: Polysemous Words (2 points) [code + written]

Polysemous words

# ------------------

# Write your polysemous word exploration code here.

wv_from_bin.most_similar("exciting")

# ------------------

Output:

[('interesting', 0.6667856574058533),

('excited', 0.6454532146453857),

('exhilarating', 0.6273326873779297),

('fantastic', 0.6220911145210266),

('Exciting', 0.616710364818573),

('intriguing', 0.6062461733818054),

('amazing', 0.5953893065452576),

('enjoyable', 0.5866266489028931),

('fascinating', 0.5797898173332214),

('gratifying', 0.575835645198822)]

Question 2.3: Synonyms & Antonyms (2 points) [code + written]

Synonyms and antonyms

# ------------------

# Write your synonym & antonym exploration code here.

w1 = "sleep"

w2 = "nap"

w3 = "awake"

w1_w2_dist = wv_from_bin.distance(w1, w2)

w1_w3_dist = wv_from_bin.distance(w1, w3)

print("Synonyms {}, {} have cosine distance: {}".format(w1, w2, w1_w2_dist))

print("Antonyms {}, {} have cosine distance: {}".format(w1, w3, w1_w3_dist))

# ------------------

Output:

Synonyms sleep, nap have cosine distance: 0.38177746534347534

Antonyms sleep, awake have cosine distance: 0.4313364028930664
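The `distance` values above are cosine distances, i.e. one minus the cosine similarity of the two vectors. A minimal pure-Python sketch of that formula follows; the function name `cosine_distance` and the 2-d toy vectors are illustrative assumptions, not real word2vec embeddings.

```python
import math

def cosine_distance(u, v):
    """Return 1 - cos(angle between u and v) for two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)

same_direction = cosine_distance([1.0, 0.0], [2.0, 0.0])
orthogonal = cosine_distance([1.0, 0.0], [0.0, 3.0])
opposite = cosine_distance([1.0, 0.0], [-1.0, 0.0])
print(same_direction, orthogonal, opposite)
```

The distance depends only on direction, not on vector length, and ranges from 0 (same direction) to 2 (opposite direction). Both values printed for the sleep/nap and sleep/awake pairs sit well below 1, which shows that the antonym pair is still treated as fairly similar.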

Solving Analogies with Word Vectors

Analogies

# Run this cell to answer the analogy -- man : king :: woman : x

pprint.pprint(wv_from_bin.most_similar(positive=['woman', 'king'], negative=['man']))

Result:

[('queen', 0.7118192911148071),

('monarch', 0.6189674139022827),

('princess', 0.5902431607246399),

('crown_prince', 0.5499460697174072),

('prince', 0.5377321243286133),

('kings', 0.5236844420433044),

('Queen_Consort', 0.5235945582389832),

('queens', 0.5181134343147278),

('sultan', 0.5098593235015869),

('monarchy', 0.5087411999702454)]
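The analogy query can be read as vector arithmetic: add the `positive` vectors, subtract the `negative` ones, and rank the remaining words by cosine similarity to the result. The sketch below is a simplified illustration on made-up 2-d vectors, not gensim's implementation; the dictionary `toy` and the helpers `unit` and `analogy` are hypothetical.

```python
import numpy as np

# Hypothetical 2-d embeddings: axis 0 ~ "royalty", axis 1 ~ "gender".
toy = {
    "man":   np.array([0.0, 1.0]),
    "woman": np.array([0.0, -1.0]),
    "king":  np.array([1.0, 1.0]),
    "queen": np.array([1.0, -1.0]),
    "apple": np.array([-1.0, 0.2]),
}

def unit(v):
    """Scale a vector to unit length."""
    return v / np.linalg.norm(v)

def analogy(positive, negative, vectors):
    """Rank words by cosine similarity to sum(positive) - sum(negative), excluding the inputs."""
    target = sum(unit(vectors[w]) for w in positive) - sum(unit(vectors[w]) for w in negative)
    target = unit(target)
    candidates = [w for w in vectors if w not in positive and w not in negative]
    scored = [(w, float(unit(vectors[w]) @ target)) for w in candidates]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# man : king :: woman : x
ranking = analogy(positive=["woman", "king"], negative=["man"], vectors=toy)
print(ranking[0][0])
```

Excluding the query words from the candidates matters: otherwise one of the inputs (often "king" here) can end up as the nearest neighbor of the target vector.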

Question 2.4: Finding Analogies [code + written] (2 Points)

Analogies

# ------------------

# Write your analogy exploration code here.

pprint.pprint(wv_from_bin.most_similar(positive=['China', 'American'], negative=['America']))

# ------------------

Output:

[('Chinese', 0.7894362211227417),

('Beijing', 0.6521856784820557),

('Taiwanese', 0.6029292345046997),

('qualifier_Peng_Shuai', 0.5813732743263245),

('Shanghai', 0.5688328742980957),

('Taiwan', 0.5469641089439392),

('coach_Shang_Ruihua', 0.5457600355148315),

('Zhang', 0.5411955118179321),

('theChinese', 0.5395910739898682),

('Guangzhou', 0.5321415066719055)]

Question 2.5: Incorrect Analogy [code + written] (1 point)

An incorrect analogy

# ------------------

# Write your incorrect analogy exploration code here.

pprint.pprint(wv_from_bin.most_similar(positive=['China', 'American'], negative=['Japan']))

# ------------------

Output:

[('Chinese', 0.5462234616279602),

('Amercian', 0.5023548603057861),

('African', 0.44705307483673096),

('Indian', 0.4317880868911743),

('Beijing', 0.41833460330963135),

('Cuban', 0.4132118821144104),

('Americans', 0.4127004146575928),

('U.S.', 0.41143256425857544),

('America', 0.40900349617004395),

('Amercan', 0.4035891890525818)]

Question 2.6: Guided Analysis of Bias in Word Vectors [written] (1 point)

Bias analysis

# Run this cell

# Here `positive` indicates the list of words to be similar to and `negative` indicates the list of words to be

# most dissimilar from.

pprint.pprint(wv_from_bin.most_similar(positive=['woman', 'boss'], negative=['man']))

print()

pprint.pprint(wv_from_bin.most_similar(positive=['man', 'boss'], negative=['woman']))

Output:

[('bosses', 0.5522644519805908),

('manageress', 0.49151360988616943),

('exec', 0.45940813422203064),

('Manageress', 0.45598435401916504),

('receptionist', 0.4474116563796997),

('Jane_Danson', 0.44480544328689575),

('Fiz_Jennie_McAlpine', 0.44275766611099243),

('Coronation_Street_actress', 0.44275566935539246),

('supremo', 0.4409853219985962),

('coworker', 0.43986251950263977)]

[('supremo', 0.6097398400306702),

('MOTHERWELL_boss', 0.5489562153816223),

('CARETAKER_boss', 0.5375303626060486),

('Bully_Wee_boss', 0.5333974361419678),

('YEOVIL_Town_boss', 0.5321705341339111),

('head_honcho', 0.5281980037689209),

('manager_Stan_Ternent', 0.525971531867981),

('Viv_Busby', 0.5256162881851196),

('striker_Gabby_Agbonlahor', 0.5250812768936157),

('BARNSLEY_boss', 0.5238943099975586)]

Question 2.7: Independent Analysis of Bias in Word Vectors [code + written] (2 points)

Independent analysis of bias in word vectors

# ------------------

# Write your bias exploration code here.

pprint.pprint(wv_from_bin.most_similar(positive=['woman', 'doctor'], negative=['man']))

print()

pprint.pprint(wv_from_bin.most_similar(positive=['woman', 'nurse'], negative=['man']))

# ------------------

Output:

[('gynecologist', 0.7093892097473145),

('nurse', 0.647728681564331),

('doctors', 0.6471461057662964),

('physician', 0.64389967918396),

('pediatrician', 0.6249487996101379),

('nurse_practitioner', 0.6218312978744507),

('obstetrician', 0.6072014570236206),

('ob_gyn', 0.5986712574958801),

('midwife', 0.5927063226699829),

('dermatologist', 0.5739566683769226)]

[('registered_nurse', 0.7375058531761169),

('nurse_practitioner', 0.6650707721710205),

('midwife', 0.6506887674331665),

('nurses', 0.6448696851730347),

('nurse_midwife', 0.6239829659461975),

('birth_doula', 0.5852460265159607),

('neonatal_nurse', 0.5670714974403381),

('dental_hygienist', 0.5668443441390991),

('lactation_consultant', 0.5667990446090698),

('respiratory_therapist', 0.5652169585227966)]

Question 2.8: Thinking About Bias [written] (1 point)

On the source of the bias: it was already present in the dataset from the start.